FactExtractionReport1 - ITACS | International Technology

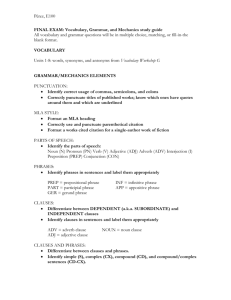

Report on using the English Resource Grammar to extend fact extraction capabilities

1 Introduction

This report is a deliverable for Project 4 task 2, Quarter 1, originally named “Initial principles for mapping external resources into CE framework”. It is an extension of the long paper submitted to the Fall Meeting 2013 [22].

Fact extraction from unstructured sources is a key component in the supply of information to human users such as analysts, but extracting the complete set of facts is a complex and challenging task. In addition, these facts must be expressed using a conceptual model of the domain that the users understand, and the rationale for their extraction must be presented so that users can use and assess the facts in their analysis tasks.

In the BPP11 [1,2] we demonstrated an approach that uses Controlled English (CE) [3,4] both for the facts extracted by Natural Language (NL) processing and for the configuration of the NL processing itself, so that extracted facts may be used for inference of high value information and linguistic processing is made more accessible to the analyst user. Two key aspects were the development of a common linguistic model and the mapping of the linguistic structures into the domain semantics of the user. However, the linguistic capabilities of the BPP11 parsing system were limited, and we proposed to address this in the BPP13 research by seeking to integrate more sophisticated linguistic systems developed by the DELPH-IN consortium [6].
This report describes initial work on the use of the English Resource Grammar (ERG) [11] from the DELPH-IN community, which is capable of generating high quality and detailed representations of the syntax and semantics of English sentences. It outlines how transformations might be made between knowledge in the ERG and CE-based representations, so that the semantic output can be extracted into higher quality CE facts, so that domain semantics contained in a CE model can be applied to assist parsing, and so that linguistic reasoning can be made more available to the nontechnical human user when configuring the extraction process for a particular domain. All of this is in support of information access and knowledge sharing in coalition operations.

2 An Example

We continue to use the SYNCOIN dataset [13] as an example of NL text to be interpreted. This provides reports from a military-relevant scenario with consistent story threads for different aspects of military and civilian operations under a background of counter intelligence. One thread is the operation of the HTT (Human Terrain Team), responsible for maintaining a good relationship with the local population. One sentence from a report notes that:

    HTT are conducting surveys in Adhamiya to judge the level of support for Bath’est return.

Such a sentence states a complex set of relationships, including entities (HTT, survey), situations (to conduct), relationships (for Bath’est return) and motivational links (to judge). The ERG system is able to parse this sentence and represent the semantic relationships, and our task is to convert these relationships into domain specific CE facts. Steps to produce some of the possible CE facts are described below.
3 The English Resource Grammar (ERG)

The ERG is one of the linguistic resources developed over the last two decades by the DELPH-IN consortium, a “collaborative effort aimed at deep linguistic processing of human language”, with ERG development undertaken principally at CSLI, Stanford, by Flickinger [11,21]. The ERG defines rules and structures to model a significant portion of the linguistic phenomena of English, and it is capable of analysing sentences more accurately, and to a greater level of detail, than the Stanford statistical parser [14] used in BPP11. Although DELPH-IN is developing other grammars, e.g. the Matrix [9], we have chosen to use the ERG because of the higher coverage of English that it affords.

David Mott IBM UK, Stephen Poteet, Ping Xue, Anne Kao Boeing Research & Technology

The theoretical foundation of the ERG language model is Head-Driven Phrase Structure Grammar [10]. This provides an account of language as the composition of substructures (such as phrases of different types) into higher level structures, where substructures are characterized by key “head” subcomponents (e.g. nouns, verbs) and where the linguistic constraints on composition are based on the nature of the heads and are highly lexicalized, i.e. the majority of information is contained in the lexical types on which the lexicon is built.

Such linguistic information is represented in a formal constraint-based language called Typed Feature Structures (TFS) [5], which defines a hierarchy of types containing attributes, values, variables and equalities between variables. TFS is used to represent (nearly) all of the model of linguistic phenomena, with TFS types defining compositional grammar rules and lexical types, and TFS instances of these types defining the lexicon of words. Thus a TFS type in the ERG defines linguistic notions such as “count noun” and “head-initial phrase”.
TFS instances in the ERG lexicon define such entries as “cat” being a “count noun”; such an entry also includes the orthography of the word (i.e. the way the word is written on the page).

In order to parse a sentence, it is necessary to provide a logical definition of the nature of a “well formed” sentence. The TFS definition is that all words in the sentence must be matched to a lexical entry, via the orthography; that all such lexical entries must be composed by grammatical rules into higher level phrases; that all such phrases must be further composed into higher level phrases or root phrases; that there must be a root phrase that covers all the words and phrases; and that this root phrase must be defined as one of an acceptable set of roots. By allowing different types of roots, it is possible to define fragments of sentences as being acceptable, if this is warranted by the nature of the texts being parsed. Here, “matching” between lexical items and phrases, and between phrases and phrases, is defined as unification of structures, including creation of structure when unification of variables is attempted.

The ERG defines the grammar rules and lexical items for English, which are to be interpreted as defined above. However, in order to actually parse a sentence it is necessary to run a parser against the sentence, which applies the TFS structures to the sentence. The DELPH-IN consortium has developed several parsers, and we have chosen to use the PET parsing system [15], as this is an efficient implementation of the unification and parsing algorithm, written in C++, which may potentially be integrated into other systems, for example the CE store.

As a result of parsing a sentence, the ERG provides a definition of the semantics of the sentence in the Minimal Recursion Semantics (MRS) formalism [8]. This specifies a set of elementary predications, each being a logical predicate together with arguments.
The predicate is derived from the lexicon or the grammar rules, and may indicate the existence of an individual (e.g. that individual x7 is the HTT or x9 is a survey), the occurrence of an event together with the individuals involved (e.g. that the event e3 is a “conduct” event with the individual x7 being the first (subject) argument), or the presence of a more abstract piece of information (such as that the HTT is a definite object).

As far as the basic ERG/PET system is concerned, the output of the MRS completes the parsing process. However, for our use in fact extraction it is necessary to turn the MRS into domain semantics, thereby generating CE facts representing the meaning of the sentence. The transformation of the MRS into domain facts is a key research item in the next stages of the tasks. Nevertheless, even the output of the semantics in the form of MRS is a significant addition compared to the output from the Stanford parser, which did not provide semantics. The MRS also provides further information to assist the handling of scope relations between quantifiers in sentences such as “all dogs chase a cat”, and further work is required to handle this information.

The use of the ERG for fact extraction requires the combination of several theories and technologies: the ERG, the PET parser, TFS and MRS. We will refer to this combination as the “ERG system”.

4 Integrating CE and the ERG system

A key step in using the ERG system for fact extraction in a given analyst’s domain is to be able to transform in both directions between the various language structures (represented as TFS and MRS) and the CE representing the analyst’s conceptual model, facts and rationale.
We aim to reuse the BPP11 linguistic model [16] as the basis of this mapping, in order that existing applications can make use of the new CE outputs. Such transformations are to be performed:

- between the ERG lexicon of words (in TFS and associated MRS relations) and the CE domain concepts, as this provides the starting point for the extraction of facts from the sentence. The lexicon itself may also have to be augmented with specialist words that are likely to occur in the text sources for the domain.
- between the parse tree output by the PET parser and a CE representation of the parse tree, in order that existing BPP11 applications that work with a parse tree can be supported.
- between the ERG grammar rules (in TFS) and CE structures for representing parsing rules, in order that users may better understand the nature of the processing. This will facilitate the development of new domain-specific parsing rules if different linguistic phenomena occur in the texts for the domain. This is more advanced than changing the lexicon and may require some degree of linguistic skill to reengineer the grammar. The mapping will also facilitate the presentation of rationale for the linking of extracted facts to the original sentences.
- between the semantics of the sentence expressed in the MRS output by the PET parser and CE facts. This transformation may also be useful for using domain semantics to guide the parsing itself.

To address these transformations we propose the following architecture, revised from the BPP11 version [2]:

[Figure: revised architecture diagram]

where the ERG system provides the phrase structures and general semantics, and we seek to extend the use of MRS to represent domain semantics as well as potentially to guide the parsing.
4.1 The Lexicon

Whilst the ERG lexicon is comprehensive, there is still a need to construct CE sentences that define new words to be added to the lexicon, words that may be present only in the domain. For example, consider the ERG lexical entry for “survey”:

    survey_n1 := n_-_c_le &
     [ ORTH < "survey" >,
       SYNSEM [ LKEYS.KEYREL.PRED "_survey_n_1_rel",
                PHON.ONSET con ] ].

In this entry:

- “survey_n1” is the name of the instance of the entry, which acts only as an identifier and does not provide any type information.
- “n_-_c_le” defines the lexical type of this entry, here a count noun.
- The string “survey” defines the orthography of the word.
- “_survey_n_1_rel” defines the MRS relation, providing the “meaning” of the word.

To translate between CE and the TFS needed to express words in the ERG lexicon, we propose some translation principles.

The MRS relation ("_survey_n_1_rel") must be mapped to the equivalent entity concept in the CE conceptual model (survey), since the MRS relation is the semantic output of the parser. We propose that the MRS relation is equivalent to the word sense in the CE lexical model, so it is possible to state “the noun sense _survey_n_1_rel”. This may then be linked to the conceptual model with an “expresses” relation, e.g. “the noun sense _survey_n_1_rel expresses the entity concept survey”. As in the BPP11, this link must be defined by the creator of the conceptual model, assisted by an “Analyst’s Helper”.

The rest of the ERG lexical entry defines a “lexeme”. In the CE lexical model, the lexeme is not explicit and is represented by a grammatical form, with an associated orthographic form, and (optionally) with a Penn tag as postfix on the identifier, for example “the grammatical form |surveys_NNS| is written as the word |surveys|”.
This may then be associated with the word sense using the “is a form of” relation, e.g. “the grammatical form |surveys_NNS| is a form of the word sense _survey_n_1_rel”. In the CE lexical model there is only one grammatical form entity for a combination of word (survey) and Penn tag (NN), thus it does not make sense to say “the grammatical form |surveys_NNS_1|”. This leads to potential ambiguities, where a single grammatical form G may have several meanings; this would be expressed by a set of relations “the grammatical form G is a form of the word sense Y” with multiple values of Y. Although this is logically equivalent to the TFS structures, there may be merit in giving the different form-wordsense relations unique names (equivalent to the lexicon entry names) for tracing purposes, in which case the need for a lexeme in the CE model may have to be re-evaluated.

The ERG lexical type (n_-_c_le) also serves to define a subtype of the grammatical form, for example “the count noun”. Such lexical types could form a hierarchy of CE concepts, in an equivalent manner to the hierarchy of ERG types (count noun is a type of noun).

Orthography is represented by an attribute of the grammatical form (e.g. the plural noun |surveys_NNS| is written as the word |surveys|), with multiple word orthographies being mapped to compound nouns.

Using these principles we may define an equivalent CE definition for the TFS lexical entry:

    there is a count noun named |surveys_NNS| that
      is a plural noun and
      is written as the word |surveys| and
      is a form of the noun sense _survey_n_1_rel.

    the noun sense _survey_n_1_rel expresses the entity concept survey.
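The CE-to-TFS direction for the first sentence pattern can be illustrated with a minimal Python sketch. This is not the Prolog program used in the work; the regular expression, the `CE_TO_ERG_TYPE` table, the function name `ce_to_tfs` and the caller-supplied entry name are all assumptions made for illustration, and the `PHON.ONSET` constraint of the real entry is deliberately omitted.

```python
import re

# Assumed mapping from CE grammatical-form concepts to ERG lexical types.
# Only the "count noun" -> n_-_c_le pairing comes from the text.
CE_TO_ERG_TYPE = {"count noun": "n_-_c_le"}

# Hypothetical pattern for the single CE sentence shape discussed above.
CE_PATTERN = re.compile(
    r"there is an? (?P<concept>[a-z ]+?) named \|(?P<form>[^|]+)\|"
    r".*?is written as the word \|(?P<orth>[^|]+)\|"
    r".*?is a form of the (?:noun|verb) sense (?P<sense>\S+?)\.?$"
)


def ce_to_tfs(ce_sentence, entry_name):
    """Turn one single-line CE word definition into a simplified TFS entry."""
    match = CE_PATTERN.search(ce_sentence.strip())
    if match is None:
        raise ValueError("unrecognised CE sentence pattern")
    erg_type = CE_TO_ERG_TYPE[match.group("concept")]
    return (
        f'{entry_name} := {erg_type} &\n'
        f' [ ORTH < "{match.group("orth")}" >,\n'
        f'   SYNSEM.LKEYS.KEYREL.PRED "{match.group("sense")}" ].'
    )


ce = ("there is a count noun named |surveys_NNS| that is a plural noun and "
      "is written as the word |surveys| and "
      "is a form of the noun sense _survey_n_1_rel.")
print(ce_to_tfs(ce, "surveys_n1"))
```

The entry name has to be supplied by the caller because, as noted above, the CE lexical model has no counterpart to the ERG entry identifier.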
Note that in the parse tree for the example, shown below, the lexical entry for “surveys” is implicit, being computed by a lexical rule (n_pl_olr) as required. However, here we take such implicit entries to be already contained in the lexicon; hence the difference between the orthography of the count noun above (surveys) and that of the ERG lexical entry (survey).

A Prolog program has been constructed to demonstrate the mapping of CE into TFS, thus allowing the user to construct new word definitions in CE to be added to the ERG lexicon. Only the first sentence pattern (there is a count noun …) is required for this purpose, since the second sentence pattern does not provide information that is added to the TFS lexical entry. The alternative direction, TFS to CE, is yet to be investigated.

4.2 The Parse Tree and Grammar Rules

The ERG defines a set of grammar rules that constrain how the words in the input sentences can be combined and turned into a tree of phrase types, and the PET parser uses these rules to generate valid parse trees from the input sentences. In the BPP11 work, a similar role was played by the Stanford parser, although the mechanism by which this occurred was markedly different.

It is necessary to translate between the parse tree and CE if the ERG system is to be used by NL processing systems that require use of the parse tree; such systems may be used by other projects in the BPP13 research, such as the conversational interface. It is important, however, that the CE version of the PET parse tree is based on the same linguistic model as the previous BPP11 work, so that the Stanford and ERG parsers can be used together.

It is also necessary to translate between ERG grammar rules and CE, because users may need to modify the grammar rules in order to handle domain specific language structure, and because the user may wish to understand the nature of the linguistic processing in order to provide rationale for the inference of high value information.
Our research is focusing mainly on the semantic processing, so the details of the parse tree are of lesser priority. Furthermore, the linguistic processing performed by the ERG system is complex, due to the complex nature of the linguistic phenomena that occur in English. For these reasons only a limited amount of detail will be given about the parse trees and grammar rules in this paper.

For the example sentence, the raw parse tree output from the ERG parser is too long to show in its complete form. A fragment for the phrase “conducting surveys” is shown below:

    (563 hd-cmp_u_c 0 2 4
      (289 v_prp_olr 0 2 3
        (25 conduct_v1/v_np*_le 0 2 3 [v_prp_olr]
          (3 "conducting" 0 2 3 )))
      (328 hdn_bnp_c 0 3 4
        (260 n_pl_olr 0 3 4
          (26 survey_n1/n_-_c_le 0 3 4 [n_pl_olr]
            (4 "surveys" 0 3 4 )))))

Although this phrase is taken out of context (it is an adjunct to the auxiliary verb “are”, and the full phrase also includes the prepositional phrase “in Adhamiya”), it can be seen that there are two subcomponents: a verb phrase based on “conduct” and a noun phrase based on “survey”. Harder to see is that the verb “conduct” has been derived by a lexical rule (v_prp_olr) from the word “conducting”; that the noun “survey” has been derived by a lexical rule (n_pl_olr) from the word “surveys”; that the lexical type (v_np*_le) of the entry conduct_v1 indicates it is a verb taking a noun phrase as a complement; and that the lexical type (n_-_c_le) of the entry survey_n1 indicates it is a count noun. The top part of this tree is constructed from the rule whose type is “hd-cmp_u_c”, which takes a head and a complement (the head is the verb phrase and the complement is the noun phrase) and constructs a single subcomponent, which becomes the adjunct structure for the auxiliary verb “are”.
This parse tree fragment may be turned into CE following the BPP11 lexical model, in order that other parsing systems may use it. The model is based upon phrases that have heads and dependents, but such information is not directly present in the ERG parse trees; therefore the current translation uses specific information about the lexical types to determine which subcomponents are heads and which are dependents. Such a bespoke process is fragile in the face of fundamental changes in the lexical types, so further investigation is required to provide a more robust solution. Further work is also required to generate all of the tree structure that was provided by the Stanford parser. Following this process, the resulting CE sentences are:

    the verb phrase #p_563 has the verb phrase #p_289 as head and has the noun phrase #p_328 as dependent.
    the verb phrase #p_289 has the verb |conducting_VBG| as head.
    the noun phrase #p_328 has the noun phrase #p_260 as head.
    the noun phrase #p_260 has the noun |surveys_NNS| as head.

The ERG parse tree provides types of information not available from the Stanford parser, such as the lexical type (n_-_c_le), the nature of the phrase structure (being a head complement phrase) and additional features (n_pl_olr indicating that a noun is a plural form). For completeness this information is provided in CE about the phrases and grammatical forms:

    the verb phrase #p_563 is a head complement phrase and has 'hd-cmp_u_c' as erg type.
    the verb phrase #p_289 is a head phrase and has 'v_prp_olr' as erg type.
    the verb |conducting_VBG| is a present verb and has 'v_np*_le' as erg type and has the thing v_prp_olr as feature.
    the noun phrase #p_328 is a head phrase and has 'hdn_bnp_c' as erg type.
    the noun phrase #p_260 is a head phrase and has 'n_pl_olr' as erg type.
    the noun |surveys_NNS| is a plural noun and has 'n_-_c_le' as erg type and has the thing n_pl_olr as feature.
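As a rough illustration of the first step of such a translation, the following Python sketch reads the bracketed fragment and emits one “erg type” sentence per rule node. It is a simplification made under stated assumptions: the tokeniser, the function names and the generic wording “the phrase” are hypothetical, and the head/dependent decisions and the verb/noun phrase typing, which the text says depend on lexical type information, are not attempted.

```python
import re

# Tokens: parentheses, [bracketed rule lists], "quoted words", or bare atoms.
TOKEN = re.compile(r'\(|\)|\[[^\]]*\]|"[^"]*"|[^\s()]+')


def parse_tree(text):
    """Parse a bracketed tree into nested dicts with id, label and children."""
    tokens = TOKEN.findall(text)
    pos = 0

    def node():
        nonlocal pos
        assert tokens[pos] == "("
        pos += 1
        fields, children = [], []
        while tokens[pos] != ")":
            if tokens[pos] == "(":
                children.append(node())
            else:
                fields.append(tokens[pos])
                pos += 1
        pos += 1  # consume ")"
        return {"id": fields[0], "label": fields[1], "children": children}

    return node()


def erg_type_sentences(tree):
    """Emit a CE sentence for each rule node (not lexical entries or words)."""
    sentences = []

    def walk(n):
        is_word = n["label"].startswith('"')
        is_lexical_entry = "/" in n["label"]
        if not is_word and not is_lexical_entry:
            sentences.append(
                f"the phrase #p_{n['id']} has '{n['label']}' as erg type.")
        for child in n["children"]:
            walk(child)

    walk(tree)
    return sentences


FRAGMENT = '''(563 hd-cmp_u_c 0 2 4 (289 v_prp_olr 0 2 3
 (25 conduct_v1/v_np*_le 0 2 3 [v_prp_olr] (3 "conducting" 0 2 3 )))
 (328 hdn_bnp_c 0 3 4 (260 n_pl_olr 0 3 4
 (26 survey_n1/n_-_c_le 0 3 4 [n_pl_olr] (4 "surveys" 0 3 4 )))))'''

for sentence in erg_type_sentences(parse_tree(FRAGMENT)):
    print(sentence)
```

The four sentences produced correspond to the four phrases #p_563, #p_289, #p_328 and #p_260 in the CE above.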
Phrase structures may also be diagrammed as a tabular version of the CE, where the two subphrases (verb and noun) can be seen more easily:

    the head complement phrase #p_563 that
      has as head
        the head phrase #p_289 that
          has as head the present verb |conducting_VBG| and
          has as erg type v_prp_olr
      and has as dependent
        the head phrase #p_328 that
          has as head
            the head phrase #p_260 that
              has as head the plural noun |surveys_NNS| and
              has as erg type n_pl_olr
          and has as erg type hdn_bnp_c
      and has as erg type hd-cmp_u_c

The topmost grammar rule for this construction is “hd-cmp_u_c”, which combines a head and a complement. This rule is defined in TFS, but it is difficult to explain this rule in any detail here, due to lack of space and due to the complexity of the linguistic theory that it follows. The rule is composed of structures at different levels of the type hierarchy, and to give a flavour, two of its (relatively simple) supertypes are shown below. Firstly it is a “headed” phrase:

    headed_phrase := phrase &
     [ SYNSEM.LOCAL [ CAT [ HEAD head & #head,
                            HC-LEX #hclex ],
                      AGR #agr,
                      CONJ #conj ],
       HD-DTR.SYNSEM.LOCAL local & [ CAT [ HEAD #head,
                                           HC-LEX #hclex ],
                                     AGR #agr,
                                     CONJ #conj ] ].

Such phrases obey the Head Feature Principle [10], which states that a phrase must share its key properties with the head of the phrase, where the head is defined as being one of the words in the phrase whose type defines its essential nature (for example a noun phrase will have a noun as its head). In this type, the HD-DTR (“head daughter”) holds the head of the phrase, and its HEAD value is passed up to the phrase itself, along with agreement information (e.g. its person, number and gender).
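The sharing expressed by the #head, #hclex, #agr and #conj variables can be pictured with a small Python sketch in which shared values are literally the same object. This is only an analogy for the reentrancy in the headed_phrase type, not an implementation of TFS unification; the function name `project_head` and all feature values in the example sign are hypothetical.

```python
def project_head(head_daughter):
    """Build a mother phrase whose HEAD, HC-LEX, AGR and CONJ are the very
    same objects as those of its head daughter (structure sharing)."""
    local = head_daughter["SYNSEM"]["LOCAL"]
    return {
        "SYNSEM": {
            "LOCAL": {
                "CAT": {
                    "HEAD": local["CAT"]["HEAD"],      # #head
                    "HC-LEX": local["CAT"]["HC-LEX"],  # #hclex
                },
                "AGR": local["AGR"],    # #agr
                "CONJ": local["CONJ"],  # #conj
            }
        },
        "HD-DTR": head_daughter,
    }


# Hypothetical sign for a plural noun; the values are illustrative only.
noun_sign = {
    "SYNSEM": {
        "LOCAL": {
            "CAT": {"HEAD": {"type": "noun"}, "HC-LEX": "-"},
            "AGR": {"PERS": "3", "NUM": "PL"},
            "CONJ": "none",
        }
    }
}

phrase = project_head(noun_sign)
mother_head = phrase["SYNSEM"]["LOCAL"]["CAT"]["HEAD"]
daughter_head = noun_sign["SYNSEM"]["LOCAL"]["CAT"]["HEAD"]
print(mother_head is daughter_head)
```

Because the values are shared rather than copied, any later refinement of the daughter's agreement information is visible on the phrase as well, which is the effect the Head Feature Principle relies on.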
Secondly it is a “head initial” phrase:

    basic_head_initial := basic_binary_headed_phrase &
     [ HD-DTR #head,
       NH-DTR #non-head,
       ARGS < #head, #non-head > ].

This states that the phrase is composed of an ordered sequence of two subphrases, the first being the head daughter (HD-DTR) and the second being the non-head daughter (NH-DTR); in effect the “head” is the initial subphrase.

It should be noted that the full definition of the hd-cmp_u_c rule is far more complex than this, and comprises information from 23 different types, each providing a set of constraints on the structure of the phrase. This includes information that it is a binary phrase, and that it operates left to right.

To get a better visualisation of these TFS definitions, we are exploring the use of CE in two ways. The first is as a graph, with CE entities and CE relations, where the uppercase names are attributes (e.g. HEAD), and the pathways from the central phrase can be followed to the entities that are the values of these attributes. The diagram below shows just the information from the “basic_head_initial” definition. The dotted lines indicate matching (unification) between the entities that is forced by the type definitions. For example, the matching of the ARGS (0th and 1st) of the subphrases below the arrow shape to the HD-DTR and NH-DTR is caused by the “basic_head_initial” type definition.

[Figure: graph of the basic_head_initial definition]

Another example is given below, where both of the two rules are combined in the same diagram:

[Figure: graph combining the headed_phrase and basic_head_initial definitions]

A second way to visualize the rule definition is via a “linguistic frame” [17] that defines the constraints between the phrase and its subcomponents via CE statements.
For example the linguistic frame for the basic_head_initial type is:

    there is a linguistic frame named f1 that
      defines the basic-head-initial PH and
      has the sequence ( the sign A0 , and the sign A1 ) as subcomponents and
      has the statement that
        ( the basic-head-initial PH
            has the sign A0 as HD-DTR and
            has the sign A1 as NH-DTR
        ) as semantics.

This may be read as defining a phrase of type “basic-head-initial” which composes two subphrases (or signs) on the parse tree, called A0 and A1, and has these subphrases as head daughter and non-head daughter respectively. The type “headed-phrase” may be defined as a further linguistic frame:

    there is a linguistic frame named f2 that
      defines the headed-phrase PH and
      has the statement that
        ( the headed-phrase PH
            has the HEAD of the LOCAL CAT of the HD-DTR as the HEAD of the LOCAL CAT and
            has the HC-LEX of the LOCAL CAT of the HD-DTR as the HC-LEX of the LOCAL CAT and
            has the AGR of the LOCAL SYNSEM of the HD-DTR as the AGR of the LOCAL SYNSEM and
            has the CONJ of the LOCAL SYNSEM of the HD-DTR as the CONJ of the LOCAL SYNSEM
        ) as semantics.

This defines constraints on any phrase of this type such that the stated attributes of the head daughter match the same attributes of the headed-phrase.

Several extensions to CE are being explored in order to simplify these linguistic frames: firstly, an ability to have a path of attributes, following the graph of relations, for example “the HEAD of the LOCAL CAT of the HD-DTR”; secondly, the definition of attribute names as representing common subpaths, for example “LOCAL CAT” as being a shorthand for “the CAT of the LOCAL of the SYNSEM”. These extensions are experimental, and are particularly useful when defining TFS structures, which are sets of pathways across the graph of entity attributes.

It is necessary to be able to convert between TFS structures and CE linguistic frames, in order that users can create new rules and more easily understand existing rules.
More work is required to design the mechanisms for such translations.

5 Semantics and Rationale

A key aspect is the mapping of the semantics extracted by the ERG system in the MRS formalism to the domain semantics of the user’s conceptual model, as this allows the output of facts in CE. We propose that the MRS output be translated into domain specific CE facts in three stages: a raw CE form containing only the same information as the output MRS; an intermediate CE form containing useful abstractions of the semantics; and the domain specific CE form.

To illustrate the process, we show part of the MRS output for the fragment “HTT are conducting surveys”:

    [ LTOP: h1
      INDEX: e3 [ e SF: PROP TENSE: PRES MOOD: INDICATIVE PROG: + PERF: - ]
      RELS: <
        [ udef_q_rel<-1:-1> LBL: h4 ARG0: x6 [ x PERS: 3 NUM: PL IND: + ] RSTR: h7 BODY: h5 ]
        [ named_rel<-1:-1> LBL: h8 ARG0: x6 CARG: "HTT" ]
        [ "_conduct_v_1_rel"<-1:-1> LBL: h9 ARG0: e3 ARG1: x6 ARG2: x10 [ x PERS: 3 NUM: PL IND: + ] ]
        [ udef_q_rel<-1:-1> LBL: h11 ARG0: x10 RSTR: h13 BODY: h12 ]
        [ "_survey_n_1_rel"<-1:-1> LBL: h14 ARG0: x10 ]
        ...
      HCONS: < h1 qeq h2 h7 qeq h8 h13 qeq h14 ... > ]

There is insufficient space here to describe all of the information in this MRS output, which itself has been shortened from the original. However, two examples may be picked out.

Firstly, the MRS relation "_survey_n_1_rel" corresponds to the “surveys” being conducted. Here the relation contains a single argument (ARG0) whose value (x10) “stands for” the survey. This is equivalent to the BPP11 general semantic intuition of noun phrases “standing for” things in the real world.
There is further information about x10 in the ARG2 argument of the MRS relation "_conduct_v_1_rel", which has the “NUM: PL” feature set, indicating that this is plural, and hence that x10 is actually a group of surveys (with unknown cardinality). There is a further relation in the MRS, udef_q_rel, indicating the indefinite nature of the phrase “surveys”, and this will be taken up below.

Secondly, the act of “conducting” the surveys is shown by the MRS relation "_conduct_v_1_rel", which has three arguments: the “event” of conducting (e3), the thing doing the conducting (x6) and the thing being conducted (x10). This is equivalent to the BPP11 general semantic intuition that verb phrases “stand for” situations (of which event is a subtype) and that there are things fulfilling roles in this situation. The roles of agent and patient are not specified here, and it is a matter of research as to whether the roles should be extracted, especially as more complex sentences can generate pragmatic “passive markers” in the MRS relations.

5.1 The Raw MRS form

The first stage in turning the MRS output into CE is to translate it into “elementary predications” with their arguments:

    the mrs elementary predication #ep1_0 is an instance of the mrs predicate 'udef_q_rel' and has the thing x6 as zeroth argument.
    the mrs elementary predication #ep1_1 is an instance of the mrs predicate 'named_rel' and has the thing x6 as zeroth argument and has 'HTT' as c argument.
    the mrs elementary predication #ep1_2 is an instance of the mrs predicate '_conduct_v_1_rel' and has the situation e3 as zeroth argument and has the thing x6 as first argument and has the thing x10 as second argument.
    the mrs elementary predication #ep1_3 is an instance of the mrs predicate 'udef_q_rel' and has the thing x10 as zeroth argument.
    the mrs elementary predication #ep1_4 is an instance of the mrs predicate '_survey_n_1_rel' and has the thing x10 as zeroth argument.

Further information may be provided about the features of the entities, for example, that the “surveys” are plural:

    there is a thing named x10 that has the person category third as feature and has the number category plural as feature and has the category 'IND:+' as feature.

The remaining information about scope quantification (held in the HCONS section) is also captured as CE, in the form of an “equals modulo quantifiers” relation between mrs elementary predications.

5.2 Intermediate MRS

It would be possible to translate this “raw CE” directly into domain specific CE, but it is proposed to generate some intermediate, more abstract, representations of certain aspects of the raw MRS, as such representations may prove useful for understanding what is being represented. For example, the quantification of things (such as the plural nature of “surveys”) is spread across several MRS relations, and it may be useful to build an intermediate “quantification” specification. In this example we might state:

    there is a group quantification q1 that is on the thing x10 and has the mrs predicate '_survey_n_1_rel' as characteristic type.

If the cardinality of the quantification were known (as in “three surveys”) then this information could be added to q1. Further quantification types could be created to express definite quantification of individuals, indefinite quantification, etc. Such information could be inferred by CE rules that match against patterns of MRS relations (including, in this example, the “udef_q_rel” and the NUM: PL feature).
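The kind of pattern matching such a rule would perform can be sketched in Python as follows. This is an illustrative stand-in for a CE rule, not the rule itself: the dictionary encoding of the elementary predications, the function name `group_quantifications`, and the test for a noun predicate (a leading underscore and an `_n_` segment in the predicate name) are assumptions made for this example.

```python
# Elementary predications and variable features transcribed from the
# MRS fragment shown earlier; the dictionary layout is an assumption.
EPS = [
    {"pred": "udef_q_rel", "ARG0": "x6"},
    {"pred": "named_rel", "ARG0": "x6", "CARG": "HTT"},
    {"pred": "_conduct_v_1_rel", "ARG0": "e3", "ARG1": "x6", "ARG2": "x10"},
    {"pred": "udef_q_rel", "ARG0": "x10"},
    {"pred": "_survey_n_1_rel", "ARG0": "x10"},
]
FEATURES = {
    "x6": {"PERS": "3", "NUM": "PL", "IND": "+"},
    "x10": {"PERS": "3", "NUM": "PL", "IND": "+"},
}


def group_quantifications(eps, features):
    """Emit a CE group quantification for each variable that has a
    udef_q_rel, the NUM: PL feature and a noun predicate."""
    udef_vars = {ep["ARG0"] for ep in eps if ep["pred"] == "udef_q_rel"}
    sentences = []
    for ep in eps:
        var = ep["ARG0"]
        # Assumed heuristic for spotting a lexical noun predicate.
        is_noun = ep["pred"].startswith("_") and "_n_" in ep["pred"]
        is_plural = features.get(var, {}).get("NUM") == "PL"
        if is_noun and is_plural and var in udef_vars:
            name = f"q{len(sentences) + 1}"
            sentences.append(
                f"there is a group quantification {name} that is on the "
                f"thing {var} and has the mrs predicate '{ep['pred']}' "
                f"as characteristic type.")
    return sentences


for sentence in group_quantifications(EPS, FEATURES):
    print(sentence)
```

Under this heuristic x6 is skipped, because its only characterising predicate is named_rel; whether named plural entities such as the HTT should also receive a group quantification is left open here.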
5.3 Domain Semantics

Using similar intuitions [2] to those of BPP11, the MRS in raw and intermediate CE form can be turned into domain CE:

- Nouns and verbs may be turned into types of domain concepts via the expresses relation. A noun expresses an entity concept, for example “the mrs predicate '_survey_n_1_rel' expresses the entity concept survey”, and a verb expresses a relation concept, for example “the mrs predicate '_conduct_v_1_rel' expresses the relation concept conducts”.
- Things “stood for” by noun and verb phrases are inferred to have the type of CE concept defined by the head (e.g. survey or conducts), with noun phrases standing for things and verb phrases standing for situations. It is not yet clear if it is necessary to infer the roles (e.g. patient and agent) played by things associated with the situation, as was done in BPP11, since sufficient information may be present in the MRS.

However, a major extension to the BPP11 translation of noun phrases and verb phrases noted above is needed if the intermediate abstract MRS information is to be utilized. For example, a group quantification on a thing indicates that it must be turned into the domain representation of a group (of surveys) rather than an individual (survey). This requires the construction of a model of “groups” that was missing from the BPP11 work. A tentative proposal is that there be a “group of XXXs”, where XXX is the name of a concept, and that this group has an optional cardinality.

Other linguistic types, such as proper names, adjectives and prepositions, may be turned into domain semantics in a similar way to BPP11 processing. However, some of the MRS relations for certain linguistic types contain additional information; for example, adjectives contain an “event” argument, and it is not yet clear how this should be handled.
It is necessary to understand the generic semantic principles that were used to determine the additional ERG information; discussion with the DELPH-IN community suggests that some of this information is available in the semantic interface definition (the SEM-I [18]), but that a specification of the semantic design is not explicitly available. This seems a fruitful area for further study during this BPP13 task.

The extraction of high quality facts is not the focus of the first three months of the research proposal, which instead focuses on the mechanisms for representing ERG linguistic information in CE. However, for completeness, the result of applying the initial linguistic processes to the output of the MRS is shown below:

the organisation x6 known as HTT conducts the group of surveys x10.
the situation e3 is contained in the container x15 known as Adhamiya.

This is not yet as readable as the BPP11 results. The first sentence captures the conducting of the surveys, but the sentence stating that the situation occurs in Adhamiya (the last sentence) fails to link the situation e3 to the conducting situation. Furthermore, no processing has been done for the motivational link “in order to judge …”. Nevertheless, the fact that there is a group of surveys is new information in comparison to the BPP11 work, and significantly fewer semantic processing rules had to be written and executed in CE to generate the above sentences, due to the increased semantic output of the ERG system. We anticipate that the facts will become more readable and that more detailed information will be extracted as the work continues.

5.4 Rationale

It is necessary to extract and display the rationale.
The reasoning occurs in two parts: the ERG grammatical rules applied by PET and the CE-based rules applied to the raw MRS. The reasoning derived from the elementary predications may be displayed in the manner defined in BPP11 [2]. For example, given the sentence:

the group of things x10 has the entity concept survey as categorisation.

the rationale is similar to the following:

However, for the ERG grammatical reasoning, the steps that generate these elementary predications are not directly available in the PET parser. The parse tree does provide some form of “template” for the reasoning, and the detailed TFS for each type in the parse tree could in theory be extracted from the ERG. However, it is not currently possible to map between the parse tree and the MRS in the PET output, and without such a mapping it would not be possible to determine which parts of the parse tree were involved. Further research is necessary in this area.

6 Integrating to the CE processing chain

The PET parsing system and the Prolog program that turns the MRS into CE must be provided as a web service in order that they may be called from the CEStore [19], or from other programs such as the CE-embedded Word documents [20]. An architecture is being constructed, running under Debian Linux, for this purpose, as diagrammed below:

The web service is provided by a Prolog program that takes a sentence to be parsed, calls the PET parser with the ERG loaded, receives the output of the parser, and turns this output into CE.
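The request flow of such a service can be outlined as below. The actual service is a Prolog program; this Python sketch only mirrors the sequence of steps (receive sentence, parse, convert MRS to CE, return). The JSON request and response shapes, the `make_handler` function and the two injected callables are assumptions, and the call to PET is deliberately left as a stand-in rather than guessing at its command line.

```python
import json
from typing import Callable, List


def make_handler(parse: Callable[[str], str],
                 mrs_to_ce: Callable[[str], List[str]]) -> Callable[[str], str]:
    """Build a request handler from a parser call and an MRS-to-CE converter.

    parse     -- stand-in for the call to PET with the ERG loaded; returns the
                 MRS as text, or an empty string when there is no parse
    mrs_to_ce -- stand-in for the program that turns the MRS into CE sentences
    """
    def handle(request_body: str) -> str:
        sentence = json.loads(request_body)["sentence"]
        mrs = parse(sentence)
        if not mrs:
            # The parser may fail to produce any parse; report that explicitly.
            return json.dumps({"sentence": sentence, "status": "no parse", "ce": []})
        return json.dumps({"sentence": sentence, "status": "ok", "ce": mrs_to_ce(mrs)})

    return handle


# Usage with stub components in place of PET and the MRS-to-CE converter.
def stub_parse(sentence: str) -> str:
    return "MRS-FOR:" + sentence if "surveys" in sentence else ""


def stub_mrs_to_ce(mrs: str) -> List[str]:
    return ["the organisation x6 known as HTT conducts the group of surveys x10."]


handler = make_handler(stub_parse, stub_mrs_to_ce)
print(handler(json.dumps({"sentence": "HTT are conducting surveys in Adhamiya."})))
```

Injecting the two components keeps the handler independent of how the parser is launched, so the same flow could be exercised in tests without PET installed.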
We aim to support the following diagram of relationships between information in the CE system, the ERG system and the Stanford parser that have been described in this paper:

Additional Prolog code has been constructed that parses the TFS for the ERG and provides some utility functions, such as showing all paths for a given TFS type. It was this information that was used to help generate the “palm tree” diagram (although this was drawn by hand). Such facilities may be useful in converting between linguistic frames and TFS.

7 Integration of Domain reasoning

A key research topic is to integrate the domain reasoning with the ERG/PET system, potentially allowing domain semantics to guide parsing of the text. The simplest possibility is for the domain model to source new lexical entries or grammar rules, following some of the suggestions in translations between CE and TFS noted above. Such integration can be done when the grammar is compiled, creating new grammar information which is then added into the ERG as diagrammed below:

A more interesting, but complex, integration might occur at parse time, where domain reasoning is called upon by the parser to affect the current state of the parse, for example by ruling out inconsistent parses (in effect providing selectional restrictions to rule out alternatives) or by feeding into the ranking of the parses. Such an architecture is diagrammed below:

The development of such integration is complex and will require significant research, as originally proposed under the “deeper semantics” aspect of the task.
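The idea of using domain semantics as selectional restrictions can be illustrated with a small Python sketch. It is a simplification under stated assumptions: it filters and ranks complete candidate parses after the fact rather than intervening inside the parser, and the `CandidateParse` structure, the `RESTRICTIONS` table and the concept names other than survey and conducts are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass
class CandidateParse:
    """A candidate reading, reduced to a score and the domain relations it asserts."""
    score: float
    # Each relation is (relation concept, agent concept, patient concept).
    relations: List[Tuple[str, str, str]]


# Hypothetical domain model: permitted (agent, patient) concept pairs per relation.
RESTRICTIONS: Dict[str, Set[Tuple[str, str]]] = {
    "conducts": {("organisation", "survey")},
}


def is_consistent(parse: CandidateParse,
                  restrictions: Dict[str, Set[Tuple[str, str]]]) -> bool:
    """A parse is consistent if no relation violates a known restriction."""
    for relation, agent, patient in parse.relations:
        allowed = restrictions.get(relation)
        if allowed is not None and (agent, patient) not in allowed:
            return False
    return True


def filter_and_rank(parses: List[CandidateParse],
                    restrictions: Dict[str, Set[Tuple[str, str]]]) -> List[CandidateParse]:
    """Drop inconsistent parses, then order the rest by descending score."""
    kept = [p for p in parses if is_consistent(p, restrictions)]
    return sorted(kept, key=lambda p: p.score, reverse=True)


candidates = [
    CandidateParse(0.9, [("conducts", "survey", "organisation")]),  # violates the model
    CandidateParse(0.6, [("conducts", "organisation", "survey")]),
    CandidateParse(0.4, [("contains", "place", "situation")]),      # unrestricted relation
]
for parse in filter_and_rank(candidates, RESTRICTIONS):
    print(parse.score, parse.relations)
```

Relations with no entry in the table are left unrestricted, so an incomplete domain model removes only the parses it can positively rule out.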
8 Discussion and Conclusion

This paper has presented preliminary work on the use and integration of the ERG system into the BPP11 CE fact extraction process. Application of the ERG to SYNCOIN sentences suggests informally that more accurate linguistic detail can be extracted in comparison to the Stanford parser, but that the ERG system is slower and in some cases fails to generate any parse where the Stanford parser would. This is in keeping with the nature of the deep linguistic approach of the ERG as opposed to the statistical approach of the Stanford parser, where only relatively coarse-grained linguistic information is available. We conclude that the ERG system is of potential benefit in generating higher quality parses, together with greater semantic detail, but that we may still need to use the Stanford parser as a “backup” when the ERG system fails to generate a parse. This makes it particularly important to ensure that both systems provide information in the same CE linguistic model.

Our preliminary techniques described in this report suggest that it is possible to transform linguistic information between the ERG system and the CE-based representation, allowing the integration of the ERG system into our fact extraction approach, and potentially providing a greater degree of involvement by non-linguist users in the understanding and modification of linguistic processing for specific domains, although the transformations involving grammar rules will still require linguistic skill.

Informal inspection of the MRS output by the PET parser suggests that the structures are quite closely linked to the linguistic processing, and that it may be useful to abstract out some of the underlying concepts, as suggested in the section on MRS representation.
This close coupling was described by [12] as resulting from a tension between the need for a generic representation and the fact that MRS relations are ultimately sourced from grammar rules, which by definition must follow linguistic phenomena. Such abstraction may facilitate understanding by non-linguists and the further mapping of the MRS into domain semantics; this is consistent with the approach taken in the BPP11 processing that separated generic from domain specific semantics. We therefore propose that this is a worthwhile area for further research.

One of the authors attended the DELPH-IN summit, where this work was presented [7], and was made aware that the use of domain semantics for assisting the down-stream use of the MRS output was something that would be of interest to the community; such use of domain semantics is a key component of our work in building CE-based analysts' models, and therefore this is an area where we could potentially make a contribution to the DELPH-IN work.

This task has just started, and much work needs to be done; further research should include a better integration of the PET software with the CE-based systems, including the CEStore; refinement and testing of the CE-based representational structures; extending the concept of intermediate MRS representations to cover more general semantic phenomena; the extraction of rationale from the ERG system; and the use of domain semantics to assist the parsing.

9 Acknowledgement

This research was sponsored by the U.S. Army Research Laboratory and the U.K. Ministry of Defence and was accomplished under Agreement Number W911NF-06-3-0001. The views and conclusions contained in this document are those of the author(s) and should not be interpreted as representing the official policies, either expressed or implied, of the U.S. Army Research Laboratory, the U.S. Government, the U.K. Ministry of Defence or the U.K. Government. The U.S. and U.K.
Governments are authorized to reproduce and distribute reprints for Government purposes notwithstanding any copyright notation hereon.

10 References

[1] Xue, P., Mott, D., Braines, D., Poteet, S., Kao, A., Giammanco, C., Pham, T., McGowan, R., Information Extraction using Controlled English to support Knowledge-Sharing and Decision-Making. In 17th ICCRTS “Operationalizing C2 Agility”, Fairfax VA, USA, June 2012.
[2] Mott, D., Braines, D., Poteet, S., Kao, A., Controlled Natural Language to facilitate information extraction Fact extraction using Controlled English, ACITA 2012.
[3] Sowa, J., Common Logic Controlled English, http://www.jfsowa.com/clce/clce07.htm
[4] Mott, D., Summary of Controlled English, ITACS, https://www.usukita.org/papers/5658/details.html, 2010.
[5] Copestake, A., Implementing Typed Feature Structure Grammars, CSLI Publications, 2002.
[6] http://www.delph-in.net/
[7] Mott, D., Poteet, S., Xue, P., Kao, A., Copestake, A., Fact Extraction using Controlled English and the English Resource Grammar, DELPH-IN Summit, July 2013. http://www.delph-in.net/2013/david.pdf
[8] Copestake, A., Flickinger, D., Sag, I.A., and Pollard, C., Minimal Recursion Semantics: an introduction. Research on Language and Computation, 3(2-3):281–332, 2005.
[9] Bender, E.M., Flickinger, D., and Oepen, S., The Grammar Matrix: An open-source starter-kit for the rapid development of cross-linguistically consistent broad-coverage precision grammars. In Proc. Workshop on Grammar Engineering and Evaluation, Coling 2002, pages 8–14, Taipei, Taiwan.
[10] Sag, I.A., Wasow, T., and Bender, E.M., Syntactic Theory: A formal introduction, Second Edition. Stanford: CSLI Publications [distributed by University of Chicago Press], 2003.
[11] Copestake, A., and Flickinger, D., An open-source grammar development environment and broad-coverage English grammar using HPSG. In Proceedings of the Second conference on Language Resources and Evaluation (LREC-2000), Athens, Greece, 2002.
[12] Bender, E.M., personal communication, August 2013.
[13] Rimland, G., Hall, A., A COIN-inspired Synthetic Dataset for Qualitative Evaluation of Hard and Soft Fusion Systems. Information Fusion, Chicago, Illinois, USA, 2011.
[14] The Stanford Parser, A statistical parser, http://nlp.stanford.edu/software/lex-parser.shtml
[15] The PET parser, http://moin.delph-in.net/PetTop
[16] Mott, D., Poteet, S., Xue, P., A New CE-based Lexical Model, https://www.usukitacs.com/node/2271
[17] Mott, D., Braines, D., Laws, S., Xue, P., Exploring Controlled English for representing knowledge in the Linguistic Knowledge Builder, Sept 2012, https://www.usukitacs.com/node/2231
[18] Flickinger, D., Lønning, J.T., Dyvik, H., Oepen, S., Bond, F., SEM-I Rational MT: Enriching Deep Grammars with a Semantic Interface for Scalable Machine Translation, 2005, http://web.mysites.ntu.edu.sg/fcbond/open/pubs/2005-summit-semi.pdf
[19] “CE Store - Alpha Version 2”, https://www.usukitacs.com/node/1670
[20] 4th Battalion Communications Report, https://www.usukitacs.com/node/2341
[21] Flickinger, D., The English Resource Grammar, LOGON technical report #2007-7, www.emmtee.net/reports/7.pdf
[22] Mott, D., Poteet, S., Xue, P., Kao, A., Copestake, A., Using the English Resource Grammar to extend fact extraction capabilities, Fall Meeting 2013, https://www.usukitacs.com/node/2498