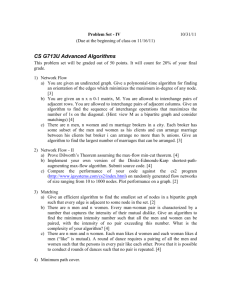

References on basis path testing


http://hissa.nist.gov/publications/nistir5737/#section1, accessed May 13, 2012:

NISTIR 5737

A Method to Determine a Basis Set of Paths to Perform Program Testing

Joseph Poole

Abstract

A major problem in unit testing of programs is to determine which test cases to use. One technique that is in widespread

use is to take the control flowgraph from each of the program functions and calculate a basis set of test paths. A basis set

is a set of paths through the functions that are linearly independent [*defn—see below] and the paths in the basis set can

be used to construct any path through the program flowgraph. Path construction is defined as adding or subtracting the

number of times each edge is traversed. While this is not a total solution for test case generation, it does provide a good

starting set of test cases. This paper gives an algorithm for taking a function's flowgraph and determining a basis set of

paths. Proofs that the algorithm generates a set of paths that fulfill the above requirements are provided. A prototype tool,

STest, is also discussed. Some general ideas for further improvement are also provided.

Keywords: basis testing; branch testing; complexity measure; cyclomatic complexity; program testing; STest; structured

testing; testing

TABLE OF CONTENTS

1 Introduction

2 The Algorithm and Proofs

2.1 Proof of Theorem 1

2.2 Proof of Theorem 2

2.3 Proof of Theorem 3

2.4 The Algorithm Generates the Marked Spanning Tree

3 STest

4 Conclusions

References

1 Introduction

One of the biggest problems in unit testing is how to determine test cases. The tester has to ensure that there are enough

tests to do thorough testing, but not so many tests that all of the limited testing time is used up. There are several different

techniques to pick test cases including using boundary cases, dataflow testing and branch testing[BEIZER90]. No single

technique can supply sufficient test cases, so usually a combination of techniques is used. This paper addresses one of

those techniques, basis set testing. A basis set is a set of linearly independent paths that can be used to construct any path

through the program flow graph. In this paper, we examine a method that determines a set of paths for basis testing and

give proofs that the algorithm fulfills the requirements of basis testing. In this section an overview of basis testing will be

given. Section 2 presents the algorithm and its proofs. Section 3 describes STest, a prototype testing tool

which implements the algorithm. Section 4 presents conclusions.

Testing techniques can be divided into various categories. Black box testing ignores the internals of the software unit.

Structured testing is, on the other hand, "Testing that takes into account the internal mechanism of a system or

component"[IEEE610]. This can be further subdivided into different types including path testing, "Testing designed to

execute all or selected paths through a computer program"[IEEE610] and branch testing, "Testing designed to execute

each outcome of each decision point in a computer program"[IEEE610]. Basis testing, also known as Structured

Testing[SP500-99], is a hybrid between these two techniques. The test paths in a basis set fulfill the requirements of

branch testing and also test all of the paths that could be used to construct any arbitrary path through the graph.

Any function in a program can be represented as a control flow graph. The nodes in this graph are program statements,

while the directed edges are flow of control. Two nodes can be either unconnected, connected by an edge in either

direction or connected by an edge in each direction. When tracing a path from the source to the sink, a back edge is an edge

that leads back to a node that has already been visited. A flowgraph contains one source node and one sink. A source node

is a node which has no incoming edges, while a sink node is a node with no outgoing edges. A program function may

have more than one sink, but this graph can be converted into a graph with only one sink as described in section 2. Some

languages also allow more than one source. This construct is very rare and not used in Structured

Programming[LINGER79].

A basis set is a set of linearly independent test paths. A path can be associated with a vector, where each element in the

vector is the number of times that an edge is traversed. For example, consider a graph with 4 edges: a, b, c and d. The

path ac can be represented by the vector [1 0 1 0]. Paths are combined by adding or subtracting the paths' vector

representations. No path in the basis set can be formed as a combination of the other paths in the basis set. Also, any

path through the control flow graph can be formed as a combination of paths in the basis set.

Figure 1 shows a simplified control flow graph. While a complete flow graph would not have two edges going to the same

destination, this requirement has been relaxed to keep the number of paths to a manageable size for this example. A basis

set for this graph is {ac, ad, bc}. The path bd can be constructed by the combination bc + ad - ac as shown in Table 1.

The set {ac, bd} is not a basis set, because there is no way to construct the path ad. The set {ac, ad, bd} is also a basis

set. Basis sets are not unique; thus a flowgraph can have more than one basis set.

McCabe's complexity measure[MCCABE76] is a software metric that attempts to evaluate how complex a function is.

The number of paths in the basis set is equal to the complexity measure of that function. The value of the complexity

measure is equal to the cyclomatic complexity of the flowgraph if all of the edges were undirected instead of directed.

This can be calculated as e - n + 2, where e is the number of edges and n is the number of nodes.
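As a rough illustration of the formula, the sketch below computes e - n + 2 from a list of directed edges. The function name `cyclomatic_complexity` and the node names are hypothetical. The edge layout used for Figure 1 (a and b from the source to an intermediate node, c and d from there to the sink) is inferred from the paths listed in the text.

```python
def cyclomatic_complexity(edges):
    """Return e - n + 2 for a flowgraph given as (tail, head) pairs."""
    # Every node appears as the tail or head of at least one edge.
    nodes = {node for edge in edges for node in edge}
    return len(edges) - len(nodes) + 2


# Assumed layout of the simplified flowgraph in Figure 1.
figure1 = [
    ("source", "mid"),  # edge a
    ("source", "mid"),  # edge b
    ("mid", "sink"),    # edge c
    ("mid", "sink"),    # edge d
]

print(cyclomatic_complexity(figure1))  # 4 - 3 + 2 = 3
```

The result agrees with the three paths in the basis set {ac, ad, bc}.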

Figure 1. Simplified Control Flowgraph

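| Edge | bd | bc | bc + ad | bc + ad - ac |
|------|----|----|---------|--------------|
| a    | 0  | 0  | 1       | 0            |
| b    | 1  | 1  | 1       | 1            |
| c    | 0  | 1  | 1       | 0            |
| d    | 1  | 0  | 1       | 1            |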

Table 1. Demonstration of Path Construction
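The path construction in Table 1 can be checked mechanically. The following is a minimal sketch, not part of the report: the helper names `path_vector`, `combine` and `rank` are hypothetical, and edge labels are assumed to be single characters. It confirms that bc + ad - ac equals bd and uses the rank of the path vectors to test linear independence.

```python
from fractions import Fraction

EDGES = "abcd"  # edge order used for every vector


def path_vector(path):
    """Count how many times each edge appears in a path such as 'ac'."""
    return [path.count(edge) for edge in EDGES]


def combine(terms):
    """Sum coefficient * path_vector(path) over (coefficient, path) pairs."""
    total = [0] * len(EDGES)
    for coefficient, path in terms:
        for i, count in enumerate(path_vector(path)):
            total[i] += coefficient * count
    return total


def rank(paths):
    """Rank of the path vectors, by Gaussian elimination over the rationals."""
    rows = [[Fraction(x) for x in path_vector(p)] for p in paths]
    found = 0  # number of pivot rows found so far
    for col in range(len(EDGES)):
        pivot = next((r for r in range(found, len(rows)) if rows[r][col] != 0), None)
        if pivot is None:
            continue
        rows[found], rows[pivot] = rows[pivot], rows[found]
        for r in range(len(rows)):
            if r != found and rows[r][col] != 0:
                factor = rows[r][col] / rows[found][col]
                rows[r] = [x - factor * y for x, y in zip(rows[r], rows[found])]
        found += 1
    return found


print(combine([(1, "bc"), (1, "ad"), (-1, "ac")]) == path_vector("bd"))  # True
print(rank(["ac", "ad", "bc"]))        # 3: the three paths are independent
print(rank(["ac", "ad", "bc", "bd"]))  # 3: bd adds nothing new
print(rank(["ac", "bd"]))              # 2: too few paths to form a basis
```

A set of paths is linearly independent exactly when the rank equals the number of paths, which is why {ac, ad, bc} qualifies as a basis set and adding bd to it does not raise the rank.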

2 The Algorithm and Proofs

The algorithm for our basis set method is a modified depth-first search algorithm. The search starts at the source node and

recursively descends down all possible outgoing paths. If the node visited has never been visited before, a default

outgoing edge is picked, then the current path is split into new paths that traverse each outgoing edge, going down the

default edge first. The default edge is any edge that is neither a back edge nor an edge that later causes a node to have two

incoming edges. For example, in the test condition of a pre-test loop, the default edge would be the edge which exits from

the loop. If the edge that traversed the body of the loop was chosen, then a back edge from the last node in the body to the

test condition node would have to be traversed later. If the node visited is a sink (no exit edges), then a path in the basis

set has been found. Otherwise, the path traverses the default edge. A pseudo-code outline of this method is as follows:

FindBasis(node)
    if this node is a sink then
        print out this path as a solution
    else if this node has not been visited before
        mark the node as visited
        label a default edge
        FindBasis(destination of default edge)
        for all other outgoing edges, FindBasis(destination of edge)
    else
        FindBasis(destination of default edge)

The flowgraph from Figure 1 can be used to demonstrate this method. The algorithm starts at the source node. The default

edge can be either edge; let us pick a. Edge a is traversed and the intermediate node visited. Edge c is picked as the default

edge for the intermediate node. Edge c is traversed and the final node visited. There are no outgoing edges, so path ac is

added to the basis set. The algorithm has traversed the default edge of the intermediate node, so now d is traversed. The

destination is again the sink, so ad is added to the basis set. The intermediate node has had all of its edges traversed,

therefore b from the source node is traversed. The destination of b is the intermediate node which has been visited before.

Therefore the default edge c leading to the sink is traversed and the path bc is added to the basis set. The source has had

all outgoing edges traversed, so the algorithm terminates. A program trace of this example would look as follows:

FindBasis(source)
    pick default -> a
    FindBasis(destination(a)) -> FindBasis(intermediate)
        pick default -> c
        FindBasis(destination(c)) -> FindBasis(sink)
            print ac
        FindBasis(destination(d)) -> FindBasis(sink)
            print ad
    FindBasis(destination(b)) -> FindBasis(intermediate)
        FindBasis(destination(default)) -> FindBasis(destination(c)) -> FindBasis(sink)
            print bc
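The pseudo-code and trace above can be turned into a short runnable sketch. This is an illustration under stated assumptions, not the STest implementation: the function name `find_basis`, the dictionary layout and the node names are hypothetical, edge labels are assumed to be single characters, and the default edge of each node is chosen by searching backwards from the sink (the marking procedure described later in Section 2.2) rather than by inspecting loop constructs.

```python
from collections import defaultdict


def find_basis(edges, source, sink):
    """Return basis paths as strings of edge labels.

    edges maps each edge label to a (tail, head) pair.
    """
    out_edges = defaultdict(list)
    in_edges = defaultdict(list)
    for label, (tail, head) in edges.items():
        out_edges[tail].append(label)
        in_edges[head].append(label)

    # Assumption: pick each node's default edge by a backward search from
    # the sink, so following default edges always ends at the sink.
    default = {}
    seen = {sink}
    stack = [sink]
    while stack:
        node = stack.pop()
        for label in in_edges[node]:
            tail = edges[label][0]
            if tail not in seen:
                seen.add(tail)
                default[tail] = label
                stack.append(tail)

    visited = set()
    paths = []

    def visit(node, path):
        if node == sink:
            paths.append("".join(path))
            return
        if node not in visited:
            # First visit: default edge first, then every other edge.
            visited.add(node)
            first = default[node]
            todo = [first] + [e for e in out_edges[node] if e != first]
        else:
            # Later visits: follow the default edge only.
            todo = [default[node]]
        for label in todo:
            path.append(label)
            visit(edges[label][1], path)
            path.pop()

    visit(source, [])
    return paths


# Assumed layout of Figure 1: a, b leave the source; c, d enter the sink.
figure1 = {
    "a": ("source", "mid"),
    "b": ("source", "mid"),
    "c": ("mid", "sink"),
    "d": ("mid", "sink"),
}
print(find_basis(figure1, "source", "sink"))  # ['ac', 'ad', 'bc']
```

The output follows the same order as the trace: ac, then ad, then bc. Choosing defaults by backward search is one way to satisfy the rule that a default edge must not be a back edge. Other selection rules may yield a different, equally valid basis set.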

There are three assumptions that are required for this algorithm to work:

Assumption 1. There exists one source and one sink

Assumption 2. From the source, there exists a path to any node

Assumption 3. From any node, there exists a path to the sink

The algorithm will produce a basis set if there is more than one sink, but the number of paths determined is no longer

equal to the complexity of the flowgraph. To convert a flowgraph with more than one sink to one with one sink, add edges

to one sink from all of the other sinks in the graph. Assumption 2 means that the function contains no unreachable code,

while Assumption 3 prohibits useless code.
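The conversion to a single sink can be sketched as follows. The function name `merge_sinks` is hypothetical, and the graph is assumed to be a list of (tail, head) pairs with sortable node names. Which sink is kept is an arbitrary choice, since the text does not specify one.

```python
def merge_sinks(edges):
    """Return a copy of the edge list in which only one sink remains."""
    tails = {tail for tail, _ in edges}
    heads = {head for _, head in edges}
    # A sink has incoming edges but no outgoing edges.
    sinks = sorted(heads - tails)
    if len(sinks) <= 1:
        return list(edges)
    keep, *others = sinks
    # Add an edge from every other sink to the one that is kept.
    return list(edges) + [(other, keep) for other in others]


two_exits = [("entry", "exit1"), ("entry", "exit2")]
print(merge_sinks(two_exits))
# [('entry', 'exit1'), ('entry', 'exit2'), ('exit2', 'exit1')]
```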

For the paths generated by this algorithm to form a basis set, three theorems must be true:

Theorem 1. Each edge in the control graph will be traversed

Theorem 2. The set of paths in the basis set are linearly independent.

Theorem 3. Any path through the control graph can be formed as a combination of paths of the basis set.

Theorem 1 is required to show that the set of paths produced by the algorithm can be used for branch testing. Theorem 2

and Theorem 3 are needed to show that the paths are a basis set. Because of the complexity of the proofs for Theorem 2

and Theorem 3, the proofs will be done in two steps. The first step is to generate paths using a flowgraph with certain

edges marked and prove that Theorem 2 and Theorem 3 are true for that marked flowgraph. The second step will show

that the algorithm produces the same set of paths as does the method using the marked flowgraph.

2.1 Proof of Theorem 1

To prove Theorem 1, we will first prove Lemma 1.1 which states that all nodes will be visited, then show that all of the

edges are traversed.

Lemma 1.1. All nodes will be visited.

This can be proven by induction on the length of the path between nodes.

Base Case: If a node is the destination of an edge from a visited node, then that node will be visited.

This follows because when the previous node is first visited, all outgoing edges will be traversed and their destination

nodes will be visited.

Induction Step: Assume that any node that is n steps away from a visited node will be visited, prove that a node

n+1 steps away will be visited.

When the parent of the n+1 node, which is n steps away, is visited, all of its outgoing edges will be traversed. Since the

n+1st node is a destination of one of these edges, it must be visited. This proves the inductive step. We start the algorithm

at the source node and know from Assumption 2 that a path can be traced from the source to any node, therefore Lemma

1.1 is true.

On the first visit all edges out of a node will be traversed. Since Lemma 1.1 shows that all the nodes will be visited, we

know that all the outgoing edges of all nodes will be traversed. Therefore all of the edges in the control graph are

traversed as Theorem 1 states.

2.2 Proof of Theorem 2

Proving Theorem 2 is more complex. First we will examine a flow graph and label some of the edges. We will then

construct a set of paths from this labeled flowgraph and show that the paths are linearly independent. In section 2.4, we will

show that our algorithm generates this set of paths.

Label one outgoing edge of each node by performing a depth-first search from the sink and reversing the arcs. The marked

edges form a spanning tree. A spanning tree is a graph where all of the nodes are connected and the removal of any edge

causes the graph to become disconnected. See Figure 2 for a sample marked graph.

Figure 2. Flowgraph with marked edges

We will now prove three lemmas:

Lemma 2.1 The marked edges, ignoring arc direction, form a spanning tree.

Lemma 2.2 There is one and only one path of only marked edges from the source to the sink.

Lemma 2.3 There are e-n+1 chords (unmarked edges).

Lemma 2.1 and Lemma 2.2 are true because the graph is generated by a depth first search. If e is the total number of

edges and there are n-1 marked edges, there must be e-(n-1) unmarked edges, so Lemma 2.3 is true. These edges are

called chords.

We will now add paths to the set of basis paths. The goal is to associate each path with a unique chord. The one marked

path through the graph is the first path to be placed in the basis set. Now add all additional paths from the source to the

sink that include only one chord. There are some chords that are not on paths in the basis set. For each remaining chord c,

it is impossible to trace a path from the source to c without including another chord d. Some paths will have more than

one d chord. Chord d is called a required chord for c.

We know that there exists a separate path in the basis set that includes d but not c. The node at the head of c must have a

marked outgoing edge since every node has one outgoing edge marked. This edge would have been chosen over c for

traversal because this would be a path with only one chord.

All of the paths in the set are linearly independent, because each path is associated with an edge (the chord) that no other

path contains. The only exceptions are the paths that have required chords. The path with both the required chord and the

unique chord cannot be eliminated from the basis set because it is the only path that contains the new chord. There is no

combination that will include that chord. The path with only the required edge cannot be eliminated because there are no

paths that contain the new edge without the required chord. There is no way to create a linear combination that is

equivalent to the path with only the required edge. This shows that Theorem 2 is true.

2.3 Proof of Theorem 3

Theorem 3 states that any path through the flow graph can be generated as a linear combination of paths in the basis set.

This will be shown by considering the arbitrary path p to be a combination of chords of the graph. To construct p, for each

chord in p, add the basis set path associated with that chord the number of times the chord appears in p. The combination

of paths will now have some extra traverses of marked edges. These can be removed by subtracting the path of only

marked edges the required number of times.

For an example, use the flowgraph from Figure 1. Edges a and c are the marked edges. The basis set is { ac, ad, bc}. The

path bd is created by adding the path containing the chord b, which is path bc, and the path ad, which contains the chord

d. There is an extra occurrence of the edges a and c. These are removed by subtracting path ac, the path containing only

marked edges.

An example with a loop uses the flow graph in Figure 3. The construction of path adadbc is presented in Table 2. The

basis set of the flowgraph is { ac, bc, adac}. The chords are b and d. Constructing the path first uses the path associated

with chord b, path bc, once because b only appears once in the target path. The other chord d appears twice so the basis

path adac appears twice in the set. All of the chords are now in the set, but there are two extra occurrences of edges a and

c. These are removed by subtracting the vector of the all-marked-edge path ac twice.

Figure 3. Simplified Flowgraph with Looping

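| Edge | adadbc | bc | bc + 2(adac) | bc + 2(adac) - 2(ac) |
|------|--------|----|--------------|----------------------|
| a    | 2      | 0  | 4            | 2                    |
| b    | 1      | 1  | 1            | 1                    |
| c    | 1      | 1  | 3            | 1                    |
| d    | 2      | 0  | 2            | 2                    |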

Table 2. Path Construction for Complex Example
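The construction used in both examples can be written as a small routine. This is a sketch under assumptions that are not stated in the report: the name `construct` is hypothetical, edge labels are single characters, and each chord's basis path contains that chord exactly once and no other chord (so graphs with required chords are not handled). Under those assumptions the all-marked path is subtracted one time fewer than the number of basis paths added, because every added path runs from source to sink once.

```python
from collections import Counter


def construct(target, chord_paths, marked_path):
    """Rebuild the edge counts of `target` from basis paths.

    chord_paths maps each chord to the basis path associated with it.
    marked_path is the basis path made only of marked edges.
    Returns (edge counts of the combination, times marked_path was subtracted).
    """
    total = Counter()
    added = 0
    for chord, basis_path in chord_paths.items():
        times = target.count(chord)  # add the path once per chord occurrence
        added += times
        for edge in basis_path:
            total[edge] += times
    # Remove the extra traversals of marked edges.
    extra = added - 1
    for edge in marked_path:
        total[edge] -= extra
    counts = {edge: n for edge, n in total.items() if n != 0}
    return counts, extra


# Figure 3 example from Table 2: chords b and d, marked path ac.
counts, extra = construct("adadbc", {"b": "bc", "d": "adac"}, "ac")
print(counts == dict(Counter("adadbc")), extra)  # True 2

# Figure 1 example: chords b and d, marked path ac.
counts, extra = construct("bd", {"b": "bc", "d": "ad"}, "ac")
print(counts == dict(Counter("bd")), extra)  # True 1
```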

2.4 The Algorithm Generates the Marked Spanning Tree

The final step is to show that the basis set algorithm generates the marked spanning tree used for the previous proofs. This

can be shown to be true by examining the set of default edges generated by the algorithm. The default edges generated by

the algorithm are the same as the marked edges of the spanning tree. Each node will have one outgoing edge marked. If

the flows were reversed in direction, this would be equivalent to each node having one incoming edge marked. Since each

node is visited and only n-1 edges are marked, the marked edges have to form a spanning tree. The default edge out of a

node representing a test condition has to be carefully selected to ensure that all of the nodes are visited. For example, in a

pre-test loop, the edge representing the exit case must be taken. If the edge representing the body of the loop was taken,

the last node of the body will mark the edge going into the testing node. There would be no way to visit the node that is

the destination of the exit edge because the node has already marked its one outgoing edge.

3 STest

STest is a prototype tool built to implement the basis set algorithm. It relies on another tool, unravel[NISTIR5691] to

generate the program flow graphs. unravel is a static analysis tool that performs program slicing[WEISER84]. As part of

its processing, it generates an intermediate representation of the program including control and data flow. A LIF

(language independent format) file is this intermediate format. LIF is described as follows in [NISTIR5691]:

The language independent format represents the program as an annotated flow graph of nodes and edges. Nodes are

generated to represent semantic or syntactic units of the program that correspond to statements or parts of statements.

Edges are of two types, control flow and requires. A control flow edge between two nodes indicates the flow of control

from one node to the other. The requires edges from a node indicate other nodes that should be included in any slice

containing the node the edges are from. The requires edges are a general mechanism for specifying control dependence

between nodes, pieces of required source code, or other slicing dependencies. The annotations specify location of

corresponding source code, variables referenced or assigned and special statements such as goto and return.

The input of the STest program is a LIF file and the program source file. The LIF file contains the flow control graph

information for all of the functions in that source code file. The LIF file also contains the mapping of the nodes to the

source code file. The base name of the LIF file is assumed to be the same as the source code file. For example, file1.lif is

the LIF file created from file1.c.

The program has two main components, the representation of the control flow graph and the parser function. The job of

the parser is to take the LIF file and construct the control flowgraph. The parser function takes the representation as an

input parameter and calls methods of the representation to create the control flowgraph.

The control flow graph is implemented as a FlowGraph object. This object contains data of each of the nodes in the source

file, a list of which nodes are source nodes and the current path through the graph. Each node in the flow graph contains

the number of incoming paths into the node, the number of outgoing paths out of the node, any program text associated with

the node, whether that node has been visited as part of the search and a linked list of all of the nodes which are adjacent to

the node. There is a unique identification number, the NodeID, assigned to each of the nodes to distinguish them. The

FlowGraph object has methods which perform the following operations:

initialize a new flowgraph

make an edge between two nodes

add source code to a node

make a node a source node

traverse a node as part of a search

traverse the entire set of graphs, starting at each source node.

The initialize step sets the values for each node to their defaults. Connecting two nodes adds a node to the starting node's

linked list of adjacent nodes. It also increments the outgoing count of the start node and the incoming count of the ending

node. Testing to see if two nodes are connected searches the linked list of the start node to see if the end node is present.

The return value of the function is true if the end node is present, otherwise it is false. To add source code to a node

requires the starting line and column of the text and the ending line and column. The program searches the source code

file for the text and then copies it into the data for that node. Making a node a source node involves adding the NodeID

number to a list of the source nodes.
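The data held by the FlowGraph object and its simpler operations might look roughly like the sketch below. All class, field and method names are hypothetical. A Python list stands in for the linked list of adjacent nodes, and `add_text` takes the text directly instead of locating it in the source file by line and column.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    incoming: int = 0      # number of edges entering the node
    outgoing: int = 0      # number of edges leaving the node
    text: str = ""         # program text associated with the node
    visited: bool = False  # set during the search
    adjacent: list = field(default_factory=list)  # NodeIDs one edge away


class FlowGraph:
    def __init__(self, node_count):
        # Initialize every node to its default values, keyed by NodeID.
        self.nodes = {node_id: Node() for node_id in range(node_count)}
        self.sources = []

    def connect(self, start, end):
        """Make an edge from start to end and update both counts."""
        self.nodes[start].adjacent.append(end)
        self.nodes[start].outgoing += 1
        self.nodes[end].incoming += 1

    def is_connected(self, start, end):
        """True if end is in the adjacency list of start."""
        return end in self.nodes[start].adjacent

    def add_text(self, node_id, text):
        self.nodes[node_id].text = text

    def make_source(self, node_id):
        self.sources.append(node_id)


graph = FlowGraph(3)
graph.make_source(0)
graph.connect(0, 1)
graph.connect(1, 2)
print(graph.is_connected(0, 1), graph.is_connected(1, 0))  # True False
```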

Traversing a node is the heart of the program. The function checks to see if there are any outgoing paths from the node. If

there are no outgoing edges, then this node is a sink and the path is printed out. If the node has not been visited, the

function determines a default edge according to the algorithm. The function then marks the node as visited and then

traverses all of the outgoing edges starting with the default edge. If the node was visited, then traverse is called recursively

on the node that is the destination of the default edge. The program starts by traversing each start node in turn.

There are several extensions that can be made to improve STest. A major one is to add Myers' extension to the complexity

measure[MYERS77]. The test condition for flow of control constructs can be a combination of conditions. This condition

could be rewritten as two or more control constructs. For example, the test A and B for an if-clause can be rewritten as

two nested if-clauses. The complexity for the first construct is 2, while the second construct has a complexity of 3. Both

variations have the same effect. Myers proposed to extend the complexity measure by counting the boolean operations in

the test condition. This would allow both constructs to have the same complexity value. In the first case, the construct has

a complexity of 2 plus 1 for the "and" for a final complexity of 3. Myers' paper contains a fuller discussion.
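The effect of the extension, as described above, can be seen in a small experiment. The sketch below is only an illustration: it analyses Python source with the standard `ast` module rather than C, the function name `complexities` is hypothetical, and it counts only `if` and `while` statements as decisions. The plain measure is taken as the number of decisions plus one, and the extended measure adds one for each boolean operator in a test condition.

```python
import ast


def complexities(source):
    """Return (plain, extended) complexity for a snippet of Python source."""
    decisions = 0
    boolean_ops = 0
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.If, ast.While)):
            decisions += 1
            # Count the and/or operators inside this test condition.
            for sub in ast.walk(node.test):
                if isinstance(sub, ast.BoolOp):
                    boolean_ops += len(sub.values) - 1
    plain = decisions + 1
    return plain, plain + boolean_ops


combined = "if a and b:\n    x = 1\n"
nested = "if a:\n    if b:\n        x = 1\n"

print(complexities(combined))  # (2, 3)
print(complexities(nested))    # (3, 3)
```

With the extension, the combined test and the nested form receive the same value of 3.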

4 Conclusions

This report presents a method for determining a basis set for testing. The basis set gives the unit tester a set of paths that

can help in determining test cases for testing. The algorithm presented lends itself well to automatic testing. There are

several limitations to the technique. The major limitation is that the method is unreliable on unstructured code. Useful test

cases may be generated, but the number of paths in the basis set is no longer equal to the complexity measure of the

flowgraph.

This algorithm provides a set of paths, but it does not provide data that can be used in the actual execution of the test

cases. The data is impossible to provide for some functions. For example, in the following C function, there is no value of

x that will execute the body in the first statement, but not execute the body in the second.

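int y, z;    /* globals assigned by f; declared so the example compiles */

void f(int x) {
    if (x < 5) y = 2;    /* body of the first statement */
    if (x < 5) z = 1;    /* same condition: both bodies run or neither does */
}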

Basis set testing is not a complete testing solution; see [BEIZER90] and [EVANG84] for a discussion. Basis set testing

does provide a good starting point. More test cases using other techniques can then be added for better testing coverage.

References

BEIZER90: B. Beizer, Software Testing Techniques, 2nd Edition, Van Nostrand Reinhold, 1990.

EVANG84: M. Evangelist, "An Analysis of Control Flow Complexity", Eighth International Computer Software and

Application Conference, pg. 388 - 396, 1984.

HETZEL84: W. Hetzel, The Complete Guide to Software Testing, QED Information Sciences, Inc., 1984.

IEEE610: ANSI/IEEE Std 610.12-1990, "Glossary of Software Engineering Terminology", The Institute of Electrical and

Electronics Engineers, Inc., February, 1991.

LINGER79: R. Linger, H. Mills and B. Witt, Structured Programming: Theory and Practice, Addison-Wesley Publishing

Company, 1979.

MCCABE76: T. McCabe, "A Complexity Measure", IEEE Transactions on Software Engineering, Vol SE-2, Number 4,

December 1976, pg. 308-320.

MYERS77: G. Myers, "An Extension to the Cyclomatic Measure of Program Complexity", SIGPLAN Notices, October,

1977.

NISTIR5691: NIST IR 5691, J. Lyle, D. Wallace, J. Graham, K. Gallagher, J. Poole and D. Binkley, "Unravel: A CASE

Tool to Assist Evaluation of High Integrity Software", volumes 1 and 2, Department of Commerce, August, 1995.

SP500-99: NBS Special Publication 500-99, T. McCabe, "Structured Testing: A Software Testing Methodology Using

the Cyclomatic Complexity Measure", December, 1982.

WEISER84: M. Weiser, "Program Slicing", IEEE Transactions on Software Engineering, 10:352-357, July 1984.

+++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++


*defn of linearly independent paths:

Cyclomatic complexity : From Wikipedia, the free encyclopedia, http://en.wikipedia.org/wiki/Cyclomatic_complexity,

accessed May 13, 2012

Cyclomatic complexity (or conditional complexity) is a software metric (measurement). It was developed by Thomas J.

McCabe, Sr. in 1976 and is used to indicate the complexity of a program. It directly measures the number of linearly

independent paths through a program's source code. The concept, although not the method, is somewhat similar to that of

general text complexity measured by the Flesch-Kincaid Readability Test.

Cyclomatic complexity is computed using the control flow graph of the program: the nodes of the graph correspond to

indivisible groups of commands of a program, and a directed edge connects two nodes if the second command might be

executed immediately after the first command. Cyclomatic complexity may also be applied to individual functions,

modules, methods or classes within a program.

One testing strategy, called Basis Path Testing by McCabe who first proposed it, is to test each linearly independent path

through the program; in this case, the number of test cases will equal the cyclomatic complexity of the program.[1]


Description

A control flow graph of a simple program. The program begins executing at the red node, then enters a loop (group of three

nodes immediately below the red node). On exiting the loop, there is a conditional statement (group below the loop), and

finally the program exits at the blue node. For this graph, E = 9, N = 8 and P = 1, so the cyclomatic complexity of the

program is 9 − 8 + (2*1) = 3.

The cyclomatic complexity of a section of source code is the count of the number of linearly independent paths through

the source code. For instance, if the source code contained no decision points such as IF statements or FOR loops, the

complexity would be 1, since there is only a single path through the code. If the code had a single IF statement containing

a single condition there would be two paths through the code, one path where the IF statement is evaluated as TRUE and

one path where the IF statement is evaluated as FALSE.

Mathematically, the cyclomatic complexity of a structured program[note 1] is defined with reference to the control flow

graph of the program, a directed graph containing the basic blocks of the program, with an edge between two basic blocks

if control may pass from the first to the second. The complexity M is then defined as:[2]

M = E − N + 2P

where

E = the number of edges of the graph

N = the number of nodes of the graph

P = the number of connected components (exit nodes).

The same function as above, shown as a strongly connected control flow graph, for calculation via the alternative method.

For this graph, E = 10, N = 8 and P = 1, so the cyclomatic complexity of the program is 10 − 8 + 1 = 3.

An alternative formulation is to use a graph in which each exit point is connected back to the entry point. In this case, the

graph is said to be strongly connected, and the cyclomatic complexity of the program is equal to the cyclomatic number of

its graph (also known as the first Betti number), which is defined as:[2]

M = E − N + P

This may be seen as calculating the number of linearly independent cycles that exist in the graph, i.e. those cycles that do

not contain other cycles within themselves. Note that because each exit point loops back to the entry point, there is at least

one such cycle for each exit point.

For a single program (or subroutine or method), P is always equal to 1. Cyclomatic complexity may, however, be applied

to several such programs or subprograms at the same time (e.g., to all of the methods in a class), and in these cases P will

be equal to the number of programs in question, as each subprogram will appear as a disconnected subset of the graph.

It can be shown that the cyclomatic complexity of any structured program with only one entrance point and one exit point

is equal to the number of decision points (i.e., 'if' statements or conditional loops) contained in that program plus one. [2][3]

Cyclomatic complexity may be extended to a program with multiple exit points; in this case it is equal to:

π − s + 2

where π is the number of decision points in the program, and s is the number of exit points.[3][4]
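The three formulas above can be compared side by side. The function names below are hypothetical. The figure of two decision points for the example graph (one loop and one conditional) is read off the figure description, not stated in the text.

```python
def complexity_from_graph(edges, nodes, components=1):
    """M = E - N + 2P for the ordinary control flow graph."""
    return edges - nodes + 2 * components


def complexity_strongly_connected(edges, nodes, components=1):
    """M = E - N + P when each exit is connected back to the entry."""
    return edges - nodes + components


def complexity_from_decisions(decision_points, exit_points=1):
    """M = pi - s + 2; with one exit this is decision points plus one."""
    return decision_points - exit_points + 2


print(complexity_from_graph(9, 8))           # 9 - 8 + 2 = 3
print(complexity_strongly_connected(10, 8))  # 10 - 8 + 1 = 3
print(complexity_from_decisions(2))          # 2 - 1 + 2 = 3
```

All three routes give 3 for the example program. The strongly connected form has one more edge (the added return edge) and counts each component once instead of twice.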

Formal definition

An even subgraph of a graph (also known as an Eulerian subgraph) is one where every vertex is incident with an even

number of edges; such subgraphs are unions of cycles and isolated vertices. In the following, even subgraphs will be

identified with their edge sets, which is equivalent to only considering those even subgraphs which contain all vertices of

the full graph.

The set of all even subgraphs of a graph is closed under symmetric difference, and may thus be viewed as a vector space

over GF(2); this vector space is called the cycle space of the graph. The cyclomatic number of the graph is defined as the

dimension of this space. Since GF(2) has two elements, the cyclomatic number is also equal to the 2-logarithm of the

number of elements in the cycle space.

A basis for the cycle space is easily constructed by first fixing a maximal spanning forest of the graph, and then

considering the cycles formed by one edge not in the forest and the path in the forest connecting the endpoints of that

edge; these cycles constitute a basis for the cycle space. Hence, the cyclomatic number also equals the number of edges

not in a maximal spanning forest of a graph. Since the number of edges in a maximal spanning forest of a graph is equal to

the number of vertices minus the number of components, the formula E − N + P above for the cyclomatic number follows.[5]
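The spanning forest argument suggests a direct way to compute the cyclomatic number: build a spanning forest and count the edges left out of it. The sketch below does this with a union-find structure. The function name `cyclomatic_number` and the three-node example graph are hypothetical, and edges are treated as undirected.

```python
def cyclomatic_number(vertices, edges):
    """Count the edges that are not in a spanning forest of the graph."""
    parent = {v: v for v in vertices}

    def find(v):
        # Follow parent links to the root, shortening the chain on the way.
        while parent[v] != v:
            parent[v] = parent[parent[v]]
            v = parent[v]
        return v

    left_out = 0
    for u, v in edges:
        root_u, root_v = find(u), find(v)
        if root_u == root_v:
            left_out += 1            # edge closes a cycle: not in the forest
        else:
            parent[root_u] = root_v  # edge joins two trees: in the forest
    return left_out


vertices = ["entry", "mid", "exit"]
edges = [
    ("entry", "mid"), ("entry", "mid"),  # two parallel edges
    ("mid", "exit"), ("mid", "exit"),    # two parallel edges
    ("exit", "entry"),                   # added edge from exit back to entry
]
print(cyclomatic_number(vertices, edges))  # 5 - 3 + 1 = 3
```

For this connected graph the count equals E − N + P with E = 5, N = 3 and P = 1.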

For the more topologically inclined, cyclomatic complexity can alternatively be defined as a relative Betti number, the

size of a relative homology group:

$M := b_1(G, t) := \operatorname{rank} H_1(G, t)$

which is read as “the first homology of the graph G, relative to the terminal nodes t”. This is a technical way of saying

“the number of linearly independent paths through the flow graph from an entry to an exit”, where:

“linearly independent” corresponds to homology, and means one does not double-count backtracking;

“paths” corresponds to first homology: a path is a 1-dimensional object;

“relative” means the path must begin and end at an entry or exit point.

This corresponds to the intuitive notion of cyclomatic complexity, and can be calculated as above.

Alternatively, one can compute this via absolute Betti number (absolute homology – not relative) by identifying (gluing

together) all terminal nodes on a given component (or equivalently, draw paths connecting the exits to the entrance), in

which case (calling the new, augmented graph $\tilde{G}$), one obtains:

$$M = b_1(\tilde{G}) = \operatorname{rank} H_1(\tilde{G}).$$

This corresponds to the characterization of cyclomatic complexity as “number of loops plus number of components”.

Etymology / Naming

The name Cyclomatic Complexity presents some confusion, as this metric does not only count cycles (loops) in the

program. Instead, the name refers to the number of different cycles in the program control flow graph, after having added

an imagined branch back from the exit node to the entry node.[2]

A better name for popular usage would be Conditional Complexity, as "it has been found to be more convenient to count

conditions instead of predicates when calculating complexity".[6] Cyclomatic complexity is also known as the number of

FAN IN and FAN OUT in a program.

Applications

Limiting complexity during development

One of McCabe's original applications was to limit the complexity of routines during program development; he

recommended that programmers should count the complexity of the modules they are developing, and split them into

smaller modules whenever the cyclomatic complexity of the module exceeded 10.[2] This practice was adopted by the

NIST Structured Testing methodology, with an observation that since McCabe's original publication, the figure of 10 had

received substantial corroborating evidence, but that in some circumstances it may be appropriate to relax the restriction

and permit modules with a complexity as high as 15. As the methodology acknowledged that there were occasional

reasons for going beyond the agreed-upon limit, it phrased its recommendation as: "For each module, either limit

cyclomatic complexity to [the agreed-upon limit] or provide a written explanation of why the limit was exceeded."[7]
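As a rough illustration of how such a limit might be checked automatically, the sketch below estimates complexity by counting decision points and adding one. This is an approximation, not the NIST or McCabe tooling: the helper `estimate_complexity`, the set of counted node types, and the sample function are all assumptions made for this example, and it uses Python's standard `ast` module on Python source.

```python
import ast

# Node types treated as one decision point each (an assumed, simplified set).
DECISION_NODES = (ast.If, ast.IfExp, ast.For, ast.While, ast.ExceptHandler)

LIMIT = 10  # the threshold McCabe recommended, as quoted in the text


def estimate_complexity(source):
    """Approximate cyclomatic complexity as (decision points + 1)."""
    decisions = 0
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, DECISION_NODES):
            decisions += 1
        elif isinstance(node, ast.BoolOp):
            # "a and b and c" contributes two extra conditions.
            decisions += len(node.values) - 1
    return decisions + 1


# Hypothetical module under review.
sample = '''
def classify(x):
    if x < 0 and x != -1:
        return "negative"
    for i in range(x):
        if i % 2:
            continue
    return "done"
'''

complexity = estimate_complexity(sample)
needs_explanation = complexity > LIMIT
```

Counting each operand of a boolean operator separately follows the "count conditions" convention; a tool that counts only whole predicates would report a lower number for the same code.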

Implications for Software Testing

Another application of cyclomatic complexity is in determining the number of test cases that are necessary to achieve

thorough test coverage of a particular module.

It is useful because of two properties of the cyclomatic complexity, M, for a specific module:

M is an upper bound for the number of test cases that are necessary to achieve a complete branch coverage.

M is a lower bound for the number of paths through the control flow graph (CFG). Assuming each test case takes

one path, the number of cases needed to achieve path coverage is equal to the number of paths that can actually be

taken. But some paths may be impossible, so although the number of paths through the CFG is clearly an upper

bound on the number of test cases needed for path coverage, this latter number (of possible paths) is sometimes

less than M.

All three of the above numbers may be equal: branch coverage ≤ cyclomatic complexity ≤ number of paths.

For example, consider a program that consists of two sequential if-then-else statements.

    if( c1() )
        f1();
    else
        f2();

    if( c2() )
        f3();
    else
        f4();

Figure: The control flow graph of the source code above; the red circle is the entry point of the function, and the blue circle is the exit point. The exit has been connected to the entry to make the graph strongly connected.

In this example, two test cases are sufficient to achieve a complete branch coverage, while four are necessary for complete

path coverage. The cyclomatic complexity of the program is 3, since the strongly connected graph for the program contains 9

edges, 7 nodes and 1 connected component (9 − 7 + 1 = 3).
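The three numbers for this example can be reproduced with a short script. This is a sketch under assumptions: the node names and the adjacency-list encoding of the control flow graph are chosen for illustration, and the minimum number of branch-coverage tests is found by brute force, which is only practical for very small graphs.

```python
from itertools import combinations

# Assumed encoding of the control flow graph: 7 nodes, 8 edges.
cfg = {
    "c1": ["f1", "f2"],
    "f1": ["c2"],
    "f2": ["c2"],
    "c2": ["f3", "f4"],
    "f3": ["exit"],
    "f4": ["exit"],
    "exit": [],
}


def all_paths(graph, node, target):
    """List every path from node to target in an acyclic graph."""
    if node == target:
        return [[node]]
    return [[node] + rest
            for nxt in graph[node]
            for rest in all_paths(graph, nxt, target)]


def edges_of(path):
    return set(zip(path, path[1:]))


paths = all_paths(cfg, "c1", "exit")
all_edges = {(u, v) for u, succs in cfg.items() for v in succs}


def min_tests_for_branch_coverage():
    """Smallest number of paths that together traverse every edge."""
    for k in range(1, len(paths) + 1):
        for combo in combinations(paths, k):
            if set().union(*(edges_of(p) for p in combo)) == all_edges:
                return k


# Add the imagined exit -> entry edge to make the graph strongly connected.
num_edges = len(all_edges) + 1
num_nodes = len(cfg)
M = num_edges - num_nodes + 1

branch_tests = min_tests_for_branch_coverage()
num_paths = len(paths)
```

Here `branch_tests`, `M` and `num_paths` come out as 2, 3 and 4, matching the ordering branch coverage ≤ cyclomatic complexity ≤ number of paths.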

In general, in order to fully test a module all execution paths through the module should be exercised. This implies a

module with a high complexity number requires more testing effort than a module with a lower value since the higher

complexity number indicates more pathways through the code. This also implies that a module with higher complexity is

more difficult for a programmer to understand since the programmer must understand the different pathways and the

results of those pathways.

Unfortunately, it is not always practical to test all possible paths through a program. Considering the example above, each

time an additional if-then-else statement is added, the number of possible paths doubles. As the program grows in this

fashion, it quickly reaches the point where testing all of the paths is impractical.
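To make the growth concrete, the sketch below compares the number of paths with the cyclomatic complexity for n sequential if-then-else statements. It assumes the same graph shape as the example above: each statement contributes three nodes and four edges, plus one exit node and one imagined exit-to-entry edge. The function names are illustrative.

```python
def path_count(n):
    """Each if-then-else independently doubles the number of paths."""
    return 2 ** n


def cyclomatic_complexity(n):
    """E - N + P for the strongly connected graph of n sequential if-then-else."""
    edges = 4 * n + 1   # four edges per statement, plus the exit -> entry edge
    nodes = 3 * n + 1   # condition, then-branch, else-branch, plus the exit node
    return edges - nodes + 1


growth = [(n, cyclomatic_complexity(n), path_count(n)) for n in (2, 10, 20)]
```

Under these assumptions the complexity grows linearly (n + 1) while the path count grows exponentially: 20 statements give a complexity of 21 but 1,048,576 paths.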

One common testing strategy, espoused for example by the NIST Structured Testing methodology, is to use the

cyclomatic complexity of a module to determine the number of white-box tests that are required to obtain sufficient

coverage of the module. In almost all cases, according to such a methodology, a module should have at least as many tests

as its cyclomatic complexity; in most cases, this number of tests is adequate to exercise all the relevant paths of the

function.[7]

As an example of a function that requires more than simply branch coverage to test accurately, consider again the above

function, but assume that to avoid a bug occurring, any code that calls either f1() or f3() must also call the other. [note 2]

Assuming that the results of c1() and c2() are independent, that means that the function as presented above contains a bug.

Branch coverage would allow us to test the method with just two tests, and one possible set of tests would be to test the

following cases:

c1() returns true and c2() returns true

c1() returns false and c2() returns false

Neither of these cases exposes the bug. If, however, we use cyclomatic complexity to indicate the number of tests we

require, the number increases to 3. We must therefore test one of the following paths:

c1() returns true and c2() returns false

c1() returns false and c2() returns true

Either of these tests will expose the bug.
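The scenario can be simulated directly. In this sketch the function `run` stands in for the two sequential if-then-else statements and records which of f1() to f4() would be called; `violates_pairing` encodes the assumed rule that f1() and f3() must be called together. Both names are hypothetical.

```python
def run(c1, c2):
    """Return the functions called for the given outcomes of c1() and c2()."""
    calls = []
    calls.append("f1" if c1 else "f2")
    calls.append("f3" if c2 else "f4")
    return calls


def violates_pairing(calls):
    """True when exactly one of f1 and f3 was called (the assumed bug condition)."""
    return ("f1" in calls) != ("f3" in calls)


# The two tests that suffice for branch coverage.
branch_coverage_tests = [(True, True), (False, False)]
bug_found_by_branch_tests = any(
    violates_pairing(run(c1, c2)) for c1, c2 in branch_coverage_tests
)

# A third test, as suggested by a cyclomatic complexity of 3.
bug_found_by_third_test = violates_pairing(run(True, False))
```

The branch-coverage pair leaves the bug undetected, while the third test exposes it.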

Cohesion

One would also expect that a module with higher complexity would tend to have lower cohesion (less than functional

cohesion) than a module with lower complexity. The possible correlation between a higher complexity measure and a

lower level of cohesion is predicated on a module with more decision points generally implementing more than a single

well defined function. A 2005 study showed stronger correlations between complexity metrics and an expert assessment

of cohesion in the classes studied than the correlation between the expert's assessment and metrics designed to calculate

cohesion.[8]

Correlation to number of defects

A number of studies have investigated cyclomatic complexity's correlation to the number of defects contained in a

module. Most such studies find a strong positive correlation between cyclomatic complexity and defects: modules that

have the highest complexity tend to also contain the most defects. For example, a 2008 study by metric-monitoring

software supplier Enerjy analyzed classes of open-source Java applications and divided them into two sets based on how

commonly faults were found in them. They found strong correlation between cyclomatic complexity and their faultiness,

with classes with a combined complexity of 11 having a probability of being fault-prone of just 0.28, rising to 0.98 for

classes with a complexity of 74.[9]

However, studies that control for program size (i.e., comparing modules that have different complexities but similar size,

typically measured in lines of code) are generally less conclusive, with many finding no significant correlation, while

others do find correlation. Some researchers who have studied the area question the validity of the methods used by the

studies finding no correlation.[10]

Les Hatton claimed (Keynote at TAIC-PART 2008, Windsor, UK, Sept 2008) that McCabe Cyclomatic Complexity has

the same prediction ability as lines of code.[11]

See also

Complexity

Complexity trap

Computer program

Computer programming

Control flow

Decision-to-decision path

Design predicates

Essential complexity

Halstead complexity measures

Panopticode

Software engineering

Software testing

Synchronization Complexity

Notes

1. ^ Here "structured" means in particular "with a single exit (return statement) per function".

2. ^ This is a fairly common type of condition; consider the possibility that f1 allocates some resource which f3

releases.

References

1. ^ A J Sojev. "Basis Path Testing".

2. ^ a b c d e McCabe (December 1976). "A Complexity Measure". IEEE Transactions on Software Engineering: 308–320.

3. ^ a b Belzer, Kent, Holzman and Williams (1992). Encyclopedia of Computer Science and Technology. CRC

Press. pp. 367–368.

4. ^ Harrison (October 1984). "Applying McCabe's complexity measure to multiple-exit programs". Software:

Practice and Experience (J Wiley & Sons).

5. ^ Diestel, Reinhard (2000). Graph theory. Graduate texts in mathematics 173 (2 ed.). New York: Springer.

ISBN 0-387-98976-5.

6. ^ McCabe (December 1976). "A Complexity Measure". IEEE Transactions on Software Engineering: 315.

7. ^ a b McCabe, Watson (1996). "Structured Testing: A Testing Methodology Using the Cyclomatic Complexity

Metric".

8. ^ Stein et al; Cox, Glenn; Etzkorn, Letha (2005). "Exploring the relationship between cohesion and complexity".

Journal of Computer Science 1 (2): 137–144. doi:10.3844/jcssp.2005.137.144.

9. ^ Rich Sharpe. "McCabe Cyclomatic Complexity: the proof in the pudding". Enerjy.

10. ^ Kan (2003). Metrics and Models in Software Quality Engineering. Addison-Wesley. pp. 316–317. ISBN 0-201-72915-6.

11. ^ Les Hatton (2008). "The role of empiricism in improving the reliability of future software".

External links

A Complexity Measure (McCabe's original paper in IEEE Transactions on Software Engineering Vol. 2, No. 4,

p. 308 (1976))

Structured Testing: A Testing Methodology Using the Cyclomatic Complexity Metric NIST Special Publication

500-235

The role of empiricism in improving the reliability of future software

Non linear paths from Application Code