Lecture 7


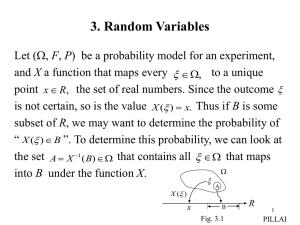

7. Two Random Variables

In many experiments, the observations are expressible not as a single quantity, but as a family of quantities. For example, to record the height and weight of each person in a community, or the number of people and the total income in a family, we need two numbers.

Let X and Y denote two random variables (r.vs) based on a probability model $(\Omega, F, P)$. Then

$$
P(x_1 < X(\xi) \le x_2) = F_X(x_2) - F_X(x_1) = \int_{x_1}^{x_2} f_X(x)\,dx,
$$

and

$$
P(y_1 < Y(\xi) \le y_2) = F_Y(y_2) - F_Y(y_1) = \int_{y_1}^{y_2} f_Y(y)\,dy.
$$


PILLAI

What about the probability that the pair of r.vs (X, Y) belongs to an arbitrary region D? In other words, how does one estimate, for example, $P[(x_1 < X(\xi) \le x_2) \cap (y_1 < Y(\xi) \le y_2)]$? Towards this, we define the joint probability distribution function of X and Y to be

$$
F_{XY}(x, y) = P[(X(\xi) \le x) \cap (Y(\xi) \le y)] = P(X \le x,\, Y \le y) \ge 0, \tag{7-1}
$$

where x and y are arbitrary real numbers.
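To make definition (7-1) concrete, the sketch below estimates $F_{XY}(x, y)$ as the fraction of simulated pairs that satisfy both inequalities at once. The choice of two independent Uniform(0, 1) variables, the helper name `empirical_joint_cdf`, the seed and the sample size are all assumptions made for illustration; they are not part of the lecture.

```python
import random

def empirical_joint_cdf(samples, x, y):
    """Fraction of sample pairs (xs, ys) with xs <= x and ys <= y."""
    hits = sum(1 for xs, ys in samples if xs <= x and ys <= y)
    return hits / len(samples)

rng = random.Random(0)  # fixed seed so the run is repeatable

# Assumption for illustration: X and Y are independent Uniform(0, 1),
# for which the exact joint distribution is F_XY(x, y) = x * y on the unit square.
samples = [(rng.random(), rng.random()) for _ in range(20000)]

estimate = empirical_joint_cdf(samples, 0.5, 0.8)
print(estimate, 0.5 * 0.8)  # Monte Carlo estimate next to the exact value
```

The estimate fluctuates around the exact value, and the fluctuation shrinks as the number of samples grows.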

Properties

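(i)

$$
F_{XY}(-\infty, y) = F_{XY}(x, -\infty) = 0, \qquad F_{XY}(+\infty, +\infty) = 1. \tag{7-2}
$$

Since $(X(\xi) \le -\infty,\, Y(\xi) \le y) \subset (X(\xi) \le -\infty)$, we get $F_{XY}(-\infty, y) \le P(X(\xi) \le -\infty) = 0$. Similarly, since $(X(\xi) \le +\infty,\, Y(\xi) \le +\infty) = \Omega$, we get $F_{XY}(+\infty, +\infty) = P(\Omega) = 1$.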

(ii)

$$
P(x_1 < X(\xi) \le x_2,\, Y(\xi) \le y) = F_{XY}(x_2, y) - F_{XY}(x_1, y). \tag{7-3}
$$

$$
P(X(\xi) \le x,\, y_1 < Y(\xi) \le y_2) = F_{XY}(x, y_2) - F_{XY}(x, y_1). \tag{7-4}
$$

To prove (7-3), we note that for $x_2 > x_1$,

$$
(X(\xi) \le x_2,\, Y(\xi) \le y) = (X(\xi) \le x_1,\, Y(\xi) \le y) \cup (x_1 < X(\xi) \le x_2,\, Y(\xi) \le y),
$$

and the mutually exclusive property of the events on the right side gives

$$
P(X(\xi) \le x_2,\, Y(\xi) \le y) = P(X(\xi) \le x_1,\, Y(\xi) \le y) + P(x_1 < X(\xi) \le x_2,\, Y(\xi) \le y),
$$

which proves (7-3). Similarly (7-4) follows.


(iii)

$$
P(x_1 < X(\xi) \le x_2,\, y_1 < Y(\xi) \le y_2) = F_{XY}(x_2, y_2) - F_{XY}(x_2, y_1) - F_{XY}(x_1, y_2) + F_{XY}(x_1, y_1). \tag{7-5}
$$

This is the probability that (X, Y) belongs to the rectangle $R_0$ in Fig. 7.1. To prove (7-5), we can make use of the following identity involving mutually exclusive events on the right side:

$$
(x_1 < X(\xi) \le x_2,\, Y(\xi) \le y_2) = (x_1 < X(\xi) \le x_2,\, Y(\xi) \le y_1) \cup (x_1 < X(\xi) \le x_2,\, y_1 < Y(\xi) \le y_2).
$$

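Fig. 7.1: The rectangle $R_0$ in the (X, Y) plane, bounded by $x_1$ and $x_2$ along the X axis and by $y_1$ and $y_2$ along the Y axis.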


This gives

$$
P(x_1 < X(\xi) \le x_2,\, Y(\xi) \le y_2) = P(x_1 < X(\xi) \le x_2,\, Y(\xi) \le y_1) + P(x_1 < X(\xi) \le x_2,\, y_1 < Y(\xi) \le y_2),
$$

and the desired result in (7-5) follows by making use of (7-3) with $y = y_2$ and $y = y_1$ respectively.
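The four-term combination in (7-5) is easy to get wrong by a sign, so a small numerical check can help. The sketch below evaluates it for a hypothetical joint distribution, that of two independent unit-rate exponential r.vs; this choice and the names `joint_cdf` and `rectangle_probability` are illustrative assumptions, not taken from the lecture.

```python
import math

def joint_cdf(x, y):
    """Hypothetical joint distribution: independent unit-rate exponentials."""
    if x <= 0 or y <= 0:
        return 0.0
    return (1 - math.exp(-x)) * (1 - math.exp(-y))

def rectangle_probability(F, x1, x2, y1, y2):
    """P(x1 < X <= x2, y1 < Y <= y2) from a joint distribution F, as in (7-5)."""
    return F(x2, y2) - F(x2, y1) - F(x1, y2) + F(x1, y1)

p = rectangle_probability(joint_cdf, 0.5, 1.5, 1.0, 2.0)

# For this particular F the answer factors into two one-dimensional
# probabilities, which gives an independent check on the formula.
expected = (math.exp(-0.5) - math.exp(-1.5)) * (math.exp(-1.0) - math.exp(-2.0))
print(p, expected)
```

Any other valid joint distribution function can be passed as `F`; only the cross-check via `expected` relies on the factored form assumed here.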

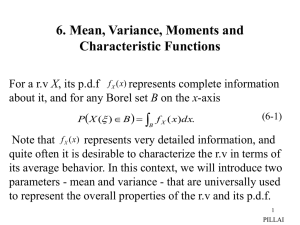

Joint probability density function (Joint p.d.f)

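By definition, the joint p.d.f of X and Y is given by

$$
f_{XY}(x, y) = \frac{\partial^2 F_{XY}(x, y)}{\partial x\, \partial y}, \tag{7-6}
$$

and hence we obtain the useful formula

$$
F_{XY}(x, y) = \int_{-\infty}^{x} \int_{-\infty}^{y} f_{XY}(u, v)\, dv\, du. \tag{7-7}
$$

Using (7-2), we also get

$$
\int_{-\infty}^{+\infty} \int_{-\infty}^{+\infty} f_{XY}(x, y)\, dx\, dy = 1. \tag{7-8}
$$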


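To find the probability that (X, Y) belongs to an arbitrary region D, we can make use of (7-5) and (7-7). From (7-5) and (7-7),

$$
\begin{aligned}
P(x < X(\xi) \le x + \Delta x,\, y < Y(\xi) \le y + \Delta y)
&= F_{XY}(x + \Delta x, y + \Delta y) - F_{XY}(x, y + \Delta y) - F_{XY}(x + \Delta x, y) + F_{XY}(x, y) \\
&= \int_{x}^{x + \Delta x} \int_{y}^{y + \Delta y} f_{XY}(u, v)\, dv\, du \approx f_{XY}(x, y)\, \Delta x\, \Delta y.
\end{aligned} \tag{7-9}
$$

Thus the probability that (X, Y) belongs to a differential rectangle $\Delta x\, \Delta y$ equals $f_{XY}(x, y)\, \Delta x\, \Delta y$, and repeating this procedure over the union of non-overlapping differential rectangles in D (Fig. 7.2), we get the useful result

$$
P((X, Y) \in D) = \iint_{(x, y) \in D} f_{XY}(x, y)\, dx\, dy. \tag{7-10}
$$

Fig. 7.2: A region D in the (X, Y) plane containing a differential rectangle of sides $\Delta x$ and $\Delta y$.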
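The construction behind (7-10), summing $f_{XY}(x, y)\,\Delta x\,\Delta y$ over small non-overlapping rectangles inside D, can be sketched directly as a midpoint-grid sum. The density $f(x, y) = x + y$ on the unit square, the triangular region $x + y \le 1$, and the function names are hypothetical choices for illustration only.

```python
def joint_pdf(x, y):
    """Hypothetical joint p.d.f: f(x, y) = x + y on the unit square, 0 elsewhere."""
    return x + y if 0 <= x <= 1 and 0 <= y <= 1 else 0.0

def region_probability(f, in_region, n=400):
    """Approximate (7-10) on the unit square with an n-by-n midpoint grid."""
    h = 1.0 / n
    total = 0.0
    for i in range(n):
        x = (i + 0.5) * h
        for j in range(n):
            y = (j + 0.5) * h
            if in_region(x, y):
                total += f(x, y) * h * h  # f(x, y) dx dy for one small rectangle
    return total

total_mass = region_probability(joint_pdf, lambda x, y: True)      # check of (7-8)
p_triangle = region_probability(joint_pdf, lambda x, y: x + y <= 1)
print(total_mass, p_triangle)
```

For this density the exact value of $P(X + Y \le 1)$ is 1/3; the grid sum approaches it as `n` grows, with the remaining error coming from the small rectangles that straddle the boundary of D.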

(iv) Marginal Statistics

In the context of several r.vs, the statistics of each individual r.v are called marginal statistics. Thus $F_X(x)$ is the marginal probability distribution function of X, and $f_X(x)$ is the marginal p.d.f of X. It is interesting to note that all marginals can be obtained from the joint p.d.f. In fact

$$
F_X(x) = F_{XY}(x, +\infty), \qquad F_Y(y) = F_{XY}(+\infty, y). \tag{7-11}
$$

Also

$$
f_X(x) = \int_{-\infty}^{+\infty} f_{XY}(x, y)\, dy, \qquad f_Y(y) = \int_{-\infty}^{+\infty} f_{XY}(x, y)\, dx. \tag{7-12}
$$

To prove (7-11), we can make use of the identity

$$
(X \le x) = (X \le x) \cap (Y \le +\infty),
$$

so that $F_X(x) = P(X \le x) = P(X \le x,\, Y \le +\infty) = F_{XY}(x, +\infty)$.

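To prove (7-12), we can make use of (7-7) and (7-11), which gives

$$
F_X(x) = F_{XY}(x, +\infty) = \int_{-\infty}^{x} \int_{-\infty}^{+\infty} f_{XY}(u, y)\, dy\, du, \tag{7-13}
$$

and taking the derivative with respect to x in (7-13), we get

$$
f_X(x) = \int_{-\infty}^{+\infty} f_{XY}(x, y)\, dy. \tag{7-14}
$$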

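At this point, it is useful to recall the formula for differentiation under the integral sign. Let

$$
H(x) = \int_{a(x)}^{b(x)} h(x, y)\, dy. \tag{7-15}
$$

Then its derivative with respect to x is given by

$$
\frac{dH(x)}{dx} = \frac{db(x)}{dx}\, h(x, b) - \frac{da(x)}{dx}\, h(x, a) + \int_{a(x)}^{b(x)} \frac{\partial h(x, y)}{\partial x}\, dy. \tag{7-16}
$$

Applying (7-16) to the outer integral in (7-13), whose upper limit is x and whose integrand does not depend on x, gives (7-14).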
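As a quick sanity check of (7-16), consider a small worked example that is not from the lecture: take $h(x, y) = xy$, $a(x) = 0$ and $b(x) = x$, chosen only because the integral can also be evaluated directly.

$$
\begin{aligned}
H(x) &= \int_{0}^{x} x y\, dy = \frac{x^3}{2}
\quad\Longrightarrow\quad \frac{dH(x)}{dx} = \frac{3x^2}{2}, \\
\frac{db}{dx}\, h(x, b) - \frac{da}{dx}\, h(x, a) + \int_{0}^{x} \frac{\partial (xy)}{\partial x}\, dy
&= 1 \cdot x^2 - 0 + \int_{0}^{x} y\, dy = x^2 + \frac{x^2}{2} = \frac{3x^2}{2}.
\end{aligned}
$$

Both routes agree. The boundary term contributes $x^2$ and the integral of the partial derivative contributes $x^2/2$.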


If X and Y are discrete r.vs, then $p_{ij} = P(X = x_i,\, Y = y_j)$ represents their joint p.d.f, and their respective marginal p.d.fs are given by

$$
P(X = x_i) = \sum_{j} P(X = x_i,\, Y = y_j) = \sum_{j} p_{ij} \tag{7-17}
$$

and

$$
P(Y = y_j) = \sum_{i} P(X = x_i,\, Y = y_j) = \sum_{i} p_{ij}. \tag{7-18}
$$

Assuming that $P(X = x_i,\, Y = y_j)$ is written out in the form of a rectangular array, to obtain $P(X = x_i)$ from (7-17), one needs to add up all entries in the i-th row.

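It used to be a practice for insurance companies routinely to scribble out these sum values in the left and top margins, thus suggesting the name marginal densities! (Fig. 7.3).

$$
\begin{array}{cccccc}
p_{11} & p_{12} & \cdots & p_{1j} & \cdots & p_{1n} \\
p_{21} & p_{22} & \cdots & p_{2j} & \cdots & p_{2n} \\
\vdots & \vdots & & \vdots & & \vdots \\
p_{i1} & p_{i2} & \cdots & p_{ij} & \cdots & p_{in} \\
\vdots & \vdots & & \vdots & & \vdots \\
p_{m1} & p_{m2} & \cdots & p_{mj} & \cdots & p_{mn}
\end{array}
$$

Fig. 7.3: The array of joint probabilities $p_{ij}$, with the row sums $\sum_j p_{ij}$ and the column sums $\sum_i p_{ij}$ written in the margins.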
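The row-sum and column-sum recipe of (7-17) and (7-18) can be sketched in a few lines. The 2-by-3 table of joint probabilities below is made up for illustration; any non-negative table whose entries add up to 1 would do.

```python
# Hypothetical joint probabilities p[i][j] = P(X = x_i, Y = y_j);
# rows index the values of X, columns index the values of Y.
p = [
    [0.10, 0.20, 0.10],
    [0.05, 0.25, 0.30],
]

# (7-17): P(X = x_i) is the sum of the i-th row.
p_x = [sum(row) for row in p]

# (7-18): P(Y = y_j) is the sum of the j-th column.
p_y = [sum(col) for col in zip(*p)]

print(p_x)  # marginal of X, one entry per row
print(p_y)  # marginal of Y, one entry per column
```

Each marginal sums to 1, up to floating-point rounding, because the whole table does.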

From (7-11) and (7-12), the joint probability distribution function (P.D.F) and/or the joint p.d.f represent complete information about the r.vs, and their marginal p.d.fs can be evaluated from the joint p.d.f. However, given only the marginals, it will (most often) not be possible to compute the joint p.d.f. Consider the following example:

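Example 7.1: Given

$$
f_{XY}(x, y) =
\begin{cases}
\text{constant}, & 0 < x < y < 1, \\
0, & \text{otherwise}.
\end{cases} \tag{7-19}
$$

Fig. 7.4: The shaded region $0 < x < y < 1$ in the (X, Y) plane.

Obtain the marginal p.d.fs $f_X(x)$ and $f_Y(y)$.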

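Solution: It is given that the joint p.d.f $f_{XY}(x, y)$ is a constant in the shaded region in Fig. 7.4. We can use (7-8) to determine that constant c. From (7-8),

$$
\int_{-\infty}^{+\infty} \int_{-\infty}^{+\infty} f_{XY}(x, y)\, dx\, dy
= \int_{y=0}^{1} \left( \int_{x=0}^{y} c\, dx \right) dy
= \int_{y=0}^{1} c\, y\, dy
= \left. \frac{c\, y^2}{2} \right|_{0}^{1}
= \frac{c}{2} = 1. \tag{7-20}
$$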

Thus c = 2. Moreover, from (7-14),

$$
f_X(x) = \int_{-\infty}^{+\infty} f_{XY}(x, y)\, dy = \int_{y=x}^{1} 2\, dy = 2(1 - x), \qquad 0 < x < 1, \tag{7-21}
$$

and similarly

$$
f_Y(y) = \int_{-\infty}^{+\infty} f_{XY}(x, y)\, dx = \int_{x=0}^{y} 2\, dx = 2y, \qquad 0 < y < 1. \tag{7-22}
$$

Clearly, in this case, given $f_X(x)$ and $f_Y(y)$ as in (7-21)-(7-22), it will not be possible to obtain the original joint p.d.f in (7-19).
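One way to see why the marginals do not pin down the joint p.d.f is to exhibit a second joint p.d.f with the same marginals. The sketch below integrates the joint p.d.f of Example 7.1 numerically and does the same for the product $2(1 - x) \cdot 2y$ on the unit square, which is a different density. The midpoint-rule helper, the function names and the evaluation point (0.3, 0.6) are illustrative assumptions.

```python
def joint_pdf(x, y):
    """Joint p.d.f of Example 7.1 with c = 2: constant on 0 < x < y < 1."""
    return 2.0 if 0 < x < y < 1 else 0.0

def product_pdf(x, y):
    """Product of the marginals (7-21) and (7-22) on the unit square."""
    return 2.0 * (1.0 - x) * 2.0 * y if 0 < x < 1 and 0 < y < 1 else 0.0

def integrate(g, a, b, n=2000):
    """Midpoint-rule approximation of the integral of g over [a, b]."""
    h = (b - a) / n
    return h * sum(g(a + (k + 0.5) * h) for k in range(n))

def marginals(f, x0, y0):
    """Return (f_X(x0), f_Y(y0)) for a joint p.d.f f supported on the unit square."""
    fx = integrate(lambda y: f(x0, y), 0.0, 1.0)
    fy = integrate(lambda x: f(x, y0), 0.0, 1.0)
    return fx, fy

# (7-21) and (7-22) predict f_X(0.3) = 1.4 and f_Y(0.6) = 1.2.
print(marginals(joint_pdf, 0.3, 0.6))
# A different joint p.d.f with the same marginals.
print(marginals(product_pdf, 0.3, 0.6))
# The two joint p.d.fs themselves disagree at the same point.
print(joint_pdf(0.3, 0.6), product_pdf(0.3, 0.6))
```

Both densities reproduce the marginals in (7-21)-(7-22), yet they take different values at (0.3, 0.6), so the marginals alone cannot distinguish between them.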

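Example 7.2: X and Y are said to be jointly normal (Gaussian) distributed, if their joint p.d.f has the following form:

$$
f_{XY}(x, y) = \frac{1}{2\pi \sigma_X \sigma_Y \sqrt{1 - \rho^2}}
\exp\left\{ -\frac{1}{2(1 - \rho^2)} \left[ \frac{(x - \mu_X)^2}{\sigma_X^2} - \frac{2\rho (x - \mu_X)(y - \mu_Y)}{\sigma_X \sigma_Y} + \frac{(y - \mu_Y)^2}{\sigma_Y^2} \right] \right\}, \tag{7-23}
$$

for $-\infty < x < +\infty$, $-\infty < y < +\infty$, $|\rho| < 1$.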

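By direct integration, using (7-14) and completing the square in (7-23), it can be shown that

$$
f_X(x) = \int_{-\infty}^{+\infty} f_{XY}(x, y)\, dy = \frac{1}{\sqrt{2\pi \sigma_X^2}}\, e^{-(x - \mu_X)^2 / 2\sigma_X^2} \sim N(\mu_X, \sigma_X^2), \tag{7-24}
$$

and similarly

$$
f_Y(y) = \int_{-\infty}^{+\infty} f_{XY}(x, y)\, dx = \frac{1}{\sqrt{2\pi \sigma_Y^2}}\, e^{-(y - \mu_Y)^2 / 2\sigma_Y^2} \sim N(\mu_Y, \sigma_Y^2). \tag{7-25}
$$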

Following the above notation, we will denote (7-23) as $N(\mu_X, \mu_Y, \sigma_X^2, \sigma_Y^2, \rho)$. Once again, knowing the marginals in (7-24) and (7-25) alone doesn't tell us everything about the joint p.d.f in (7-23).
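The claim that the marginals carry no information about $\rho$ can be checked numerically: integrating (7-23) over y should give the same $N(\mu_X, \sigma_X^2)$ density whatever $\rho$ is. The sketch below does this with a midpoint rule over a truncated range. The parameter values, the truncation width and the function names are arbitrary illustrative choices.

```python
import math

def joint_normal_pdf(x, y, mx, my, sx, sy, rho):
    """Joint normal p.d.f of (7-23)."""
    zx = (x - mx) / sx
    zy = (y - my) / sy
    q = (zx * zx - 2 * rho * zx * zy + zy * zy) / (1 - rho * rho)
    return math.exp(-q / 2) / (2 * math.pi * sx * sy * math.sqrt(1 - rho * rho))

def normal_pdf(x, m, s):
    """One-dimensional N(m, s^2) density, as in (7-24)."""
    return math.exp(-((x - m) ** 2) / (2 * s * s)) / math.sqrt(2 * math.pi * s * s)

def marginal_x(x, mx, my, sx, sy, rho, n=4000, width=10.0):
    """Midpoint-rule integral of (7-23) over y, truncated to my +/- width * sy."""
    a = my - width * sy
    h = 2 * width * sy / n
    return h * sum(
        joint_normal_pdf(x, a + (k + 0.5) * h, mx, my, sx, sy, rho)
        for k in range(n)
    )

mx, my, sx, sy = 1.0, -2.0, 2.0, 0.5  # arbitrary illustrative parameters
target = normal_pdf(2.5, mx, sx)
results = [marginal_x(2.5, mx, my, sx, sy, rho) for rho in (0.0, 0.8)]
print(target, results)
```

Both values of $\rho$ lead to the same marginal at the test point, which is why $\rho$ cannot be recovered from (7-24) and (7-25).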

As we show below, the only situation where the marginal p.d.fs can be used to recover the joint p.d.f is when the random variables are statistically independent.


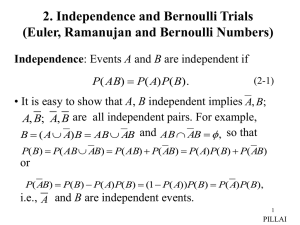

Independence of r.vs

Definition: The random variables X and Y are said to be statistically independent if the events $\{X(\xi) \in A\}$ and $\{Y(\xi) \in B\}$ are independent events for any two Borel sets A and B on the x and y axes respectively. Applying the above definition to the events $\{X(\xi) \le x\}$ and $\{Y(\xi) \le y\}$, we conclude that, if the r.vs X and Y are independent, then

$$
P[(X(\xi) \le x) \cap (Y(\xi) \le y)] = P(X(\xi) \le x)\, P(Y(\xi) \le y), \tag{7-26}
$$

i.e.,

$$
F_{XY}(x, y) = F_X(x)\, F_Y(y), \tag{7-27}
$$

or equivalently, if X and Y are independent, then we must have

$$
f_{XY}(x, y) = f_X(x)\, f_Y(y). \tag{7-28}
$$


If X and Y are discrete-type r.vs, then their independence implies

$$
P(X = x_i,\, Y = y_j) = P(X = x_i)\, P(Y = y_j) \quad \text{for all } i, j. \tag{7-29}
$$

Equations (7-26)-(7-29) give us the procedure to test for independence. Given $f_{XY}(x, y)$, obtain the marginal p.d.fs $f_X(x)$ and $f_Y(y)$ and examine whether (7-28) or (7-29) is valid. If so, the r.vs are independent, otherwise they are dependent. Returning to Example 7.1, from (7-19)-(7-22), we observe by direct verification that $f_{XY}(x, y) \ne f_X(x)\, f_Y(y)$. Hence X and Y are dependent r.vs in that case. It is easy to see that the same is true in Example 7.2, unless $\rho = 0$. In other words, two jointly Gaussian r.vs as in (7-23) are independent if and only if the fifth parameter $\rho = 0$.
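For discrete r.vs, the test procedure described above amounts to comparing every entry of the joint table with the product of its row sum and column sum, as in (7-29). The sketch below is one possible implementation. The function name `is_independent`, the tolerance and both tables are hypothetical.

```python
def is_independent(p, tol=1e-12):
    """Check (7-29): p[i][j] == P(X = x_i) * P(Y = y_j) for every i and j."""
    p_x = [sum(row) for row in p]        # marginal of X, from (7-17)
    p_y = [sum(col) for col in zip(*p)]  # marginal of Y, from (7-18)
    return all(
        abs(p[i][j] - p_x[i] * p_y[j]) <= tol
        for i in range(len(p_x))
        for j in range(len(p_y))
    )

# Built as an outer product of two marginals, so independent by construction.
independent_table = [
    [0.4 * 0.5, 0.4 * 0.3, 0.4 * 0.2],
    [0.6 * 0.5, 0.6 * 0.3, 0.6 * 0.2],
]

# A made-up table whose entries do not factor.
dependent_table = [
    [0.10, 0.20, 0.10],
    [0.05, 0.25, 0.30],
]

print(is_independent(independent_table), is_independent(dependent_table))
```

A small tolerance is used instead of exact equality because the sums and products are computed in floating point.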


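Example 7.3: Given

$$
f_{XY}(x, y) =
\begin{cases}
x y^2 e^{-y}, & 0 < y < \infty,\ 0 < x < 1, \\
0, & \text{otherwise}.
\end{cases} \tag{7-30}
$$

Determine whether X and Y are independent.

Solution:

$$
f_X(x) = \int_{0}^{\infty} f_{XY}(x, y)\, dy = x \int_{0}^{\infty} y^2 e^{-y}\, dy
= x \left( \left. -y^2 e^{-y} \right|_{0}^{\infty} + 2 \int_{0}^{\infty} y e^{-y}\, dy \right) = 2x, \qquad 0 < x < 1. \tag{7-31}
$$

Similarly

$$
f_Y(y) = \int_{0}^{1} f_{XY}(x, y)\, dx = \frac{y^2}{2}\, e^{-y}, \qquad 0 < y < \infty. \tag{7-32}
$$

In this case

$$
f_{XY}(x, y) = f_X(x)\, f_Y(y),
$$

and hence X and Y are independent random variables.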
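The conclusion of Example 7.3 can be cross-checked numerically by integrating (7-30) to get the marginals and comparing their product with the joint p.d.f. In the sketch below, the midpoint-rule helper, the truncation of the y range at 60 (beyond which $y^2 e^{-y}$ is negligible) and the test point (0.7, 3.0) are assumptions made for illustration.

```python
import math

def joint_pdf(x, y):
    """Joint p.d.f of (7-30)."""
    return x * y * y * math.exp(-y) if 0 < x < 1 and y > 0 else 0.0

def integrate(g, a, b, n=4000):
    """Midpoint-rule approximation of the integral of g over [a, b]."""
    h = (b - a) / n
    return h * sum(g(a + (k + 0.5) * h) for k in range(n))

def marginal_x(x):
    # The infinite y range is truncated at 60; the neglected tail is tiny.
    return integrate(lambda y: joint_pdf(x, y), 0.0, 60.0)

def marginal_y(y):
    return integrate(lambda x: joint_pdf(x, y), 0.0, 1.0)

x0, y0 = 0.7, 3.0
fx = marginal_x(x0)  # (7-31) predicts 2 * x0
fy = marginal_y(y0)  # (7-32) predicts y0**2 * exp(-y0) / 2
print(fx, 2 * x0)
print(fy, y0 * y0 * math.exp(-y0) / 2)
print(joint_pdf(x0, y0), fx * fy)  # joint value next to the product of marginals
```

Agreement at one point is only a spot check; the factorization in (7-31)-(7-32) is what establishes independence for all x and y.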
