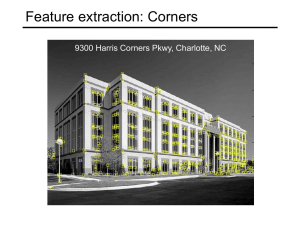

November 18, 2014 Computer Vision Lecture 18: Object Recognition II

Key Points

Often we are unable to detect the complete contour of an object or characterize the details of its shape. In such cases, we can still represent shape information implicitly. The idea is to find key points in the image of the object that can be used to identify the object and that do not change dramatically when the orientation or lighting of the object changes. Corners in the image are a good choice for this purpose.

Corner Detection with FAST

A very efficient algorithm for corner detection is FAST (Features from Accelerated Segment Test). For any given point c in the image, we can test whether it is a corner by:
• considering the 16 pixels on a circle of radius 3 pixels around c and
• finding the longest run (i.e., uninterrupted sequence) of pixels whose intensities are either
  • all greater than that of c plus a threshold or
  • all less than that of c minus the same threshold.
• If the run is at least 12 pixels long, then c is a corner.

[Figure: Corner Detection with FAST]

The FAST algorithm can be made even faster by first checking only pixels 1, 5, 9, and 13. Unless at least three of them fulfill the same intensity condition (all brighter than c plus the threshold, or all darker than c minus it), we can immediately rule out that the given point is a corner, because a run of 12 pixels always includes at least three of these four.

To avoid detecting multiple corners near the same pixel, we can require a minimum distance between corners. If two corners are too close, we keep only the one with the higher corner score. Such a score can be computed as the sum of the absolute intensity differences between c and the pixels in the run.

Key Point Description with BRIEF

Now that we have identified interesting points in the image, how can we describe them so that we can detect them in other images?
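Before turning to descriptors, the FAST segment test from the preceding slides can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the reference implementation: the names `CIRCLE`, `longest_circular_run`, and `is_fast_corner` are made up for this example, the image is a plain list of rows, and the circle is assumed to be numbered clockwise starting at the top, so that pixels 1, 5, 9, and 13 are list indices 0, 4, 8, and 12.

```python
# Offsets (dx, dy) of the 16 pixels on a circle of radius 3 around the centre.
# Assumption: numbering starts at the top and runs clockwise.
CIRCLE = [(0, -3), (1, -3), (2, -2), (3, -1), (3, 0), (3, 1), (2, 2), (1, 3),
          (0, 3), (-1, 3), (-2, 2), (-3, 1), (-3, 0), (-3, -1), (-2, -2), (-1, -3)]

MIN_RUN = 12  # minimum run length from the slides


def longest_circular_run(flags):
    """Length of the longest uninterrupted run of True values on a ring."""
    if all(flags):
        return len(flags)
    best = current = 0
    # Walk the ring twice so that runs wrapping past the last pixel are counted.
    for flag in flags + flags:
        current = current + 1 if flag else 0
        best = max(best, current)
    return best


def is_fast_corner(image, x, y, threshold):
    """Segment test for pixel (x, y); image is a list of rows of intensities.

    The caller must keep (x, y) at least 3 pixels away from the image border.
    """
    centre = image[y][x]
    ring = [image[y + dy][x + dx] for dx, dy in CIRCLE]
    brighter = [p > centre + threshold for p in ring]
    darker = [p < centre - threshold for p in ring]

    # Quick rejection with pixels 1, 5, 9 and 13: a run of 12 must contain
    # at least three of them, all brighter or all darker.
    compass = (0, 4, 8, 12)
    if (sum(brighter[i] for i in compass) < 3
            and sum(darker[i] for i in compass) < 3):
        return False

    return max(longest_circular_run(brighter),
               longest_circular_run(darker)) >= MIN_RUN


# Usage: a 7x7 test image in which 12 consecutive ring pixels are brighter.
image = [[100] * 7 for _ in range(7)]
for dx, dy in CIRCLE[:12]:
    image[3 + dy][3 + dx] = 200
print(is_fast_corner(image, 3, 3, threshold=50))  # True
```

The minimum-distance filtering between nearby corners is left out of this sketch; it would need the corner score in addition to the yes/no test.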
A very efficient method is to use BRIEF (Binary Robust Independent Elementary Features) descriptors. They need very little memory (32 bytes, i.e., 256 bits, per point), and comparing two descriptors requires only 256 binary operations.

First, smooth the input image with a 9×9 Gaussian filter. Then choose 256 pairs of points within a 35×35 pixel area, following a Gaussian distribution with σ = 7 pixels. Center the resulting mask on a corner c. For every pair of points, if the intensity at the first point is greater than at the second one, add a 0 to the bit string; otherwise add a 1. The resulting bit string of length 256 is the descriptor for point c.

[Figure: Key Point Description with BRIEF]

Key Point Matching with BRIEF

To compute the matching distance between the descriptors of two different points, we can simply count the number of mismatching bits in their descriptors (Hamming distance). For example, the bit strings 100110 and 110100 have a Hamming distance of 2, because they differ in their second and fifth bits.

To find the match for point c in another image, we can find the pixel in that image whose descriptor has the smallest Hamming distance to the one for c.

[Figure: Key Point Matching with BRIEF]

How do Neural Networks (NNs) work?

• NNs are able to learn by adapting their connectivity patterns so that the organism improves its behavior in terms of reaching certain (evolutionary) goals.
• The strength of a connection, or whether it is excitatory or inhibitory, depends on the state of a receiving neuron’s synapses.
• The NN achieves learning by appropriately adapting the states of its synapses.

Supervised Function Approximation

In supervised learning, we train an artificial NN (ANN) with a set of vector pairs, so-called exemplars. Each pair (x, y) consists of an input vector x and a corresponding output vector y. Whenever the network receives input x, we would like it to provide output y. The exemplars thus describe the function that we want to “teach” our network. Besides learning the exemplars, we would like our network to generalize, that is, to give plausible output for inputs that it has not been trained with.

An Artificial Neuron

[Figure: the inputs x_1, x_2, …, x_n reach neuron i through synapses with weights w_{i,1}, w_{i,2}, …, w_{i,n}; the neuron produces the output x_i.]

Net input signal:

$$\text{net}_i(t) = \sum_{j=1}^{n} w_{i,j}(t)\, x_j(t)$$

Output:

$$x_i(t) = f_i(\text{net}_i(t))$$

Linear Separability

$$f(x_1, x_2, \ldots, x_n) = \begin{cases} 1, & \text{if } \sum_{i=1}^{n} w_i x_i \ge \theta \\ 0, & \text{otherwise} \end{cases}$$

Input space in the two-dimensional case (n = 2):

[Figure: three plots of the (x1, x2) plane, each axis running from -3 to 3, marking the regions with output 1 and output 0 for the following parameters:
• w1 = 1, w2 = 2, θ = 2
• w1 = -2, w2 = 1, θ = 2
• w1 = -2, w2 = 1, θ = 1]
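The three parameter settings above can be explored with a small threshold neuron in Python. This is a sketch under assumptions: the neuron is taken to output 1 when the weighted sum of its inputs reaches a threshold (called `theta` here, with "reaches" meaning greater than or equal), and the helper names `net_input`, `threshold_neuron`, and `ascii_plot` are invented for this illustration.

```python
def net_input(weights, inputs):
    """Weighted sum of the inputs (the neuron's net input signal)."""
    return sum(w * x for w, x in zip(weights, inputs))


def threshold_neuron(weights, theta, inputs):
    """Output 1 if the net input reaches the threshold theta, else 0.

    Assumption: the comparison is >=, so points exactly on the
    separating line are assigned output 1.
    """
    return 1 if net_input(weights, inputs) >= theta else 0


def ascii_plot(weights, theta, lo=-3, hi=3):
    """Neuron output on the integer grid, with x2 decreasing from top to bottom."""
    rows = []
    for x2 in range(hi, lo - 1, -1):
        row = [threshold_neuron(weights, theta, (x1, x2))
               for x1 in range(lo, hi + 1)]
        rows.append(" ".join(str(v) for v in row))
    return "\n".join(rows)


# The three (weights, theta) settings listed for the two-dimensional case.
PANELS = [((1, 2), 2), ((-2, 1), 2), ((-2, 1), 1)]

for weights, theta in PANELS:
    print(f"w1 = {weights[0]}, w2 = {weights[1]}, theta = {theta}")
    print(ascii_plot(weights, theta))
    print()
```

In each printed grid the 1s and 0s are divided by a straight line, which is what linear separability means for a single neuron of this kind: changing the weights rotates the line, and changing the threshold shifts it.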