Providing High and Controllable Performance in Multicore

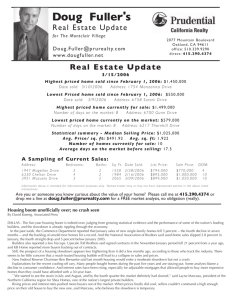

advertisement