Algorithms and Big O analysis

WEEK 2

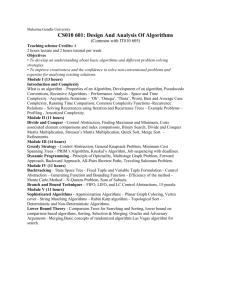

CS 361: ADVANCED DATA STRUCTURES AND ALGORITHMS

Introduction to Algorithms

Class Overview

Start thinking about analyzing a program or algorithm.

Understand algorithm efficiency and running-time complexity.

Analysis of an algorithm using Big-O notation.

Which Cost More to Feed?

Algorithm Efficiency

• There are often many approaches (algorithms) to solve a problem.

• How do we choose between them?

• There are two (sometimes conflicting) goals at the heart of computer program design. To design an algorithm that:

1) is easy to understand, code, and debug.

2) makes efficient use of the resources.

• Goal (1) is the concern of Software Engineering.

• Goal (2) is the concern of data structures and algorithm analysis.

• When goal (2) is important, how do we measure an algorithm’s cost?

Estimation Techniques

• Known as “back of the envelope” or “back of the napkin” calculation

1. Determine the major parameters that affect the problem.

2. Derive an equation that relates the parameters to the problem.

3. Select values for the parameters, and apply the equation to yield an estimated solution.

Essentially, you need to understand the problem

Estimation Example

• How many library bookcases does it take to store books totaling one million pages?

• Estimate:

• Pages/inch

• Feet/shelf

• Shelves/bookcase
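
The three estimates above chain into a single equation. The sketch below shows one way to write it out; the function name and the sample rates in the usage note are assumptions chosen for illustration, not values given in the slides.

```cpp
#include <cassert>
#include <cmath>

// Back-of-the-envelope estimate of the bookcases needed for a page count.
// All three rates are caller-supplied guesses, not measured values.
long bookcases_needed(double total_pages, double pages_per_inch,
                      double feet_per_shelf, double shelves_per_bookcase) {
    assert(pages_per_inch > 0 && feet_per_shelf > 0 && shelves_per_bookcase > 0);
    double inches = total_pages / pages_per_inch;        // shelf length in inches
    double feet = inches / 12.0;                         // convert to feet
    double shelves = feet / feet_per_shelf;              // shelves required
    double bookcases = shelves / shelves_per_bookcase;   // bookcases required
    return static_cast<long>(std::ceil(bookcases));      // round up to whole bookcases
}
```

With guessed rates of 500 pages per inch, 4 feet per shelf, and 5 shelves per bookcase, `bookcases_needed(1e6, 500, 4, 5)` works out to 9. Changing any guess changes the answer, which is the point of naming the parameters first.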

Best, Worst, Average Cases

• Not all inputs of a given size take the same time to run.

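• Sequential search for K in an array of n integers:

• Begin at the first element in the array and look at each element in turn until K is found.

• Best case: K is the first element, so 1 comparison.

• Worst case: K is the last element or is absent, so n comparisons.

• Average case: about n/2 comparisons, if K is present and equally likely to be at any position.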
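
A minimal sketch of the sequential search described above, instrumented to count how many elements it examines. The struct and function names are illustrative, and the average-case helper assumes distinct elements with every position equally likely.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

struct SearchResult {
    long index;               // position of K (0-based), or -1 if absent
    std::size_t comparisons;  // number of elements examined
};

// Looks at each element in turn until k is found or the array ends.
SearchResult sequential_search(const std::vector<int>& a, int k) {
    std::size_t comparisons = 0;
    for (std::size_t i = 0; i < a.size(); ++i) {
        ++comparisons;
        if (a[i] == k) return {static_cast<long>(i), comparisons};
    }
    return {-1, comparisons};
}

// Average comparisons over successful searches, one search per element.
// Assumes the elements are distinct and every position is equally likely.
double average_successful_comparisons(const std::vector<int>& a) {
    assert(!a.empty());
    std::size_t total = 0;
    for (int k : a) total += sequential_search(a, k).comparisons;
    return static_cast<double>(total) / a.size();
}
```

For the array {7, 3, 9, 1, 5}, searching for 7 takes 1 comparison, searching for 5 or for a missing value takes 5, and the average over successful searches is 3, which is (n + 1)/2 for n = 5.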

Time Analysis

• Provides upper and lower bounds of running time.

Lower Bound ≤ Running Time ≤ Upper Bound

• Different types of analysis:

- Worst case

- Best case

- Average case

Worst Case

• Provides an upper bound on running time.

• An absolute guarantee that the algorithm will not run longer, no matter what the inputs are.

Best Case

• Provides a lower bound on running time.

• Input is the one for which the algorithm runs the fastest.

Average Case

• Provides an estimate of “average” running time.

• Assumes that the input is random.

• Useful when best/worst cases do not happen very often

• i.e., few input cases lead to best/worst cases.

Which Analysis to Use?

• While average time appears to be the fairest measure, it may be difficult to determine.

For example, algorithms that are designed to operate on strings of text.

• Why is the worst-case time important?

In some situations it may be necessary to use a pessimistic analysis in order to guarantee safety.

Recall the “bookcase” problem.

How to Measure Efficiency?

• Critical resources:

• Time, memory, programmer effort, user effort

• Factors affecting running time:

• For most algorithms, running time depends on “size” of the input.

• Running time is expressed as T(n) for some function T on input size n.

How do we analyze an algorithm?

• Need to define objective measures.

(1) Compare execution times?

Not good: times are specific to a particular machine.

(2) Count the number of statements?

Not good: number of statements varies with programming language and style.

How do we analyze an algorithm? (cont.)

(3) Express running time T as a function of problem size n (i.e., T = f(n)).

Asymptotic Algorithm Analysis

- Given two algorithms having running times f(n) and g(n), find which function grows faster.

- Compare “rates of growth” of f(n) and g(n).

- Such an analysis is independent of machine time, programming style, etc.

Understanding Rate of Growth

• Consider the example of feeding elephants and goldfish:

Total Cost: (cost_of_feeding_elephants) + (cost_of_feeding_goldfish)

Approximation:

Total Cost ~ cost_of_feeding_elephants

Understanding Rate of Growth (cont’d)

• The low-order terms of a function are relatively insignificant for large n

$n^4 + 100n^2 + 10n + 50$

Approximation:

$n^4$

• Highest-order term determines rate of growth!
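
One way to see why the low-order terms stop mattering is to divide the full polynomial by its highest-order term and watch the ratio approach 1. The sketch below does that for the polynomial above; the function names are illustrative.

```cpp
#include <cassert>

// f(n) = n^4 + 100n^2 + 10n + 50, the polynomial from the slide.
double f(double n) { return n * n * n * n + 100 * n * n + 10 * n + 50; }

// Ratio of the full polynomial to its highest-order term alone.
double ratio_to_leading_term(double n) {
    assert(n > 0);
    return f(n) / (n * n * n * n);
}
```

At n = 1 the ratio is 161, so the low-order terms dominate. At n = 10 it is 2.015, and at n = 1000 it is within 0.02% of 1.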

Visualizing Orders of Growth

• On a graph, as you go to the right, a faster growing function eventually becomes larger...

Growth Rate Graph

Common orders of magnitude

Orders of Magnitude

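| $n$ | $\log_2 n$ | $n \log_2 n$ | $n^2$ | $n^3$ | $2^n$ |
|---|---|---|---|---|---|
| 2 | 1 | 2 | 4 | 8 | 4 |
| 4 | 2 | 8 | 16 | 64 | 16 |
| 8 | 3 | 24 | 64 | 512 | 256 |
| 16 | 4 | 64 | 256 | 4096 | 65536 |
| 32 | 5 | 160 | 1024 | 32768 | 4294967296 |
| 128 | 7 | 896 | 16384 | 2097152 | $3.4 \times 10^{38}$ |
| 1024 | 10 | 10240 | 1048576 | 1073741824 | $1.8 \times 10^{308}$ |
| 65536 | 16 | 1048576 | 4294967296 | $2.8 \times 10^{14}$ | Forget it! |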

Rate of Growth ≡ Asymptotic Analysis

• Using rate of growth as a measure to compare different functions implies comparing them asymptotically

• i.e., as n → ∞

• If f(x) is growing faster than g(x), then f(x) always eventually becomes larger than g(x) in the limit

• i.e., for large enough values of x

Because we prefer the worst-case analysis !

Complexity

• Let us assume two algorithms A and B that solve the same class of problems.

• The time complexity of A is 5,000n: T = f(n) = 5000n

• The time complexity of B is $2^n$ for an input with n elements: T = g(n) = $2^n$

• For n = 10,

• A requires $5 \times 10^4$ steps,

• but B only 1024,

• so B seems to be superior to A.

• For n = 1000,

• A requires $5 \times 10^6$ steps,

• while B requires $1.07 \times 10^{301}$ steps.
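
The point at which B stops looking superior can be found by brute force. The sketch below uses the two step counts from the slide; the function names are illustrative.

```cpp
#include <cassert>
#include <cstdint>

// Step counts from the slide: A takes 5000*n steps, B takes 2^n steps.
std::uint64_t steps_a(unsigned n) { return 5000ULL * n; }

std::uint64_t steps_b(unsigned n) {
    assert(n < 64);                 // 2^n must fit in 64 bits
    return std::uint64_t{1} << n;
}

// Smallest n >= 1 at which B needs more steps than A.
unsigned crossover() {
    unsigned n = 1;
    while (steps_b(n) <= steps_a(n)) ++n;
    return n;
}
```

Here `crossover()` returns 17: B is cheaper for every n up to 16, and from 17 onward its cost doubles with each extra element while the cost of A only grows by 5000.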

Asymptotic Notation

O notation: asymptotic “less than”:

f(n) = O(g(n)) implies: f(n) “≤” c*g(n) in the limit, where c is a constant

In English: “f(n) grows asymptotically no faster than g(n)”

worst-case analysis
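
For reference, the informal “≤ in the limit” statement is usually made precise as follows. This is the standard textbook definition rather than anything stated in the slides, and the constants $c$ and $n_0$ in the worked line are one valid choice among many.

```latex
f(n) = O(g(n)) \iff \exists\, c > 0,\ n_0 \ge 1 \ \text{such that}\ f(n) \le c \cdot g(n) \ \text{for all } n \ge n_0

% Worked example with f(n) = n^4 + 100n^2 + 10n + 50 and g(n) = n^4:
% for n \ge 1, each lower-order term is at most its coefficient times n^4, so
n^4 + 100n^2 + 10n + 50 \;\le\; n^4 + 100n^4 + 10n^4 + 50n^4 \;=\; 161\,n^4
% hence f(n) = O(n^4) with c = 161 and n_0 = 1.
```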

Asymptotic Notation

Ω notation: asymptotic “greater than”:

f(n) = Ω(g(n)) implies: f(n) “≥” c*g(n) in the limit, where c is a constant

In English: “f(n) grows asymptotically at least as fast as g(n)”

best-case analysis

*formal definition in CS477/677

Asymptotic Notation

Θ notation: asymptotic “equality”:

f(n) = Θ(g(n)) implies: f(n) “=” c*g(n) in the limit, where c is a constant

In English: “f(n) grows asymptotically as fast as g(n)”

tight bound analysis (best and worst cases are the same)

*formal definition in CS477/677

Common Misunderstanding

Worst case & Upper bound

Upper bound refers to a limit for the run-time of that algorithm.

Worst case refers to the worst input among the choices for possible inputs of a given size.

Big O in practice

1. Figure out T = f(n): run-time (number of basic operations) required on an input of size n

2. Remove low-order terms

More on big-O

O(g(n)) can be related to a set of functions f(n)

f(n) = O(g(n)) if “f(n)≤c*g(n)”

Big-O notation provides a machine-independent means for determining the efficiency of an algorithm.

Names of Orders of Magnitude

| Order | Name |
|---|---|
| $O(1)$ | bounded (by a constant) time |
| $O(\log_2 N)$ | logarithmic time |
| $O(N)$ | linear time |
| $O(N \log_2 N)$ | $N \log_2 N$ time |
| $O(N^2)$ | quadratic time |
| $O(N^3)$ | cubic time |
| $O(2^N)$ | exponential time |

Constant Time Algorithms

• An algorithm is O(1) when its running time is independent of the number of data items. The algorithm runs in constant time.

Direct Insert at Rear

[Figure: a list with its front and rear positions marked]

The storing of the element involves a simple assignment statement and thus has efficiency O(1).
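
A minimal sketch of a direct insert at the rear, assuming a fixed-capacity array with a `rear` index marking the next free slot. The struct, its capacity, and the function name are illustrative and not taken from the course code.

```cpp
#include <cassert>
#include <cstddef>

// Fixed-capacity array list; `rear` is the index of the next free slot.
struct ArrayList {
    static constexpr std::size_t kCapacity = 100;
    int data[kCapacity];
    std::size_t rear = 0;
};

// Stores `value` at the rear: one bounds check, one assignment, one increment,
// regardless of how many items the list already holds.
void insert_at_rear(ArrayList& list, int value) {
    assert(list.rear < ArrayList::kCapacity);
    list.data[list.rear] = value;
    ++list.rear;
}
```

The work done by `insert_at_rear` is the same whether the list holds 0 items or 99, which is what makes it O(1).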

Linear Time Algorithms

• An algorithm is O(n) when its running time is proportional to the size of the list.

• When the number of elements doubles, the number of operations doubles.

Sequential Search for the Minimum Element in an Array

Array (n = 5), positions 1 to 5: 32, 46, 8, 12, 3

Minimum element found in the list after n comparisons
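
The search for the minimum can be sketched as below. This variant seeds the minimum with the first element, so it records n - 1 element-to-element comparisons rather than n; either count is proportional to n. The names are illustrative.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

struct MinResult {
    int value;                // smallest element
    std::size_t position;     // 1-based position of the smallest element
    std::size_t comparisons;  // element-to-element comparisons performed
};

// Visits every element once, keeping the smallest value seen so far.
MinResult find_min(const std::vector<int>& a) {
    assert(!a.empty());
    MinResult r{a[0], 1, 0};
    for (std::size_t i = 1; i < a.size(); ++i) {
        ++r.comparisons;
        if (a[i] < r.value) {
            r.value = a[i];
            r.position = i + 1;
        }
    }
    return r;
}
```

For the array 32, 46, 8, 12, 3 it reports the value 3 at position 5 after 4 comparisons. Doubling the array length roughly doubles the comparison count.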

Logarithmic Time Algorithms

• The logarithm of n, base 2, is commonly used when analyzing computer algorithms, for example sorting algorithms.

Ex. $\log_2(2) = 1$

$\log_2(75) = 6.2288$

• When compared to the functions $n$ and $n^2$, the function $\log_2 n$ grows very slowly.

[Figure: growth curves of $n^2$, $n$, and $\log_2 n$]
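
A common way to picture $\log_2 n$ is as the number of times n can be halved before reaching 1, which is how logarithmic running times typically arise. The sketch below counts those halvings with integer division; the function name is illustrative.

```cpp
#include <cassert>

// Number of times n can be halved (integer division) before reaching 1.
// For n >= 1 this equals floor(log2(n)).
unsigned halvings(unsigned long n) {
    assert(n >= 1);
    unsigned count = 0;
    while (n > 1) {
        n /= 2;
        ++count;
    }
    return count;
}
```

`halvings(2)` is 1 and `halvings(75)` is 6, matching the integer part of the two logarithms above. Even for n = 65536 only 16 halvings are needed.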

How do we calculate T=f(n) for a program/algorithm?

1) Associate a "cost" with each statement

2) Find total number of times each statement is executed

3) Add up the costs
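
The three steps can be tried out on a small loop. The sketch below charges one unit of cost per executed statement, which is an assumption made for illustration, and sums an array while keeping the tally; the function name is hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Sums the array while tallying one unit of cost per executed statement.
long sum_with_cost(const std::vector<int>& a, std::size_t& cost) {
    cost = 0;
    long total = 0;      ++cost;        // assignment: runs once
    std::size_t i = 0;   ++cost;        // initialisation: runs once
    while (true) {
        ++cost;                         // loop test: runs n + 1 times
        if (!(i < a.size())) break;
        total += a[i];   ++cost;        // loop body: runs n times
        ++i;             ++cost;        // increment: runs n times
    }
    ++cost;                             // return: runs once
    assert(cost == 3 * a.size() + 4);   // adding up: T(n) = 3n + 4
    return total;
}
```

Adding the per-statement counts gives T(n) = 3n + 4 under this cost model, so an array of 5 elements costs 19 units. Dropping the low-order term and the constant factor leaves O(n).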

Running Time Examples

    i = 0;
    while (i < N)
    {
        X = X + Y;            // O(1)
        result = mystery(X);  // O(N)
        i++;                  // O(1)
    }

• The body of the while loop: O(N)

• Loop is executed: N times

Running time of the entire iteration?

N x O(N) = $O(N^2)$
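
To check the N x O(N) count empirically, the loop can be run with a stand-in for `mystery`. The slides do not define `mystery`, so the version below is a hypothetical function that simply performs N units of work per call and counts them.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical stand-in for mystery(): does n units of work per call.
long mystery(long x, std::size_t n, std::size_t& ops) {
    long acc = x;
    for (std::size_t k = 0; k < n; ++k) {
        acc += 1;
        ++ops;
    }
    return acc;
}

// Runs the loop from the slide and returns the total inner operations.
std::size_t count_loop_ops(std::size_t n) {
    std::size_t ops = 0;
    long x = 0, y = 1, result = 0;
    std::size_t i = 0;
    while (i < n) {
        x = x + y;                      // O(1)
        result = mystery(x, n, ops);    // O(N)
        i++;                            // O(1)
    }
    (void)result;                       // value unused; only the count matters
    assert(ops == n * n);
    return ops;
}
```

`count_loop_ops(10)` gives 100 and `count_loop_ops(20)` gives 400: doubling N quadruples the work, as expected for quadratic growth.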

Running Time Examples (cont.’d)

    if (i < j)
        for (i = 0; i < N; i++)   // O(N)
            X = X + i;
    else
        X = 0;                    // O(1)

Running time of the entire if-else statement?

Max (O(N), O(1)) = O(N)

Complexity Examples

What does the following algorithm compute?

    #include <cstdlib>   // for abs

    int who_knows(const int a[], int n)
    {
        int m = 0;
        for (int i = 0; i < n; i++)          // every pair (i, j) with i < j
            for (int j = i + 1; j < n; j++)
                if (abs(a[i] - a[j]) > m)
                    m = abs(a[i] - a[j]);
        return m;
    }

Returns the maximum difference between any two numbers in the input array.

Number of comparisons: (n-1) + (n-2) + (n-3) + … + 1 = (n-1)n/2 = $0.5n^2 - 0.5n$

Time complexity is $O(n^2)$

Complexity Examples

Another algorithm solving the same problem:

    int max_diff(const int a[], int n)   // assumes n >= 1
    {
        int min = a[0];
        int max = a[0];
        for (int i = 1; i < n; i++)
        {
            if (a[i] < min)
                min = a[i];
            else if (a[i] > max)
                max = a[i];
        }
        return max - min;
    }

Number of comparisons: at most 2n - 2

Time complexity is O(n).
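
The two approaches can be compared side by side by counting comparisons. The sketch below restates both with `std::vector` and a comparison counter; the function names and the counter parameter are additions for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdlib>
#include <vector>

// Quadratic version: compares every pair once.
int max_diff_pairs(const std::vector<int>& a, std::size_t& comparisons) {
    comparisons = 0;
    int m = 0;
    for (std::size_t i = 0; i < a.size(); ++i)
        for (std::size_t j = i + 1; j < a.size(); ++j) {
            ++comparisons;
            if (std::abs(a[i] - a[j]) > m) m = std::abs(a[i] - a[j]);
        }
    return m;
}

// Linear version: one pass tracking the minimum and maximum.
int max_diff_scan(const std::vector<int>& a, std::size_t& comparisons) {
    assert(!a.empty());
    comparisons = 0;
    int lo = a[0], hi = a[0];
    for (std::size_t i = 1; i < a.size(); ++i) {
        ++comparisons;                  // test against the minimum
        if (a[i] < lo) {
            lo = a[i];
        } else {
            ++comparisons;              // test against the maximum
            if (a[i] > hi) hi = a[i];
        }
    }
    return hi - lo;
}
```

On the array 32, 46, 8, 12, 3 both return 43. The pairwise version uses 10 comparisons, which is (n-1)n/2 for n = 5, while the scan uses 6, within the 2n - 2 = 8 bound. An ascending array such as 1, 2, 3, 4, 5 hits that bound exactly.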

Running time of various statements

Examples (cont.’d)

Examples (cont.’d)

Analyze the complexity of the following code segments

Homework #2: Algorithm analysis

• Already assigned on BB, due on 9/14/2014, 11:59PM

Next class & Reading

• Next class: ADTs of Lists, Stacks, and Queues

• Book Chapter 3: “Lists, Stacks, and Queues”