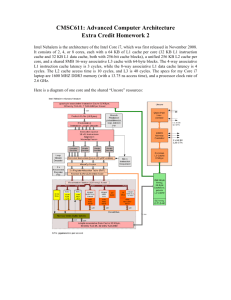

associative mapping cache


Cheng-Chang Yang

Generally speaking, faster memory is more expensive than slower memory. To provide the best performance at a reasonable cost, memory is organized as a hierarchy.

Memory Hierarchy

The basic types of memory in a hierarchical memory system are:

- Registers
- Cache Memory
- Main Memory
- Secondary Memory: hard disk, CD…

What is Cache Memory?

Cache memory speeds up memory accesses by keeping recently used data closer to the CPU, instead of leaving it only in main memory.

Cache and Main Memory

The Level 2 cache is slower and larger than the Level 1 cache, and the Level 3 cache is slower and larger than the Level 2 cache.

- Speed: Level 1 > Level 2 > Level 3
- Capacity: Level 1 < Level 2 < Level 3

Flow Chart (Cache Read Operation)

RA: read address

Cache Mapping Function

How do we determine which main memory block currently occupies a cache line? There are three mapping functions:

- Direct Mapping
- Associative Mapping
- Set Associative Mapping

Direct Mapped Cache

Direct mapping maps each block of main memory into only one possible cache line.

Associative Mapping

Instead of placing main memory blocks in specific cache locations based on the main memory address, we could allow a block to go anywhere in the cache. In this way, the cache would have to fill up before any block is moved out. This is how an associative mapping cache works.

Associative mapping overcomes the disadvantages of direct mapping by permitting each main memory block to be loaded into any line of the cache.

Once the cache is full, we must determine which block to move out of it. A simple first-in first-out (FIFO) algorithm would work. However, there are many replacement algorithms that can be used; these are discussed later.

Set Associative Mapping

The problem with direct mapping is eased by having a few choices for block placement. At the same time, the hardware cost is reduced by decreasing the size of the associative mapping search.
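The three mapping functions described so far differ only in how many cache lines a given block is allowed to occupy. The following is a minimal Python sketch of that idea. The function name `candidate_lines`, the modulo placement rule, and the 8-line cache are illustrative assumptions, not something stated in the slides.

```python
def candidate_lines(block, num_lines, ways):
    """Return the cache lines where a main memory block may be placed.

    ways == 1          -> direct mapping (exactly one possible line)
    ways == num_lines  -> associative mapping (any line in the cache)
    otherwise          -> set associative mapping (any line in one set)

    Assumption: blocks are assigned to sets by block number modulo the
    number of sets, which is a common textbook placement rule.
    """
    if num_lines % ways != 0:
        raise ValueError("num_lines must be a multiple of ways")
    num_sets = num_lines // ways
    set_index = block % num_sets      # which set the block falls into
    first = set_index * ways          # first cache line of that set
    return list(range(first, first + ways))


NUM_LINES = 8  # hypothetical cache size, in lines

direct = candidate_lines(13, NUM_LINES, ways=1)   # one possible line
two_way = candidate_lines(13, NUM_LINES, ways=2)  # two possible lines
fully = candidate_lines(13, NUM_LINES, ways=8)    # every line is possible
```

With one way the block has a single home, which is what makes direct mapping cheap but inflexible. With as many ways as lines the whole cache must be searched. The values in between give the few choices for block placement that set associative mapping relies on.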
Set associative mapping is a compromise that keeps the strengths of both direct mapping and associative mapping while reducing their disadvantages.

Replacement Algorithms

For direct mapping there is only one possible line for any block, so no choice is possible. For associative and set associative mapping, a replacement algorithm is needed:

- Least recently used (LRU)
- First in first out (FIFO)
- Least frequently used (LFU)
- Random

Least recently used (LRU): keeps track of the last time each block was accessed and evicts the block that has gone unused for the longest period of time.

First in first out (FIFO): the block that has been in the cache the longest is selected and removed from cache memory.

Least frequently used (LFU): replaces the block in the set that has experienced the fewest references.

Of these, least recently used (LRU) is generally considered the most effective.
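To make the difference between LRU and FIFO concrete, here is a small Python sketch of an associative cache that supports both policies. The class name `AssociativeCache`, the helper `count_hits`, the 3-line cache size, and the access trace are all hypothetical and chosen only for illustration. A real cache implements this in hardware rather than with a dictionary.

```python
from collections import OrderedDict


class AssociativeCache:
    """Toy associative cache: a block may occupy any line."""

    def __init__(self, num_lines, policy="LRU"):
        if policy not in ("LRU", "FIFO"):
            raise ValueError("policy must be 'LRU' or 'FIFO'")
        self.num_lines = num_lines
        self.policy = policy
        # Keys are block numbers; the first key is the next eviction victim.
        self.lines = OrderedDict()

    def access(self, block):
        """Access a block; return True on a hit, False on a miss."""
        if block in self.lines:
            if self.policy == "LRU":
                # A hit makes this block the most recently used one.
                self.lines.move_to_end(block)
            # FIFO ignores hits: eviction order depends on load time only.
            return True
        if len(self.lines) >= self.num_lines:
            # Cache is full: evict the block at the front of the order.
            self.lines.popitem(last=False)
        self.lines[block] = None
        return False


def count_hits(policy, trace, num_lines=3):
    """Replay a trace of block numbers and count the cache hits."""
    cache = AssociativeCache(num_lines, policy)
    return sum(cache.access(block) for block in trace)


trace = [1, 2, 3, 1, 4, 1, 2]  # hypothetical sequence of block accesses
lru_hits = count_hits("LRU", trace)
fifo_hits = count_hits("FIFO", trace)
```

On this particular trace LRU scores two hits and FIFO one, because FIFO evicts block 1 even though it was just reused. Other traces can favor either policy, so this is an illustration rather than a proof.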