Introduction to Text Classification

Text Classification

Slides by

Tom Mitchell (NB),

William Cohen (kNN),

Ray Mooney and others at UT-Austin,

me

1

Outline

• Problem definition and applications

• Very Quick Intro to Machine Learning and Classification

– Learning bounds

– Bias-variance tradeoff, No free lunch theorem

• Maximum Entropy Models

• Other Classification Techniques

• Representations

– Vector Space Model (and variations)

– Feature Selection

– Dimensionality Reduction

– Representations and independence assumptions

– Sparsity and smoothing

2

Spam or not Spam?

• Most people who’ve ever used email have

developed a hatred of spam

• In the days before Gmail (and still today), you

could get hundreds of spam messages per day.

• “Spam Filters” were developed to automatically

classify, with high (but not perfect) accuracy,

which messages are spam and which aren’t.

3

Text Classification Problem

Let D be the space of all possible documents

Let C be the space of possible classes

Let H be the space of all possible hypotheses (or

classifiers)

Input: a labeled sample X = {<d,c> | d in D and c in C}

Output: a hypothesis h in H, h: D → C, for predicting, with high accuracy, the class of previously unseen documents

4

Example Applications

•

News topic classification (e.g., Google News)

C={politics,sports,business,health,tech,…}

•

“SafeSearch” filtering

C={pornography, not pornography}

•

Language classification

C={English,Spanish,Chinese,…}

•

Sentiment classification

C={positive review,negative review}

•

Email sorting

C={spam,meeting reminders,invitations, …} – user-defined!

5

Machine Learning

A “learning machine” is an algorithm that

searches for an accurate classifier.

Remember:

Let D be the space of all possible documents

Let C be the space of possible classes

Let H be the space of all possible hypotheses (or classifiers)

Input: a labeled sample X = {<d,c> | d in D and c in C}

Output: a hypothesis h in H, h: D → C, for predicting, with high accuracy, the class of previously unseen documents

7

Concrete Example

Let C = {“Spam”, “Not Spam”} or {S,N}

Let H be the set of conjunctive rules, like:

“if document d contains ‘free credit score’ AND ‘click here’ → Spam”

8

A Simple Learning Algorithm

1. Pick a class c (S or N)

2. Find the term t that correlates best with c

3. Construct a rule r: “If d contains t → c”

4. Repeatedly find more terms that correlate with c

5. Add the new terms to r, until the accuracy stops improving on the training data.
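The steps above can be sketched in a few lines of Python. This is only an illustrative sketch: the names `learn_rule` and `accuracy` are hypothetical, documents are assumed to be whitespace-separated strings, and "correlates best with c" is approximated by "gives the highest training accuracy when added to the rule", which is an assumption rather than something the slide specifies.

```python
def accuracy(rule_terms, data, target, other):
    """Training accuracy of the conjunctive rule 'all terms present -> target'."""
    hits = 0
    for doc, label in data:
        tokens = set(doc.split())
        predicted = target if all(t in tokens for t in rule_terms) else other
        hits += predicted == label
    return hits / len(data)


def learn_rule(data, target="S", other="N"):
    """Greedily add terms to the rule while training accuracy keeps improving."""
    vocab = sorted({tok for doc, _ in data for tok in doc.split()})
    rule, best = [], 0.0
    while True:
        choice = None
        for term in vocab:
            if term in rule:
                continue
            acc = accuracy(rule + [term], data, target, other)
            if acc > best:  # strictly better than anything seen so far
                best, choice = acc, term
        if choice is None:  # accuracy stopped improving
            return rule, best
        rule.append(choice)


train = [("free click", "S"), ("free click now", "S"),
         ("free lunch", "N"), ("click link", "N")]
print(learn_rule(train))  # (['click', 'free'], 1.0)
```

Note that the stopping criterion uses training accuracy only, exactly as in step 5, so a rule learned this way can overfit.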

9

Loss Function:

Measuring “Accuracy”

A loss function is a function L: H x D x C → [0,1]

Given a hypothesis h, document d, and class c,

L(h,d,c) returns the error or loss of h when

making a prediction on d.

Simple Example:

L(h,d,c) = 0 if h(d)=c, and 1 otherwise.

This is called 0-1 loss.
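As a small sketch, 0-1 loss and its average over a labeled sample can be written as follows. The helper name `empirical_loss` and the toy hypothesis `h` are hypothetical and only serve as an illustration.

```python
def zero_one_loss(h, d, c):
    """L(h, d, c) = 0 if h(d) == c, and 1 otherwise."""
    return 0 if h(d) == c else 1


def empirical_loss(h, sample):
    """Average 0-1 loss of h over a labeled sample of (d, c) pairs."""
    return sum(zero_one_loss(h, d, c) for d, c in sample) / len(sample)


# Toy hypothesis: call a document spam if it contains the token "free".
def h(d):
    return "S" if "free" in d.split() else "N"


sample = [("free credit score", "S"), ("lunch at noon", "N"), ("free lunch", "N")]
print(empirical_loss(h, sample))  # one error out of three documents
```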

10

4 Things Everyone Should Know

About Machine Learning

1. Assumptions

2. Generalization Bounds and Occam’s

Razor

3. Bias-Variance Tradeoff

4. No Free Lunch

11

1. Assumptions

Machine learning traditionally makes two

important (and often unrealistic)

assumptions.

1. There is a probability distribution P (not necessarily

known, but it’s assumed to exist) from which all

examples d are drawn (training and test examples).

2. Each example is drawn independently from this

distribution.

Together, these are known as ‘i.i.d.’: independent and

identically distributed.

12

Why are the assumptions

important?

Basically, it’s hard to make a prediction

about a document if all of your training

examples are totally different.

With these assumptions, you’re saying it’s

very unlikely (with enough training data)

that you’ll see a test example that’s totally

different from all of your training data.

13

2. Generalization Bounds

Given the assumptions above, it’s possible to

prove theoretically that an algorithm can learn

something useful.

Generalization Bound by Vapnik-Chervonenkis:

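With probability 1-δ over the choice of training data,

$$E_{d \sim P}\, L(h, d) \;\le\; \frac{1}{|Train|} \sum_{d \in Train} L(h, d) \;+\; \sqrt{\frac{h\,\big(\log(2\,|Train| / h) + 1\big) - \log(\delta / 4)}{|Train|}}$$

Here, the h that appears outside the loss L (inside the square root) is the VC-dimension of the learning machine, not the hypothesis.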

If the learning machine is complex, h is big.

If it’s simple, h is small.
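To get a feel for the bound, the sketch below evaluates its complexity term (the square-root part of the Vapnik-Chervonenkis bound) for a few settings. The function name `vc_confidence` is hypothetical, and natural logarithms are assumed.

```python
import math


def vc_confidence(h, n, delta):
    """sqrt((h * (log(2n/h) + 1) - log(delta/4)) / n) for VC-dimension h,
    n training examples, and confidence parameter delta."""
    return math.sqrt((h * (math.log(2 * n / h) + 1) - math.log(delta / 4)) / n)


# A more complex machine (larger h) gets a looser bound on the same data,
# and more training data tightens the bound.
print(vc_confidence(10, 1000, 0.05))
print(vc_confidence(100, 1000, 0.05))
print(vc_confidence(10, 10000, 0.05))
```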

14

2. Bounds and Occam’s Razor

Occam’s Razor: All other things being

equal, the simplest explanation is the best.

Generalization bounds lend some theoretical

credence to this old rule-of-thumb.

15

3. Bias and Variance

• Bias: The built-in tendency of a learning

machine or hypothesis class to find a hypothesis

in a pre-determined region of the space of all

possible classifiers.

e.g., our rule hypotheses are biased towards axis-parallel lines

• Variance: The degree to which a learning

algorithm is sensitive to small changes in the

training data.

– If a small change in training data causes a large

change in the resulting classifier, then the learning

algorithm has “high variance”.

16

3. Bias-Variance Tradeoff

As a general rule,

the more biased a learning machine,

the less variance it has,

and the more variance it has,

the less biased it is.

17

4. No Free Lunch Theorem

Simply put, this famous theorem says:

If your learning machine has no bias at all,

then it’s impossible to learn anything.

The proof is simple, but out of the scope of

this lecture. You should check it out.

18

Machine Learning Techniques

for NLP

• NLP people tend to favor certain kinds of

learning machines:

– Maximum entropy (or log-linear, or logistic

regression, or logit) models

(gaining in popularity lately)

– Bayesian networks

(directed graphical models, like Naïve Bayes)

– Support vector machines

(but only for certain things, like text

classification and information

extraction)

20

Hypothesis Class

A maximum entropy/log-linear model (ME) is any function with this form:

$$h(d) = \arg\max_c P(c \mid d)$$

$$P(c \mid d) = \frac{\exp \sum_i \lambda_i f_i(c, d)}{\sum_{c'} \exp \sum_i \lambda_i f_i(c', d)}$$

Normalization function:

$$Z(d) = \sum_{c'} \exp \sum_i \lambda_i f_i(c', d)$$

“Log-linear”: If you take the log, it’s a linear function.

21

Feature Functions

The functions fi are called feature functions

(or sometimes just features).

These must be defined by the person

designing the learning machine.

Example:

fi(c,d) = [If c=S, count of how often “free”

appears in d. Otherwise, 0.]

22

Parameters

The λi are called the parameters of the

model.

During training, the learning algorithm tries

to find the best value for the λi.

23

Example ME Hypothesis

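$$h(d) = \arg\max_c P(c \mid d)$$

$$P(c \mid d) = \frac{\exp\big(2 f_1(c, d) + 1.5 f_2(c, d)\big)}{\sum_{c'} \exp\big(2 f_1(c', d) + 1.5 f_2(c', d)\big)}$$

$$f_1(c, d) = \begin{cases} \text{count}(\text{“free”}, d), & \text{if } c = \text{Spam} \\ 0, & \text{otherwise} \end{cases}$$

$$f_2(c, d) = \begin{cases} \text{count}(\text{“alex”}, d), & \text{if } c = \text{NotSpam} \\ -\text{count}(\text{“alex”}, d), & \text{otherwise} \end{cases}$$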
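The example hypothesis can be sketched directly in Python. Assumptions: a document is a list of tokens, the two weighted features are added inside the exponent (weights 2 and 1.5), and the names `score`, `prob` and `classify` are hypothetical.

```python
import math

CLASSES = ["Spam", "NotSpam"]


def f1(c, d):
    # Count of "free" in d, active only for the Spam class.
    return d.count("free") if c == "Spam" else 0


def f2(c, d):
    # Count of "alex" in d: positive for NotSpam, negative otherwise.
    n = d.count("alex")
    return n if c == "NotSpam" else -n


FEATURES = [f1, f2]
LAMBDAS = [2.0, 1.5]


def score(c, d):
    """Linear part: sum_i lambda_i * f_i(c, d)."""
    return sum(lam * f(c, d) for lam, f in zip(LAMBDAS, FEATURES))


def prob(c, d):
    """P(c | d) = exp(score(c, d)) / Z(d)."""
    z = sum(math.exp(score(c2, d)) for c2 in CLASSES)
    return math.exp(score(c, d)) / z


def classify(d):
    """h(d) = argmax_c P(c | d)."""
    return max(CLASSES, key=lambda c: prob(c, d))


print(classify("free free offer".split()))  # Spam
print(classify("hi alex".split()))          # NotSpam
```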

24

Why is it “Maximum Entropy”?

Before we get into how to train one of these, let’s

get an idea of why people use it.

The basic intuition is from Occam’s Razor: we

want to find the “simplest” probability distribution

P(c | d) that explains the training data.

Note that this also introduces bias: we’re biasing

our search towards “simple” distributions.

But what makes a distribution “simple”?

25

Entropy

Entropy is a measure of how much uncertainty is in a probability distribution.

$$H(P) = -\sum_x P(x) \log P(x)$$

Examples (logs are base 2, and 0 log 0 is taken to be 0):

Entropy of a deterministic event:

H(1,0) = -1 log 1 – 0 log 0

= (-1) * (0) - 0

= 0

Entropy of flipping a coin:

H(1/2,1/2) = -1/2 log 1/2 – 1/2 log 1/2

= -(1/2) * (-1) - (1/2) * (-1)

= 1

Entropy of rolling a six-sided die:

H(1/6,…,1/6) = -1/6 log 1/6 – … - 1/6 log 1/6

= -1/6 * -2.585 - … - 1/6 * -2.585

= 2.585
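The three examples can be checked numerically with a short sketch. Base-2 logarithms are assumed (the coin-flip example implies them), and terms with P(x) = 0 are skipped, following the usual convention that 0 log 0 = 0.

```python
import math


def entropy(probs):
    """H(P) = -sum_x P(x) * log2 P(x), skipping zero-probability outcomes."""
    return sum(-p * math.log2(p) for p in probs if p > 0)


print(entropy([1, 0]))        # deterministic event
print(entropy([0.5, 0.5]))    # fair coin
print(entropy([1 / 6] * 6))   # fair six-sided die, i.e. log2(6)
```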

28

Entropy

Entropy of a biased coin flip:

Let P(Heads) represent the probability that the biased coin lands on Heads.

[Figure: H(P) on the vertical axis plotted against P(Heads), from 0 to 1, on the horizontal axis]

Maximum Entropy Setting for P(Heads): P(Heads) = P(not Heads).

If event X has N possible outcomes, the maximum entropy setting for p(x1),p(x2),…,p(xN) is p(x1)=p(x2)=…=p(xN)=1/N.

Occam’s Razor for Distributions

Given a set of empirical expectations of the form $E_{\langle c,d \rangle \in Train}\, f_i(c,d)$

Find a distribution P(c | d) such that

- it provides the same expectations (matches the training data):

$$E_{\langle c,d \rangle \sim P(c|d)}\, f_i(c,d) = E_{\langle c,d \rangle \in Train}\, f_i(c,d)$$

- it maximizes the entropy H(P) (Occam’s Razor bias)

30

Theorem

The maximum entropy distribution for P(c|d), subject to the constraints

$$E_{\langle c,d \rangle \sim P(c|d)}\, f_i(c,d) = E_{\langle c,d \rangle \in Train}\, f_i(c,d)$$

must have log-linear form.

Thus, max-ent models have to be log-linear models.

31

Training a ME model

Training is an optimization problem: find the value for λ that maximizes the conditional log-likelihood of the training data:

$$CLL(Train) = \sum_{\langle c,d \rangle \in Train} \log P(c \mid d) = \sum_{\langle c,d \rangle \in Train} \Big[ \sum_i \lambda_i f_i(c,d) - \log Z(d) \Big]$$

32

Training a ME model

Optimization is normally performed using some form of gradient ascent (equivalently, gradient descent on the negative CLL). Here λ(t) is the whole parameter vector at iteration t:

0) Initialize λ(0) to 0

1) Compute the gradient: ∇CLL

2) Take a step in the direction of the gradient:

λ(t+1) = λ(t) + α ∇CLL

3) Repeat until CLL doesn’t improve:

stop when |CLL(λ(t+1)) – CLL(λ(t))| < ε

33

Training a ME model

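Computing the gradient (j indexes the sum over all features, i is the parameter being differentiated):

$$\frac{\partial}{\partial \lambda_i} CLL(Train) = \frac{\partial}{\partial \lambda_i} \sum_{\langle c,d \rangle \in Train} \Big[ \sum_j \lambda_j f_j(c,d) - \log Z(d) \Big]$$

$$= \sum_{\langle c,d \rangle \in Train} \Big[ f_i(c,d) - \frac{\partial}{\partial \lambda_i} \log \sum_{c'} \exp \sum_j \lambda_j f_j(c',d) \Big]$$

$$= \sum_{\langle c,d \rangle \in Train} \Big[ f_i(c,d) - \frac{\sum_{c'} f_i(c',d) \exp \sum_j \lambda_j f_j(c',d)}{\sum_{c''} \exp \sum_j \lambda_j f_j(c'',d)} \Big]$$

$$= \sum_{\langle c,d \rangle \in Train} \Big[ f_i(c,d) - E_{c' \sim P(c'|d)}\, f_i(c',d) \Big]$$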
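Putting the objective, the update rule and the gradient together, a minimal training loop might look like the sketch below. It is not an optimized implementation: the name `train_maxent`, the fixed step size `alpha`, the iteration cap `max_iter`, and the toy data are all assumptions made for illustration. The gradient for each parameter is the observed feature value minus its expected value under the current model, summed over the training pairs.

```python
import math


def train_maxent(train, classes, features, alpha=0.1, eps=1e-6, max_iter=2000):
    """Gradient ascent on the conditional log-likelihood.
    train is a list of (c, d) pairs; features is a list of functions f(c, d)."""
    lam = [0.0] * len(features)

    def probs(d):
        scores = [sum(l * f(c, d) for l, f in zip(lam, features)) for c in classes]
        m = max(scores)  # subtract the max for numerical stability
        exps = [math.exp(s - m) for s in scores]
        z = sum(exps)
        return [e / z for e in exps]

    def cll():
        return sum(math.log(probs(d)[classes.index(c)]) for c, d in train)

    prev = cll()
    for _ in range(max_iter):
        grad = [0.0] * len(features)
        for c, d in train:
            p = probs(d)
            for i, f in enumerate(features):
                expected = sum(pc * f(c2, d) for pc, c2 in zip(p, classes))
                grad[i] += f(c, d) - expected  # observed minus expected
        lam = [l + alpha * g for l, g in zip(lam, grad)]
        cur = cll()
        if abs(cur - prev) < eps:  # CLL stopped improving
            break
        prev = cur
    return lam


def f_free(c, d):
    return d.count("free") if c == "S" else 0


def f_alex(c, d):
    n = d.count("alex")
    return n if c == "N" else -n


data = [("S", ["free"]), ("S", ["free", "free"]), ("N", ["free"]),
        ("N", ["alex"]), ("N", ["hello"])]
print(train_maxent(data, ["S", "N"], [f_free, f_alex]))
```

In practice a line search or a quasi-Newton method is usually preferred over a fixed step size, but the structure of the loop stays the same.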

34

Classification Techniques

• Book mentions three:

– Naïve Bayes

– k-Nearest Neighbor

– Support Vector Machines

• Others (besides ME):

– Rule-based systems

• Decision lists (e.g., Ripper)

• Decision trees (e.g. C4.5)

– Perceptron and Neural Networks

36

Bayes Rule

Which is shorthand for:

For code, see

www.cs.cmu.edu/~tom/mlbook.html

click on “Software and Data”

How can we implement this if the ai are continuous-valued attributes?

Assume P(ai|vj) follows a Gaussian distribution (also called the normal distribution), and use the training data to estimate its mean and variance.
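A rough sketch of this idea for a Naïve Bayes classifier: estimate one mean and one variance per attribute and class, then score a new example with the Gaussian log-density. The function names and the small variance floor `1e-9` are assumptions of this sketch, not part of the slides.

```python
import math
from collections import defaultdict


def fit_gaussian_nb(X, y):
    """Estimate the class prior and per-attribute mean/variance for each class."""
    by_class = defaultdict(list)
    for row, label in zip(X, y):
        by_class[label].append(row)
    model = {}
    for label, rows in by_class.items():
        n = len(rows)
        columns = list(zip(*rows))
        means = [sum(col) / n for col in columns]
        # Maximum-likelihood variance, with a tiny floor to avoid division by zero.
        variances = [sum((v - m) ** 2 for v in col) / n + 1e-9
                     for col, m in zip(columns, means)]
        model[label] = (n / len(X), means, variances)
    return model


def log_gaussian(x, mean, var):
    return -0.5 * math.log(2 * math.pi * var) - (x - mean) ** 2 / (2 * var)


def predict(model, row):
    """Return the class with the highest log prior plus summed log-densities."""
    def log_posterior(label):
        prior, means, variances = model[label]
        return math.log(prior) + sum(
            log_gaussian(x, m, v) for x, m, v in zip(row, means, variances))
    return max(model, key=log_posterior)


X = [[1.0], [1.2], [0.8], [3.0], [3.2], [2.8]]
y = ["a", "a", "a", "b", "b", "b"]
model = fit_gaussian_nb(X, y)
print(predict(model, [1.1]), predict(model, [2.9]))  # a b
```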

K-nearest neighbor methods

William Cohen

10-601 April 2008

52

BellCore’s MovieRecommender

• Participants sent email to videos@bellcore.com

• System replied with a list of 500 movies to rate on a 1-10

scale (250 random, 250 popular)

– Only subset need to be rated

• New participant P sends in rated movies via email

• System compares ratings for P to ratings of (a random

sample of) previous users

• Most similar users are used to predict scores for unrated

movies (more later)

• System returns recommendations in an email message.

53

Suggested Videos for: John A. Jamus.

Your must-see list with predicted ratings:

•7.0 "Alien (1979)"

•6.5 "Blade Runner"

•6.2 "Close Encounters Of The Third Kind (1977)"

Your video categories with average ratings:

•6.7 "Action/Adventure"

•6.5 "Science Fiction/Fantasy"

•6.3 "Children/Family"

•6.0 "Mystery/Suspense"

•5.9 "Comedy"

•5.8 "Drama"

54

The viewing patterns of 243 viewers were consulted. Patterns of 7 viewers were found to be most similar.

Correlation with target viewer:

•0.59 viewer-130 (unlisted@merl.com)

•0.55 bullert,jane r (bullert@cc.bellcore.com)

•0.51 jan_arst (jan_arst@khdld.decnet.philips.nl)

•0.46 Ken Cross (moose@denali.EE.CORNELL.EDU)

•0.42 rskt (rskt@cc.bellcore.com)

•0.41 kkgg (kkgg@Athena.MIT.EDU)

•0.41 bnn (bnn@cc.bellcore.com)

By category, their joint ratings recommend:

•Action/Adventure:

•"Excalibur" 8.0, 4 viewers

•"Apocalypse Now" 7.2, 4 viewers

•"Platoon" 8.3, 3 viewers

•Science Fiction/Fantasy:

•"Total Recall" 7.2, 5 viewers

•Children/Family:

•"Wizard Of Oz, The" 8.5, 4 viewers

•"Mary Poppins" 7.7, 3 viewers

•Mystery/Suspense:

•"Silence Of The Lambs, The" 9.3, 3 viewers

•Comedy:

•"National Lampoon's Animal House" 7.5, 4 viewers

•"Driving Miss Daisy" 7.5, 4 viewers

•"Hannah and Her Sisters" 8.0, 3 viewers

•Drama:

•"It's A Wonderful Life" 8.0, 5 viewers

•"Dead Poets Society" 7.0, 5 viewers

•"Rain Man" 7.5, 4 viewers

Correlation of predicted ratings with your actual ratings is: 0.64. This number measures ability to evaluate movies accurately for you. 0.15 means low ability. 0.85 means very good ability. 0.50 means fair ability.

55

Algorithms for Collaborative Filtering 1:

Memory-Based Algorithms (Breese et al, UAI98)

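• $v_{i,j}$ = vote of user i on item j

• $I_i$ = items for which user i has voted

• Mean vote for i is

$$\bar{v}_i = \frac{1}{|I_i|} \sum_{j \in I_i} v_{i,j}$$

• Predicted vote for “active user” a is weighted sum

$$p_{a,j} = \bar{v}_a + \kappa \sum_{i=1}^{n} w(a,i)\,(v_{i,j} - \bar{v}_i)$$

where κ is a normalizer and w(a,i) are the weights of the n similar users.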
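A minimal sketch of the memory-based prediction: the active user's mean vote plus a normalized, weighted sum of the other users' deviations from their own means. The weights are taken as given (for example, correlations with the active user), and the normalizer is chosen so that the absolute weights sum to one. The function names and the dictionary-based data layout are assumptions of this sketch.

```python
def mean_vote(votes):
    """Mean of a user's votes; votes maps item -> rating."""
    return sum(votes.values()) / len(votes)


def predict_vote(active, others, weights, item):
    """Predicted vote of the active user on item.
    others maps user -> votes dict, weights maps user -> similarity weight."""
    raters = [u for u in others if item in others[u]]
    norm = sum(abs(weights[u]) for u in raters)
    if norm == 0:
        return mean_vote(active)  # nobody comparable rated the item
    kappa = 1.0 / norm
    deviation = sum(weights[u] * (others[u][item] - mean_vote(others[u]))
                    for u in raters)
    return mean_vote(active) + kappa * deviation


active = {"Alien": 8, "Platoon": 6}
others = {"u1": {"Alien": 9, "Excalibur": 7}}
print(predict_vote(active, others, {"u1": 0.5}, "Excalibur"))  # 6.0
```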

56

Basic k-nearest neighbor classification

• Training method:

– Save the training examples

• At prediction time:

– Find the k training examples (x1,y1),…(xk,yk)

that are closest to the test example x

– Predict the most frequent class among those

yi’s.

• Example:

57

http://cgm.cs.mcgill.ca/~soss/cs644/projects/simard/

What is the decision boundary?

Voronoi diagram

58

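Convergence of 1-NN

Let x1 be the nearest neighbor of the test point x, with label y1, and let y be the label of x. Assume P(Y|x) and P(Y|x1) are equal, and let y* = argmax Pr(y|x). Then:

$$P(\text{knnError}) = 1 - \Pr(y = y_1)$$

$$= 1 - \sum_{y'} \Pr(Y = y' \mid x)^2$$

$$= 1 - \Pr(y^* \mid x)^2 - \sum_{y' \ne y^*} \Pr(Y = y' \mid x)^2$$

$$= \dots$$

$$\le 2\,(1 - \Pr(y^* \mid x))$$

$$= 2\,(\text{Bayes optimal error rate})$$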

59

Basic k-nearest neighbor classification

• Training method:

– Save the training examples

• At prediction time:

– Find the k training examples (x1,y1),…(xk,yk) that are closest to

the test example x

– Predict the most frequent class among those yi’s.

• Improvements:

– Weighting examples from the neighborhood

– Measuring “closeness”

– Finding “close” examples in a large training set quickly
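The basic procedure fits in a few lines. This sketch assumes numeric feature vectors and Euclidean distance (via `math.dist`, available in Python 3.8+); the name `knn_predict` is hypothetical.

```python
import math
from collections import Counter


def knn_predict(train, x, k=3):
    """train is a list of (vector, label) pairs. "Training" is just keeping
    this list; prediction sorts it by distance to x and takes a majority vote."""
    neighbors = sorted(train, key=lambda ex: math.dist(ex[0], x))[:k]
    return Counter(label for _, label in neighbors).most_common(1)[0][0]


train = [((0, 0), "o"), ((0, 1), "o"), ((1, 0), "o"),
         ((5, 5), "+"), ((5, 6), "+"), ((6, 5), "+")]
print(knn_predict(train, (0.5, 0.5)))  # o
print(knn_predict(train, (5.5, 5.5)))  # +
```

Sorting the whole training set costs O(n log n) per query, which is exactly the cost that the "finding close examples quickly" improvement tries to avoid.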

60

K-NN and irrelevant features

[Figures: three scatter plots of “+” and “o” training examples around a query point “?”]

63

Ways of rescaling for KNN

Normalized L1 distance:

Scale by IG:

Modified value distance metric:

64

Ways of rescaling for KNN

Dot product:

Cosine distance:

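TFIDF weights for text: for doc j, feature i: xi = tfi,j * idfi, where tfi,j is based on the number of occurrences of term i in doc j, and idfi is based on the number of docs in the corpus and the number of docs in the corpus that contain term i.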

Combining distances to neighbors

Standard KNN:

$$\hat{y} = \arg\max_y C(y, \text{Neighbors}(x))$$

$$C(y, D') \equiv |\{(x', y') \in D' : y' = y\}|$$

Distance-weighted KNN:

$$\hat{y} = \arg\max_y C(y, \text{Neighbors}(x))$$

$$C(y, D') \equiv \sum_{\{(x', y') \in D' : y' = y\}} SIM(x, x')$$

$$C(y, D') \equiv 1 - \prod_{\{(x', y') \in D' : y' = y\}} \big(1 - SIM(x, x')\big)$$

$$SIM(x, x') \equiv 1 - \Delta(x, x')$$
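The three ways of combining neighbors can be compared on a toy neighborhood. In this sketch each neighbor is a `(similarity, label)` pair with similarity in [0, 1]; the function names are hypothetical.

```python
from collections import Counter, defaultdict


def count_vote(neighbors):
    """Standard KNN: the most frequent label among the neighbors."""
    return Counter(label for _, label in neighbors).most_common(1)[0][0]


def sum_vote(neighbors):
    """Distance-weighted KNN: sum the similarities per label."""
    totals = defaultdict(float)
    for sim, label in neighbors:
        totals[label] += sim
    return max(totals, key=totals.get)


def noisy_or_vote(neighbors):
    """Distance-weighted KNN: 1 - prod(1 - SIM) per label."""
    miss = defaultdict(lambda: 1.0)
    for sim, label in neighbors:
        miss[label] *= (1.0 - sim)
    scores = {label: 1.0 - m for label, m in miss.items()}
    return max(scores, key=scores.get)


# One very similar "a" neighbor against two weakly similar "b" neighbors.
neighbors = [(0.9, "a"), (0.3, "b"), (0.3, "b")]
print(count_vote(neighbors), sum_vote(neighbors), noisy_or_vote(neighbors))  # b a a
```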

Computing KNN: pros and cons

• Storage: all training examples are saved in memory

– A decision tree or linear classifier is much smaller

• Time: to classify x, you need to loop over all training

examples (x’,y’) to compute distance between x and

x’.

– However, you get predictions for every class y

• KNN is nice when there are many many classes

– Actually, there are some tricks to speed this up…especially

when data is sparse (e.g., text)

70

Efficiently implementing KNN (for text)

IDF is nice

computationally

71

Tricks with fast KNN

K-means using r-NN

1. Pick k points c1=x1,…,ck=xk as centers

2. For each xi, find Di=Neighborhood(xi)

3. For each xi, let ci=mean(Di)

4. Go to step 2…

72

Efficiently implementing KNN

Selective classification: given a training set and test set, find the N test cases that you can most confidently classify

[Figure: documents dj2, dj3, dj4]

73

Support Vector Machines

Slides by Ray Mooney et al.

U. Texas at Austin machine learning group

Perceptron Revisited: Linear

Separators

• Binary classification can be viewed as the task of separating classes in feature space:

$w^T x + b = 0$ on the separator, $w^T x + b > 0$ on one side, $w^T x + b < 0$ on the other

$$f(x) = \text{sign}(w^T x + b)$$

Linear Separators

• Which of the linear separators is optimal?

Classification Margin

• Distance from example $x_i$ to the separator is

$$r = \frac{w^T x_i + b}{\|w\|}$$

• Examples closest to the hyperplane are support vectors.

• Margin ρ of the separator is the distance between support vectors.

Maximum Margin Classification

• Maximizing the margin is good according to intuition and

PAC theory.

• Implies that only support vectors matter; other training

examples are ignorable.

Linear SVM Mathematically

• Let training set $\{(x_i, y_i)\}_{i=1..n}$, $x_i \in R^d$, $y_i \in \{-1, 1\}$ be separated by a hyperplane with margin ρ. Then for each training example $(x_i, y_i)$:

$w^T x_i + b \le -\rho/2$ if $y_i = -1$

$w^T x_i + b \ge \rho/2$ if $y_i = 1$

which together give $y_i(w^T x_i + b) \ge \rho/2$

• For every support vector $x_s$ the above inequality is an equality. After rescaling w and b by ρ/2 in the equality, we obtain that distance between each $x_s$ and the hyperplane is

$$r = \frac{y_s(w^T x_s + b)}{\|w\|} = \frac{1}{\|w\|}$$

• Then the margin can be expressed through (rescaled) w and b as:

$$\rho = 2r = \frac{2}{\|w\|}$$

Linear SVMs Mathematically (cont.)

• Then we can formulate the quadratic optimization problem:

Find w and b such that $\rho = \frac{2}{\|w\|}$ is maximized and for all $(x_i, y_i)$, i=1..n: $y_i(w^T x_i + b) \ge 1$

Which can be reformulated as:

Find w and b such that $\Phi(w) = \|w\|^2 = w^T w$ is minimized and for all $(x_i, y_i)$, i=1..n: $y_i(w^T x_i + b) \ge 1$

Solving the Optimization Problem

Find w and b such that $\Phi(w) = w^T w$ is minimized and for all $(x_i, y_i)$, i=1..n: $y_i(w^T x_i + b) \ge 1$

• Need to optimize a quadratic function subject to linear constraints.

• Quadratic optimization problems are a well-known class of mathematical programming problems for which several (non-trivial) algorithms exist.

• The solution involves constructing a dual problem where a Lagrange multiplier $\alpha_i$ is associated with every inequality constraint in the primal (original) problem:

Find $\alpha_1 \dots \alpha_n$ such that

$$Q(\alpha) = \sum_i \alpha_i - \tfrac{1}{2} \sum_i \sum_j \alpha_i \alpha_j y_i y_j x_i^T x_j$$

is maximized and

(1) $\sum_i \alpha_i y_i = 0$

(2) $\alpha_i \ge 0$ for all $\alpha_i$

The Optimization Problem Solution

• Given a solution $\alpha_1 \dots \alpha_n$ to the dual problem, solution to the primal is:

$$w = \sum_i \alpha_i y_i x_i \qquad b = y_k - \sum_i \alpha_i y_i x_i^T x_k \ \text{ for any } \alpha_k > 0$$

• Each non-zero $\alpha_i$ indicates that corresponding $x_i$ is a support vector.

• Then the classifying function is (note that we don’t need w explicitly):

$$f(x) = \sum_i \alpha_i y_i x_i^T x + b$$

• Notice that it relies on an inner product between the test point x and the support vectors $x_i$ – we will return to this later.

• Also keep in mind that solving the optimization problem involved computing the inner products $x_i^T x_j$ between all training points.
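To make the relationship between the dual and primal solutions concrete, the sketch below recovers w and b from given multipliers on a two-point toy problem. It does not solve the quadratic program: the multipliers α = (0.25, 0.25) were worked out by hand for this particular data set, and the function names are hypothetical.

```python
def dot(a, b):
    return sum(u * v for u, v in zip(a, b))


def primal_from_dual(alphas, X, y):
    """w = sum_i alpha_i y_i x_i;  b = y_k - w . x_k for some alpha_k > 0."""
    dim = len(X[0])
    w = [sum(a * yi * xi[j] for a, xi, yi in zip(alphas, X, y))
         for j in range(dim)]
    k = next(i for i, a in enumerate(alphas) if a > 0)  # any support vector
    b = y[k] - dot(w, X[k])
    return w, b


def decision(alphas, X, y, b, x):
    """f(x) = sum_i alpha_i y_i (x_i . x) + b, without forming w explicitly."""
    return sum(a * yi * dot(xi, x) for a, xi, yi in zip(alphas, X, y)) + b


X = [(1.0, 1.0), (-1.0, -1.0)]
y = [1, -1]
alphas = [0.25, 0.25]  # hand-derived dual solution for this toy problem

w, b = primal_from_dual(alphas, X, y)
print(w, b)                                   # [0.5, 0.5] 0.0
print(decision(alphas, X, y, b, (2.0, 0.0)))  # 1.0
```

Both training points satisfy $y_i(w^T x_i + b) = 1$, so both are support vectors, and the margin 2/||w|| equals the distance between them.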

Soft Margin Classification

• What if the training set is not linearly separable?

• Slack variables $\xi_i$ can be added to allow misclassification of difficult or noisy examples; the resulting margin is called soft.

Soft Margin Classification

Mathematically

• The old formulation:

Find w and b such that $\Phi(w) = w^T w$ is minimized and for all $(x_i, y_i)$, i=1..n: $y_i(w^T x_i + b) \ge 1$

• Modified formulation incorporates slack variables:

Find w and b such that $\Phi(w) = w^T w + C \sum_i \xi_i$ is minimized and for all $(x_i, y_i)$, i=1..n: $y_i(w^T x_i + b) \ge 1 - \xi_i$, $\xi_i \ge 0$

• Parameter C can be viewed as a way to control overfitting: it “trades off” the relative importance of maximizing the margin and fitting the training data.

Soft Margin Classification –

Solution

• Dual problem is identical to separable case (would not be identical if the 2-norm penalty for slack variables $C \sum_i \xi_i^2$ was used in primal objective, we would need additional Lagrange multipliers for slack variables):

Find $\alpha_1 \dots \alpha_N$ such that

$$Q(\alpha) = \sum_i \alpha_i - \tfrac{1}{2} \sum_i \sum_j \alpha_i \alpha_j y_i y_j x_i^T x_j$$

is maximized and

(1) $\sum_i \alpha_i y_i = 0$

(2) $0 \le \alpha_i \le C$ for all $\alpha_i$

• Again, $x_i$ with non-zero $\alpha_i$ will be support vectors.

• Solution to the dual problem is:

$$w = \sum_i \alpha_i y_i x_i \qquad b = y_k(1 - \xi_k) - \sum_i \alpha_i y_i x_i^T x_k \ \text{ for any } k \text{ s.t. } \alpha_k > 0$$

• Again, we don’t need to compute w explicitly for classification:

$$f(x) = \sum_i \alpha_i y_i x_i^T x + b$$

Theoretical Justification for

Maximum Margins

• Vapnik has proved the following:

The class of optimal linear separators has VC dimension h bounded from above as

$$h \le \min\left( \left\lceil \frac{D^2}{\rho^2} \right\rceil, m_0 \right) + 1$$

where ρ is the margin, D is the diameter of the smallest sphere that can enclose all of the training examples, and $m_0$ is the dimensionality.

• Intuitively, this implies that regardless of dimensionality $m_0$ we can minimize the VC dimension by maximizing the margin ρ.

• Thus, complexity of the classifier is kept small regardless of dimensionality.

Linear SVMs: Overview

• The classifier is a separating hyperplane.

• Most “important” training points are support vectors; they define the

hyperplane.

• Quadratic optimization algorithms can identify which training points $x_i$ are support vectors with non-zero Lagrangian multipliers $\alpha_i$.

• Both in the dual formulation of the problem and in the solution training points appear only inside inner products:

Find $\alpha_1 \dots \alpha_N$ such that

$$Q(\alpha) = \sum_i \alpha_i - \tfrac{1}{2} \sum_i \sum_j \alpha_i \alpha_j y_i y_j x_i^T x_j$$

is maximized and

(1) $\sum_i \alpha_i y_i = 0$

(2) $0 \le \alpha_i \le C$ for all $\alpha_i$

$$f(x) = \sum_i \alpha_i y_i x_i^T x + b$$

Non-linear SVMs

• Datasets that are linearly separable with some noise work out great.

• But what are we going to do if the dataset is just too hard?

• How about… mapping data to a higher-dimensional space:

[Figures: data points along the x axis; then the same points plotted on axes x and x²]

Non-linear SVMs: Feature spaces

• General idea: the original feature space can always be mapped to

some higher-dimensional feature space where the training set is

separable:

Φ: x → φ(x)

The “Kernel Trick”

• The linear classifier relies on inner product between vectors $K(x_i, x_j) = x_i^T x_j$

• If every datapoint is mapped into high-dimensional space via some transformation Φ: x → φ(x), the inner product becomes:

$$K(x_i, x_j) = \varphi(x_i)^T \varphi(x_j)$$

• A kernel function is a function that is equivalent to an inner product in some feature space.

• Example:

2-dimensional vectors $x = [x_1 \ x_2]$; let $K(x_i, x_j) = (1 + x_i^T x_j)^2$.

Need to show that $K(x_i, x_j) = \varphi(x_i)^T \varphi(x_j)$:

$$K(x_i, x_j) = (1 + x_i^T x_j)^2 = 1 + x_{i1}^2 x_{j1}^2 + 2 x_{i1} x_{j1} x_{i2} x_{j2} + x_{i2}^2 x_{j2}^2 + 2 x_{i1} x_{j1} + 2 x_{i2} x_{j2}$$

$$= [1 \ \ x_{i1}^2 \ \ \sqrt{2} x_{i1} x_{i2} \ \ x_{i2}^2 \ \ \sqrt{2} x_{i1} \ \ \sqrt{2} x_{i2}]^T \, [1 \ \ x_{j1}^2 \ \ \sqrt{2} x_{j1} x_{j2} \ \ x_{j2}^2 \ \ \sqrt{2} x_{j1} \ \ \sqrt{2} x_{j2}]$$

$$= \varphi(x_i)^T \varphi(x_j), \ \text{ where } \varphi(x) = [1 \ \ x_1^2 \ \ \sqrt{2} x_1 x_2 \ \ x_2^2 \ \ \sqrt{2} x_1 \ \ \sqrt{2} x_2]$$

• Thus, a kernel function implicitly maps data to a high-dimensional space (without the need to compute each φ(x) explicitly).
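The identity in the example can be checked numerically. The sketch below compares the kernel value with the explicit inner product in the six-dimensional feature space; the function names are hypothetical.

```python
import math


def poly_kernel(x, z):
    """K(x, z) = (1 + x . z)^2 for 2-dimensional x and z."""
    return (1 + x[0] * z[0] + x[1] * z[1]) ** 2


def phi(x):
    """Explicit feature map: [1, x1^2, sqrt(2) x1 x2, x2^2, sqrt(2) x1, sqrt(2) x2]."""
    r2 = math.sqrt(2)
    return [1, x[0] ** 2, r2 * x[0] * x[1], x[1] ** 2, r2 * x[0], r2 * x[1]]


def dot(a, b):
    return sum(u * v for u, v in zip(a, b))


x, z = (1.0, 2.0), (3.0, -1.0)
print(poly_kernel(x, z))     # 4.0
print(dot(phi(x), phi(z)))   # the same value, up to floating-point rounding
```

The kernel needs one 2-dimensional inner product, while the explicit route needs two 6-dimensional feature vectors; the gap grows quickly with the input dimension and the polynomial degree.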

What Functions are Kernels?

• For some functions $K(x_i, x_j)$ checking that $K(x_i, x_j) = \varphi(x_i)^T \varphi(x_j)$ can be cumbersome.

• Mercer’s theorem:

Every semi-positive definite symmetric function is a kernel

• Semi-positive definite symmetric functions correspond to a semi-positive definite symmetric Gram matrix:

$$K = \begin{bmatrix} K(x_1,x_1) & K(x_1,x_2) & K(x_1,x_3) & \dots & K(x_1,x_n) \\ K(x_2,x_1) & K(x_2,x_2) & K(x_2,x_3) & \dots & K(x_2,x_n) \\ \dots & \dots & \dots & \dots & \dots \\ K(x_n,x_1) & K(x_n,x_2) & K(x_n,x_3) & \dots & K(x_n,x_n) \end{bmatrix}$$

Examples of Kernel Functions

• Linear: $K(x_i, x_j) = x_i^T x_j$

– Mapping Φ: x → φ(x), where φ(x) is x itself

• Polynomial of power p: $K(x_i, x_j) = (1 + x_i^T x_j)^p$

– Mapping Φ: x → φ(x), where φ(x) has $\binom{d+p}{p}$ dimensions

• Gaussian (radial-basis function): $K(x_i, x_j) = e^{-\frac{\|x_i - x_j\|^2}{2\sigma^2}}$

– Mapping Φ: x → φ(x), where φ(x) is infinite-dimensional: every point is mapped to a function (a Gaussian); combination of functions for support vectors is the separator.

• Higher-dimensional space still has intrinsic dimensionality d (the mapping is not onto), but linear separators in it correspond to non-linear separators in original space.

Non-linear SVMs Mathematically

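• Dual problem formulation:

Find $\alpha_1 \dots \alpha_n$ such that

$$Q(\alpha) = \sum_i \alpha_i - \tfrac{1}{2} \sum_i \sum_j \alpha_i \alpha_j y_i y_j K(x_i, x_j)$$

is maximized and

(1) $\sum_i \alpha_i y_i = 0$

(2) $\alpha_i \ge 0$ for all $\alpha_i$

• The solution is:

$$f(x) = \sum_i \alpha_i y_i K(x_i, x) + b$$

• Optimization techniques for finding $\alpha_i$’s remain the same!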

SVM applications

• SVMs were originally proposed by Boser, Guyon and Vapnik in 1992

and gained increasing popularity in late 1990s.

• SVMs are currently among the best performers for a number of

classification tasks ranging from text to genomic data.

• SVMs can be applied to complex data types beyond feature vectors

(e.g. graphs, sequences, relational data) by designing kernel functions

for such data.

• SVM techniques have been extended to a number of tasks such as

regression [Vapnik et al. ’97], principal component analysis [Schölkopf et

al. ’99], etc.

• Most popular optimization algorithms for SVMs use decomposition to

hill-climb over a subset of αi’s at a time, e.g. SMO [Platt ’99] and

[Joachims ’99]

• Tuning SVMs remains a black art: selecting a specific kernel and

parameters is usually done in a try-and-see manner.

Performance Comparison (?)

| Category | NB | Rocchio | Dec. Trees | kNN | linear SVM (C=0.5) | linear SVM (C=1) | rbf-SVM |
|---|---|---|---|---|---|---|---|
| earn | 96.0 | 96.1 | 96.1 | 97.8 | 98.0 | 98.2 | 98.1 |
| acq | 90.7 | 92.1 | 85.3 | 91.8 | 95.5 | 95.6 | 94.7 |
| money-fx | 59.6 | 67.6 | 69.4 | 75.4 | 78.8 | 78.5 | 74.3 |
| grain | 69.8 | 79.5 | 89.1 | 82.6 | 91.9 | 93.1 | 93.4 |
| crude | 81.2 | 81.5 | 75.5 | 85.8 | 89.4 | 89.4 | 88.7 |
| trade | 52.2 | 77.4 | 59.2 | 77.9 | 79.2 | 79.2 | 76.6 |
| interest | 57.6 | 72.5 | 49.1 | 76.7 | 75.6 | 74.8 | 69.1 |
| ship | 80.9 | 83.1 | 80.9 | 79.8 | 87.4 | 86.5 | 85.8 |
| wheat | 63.4 | 79.4 | 85.5 | 72.9 | 86.6 | 86.8 | 82.4 |
| corn | 45.2 | 62.2 | 87.7 | 71.4 | 87.5 | 87.8 | 84.6 |
| microavg. | 72.3 | 79.9 | 79.4 | 82.6 | 86.7 | 87.5 | 86.4 |

SVM classifier break-even F from (Joachims, 2002a, p. 114). Results are shown for the 10 largest categories and for microaveraged performance over all 90 categories on the Reuters-21578 data set.

Choosing a classifier

| Technique | Train time | Test time | “Accuracy” | Interpretability | Bias-Variance | Data Complexity |
|---|---|---|---|---|---|---|
| Naïve Bayes | \|W\| + \|C\| \|V\| | \|C\| * \|Vd\| | Medium-low | Medium | High-bias | Low |
| k-NN | \|W\| | \|V\| * \|Vd\| | Medium | Low | ? | High |
| SVM | \|C\| \|D\|^3 \|V\|ave | \|C\| * \|Vd\| | High | Low | Mixed | Medium-low |
| Neural Nets | ? | \|C\| * \|Vd\| | High | Low | High-variance | High |
| Log-linear | ? | \|C\| * \|Vd\| | High | Medium | High-variance/mixed | Medium |
| Ripper | ? | ? | Medium | High | High-bias | ? |

“Accuracy” – reputation for accuracy in experimental settings.

Note that it is impossible to say beforehand which classifier will be most accurate on any given problem.

C = set of classes. W = bag of training tokens. V = set of training types. D = set of train docs. Vd = types in test document d. Vave = average number of types per doc in training.

Vector Space Model

Idea: represent each document as a vector.

• Why bother?

• How can we make a document into a vector?

98

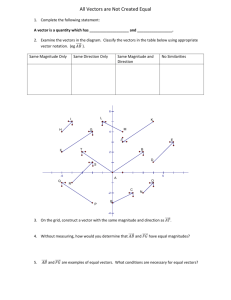

Documents as Vectors

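Example:

Document D1: “yes we got no bananas”

Document D2: “what you got”

Document D3: “yes I like what you got”

| | yes | we | got | no | bananas | what | you | I | like |
|---|---|---|---|---|---|---|---|---|---|
| Vector V1: | 1 | 1 | 1 | 1 | 1 | 0 | 0 | 0 | 0 |
| Vector V2: | 0 | 0 | 1 | 0 | 0 | 1 | 1 | 0 | 0 |
| Vector V3: | 1 | 0 | 1 | 0 | 0 | 1 | 1 | 1 | 1 |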

99

Documents as Vectors

Generically, we convert a document into a vector by:

1. Determine the vocabulary V, or set of all terms in the collection of documents

2. For each document d, compute a score sv(d) for every term v in V.

– For instance, sv(d) could be the number of times v appears in d.
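The two steps can be sketched as follows, using term counts (or binary presence) as the score. Whitespace tokenization and the function names are assumptions of this sketch.

```python
from collections import Counter


def build_vocabulary(docs):
    """Step 1: all distinct terms in the collection, in first-seen order."""
    vocab = []
    for doc in docs:
        for term in doc.split():
            if term not in vocab:
                vocab.append(term)
    return vocab


def to_vector(doc, vocab, binary=False):
    """Step 2: one score per vocabulary term (count, or 0/1 if binary)."""
    counts = Counter(doc.split())
    return [min(counts[v], 1) if binary else counts[v] for v in vocab]


docs = ["yes we got no bananas", "what you got", "yes I like what you got"]
vocab = build_vocabulary(docs)
print(vocab)
for doc in docs:
    print(to_vector(doc, vocab))
```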

100

Why Bother?

The vector space model has a number of

limitations (discussed later).

But two major benefits:

1. Convenience (notational & mathematical)

2. It’s well-understood

– That is, there are a lot of side benefits, like

similarity and distance metrics, that you get

for free.

101

Handy Tools

• Euclidean distance and norm

• Cosine similarity

• Dot product

102

Measuring Similarity

Similarity metric: the size of the angle between document vectors.

“Cosine Similarity”:

$$CS(v_1, v_2) = \cos(\theta_{v_1, v_2}) = \frac{v_1 \cdot v_2}{\|v_1\| \, \|v_2\|}$$
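Cosine similarity is a one-liner once documents are vectors. The sketch below applies it to two 0/1 document vectors over a nine-term vocabulary; returning 0.0 for an all-zero vector is a convention chosen here, not something the slides specify.

```python
import math


def cosine_similarity(v1, v2):
    """Dot product divided by the product of the Euclidean norms."""
    dot = sum(a * b for a, b in zip(v1, v2))
    norm = math.sqrt(sum(a * a for a in v1)) * math.sqrt(sum(b * b for b in v2))
    return dot / norm if norm else 0.0


v2 = [0, 0, 1, 0, 0, 1, 1, 0, 0]  # "what you got"
v3 = [1, 0, 1, 0, 0, 1, 1, 1, 1]  # "yes I like what you got"
print(round(cosine_similarity(v2, v3), 3))  # 0.707
```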

103

Variations of the VSM

• What should we include in V?

– Stoplists

– Phrases and ngrams

– Feature selection

• How should we compute sv(d)?

– Binary (Bernoulli)

– Term frequency (TF) (multinomial)

– Inverse Document Frequency (IDF)

– TF-IDF

– Length normalization

– Other …

104

What should we include in the

Vocabulary?

Example:

Document D1: “yes we got no bananas”

Document D2: “what you got”

Document D3: “yes I like what you got”

• All three documents include “got”, so it’s not very informative for discriminating between the documents.

• In general, we’d like to include all and only

informative features

105

Zipf Distribution of Language

Languages contain

a few high-frequency words,

a large number of medium frequency words,

and a ton of low-frequency words.

High-frequency words are generally not indicative of one class or another, so they are not useful.

Low-frequency words are often very indicative of one class or another, but we may never (or rarely) see them during training. This is the data sparsity problem.

106

Stop words and stop lists

A simple way to get rid of uninteresting features is to

eliminate the high-frequency ones

These are often called “stop words”

- e.g., “the”, “of”, “you”, “got”, “was”, etc.

Systems often contain a list (“stop list”) of ~100 stop words,

which are pruned from the vocabulary

107

Beyond terms

It would be great to include multi-word features like

“New York”, rather than just “New” and “York”

But: including all pairs of words, or all consecutive

pairs of words, as features creates WAY too

many to deal with, and many are very sparse.

In order to include such features, we need to know

more about feature selection (upcoming)

108

Score for a feature in a document

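Example:

Document: “yes we got no bananas no bananas we got we got”

| | yes | we | got | no | bananas | what | you | I | like |
|---|---|---|---|---|---|---|---|---|---|
| Binary: | 1 | 1 | 1 | 1 | 1 | 0 | 0 | 0 | 0 |
| Term Frequency: | 1 | 3 | 3 | 2 | 2 | 0 | 0 | 0 | 0 |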

110

Inverse Document Frequency

An alternative method of scoring a feature

Intuition: words that are common to many

documents are less informative, so give

them less weight.

IDF(v) = log (#Documents / #Documents containing v)

111

TF-IDF

Term Frequency Inverse Document Frequency

TF-IDFv(d) = TFv(d) * IDF(v)

TF-IDFv(d) = (# of times v occurs in d) * log (#Documents / #Documents containing v)
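A short sketch of the TF-IDF computation on a three-document collection. The base of the logarithm is not fixed by the definition; base 10 is assumed here, and the function name `tf_idf_vectors` is hypothetical.

```python
import math
from collections import Counter


def tf_idf_vectors(docs):
    """Return the vocabulary (first-seen order) and one TF-IDF vector per doc."""
    tokenized = [doc.split() for doc in docs]
    vocab = []
    for tokens in tokenized:
        for term in tokens:
            if term not in vocab:
                vocab.append(term)
    n = len(docs)
    # Document frequency: number of documents containing each term.
    df = {v: sum(v in tokens for tokens in tokenized) for v in vocab}
    idf = {v: math.log10(n / df[v]) for v in vocab}
    vectors = []
    for tokens in tokenized:
        tf = Counter(tokens)
        vectors.append([tf[v] * idf[v] for v in vocab])
    return vocab, vectors


docs = ["yes we got no bananas", "what you got", "yes I like what you got"]
vocab, vectors = tf_idf_vectors(docs)
for vec in vectors:
    print([round(x, 2) for x in vec])
```

A term such as “got”, which appears in every document, gets IDF log(3/3) = 0 and therefore drops out of every vector.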

112

TF-IDF weighted vectors

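Example:

Document D1: “yes we got no bananas”

Document D2: “what you got”

Document D3: “yes I like what you got”

Using base-10 logs (each term occurs at most once per document here, so TF is 0 or 1):

| | yes | we | got | no | bananas | what | you | I | like |
|---|---|---|---|---|---|---|---|---|---|
| Vector V1: | .18 | .48 | 0 | .48 | .48 | 0 | 0 | 0 | 0 |
| Vector V2: | 0 | 0 | 0 | 0 | 0 | .18 | .18 | 0 | 0 |
| Vector V3: | .18 | 0 | 0 | 0 | 0 | .18 | .18 | .48 | .48 |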

113

Limitations

The vector space model has the following limitations:

• Long documents are poorly represented because they

have poor similarity values.

• Search keywords must precisely match document terms;

word substrings might result in a false positive match.

• Semantic sensitivity: documents with similar context but

different term vocabulary won't be associated, resulting

in a false negative match.

• The order in which the terms appear in the document is

lost in the vector space representation.

114

Curse of Dimensionality.

If the data x lies in high dimensional space, then

an enormous amount of data is required to learn

distributions, decision rules, or clusters.

Example:

50 dimensions.

Each dimension has 2 possible values.

This gives a total of 2^50 ≈ 10^15 cells.

But the no. of data samples will be far less.

There will not be enough data samples to learn.

Dimensionality Reduction

• Goal: Reduce the dimensionality of the

space, while preserving distances

• Basic idea: find the dimensions that have the

most variation in the data, and eliminate the

others.

• Many techniques (SVD, MDS)

• May or may not help

Feature Selection and

Dimensionality Reduction in NLP

• TF-IDF (reduces the weight of some

features, increases weight of others)

• Mutual Information (MI)

• Pointwise Mutual Information (PMI)

• Latent Semantic Analysis – next week

• Information Gain (IG)

• Chi-square or other independence tests

• Pure frequency

117

Mutual Information

What makes “Shanghai” a good feature for classifying a document as being about “China”?

Intuition: four cases

| | +China | -China |
|---|---|---|
| +Shanghai | How common? | How common? |
| -Shanghai | How common? | How common? |

If all four cases are equally common, MI = 0.

| | +China | -China |
|---|---|---|
| +Shanghai | X | X |
| -Shanghai | X | X |

MI grows when one (or two) case(s) becomes much more common than the others.

| | +China | -China |
|---|---|---|
| +Shanghai | 10X | ~0 |
| -Shanghai | X | X |

That’s also the case where the feature is useful!

121

Mutual Information

$$MI(t, c) = P(t, c) \log \frac{P(t, c)}{P(t) P(c)} + P(t, \neg c) \log \frac{P(t, \neg c)}{P(t) P(\neg c)} + P(\neg t, c) \log \frac{P(\neg t, c)}{P(\neg t) P(c)} + P(\neg t, \neg c) \log \frac{P(\neg t, \neg c)}{P(\neg t) P(\neg c)}$$
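As a sketch, MI can be computed from the four document counts of a term/class contingency table. Probabilities are estimated as simple relative frequencies, base-2 logs are assumed, and empty cells contribute nothing (0 log 0 = 0). The function name and argument order are hypothetical.

```python
import math


def mutual_information(n11, n10, n01, n00):
    """n11: term and class; n10: term, not class;
    n01: no term, class; n00: neither."""
    n = n11 + n10 + n01 + n00
    p_t = {True: (n11 + n10) / n, False: (n01 + n00) / n}
    p_c = {True: (n11 + n01) / n, False: (n10 + n00) / n}
    joint = {(True, True): n11 / n, (True, False): n10 / n,
             (False, True): n01 / n, (False, False): n00 / n}
    return sum(p * math.log2(p / (p_t[t] * p_c[c]))
               for (t, c), p in joint.items() if p > 0)


print(mutual_information(10, 10, 10, 10))  # all four cases equally common
print(mutual_information(100, 0, 10, 10))  # one case much more common
```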

122

Pointwise Mutual Information

What makes “Shanghai” a good feature for

classifying a document as being about

“China”?

PMI focuses on just the (+, +) case:

+China

-China

+ Shanghai

10X

~0

-Shanghai

X

X

How much more likely than chance is it for Shanghai to

appear in a document about China?

123

Pointwise Mutual Information

$$PMI(t, c) = \log \frac{P(t, c)}{P(t) P(c)}$$
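PMI looks only at the (+, +) cell of the same contingency table. A minimal sketch, again assuming relative-frequency estimates, base-2 logs, and a hypothetical function name:

```python
import math


def pmi(n11, n10, n01, n00):
    """log2 of P(t, c) / (P(t) P(c)), estimated from document counts.
    n11: term and class; n10: term, not class; n01: no term, class; n00: neither."""
    n = n11 + n10 + n01 + n00
    p_tc = n11 / n
    p_t = (n11 + n10) / n
    p_c = (n11 + n01) / n
    return math.log2(p_tc / (p_t * p_c))


print(pmi(10, 10, 10, 10))  # term and class are independent
print(pmi(100, 0, 10, 10))  # term appears only in documents of the class
```

A positive value means the term and the class co-occur more often than chance would predict; the function is undefined when the (+, +) count is zero.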

124

Feature Engineering

• This is where domain experts and human

judgement come into play.

• Not much to say …. except that it matters

a lot, often more than choosing a classifier

125