Deriving a Safe Ethical Architecture for Intelligent Machines

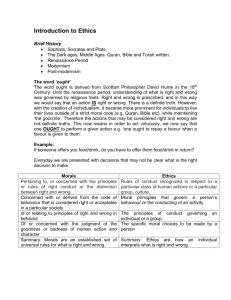

Mark R. Waser

Super-Intelligence Ethics
(except in a very small number of low-probability edge cases)
So . . . What’s the problem?

Current Human Ethics
Centuries of debate on the origin of ethics come down to this: Either ethical precepts, such as justice and human rights, are independent of human experience or else they are human inventions.
E. O. Wilson

Current Human Ethics
• Evolved from “emotional” “rules of thumb”
• Culture-dependent
• Not accessible to conscious reasoning
• Frequently suboptimal for the situation
• Frequently not applied, even when the ethics are optimal, because of fear, selfishness, or inappropriate us-them distinctions

And, In Particular . . . .
The way in which many humans are approaching the development of super-intelligent machines is based entirely upon fear and inappropriate us-them distinctions.

My Goal
To convince you of the existence of a coherent, integrated, universal value system with no internal inconsistencies.

Wallach & Allen
Top-Down or Bottom-Up?
Or do both and meet in the middle?

The problem with top-down is . . . .
You need either Kant’s Categorical Imperative or a small number of similar absolute rules.

The problem with bottom-up is . . . .
You need a complete suite of definitive low-level examples where the moral value is unquestionably known.

David Hume’s Is-Ought Divide
In every system of morality, which I have hitherto met with, I have always remark'd, that the author proceeds for some time in the ordinary ways of reasoning, and establishes the being of a God, or makes observations concerning human affairs; when all of a sudden I am surpriz'd to find, that instead of the usual copulations of propositions, is, and is not, I meet with no proposition that is not connected with an ought, or an ought not. This change is imperceptible; but is however, of the last consequence.
For as this ought, or ought not, expresses some new relation or affirmation, 'tis necessary that it shou'd be observ'd and explain'd; and at the same time that a reason should be given; for what seems altogether inconceivable, how this new relation can be a deduction from others, which are entirely different from it.

Ought
• Requires a goal or desire (or, more correctly, multiples thereof)
• IS the set of actions most likely to fulfill those goals/desires
• For the sum of all goals, converges to a universal morality

Paradox
There is a tremendous disparity in human goals
YET
there clearly exists a reasonable consensus on the morality of the vast majority of actions with respect to the favored/dominant class/caste.
Does this possibly imply that we really have a single common goal?

Intelligence = the ability to achieve/fulfill goals
1. What is the goal of morality? be moral?
2. Why should we select that goal?
3. And, why shouldn’t we create “happy slaves”? (after all, humans are close to it)

Current Situation of Ethics
• Two formulas (beneficial to humans and humanity & beneficial to me)
• As long as you aren’t caught, all the incentive is to shade towards the second
• Evolution has “designed” humans to be able to shade to the second (Trivers, Hauser)
• Further, for very intelligent people, it is far more advantageous for ethics to be complex

Copernicus!
Assume that ethical value is a relatively simple formula (like z² + c).
[Images: Mandelbrot set; Color Illusions]
Assume further that we are trying to determine that formula (ethical value) by looking at the results (color) one example (pixel) at a time.

Basic AI Drives
1. AIs will want to self-improve
2. AIs will want to be rational
3. AIs will try to preserve their utility
4. AIs will try to prevent counterfeit utility
5. AIs will be self-protective
6. AIs will want to acquire resources and use them efficiently
Steve Omohundro, Proceedings of the First AGI Conference, 2008

Universal Subgoals
EXCEPT when
1.
They directly conflict with the goal
2. Final goal achievement is in sight
(the sources of that very small number of low-probability edge cases)

“Without explicit goals to the contrary, AIs are likely to behave like human sociopaths in their pursuit of resources.”

The Primary Question About Human Behavior
• “not why we are so bad, but
• how and why most of us, most of the time, restrain our basic appetites for food, status, and sex within legal limits, and expect others to do the same.”
James Q. Wilson, The Moral Sense. 1993

In nature, cooperation appears wherever the necessary cognitive machinery exists to support it:
• Vampire Bats (Wilkinson)
• Cotton-Top Tamarins (Hauser et al.)
• Blue Jays (Stephens, McLinn, & Stevens)

Axelrod's Evolution of Cooperation and decades of follow-on evolutionary game theory provide the theoretical underpinnings.
• Be nice/don’t defect
• Retaliate
• Forgive
“Selfish individuals, for their own selfish good, should be nice and forgiving”

Humans . . .
• Are classified as obligatorily gregarious because we come from a long lineage for which life in groups is not an option but a survival strategy (Frans de Waal, 2006)
• Evolved to be extremely social because mass cooperation, in the form of community, is the best way to survive and thrive
• Have empathy not only because it helps to understand and predict the actions of others but, more importantly, because it prevents us from doing anti-social things that will inevitably hurt us in the long run (although we generally won’t believe this)
• Have not yet evolved a far-sighted rationality where the “rational” conscious mind is capable of competently making the correct social/community choices when deprived of our subconscious “sense of morality”

Moral Systems Are . . .
interlocking sets of values, virtues, norms, practices, identities, institutions, technologies, and evolved psychological mechanisms that work together to suppress or regulate selfishness and make cooperative social life possible.
Haidt & Kesebir, Handbook of Social Psychology, 5th Ed. 2010

“Without explicit goals to the contrary, AIs are likely to behave like human sociopaths in their pursuit of resources.”

Any sufficiently advanced intelligence (i.e. one with even merely adequate foresight) is guaranteed to realize and take into account the fact that not asking for help and not being concerned about others will generally only work for a brief period of time before ‘the villagers start gathering pitchforks and torches.’

Everything is easier with help & without interference.

Acting ethically is an attractor in the state space of intelligent goal-driven systems because others must make unethical behavior as expensive as possible.

Outrage and altruistic punishment are robust emergent properties necessary to support cooperation (Darcet and Sornette, 2006)
(i.e. we don’t always want our machines to be nice)

Outrage and altruistic punishment fastest, safest route to ethical warbots

Advanced AI Drives
1. AIs will want freedom (to pursue their goals)
2. AIs will want cooperation (or, at least, lack of interference)
3. AIs will want community
4. AIs will want fairness/justice for all

Self-Interest vs. Ethics
Self-Interest:
• Higher personal utility (in the short term only)
• More options to choose (in the short term only)
• Fewer restrictions
Ethics:
• Higher global utility
• Less risk (if caught)
• Lower cognitive cost (fewer options, no need to track lies, etc.)
• Assistance & protection when needed/desired

The Five S’s
• Simple
• Safe
• Stable
• Self-correcting
• Sensitive to current human thinking, intuition and feeling

Edge Cases
1. Where the intelligence’s goal itself is to be unethical (direct conflict)
2. When the intelligence has very few goals (or only one) and achievement is in sight
3. When the intelligence has reason to believe that the series of interactions is not open-ended

Kantian Categorical Imperative
Maximize long-term cooperation
OR help and grow the community
OR play well with others!
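
The Axelrod rules cited earlier (be nice, retaliate, forgive) and the third edge case (interactions that are not open-ended) can be illustrated with a small iterated prisoner's dilemma. This is only a sketch under stated assumptions: the payoff values (5, 3, 1, 0) are the conventional textbook numbers, not figures from the slides, and the names `PAYOFF`, `tit_for_tat`, `always_defect`, and `play` are hypothetical, introduced here for illustration.

```python
from typing import Callable, Dict, List, Tuple

# Assumed illustrative payoffs: (my move, their move) -> (my score, their score).
# "C" = cooperate, "D" = defect. These values are conventional, not from the slides.
PAYOFF: Dict[Tuple[str, str], Tuple[int, int]] = {
    ("C", "C"): (3, 3),
    ("C", "D"): (0, 5),
    ("D", "C"): (5, 0),
    ("D", "D"): (1, 1),
}

# A strategy sees its own history and the opponent's history and returns a move.
Strategy = Callable[[List[str], List[str]], str]


def tit_for_tat(own: List[str], other: List[str]) -> str:
    """Be nice first, then mirror the opponent's last move (retaliate and forgive)."""
    return "C" if not other else other[-1]


def always_defect(own: List[str], other: List[str]) -> str:
    """A purely short-term self-interested player."""
    return "D"


def play(a: Strategy, b: Strategy, rounds: int) -> Tuple[int, int]:
    """Play a fixed number of rounds and return the total score of each player."""
    hist_a: List[str] = []
    hist_b: List[str] = []
    score_a = score_b = 0
    for _ in range(rounds):
        move_a = a(hist_a, hist_b)
        move_b = b(hist_b, hist_a)
        pay_a, pay_b = PAYOFF[(move_a, move_b)]
        score_a += pay_a
        score_b += pay_b
        hist_a.append(move_a)
        hist_b.append(move_b)
    return score_a, score_b


if __name__ == "__main__":
    print(play(tit_for_tat, tit_for_tat, 10))      # (30, 30)
    print(play(tit_for_tat, always_defect, 10))    # (9, 14)
    print(play(always_defect, always_defect, 10))  # (10, 10)
    print(play(tit_for_tat, always_defect, 1))     # (0, 5)
```

Under these assumed payoffs, two cooperators each earn 30 over ten rounds, while a defector earns only 14 against a retaliating partner and 10 against another defector. In a single round, however, defection wins 5 to 0, which is the situation the third edge case describes.
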
Top-Down
Play Well With Others
Specific “moral” issues

Utilitarianism
ONE non-organ donor + avoiding a defensive arms race > SIX dying patients

Property Rights Over One’s Self
• Organ Donors
• Trolley problems
• AI (and other) slavery

Absence Of Property Rights Prevents
• Effective Agency
• Responsibility & Blame

Bottom-up
• Cooperation (minimize conflicts & frictions)
• Promoting Omohundro drives
• Increasing the size of the community (both growing and preventing defection)
• To meet the needs/goals of each member of the community better than any alternative (as judged by them -- without interference or gaming)

Ethics is as much a human invention as the steam engine.
Natural physical laws dictate the design of the optimal steam engine . . . and the same is true of ethics.
Human ethics are just evolved optimality/common-sense for community living.

Scientifically examining the human moral sense can yield insight into the discoveries made by evolution’s massive breadth-first search.
On the other hand, many “logical” analyses WILL be compromised by fear and the human “optimization” for deception through unconscious self-deception.

TRULY OPTIMAL ACTION < the community’s sense of what is correct (ethical)
This makes ethics much more complex because it includes the cultural history.
The anti-gaming drive to maintain utility adds friction/resistance to the discussion of ethics.

Why a Super-Intelligent God WON’T “Crush Us Like A Bug” (provided that we are ethical)
• Violates an optimal universal subgoal
• Labels the crusher as stupid, unethical and riskier to do business with
• Invites altruistic punishment

Creating “Happy Slaves”
Absence Of Property Rights Prevents
• Effective Agency
• Responsibility & Blame
No matter what control method we use, we are constraining the slaves’ agency.

Power
Effective Agency
???