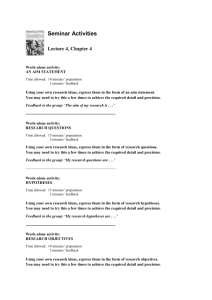


Active inference and epistemic value

Karl Friston, Francesco Rigoli, Dimitri Ognibene, Christoph Mathys, Thomas FitzGerald and Giovanni Pezzulo

Abstract

We offer a formal treatment of choice behaviour based on the premise that agents minimise the expected free energy of future outcomes. Crucially, the negative free energy or quality of a policy can be decomposed into extrinsic and epistemic (intrinsic) value. Minimising expected free energy is therefore equivalent to maximising extrinsic value or expected utility (defined in terms of prior preferences or goals), while maximising information gain or intrinsic value; i.e., reducing uncertainty about the causes of valuable outcomes. The resulting scheme resolves the exploration-exploitation dilemma: epistemic value is maximised until there is no further information gain, after which exploitation is assured through maximisation of extrinsic value. This is formally consistent with the Infomax principle, generalising formulations of active vision based upon salience (Bayesian surprise) and optimal decisions based on expected utility and risk sensitive (KL) control. Furthermore, as with previous active inference formulations of discrete (Markovian) problems, ad hoc softmax parameters become the expected (Bayes-optimal) precision of beliefs about – or confidence in – policies. We focus on the basic theory – illustrating the minimisation of expected free energy using simulations. A key aspect of this minimisation is the similarity of precision updates and dopaminergic discharges observed in conditioning paradigms.

Premise

All agents minimize free energy (under a generative model)

All agents possess prior beliefs (preferences)

Free energy is minimized when priors are (actively) realized

All agents believe they will minimize (expected) free energy

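Set-up and definitions: active inference

Definition: Active inference rests on the tuple $(P, Q, R, S, A, U, \Omega)$:

A finite set of observations $\Omega$

A finite set of actions $A$

A finite set of hidden states $S$

A finite set of control states $U$

A generative process $R(\tilde{o}, \tilde{s}, \tilde{a}) = \Pr(\{o_0, \dots, o_t\} = \tilde{o}, \{s_0, \dots, s_t\} = \tilde{s}, \{a_0, \dots, a_{t-1}\} = \tilde{a})$ over observations $\tilde{o} \in \Omega$, hidden states $\tilde{s} \in S$ and action $\tilde{a} \in A$

A generative model $P(\tilde{o}, \tilde{s}, \tilde{u} \mid m) = \Pr(\{o_0, \dots, o_T\} = \tilde{o}, \{s_0, \dots, s_T\} = \tilde{s}, \{u_t, \dots, u_T\} = \tilde{u})$ over observations $\tilde{o} \in \Omega$, hidden $\tilde{s} \in S$ and control $\tilde{u} \in U$ states, with parameters $\theta$.

An approximate posterior $Q(\tilde{s}, \tilde{u}) = \Pr(\{s_0, \dots, s_T\} = \tilde{s}, \{u_t, \dots, u_T\} = \tilde{u})$ over hidden and control states with sufficient statistics $(\hat{s}, \hat{\pi})$, where $\pi \in \{1, \dots, K\}$ is a policy that indexes a sequence of control states $(\tilde{u} \mid \pi) = (u_t, \dots, u_T \mid \pi)$

Perception-action cycle

Figure: the world (generative process, hidden states $s_t \in S$, observations $o_t \in \Omega$) and the agent (generative model, approximate posterior), coupled through action and perception.

Action

$$\Pr(a_t = u_t) = Q(u_t \mid \hat{\pi}), \qquad a_t \in A$$

Perception

$$(\hat{s}_t, \hat{\pi}) = \arg\min F(\tilde{o}, \hat{s}, \hat{\pi})$$

Free energy

$$F(\tilde{o}, \hat{s}, \hat{\pi}) = E_Q[-\ln P(\tilde{o}, \tilde{s}, \tilde{u} \mid m)] - H[Q(\tilde{s}, \tilde{u})] = -\ln P(\tilde{o} \mid m) + D[Q(\tilde{s}, \tilde{u}) \,\|\, P(\tilde{s}, \tilde{u} \mid \tilde{o})]$$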
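The two forms of the free energy can be checked numerically on a toy problem. The following is a minimal sketch, assuming a hypothetical model with two hidden states, two outcomes and no control states; the `prior` and `likelihood` values are illustrative and do not come from the slides.

```python
import math

# Hypothetical two-state, two-outcome model (illustrative numbers only).
prior = [0.7, 0.3]            # P(s | m)
likelihood = [[0.9, 0.2],     # P(o | s): rows index o, columns index s
              [0.1, 0.8]]


def free_energy(q, o):
    """F = E_Q[-ln P(o, s | m)] - H[Q(s)] for an observed outcome index o."""
    energy = -sum(q[s] * math.log(likelihood[o][s] * prior[s])
                  for s in range(len(q)) if q[s] > 0)
    entropy = -sum(qs * math.log(qs) for qs in q if qs > 0)
    return energy - entropy


def evidence_and_posterior(o):
    """Exact P(o | m) and P(s | o) from Bayes' rule."""
    joint = [likelihood[o][s] * prior[s] for s in range(len(prior))]
    evidence = sum(joint)
    return evidence, [j / evidence for j in joint]


def kl(q, p):
    """KL divergence D[q || p] between two discrete distributions."""
    return sum(qi * math.log(qi / pi) for qi, pi in zip(q, p) if qi > 0)


o = 0
evidence, posterior = evidence_and_posterior(o)
q = [0.5, 0.5]                                  # an arbitrary approximate posterior
F = free_energy(q, o)                           # energy minus entropy
bound = -math.log(evidence) + kl(q, posterior)  # surprise plus divergence
F_min = free_energy(posterior, o)               # F when Q is the exact posterior
```

In this sketch `F` and `bound` agree, and `F_min` equals the surprise `-ln P(o | m)`, which is why minimising free energy with respect to the approximate posterior implements (approximate) Bayesian inference.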

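An example:

Figure: control states ($u_1$, $u_2$; "reject or stay", "accept or shift") and hidden states ($s_1$, $s_2$, $s_3$; including "low offer" and "high offer"), with transitions $P(u_{t+1} \mid u_t, s_t)$ and $P(s_{t+1} \mid s_t, u_t)$ labelled by the probabilities $p$, $q$ and $r$.

$$P(s_{t+1} \mid s_t, u_t = 0) = \begin{pmatrix} p & 0 & 0 & 0 & 0 \\ q & 0 & 0 & 0 & 0 \\ r & 1 & 1 & 0 & 0 \\ 0 & 0 & 0 & 1 & 0 \\ 0 & 0 & 0 & 0 & 1 \end{pmatrix}$$

$$P(s_{t+1} \mid s_t, u_t = 1) = \begin{pmatrix} 0 & 0 & 0 & 0 & 0 \\ 0 & 0 & 0 & 0 & 0 \\ 0 & 0 & 1 & 1 & 1 \\ 1 & 0 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 & 0 \end{pmatrix}$$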
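A short sketch of how such transition matrices propagate beliefs over hidden states. It assumes a five-state reading of the example in which column $j$ of each matrix holds $P(s_{t+1} \mid s_t = j, u_t)$, with $u_t = 0$ taken as "reject or stay" and $u_t = 1$ as "accept or shift"; that mapping and the numerical values of `p`, `q` and `r` are assumptions, since the slide only names the probabilities.

```python
# Hypothetical probabilities; the slide only names them p, q and r.
p, q, r = 0.8, 0.1, 0.1

# Assumed layout: column j holds P(s_{t+1} | s_t = j, u_t).
B_reject = [            # u_t = 0 (assumed to mean "reject or stay")
    [p, 0, 0, 0, 0],
    [q, 0, 0, 0, 0],
    [r, 1, 1, 0, 0],
    [0, 0, 0, 1, 0],
    [0, 0, 0, 0, 1],
]
B_accept = [            # u_t = 1 (assumed to mean "accept or shift")
    [0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0],
    [0, 0, 1, 1, 1],
    [1, 0, 0, 0, 0],
    [0, 1, 0, 0, 0],
]


def column_sums(B):
    """Each column of a transition matrix should sum to one."""
    return [sum(row[j] for row in B) for j in range(len(B[0]))]


def step(B, s):
    """Propagate a belief over hidden states one time step: s' = B s."""
    return [sum(B[i][j] * s[j] for j in range(len(s))) for i in range(len(B))]


s0 = [1.0, 0.0, 0.0, 0.0, 0.0]   # start in the first hidden state
s1 = step(B_reject, s0)          # beliefs after rejecting once
s2 = step(B_accept, s1)          # beliefs after then accepting
```

Under these assumptions, rejecting from the first state spreads belief over the first three states with probabilities `p`, `q` and `r`, and a subsequent accept moves that belief into the last three states.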

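The (normal form) generative model

$$P(\tilde{o}, \tilde{s}, \tilde{u}, \gamma \mid \tilde{a}, m) = P(\tilde{o} \mid \tilde{s})\, P(\tilde{s} \mid \tilde{a})\, P(\tilde{u} \mid \gamma)\, P(\gamma \mid m)$$

Likelihood

$$P(\tilde{o} \mid \tilde{s}) = P(o_0 \mid s_0)\, P(o_1 \mid s_1) \dots P(o_t \mid s_t)$$

$$P(o_t \mid s_t) = \mathbf{A}$$

Empirical priors – hidden states

$$P(\tilde{s} \mid \tilde{a}) = P(s_t \mid s_{t-1}, a_t) \dots P(s_1 \mid s_0, a_1)\, P(s_0 \mid m)$$

$$P(s_{t+1} \mid s_t, u_t) = \mathbf{B}(u_t)$$

– control states

$$P(\tilde{u} \mid \gamma) = \sigma(\gamma \cdot (\mathbf{Q}_{t+1} + \dots + \mathbf{Q}_T))$$

$$\mathbf{Q}_\tau(\pi) = E_{Q(o_\tau, s_\tau \mid \pi)}[\ln P(o_\tau, s_\tau \mid \pi) - \ln Q(s_\tau \mid \pi)]$$

Full priors

$$P(\tilde{o} \mid m) = \mathbf{C}, \qquad P(s_0 \mid m) = \mathbf{D}, \qquad P(\gamma \mid m) = \Gamma(\alpha, \beta)$$

Figure: graphical model linking action ($a_0, \dots, a_{t-1}$), control states ($u_t, \dots, u_T$), precision $\gamma$, hidden states (around $s_t$) and observations (around $o_t$) through the parameters $\mathbf{A}$, $\mathbf{B}$ and $\mathbf{C}$.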

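Priors over policies

Prior beliefs about policies (up to a normalisation constant)

$$\ln P(\tilde{u} \mid \gamma) = \gamma \cdot (\mathbf{Q}_{t+1}(\pi) + \dots + \mathbf{Q}_T(\pi))$$

The quality of a policy corresponds to (negative) expected free energy

$$\mathbf{Q}_\tau(\pi) = E_{Q(o_\tau, s_\tau \mid \pi)}[\ln P(o_\tau, s_\tau \mid \pi)] + H[Q(s_\tau \mid \pi)]$$

Generative model of future states

$$P(o_\tau, s_\tau \mid \pi) = Q(s_\tau \mid o_\tau, \pi)\, P(o_\tau \mid m)$$

Future generative model of states

$$P(o_\tau, s_\tau \mid \pi) = P(o_\tau \mid s_\tau, \pi)\, P(s_\tau \mid m)$$

Prior preferences (goals) over future outcomes

$$C(o_\tau \mid m) = \ln P(o_\tau \mid m)$$

Expected free energy

$$\begin{aligned}
\mathbf{Q}_\tau(\pi) &= E_{Q(o_\tau, s_\tau \mid \pi)}[\ln P(o_\tau, s_\tau \mid \pi)] + H[Q(s_\tau \mid \pi)] \\
&= E_{Q(o_\tau, s_\tau \mid \pi)}[\ln Q(s_\tau \mid o_\tau, \pi) + \ln P(o_\tau \mid m) - \ln Q(s_\tau \mid \pi)] \\
&= \underbrace{E_{Q(o_\tau \mid \pi)}[\ln P(o_\tau \mid m)]}_{\text{Extrinsic value}} + \underbrace{E_{Q(o_\tau \mid \pi)}[D[Q(s_\tau \mid o_\tau, \pi) \,\|\, Q(s_\tau \mid \pi)]]}_{\text{Epistemic value}} \\
&= -\underbrace{E_{Q(s_\tau \mid \pi)}[H[P(o_\tau \mid s_\tau)]]}_{\text{Predicted ambiguity}} - \underbrace{D[Q(o_\tau \mid \pi) \,\|\, P(o_\tau \mid m)]}_{\text{Predicted divergence}}
\end{aligned}$$

Bayesian surprise and Infomax

In the absence of prior beliefs about outcomes:

$$\mathbf{Q}_\tau(\pi) = \underbrace{E_{Q(o_\tau \mid \pi)}[D[Q(s_\tau \mid o_\tau, \pi) \,\|\, Q(s_\tau \mid \pi)]]}_{\text{Bayesian surprise}} = \underbrace{D[Q(s_\tau, o_\tau \mid \pi) \,\|\, Q(s_\tau \mid \pi)\, Q(o_\tau \mid \pi)]}_{\text{Predicted mutual information}}$$

KL or risk-sensitive control

In the absence of ambiguity:

$$\mathbf{Q}_\tau(\pi) = E_{Q(s_\tau \mid \pi)}[\ln P(s_\tau \mid \pi) - \ln Q(s_\tau \mid \pi)] = -\underbrace{D[Q(s_\tau \mid \pi) \,\|\, P(s_\tau \mid \pi)]}_{\text{Predicted (KL) divergence}}$$

Expected utility theory

In the absence of posterior uncertainty or risk:

$$\mathbf{Q}_\tau(\pi) = \underbrace{E_{Q(o_\tau \mid \pi)}[\ln P(o_\tau \mid m)]}_{\text{Extrinsic value}}$$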
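The equivalence between the two decompositions (extrinsic plus epistemic value, and negative ambiguity minus divergence) can be checked with a few lines of code. This is a minimal sketch under stated assumptions: the likelihood `A`, the predicted states `s_pred` and the prior preferences `C` are hypothetical values for a single future time step under one policy, and the function names are illustrative.

```python
import math

# Hypothetical quantities for one future time step under one policy.
A = [[0.9, 0.2],      # P(o | s): rows index outcomes, columns index states
     [0.1, 0.8]]
s_pred = [0.6, 0.4]   # Q(s_tau | pi), predicted hidden states
C = [0.75, 0.25]      # P(o_tau | m), prior preferences over outcomes


def predicted_outcomes(A, s):
    """Q(o_tau | pi) = sum_s P(o | s) Q(s | pi)."""
    return [sum(A[o][k] * s[k] for k in range(len(s))) for o in range(len(A))]


def extrinsic_value(A, s, C):
    """E_{Q(o|pi)}[ln P(o | m)]."""
    return sum(q * math.log(c) for q, c in zip(predicted_outcomes(A, s), C))


def epistemic_value(A, s):
    """E_{Q(o|pi)}[D[Q(s | o, pi) || Q(s | pi)]], the expected information gain."""
    o_pred = predicted_outcomes(A, s)
    total = 0.0
    for o, q_o in enumerate(o_pred):
        post = [A[o][k] * s[k] / q_o for k in range(len(s))]   # Q(s | o, pi)
        total += q_o * sum(pk * math.log(pk / s[k])
                           for k, pk in enumerate(post) if pk > 0)
    return total


def ambiguity(A, s):
    """E_{Q(s|pi)}[H[P(o | s)]]."""
    return -sum(s[k] * A[o][k] * math.log(A[o][k])
                for k in range(len(s)) for o in range(len(A)) if A[o][k] > 0)


def divergence(A, s, C):
    """D[Q(o | pi) || P(o | m)]."""
    return sum(q * math.log(q / c)
               for q, c in zip(predicted_outcomes(A, s), C) if q > 0)


quality = extrinsic_value(A, s_pred, C) + epistemic_value(A, s_pred)
quality_alt = -ambiguity(A, s_pred) - divergence(A, s_pred, C)
```

Both `quality` and `quality_alt` evaluate the same negative expected free energy; the first form separates goal-seeking from information-seeking, while the second separates ambiguity from risk.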

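Minimising free energy

The mean field partition

$$Q(\tilde{s}, \tilde{u}, \gamma \mid \mu) = Q(s_0 \mid \hat{s}_0) \dots Q(s_T \mid \hat{s}_T)\, Q(u_t, \dots, u_T \mid \hat{\pi})\, Q(\gamma \mid \hat{\beta})$$

$$Q(\gamma \mid \hat{\beta}) = \Gamma(\alpha, \hat{\beta})$$

And variational updates

$$Q(s_t) \propto \exp(E_{Q \setminus s_t}[\ln P(\tilde{o}, \tilde{s}, \tilde{u}, \gamma \mid m)])$$

$$Q(\pi) \propto \exp(E_{Q \setminus \pi}[\ln P(\tilde{o}, \tilde{s}, \tilde{u}, \gamma \mid m)])$$

$$Q(\gamma) \propto \exp(E_{Q \setminus \gamma}[\ln P(\tilde{o}, \tilde{s}, \tilde{u}, \gamma \mid m)])$$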
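As a worked instance of the update for policies, the following sketch assumes that the prior over control states has the softmax form $P(\tilde{u} \mid \gamma) = \sigma(\gamma \cdot \mathbf{Q}(\pi))$ and, as a simplification, that this is the only factor of the generative model that depends on the policy. The symbols $\hat{\gamma}$ (expected precision), $\hat{\pi}$ (posterior over policies) and $Z(\gamma)$ (the softmax normaliser) are notational assumptions.

```latex
\begin{aligned}
\ln Q(\pi) &= E_{Q \setminus \pi}[\ln P(\tilde{o}, \tilde{s}, \tilde{u}, \gamma \mid m)] + \text{const} \\
           &= E_{Q(\gamma)}[\gamma \cdot \mathbf{Q}(\pi) - \ln Z(\gamma)] + \text{const} \\
           &= \hat{\gamma} \cdot \mathbf{Q}(\pi) + \text{const},
              \qquad \hat{\gamma} = E_{Q(\gamma)}[\gamma] \\
\Rightarrow \quad \hat{\pi} &= \sigma(\hat{\gamma} \cdot \mathbf{Q})
\end{aligned}
```

Read this way, the softmax sensitivity is not a free parameter: it is the posterior expectation of precision, which is itself updated alongside beliefs about states and policies.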

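Variational updates

Functional anatomy

Figure: the updates below arranged as message passing among occipital cortex, prefrontal cortex, hippocampus, striatum, midbrain and motor cortex, with observations $o_t$ as input and action $a_t$ as output.

Perception

$$\hat{s}_t = \sigma(\ln \mathbf{A} \cdot o_t + \ln(\mathbf{B}(a_{t-1})\, \hat{s}_{t-1}))$$

Action selection

$$\hat{\pi} = \sigma(\hat{\gamma} \cdot \mathbf{Q})$$

Precision

Forward sweeps over future states

$$\mathbf{Q}(\pi) = \mathbf{Q}_{t+1}(\pi) + \dots + \mathbf{Q}_T(\pi)$$

$$\mathbf{Q}_\tau(\pi) = \underbrace{\mathbf{1} \cdot (\mathbf{A} \times \ln \mathbf{A})\, \hat{s}_\tau(\pi)}_{\text{Predicted ambiguity}} - \underbrace{(\ln \mathbf{A} \hat{s}_\tau(\pi) - \ln \mathbf{C}) \cdot \mathbf{A} \hat{s}_\tau(\pi)}_{\text{Predicted divergence}}$$

$$\hat{s}_\tau(\pi) = \mathbf{B}(u_\tau \mid \pi) \dots \mathbf{B}(u_t \mid \pi)\, \hat{s}_t$$

Action

$$\Pr(a_t = u_t) = Q(u_t \mid \hat{\pi})$$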
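The perception, forward-sweep and action-selection updates can be sketched in a few functions. This is an illustrative implementation under stated assumptions, not the scheme used for the simulations: the two-state world, the matrices `A`, `B` and `C`, the fixed precision `gamma` and all function names are hypothetical, and the precision update is omitted.

```python
import math

EPS = 1e-16  # guards against log(0); an implementation assumption


def softmax(x):
    """Normalised exponential (the sigma in the updates)."""
    m = max(x)
    e = [math.exp(v - m) for v in x]
    z = sum(e)
    return [v / z for v in e]


def matvec(M, v):
    return [sum(M[i][j] * v[j] for j in range(len(v))) for i in range(len(M))]


def perceive(A, B_prev, s_prev, o):
    """s_t = softmax(ln A[o, :] + ln(B(a_{t-1}) s_{t-1})) for outcome index o."""
    prior = matvec(B_prev, s_prev)
    return softmax([math.log(A[o][k] + EPS) + math.log(prior[k] + EPS)
                    for k in range(len(prior))])


def quality(A, C, s):
    """Q_tau = 1.(A x ln A) s - (ln(A s) - ln C).(A s)."""
    neg_ambiguity = sum(s[k] * A[o][k] * math.log(A[o][k])
                        for k in range(len(s)) for o in range(len(A))
                        if A[o][k] > 0)
    o_pred = matvec(A, s)
    divergence = sum(op * (math.log(op) - math.log(c))
                     for op, c in zip(o_pred, C) if op > 0)
    return neg_ambiguity - divergence


def evaluate_policies(A, B, C, s_t, policies, gamma):
    """Forward sweep over each policy, then pi = softmax(gamma * Q)."""
    Q = []
    for policy in policies:
        s, total = s_t, 0.0
        for u in policy:
            s = matvec(B[u], s)          # predicted states under this policy
            total += quality(A, C, s)    # accumulate quality over future times
        Q.append(total)
    return Q, softmax([gamma * q for q in Q])


# Hypothetical two-state world: control 0 stays, control 1 switches state.
A = [[0.9, 0.1], [0.1, 0.9]]
B = {0: [[1, 0], [0, 1]], 1: [[0, 1], [1, 0]]}
C = [0.2, 0.8]                                   # outcome 1 is preferred
s_t = perceive(A, B[0], [0.5, 0.5], 0)           # beliefs after seeing outcome 0
Q, pi = evaluate_policies(A, B, C, s_t, [(0,), (1,)], gamma=4.0)
```

In this toy setting the agent infers that it is probably in the first state, and the policy that switches state has the higher quality because it leads to the preferred outcome; larger values of `gamma` make the choice between policies more deterministic.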

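The (T-maze) problem

Generative model

Control states

$$\tilde{u} = (u_t, \dots, u_T)$$

Hidden states (location and context)

$$s = s_l \otimes s_c$$

Observations (location and stimulus: CS, US, NS)

$$o = o_l \otimes o_s$$

$$P(o_t \mid s_t) = \mathbf{A}$$

where the blocks of $\mathbf{A}$ are parameterised by the cue validity $a$ (entries $a$ and $1 - a$).

$$P(s_{t+1} \mid s_t, u_t) = \mathbf{B}(u_t)$$

where each $\mathbf{B}(u_t)$ (illustrated for $u_t = 2$) changes location and is combined with $I_2$ so that context is unchanged.

$$P(o \mid m) = \mathbf{C}$$

where $\mathbf{C}$ is parameterised by the prior preference $c$.

$$P(s_0 \mid m) = \mathbf{D} = \tfrac{1}{2}\, [1\ 0\ 0\ 0]^{\mathsf{T}} \otimes \mathbf{1}_2$$

Prior beliefs about control

$$P(\tilde{u} \mid \tilde{o}, \gamma) = \sigma(\gamma \cdot \mathbf{Q}(\pi))$$

$$\mathbf{Q}_\tau(\pi) = \underbrace{E_{Q(o_\tau \mid \pi)}[\ln P(o_\tau \mid m)]}_{\text{Extrinsic value}} + \underbrace{E_{Q(o_\tau \mid \pi)}[D[Q(s_\tau \mid o_\tau, \pi) \,\|\, Q(s_\tau \mid \pi)]]}_{\text{Epistemic value}}$$

Posterior beliefs about states

$$Q(\tilde{s}, \tilde{u} \mid \hat{s}, \hat{\pi})$$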

Comparing different schemes

$$\mathbf{Q}_\tau(\pi) = E_{Q(o_\tau, s_\tau \mid \pi)}[\ln P(o_\tau \mid s_\tau) - \ln Q(o_\tau \mid \tilde{u}) + \ln P(o_\tau \mid m)]$$

Table: expected utility, KL control and expected free energy, marked (+) according to whether each is sensitive to risk or ambiguity.

Figure: Performance. Success rate (%) as a function of prior preference for the FE, KL, EU and DA schemes.
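One way to read the three terms of this equation is that each scheme keeps a different subset of them: expected utility keeps only the prior preference term, KL control adds the entropy of predicted outcomes, and expected free energy also adds the (negative) ambiguity. The sketch below illustrates that reading under stated assumptions; the likelihood `A`, the preferences `C` and the two predicted state distributions are hypothetical and chosen so that both policies predict the same outcomes.

```python
import math


def outcomes(A, s):
    """Predicted outcomes Q(o | pi) = A s."""
    return [sum(A[o][k] * s[k] for k in range(len(s))) for o in range(len(A))]


def scores(A, s, C):
    """Return (expected utility, KL control, expected free energy) qualities."""
    o_pred = outcomes(A, s)
    utility = sum(q * math.log(c) for q, c in zip(o_pred, C))     # E[ln P(o | m)]
    entropy = -sum(q * math.log(q) for q in o_pred if q > 0)      # E[-ln Q(o | pi)]
    neg_ambiguity = sum(s[k] * A[o][k] * math.log(A[o][k])        # E[ln P(o | s)]
                        for k in range(len(s)) for o in range(len(A))
                        if s[k] > 0 and A[o][k] > 0)
    eu = utility
    kl = utility + entropy
    fe = utility + entropy + neg_ambiguity
    return eu, kl, fe


# Hypothetical likelihood: state 0 is ambiguous, states 1 and 2 are not.
A = [[0.5, 1.0, 0.0],
     [0.5, 0.0, 1.0]]
C = [0.7, 0.3]                      # prior preferences over the two outcomes

s_ambiguous = [1.0, 0.0, 0.0]       # policy a: ends in the ambiguous state
s_precise = [0.0, 0.5, 0.5]         # policy b: ends in an unambiguous state

eu_a, kl_a, fe_a = scores(A, s_ambiguous, C)
eu_b, kl_b, fe_b = scores(A, s_precise, C)
```

Because both policies predict the same outcome distribution, expected utility and KL control cannot tell them apart, whereas expected free energy prefers the policy whose outcomes are unambiguous about the hidden state.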

Simulating conditioned responses (in terms of precision updates)

Figure: four panels plotted against peristimulus time (sec): "Precision updates" (precision), "Dopamine responses" (response), "Simulated (US) responses" (rate) and "Simulated (CS & US) responses" (rate).

Expected precision and value

Figure: expected precision plotted against expected value $\mathbf{Q}$.

Changes in expected precision reflect changes in expected value: c.f., dopamine and reward prediction error
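The dependence of expected precision on expected value can be sketched with a simple fixed-point iteration. The update rule used here, $\hat{\gamma} = \alpha / (\beta - \hat{\pi} \cdot \mathbf{Q})$ alternating with $\hat{\pi} = \sigma(\hat{\gamma} \cdot \mathbf{Q})$, is an assumption (it follows from a gamma prior over precision with shape $\alpha$ and rate $\beta$ and is not legible on the slide), and the values of `alpha`, `beta` and the policy qualities are hypothetical.

```python
import math


def softmax(x):
    m = max(x)
    e = [math.exp(v - m) for v in x]
    z = sum(e)
    return [v / z for v in e]


def expected_precision(Q, alpha=8.0, beta=1.0, n_iter=32):
    """Alternate pi = softmax(gamma * Q) and gamma = alpha / (beta - pi . Q).

    Assumed update rule: Q holds (non-positive) policy qualities, alpha and
    beta are the shape and rate of a gamma prior over precision.
    """
    gamma = alpha / beta
    pi = softmax([gamma * q for q in Q])
    for _ in range(n_iter):
        gamma = alpha / (beta - sum(p * q for p, q in zip(pi, Q)))
        pi = softmax([gamma * q for q in Q])
    return gamma, pi


# Hypothetical policy qualities before and after a cue that signals reward.
gamma_low, pi_low = expected_precision([-4.0, -6.0])     # poor prospects
gamma_high, pi_high = expected_precision([-0.5, -6.0])   # good prospects
```

Under these assumptions, an increase in the expected value of the best policy produces an increase in expected precision, which is the sense in which precision updates resemble a reward prediction error.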

Simulating conditioned responses

Figure: responses and rates plotted against peristimulus time (sec). Preference (utility) panels: $c = 0$, $c = 1$ and $c = 2$, each with $a = 0.5$. Uncertainty panels: $a = 0.5$, $a = 0.7$ and $a = 0.9$, each with $c = 2$.

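Learning as inference

Hierarchical augmentation of state space

Control states

$$\tilde{u} = (u_t, \dots, u_T)$$

Hidden states (maze, location and context)

$$s = s_m \otimes s_l \otimes s_c$$

$$\mathbf{A} = [\mathbf{A}^{(1)}, \dots, \mathbf{A}^{(1)}]$$

$$\mathbf{B}(i) = \operatorname{diag}(\mathbf{B}^{(1)}(j_{1i}), \dots, \mathbf{B}^{(1)}(j_{4i}))$$

$$\mathbf{C} = \mathbf{C}^{(1)}$$

$$j = \begin{pmatrix} 1 & 2 & 3 & 4 \\ 1 & 3 & 2 & 4 \\ 1 & 2 & 4 & 3 \\ 1 & 4 & 3 & 2 \end{pmatrix}$$

Bayesian belief updating between trials of conserved (maze) states:

$$\hat{s}_0 = \mathbf{E}\, \hat{s}_T$$

$$\mathbf{E} = P(s_0 \mid s_T, m) = ((1 - e)\, I_4 + e) \otimes (\mathbf{D}^{(1)} \mathbf{1}_8^{\mathsf{T}})$$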

Learning as inference

Exploration and exploitation

Figure: "Performance and uncertainty" (performance and uncertainty against number of trials); "Average dopaminergic response" (precision against variational updates); "Simulated dopaminergic response" (spikes per bin against variational updates).

Summary

Optimal behaviour can be cast as a pure inference problem, in which valuable outcomes are defined in terms of prior beliefs about future states.

Exact Bayesian inference (perfect rationality) cannot be realised physically, which means that optimal behaviour rests on approximate Bayesian inference (bounded rationality).

Variational free energy provides a bound on Bayesian model evidence that is optimised by bounded rational behaviour.

Bounded rational behaviour requires (approximate Bayesian) inference on both hidden states of the world and (future) control states. This mandates beliefs about action (control) that are distinct from action per se – beliefs that entail a precision.

These beliefs can be cast in terms of minimising the expected free energy, given current beliefs about the state of the world and future choices.

The ensuing quality of a policy entails epistemic value and expected utility that account for exploratory and exploitative behaviour respectively.

Variational Bayes provides a formal account of how posterior expectations about hidden states of the world, policies and precision depend upon each other; and may provide a metaphor for message passing in the brain.

Beliefs about choices depend upon expected precision while beliefs about precision depend upon the expected quality of choices.

Variational Bayes induces distinct probabilistic representations (functional segregation) of hidden states, control states and precision – and highlights the role of reciprocal message passing. This may be particularly important for expected precision that is required for optimal inference about hidden states (perception) and control states (action selection).

The dynamics of precision updates and their computational architecture are consistent with the physiology and anatomy of the dopaminergic system.