Transactional Memory

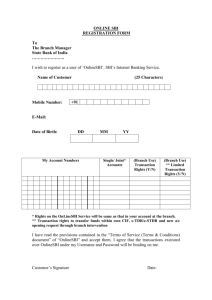

advertisement

ECE8833 Polymorphous and Many-Core Computer Architecture

Lecture 7 Lock Elision and Transactional Memory

Prof. Hsien-Hsin S. Lee

School of Electrical and Computer Engineering

Speculative Lock Elision (SLE) & Speculative Synchronization

Lock May Not Be Needed

• An out-of-order (OoO) core will not speculate beyond a lock acquisition, so critical section (CS) executions are serialized

• In the example, if the condition fails (the input is already in the queue), no shared data is updated

• Potential thread-level parallelism is lost

Thread 1 and Thread 2 both execute:

    LOCK(queue);
    if (!search_queue(input))
        enqueue(input);
    UNLOCK(queue);

How to detect such hidden parallelism?

Bottom Line

• Goal: the appearance of instantaneous changes (i.e., atomicity)

• A lock can be elided if

– Data read in the CS is not modified by other threads

– Data written in the CS is not read (or written) by other threads

• If either condition is violated, the instructions in the CS are not committed
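The two elision conditions can be read as set-intersection checks over the addresses a critical section touches. A minimal Python sketch, assuming the read and write sets are already known (in hardware they are tracked implicitly through cache state); the function name `can_elide` and the address labels are hypothetical.

```python
def can_elide(cs_reads, cs_writes, other_reads, other_writes):
    """Return True if a critical section (CS) could commit without the lock.

    cs_reads / cs_writes: addresses read / written inside this thread's CS.
    other_reads / other_writes: addresses other threads accessed concurrently.
    """
    # Condition 1: data read in the CS was not modified by another thread.
    if cs_reads & other_writes:
        return False
    # Condition 2: data written in the CS was not read (or written) by another thread.
    if cs_writes & (other_reads | other_writes):
        return False
    return True


# Both threads only search the queue (the input is already present): no conflict.
print(can_elide({"head", "node1"}, set(), {"head", "node1"}, set()))  # True
# Thread 2 enqueues (writes "tail") while Thread 1 reads "tail": elision fails.
print(can_elide({"head", "tail"}, set(), {"tail"}, {"tail"}))         # False
```

Read-read sharing never blocks elision, which is exactly why the queue search in the earlier example can run in parallel.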


Speculative Lock Elision (SLE)

[Rajwar & Goodman, MICRO-34]

Hardware-based scheme (no ISA extension)

• Dynamically identifies synchronization operations

• Predicts that they are unnecessary

• Elides them

• When the speculation is wrong, recovers using the existing cache coherence mechanism

SLE Scenario

• Silent store pair

– stl_c (store-conditional on the lock flag): performs a “lock acquire” (lock = 1)

– stl (regular store): performs a “lock release” (lock = 0)

– Why silent? The “release” undoes the write performed by the “acquire”

• Goal

– Elide this silent store pair

– Speculate that all memory operations inside the critical section occur atomically

– Buffer store results during execution within the critical section

Predict a Lock Acquire

• A lock-predictor detects ldl_l/stl_c pairs

• View “elided lock acquire” as making a “branch prediction”

– Buffer register and memory state until SLE is validated

• View “elided lock release” as a “branch outcome resolution”

Speculation During Critical Section

• Speculative register state: use either of the following

– ROB

• Critical section needs to be smaller than ROB

• Instructions cannot speculatively retire

– Register checkpoint

• Done once after elided lock acquire

• Allow speculative retirement for registers

• Speculative memory state

– Use write-buffer

– Multiple writes can be collapsed inside the write-buffer

– Write-buffer cannot be flushed prior to elided lock release

• Roll back the state on mis-speculation
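The speculative memory state can be sketched as a small write buffer: stores are held back, repeated stores to one address collapse into a single entry, the buffer drains only at the elided lock release, and a mis-speculation simply discards it. A minimal Python sketch; the class name, the `capacity` parameter, and the use of a dict to stand in for memory are assumptions for illustration.

```python
class SpeculativeWriteBuffer:
    """Buffers stores made inside an elided critical section."""

    def __init__(self, capacity):
        self.capacity = capacity   # number of distinct addresses that fit
        self.entries = {}          # addr -> latest buffered value

    def store(self, addr, value):
        # Multiple writes to the same address collapse into one entry.
        if addr not in self.entries and len(self.entries) == self.capacity:
            return False           # buffer full: resource-limit mis-speculation
        self.entries[addr] = value
        return True

    def load(self, addr, memory):
        # Loads see this thread's own buffered stores first.
        if addr in self.entries:
            return self.entries[addr]
        return memory[addr]

    def commit(self, memory):
        # Elided lock release: drain all buffered stores at once.
        memory.update(self.entries)
        self.entries.clear()

    def rollback(self):
        # Mis-speculation: discard buffered stores; memory was never touched.
        self.entries.clear()


mem = {"x": 0, "y": 0}
wb = SpeculativeWriteBuffer(capacity=2)
wb.store("x", 1)
wb.store("x", 2)          # collapses with the previous store to x
wb.store("y", 5)
print(wb.store("z", 9))   # False: a third distinct address does not fit
print(mem)                # {'x': 0, 'y': 0}  (nothing visible before release)
wb.commit(mem)
print(mem)                # {'x': 2, 'y': 5}
```

Because the buffer cannot drain before the elided release, its capacity directly bounds the critical sections that SLE can handle.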

Mis-speculation Triggers

• Atomicity violation

– Use the existing coherence protocol, following two basic principles:

(1) Any external invalidation to an accessed line

(2) Any external request to access an “exclusive” line

– Use the LSQ if the ROB approach is used (in-flight CS instructions cannot retire and are checked via snooping)

– Add an access bit to the cache if the checkpoint approach is used

• Violation due to limited resources

– Write-buffer is filled before elided lock release

– ROB is filled before elided lock release

– Uncached access events
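The two coherence-based principles can be sketched as a per-core tracker for the checkpoint variant, where an access bit marks every line touched inside the elided critical section. A minimal Python sketch; the request kinds "read" and "invalidate" are a simplified stand-in for real coherence messages, resource-limit triggers are not modeled, and all names are hypothetical.

```python
class ElisionTracker:
    """Tracks lines touched inside an elided critical section."""

    def __init__(self):
        self.accessed = set()   # lines with the access bit set (read or written)
        self.exclusive = set()  # lines written in the CS, held in exclusive state

    def local_read(self, line):
        self.accessed.add(line)

    def local_write(self, line):
        self.accessed.add(line)
        self.exclusive.add(line)

    def external(self, kind, line):
        """Return True if an incoming request violates atomicity (abort the CS)."""
        if kind == "invalidate" and line in self.accessed:
            return True   # (1) another core is writing a line we accessed
        if kind == "read" and line in self.exclusive:
            return True   # (2) another core requests a line we hold exclusive
        return False


t = ElisionTracker()
t.local_read("queue.head")
t.local_write("queue.tail")
print(t.external("read", "queue.head"))        # False: sharing a read-only line is fine
print(t.external("invalidate", "queue.head"))  # True
print(t.external("read", "queue.tail"))        # True
```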


Microbenchmark Result

[Rajwar & Goodman, MICRO-34]


Percentage of Dynamic Locks Elided

[Rajwar & Goodman, MICRO-34]


Speculative Synchronization [Martinez et al. ASPLOS-02]

• Similar rationale

– Synchronization may be too conservative

– Bypass synchronization speculatively

• Off-load synchronization operations from the processor to a Speculative Synchronization Unit (SSU)

• Use a “speculative thread” to pass

– Active barriers

– Busy locks

– Unset flags

• TLS (thread-level speculation) hardware

– Detect data dependence violations

– Roll back

• Always keep at least one “safe” thread to guarantee forward progress

– Even if the speculative buffer overflows

– Even if an actual conflict occurs

Speculative Lock Example

Threads A through E approach a critical section delimited by ACQUIRE and RELEASE; each thread is shown as either Safe or Speculative.

• Initially, A, B, C, D, and E are all before ACQUIRE

• A acquires the lock and enters the critical section

• B and E pass the busy lock and execute the critical section speculatively, while C and D are still before ACQUIRE

• C also enters speculatively; E reaches RELEASE, finishing its critical section speculatively

• A releases the lock and, like E, is past RELEASE: C becomes the new “safe” thread and “lock owner”, B is still in the critical section, and D is still before ACQUIRE

Slide Source: Jose Martinez

Hardware Support for Speculative Synchronization

[Figure: processor, L1 and L2 caches, and the SSU with a Tag, Acquire (A) and Release (R) bits, and logic]

• Speculative bit per cache line: indicates speculative memory operations

• SSU tag: keeps the synchronization variable under speculation

• SSU logic: sets the Acquire and Release bits and takes over the job of “acquiring the lock”

• Upon hitting a “lock acquire” instruction, a library call is invoked to issue a request to the SSU, and the processor moves past the lock to execute speculatively

Speculative Lock Request

• Processor Side

– Program SSU for speculative lock

– Checkpoint register file

• Speculative Synchronization Unit (SSU) Side

– Initiate Test&Test&Set loop on lock variable

• Use caches as speculative buffer (like TLS)

– Set “Speculative bit” in lines accessed speculatively

Slide Source: Jose Martinez
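The SSU's Test&Test&Set loop spins on an ordinary read of the lock variable (which hits in the local cache) and only attempts the atomic test-and-set once the lock looks free, which avoids flooding the interconnect with writes. A minimal Python sketch; Python exposes no hardware test-and-set, so an internal `threading.Lock` stands in for the atomic read-modify-write, and the class name is hypothetical.

```python
import threading
import time


class TestAndTestAndSetLock:
    """Spin lock following the Test&Test&Set pattern."""

    def __init__(self):
        self.flag = 0                      # lock variable: 0 = free, 1 = held
        self._atomic = threading.Lock()    # emulates an atomic test-and-set

    def _test_and_set(self):
        with self._atomic:
            old = self.flag
            self.flag = 1
            return old

    def acquire(self):
        while True:
            while self.flag == 1:          # "test": spin on a plain read
                time.sleep(0)              # yield so the holder can run
            if self._test_and_set() == 0:  # "test&set": attempt the atomic write
                return

    def release(self):
        self.flag = 0                      # release is an ordinary store


lock = TestAndTestAndSetLock()
counter = [0]


def worker(iterations):
    for _ in range(iterations):
        lock.acquire()
        counter[0] += 1                    # critical section
        lock.release()


threads = [threading.Thread(target=worker, args=(200,)) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(counter[0])  # 800
```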


Lock Acquire

• SSU acquires lock (i.e., T&S successful)

– Clears all speculative bits

– Becomes idle

• The release (store) is performed later by the processor

Release While Speculative

• Processor issues the release while the SSU is still trying to acquire the lock

– SSU intercepts the release (store) by the processor

– SSU sets the Release bit: the thread is “already done”

• SSU can pretend that ownership has been acquired and released (although it never happened)

– Acquire and Release bits are cleared

– All speculative bits in caches are cleared
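The SSU behavior on the last two slides can be summarized as a small state machine over the Acquire bit, the Release bit, and the per-line speculative bits. A minimal Python sketch, assuming a single lock and a single processor per SSU; the method names and return strings are hypothetical labels, and the T&T&S loop itself is abstracted into `lock_observed_free()`.

```python
class SSU:
    """Sketch of the Speculative Synchronization Unit's lock handling."""

    def __init__(self):
        self.acquire_bit = False
        self.release_bit = False
        self.spec_lines = set()      # cache lines whose Speculative bit is set

    def request_lock(self):
        # Library call on "lock acquire": program the SSU; the processor
        # continues past the lock speculatively.
        self.acquire_bit = True

    def access(self, line):
        # While the lock is not yet held, accessed lines are marked speculative.
        if self.acquire_bit:
            self.spec_lines.add(line)

    def processor_release(self):
        if self.acquire_bit:
            self.release_bit = True  # intercept the store: thread "already done"
            return "intercepted"
        return "store performed"     # thread owns the lock: normal release store

    def lock_observed_free(self):
        # The SSU's T&T&S loop finds the lock free.
        self.spec_lines.clear()      # speculative work becomes non-speculative
        self.acquire_bit = False
        if self.release_bit:
            # Pretend acquire + release happened; the lock is never written.
            self.release_bit = False
            return "done"
        return "owner"               # T&S succeeded: thread is the safe owner


fast = SSU()
fast.request_lock()
fast.access(0x40)
print(fast.processor_release(), fast.lock_observed_free())  # intercepted done

slow = SSU()
slow.request_lock()
slow.access(0x80)
print(slow.lock_observed_free(), slow.processor_release())  # owner store performed
```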


Violation Detection

• Rely on underlying cache coherence protocol

– A thread receiving an external invalidation

– An external read for a local dirty cache line

• If the accessed line is *not* marked speculative, the normal coherence protocol is applied

• If a *speculative thread* receives an external message for a line marked speculative

– SSU squashes the local thread

– All dirty lines with speculative bits are gang-invalidated

– All speculative bits are cleared

– Processor restores the checkpointed state

• The lock owner is never squashed, since none of its cache lines are marked speculative
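The violation rules can be sketched from the point of view of one thread's cache. A minimal Python sketch, assuming a dict-based cache keyed by line address and a register checkpoint taken at the lock request; the class name, method names, and the "invalidate"/"read" message kinds are hypothetical.

```python
class SpeculativeThread:
    """One thread's view of Speculative Synchronization violation handling."""

    def __init__(self, registers, speculative=True):
        self.checkpoint = dict(registers)  # taken when the lock request is issued
        self.registers = dict(registers)
        self.speculative = speculative     # False for the safe lock owner
        self.cache = {}                    # line -> {"dirty": bool, "spec": bool}

    def access(self, line, write=False):
        entry = self.cache.setdefault(line, {"dirty": False, "spec": False})
        entry["dirty"] = entry["dirty"] or write
        if self.speculative:
            entry["spec"] = True           # the lock owner never sets this bit

    def external_message(self, line, kind):
        """kind: "invalidate", or "read" (which only matters for a dirty line)."""
        entry = self.cache.get(line)
        trigger = entry is not None and (kind == "invalidate" or entry["dirty"])
        if not (trigger and self.speculative and entry["spec"]):
            return "normal coherence"
        # Squash: gang-invalidate dirty speculative lines, clear all
        # speculative bits, and restore the register checkpoint.
        for victim in [ln for ln, e in self.cache.items() if e["dirty"] and e["spec"]]:
            del self.cache[victim]
        for e in self.cache.values():
            e["spec"] = False
        self.registers = dict(self.checkpoint)
        return "squashed"


t = SpeculativeThread({"r1": 0})
t.access(0x100)                  # speculative read
t.access(0x200, write=True)      # speculative write (dirty)
t.registers["r1"] = 42
print(t.external_message(0x100, "read"))        # normal coherence (clean line)
print(t.external_message(0x100, "invalidate"))  # squashed
print(0x200 in t.cache, t.registers)            # False {'r1': 0}
```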


Speculative Synchronization Result

• Average sync time reduction: 40%

• Execution time reduction up to 15%, average 7.5%


Transactional Memory

Current Parallel Programming Model

• Shared data consistency

• Use “Lock”

• Fine grained lock

– Error prone

– Deadlock prone

– Overhead

• Coarse grained lock

– Sequentialize threads

– Prevent parallelism

// WITH LOCKS
void move(T s, T d, Obj key){
    LOCK(s);
    LOCK(d);
    tmp = s.remove(key);
    d.insert(key, tmp);
    UNLOCK(d);
    UNLOCK(s);
}

Thread 0: move(a, b, key1);

Thread 1: move(b, a, key2);

DEADLOCK! Thread 0 holds a and waits for b, while Thread 1 holds b and waits for a (& can’t abort)

Code example source: Mark Hill @Wisconsin

Parallel Software Problems

• Parallel systems are often programmed with

– Synchronization through barriers

– Shared objects access control through locks

• Lock granularity and organization must balance performance and correctness

– Coarse-grain locking: lock contention

– Fine-grain locking: extra overhead

– Must be careful to avoid deadlocks or data races

– Must be careful not to leave anything unprotected for correctness

• Performance tuning is not intuitive

– Performance bottlenecks are related to low level events

• E.g. false sharing, coherence misses

– Feedback is often indirect (cache lines, rather than variables)


Parallel Hardware Complexity (TCC’s view)

• Cache coherence protocols are complex

– Must track ownership of cache lines

– Difficult to implement and verify all corner cases

• Consistency protocols are complex

– Must provide rules to correctly order individual loads/stores

– Difficult for both hardware and software

• Current protocols rely on low latency, not bandwidth

– Critical short control messages on ownership transfers

– Latency of short messages unlikely to scale well in the future

– Bandwidth is likely to scale much better

• High speed interchip connections

• Multicore (CMP) = on-chip bandwidth


What do we want?

• A shared memory system with

– A simple, easy programming model (unlike message passing)

– A simple, low-complexity hardware implementation (unlike conventional cache-coherent shared memory)

– Good performance

Lock Freedom

• Why are locks bad?

• Common problems in conventional locking mechanisms in concurrent systems

– Priority inversion: a low-priority process is preempted while holding a lock needed by a high-priority process

– Convoying: when a process holding a lock is de-scheduled (e.g., page fault, quantum expired), other processes capable of running make no forward progress

– Deadlock (or livelock): processes attempt to lock the same set of objects in different orders (often a programming bug)

• Error-prone

Using Transactions

• What is a transaction?

– A sequence of instructions that is guaranteed to execute and complete only as an atomic unit

    Begin Transaction
    Inst #1
    Inst #2
    Inst #3
    …
    End Transaction

– Satisfies the following properties

• Serializability: transactions appear to execute serially

• Atomicity (or failure-atomicity): a transaction either

– commits its changes when complete, making them visible to all; or

– aborts, discarding its changes (and retries)

• Isolation: concurrently executing threads cannot affect the result of a transaction, so a transaction produces the same result as if no other task were executing
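The three properties can be illustrated with a single-threaded software model that uses lazy versioning: writes go to a private buffer (isolation), commit applies them all at once or not at all (atomicity), and commit-time validation aborts a transaction whose reads were overwritten by an earlier commit (serializability). A minimal Python sketch; `Memory`, `Transaction`, and `TxAbort` are hypothetical names, and the per-address version counter is an assumption of this sketch rather than part of any hardware scheme in these slides.

```python
class TxAbort(Exception):
    """The transaction must discard its changes and retry."""


class Memory:
    def __init__(self, **initial):
        self.values = dict(initial)
        self.versions = {addr: 0 for addr in initial}  # bumped on each committed write


class Transaction:
    def __init__(self, mem):
        self.mem = mem
        self.read_versions = {}   # addr -> version observed at first read
        self.write_buffer = {}    # addr -> new value, invisible to other transactions

    def read(self, addr):
        if addr in self.write_buffer:               # see our own earlier write
            return self.write_buffer[addr]
        self.read_versions.setdefault(addr, self.mem.versions.get(addr, 0))
        return self.mem.values[addr]

    def write(self, addr, value):
        self.write_buffer[addr] = value

    def commit(self):
        # Serializability: abort if a value we read was changed by an earlier commit.
        for addr, seen in self.read_versions.items():
            if self.mem.versions.get(addr, 0) != seen:
                raise TxAbort(addr)
        # Atomicity: publish every buffered write together.
        for addr, value in self.write_buffer.items():
            self.mem.values[addr] = value
            self.mem.versions[addr] = self.mem.versions.get(addr, 0) + 1


mem = Memory(A=0)
t0, t1 = Transaction(mem), Transaction(mem)
t0.write("A", t0.read("A") + 5)    # T0 reads A = 0
t1.write("A", t1.read("A") + 5)    # T1 also reads A = 0
t0.commit()                        # A = 5
try:
    t1.commit()                    # T1's read of A is now stale
except TxAbort:
    t1 = Transaction(mem)          # discard and re-execute
    t1.write("A", t1.read("A") + 5)
    t1.commit()
print(mem.values["A"])             # 10
```

A real system must also make validation and write-back atomic with respect to other committers; here everything runs on one thread, so that is trivially true.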


TCC (Stanford) [Hammond et al. ISCA 2004]

• Transactional Coherence and Consistency

• Programmer-defined groups of instructions within a program

    Begin Transaction    ← start buffering results
    Inst #1
    Inst #2
    Inst #3
    …
    End Transaction      ← commit results now

• Only commit machine state at the end of each transaction

– Each must update machine state atomically, all at once

– To other processors, all instructions within one transaction appear to execute only when the transaction commits

– These commits impose an order on how processors may modify machine state

Transaction Code Example

• MIT LTM instruction set

    xstart:
        XBEGIN on_abort
        lw     r1, 0(r2)
        addi   r1, r1, 1
        ...
        XEND
        ...
    on_abort:
        …               // back off
        j      xstart   // retry
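The `on_abort` handler pattern (back off, then jump back to `xstart`) can be mirrored in software as a retry loop. A minimal Python sketch, assuming that an abort is signaled by a hypothetical `TxAbort` exception and that the back-off policy is randomized exponential back-off with a cap; the slide only says “back off”, so the policy and its constants are assumptions.

```python
import random
import time


class TxAbort(Exception):
    """Raised when the hardware (or a software model) aborts the transaction."""


def run_transaction(body, initial_backoff=0.0001, max_backoff=0.01):
    """Run body() until it commits; return (result, number_of_attempts)."""
    backoff = initial_backoff
    attempts = 0
    while True:                                      # xstart:
        attempts += 1
        try:
            return body(), attempts                  # XBEGIN ... XEND committed
        except TxAbort:                              # on_abort:
            time.sleep(random.uniform(0, backoff))   # back off (randomized)
            backoff = min(2 * backoff, max_backoff)  # grow the window, capped
            # looping around plays the role of "j xstart" (retry)


aborts_left = [2]


def body():
    # Hypothetical transaction body that is aborted twice before committing.
    if aborts_left[0] > 0:
        aborts_left[0] -= 1
        raise TxAbort()
    return "committed"


print(run_transaction(body))  # ('committed', 3)
```

Randomizing the back-off keeps two transactions that keep aborting each other from retrying in lockstep.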


Transactional Memory

• Transactions appear to execute in commit order

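– A flow (RAW) dependency causes a transaction violation and restart

Example (time flows downward):

– Transaction A: ld 0xdddd, ..., st 0xbeef; arbitrates and commits, broadcasting its write to 0xbeef

– Transaction B: ld 0xdddd, ..., ld 0xbbbb; arbitrates and commits (no conflict with A's write)

– Transaction C: had already executed ld 0xbeef when A committed, so A's broadcast causes a violation! C re-executes (ld 0xbeef) with the new data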
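The violation check in this example can be sketched as the work done when a transaction commits: its write set is broadcast, and every other in-flight transaction whose read set contains one of those addresses is violated. A minimal Python sketch; representing transactions as dicts with `reads` and `writes` sets is an assumption made for illustration.

```python
def broadcast_commit(committer, in_flight):
    """Return the names of in-flight transactions violated by committer's writes."""
    violated = []
    for tx in in_flight:
        if tx is committer:
            continue
        # Flow (RAW) dependence: tx already read a location the committer wrote.
        if tx["reads"] & committer["writes"]:
            violated.append(tx["name"])
    return violated


A = {"name": "A", "reads": {0xDDDD}, "writes": {0xBEEF}}
B = {"name": "B", "reads": {0xDDDD, 0xBBBB}, "writes": set()}
C = {"name": "C", "reads": {0xBEEF}, "writes": set()}
print(broadcast_commit(A, [A, B, C]))  # ['C']: only C must re-execute
```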


Transaction Atomicity

Init MEM: A = 0

T0: Load r = A; Add r = r + 5; Store A = r

T1: Load r = A; Add r = r + 5; Store A = r

What are the values when T0 and T1 are atomically executed?

– T0 then T1: A = 10

– T1 then T0: A = 10

Transaction Atomicity

Init MEM: A = 0

T0 and T1 (each: Load r = A; Add r = r + 5; Store A = r) shown along a time axis

What are the values when T0 and T1 are atomically executed?

Transaction Atomicity

Init MEM: A = 0

T0: Store A = 2

T1: Load r = A; Add r = r + 5; Store A = r

What are the values when T0 and T1 are atomically executed?

– T0 then T1: A = 7

– T1 then T0: A = 2

Transaction Atomicity

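Init MEM: A = 0

T1 tries to be atomic; unfortunately, T0's store modifies the shared variable A in the middle (time flows downward):

– T1: Load r = A (r = 0)

– T0: Store A = 2 (A = 2)

– T1: Add r = r + 5 (r = 0 + 5 = 5)

– T1: Store A = r (A = 5)

The final value A = 5 matches neither serial order (A = 7 or A = 2), so atomicity is violated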
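The arithmetic on these slides can be checked by replaying the operations directly. A minimal Python sketch, assuming T0 = {Store A = 2} and T1 = {Load r = A; Add r = r + 5; Store A = r}, with a dict standing in for memory; the helper names are hypothetical.

```python
def t0(mem):
    mem["A"] = 2          # T0: Store A = 2


def t1(mem):
    r = mem["A"]          # T1: Load r = A
    r = r + 5             # T1: Add r = r + 5
    mem["A"] = r          # T1: Store A = r


def run_serially(first, second):
    mem = {"A": 0}        # Init MEM: A = 0
    first(mem)
    second(mem)
    return mem["A"]


print(run_serially(t0, t1), run_serially(t1, t0))  # 7 2

# The non-atomic interleaving: T0's store lands inside T1.
mem = {"A": 0}
r = mem["A"]              # T1 loads r = 0
mem["A"] = 2              # T0 stores A = 2
mem["A"] = r + 5          # T1 stores A = 5
print(mem["A"])           # 5
```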


Transaction Atomicity

Init MEM: A = 0

T0: Store A = 2

T1: Store A = 9; Load r = A; Add r = r + 2; Store A = r

What are the values when T0 and T1 are atomically executed?

– T0 then T1: A = 11

– T1 then T0: A = 2

Transaction Atomicity

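T0, T1, and T2 execute concurrently over time; one transaction arbitrates and commits.

• Operations shown: Store A = Y and Load r = A

• Read and write sets of the three transactions:

– ReadSet = {X, Y}, WriteSet = {A}

– ReadSet = {A}, WriteSet = {}

– ReadSet = {B, C}, WriteSet = {A}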

Hardware Transactional Memory Taxonomy

Conflict Detection

• Compare a transaction's write set against other threads' read and write sets

– Lazy

• Wait until the last minute (commit)

– Eager

• Check on each write

• Squash during a transaction

Version Management

• Where to put speculative data

– Lazy

• Into a speculative buffer (makes aborts cheap)

• No rollback needed on abort

– Eager

• Into the cache hierarchy, in place (makes commits cheap; old values must be saved, e.g., in an undo log, for rollback)

• No data copy needed on commit
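The two version-management policies differ in where new values live and therefore in which operation pays the copying cost. A minimal Python sketch, with a dict standing in for the memory hierarchy and addresses assumed to exist in it; pairing eager versioning with an undo log follows the usual design (e.g., LogTM), and the class names are hypothetical. Conflict detection is left out, so the eager version is only safe if conflicting transactions are kept from reading the in-place values.

```python
class LazyVersioning:
    """New values wait in a private buffer; memory keeps the old values."""

    def __init__(self, mem):
        self.mem = mem
        self.buffer = {}

    def write(self, addr, value):
        self.buffer[addr] = value

    def read(self, addr):
        if addr in self.buffer:
            return self.buffer[addr]
        return self.mem[addr]

    def commit(self):
        self.mem.update(self.buffer)   # data copy happens at commit
        self.buffer.clear()

    def abort(self):
        self.buffer.clear()            # nothing to roll back


class EagerVersioning:
    """New values are written in place; old values go to an undo log."""

    def __init__(self, mem):
        self.mem = mem
        self.undo_log = []

    def write(self, addr, value):
        self.undo_log.append((addr, self.mem[addr]))  # save the old value first
        self.mem[addr] = value

    def read(self, addr):
        return self.mem[addr]

    def commit(self):
        self.undo_log.clear()          # no data copy: values are already in place

    def abort(self):
        for addr, old in reversed(self.undo_log):     # undo newest first
            self.mem[addr] = old
        self.undo_log.clear()


mem = {"x": 1}
eager = EagerVersioning(mem)
eager.write("x", 2)
eager.write("x", 3)
eager.abort()
print(mem)                         # {'x': 1}
lazy = LazyVersioning(mem)
lazy.write("x", 9)
print(mem["x"], lazy.read("x"))    # 1 9
lazy.commit()
print(mem)                         # {'x': 9}
```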


HTM Taxonomy [LogTM 2006]

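• Lazy conflict detection, lazy version management: Optimistic C. Ctrl. DBMS

• Lazy conflict detection, eager version management: None

• Eager conflict detection, lazy version management: MIT LTM, Intel/Brown VTM

• Eager conflict detection, eager version management: Conservative C. Ctrl. DBMS, MIT UTM, LogTM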

TCC System

• Similar to prior thread-level speculation (TLS) techniques

– CMU Stampede

– Stanford Hydra

– Wisconsin Multiscalar

– UIUC speculative multithreading CMP

• Loosely coupled TLS system

• Completely eliminates conventional cache coherence and consistency models

– No MESI-style cache coherence protocol

• But requires new hardware support

The TCC Cycle

• Transactions run in a cycle

• Speculatively execute code and buffer

• Wait for commit permission

– Phase provides synchronization, if necessary (each transaction is assigned a phase number; the oldest phase commits first)

– Arbitrate with other processors

• Commit stores together (as a packet)

– Provides a well-defined write ordering

– Can invalidate or update other caches

– Large packet utilizes bandwidth effectively

• And repeat
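The commit-permission step can be sketched as an arbiter that grants commits in phase order. A minimal Python sketch; the class, its methods, and the rule that requests within the same phase are granted first-come-first-served are assumptions for illustration, not the TCC hardware design, and the commit-packet broadcast itself is left out.

```python
import heapq
import itertools


class CommitArbiter:
    """Grants commit permission oldest phase first."""

    def __init__(self):
        self.pending = []                 # heap of (phase, arrival, cpu)
        self.arrival = itertools.count()  # FIFO tie-break within a phase

    def request(self, phase, cpu):
        heapq.heappush(self.pending, (phase, next(self.arrival), cpu))

    def grant(self, running_phases):
        """Grant the oldest request unless a processor still executing an
        older phase must commit first. Returns (phase, cpu) or None."""
        if not self.pending:
            return None
        phase, _, cpu = self.pending[0]
        if running_phases and min(running_phases) < phase:
            return None                   # wait for the older phase
        heapq.heappop(self.pending)
        return phase, cpu


arb = CommitArbiter()
arb.request(phase=2, cpu=0)
arb.request(phase=1, cpu=1)
print(arb.grant(running_phases=[0]))  # None: CPU 2 is still in phase 0
print(arb.grant(running_phases=[]))   # (1, 1)
print(arb.grant(running_phases=[]))   # (2, 0)
```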


Advantages of TCC

• Trades bandwidth for simplicity and latency tolerance

– Easier to build

– Not dependent on timing/latency of loads and stores

• Transactions eliminate locks

– Transactions are inherently atomic

– Catches most common parallel programming errors

• Shared memory consistency is simplified

– Conventional model sequences individual loads and stores

– Hardware now only needs to sequence transaction commits

• Shared memory coherence is simplified

– Processors may have copies of cache lines in any state (no MESI!)

– Commit order implies an ownership sequence

How to Use TCC

• Divide code into potentially parallel tasks

– Usually loop iterations

– For initial division, tasks = transactions

• But can be subdivided or grouped to match hardware limits (buffering)

– Similar to threading in conventional parallel programming, but:

• We do not have to verify parallelism in advance

• Locking is handled automatically

• Easier to get parallel programs running correctly

• Programmer then orders transactions as necessary

– Ordering techniques implemented using phase number

– Deadlock-free (At least one transaction is the oldest one)

– Livelock-free (watchdog HW can easily insert barriers anywhere)


How to Use TCC

• Three common ordering scenarios

– Unordered for purely parallel tasks

– Fully ordered to specify sequential task (algorithm level)

– Partially ordered to insert synchronization like barriers


Basic TCC Transaction Control Bits

• In each local cache

– Read bits (per cache line, or per word to eliminate false sharing)

• Set on speculative loads

• Checked when snooping another CPU's committing transaction (its writes)

– Modified bits (per cache line)

• Set on speculative stores

• Indicate what to rollback if a violation is detected

• Different from dirty bit

– Renamed bits (optional)

• At word or byte granularity

• To indicate local updates (RAW) that do not cause a violation

• Subsequent reads of data with these bits set do NOT set read bits, because a local RAW dependence is not a violation
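The interplay of the read, modified, and renamed bits can be sketched for a single cache line with per-word read and renamed bits. A minimal Python sketch; the class name, the four-word line size, and representing a commit packet as a list of written word indices are assumptions.

```python
class TCCLine:
    """One cache line with TCC transaction control bits."""

    def __init__(self, words=4):
        self.read = [False] * words      # per-word read bits: set on speculative loads
        self.renamed = [False] * words   # per-word renamed bits: locally written words
        self.modified = False            # per-line modified bit: set on speculative stores

    def load(self, word):
        # Reading a word this transaction already wrote is a local RAW,
        # which is not a violation, so the read bit stays clear.
        if not self.renamed[word]:
            self.read[word] = True

    def store(self, word):
        self.modified = True             # this line must be committed or rolled back
        self.renamed[word] = True

    def snoop_commit(self, written_words):
        """Another CPU's commit packet wrote these words of the line.
        Returns True if this transaction is violated and must restart."""
        return any(self.read[w] for w in written_words)


line = TCCLine()
line.store(0)                   # speculative write to word 0
line.load(0)                    # local RAW: read bit not set
line.load(1)                    # speculative read of word 1
print(line.snoop_commit([0]))   # False: word 0 was produced locally, not read from memory
print(line.snoop_commit([2]))   # False: per-word bits avoid false sharing
print(line.snoop_commit([1]))   # True: violation
```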


During A Transaction Commit

• Need to collect all of the modified cache lines together into a commit packet

• Potential solutions

– A separate write buffer, or

– An address buffer maintaining a list of the line tags to be committed

– Size?

• Broadcast all writes out as one single (large) packet to the rest of the system

Re-execute A Transaction

• Rollback is needed when a transaction cannot commit

• Checkpoints needed prior to a transaction

• Checkpoint memory

– Use local cache

– Overflow issue

• Conflict or capacity misses require all the victim lines to be kept somewhere (e.g., a victim cache)

• Checkpoint register state

– Hardware approach: flash-copying the rename table / architectural register file

– Software approach: extra instruction overheads

Sample TCC Hardware

• Write buffers and L1 Transaction Control Bits

– Write buffer in processor, before broadcast

• A broadcast bus or network to distribute commit packets

– All processors see the commits in a single order

– Snooping on broadcasts triggers violations, if necessary

• Commit arbitration/sequence logic


Ideal Speedups with TCC

• equake_l : long transactions

• equake_s : short transactions


Speculative Write Buffer Needs

• Only a few KB of write buffering needed

– Set by the natural transaction sizes in applications

– Small write buffer can capture 90% of modified state

– Infrequent overflow can always be handled by committing early

Broadcast Bandwidth

• Broadcast is bursty

• Average bandwidth

– Needs ~16 bytes/cycle @ 32 processors with whole modified lines

– Needs ~8 bytes/cycle @ 32 processors with dirty data only

• High, but feasible on-chip


TCC vs MESI [PACT 2005]

[Figure: comparison by application, protocol, and processor count]

Implementation of MIT’s LTM [HPCA 05]

• Transactional memory should support transactions of arbitrary size and duration

• LTM: Large Transactional Memory

• No change in cache coherence protocol

• Abort when a memory conflict is detected

– Use the coherence protocol to check for conflicts

– Abort (younger) transactions during conflict resolution to guarantee forward progress

• For potential rollback

– Checkpoint rename table and physical registers

– Use the local cache for all speculative memory operations

– Use the shared L2 (or lower-level memory) for non-speculative data storage

Multiple In-Flight Transactions

Original code:

    XBEGIN L1
    ADD R1, R1, R1
    ST 1000, R1
    XEND
    XBEGIN L2
    ADD R1, R1, R1
    ST 2000, R1
    XEND

Rename Table: R1 → P1, …    Saved Set: {P1, …}

• During instruction decode:

– Maintain the rename table and “saved” bits in physical registers

– “Saved” bits track the registers mentioned in the current rename table

• Constant number of set bits: every time a register is added to the “saved” set, we also remove one

Multiple In-Flight Transactions

Rename Table      Saved Set
R1 → P1, …        {P1, …}
R1 → P2, …        {P2, …}

• When XBEGIN is decoded

– Snapshots are taken of the current rename table and S bits

– This snapshot is not active until XBEGIN retires


Multiple In-Flight Transactions

Rename Table      Saved Set
R1 → P1, …        {P1, …}    (active snapshot)
R1 → P2, …        {P2, …}

• When XBEGIN retires

– The snapshot taken at decode becomes active, which prevents P1 from being reused

– The 1st transaction is queued to become active in memory

– To abort, we just restore the active snapshot’s rename table

Multiple In-Flight Transactions

Rename Table      Saved Set
R1 → P1, …        {P1, …}    (active snapshot)
R1 → P2, …        {P2, …}
R1 → P3, …        {P3, …}

• We are only reserving registers in the active set

– This implies that exactly as many physical registers are saved as there are architectural registers

– This number is strictly limited, even as we speculatively execute through multiple transactions

Multiple In-Flight Transactions

Rename Table      Saved Set
R1 → P1, …        {P1, …}    (active snapshot)
R1 → P2, …        {P2, …}
R1 → P3, …        {P3, …}

• Normally, P1 would be freed here

• Since it is in the active snapshot’s “saved” set, we place it onto the register reserved list

Multiple In-Flight Transactions

Rename Table      Saved Set
R1 → P2, …        {P2, …}
R1 → P3, …        {P3, …}

• When XEND retires:

– Reserved physical registers (e.g., P1) are freed, and the active snapshot is cleared

– The store queue is empty

Multiple In-Flight Transactions

Rename Table      Saved Set
R1 → P2, …        {P2, …}    (active snapshot)

• The second transaction becomes active in memory
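The register-reservation scheme walked through on these slides can be sketched as a rename table with decode-time snapshots. A minimal Python sketch, assuming one snapshot per XBEGIN, in-order activation at XBEGIN retire, and string names for physical registers; the class and method names are hypothetical, and freeing squashed registers on abort is omitted.

```python
class SnapshotRenamer:
    """Rename table with LTM-style transaction snapshots."""

    def __init__(self, arch_regs, num_phys):
        phys = [f"P{i}" for i in range(1, num_phys + 1)]
        self.table = dict(zip(arch_regs, phys))   # arch reg -> phys reg
        self.free = phys[len(arch_regs):]         # free list
        self.pending = []    # snapshots taken at XBEGIN decode, not yet active
        self.active = None   # snapshot made active at XBEGIN retire
        self.reserved = []   # registers held for the active snapshot

    def decode_xbegin(self):
        self.pending.append(dict(self.table))

    def decode_write(self, reg):
        """Rename a destination; return the physical register it displaced."""
        displaced = self.table[reg]
        self.table[reg] = self.free.pop(0)
        return displaced

    def retire_xbegin(self):
        self.active = self.pending.pop(0)

    def retire_write(self, displaced):
        # Normally freed here; kept on the reserved list if the active snapshot needs it.
        if self.active is not None and displaced in self.active.values():
            self.reserved.append(displaced)
        else:
            self.free.append(displaced)

    def retire_xend(self):
        self.free.extend(self.reserved)   # e.g., P1 becomes free again
        self.reserved.clear()
        self.active = None

    def abort(self):
        self.table = dict(self.active)    # restore the active snapshot's table


r = SnapshotRenamer(["R1"], num_phys=4)   # R1 -> P1, free: P2, P3, P4
r.decode_xbegin()                         # XBEGIN L1: snapshot {R1: P1}
d1 = r.decode_write("R1")                 # ADD: R1 -> P2, displaces P1
r.decode_xbegin()                         # XBEGIN L2: snapshot {R1: P2}
d2 = r.decode_write("R1")                 # ADD: R1 -> P3, displaces P2
r.retire_xbegin()                         # snapshot {R1: P1} becomes active
r.retire_write(d1)                        # P1 goes to the reserved list
print(r.reserved)                         # ['P1']
r.retire_xend()                           # P1 freed, active snapshot cleared
r.retire_xbegin()                         # second transaction's snapshot active
r.retire_write(d2)                        # P2 reserved for it
print(r.active, r.reserved, r.free)       # {'R1': 'P2'} ['P2'] ['P4', 'P1']
```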


Cache Overflow Mechanism

[Figure: 2-way set-associative cache (Way 0, Way 1; T bit, tag, and data per line; O bit per set) next to an overflow hashtable (key, data)]

Example code:

    ST 1000, 55
    XBEGIN L1
    LD R1, 1000
    ST 2000, 66
    ST 3000, 77
    LD R1, 1000
    XEND

• Need to keep

– Current (speculative) values

– Rollback values

• Common case is commit, so keep current values in the cache

• Problem:

– Uncommitted current values may not fit in the local cache

• Solution

– Overflow hashtable as an extension of the cache

Cache Overflow Mechanism

• T bit per cache line

– Set if accessed during a transaction

• O bit per cache set

– Indicates set overflow

• Overflow storage in physical DRAM

– Allocated and resized by the OS

– Searched on a miss: complexity of a page table walk

– If a line is found, it is swapped with a line in the set

Cache Overflow Mechanism

Cache state: Way 0 = {tag: 1000, data: 55, T clear}; Way 1 empty; overflow hashtable empty

• After ST 1000, 55: start with non-transactional data in the cache

Cache Overflow Mechanism

Cache state: Way 0 = {T: 1, tag: 1000, data: 55}

• LD R1, 1000: a transactional read sets the T bit

Cache Overflow Mechanism

Cache state: Way 0 = {T: 1, tag: 1000, data: 55}; Way 1 = {T: 1, tag: 2000, data: 66}

• ST 2000, 66: expect most transactional writes to fit in the cache

Cache Overflow Mechanism

Cache state: O = 1; Way 0 = {T: 1, tag: 3000, data: 77}; Way 1 = {T: 1, tag: 2000, data: 66}; overflow hashtable = {1000: 55}

• ST 3000, 77:

– A conflict miss

– Overflow sets the O bit

– Replacement takes place (LRU)

– Old data is spilled to DRAM (hashtable)

Cache Overflow Mechanism

Cache state: O = 1; Way 0 = {T: 1, tag: 1000, data: 55}; Way 1 = {T: 1, tag: 2000, data: 66}; overflow hashtable = {3000: 77}

• LD R1, 1000: a miss to an overflowed line checks the overflow table

• If found, swap (like a victim cache)

• Else, proceed as a miss

Cache Overflow Mechanism

• Abort

– Invalidate all lines with T set (assuming the L2 or lower-level memory contains the original values)

– Discard the overflow hashtable

– Clear the O and T bits

• Commit

– Write back the hashtable; NACK interventions during this

– Clear the O and T bits in the cache
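The overflow walkthrough can be replayed with a single two-way cache set. A minimal Python sketch, assuming true LRU replacement (the slides' final swap exchanges line 1000 with line 3000, so the real swap-victim choice may differ from this sketch), a dict for the DRAM hashtable and for the L2, and no modeling of L2 fills or NACKed interventions; the class and method names are hypothetical.

```python
from collections import OrderedDict


class OverflowCacheSet:
    """One 2-way cache set with a T bit per line and an O bit per set."""

    def __init__(self, ways=2):
        self.ways = ways
        self.lines = OrderedDict()   # tag -> [data, T]; first entry is LRU
        self.O = False               # set overflow bit
        self.overflow = {}           # DRAM overflow hashtable: tag -> data

    def access(self, tag, data=None, transactional=True):
        """Load (data=None) or store; returns the line's current data."""
        if tag not in self.lines:
            self._miss(tag)
        self.lines.move_to_end(tag)  # now most recently used
        line = self.lines[tag]
        if data is not None:
            line[0] = data
        if transactional:
            line[1] = True           # T bit: accessed during the transaction
        return line[0]

    def _miss(self, tag):
        data = None                  # an L2 fill would supply this (not modeled)
        if self.O and tag in self.overflow:
            data = self.overflow.pop(tag)             # found: swap back in
        if len(self.lines) == self.ways:
            victim, (vdata, vT) = self.lines.popitem(last=False)  # LRU victim
            if vT:                                    # transactional data must survive
                self.overflow[victim] = vdata
                self.O = True
        self.lines[tag] = [data, False]

    def abort(self):
        for tag in [t for t, (_, T) in self.lines.items() if T]:
            del self.lines[tag]      # L2 still holds the original values
        self.overflow.clear()
        self.O = False

    def commit(self, l2):
        l2.update(self.overflow)     # write back the hashtable
        self.overflow.clear()
        self.O = False
        for line in self.lines.values():
            line[1] = False          # clear T bits


s = OverflowCacheSet()
s.access(1000, 55, transactional=False)  # ST 1000, 55 (before XBEGIN)
s.access(1000)                           # LD R1, 1000: sets T
s.access(2000, 66)                       # ST 2000, 66
s.access(3000, 77)                       # ST 3000, 77: conflict miss, 1000 spills
print(s.O, s.overflow)                   # True {1000: 55}
print(s.access(1000))                    # LD R1, 1000: found in hashtable -> 55
```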


LTM vs. Lock-based
