argmine2014_DG_ppt


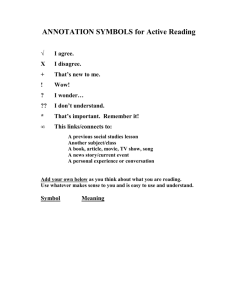

Analyzing Argumentative Discourse Units in Online Interactions

Debanjan Ghosh, Smaranda Muresan, Nina Wacholder, Mark Aakhus and Matthew Mitsui
First Workshop on Argumentation Mining, ACL
June 26, 2014

User1: But when we first tried the iPhone it felt natural immediately, we didn't have to 'unlearn' old habits from our antiquated Nokias & Blackberrys. That happened because the iPhone is a truly great design.

User2: That's very true. With the iPhone, the sweet goodness part of the UI is immediately apparent. After a minute or two, you're feeling empowered and comfortable. It's the weaknesses that take several days or weeks for you to really understanding and get frustrated by.

User3: I disagree that the iPhone just "felt natural immediately"... In my opinion it feels restrictive and over simplified, sometimes to the point of frustration.

Argumentative Discourse Units (ADU; Peldszus and Stede, 2013)

1. Segmentation
2. Segment Classification
3. Relation Identification

Annotation Challenges

• A complex annotation scheme seems infeasible
  – The problem of high *cognitive load* (annotators have to read all the threads)
  – High complexity demands two or more annotators
  – Use of expert annotators for all tasks is costly

Our Approach: Two-tiered Annotation Scheme

• Coarse-grained annotation
  – Expert annotators (EAs)
  – Annotate entire thread
• Fine-grained annotation
  – Novice annotators (Turkers)
  – Annotate only text labeled by EAs

Coarse-grained Expert Annotation

[Figure: a thread of posts (Post1 to Post4) with Target and Callout segments marked]

Pragmatic Argumentation Theory (PAT; Van Eemeren et al., 1993) based annotation

ADUs: Callout and Target

• A Callout is a subsequent action that selects all or some part of a prior action (i.e., Target) and comments on it in some way.
• A Target is a part of a prior action that has been called out by a subsequent action.

In the example thread above, User1's post contains the Target, and the replies by User2 and User3 are marked as Callouts.

More on Expert Annotations and Corpus

• Five annotators were free to choose any text segment to represent an ADU
• Four blogs and their first one hundred comments are used as our argumentative corpus
  – Android (iPhone vs. Android phones)
  – iPad (usability of iPad as a tablet)
  – Twitter (use of Twitter as a micro-blog platform)
  – Job Layoffs (layoffs and outsourcing)

Inter Annotator Agreement (IAA) for Expert Annotations

• P/R/F1-based IAA (Wiebe et al., 2005)
  – exact match (EM)
  – overlap match (OM)
• Krippendorff's α (Krippendorff, 2004)

| Thread | F1_EM | F1_OM | Krippendorff's α |
|---|---|---|---|
| Android | 54.4 | 87.8 | 0.64 |
| iPad | 51.2 | 86.0 | 0.73 |
| Layoffs | 51.9 | 87.5 | 0.87 |
| Twitter | 53.8 | 88.5 | 0.82 |

Issues

• Different IAA metrics give different outcomes
• It is difficult to infer from IAA which segments of the text are easier or harder to annotate

Our solution: Hierarchical Clustering

We use a hierarchical clustering technique to cluster ADUs that are variants of the same Callout.

Columns 5 to 1 give the number of expert annotators (ADUs) per cluster.

| Thread | # of Clusters | 5 | 4 | 3 | 2 | 1 |
|---|---|---|---|---|---|---|
| Android | 91 | 52 | 16 | 11 | 7 | 5 |
| iPad | 88 | 41 | 17 | 7 | 13 | 10 |
| Layoffs | 86 | 41 | 18 | 11 | 6 | 10 |
| Twitter | 84 | 44 | 17 | 14 | 4 | 5 |

• Clusters with 5 and 4 annotators show Callouts that are plausibly easier to identify
• Clusters selected by only one or two annotators are harder to identify

[Figure: Example of a Callout Cluster]

Motivation for a finer-grained annotation

• What is the nature of the relation between a Callout and a Target?
• Can we identify finer-grained ADUs in a Callout?
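The exact-match (EM) and overlap-match (OM) agreement named on the IAA slide can be illustrated with a minimal sketch. It assumes each annotator's ADUs are stored as character-offset spans `(start, end)` with an exclusive end, and it treats one annotator as the reference to obtain precision and recall. The function names and the toy spans are hypothetical; the slides do not describe the authors' implementation.

```python
def exact_match(a, b):
    """Two spans match only if their boundaries are identical."""
    return a == b


def overlap_match(a, b):
    """Two spans match if they share at least one character (end is exclusive)."""
    return a[0] < b[1] and b[0] < a[1]


def span_f1(reference, other, match):
    """F1 between two annotators' span lists under the given match criterion.

    Precision: share of `other` spans matched by some `reference` span.
    Recall: share of `reference` spans matched by some `other` span.
    """
    if not reference or not other:
        return 0.0
    precision = sum(any(match(o, r) for r in reference) for o in other) / len(other)
    recall = sum(any(match(r, o) for o in other) for r in reference) / len(reference)
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Toy spans, invented purely for illustration (not corpus data).
annotator_a = [(0, 10), (20, 30), (40, 50)]
annotator_b = [(0, 10), (22, 28), (60, 70)]

f1_em = span_f1(annotator_a, annotator_b, exact_match)    # only (0, 10) matches
f1_om = span_f1(annotator_a, annotator_b, overlap_match)  # (22, 28) also overlaps (20, 30)
```

With the toy spans, the overlap criterion credits the second pair that the exact criterion rejects, which is the same direction of difference as between the F1_EM and F1_OM columns in the table.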
Novice Annotation: task 1

[Figure: Target (T) and Callout (CO) pairs, each labeled Agree/Disagree/Other]

This is related to research on annotating and identifying agreement/disagreement (Misra and Walker, 2013; Andreas et al., 2012).

More from Agree/Disagree Relation Label

• For each Target/Callout pair we employed five Turkers
• Fleiss' Kappa shows moderate agreement between the Turkers
• 143 Agree / 153 Disagree / 50 Other data instances
• We ran preliminary experiments for predicting the relation label (rule-based, BoW, lexical features…)
• Best results (F1): 66.9% (Agree), 62.9% (Disagree)

Novice Annotation: task 2

[Figure: a Target (T) and Callout (CO) pair annotated with Difficulty, Stance (S) and Rationale (R)]

2: Identifying Stance vs. Rationale

This is related to the justification identification task (Biran and Rambow, 2011).

User2: That's very true. With the iPhone, the sweet goodness part of the UI is immediately apparent. After a minute or two, you're feeling empowered and comfortable. It's the weaknesses that take several days or weeks for you to really understanding and get frustrated by.

User3:
– Stance: I disagree that the iPhone just "felt natural immediately"...
– Rationale: In my opinion it feels restrictive and over simplified, sometimes to the point of frustration.

Examples of Callout/Target pairs with difficulty level (majority voting)

• Easy
  – Target: the iPhone is a truly great design.
  – Callout: I disagree too. some things they get right, some things they do not.
  – Stance: I…too
  – Rationale: Some things…do not
• Moderate
  – Target: the dedicated 'Back' button
  – Callout: that back button is key. navigation is actually much easier on the android.
  – Stance: That back button is key
  – Rationale: Navigation is…android
• Difficult
  – Target: It's more about the features and apps and Android seriously lacks on latter.
  – Callout: Just because the iPhone has a huge amount of apps, doesn't mean they're all worth having.
  – Stance / Rationale: Just because the iPhone has a huge amount of apps, doesn't mean they're all worth having.
• Too difficult/unsure
  – Target: I feel like your comments about Nexus One is too positive …
  – Callout: I feel like your poor grammar are to obvious to be self thought...
  – Stance: -
  – Rationale: -

Difficulty judgment (majority voting)

Columns 5 to 1 give the number of expert annotators per cluster.

| Difficulty | 5 | 4 | 3 | 2 | 1 |
|---|---|---|---|---|---|
| Easy | 81.0 | 70.8 | 60.9 | 63.6 | 25.0 |
| Moderate | 7.7 | 7.0 | 17.1 | 6.1 | 25.0 |
| Difficult | 5.9 | 5.9 | 7.3 | 9.1 | 12.5 |
| Too difficult to code | 5.4 | 16.4 | 14.6 | 21.2 | 37.5 |

Conclusion

• We propose a two-tiered annotation scheme for argument annotation in online discussion forums
• Expert annotators detect Callout/Target pairs, while crowdsourcing is employed to discover finer units like Stance/Rationale
• Our study also helps detect which text is easy or hard to annotate
• Preliminary experiments predict agreement/disagreement among ADUs

Future Work

• Qualitative analysis of the Callout phenomenon to support finer-grained analysis
• Study the different uses of ADUs in different situations
• Annotate other domains (e.g. healthcare forums) and adjust our annotation scheme
• Predictive modeling of the Stance/Rationale phenomenon

Thank you!

Predicting the Agree/Disagree Relation Label

• Training data (143 Agree / 153 Disagree)
• Salient features for the experiments
  – Baseline: rule-based ('agree', 'disagree')
  – Mutual Information (MI): MI is used to select words to represent each category
  – LexFeat: lexical features based on sentiment lexicons (Hu and Liu, 2004), lexical overlaps, initial words of the Callouts…
• 10-fold CV using SVM

Predicting the Agree/Disagree Relation Label (preliminary result)

• Lexical features result in F1 scores between 60% and 70% for Agree/Disagree relations
• Ablation tests show that the initial words of the Callout are the strongest feature
• The rule-based system shows very low recall (7%), which indicates that many Target-Callout relations are *implicit*
• Limitation: lack of data (we are currently annotating more data…)

Fine-Grained Novice Annotation

[Figure: Target (T) and Callout (CO) pairs. Relation identification, e.g., Agree/Disagree/Other. Finer-grained annotation, e.g., Stance & Rationale]

Why we propose a two-layer annotation?

• A two-layer annotation schema
  – Expert Annotation
    • Five annotators who received extensive training for the task
    • Primary task includes selecting discourse units from users' posts (argumentative discourse units: ADU; Peldszus and Stede, 2013)
  – Novice Annotation
    • Use of Amazon Mechanical Turk (AMT) platform to detect the nature and role of the ADUs selected by the experts

Motivation and Challenges

• User-generated conversational data provides a wealth of naturally generated arguments
• Argument mining of such online interactions, however, is still in its infancy…

Detail on Corpora

• Four blog posts and the responses (e.g. first 100 comments) from Technorati between 2008 and 2010
• We selected blog postings on the general topic of technology, which contain many disputes and arguments
• Together they are denoted as the argumentative corpus

Motivation and Challenges (cont.)

• A detailed single annotation scheme seems infeasible
  – The problem of high *cognitive load* (e.g. annotators have to read all the threads)
  – Use of expert annotators for all tasks is costly
• We propose a scalable and principled two-tier scheme to annotate corpora for arguments

Annotation Schema(s)

• A two-layer annotation schema
  – Expert Annotation
    • Five annotators who received extensive training for the task
    • Primary task includes a) segmentation, b) segment classification, and c) relation identification
  – Novice Annotation
    • Use of Amazon Mechanical Turk (AMT) platform to detect the nature and role of the ADUs selected by the experts

Summary of the Annotation Schema(s)

• First stage of annotation
  – Annotators: expert (trained) annotators
  – A coarse-grained annotation scheme inspired by Pragmatic Argumentation Theory (PAT; Van Eemeren et al., 1993)
  – Segment, label, and link Callout and Target
• Second stage of annotation
  – Annotators: novice (crowd) annotators
  – A finer-grained annotation to detect Stance and Rationale of an argument

Expert Annotation

• Expert annotators perform segmentation, labeling, and linking (Peldszus and Stede, 2013)
• Five expert (trained) annotators detect two types of ADUs
• ADU: Callout and Target
• Coarse-grained annotation

The Argumentative Corpus

[Figure: the four blogs, numbered 1 to 4]

Blogs and comments extracted from Technorati (2008-2010)

Novice Annotations: Identifying Stance and Rationale

Crowdsourcing on each Callout:

• Identify the task difficulty (very difficult … very easy)
• Identify the text segments (Stance and Rationale)

Novice Annotations: Identifying the relation between ADUs

Crowdsourcing assigns a relation label to each Callout/Target pair. Columns 5 to 1 give the number of EA ADUs per cluster.

| Relation label | 5 | 4 | 3 | 2 | 1 |
|---|---|---|---|---|---|
| Agree | 39.4 | 43.3 | 42.5 | 35.5 | 48.4 |
| Disagree | 56.9 | 31.7 | 32.5 | 25.8 | 19.4 |
| Other | 3.70 | 25.0 | 25.0 | 38.7 | 32.3 |

More on Expert Annotations

• Annotators were free to choose any text segment to represent an ADU

[Figure labels: Splitters, Lumpers]

Novice Annotation: task 1

1: Identifying the relation (agree/disagree/other)

This is related to annotation of agreement/disagreement (Misra and Walker, 2013; Andreas et al., 2012) and classification of stances (Somasundaran and Wiebe, 2010) in online forums.

Examples of Clusters

| # of EAs | Callout | Target |
|---|---|---|
| 5 | I disagree too. some things they get right, some things they do not. | the iPhone is a truly great design. |
| 5 | I disagree too…they do not. | That happened because the iPhone is a truly great design. |
| 2 | These iPhone Clones are playing catchup. Good luck with that. | griping about issues that will only affect them once in a blue moon |
| 1 | Do you know why the Pre ...various handset/builds/resolution issues? | Except for games?? iPhone is clearly dominant there. |

Examples of Cluster

All rows below belong to one cluster selected by 5 EAs.

| Callout | Target |
|---|---|
| I disagree too. some things they get right, some things they do not. | the iPhone is a truly great design. |
| I disagree too…they do not. | That happened because the iPhone is a truly great design. |
| I disagree too. | But when we first tried the iPhone it felt natural immediately . . . iPhone is a truly great design. |
| Hi there, I disagree too . . . they do not. Same as OSX. | -Same as above- |
| I disagree too. . . Same as OSX . . . no problem. | -Same as above- |
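The idea of grouping the experts' variants of one Callout, as in the cluster examples above, can be sketched as follows. The slides do not state the distance measure or linkage used for the hierarchical clustering, so this sketch swaps in a simpler stand-in: spans are character offsets, and any two spans that overlap are merged into one cluster (equivalent to single-link merging with an overlap criterion). All names and the toy spans are hypothetical.

```python
from collections import Counter


def cluster_spans(spans):
    """Group overlapping spans into clusters.

    `spans` is a list of (annotator_id, start, end) tuples with an exclusive end.
    Assumption of this sketch: spans that overlap belong to the same cluster.
    """
    clusters = []
    for annotator, start, end in sorted(spans, key=lambda s: (s[1], s[2])):
        if clusters and start < clusters[-1]["end"]:
            current = clusters[-1]
            current["end"] = max(current["end"], end)  # extend the cluster boundary
            current["annotators"].add(annotator)
            current["spans"].append((annotator, start, end))
        else:
            clusters.append({
                "start": start,
                "end": end,
                "annotators": {annotator},
                "spans": [(annotator, start, end)],
            })
    return clusters


def annotators_per_cluster(clusters):
    """Count how many clusters were selected by 1, 2, ... distinct annotators."""
    return Counter(len(c["annotators"]) for c in clusters)


# Toy spans, invented purely for illustration (not corpus data).
toy_spans = [
    ("A", 0, 10), ("B", 2, 12), ("C", 5, 9),  # three variants of one Callout
    ("A", 20, 30),                            # selected by a single annotator
    ("D", 40, 45), ("E", 44, 50),             # two partially overlapping variants
]
toy_clusters = cluster_spans(toy_spans)
toy_histogram = annotators_per_cluster(toy_clusters)
```

The histogram has the same shape as a row of the clusters-per-thread table: the number of clusters selected by each possible number of annotators.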
Predicting the Agree/Disagree Relation Label

| Features | Category | P | R | F1 |
|---|---|---|---|---|
| Baseline | Agree | 83.3 | 6.90 | 12.9 |
| Baseline | Disagree | 50.0 | 5.20 | 9.50 |
| Unigrams | Agree | 57.9 | 61.5 | 59.7 |
| Unigrams | Disagree | 61.8 | 58.2 | 59.9 |
| MI-based unigram | Agree | 60.1 | 66.4 | 63.1 |
| MI-based unigram | Disagree | 65.2 | 58.8 | 61.9 |
| LexF | Agree | 61.4 | 73.4 | 66.9 |
| LexF | Disagree | 69.6 | 56.9 | 62.6 |

Novice Annotation: task 2

2: Identifying Stance vs. Rationale

This is related to the claim/justification identification task (Biran and Rambow, 2011).
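The high-precision, low-recall behavior of the rule-based baseline in the results table can be illustrated with a small sketch. The slides name only the cue words 'agree' and 'disagree'; the regular expressions, function names, and gold labels below are assumptions made for illustration, and the callout strings are reused from the deck's examples rather than drawn from the annotated data.

```python
import re

# Hypothetical keyword rules built from the two cue words named on the slide.
DISAGREE = re.compile(r"\bdisagree\w*", re.IGNORECASE)
AGREE = re.compile(r"\bagree\w*", re.IGNORECASE)


def rule_based_label(callout):
    """Return 'disagree' or 'agree' if a cue word is present, else None."""
    if DISAGREE.search(callout):
        return "disagree"
    if AGREE.search(callout):
        return "agree"
    return None


def precision_recall_f1(gold, predicted, label):
    """Per-label precision, recall, and F1 (harmonic mean of P and R)."""
    true_pos = sum(1 for g, p in zip(gold, predicted) if g == label and p == label)
    n_pred = sum(1 for p in predicted if p == label)
    n_gold = sum(1 for g in gold if g == label)
    precision = true_pos / n_pred if n_pred else 0.0
    recall = true_pos / n_gold if n_gold else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


callouts = [
    "I disagree too. some things they get right, some things they do not.",
    "That's very true. With the iPhone, the sweet goodness part of the UI is immediately apparent.",
    "Just because the iPhone has a huge amount of apps, doesn't mean they're all worth having.",
]
# Gold labels assumed for this toy example only; they are not taken from the corpus.
gold = ["disagree", "agree", "disagree"]
predicted = [rule_based_label(c) for c in callouts]
p_dis, r_dis, f_dis = precision_recall_f1(gold, predicted, "disagree")
```

Only the first callout contains a cue word, so the other two relations stay unlabeled. This is the kind of implicit agreement or disagreement that the low baseline recall in the table points to.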