PSP Refresher

5/25/2006

Personal Software Process (PSP)

Ref: Introduction to the Personal Software Process (Humphrey)

SE 652- PSP Refresher 1

What is the Personal Software Process?

High quality is critical to project & product success

& the most important single factor is the personal commitment of each software engineer to deliver a quality product!


Personal Commitment to Quality

Every Engineer must be committed to quality!

– Project/Product Success

– Personal Satisfaction

The PSP Paradigm:

– Software engineers establish personal process goals

– They define the methods to use

– They measure their work

– They analyze the results

– Based on the results, they adjust their methods to improve towards personal goals


PSP Quality Metrics

Fundamental Measures:

Volume Produced

Quality

Time

Resources

Cost of Quality (COQ) Types:

Failure Cost – diagnosing & fixing defects

Appraisal Cost – checking product for defects

Prevention Cost – analyzing data, design & modifying process

Appraisal / Failure Ratio (A/FR) indicates how much effort is spent checking the product for defects relative to the effort spent diagnosing & fixing them

COQ = COQ Type effort / Total Project effort

A/FR = Appraisal COQ / Failure COQ
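The two formulas above can be sketched in a few lines of Python. This is a minimal illustration, not part of the PSP forms: the function names and the project hours are hypothetical, chosen only to show the arithmetic.

```python
def coq_fraction(type_effort_hours, total_project_hours):
    """COQ for one cost type, as a fraction of total project effort."""
    if total_project_hours <= 0:
        raise ValueError("total project effort must be positive")
    return type_effort_hours / total_project_hours


def appraisal_failure_ratio(appraisal_hours, failure_hours):
    """A/FR = Appraisal COQ / Failure COQ (the shared denominator cancels)."""
    if failure_hours <= 0:
        raise ValueError("failure effort must be positive to form the ratio")
    return appraisal_hours / failure_hours


# Hypothetical project: 40 h total, 6 h checking for defects, 4 h fixing them.
total, appraisal, failure = 40.0, 6.0, 4.0
appraisal_coq = coq_fraction(appraisal, total)
failure_coq = coq_fraction(failure, total)
afr = appraisal_failure_ratio(appraisal, failure)
print(f"Appraisal COQ {appraisal_coq:.0%}, Failure COQ {failure_coq:.0%}, A/FR {afr:.1f}")
```

With these made-up numbers the A/FR is above 1, meaning more effort went into appraisal than into failure work.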


COQ & Defect Relationships

Defect Relationships

High A/F Ratios (i.e. > 1) => fewer defects

Strong Correlation between compile detected defects & overall defects

High A/F Ratios => fewer test hours

Review Rates & Improvement

Review Rate = Defects found / code review hours

To Improve:

Measure

Analyze defects that get through, look for ways to catch them earlier

Delete review steps that neither find defects nor miss them


Tracking Time

Form: Time Log (LOGT)

Data Tracked:

Date

Start/Stop Time

Interruption Time

Net Delta Time (i.e. Stop time – Start time – Interruption time)

Activity

Comments

Completed (yes/no)

Units (e.g. # of lines of code, # of pages read / reviewed)
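As a small illustration of the Net Delta Time field, the sketch below computes it from one time log entry. The function name, the `HH:MM` format and the assumption that start and stop fall on the same day are choices made for this example, not part of the LOGT form.

```python
from datetime import datetime


def net_delta_minutes(start, stop, interruption_minutes=0):
    """Net delta time = stop time - start time - interruption time, in minutes.

    Assumes 'HH:MM' strings on the same calendar day (an assumption of this sketch).
    """
    fmt = "%H:%M"
    elapsed = datetime.strptime(stop, fmt) - datetime.strptime(start, fmt)
    net = int(elapsed.total_seconds() // 60) - interruption_minutes
    if net < 0:
        raise ValueError("interruptions exceed elapsed time, or stop precedes start")
    return net


# Hypothetical entry: worked 09:00 to 10:30 with a 15-minute interruption.
net = net_delta_minutes("09:00", "10:30", interruption_minutes=15)
print(net, "minutes of net working time")
```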


Quality & Defects

Quality Defined:

Adherence to users' requirements, both explicit & implicit

Defect:

Anything that detracts from the program’s ability to completely & effectively meet the needs of the end users. Includes code, design & requirements defects.

Defect Injection:

Introduction of mistakes that cause the program to operate incorrectly.

Cost of Defects

Defect identification & removal typically > ½ of total project effort

Defect removal is most efficiently done by the person who injected the defect

Why not bugs?


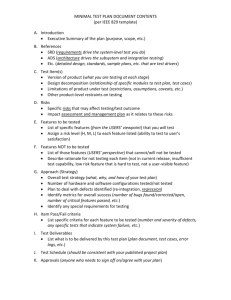

Defect Management

Form: Defect Recording Log (LOGD)

Record Defects:

Improve programming

If you can’t measure it, you can’t manage it.

If you aren’t measuring, you aren’t managing.

Reduce # of defects

Save Time & Money

Find / fix cost increases 10x with every subsequent phase
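The 10x rule of thumb compounds quickly. The sketch below shows the arithmetic only; the phase names and the 5-minute base cost are hypothetical, and a flat factor of 10 per phase is the slide's approximation rather than measured data.

```python
def fix_cost(base_cost, phases_later, factor=10):
    """Cost to find and fix a defect that escapes `phases_later` phases downstream."""
    return base_cost * factor ** phases_later


# Hypothetical phase sequence and a 5-minute fix when caught in code review.
phases = ["code review", "compile", "unit test", "system test"]
costs = {phase: fix_cost(5, i) for i, phase in enumerate(phases)}
for phase, minutes in costs.items():
    print(f"{phase:12s} {minutes:5d} min")
```

Under these assumptions, a defect that costs 5 minutes in review costs 5,000 minutes if it survives to system test.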

Types of Defects:

See LOGD form

Defect Severities:

Major, Minor, etc.


Defect Detection

Compilers catch ~90% of syntax errors

Testing efficiency varies based on:

Quality of the product entering test

Quality of test coverage

Size & Complexity of product

Field found

Customer Trials

Post General Availability

(IBM: $250M / year to fix 13,000 customer reported defects, avg.=$20K/defect)

Reviews

Code (Individual & Team)

Design

Requirements


Code Reviews

Time consuming, but 3x-5x more efficient than testing

If Done Well:

Expect 6-10 defects / engineer hour

Expect 75% - 80% defect removal efficiency

Expect 30 min / 100 LOC
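These rules of thumb can be turned into a rough review plan. The sketch below is plain arithmetic on the figures above; the function names and the 250-LOC program are hypothetical, and multiplying the defect rate by the review time is an interpretation made for this example.

```python
def review_minutes(loc, minutes_per_100_loc=30.0):
    """Planned code review time at the slide's pace of 30 min per 100 LOC."""
    return loc * minutes_per_100_loc / 100


def expected_defects_found(review_hours, low_rate=6, high_rate=10):
    """Range of defects a good review might find at 6-10 defects per engineer hour."""
    return review_hours * low_rate, review_hours * high_rate


# Hypothetical 250-LOC program.
minutes = review_minutes(250)
low, high = expected_defects_found(minutes / 60)
print(f"Plan about {minutes:.0f} min of review; expect roughly {low:.1f} to {high:.1f} defects")
```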

Personal Code Review before compiling?

• Review takes approximately the same time before or after compiling, & reviewing first can save compile time

(typically 12-15% of development time vs. 3%)

• Once programs are compiled, reviews are typically not as thorough

• Compiling is equally effective before or after the code review

• Correlation between defects found during compile & defects found in test


Code Review Checklist

Prerequisite: Coding Guidelines

Systematic checklists help ensure thorough reviews

(See PSP Table 14.1 for example)

Refine checklist based on individual performance


Sample Code Review Script

(Sample Checklist: Table 14.1 in PSP)

1. Scan program once for each item on checklist

2. Note # of defects found in ‘#’ column

(no defects, put an ‘x’)

3. For multiple objects, tally separately, same form

4. Scan entire program for unexpected / new defect types


Product Size

Size is one of the primary inputs for estimating development effort

Estimating is a skill, can be developed & improved with practice

Better if based on historical data

Improve with increased granularity

Size measures good for normalizing estimates, but beware …

Common size measures

Programs – Lines of Code (NCSL/KLOC) or Function Points

Documents – Pages

Size Estimation

Use historical data (whenever possible)

Estimate often

Compare estimates with actuals


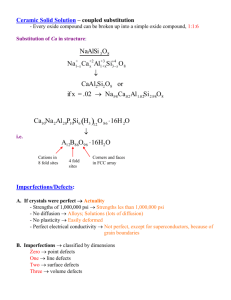

LOC Counting Exercise (Pascal)

Code Counting Guidelines

Parentheses: No statement or punctuation mark within '( ... )' is counted.

Brackets: No statement or punctuation mark within '{ ... }' is counted.

Semicolon: Every occurrence of a ';' is counted once.

Period: Every occurrence of a '.' that follows a terminating END statement is counted once.

Key Words: Every occurrence of the following selected key words is counted once: BEGIN, CASE, DO, ELSE, END, IF, RECORD, REPEAT, THEN

Special: Where there is no ';' at a line end, every statement preceding the following key words is counted once (if not already counted): ELSE, END, UNTIL

Comma: Every occurrence of a ',' in the USES or VAR portions of the program is counted once.
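To make the guidelines above concrete, here is a deliberately simplified counter in Python. It is a sketch under stated assumptions, not the exercise's official tool: it covers only the parentheses, brackets, semicolon, period and key word rules, omits the special-case rule for ELSE/END/UNTIL and the comma rule, treats delimiters as nestable, and ignores string literals that contain delimiters. The function names and the sample program are hypothetical.

```python
import re

KEYWORDS = ("BEGIN", "CASE", "DO", "ELSE", "END", "IF", "RECORD", "REPEAT", "THEN")


def strip_delimited(text, open_ch, close_ch):
    """Drop everything between the delimiters, leaving a space in its place."""
    out, depth = [], 0
    for ch in text:
        if ch == open_ch:
            depth += 1
        elif ch == close_ch and depth > 0:
            depth -= 1
            if depth == 0:
                out.append(" ")  # keep neighbouring tokens separated
        elif depth == 0:
            out.append(ch)
    return "".join(out)


def count_loc(source):
    """Count semicolons, 'END.' periods and selected key words outside {} and ()."""
    code = strip_delimited(source, "{", "}")   # comments first
    code = strip_delimited(code, "(", ")")     # then parenthesised text
    upper = code.upper()
    semicolons = upper.count(";")
    end_periods = len(re.findall(r"\bEND\s*\.", upper))
    keywords = sum(len(re.findall(rf"\b{kw}\b", upper)) for kw in KEYWORDS)
    return semicolons + end_periods + keywords


SAMPLE = """program Demo;
{ a comment; with END inside }
var a, b: integer;
begin
  if (a > b) then
    writeln('x')
  else
    writeln('y');
end.
"""

loc = count_loc(SAMPLE)
print("Counted LOC (simplified rules):", loc)
```

For the sample, the sketch counts 3 semicolons, 1 period after END and 5 key words (BEGIN, IF, THEN, ELSE, END). The full guidelines would also count the comma in the VAR portion and the statement preceding ELSE.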


Task & Schedule Planning

Schedule Development

Identify & document all tasks, assign resources & communicate to each individual

Obtain commitment dates for each task

Identify & document interdependencies between tasks, inputs to start each task & supplier of inputs

Review with entire team to identify conflicts, disagreements or misunderstandings

Checkpoints

Objectively identifiable points in a project

(Typically want at least one checkpoint per week)

Gantt Charts


Schedule Tracking & Earned Value

Milestone tracking by % complete ( 10/90 rule )

Earned Value (EV)

Assign value to each task based on estimate

Planned Value (PV) of a task = task value / Σ of all task values

EV = Σ PV for completed tasks

E.G.

Task 1 PV = 10%

Task 2 PV = 15%

On completion of task 1 & task 2, EV = 25%
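The earned value bookkeeping can be sketched as follows. The function names are hypothetical, and the task estimates (including a third task added so the plan totals 100 hours) are made up to reproduce the 10% and 15% planned values in the example.

```python
def planned_values(task_values):
    """PV of each task, in percent: task value / sum of all task values."""
    total = sum(task_values.values())
    if total <= 0:
        raise ValueError("task values must sum to a positive number")
    return {name: 100.0 * value / total for name, value in task_values.items()}


def earned_value(pv, completed_tasks):
    """EV = sum of PV over completed tasks; partly done tasks earn nothing."""
    return sum(pv[name] for name in completed_tasks)


# Hypothetical estimates in hours; Task 3 is invented to round out the plan.
estimates = {"Task 1": 10, "Task 2": 15, "Task 3": 75}
pv = planned_values(estimates)
ev = earned_value(pv, ["Task 1", "Task 2"])
print(f"PV: {pv}")
print(f"EV after Task 1 and Task 2: {ev:.0f}%")
```

Crediting value only on completion keeps the measure objective: a task that is "almost done" contributes nothing until it is finished.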
