Measuring Effectiveness-CDV

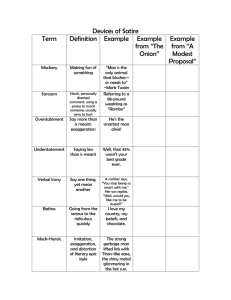

Measuring effectiveness - one foundation’s experience

M. Christine DeVita, President, The Wallace Foundation
April 11, 2005
56th Annual Conference, The Council on Foundations

Evolution of The Wallace Foundation strategy

- Created 15 years ago through bequest of DeWitt and Lila Wallace
- Through 1999, made grants of nearly $1 billion to non-profits through 100+ different programs
- Much accomplished -- but thought we could do better in fostering long-term improvements in the areas where we were working
- Board retreat in 1999 and agreement to address:
  - Root causes rather than symptoms
  - Seek improvements that outlasted grant dollars
  - Seek impact beyond our grant dollars
- Contracted dozens of initiatives down to three: education leadership, arts participation, and out-of-school time learning
- Clarified our approach
  - Support for innovation on the ground
  - Develop evidence about what works and does not
  - Share knowledge to inform the wider field

With new way of working, new challenge: measuring progress

- If it’s about catalyzing positive change in major public systems…
- Then to be effective, needed ongoing information about progress
  - For ourselves and our board
  - See how the pieces (innovation, knowledge development, communication) fit together
  - Because work is challenging and uncertain, ongoing feedback, revisions, mid-course corrections are crucial for success
- To have useful strategy discussions with board – a focus beyond individual grants – needed evidence of results

Driving the measurement goal – focus on good governance and effectiveness

- Good governance is the bedrock of effectiveness
  - Investment performance
  - Human resources
  - Expenses
- Tougher challenge: What happens after money leaves the door
- Robert Wood Johnson’s scorecard was a model
- Decision: Aggregate progress of individual grantees within each focus area; produce report for annual board planning retreat in January

First try in 2003 – a focus on ‘what happened’ was a partial success

- The idea: Compare multiple progress measures against long-term desired results
- The how: Measured progress in three categories
  - Grantee work
  - Development of useful knowledge for the field
  - Achievement of national benefits
- What we got: 23 pages of data on our focus area work – but little guidance on how to interpret
- Board comment: ‘This is very useful for internal management, but we need to know what it all means’

Second try in 2004 – not only ‘what happened’ but ‘what does it mean?’

- Fine-tuned what we measured – added sustainability
  - Working the plan
  - Institutionalizing change
  - Benefits to people
  - Developing and spreading knowledge
- Asked one or two questions in each category to assess progress
- Answered each question with ‘core finding’

How do we make ‘core finding’ visible at a glance?

- Considered three options
  - Traffic light approach: misleading
  - Scores of 1-5: too definitive
  - Eight-point progress gauges (little or none/modest/significant): much better
    - Permits an assessment
    - Allows comparisons
    - Suggests relative, rather than exact, determinations
- Board comment: ‘A big improvement. The gauges are useful.’

[Gauge graphic, labeled: No/Unclear Progress, Mixed/Modest Progress, Significant Progress]

What it looks like in practice – working the plan

Are core groups of grantees working the plan?

- Example: Our work in 12 school districts to improve public education leadership
- The question: “Is leadership development improving?”
- Core finding: Increases in number and diversity of leaders getting leadership training and placed in high-need schools – but quality of training not systematically assessed
- Evidence: Participation in training activities up almost 20 percent over previous year, with 9,500 in total trained. About 70 percent of those trained are female, 40 percent people of color
- Gauge: Mixed or modest progress

What it looks like in practice – institutionalizing change

Are sites making institutional changes?
- Example: Our work with arts organizations and state arts agencies to help them build participation
- The question: “Are grantees changing priorities to reflect participation-building?”
- Core finding: Non-Wallace spending on participation activities by our grantees declined slightly (2%). A minority of our state grantees adopted performance measures; many changed their strategic plans to focus on participation
- Evidence: Among arts organizations, 47 percent spent more on participation; 53 percent spent less. Among state arts agencies, 77 percent changed their strategic plans; 40 percent substantially increased focus on participation
- Gauge: Mixed or modest progress

What it looks like in practice – benefiting people

Are people benefiting from our work?

- Example: Our work in arts participation
- The question: “Are more people from diverse demographic backgrounds attending arts performances or exhibits at the organizations we fund?”
- Core finding: Organizations that collect demographic data reported significant attendance gains among low-income and nonwhite participants from 2002 to 2003
- Evidence: Median increases of 15% for low-income attendees (13 organizations reporting), and 11% for non-white participants (14 organizations reporting)
- Gauge: Significant progress

What it looks like in practice – producing useful knowledge

Are we producing and sharing useful knowledge?

- Example: Our work in out-of-school time learning
- The question: “Are we producing knowledge that addresses key issues relevant to improving quality?”
- Core finding: Wallace-commissioned studies contribute to understanding demand and principles behind quality. No lessons yet on our work with cities to improve systems to support quality.
- Evidence: Publication of All Work and No Play, a Public Agenda survey
- Gauge: In between mixed and significant progress

Measures helped us determine action priorities

- Finding: We don’t know quality of leader training programs
  - Action: Invest in assessment of quality
- Finding: Arts organizations are slightly cutting spending on participation-building
  - Action: Invest in helping organizations build endowments or cash reserves for this purpose
- Finding: Practitioners find Wallace-commissioned studies helpful, but modest circulation of them
  - Action: Invest in doing more to share knowledge

Lessons we’ve learned

- We needed to get the questions right
  - ‘Absent a clear conception of effectiveness, foundations cannot assess (quantitatively or otherwise) whether or not they have achieved it, and risk adopting measures first and then adjusting their conceptions of success to fit the measures.’ (Foundation Effectiveness, Francie Ostrower, The Urban Institute)
- We needed to answer both ‘what happened?’ and ‘what did it mean?’
  - Board members found gauges useful to answer the latter
- Early indication: Helps board and staff get ‘big picture’ of how we are doing
- Early indication: Helps us plan more effectively

Challenges we face

- How easy or tough to grade?
- How do we account for barriers to action?
- How do we make the process efficient?
- How do we incorporate it into ongoing strategy development?
- How do we continue to engage board on effectiveness?

Measurement – a means to the end of greater effectiveness

- Provide input to strategy development
- The search for an appropriate measure starts with a clear description of what you want to achieve
- Use measurement of results to guide decisions about allocating funds between different initiatives
- Use measurement of results to guide decisions about what to invest within an initiative
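
Illustrative sketch (not part of the original presentation): the 2004 report structure described in the slides pairs each measurement category with a question and a gauge reading, and the readings then point to action priorities. One way to picture that is as a small data structure. The class names, field names, and the `needs_attention` helper below are hypothetical, and the numeric positions assigned to the gauge labels are assumed for illustration only; the slides give the labels but not how the eight points map onto them.

```python
from dataclasses import dataclass
from enum import IntEnum


class Gauge(IntEnum):
    # Labels follow the slides; the numeric positions on the
    # eight-point scale are ASSUMED here purely for illustration.
    NO_OR_UNCLEAR = 1
    MIXED_OR_MODEST = 4
    BETWEEN_MIXED_AND_SIGNIFICANT = 6
    SIGNIFICANT = 8


@dataclass(frozen=True)
class Assessment:
    category: str    # one of the four 2004 measurement categories
    focus_area: str  # the example focus area used on the slide
    question: str    # the question asked for that category
    gauge: Gauge     # the gauge reading reported on the slide


# The four worked examples from the "in practice" slides.
ASSESSMENTS = [
    Assessment("Working the plan", "Education leadership",
               "Is leadership development improving?",
               Gauge.MIXED_OR_MODEST),
    Assessment("Institutionalizing change", "Arts participation",
               "Are grantees changing priorities to reflect "
               "participation-building?",
               Gauge.MIXED_OR_MODEST),
    Assessment("Benefits to people", "Arts participation",
               "Are more people from diverse demographic backgrounds "
               "attending arts performances or exhibits at the "
               "organizations we fund?",
               Gauge.SIGNIFICANT),
    Assessment("Developing and spreading knowledge",
               "Out-of-school time learning",
               "Are we producing knowledge that addresses key issues "
               "relevant to improving quality?",
               Gauge.BETWEEN_MIXED_AND_SIGNIFICANT),
]


def needs_attention(assessments, threshold=Gauge.SIGNIFICANT):
    """Return assessments rated below `threshold`, weakest first."""
    flagged = [a for a in assessments if a.gauge < threshold]
    # sorted() is stable, so ties keep their original slide order.
    return sorted(flagged, key=lambda a: a.gauge)


for a in needs_attention(ASSESSMENTS):
    print(f"{a.gauge.name:<30} {a.category} ({a.focus_area})")
```

Using an ordered enumeration rather than a precise score mirrors the stated reason for choosing gauges: readings can be compared with one another, but they suggest relative rather than exact determinations.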