MixedReality

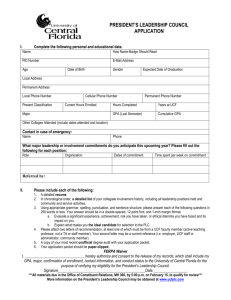

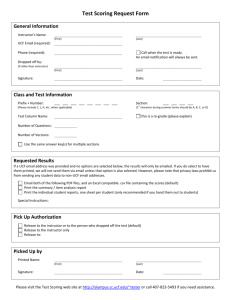

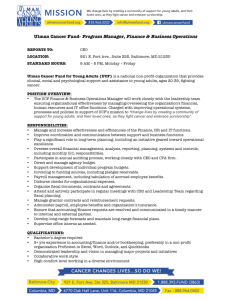

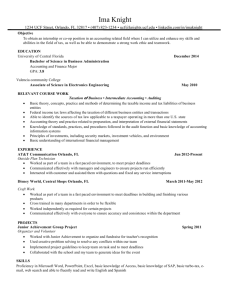

Mixed Reality

University of Central Florida
Media Convergence Laboratory @ IST
School of Electrical Engineering & Computer Science
Digital Media Department

Media Convergence Laboratory

Using mixed reality to solve real problems

The faces of MR

- Physical Reality (PR) – real world
- Virtual Reality (VR) – purely synthetic
- Augmented Reality (AR) – virtual assets registered in real world
- Augmented Virtuality (AV) – real (people, props) layered in virtual space

Mixed Reality Properties

- Blends the real and the synthetic into a single landscape & experience
- Addresses multiple senses
- Requires proper registration of real and virtual, relative to each other
- Typically requires complex behaviors of virtual characters
- Enables buy-in through imagination

Limitations along the continuum

- PR is constrained by physical space
- VR limits person-to-person expression and context of PR
- AR often limits escape from real world
- AV allows rich layering
- In general, we want to move smoothly along the MR continuum

Visual capture

- HMDs
- 3D laser
- Optical
- Video
- Mocap
- Light capture

Visual rendering

- HMDs
- Dome Screens
- Flat World
- Demo Dome
- MR Windows

Audio capture / rendering

- Surround
- Hydrophones
- Designing
- Mixing
- Holophone
- Delivery in constrained settings

Pipeline: Audio for VR/MR

- Planning & Prescripting
- Capturing
- Synthesizing, Mixing, and Mastering
- Designing Sound & Integrating
- Delivering

Special effects

- Colorkinetics SmartJack3 (USB to DMX)
- Colorkinetics JuiceBox2 / iColor MR Lights
- Gilderfluke MP3-50/40
- 4 Channel Dimmer Packs
- Pneumatic / Smoke System
- Sound Transducers (“Bass Shakers”)

Tracking Technologies

- Magnetic
- Optical
- Acoustical
- Inertial
- Vision (often with markers/features)
- Hybrid (hardware and soft/hardware)

What we currently use

- Wide field of view: 51 degrees horizontal, 37 degrees vertical
- High resolution: VGA (640 × 480)
- Weight: 286 g
- Standard camera I/O: NTSC
- Coastar (Co-Optical Axis for AR)
- IS 900 Wide Area Tracker
- InterTrax2 Wireless Tracker

Registration / Illumination

- Virtual and real must be properly placed relative to each other
- Inter-occlusion must be properly managed
- Mutual shadowing must occur, including shadows from real objects caused by virtual light
- The effects of ambient light (real and virtual) must be rendered

Story

- Virtual characters must have appropriate behaviors, reactive and proactive
- Virtual characters often need to mimic the behaviors of real characters
- Capture and replay must be provided for entertainment, after-action review, etc.

Putting it together

- Content is king
- Create experiences that have long-term impact
  - Experiential movie trailers
  - Situational awareness training
  - Creative collaboration
  - Cognitive and physical rehabilitation
  - Teacher screening and training
  - Free-choice learning
  - …
- Imagination is the most important dimension

SIGGRAPH ’03 – experiential trailer

Situational awareness

- MR MINI-MOUT

Peer Collaboration Along the Continuum

- Start in PR (look at current plant)
- Move to AR (add new equipment and new windows)
- Individual jumps out to VR to privately review designs
- Move to AV as all are surrounded by new design, but still see each other

What We Must Support

- Jointly visualized “what if?” scenarios
- Face-to-face interactions
- Alternative and often opposite POVs
- Personal and group creation

Enablers

- Tangible and tactile components
- Constructive distractions (sandbox)
- Shared display (1st & 3rd person views)
- Shared interfaces
- Scalability, interoperability & portability

Cognitive rehabilitation

- Reconfigurable kitchen
- Real items to use
- Tethered for now
- As seen through HMD
- Patient at home
- Movement for after-action review
- Patient and therapist in context of patient’s kitchen but in safety of clinic

Pre vs. Post

[Figure: pre-testing and post-testing at home; panel labels: Spoon, Make Cereal, Milk, Cereal, Bowls]

Stuttering

Teacher screening and training

- Cost to hire a teacher is $15,000
- Cost to remove can be much higher
- Turnover in inner city is 50% in 3 years
- Many last less than a year
- Children are unintended guinea pigs
- Want to select those who can succeed
- Want to help those who want to succeed

Front room – early prototype

Back room – puppeteers

Interaction with a Virtual Class

- In front of class
- At desk of student
- Personal contact
- Vision-based tracking
- AI for all but student being directly addressed

Free-choice learning

- Water’s journey
- Coming to a science center near you
- Initial focus is on the Everglades
- But, water is central to all ecologies
- Move clock backwards or forwards
- Real blends to virtual
- User-chosen policies affect what is experienced

Forest fire visualizations

- Fire spread model from Farsite
- Tree rendering from SpeedTree
- Fire and smoke visualization is ours
- Used in environmental economics & Everglades museum projects

Some basic research

- Blending of real and virtual in changing illumination
- Overcoming HMD camera limitations
- Real-time video chroma keying in presence of noise and inconsistent lighting
- LOD management (forests)
- Neuroevolution for virtual character behaviors
- Painting in HDR

Lab people characteristics

- Original director from theater and theme parks
- Lab manager from museum industry
- Chief programmer from Lego
- Content delivery people from digital media, children’s literature, audio, theater, museum, …
- Content providers from all over campus
- PhD students have interests in graphics, neuroevolution, vision, ecology, software engineering, simulation & modeling
- Undergraduates are mainly from CS and DM

Contact information

Charles E. Hughes

- Professor & Associate Director, School of Electrical Engineering & Computer Science
- Professor, Digital Media
- Director, Media Convergence Lab
- E-Mail: ceh@cs.ucf.edu
- Home Page: http://www.cs.ucf.edu/~ceh/
- Graphics Lab: http://graphics.cs.ucf.edu
- Media Convergence Lab: http://mcl.ucf.edu

Contact information

J. Michael Moshell

- Professor, Digital Media
- Professor, School of Electrical Engineering & Computer Science
- Co-founder, Media Convergence Lab
- Home Page: http://www.cs.ucf.edu/~jmmoshell/
- Media Convergence Lab: http://mcl.ucf.edu