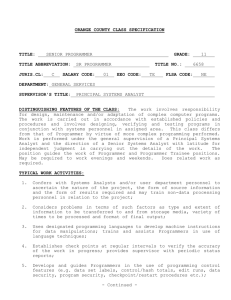

Disappearing into the code: A deadline brings

programmers to the place of no shame. Excerpt

from "Close to the Machine."

By Ellen Ullman Oct. 9, 1997

This is the first of two excerpts in

Salon 21st from Ellen Ullman's new

book, "Close to the Machine:

Technophilia and its Discontents"

(City Lights Books, $21.95, 189

pp.), an autobiographical

exploration of the lives and minds of

software engineers.

I have no idea what time it is. There

are no windows in this office and no

clock, only the blinking red LED

display of a microwave, which

flashes 12:00, 12:00, 12:00, 12:00.

Joel and I have been programming for days. We have a bug, a stubborn

demon of a bug. So the red pulse no-time feels right, like a read-out of our

brains, which have somehow synchronized themselves at the same blink

rate.

"But what if they select all the text and --"

"-- hit Delete."

"Damn! The NULL case!"

"And if not we're out of the text field and they hit space --"

"-- yeah, like for --"

"-- no parameter --"

"Hell!"

"So what if we space-pad?"

"I don't know ... Wait a minute!"

"Yeah, we could space-pad --"

"-- and do space as numeric."

"Yes! We'll call SendKey(space) to --"

"-- the numeric object."

"My God! That fixes it!"

"Yeah! That'll work if --"

"-- space is numeric!"

"-- if space is numeric!"

We lock eyes. We barely breathe. For a slim moment, we are together in a

universe where two human beings can simultaneously understand the

statement "if space is numeric!"

Joel and I started this round of debugging on Friday morning. Sometime

later, maybe Friday night, another programmer, Danny, came to work. I

suppose it must be Sunday by now because it's been a while since we've

seen my client's employees around the office. Along the way, at odd times of

day or night that have completely escaped us, we've ordered in three meals

of Chinese food, eaten six large pizzas, consumed several beers, had

innumerable bottles of fizzy water, and finished two entire bottles of wine. It

has occurred to me that if people really knew how software got written, I'm

not sure if they'd give their money to a bank or get on an airplane ever

again.

What are we working on? An artificial intelligence project to find "subversive"

talk over international phone lines? Software for the second start-up of a

Silicon Valley executive banished from his first company? A system to help

AIDS patients get services across a city? The details escape me just now. We

may be helping poor sick people or tuning a set of low-level routines to verify

bits on a distributed database protocol -- I don't care. I should care; in

another part of my being -- later, perhaps when we emerge from this room

full of computers -- I will care very much why and for whom and for what

purpose I am writing software. But just now: no. I have passed through a

membrane where the real world and its uses no longer matter. I am a

software engineer, an independent contractor working for a department of a

city government. I've hired Joel and three other programmers to work with

me. Down the hall is Danny, a slim guy in wire-rimmed glasses who comes to

work with a big, wire-haired dog. Across the bay in his converted backyard

shed is Mark, who works on the database. Somewhere, probably asleep by

now, is Bill the network guy. Right now, there are only two things in the

universe that matter to us. One, we have some bad bugs to fix. Two, we're

supposed to install the system on Monday, which I think is tomorrow.

"Oh no, no!" moans Joel, who is slumped over his keyboard. "No-o-o-o." It

comes out in a long wail. It has the sound of lost love, lifetime regret. We've

both been programmers long enough to know that we are at that place. If we

find one more serious problem we can't solve right away, we will not make it.

We won't install. We'll go the terrible, familiar way of all software: we'll be

late.

"No, no, no, no. What if the members of the set start with spaces. Oh, God.

It won't work."

He is as near to naked despair as has ever been shown to me by anyone not

in a film. Here, in that place, we have no shame. He has seen me sleeping on

the floor, drooling. We have both seen Danny's puffy, white midsection -- young as he is, it's a pity -- when he stripped to his underwear in the heat of

the machine room. I have seen Joel's dandruff, light coating of cat fur on his

clothes, noticed things about his body I should not. And I'm sure he's seen

my sticky hair, noticed how dull I look without make-up, caught sight of

other details too intimate to mention. Still, none of this matters anymore.

Our bodies were abandoned long ago, reduced to hunger and sleeplessness

and the ravages of sitting for hours at a keyboard and a mouse. Our physical

selves have been battered away. Now we know each other in one way and

one way only: the code.

Besides, I know I can now give him pleasure of an order which is rare in any

life: I am about to save him from despair.

"No problem," I say evenly. I put my hand on his shoulder, intending a

gesture of reassurance. "The parameters never start with a space."

It is just as I hoped. His despair vanishes. He becomes electric, turns to the

keyboard and begins to type at a rapid speed. Now he is gone from me. He is

disappearing into the code -- now that he knows it will work, now that I have

reassured him that, in our universe, the one we created together, space can

indeed be forever and reliably numeric.

The connection, the shared thought-stream, is cut. It has all the frustration

of being abandoned by a lover just before climax. I know this is not physical

love. He is too young, he works for me; he's a man and I've been tending

toward women; in any case, he's too prim and business-schooled for my

tastes. I know this sensation is not real attraction: it is only the spillover, the

excess charge, of the mind back into the abandoned body. Only. Ha. This is

another real-world thing that does not matter. My entire self wants to melt

into this brilliant, electric being who has shared his mind with me for twenty

seconds.

Restless, I go into the next room where Danny is slouched at his keyboard.

The big, wire-haired dog growls at me. Danny looks up, scowls like his dog,

then goes back to typing. I am the designer of this system, his boss on this

project. But he's not even trying to hide his contempt. Normal programmer, I

think. He has 15 windows full of code open on his desktop. He has

overpopulated his eyes, thoughts, imagination. He is drowning in bugs and I

know I could help him, but he wants me dead just at the moment. I am the

last-straw irritant. Talking: Shit! What the hell is wrong with me? Why would

I want to talk to him? Can't I see that his stack is overflowing?

"Joel may have the overlapping controls working," I say.

"Oh, yeah?" He doesn't look up.

"He's been using me as a programming dummy," I say. "Do you want to talk

me through the navigation errors?" Navigation errors: bad. You click to go

somewhere but get somewhere else. Very, very bad.

"What?" He pretends not to hear me.

"Navigation errors. How are they?"

"I'm working on them." Huge, hateful scowl. Contempt that one human being

should not express to another under any circumstances. Hostility that should

kill me, if I were not used to it, familiar with it, practiced in receiving it.

Besides, we are at that place. I know that this hateful programmer is all I

have between me and the navigation bug. "I'll come back later," I say.

Later: how much later can it get? Daylight can't be far off now. This small

shoal of pre-installation madness is washing away even as I wander back

down the hall to Joel.

"Yes! It's working!" says Joel, hearing my approach.

He looks up at me. "You were right," he says. The ultimate one programmer

can say to another, the accolade given so rarely as to be almost unknown in

our species. He looks right at me as he says it: "You were right. As always."

This is beyond rare. Right: the thing a programmer desires above, beyond

all. As always: unspeakable, incalculable gift.

"I could not have been right without you," I say. This is true beyond

question. "I only opened the door. You figured out how to go through."

I immediately see a certain perfume advertisement: A man holding a violin

embraces a woman at a piano. I want to be that ad. I want efficacies of

reality to vanish, and I want to be the man with violin, my programmer to be

the woman at the piano. As in the ad, I want the teacher to interrupt the

lesson and embrace the student. I want the rules to be broken. Tabu. That is

the name of the perfume. I want to do what is taboo. I am the boss, the

senior, the employer, the person in charge. So I must not touch him. It is all

taboo. Still --

Danny appears in the doorway.

"The navigation bug is fixed. I'm going home."

"I'll test it --"

"It's fixed."

He leaves.

It is sometime in the early morning. Joel and I are not sure if the night guard

is still on duty. If we leave, we may not get back up the elevator. We leave

anyway.

We find ourselves on the street in a light drizzle. He has on a raincoat, one

that he usually wears over his too-prim, too-straight, good-biz-school suits. I

have on a second-hand-store leather bomber jacket, black beret, boots.

Someone walking by might wonder what we were doing together at this still-dark hour of the morning.

"Goodnight," I say. We're still charged with thought energy. I don't dare

extend my hand to shake his.

"Goodnight," he says.

We stand awkwardly for two beats more. "This will sound strange," he says,

"but I hope I don't see you tomorrow."

We stare at each other, still drifting in the wake of our shared mind-stream. I

know exactly what he means. We will only see each other tomorrow if I find

a really bad bug.

"Not strange at all," I say, "I hope I don't see you either."

"THE OUTWARD MANIFESTATION OF THE MESSINESS OF HUMAN THOUGHT"

The project begins in the programmer's mind with the beauty of a crystal. I

remember the feel of a system at the early stages of programming, when the

knowledge I am to represent in code seems lovely in its structuredness. For a

time, the world is a calm, mathematical place. Human and machine seem

attuned to a cut-diamond-like state of grace. Once in my life I tried

methamphetamine: That speed high is the only state that approximates the

feel of a project at its inception. Yes, I understand. Yes, it can be done. Yes,

how straightforward. Oh yes. I see.

Then something happens. As the months of coding go on, the irregularities of

human thinking start to emerge. You write some code, and suddenly there

are dark, unspecified areas. All the pages of careful design documents, and

still, between the sentences, something is missing. Human thinking can skip

over a great deal, leap over small misunderstandings, can contain ifs and

buts in untroubled corners of the mind. But the machine has no corners.

Despite all the attempts to see the computer as a brain, the machine has no

foreground or background. It can be programmed to behave as if it were

working with uncertainty, but -- underneath, at the code, at the circuits -- it

cannot simultaneously do something and withhold for later something that

remains unknown. In the painstaking working out of the specification, line by

code line, the programmer confronts an awful, inevitable truth: the ways of

human and machine understanding are disjunct.

Now begins a process of frustration. The programmer goes back to the

analysts with questions, the analysts to the users, the users to their

managers, the managers back to the analysts, the analysts to the

programmers. It turns out that some things are just not understood. No one

knows the answers to some questions. Or worse, there are too many

answers. A long list of exceptional situations is revealed, things that occur

very rarely but that occur all the same. Should these be programmed? Yes,

of course. How else will the system do the work human beings need to

accomplish? Details and exceptions accumulate. Soon the beautiful crystal

must be recut. This lovely edge and that are lost. What began in a state of

grace soon reveals itself to be a jumble. The human mind, as it turns out, is

messy.

Gone is the calm, mathematical world. The clear, clean methedrine high is

over. The whole endeavor has become a struggle against disorder. A battle of

wills. A testing of endurance. Requirements muddle up; changes are needed

immediately. Meanwhile, no one has changed the system deadline. The

programmer, who needs clarity, who must talk all day to a machine that

demands declarations, hunkers down into a low-grade annoyance. It is here

that the stereotype of the programmer, sitting in a dim room, growling from

behind Coke cans, has its origins. The disorder of the desk, the floor; the

yellow post-it notes everywhere; the white boards covered with scrawl: all

this is the outward manifestation of the messiness of human thought. The

messiness cannot go into the program; it piles up around the programmer.

Soon the programmer has no choice but to retreat into some private interior

space, closer to the machine, where things can be accomplished. The

machine begins to seem friendlier than the analysts, the users, the

managers. The real-world reflection of the program -- who cares anymore?

Guide an X-ray machine or target a missile; print a budget or a dossier; run

a city subway or a disk-drive read/write arm: it all begins to blur. The system

has crossed the membrane -- the great filter of logic, instruction by

instruction -- where it has been cleansed of its linkages to actual human life.

The goal now is not whatever all the analysts first set out to do; the goal

becomes the creation of the system itself. Any ethics or morals or second

thoughts, any questions or muddles or exceptions, all dissolve into a junky

Nike-mind: Just do it. If I just sit here and code, you think, I can make

something run. When the humans come back to talk changes, I can just run

the program. Show them: Here. Look at this. See? This is not just talk. This

runs. Whatever you might say, whatever the consequences, all you have are

words and what I have is this, this thing I've built, this operational system.

Talk all you want, but this thing here: it works.

SALON | Oct. 9, 1997

Ellen Ullman is a software engineer who lives in San Francisco and writes

about her profession.

sliced off by the cutting edge

http://www.salonmagazine.com/21st/feature/1997/10/cov_16ullman.html

Sliced off by the cutting edge: It's impossible for programmers to keep up with every trend even when

they're eager and willing. What happens when they despair? Excerpt from "Close to the Machine."

By Ellen Ullman Oct. 16, 1997

IT'S IMPOSSIBLE FOR SOFTWARE ENGINEERS TO KEEP UP WITH

EVERY NEW TECHNO-TREND EVEN WHEN THEY'RE EAGER AND

WILLING. BUT WHAT HAPPENS WHEN THEY START TO DESPAIR?

This is the second of two excerpts in Salon 21st from Ellen Ullman's new book, "Close to the

Machine: Technophilia and its Discontents" (City Lights Books, $21.95, 189 pages), an

autobiographical exploration of the lives and minds of software engineers.

It had to happen to me sometime: sooner or later I would have to lose sight

of the cutting edge. That moment every technical person fears -- the fall into

knowledge exhaustion, obsolescence, techno-fuddy-duddyism -- there was

no reason to think I could escape it forever. Still, I didn't expect it so soon.

And not there: not at the AIDS project I'd been developing, where I fancied

myself the very deliverer of high technology to the masses.

It happened in the way of all true-life humiliations: when you think you're

better than the people around you. I had decided to leave the project; I

agreed to help find another consultant, train another team. There I was,

finding my own replacement. I called a woman I thought was capable,

experienced -- and my junior. I thought I was doing her a favor; I thought

she should be grateful.

She arrived with an entourage of eight, a group she had described on the

telephone as "Internet heavy-hitters from Palo Alto." They were all in their

early 30s. The men had excellent briefcases, wore beautiful suits, and each

breast pocket bulged ever so slightly with what was later revealed to be a

tiny, exquisite cellular phone. One young man was so blonde, so pale-eyed,

so perfectly white, he seemed to have stepped out of a propaganda film for

National Socialism. Next to him was a woman with blonde frosted hair,

chunky real-gold bracelets, red nails, and a short skirt, whom I took for a

marketing type; she turned out to be in charge of "physical network

configuration." This group strutted in with all the fresh-faced drive of techno-capitalism, took their seats beneath the AIDS prevention posters ("Warriors

wear shields with men and women!" "I take this condom everywhere I bring

my penis!"), and began their sales presentation.

They were pushing an intranet. This is a system using all the tools of the

Internet -- Web browser, net server -- but on a private network. It is all the

rage, it is cool, it is what everyone is talking about. It is the future and, as

the woman leading the group made clear, what I have been doing is the

past. "An old-style enterprise system" is what she called the application as I

had built it, "a classic."

My client was immediately awed by their wealth, stunned silent by their self-assurance. The last interviewee had been a nervous man in an ill-fitting suit,

shirt washed but not quite ironed, collar crumpled over shiny polyester tie.

Now here came these smooth new visitors, with their "physical network

configuration" specialist, their security expert, their application designer, and

their "technology paradigm." And they came with an attitude -- the AIDS

project would be lucky to have them.

It was not only their youth and high-IQ arrogance that bothered me. It

wasn't just their unbelievable condescension ("For your edification, ma'am,"

said one slouch-suited young man by way of beginning an answer to one of

my questions). No, this was common enough. I'd seen it all before,

everywhere, and I'd see it again in the next software engineer I'd meet.

What bothered me was just that: the ordinariness of it. From the hostile

scowl of my own programmer to the hard-driving egos of these "Internet

heavy-hitters": normal as pie. There they were on the cutting edge of our

profession, and their arrogance was as natural as breathing. And in those

slow moments while their vision of the future application was sketched across

the white boards -- intranet, Internet, cool, hip, and happening -- I knew I

had utterly and completely lost that arrogance in myself.

I missed it. Suddenly and inexplicably, I wanted my arrogance back. I

wanted to go back to the time when I thought that, if I tinkered a bit, I could

make anything work. That I could learn anything, in no time, and be good at

it. The arrogance is a job requirement. It is the confidence-builder that lets

you keep walking toward the thin cutting edge. It's what lets you forget that

your knowledge will be old in a year, you've never seen this new technology

before, you have only a dim understanding of what you're doing, but -- hey,

this is fun -- and who cares since you'll figure it all out somehow.

But the voice that came out of me was not having fun.

"These intranet tools aren't proven," I found myself saying. "They're all

release 1.0 -- if that. Most are in beta test. And how long have you been

doing this? What -- under a year? Exactly how many intranets have you

implemented successfully?"

My objections were real. The whole idea wasn't a year old. The tools weren't

proven. New versions of everything were being released almost as we spoke.

And these heavy-hitters had maybe done one complete intranet job before

this -- maybe. But in the past none of this would have bothered me. I would

have seen it as part of the usual engineering trade-offs, get something, give

up something else. And the lure of the new would have been irresistible: the

next cover to take off, the next black box to open.

But now, no. I didn't want to take off any covers. I didn't want to confront

any more unknowns. I simply felt exhausted. I didn't want to learn the

intranet, I wanted it to be a bad idea, and I wanted it all just to go away.

"And what about network traffic?" I asked. "Won't this generate a lot of

network traffic? Aren't you optimizing for the wrong resource? I mean,

memory and disk on the desktop are cheap, but the network bandwidth is

still scarce and expensive."

More good objections, more justifications for exhaustion.

"And intranets are good when the content changes frequently -- catalogs,

news, that kind of stuff. This is a stable application. The dataset won't

change but once a year."

Oh, Ellen, I was thinking, What a great fake you are. I was thinking this

because, even as I was raising such excellent issues, I knew it was all beside

the point. What I was really thinking was: I have never written an intranet

program in my life, I have never hacked on one, I have never even seen one.

What I was really feeling was panic.

I'd seen other old programmers act like this, get obstructionist and hostile in

the face of their new-found obsolescence, and there I was, practically

growing an old guy's gut on the spot. But the role had a certain momentum,

and once I'd stepped on the path of the old programmer, there seemed to be

no way back. "And what happens after you leave?" I asked. "There just

aren't that many intranet experts out there. And they're expensive. Do you

really think this technology is appropriate for this client?"

"Well," answered the woman I'd invited, the one I'd thought of as my junior,

the one I was doing a favor, "you know, there are the usual engineering

trade-offs."

Engineering trade-offs. Right answer. Just what I would have said once.

"And besides," said the woman surrounded by her Internet heavy-hitters,

"like it or not, this is what will be happening in the future."

The future. Right again. The new: irresistible, like it or not.

But I didn't like it. I was parting ways with it. And exactly at that moment, I

had a glimpse of the great, elusive cutting edge of technology. I was

surprised to see that it looked like a giant cosmic Frisbee. It was yellow,

rotating at a great rate, and was slicing off into the universe, away from me.

OLD PROGRAMMING LANGUAGES ARE LIKE OLD LOVERS

I learned to program a computer in 1971; my first programming job came in

1978. Since then, I have taught myself six higher-level programming

languages, three assemblers, two data-retrieval languages, eight job-processing languages, seventeen scripting languages, ten types of macros,

two object-definition languages, sixty-eight programming-library interfaces,

five varieties of networks, and eight operating environments -- fifteen, if you

cross-multiply the distinct combinations of operating systems and networks. I

don't think this makes me particularly unusual. Given the rate of change in

computing, anyone who's been around for a while could probably make a list

like this.

This process of remembering technologies is a little like trying to remember

all your lovers: you have to root around in the past and wonder, Let's see.

Have I missed anybody? In some ways, my personal life has made me

uniquely suited to the technical life. I'm a dedicated serial monogamist -- long periods of intense engagement punctuated by times of great

restlessness and searching. As hard as this may be on the emotions, it is a

good profile for technology.

I've managed to stay in a perpetual state of learning only by maintaining

what I think of as a posture of ignorant humility. This humility is as

mandatory as arrogance. Knowing an IBM mainframe -- knowing it as you

would a person, with all its good qualities and deficiencies, knowledge gained

in years of slow anxious probing -- is no use at all when you sit down for the

first time in front of a UNIX machine. It is sobering to be a senior

programmer and not know how to log on.

There is only one way to deal with this humiliation: bow your head, let go of

the idea that you know anything, and ask politely of this new machine, "How

do you wish to be operated?" If you accept your ignorance, if you really

admit to yourself that everything you know is now useless, the new machine

will be good to you and tell you: here is how to operate me.

Once it tells you, your single days are over. You are involved again. Now you

can be arrogant again. Now you must be arrogant: you must believe you can

come to know this new place as well as the old -- no, better. You must now

dedicate yourself to that deep slow probing, that patience and frustration,

the anxious intimacy of a new technical relationship. You must give yourself

over wholly to this: you must believe this is your last lover.

I have known programmers who managed to stay with one or two operating

systems their entire careers -- solid married folks, if you will. But, sorry to

say, our world has very little use for them. Learn it, do it, learn another:

that's the best way. UNIX programmers used to scoff at COBOL drones, stuck

year by year in the wasteland of corporate mainframes. Then, just last year,

UNIX became old-fashioned, Windows NT is now the new environment, and

it's time to move on again. Don't get comfortable, don't get too attached,

don't get married. Fidelity in technology is not even desirable. Loyalty to one

system is career-death. Is it any wonder that programmers make such good

social libertarians?

Every Monday morning, three trade weeklies come sliding through my mail

slot. I've come to dread Mondays, not for the return to work but for these fat

loads of newness piled on the floor waiting for me. I cannot possibly read all

those pages. But then again, I absolutely must know what's in them.

Somewhere in that pile is what I must know and what I must forget.

Somewhere, if I can only see it, is the outline of the future.

Once a year, I renew my subscription to the Microsoft Professional Developer

Network. And so an inundation of CD-ROMs continues. Quarterly, seasonally,

monthly, whenever -- with an odd and relentless periodicity -- UPS shows up

at my door with a new stack of disks. New versions of operating systems,

libraries, tools -- everything you need to know to keep pace with Microsoft.

The disks are barely loaded before I turn around and UPS is back again: a

new stack of disks, another load of newness.

Every month come the hardware and software catalogs: the Black Box

networking book, five hundred pages of black-housed components turned

around to show the back panel; PCs Compleat, with its luscious just-out

laptops; and my favorite, the Programmer's Paradise, on the cover a cartoon

guy in wild bathing trunks sitting under a palm tree. He is all alone on a tiny

desert island but he is happy: he is surrounded by boxes of the latest

programming tools.

Then there is the Microsoft Systems Journal, a monthly that evangelizes the

Microsoft way while handing out free code samples. The Economist, to

remind myself how my libertarian colleagues see the world. Upside, Wired,

The Red Herring: the People magazines of technology. The daily Times and

Wall Street Journal. And then, as if all this periodical literature were not

enough, as if I weren't already drowning in information -- here comes the

Web. Suddenly, monthly updates are unthinkable, weekly stories laughable,

daily postings almost passé. "If you aren't updating three times a day, you're

not realizing the potential of the medium," said one pundit, complaining

about an on-line journal that was refreshing its content -- shocking! -- only

once a day.

There was a time when all this newness was exhilarating. I would pore over

the trade weeklies, tearing out pages, saving the clips in great messy piles. I

ate my meals reading catalogs. I pestered nice young men taking orders on

the other end of 800 phone lines; I learned their names and they mine. A

manual for a new programming tool would call out to me like a fussy, rustling

baby from inside its wrapping.

What has happened to me that I just feel tired? The weeklies come, and I

barely flip the pages before throwing them on the recycle pile. The new

catalogs come and I just put them on the shelf. The invoice for the

Professional Developer Subscription just came from Microsoft: I'm thinking of

doing the unthinkable and not renewing.

I'm watching the great, spinning, cutting edge slice away from me -- and I'm

just watching. I'm almost fascinated by my own self-destructiveness. I know

the longer I do nothing, the harder it will be to get back. Technologic time is

accelerated, like the lives of very large dogs: six months of inattention might

as well be years. Yet I'm doing nothing anyway. For the first time in nineteen

years, the new has no hold on me. This terrifies me. It also makes me feel

buoyant and light.

SALON | Oct. 16, 1997

Ellen Ullman is a software engineer who lives in San Francisco and writes

about her profession.

Elegance & Entropy

http://www.salonmagazine.com/21st/feature/1997/10/09interview.html

Elegance and entropy: An interview with Ellen Ullman.

By Scott Rosenberg

Oct. 9, 1997

Like thousands of software

engineers, Ellen Ullman writes code.

Unlike her colleagues, she also

writes about what it's like to write

code. In a 1995 essay titled "Out of

Time: Reflections on the

Programming Life," included in the

collection "Resisting the Virtual Life,"

Ullman got inside the heads of

professional programmers -- and

introduced a lot of readers to an

intricate new world. "The

programming life," as Ullman depicts

it, is a constant tug of war between the computer's demand for exactitude

and the entropic chaos of real life.

In her new book, "Close to the Machine," which is being excerpted in Salon

21st this week and next, she tells autobiographical stories from inside today's

software-engineering beast -- everything from the trials of programming a

Web service for AIDS patients and clinics to a romance with a shaggy rebel

cryptographer who wants to finance his anonymous global banking system by

running an offshore porn server.

I talked with Ullman in the brick-lined downtown San Francisco loft she

shares with a sleek cat named Sadie and four well-hidden computers.

In "Close to the Machine" you tell the tale of a boss at a small

company whose new computer system lets him monitor the work of a

loyal secretary -- something he'd never before thought necessary.

You call that "the system infecting the user." But a lot of people view

the computer as a neutral tool.

Tools are not neutral. The computer is not a neutral tool. A hammer may or

may not be a neutral tool: you know, smash a skull or build a house. But we

use a hammer to be a hammer. A computer is a general-purpose machine

with which we engage to do some of our deepest thinking and analyzing. This

tool brings with it assumptions about structuredness, about defined

interfaces being better. Computers abhor error.

I hate the new word processors that want to tell you, as you're typing, that

you made a mistake. I have to turn off all that crap. It's like, shut up -- I'm

thinking now. I will worry about that sort of error later. I'm a human being. I

can still read this, even though it's wrong. You stupid machine, the fact that

you can't is irrelevant to me. Abhorring error is not necessarily positive.

It's good to forgive error.

And we learn through error. We're sense-making creatures who make sense

out of chaos, out of error. We zoom around in a sea of half-understood and

half-known things. And so it affects us to have more and more of our life

involved with very authoritarian, error-unforgiving tools. I think people who

work around computers get more and more impatient. Programmers go into

meetings and they hate meetings. If someone meanders around and doesn't

get to the point, they'll say, what's your point!?! I think the more time you

spend around computers, the more you get impatient with other people,

impatient with their errors, you get impatient with your own errors.

Machines are also wonderful. I enjoy sitting there for hours, and there's a

reason it's so deeply engaging. It's very satisfying to us to have this thing

cycling back at you and paying attention to you, not interrupting you. But it's

not an unalloyed good -- that's my point. It's changing our way of life deeply,

like the automobile did, and in ways we don't yet understand.

When people talk about computers, they fall into two groups: the true

believers -- you know, technology will save us, new human beings are being

created. For me, as an ex-Communist, let me tell you, when people start

talking about a new human being, I get really scared. Please! We're having

trouble enough with this one! And on the other hand, other people think

computers are horrible and are ruining our lives. I'm somewhere in the

middle. I mean, we can't live without this any more. Try to imagine modern

banking. Try to imagine your life in the developed world without computers.

Not possible.

There's a lot of romanticism, in places like Wired magazine, about

digital technology evolving its own messy, chaotic systems. In "Close

to the Machine," you lean more to the view that computer systems

are rigid and pristine.

From the standpoint of what one engineer can handle, yes. You can't

program outside of the box, and things that happen that were not anticipated

by the programmer are called design flaws or bugs. A bug is something that

a programmer is supposed to do but doesn't, and a design flaw is something

that the programmer doesn't even think about.

So it's a very beautifully structured world. In computing, when something

works, engineers talk about elegant software. So in one sense, we're talking

about something very structured and reductive. And in another sense, we're

really not, you're talking about elegance, and a notion of beauty.

What makes a piece of software code elegant?

I'll try to speak by analogy. Physicists right now are not happy about their

model of the world because it seems too complicated, there are too many

exceptions. Part of the notion of elegance is that it's compact. And that out of

something very simple a great deal of complexity can grow -- that's why the

notion of fractals is very appealing. You take a very, very simple idea and it

enables tremendous complexity to happen.

So from the standpoint of a small group of engineers, you're striving for

something that's structured and lovely in its structuredness. I don't want to

make too much of this, because with most engineers there's a great deal of

ego, you want to write the most lines of code, more than anybody else,

there's a kind of macho.

Yet the more elegant program does the same thing in fewer lines.

When you're around really serious professional programmers, this code

jockey stuff really falls away, and there is a recognition that the best

programmers spend a lot of time thinking first, and working out the

algorithms on paper or in their heads, at a white board, walking. You dream

about it, you work it out -- you don't just sit there and pump out code. I've

worked with a lot of people who pumped out code, and it's frightening. Two

weeks later, you ask them about it, and it's like it never happened to them.

So the motive of a true program is a certain compact beauty and elegance

and structuredness. But the reality of programming is that programs get old

and they accumulate code over the years -- that's the only word I can use to

describe it, they accumulate modifications. So old programs, after they've

been in use 10 or 15 years, no one person understands them. And there is a

kind of madness in dealing with this.

And with the new systems we're creating, even the ones that are running

now, there's a tremendous amount of complexity. Right now, if you talk to

people who try to run real-world systems, it is a struggle against entropy.

They're always coming apart. And people don't really talk about that part

much. The real-world experience of system managers is a kind of permanent

state of emergency. Whereas programmers are kind of detached for a time

and go into this floating space, networking people live in this perpetual now.

It's the world of pagers.

They've got things that buzz them. They're ready to be gone in a minute. To

try to keep the network running is very much to be at the edge of that

entropy where things really don't want to keep running. There's nothing

smooth, there's nothing elegant. It's ugly, full of patches, let's try this, let's

try that -- very tinker-y.

That's such a different picture from the rosy vision of the Net as this

indestructible, perfectly designed organism.

One of the things I'm really glad about the success of the Web is that more

people now are being exposed to the true reality of working on a network.

Things get slowed up. It doesn't answer you; it's down. How often can't you

get to your e-mail?

I couldn't send e-mail to you to tell you I'd be late for this interview.

Some little server out there, your SMTP server, it's busy, it's gone, it's not

happy. Exactly. And this is a permanent state of affairs. I don't see anything

sinister or horrible about this, but it is more and more complex.

The main reason I wrote "Close to the Machine" was not just to talk about

my life. Of course, everyone just wants to talk about themselves. But I have

this feeling that imbedded in this technology is an implicit way of life. And

that we programmers who are creating it are imbedding our way of being in

it.

Look at the obvious: groupware. That doesn't mean software that helps

people get together and have a meeting. It means, help people have access

to each other when they're far apart, so they don't have to get into a room,

so they don't have to have a meeting. They don't have to speak directly.

That is a programmer's idea of heaven. You can have a machine interface

that takes care of human interaction. And a defined interface. Programmers

like defined interfaces between things. Software, by its nature, creates

defined interfaces. It homogenizes, of necessity. And some of that's just

plain damn useful. I'm happy when I go to the bank and stick a card in and

they give me a wad of money. That's great.

For someone who is so immersed in the world of technology, you

take an unusually critical view of the Net.

The Net is represented as this very democratic tool because everyone's a

potential publisher. And to an extent that's true. However, it is not so easy to

put a Web site up. Technically, it's getting more and more difficult,

depending on what you want to do. It's not as if the average person wants to

put up a Web site.

The main thing that I notice is the distinction between something like a word

processor and a spreadsheet, and a Web browser. From the user's point of

view there's a completely different existential stance. The spreadsheet and

the word processor are pure context -- they just provide a structure in which

human beings can express their knowledge. And it's presumed that the

information resides in the person. These are tools that help you express,

analyze and explore very complex things -- things that you are presumed

already to know. The spreadsheet can be very simple, where essentially

you're just typing things in and it helps you to format them in columns. Or it

can be a tool for really fantastically complicated analysis. You can grow with

it, your information grows with it -- it's the ideal human-computer tool.

With a Web browser, this situation is completely reversed. The Web is all

content with very limited context. With the Web, all the information is on the

system somewhere -- it's not even on your computer. It's out there -- it

belongs to the system. More and more now even the programs don't reside

with you -- that's the notion of the thin client, the NetPC and Java.

So the whole sphere of control has shifted from the human being, the

individual sitting there trying to figure out something, to using stuff that the

system owns and looking for things that are on the system. Everyone starts

at the same level and pretty much stays there in a permanent state of

babyhood. Click. Forward. Back. Unless you get into publishing, which is a

huge leap that most people won't make.

But the Net is not one central system, it's a million systems. That

creates a lot of the confusion -- but the advantage is that there's

such a vast and diverse variety of material available.

My criticism, I suppose, is not of the Net but of the browser as an interface,

as a human tool. I'm looking at it as a piece of software that I have to use.

This is the only way I can interact with all this stuff. Some of what's out there

may be good, some of it may not be. But I don't have the tools to analyze it.

I can print it -- that's it. I can search for occurrences of a certain word. I can

form a link to it. I can go forward and back. Am I missing anything here?

What do you mean by "analyzing" a Web page?

At this point, when you put something up on the Web, you don't have to say

who put it up there, you don't have to say where it really lives, the author

could be anyone. Which is supposedly its freedom. But as a user, I'm

essentially in a position where everyone can represent themselves to me

however they wish. I don't know who I'm talking to. I don't know if this is

something that will lead to interesting conversation and worthwhile

information -- or if it's a loony toon and a waste of my time.

I'm not a big control freak, I don't really know who would administer this or

how it would be. But I would just like to see that a Web page had certain

parameters that are required: where it is and whose it is. I would like to have

some way in which I could have some notion of who I'm talking to. A digital

signature on the other end.

That's feasible today.

Yeah, but the ethos of the Net is that everything should be free, everyone

should do whatever they want -- you're creating this marketplace of ideas I

can pick and choose in. But if I don't have the tools to pick and choose and I

don't know who I'm talking to, essentially I'm walking into a room and I have

blindfolds on.

The political ethos of the Net, its extreme libertarianism -- that's another

thing that comes out of the programming social world. You know, whoever's

the most technically able can do whatever they want. It's really not

"everyone can do whatever they want"; it's that the more technically able

you are, the more you should be able to do. And that's the way it is online to

me. It is a kind of meritocracy in a very narrow sense.

How did you first become a programmer?

I've had one foot in the world that speaks English and one foot in the world

of technology almost my whole life. I majored in English, minored in biology.

The minute I got out of college, I worked as a videographer. A side note:

Those were the days when we thought that giving everybody a Portapak -- a

portable video machine -- would change the world. So I'd seen one technical

revolution. And it made me very skeptical about the idea that everyone

having a PC would change the world.

I was doing some animation stuff, and I saw people doing computer-aided

animation and found it fascinating. I asked them how they did it, and they

said, well, do you know Fortran? I wasn't thinking of becoming a professional

programmer. This was 1971, 1972. The state of the art was primitive, and I

didn't become a programmer at that point. I did photography for a living; I

was a media technician. I moved out here [to the Bay Area], pumped gas,

answered telephones for a living -- I mean, talk about professions that have

been technologically made obsolete. I liked being a switchboard operator, it

passed the time very nicely.

That's a different way of being close to a machine.

The machine is very simple -- it's the people who don't cooperate. Anyway, I

did socially useful media for women's groups, women's radio programs,

photography shows. I came of age doing media at a time when we thought it

should be imbedded in social action. And then I got more involved in political

work, in lesbian politics and women's politics, and then eventually got tired of

the splits -- people were always dividing. So I had some friends who had

joined a Communist formation, and it was the time to sort of put up or shut

up. I joined up. Of course, I did technical and media stuff for them -- I was

responsible for their graphic-arts darkroom and laying out their newspaper.

The inevitable part of my life is to be involved with machines.

In "Close to the Machine" you talk about the parallels between being

a programmer and being a Communist.

It's a very mechanistic way of thinking, very intolerant of error -- and when

things got confusing we tried to move closer to the machine, we tried to

block out notions of human complexity. You tried to turn yourself into this

machine. We were supposed to be proud of being cogs, and really

suppressing and banishing all that messy, wet chemical life that we're a part

of.

Now, supposedly, only a cadre was supposed to go through this; the rest of

humanity wasn't. Eventually, you realize, if the world is being remade by

these people who've suppressed all these other parts of themselves, when

they're done with all their decades and decades of struggle, will they

remember how to be a complicated human being? That can happen to you if

you do programming. So I quit.

I actually went through a very serious and very damaging expulsion. And I

became a professional programmer because of that expulsion. I say in the

book, I was promoted very rapidly because my employer was amazed at my

ability to work hundreds of hours a week without complaining. But I had

been rather damaged by that year. I spent months just sitting there working

symbolic logic proofs. That was all I could do. It was the only way I could

calm myself down and try to get my brain back.

Programming was both a symptom of how crazy I was and also a great

solace. So then I got a job, and I was promoted in a minute, and they

wanted to make me a product manager, I was a product manager for a

minute, then I said no, I can't stand it, I want to program. I was a

programmer, then I was put in charge of designing a new system, then they

wanted to make me a manager again.

This has been the history of my life. Eventually I became a consultant,

because I don't want to manage programmers.

To what extent are programming languages actually languages? Can

you look at someone's code and tell what kind of person wrote this?

I can tell what kind of programmer they were, but not what type of person

they were. Code is not expressive in that way. It doesn't allow for enough

variation. It must conform to very strict rules. But programmers have styles,

they definitely have styles. Some people write very compact code. Compact

and elegant. Also, does one comment the code, and how generous are those

comments? You can get a sense of someone's generosity. Are they writing

the code with the knowledge that someone else has to come by here, or not?

Good code is written with the idea that I'll be long gone and five years from

now it, or some remnant, will still be running, and I don't want someone just

hacking it to pieces. You sort of protect your code, by leaving clear

comments.

Another rule of thumb is that all programmers hate whoever came before

them. You can't help it. There's a real distinction in programming between

new development people and people who work on other people's code. I had

this space of five years where I did only new development for systems that

had no users. Programming heaven! But very few programmers get that. It's

a privilege. The places you have to express yourself are in the algorithm

design, if you're in new development -- and that is an art. But not many

people are programming at that stage.

So if you ask me, it's not a language. We can use English to invent poetry, to

try to express things that are very hard to express. In programming you

really can't. Finally, a computer program has only one meaning: what it

does. It isn't a text for an academic to read. Its entire meaning is its

function.

SALON | Oct. 9, 1997

The dumbing-down of programming

PART ONE:

REBELLING AGAINST MICROSOFT, "MY COMPUTER" AND EASY-TO-USE WIZARDS, AN

ENGINEER REDISCOVERS THE JOYS OF DIFFICULT COMPUTING.

BY ELLEN ULLMAN

Last month I committed an act of technical

rebellion: I bought one operating system

instead of another. On the surface, this may

not seem like much, since an operating system

is something that can seem inevitable. It's

there when you get your machine, some

software from Microsoft, an ur-condition that

can be upgraded but not undone. Yet the world

is filled with operating systems, it turns out.

And since I've always felt that a computer

system is a significant statement about our

relationship to the world -- how we organize

our understanding of it, how we want to

interact with what we know, how we wish to project the whole notion of

intelligence -- I suddenly did not feel like giving in to the inevitable.

My intention had been to buy an upgrade to Windows NT Server, which was a

completely sensible thing for me to be doing. A nice, clean, up-to-date

system for an extra machine was the idea, somewhere to install my clients'

software; a reasonable, professional choice in a world where Microsoft

platforms are everywhere. But somehow I left the store carrying a box of

Linux from a company called Slackware. Linux: home-brewed, hobbyist,

group-hacked. UNIX-like operating system created in 1991 by Linus Torvalds

then passed around from hand to hand like so much anti-Soviet samizdat.

Noncommercial, sold on the cheap mainly for the cost of the documentation,

impracticable except perhaps for the thrill of actually looking at the source

code and utterly useless to my life as a software engineering consultant.

But buying Linux was no mistake. For the mere act of installing the system -- stripping down the machine to its components, then rebuilding its capabilities

one by one -- led me to think about what has happened to the profession of

programming, and to consider how the notion of technical expertise has

changed. I began to wonder about the wages, both personal and social, of

spending so much time with a machine that has slowly absorbed into itself as

many complications as possible, so as to present us with a façade that says

everything can and should be "easy."

***

I began by ridding my system of Microsoft. I came of technical age with

UNIX, where I learned with power-greedy pleasure that you could kill a

system right out from under yourself with a single command. It's almost the

first thing anyone teaches you: Run as the root user from the root directory,

type in rm -rf *, and, at the stroke of the ENTER key, gone are all the files

and directories. Recursively, each directory deleting itself once its files have

been deleted, right down to the very directory from which you entered the

command: the snake swallowing its tail. Just the knowledge that one might

do such great destruction is heady. It is the technical equivalent of suicide,

yet UNIX lets you do it anyhow. UNIX always presumes you know what

you're doing. You're the human being, after all, and it is a mere operating

system. Maybe you want to kill off your system.
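
The children-first order described above (files go, then the directory that held them) can be sketched in a few lines. This is only an illustration under stated assumptions, not a reimplementation of rm: the names remove_tree and demo are hypothetical, and the sketch works on a throwaway temporary directory so that nothing real is at risk.

```python
import os
import tempfile


def remove_tree(path, log):
    """Delete everything under `path`, children first, then `path` itself."""
    for name in sorted(os.listdir(path)):
        child = os.path.join(path, name)
        if os.path.isdir(child) and not os.path.islink(child):
            # Descend before touching the parent directory.
            remove_tree(child, log)
        else:
            os.remove(child)
            log.append(("file", name))
    # rmdir only succeeds once the directory is empty.
    os.rmdir(path)
    log.append(("dir", os.path.basename(path)))


def demo():
    """Build a small scratch tree, delete it, and report the deletion order."""
    root = tempfile.mkdtemp()
    top = os.path.join(root, "top")
    os.makedirs(os.path.join(top, "sub"))
    for rel in ("a.txt", os.path.join("sub", "b.txt")):
        with open(os.path.join(top, rel), "w") as fh:
            fh.write("x")
    log = []
    remove_tree(top, log)
    gone = not os.path.exists(top)
    os.rmdir(root)  # clean up the scratch parent directory
    return log, gone
```

Calling demo() returns the order in which things disappeared: each directory is logged only after everything inside it has been removed.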

But Microsoft was determined to protect me from myself. Consumer-oriented, idiot-proofed, covered by its pretty skin of icons and dialog boxes,

Windows refused to let me harm it. I had long ago lost my original start-up

disk, the system was too fritzed to make a new one and now it turned away

my subterfuges of DOS installation diskette, boot disks from other machines,

later versions of utilities. Can't reformat active drive. Wrong version

detected. Setup designed for systems without an operating system;

operating system detected; upgrade version required. A cascade of error

messages, warnings, beeps; a sort of sound and light show -- the Wizard of

Oz lighting spectacular fireworks to keep me from flinging back the curtain to

see the short fat bald man.

For Microsoft's self-protective skin is really only a show, a lure to the

determined engineer, a challenge to see if you're clever enough to rip the

covers off. The more it resisted me, the more I knew I would enjoy the

pleasure of deleting it.

Two hours later, I was stripping down the system. Layer by layer it fell away.

Off came Windows NT 3.51; off came a wayward co-installation of Windows

95 where it overlaid DOS. I said goodbye to video and sound; goodbye

wallpaper; goodbye fonts and colors and styles; goodbye windows and icons

and menus and buttons and dialogs. All the lovely graphical skins turned to

so much bitwise detritus. It had the feel of Keir Dullea turning off the keys to

HAL's memory core in the film "2001," each keyturn removing a "higher"

function, HAL's voice all the while descending into mawkish, babyish

pleading. Except that I had the sense that I was performing an exactly

opposite process: I was making my system not dumber but smarter. For now

everything on the system would be something put there by me, and in the

end the system itself would be cleaner, clearer, more knowable -- everything

I associate with the idea of "intelligent."

What I had now was a bare machine, just the hardware and its built-in logic.

No more Microsoft muddle of operating systems. It was like hosing down

your car after washing it: the same feeling of virtuous exertion, the pleasure

of the sparkling clean machine you've just rubbed all over. Yours. Known

down to the crevices. Then, just to see what would happen, I turned on the

computer. It powered up as usual, gave two long beeps, then put up a

message in large letters on the screen:

NO ROM BASIC

What? Had I somehow killed off my read-only memory? It doesn't matter

that you tell yourself you're an engineer and game for whatever happens.

There is still a moment of panic when things seem to go horribly wrong. I

stared at the message for a while, then calmed down: It had to be related to

not having an operating system. What else did I think could happen but

something weird?

But what something weird was this exactly? I searched the Net, found

hundreds of HOW-TO FAQs about installing Linux, thousands about

uninstalling operating systems -- endless pages of obscure factoids, strange

procedures, good and bad advice. I followed trails of links that led to

interesting bits of information, currently useless to me. Long trails that ended

in dead ends, missing pages, junk. Then, sometime about 1 in the morning,

in a FAQ about Enhanced IDE, was the answer:

8.1. Why do I get NO ROM BASIC, SYSTEM HALTED?

This should get a prize for the PC compatible's most obscure

error message. It usually means you haven't made the primary

partition bootable ...

The earliest true-blue PCs had a BASIC interpreter built in, just

like many other home computers those days. Even today, the

Master Boot Record (MBR) code on your hard disk jumps to the

BASIC ROM if it doesn't find any active partitions. Needless to

say, there's no such thing as a BASIC ROM in today's

compatibles....

I had not seen a PC with built-in BASIC in some 16 years, yet here it still

was, vestigial trace of the interpreter, something still remembering a time

when the machine could be used to interpret and execute my entries as lines

in a BASIC program. The least and smallest thing the machine could do in

the absence of all else, its one last imperative: No operating system! Look for

BASIC! It was like happening upon some primitive survival response, a low-level bit of hard wiring, like the mysterious built-in knowledge that lets a

blind little mouseling, newborn and helpless, find its way to the teat.
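
The check the FAQ describes can be sketched against the classic MBR layout: a 512-byte sector, four 16-byte partition entries starting at byte 446, a first byte of 0x80 marking an entry active, and the signature bytes 0x55 0xAA at the end. The function names below are hypothetical, and the returned strings merely stand in for what real boot code would do; this is a sketch of the decision, not the boot code itself.

```python
SECTOR_SIZE = 512
TABLE_OFFSET = 446        # 0x1BE: start of the four partition entries
ENTRY_SIZE = 16
ACTIVE_FLAG = 0x80        # first byte of an entry marked bootable
SIGNATURE = b"\x55\xaa"   # last two bytes of a valid MBR sector


def active_partitions(sector):
    """Return the indexes (0-3) of partition entries flagged active."""
    if len(sector) != SECTOR_SIZE or sector[510:512] != SIGNATURE:
        raise ValueError("not a valid MBR sector")
    active = []
    for i in range(4):
        status = sector[TABLE_OFFSET + i * ENTRY_SIZE]
        if status == ACTIVE_FLAG:
            active.append(i)
    return active


def boot_decision(sector):
    """Mimic the fallback: boot the active partition, else look for BASIC."""
    active = active_partitions(sector)
    if active:
        return "boot partition %d" % active[0]
    return "NO ROM BASIC"


def make_sector(active_index=None):
    """Build a synthetic MBR sector for illustration (hypothetical helper)."""
    sector = bytearray(SECTOR_SIZE)
    sector[TABLE_OFFSET + 4] = 0x83  # entry 0: nonzero partition type byte
    if active_index is not None:
        sector[TABLE_OFFSET + active_index * ENTRY_SIZE] = ACTIVE_FLAG
    sector[510:512] = SIGNATURE
    return bytes(sector)
```

With no entry flagged active, boot_decision(make_sector()) falls through to the message that appeared on the screen; flag any one entry and the fallback is never reached.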

This discovery of the trace of BASIC was somehow thrilling -- an ancient pot

shard found by mistake in the rubble of an excavation. Now I returned to the

FAQs, lost myself in digging, passed another hour in a delirium of trivia. Hex

loading addresses for devices. Mysteries of the BIOS old and new.

Motherboards certified by the company that had written my BIOS and

motherboards that were not. I learned that my motherboard was an orphan.

It was made by a Taiwanese company no longer in business; its BIOS had

been left to languish, supported by no one. And one moment after midnight

on Dec. 31, 1999, it would reset my system clock to ... 1980? What? Why

1980 and not zero? Then I remembered: 1980 was the year of the first IBM

PC. 1980 was Year One in desktop time.
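
One familiar place where 1980 serves as Year One is the packed 16-bit date used by MS-DOS and the FAT file system, which stores the year as an offset from 1980. The essay does not say how this particular BIOS keeps its date, so the sketch below is only an assumed illustration of why an epoch-based counter starts at 1980 rather than zero; the function names are hypothetical.

```python
import datetime


def decode_dos_date(value):
    """Unpack a 16-bit DOS date: 7 bits year-since-1980, 4 bits month, 5 bits day."""
    year = 1980 + (value >> 9)
    month = (value >> 5) & 0x0F
    day = value & 0x1F
    return datetime.date(year, month, day)


def encode_dos_date(d):
    """Pack a date into the same 16-bit layout."""
    return ((d.year - 1980) << 9) | (d.month << 5) | d.day
```

A year field of zero decodes to 1980, so decode_dos_date(0x0021) is Jan. 1, 1980, and with seven bits for the year the format tops out at 1980 + 127 = 2107.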

The computer was suddenly revealed as palimpsest. The machine that is

everywhere hailed as the very incarnation of the new had revealed itself to

be not so new after all, but a series of skins, layer on layer, winding around

the messy, evolving idea of the computing machine. Under Windows was

DOS; under DOS, BASIC; and under them both the date of its origins

recorded like a birth memory. Here was the very opposite of the

authoritative, all-knowing system with its pretty screenful of icons. Here was

the antidote to Microsoft's many protections. The mere impulse toward Linux

had led me into an act of desktop archaeology. And down under all those

piles of stuff, the secret was written: We build our computers the way we

build our cities -- over time, without a plan, on top of ruins.

------------

"My Computer" -- the infantilizing baby names of the Windows world

My Computer. This is the face offered to the world by the other machines in

the office. My Computer. I've always hated this icon -- its insulting,

infantilizing tone. Even if you change the name, the damage is done: It's how

you've been encouraged to think of the system. My Computer. My

Documents. Baby names. My world, mine, mine, mine. Network

Neighborhood, just like Mister Rogers'.

On one side of me was the Linux machine, which I'd managed to get booted

from a floppy. It sat there at a login prompt, plain characters on a black-and-white screen. On the other side was a Windows NT system, colored little

icons on a soothing green background, a screenful of programming tools:

Microsoft Visual C++, Symantec Visual Cafe, Symantec Visual Page, Totally

Hip WebPaint, Sybase PowerBuilder, Microsoft Access, Microsoft Visual Basic

-- tools for everything from ad hoc Web-page design to corporate

development to system engineering. NT is my development platform, the

place where I'm supposed to write serious code. But sitting between my two

machines -- baby-faced NT and no-nonsense Linux -- I couldn't help thinking

about all the layers I had just peeled off the Linux box, and I began to

wonder what the user-friendly NT system was protecting me from.

Developers get the benefit of visual layout without the hassle of having to

remember HTML code.

-- Reviewers' guide to Microsoft J++

Templates, Wizards and JavaBeans Libraries Make Development Fast

-- Box for Symantec's Visual Cafe for Java

Simplify application and applet development with numerous wizards

-- Ad for Borland's JBuilder in the Programmer's Paradise catalog

Thanks to IntelliSense, the Table Wizard designs the structure of your

business and personal databases for you.

-- Box for Microsoft Access

Developers will benefit by being able to create DHTML components without

having to manually code, or even learn, the markup language.

-- Review of J++ 6.0 in PC Week, March 16, 1998.

Has custom controls for all the major Internet protocols (Windows Sockets,

FTP, Telnet, Firewall, Socks 5.0, SMTP, POP, MIME, NNTP, Rcommands, HTTP,

etc.). And you know what? You really don't need to understand any of them

to include the functionality they offer in your program.

-- Ad for Visual Internet Toolkit from the Distinct Corp. in the Components

Paradise catalog

My programming tools were full of wizards. Little dialog boxes waiting for me

to click "Next" and "Next" and "Finish." Click and drag and shazzam! -- thousands of lines of working code. No need to get into the "hassle" of

remembering the language. No need to even learn it. It is a powerful siren-song lure: You can make your program do all these wonderful and

complicated things, and you don't really need to understand.

In six clicks of a wizard, the Microsoft C++ AppWizard steps me through the

creation of an application skeleton. The application will have a multidocument

interface, database support from SQL Server, OLE compound document

support as both server and container, docking toolbars, a status line, printer

and print-preview dialogs, 3-D controls, messaging API and Windows sockets

support; and, when my clicks are complete, it will immediately compile, build

and execute. Up pops a parent and child window, already furnished with

window controls, default menus, icons and dialogs for printing, finding,

cutting and pasting, saving and so forth. The process takes three minutes.

Of course, I could look at the code that the Wizard has generated. Of course,

I could read carefully through the 36 generated C++ class definitions.

Ideally, I would not only read the code but also understand all the calls on

the operating system and all the references to the library of standard

Windows objects called the Microsoft Foundation Classes. Most of all, I would

study them until I knew in great detail the complexities of servers and

containers, OLE objects, interaction with relational databases, connections to

a remote data source and the intricacies of messaging -- all the functionality

AppWizard has just slurped into my program, none of it trivial.

But everything in the environment urges me not to. What the tool

encourages me to do now is find the TODO comments in the generated code,

then do a little filling in -- constructors and initializations. Then I am to start

clicking and dragging controls onto the generated windows -- all the

prefabricated text boxes and list boxes and combo boxes and whatnot. Then

I will write a little code that hangs off each control.

In this programming world, the writing of my code has moved away from

being the central task to become a set of appendages to the entire Microsoft

system structure. I'm a scrivener here, a filler-in of forms, a setter of

properties. Why study all that other stuff, since it already works anyway?

Since my deadline is pressing. Since the marketplace is not interested in

programs that do not work well in the entire Microsoft structure, which

AppWizard has so conveniently prebuilt for me.

This not-knowing is a seduction. I feel myself drifting up, away from the core

of what I've known programming to be: text that talks to the system and its

other software, talk that depends on knowing the system as deeply as

possible. These icons and wizards, these prebuilt components that look like

little pictures, are obscuring the view that what lies under all these cascading

windows is only text talking to machine, and underneath it all is something

still looking for a BASIC interpreter. But the view the wizards offer is pleasant

and easy. The temptation never to know what underlies that ease is

overwhelming. It is like the relaxing passivity of television, the calming

blankness when a theater goes dark: It is the sweet allure of using.

My programming tools have become like My Computer. The same impulse

that went into the Windows 95 user interface -- the desire to encapsulate

complexity behind a simplified set of visual representations, the desire to

make me resist opening that capsule -- is now in the tools I use to write

programs for the system. What started out as the annoying, cloying face of a

consumer-oriented system for a naive user has somehow found its way into

C++. Dumbing-down is trickling down. Not content with infantilizing the end

user, the purveyors of point-and-click seem determined to infantilize the

programmer as well.

But what if you're an experienced engineer? What if you've already learned

the technology contained in the tool, and you're ready to stop worrying about

it? Maybe letting the wizard do the work isn't a loss of knowledge but simply

a form of storage: the tool as convenient information repository.

(To be continued.)

SALON | May 12, 1998

Ellen Ullman is a software engineer. She is the author of "Close to the Machine:

Technophilia and its Discontents."

The dumbing-down of programming

PART TWO:

RETURNING TO THE SOURCE. ONCE KNOWLEDGE DISAPPEARS INTO CODE, HOW DO WE

RETRIEVE IT?

BY ELLEN ULLMAN

I used to pass by a large computer system with the

feeling that it represented the summed-up knowledge

of human beings. It reassured me to think of all those

programs as a kind of library in which our

understanding of the world was recorded in intricate

and exquisite detail. I managed to hold onto this

comforting belief even in the face of 20 years in the

programming business, where I learned from the

beginning what a hard time we programmers have in

maintaining our own code, let alone understanding

programs written and modified over years by untold

numbers of other programmers. Programmers come

and go; the core group that once understood the issues has written its code

and moved on; new programmers have come, left their bit of understanding

in the code and moved on in turn. Eventually, no one individual or group

knows the full range of the problem behind the program, the solutions we

chose, the ones we rejected and why.

Over time, the only representation of the original knowledge becomes the

code itself, which by now is something we can run but not exactly

understand. It has become a process, something we can operate but no

longer rethink deeply. Even if you have the source code in front of you, there

are limits to what a human reader can absorb from thousands of lines of text

designed primarily to function, not to convey meaning. When knowledge

passes into code, it changes state; like water turned to ice, it becomes a new

thing, with new properties. We use it; but in a human sense we no longer

know it.

The Year 2000 problem is an example on a vast scale of knowledge

disappearing into code. And the soon-to-fail national air-traffic control

system is but one stark instance of how computerized expertise can be lost.

In March, the New York Times reported that IBM had told the Federal

Aviation Administration that, come the millennium, the existing system would

stop functioning reliably. IBM's advice was to completely replace the system

because, they said, there was "no one left who understands the inner

workings of the host computer."

No one left who understands. Air-traffic control systems, bookkeeping,

drafting, circuit design, spelling, differential equations, assembly lines,

ordering systems, network object communications, rocket launchers, atom-bomb silos, electric generators, operating systems, fuel injectors, CAT scans,

air conditioners -- an exploding list of subjects, objects and processes

rushing into code, which eventually will be left running without anyone left

who understands them. A world full of things like mainframe computers,

which we can use or throw away, with little choice in between. A world

floating atop a sea of programs we've come to rely on but no longer truly

understand or control. Code and forget; code and forget: programming as a

collective exercise in incremental forgetting.

***

Every visual programming tool, every wizard, says to the programmer: No

need for you to know this. What reassures the programmer -- what lulls an

otherwise intelligent, knowledge-seeking individual into giving up the desire

to know -- is the suggestion that the wizard is only taking care of things that

are repetitive or boring. These are only tedious and mundane tasks, says the

wizard, from which I will free you for better things. Why reinvent the wheel?

Why should anyone ever again write code to put up a window or a menu?

Use me and you will be more productive.

Productivity has always been the justification for the prepackaging of

programming knowledge. But it is worth asking about the sort of productivity

gains that come from the simplifications of click-and-drag. I once worked on

a project in which a software product originally written for UNIX was being

redesigned and implemented on Windows NT. Most of the programming team

consisted of programmers who had great facility with Windows, Microsoft

Visual C++ and the Foundation Classes. In no time at all, it seemed, they

had generated many screenfuls of windows and toolbars and dialogs, all with

connections to networks and data sources, thousands and thousands of lines

of code. But when the inevitable difficulties of debugging came, they seemed

at sea. In the face of the usual weird and unexplainable outcomes, they

stood a bit agog. It was left to the UNIX-trained programmers to fix things.

The UNIX team members were accustomed to having to know. Their view of

programming as language-as-text gave them the patience to look slowly

through the code. In the end, the overall "productivity" of the system, the

fact that it came into being at all, was the handiwork not of tools that sought

to make programming seem easy, but the work of engineers who had no fear

of "hard."

And as prebuilt components accomplish larger and larger tasks, it is no

longer only a question of putting up a window or a text box, but of an entire

technical viewpoint encapsulated in a tool or component. No matter if, like

Microsoft's definition of a software object, that viewpoint is haphazardly

designed, verbose, buggy. The tool makes it look clean; the wizard hides bad

engineering as well as complexity.

In the pretty, visual programming world, both the vendor and programmer

can get lazy. The vendor doesn't have to work as hard at producing and

committing itself to well-designed programming interfaces. And the

programmer can stop thinking about the fundamentals of the system. We

programmers can lie back and inherit the vendor's assumptions. We accept

the structure of the universe implicit in the tool. We become dependent on

the vendor. We let knowledge about difficulty and complexity come to reside

not in us, but in the program we use to write programs.

No wizard can possibly banish all the difficulties, of course. Programming is

still a tinkery art. The technical environment has become very complex -- we

expect bits of programs running anywhere to communicate with bits of

programs running anywhere else -- and it is impossible for any one individual

to have deep and detailed knowledge about every niche. So a certain degree

of specialization has always been needed. A certain amount of complexity-hiding is useful and inevitable.

Yet, when we allow complexity to be hidden and handled for us, we should at

least notice what we're giving up. We risk becoming users of components,

handlers of black boxes that don't open or don't seem worth opening. We risk

becoming like auto mechanics: people who can't really fix things, who can

only swap components. It's possible to let technology absorb what we know

and then re-express it in intricate mechanisms -- parts and circuit boards and

software objects -- mechanisms we can use but do not understand in crucial

ways. This not-knowing is fine while everything works as we expected. But

when something breaks or goes wrong or needs fundamental change, what

will we do but stand a bit helpless in the face of our own creations?

------------

An epiphany on unscrewing the computer box: Why engineers flock to Linux

Linux won't recognize my CD-ROM drive. I'm using what should be the right

boot kernel, it's supposed to handle CD-ROMs like mine, but no: The

operating system doesn't see anything at all on /dev/hdc. I try various

arcane commands to the boot loader: still nothing. Finally I'm driven back to

the HOW-TO FAQs and realize I should have started there. In just a few

minutes, I find a FAQ that describes my problem in thorough and

knowledgeable detail. Don't let anyone ever say that Linux is an unsupported

operating system. Out there is a global militia of fearless engineers posting

helpful information on the Internet: Linux is the best supported operating

system in the world.

The problem is the way the CD-ROM is wired, and as I reach for the

screwdriver and take the cover off the machine, I realize that this is exactly

what I came for: to take off the covers. And this, I think, is what is driving so

many engineers to Linux: to get their hands on the system again.

Now that I know that the CD-ROM drive should be attached as a master

device on the secondary IDE connector of my orphaned motherboard -- now

that I know this machine to the metal -- it occurs to me that Linux is a

reaction to Microsoft's consumerization of the computer, to its cutesying and

dumbing-down and bulletproofing behind dialog boxes. That Linux represents

a desire to get back to UNIX before it was Hewlett-Packard's HP-UX or Sun's

Solaris or IBM's AIX -- knowledge now owned by a corporation, released in

unreadable binary form, so easy to install, so hard to uninstall. That this

sudden movement to freeware and open source is our desire to revisit the

idea that a professional engineer can and should be able to do the one thing