- University of West Florida

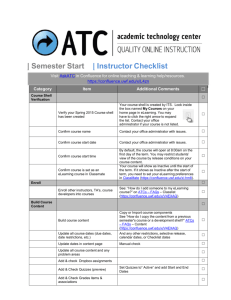
