D.E. Rumelhart, G.E. Hinton and R.J. Williams


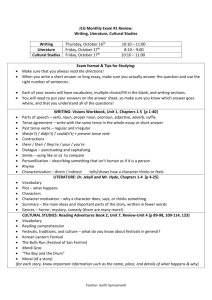

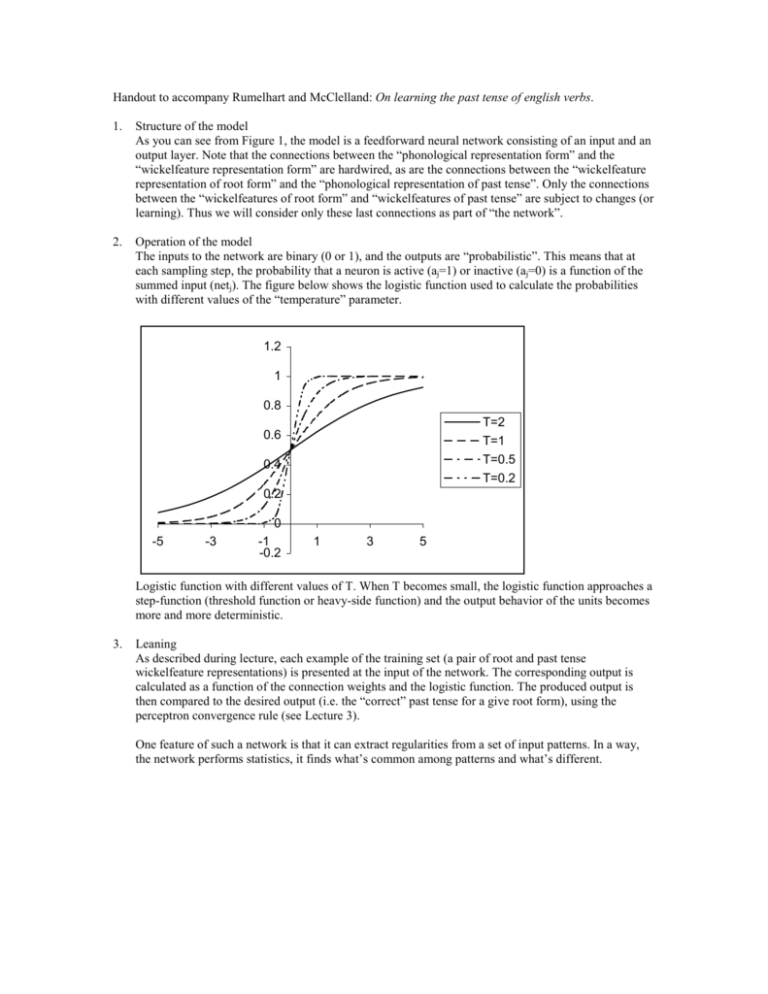

Handout to accompany Rumelhart and McClelland: On learning the past tense of English verbs.

1. Structure of the model

As you can see from Figure 1, the model is a feedforward neural network consisting of an input layer and an output layer. Note that the connections between the “phonological representation of root form” and the “Wickelfeature representation of root form” are hardwired, as are the connections between the “Wickelfeature representation of past tense” and the “phonological representation of past tense”. Only the connections between the “Wickelfeatures of root form” and the “Wickelfeatures of past tense” are subject to change (that is, to learning). Thus we will consider only these last connections as part of “the network”.

2. Operation of the model

The inputs to the network are binary (0 or 1), and the outputs are “probabilistic”. This means that at each sampling step, the probability that a neuron is active (a_j = 1) rather than inactive (a_j = 0) is a function of its summed input (net_j). The figure below shows the logistic function used to calculate these probabilities for different values of the “temperature” parameter T.

[Figure: Logistic function with different values of T (T = 2, 1, 0.5, 0.2).]

When T becomes small, the logistic function approaches a step function (also called a threshold function or Heaviside function), and the output behavior of the units becomes more and more deterministic.

3. Learning

As described during lecture, each example of the training set (a pair of root and past-tense Wickelfeature representations) is presented to the network, with the root form at the input. The corresponding output is calculated as a function of the connection weights and the logistic function. The produced output is then compared to the desired output (i.e. the “correct” past tense for a given root form), and the connection weights are adjusted using the perceptron convergence rule (see Lecture 3).

One feature of such a network is that it can extract regularities from a set of input patterns.
In a way, the network performs statistics: it finds what is common among patterns and what is different.
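
The operation and learning steps described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implementation used in the original model. It takes the activation probability to be p(a_j = 1) = 1 / (1 + exp(-(net_j - theta_j) / T)), where the per-unit threshold theta_j is an assumption added here (the handout only mentions net_j and T). The learning step is the standard perceptron correction with a hypothetical learning rate `eta`, and the short binary vectors in the demo are made-up stand-ins for Wickelfeature patterns.

```python
import math
import random


def activation_probability(net, theta=0.0, T=1.0):
    """Logistic probability that a unit is active, given its summed input.

    theta (threshold) is an assumption of this sketch; T is the temperature.
    """
    x = (net - theta) / T
    # Branch on the sign of x so math.exp never overflows.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def sample_output(weights, thetas, inputs, T, rng):
    """Sample one binary output vector for a binary input pattern."""
    outputs = []
    for j, row in enumerate(weights):
        net = sum(w * a for w, a in zip(row, inputs))  # summed input net_j
        p = activation_probability(net, thetas[j], T)
        outputs.append(1 if rng.random() < p else 0)
    return outputs


def perceptron_update(weights, thetas, inputs, produced, target, eta=1.0):
    """Perceptron-style correction, applied in place.

    Only units whose produced output differs from the target are changed.
    """
    for j, (o, t) in enumerate(zip(produced, target)):
        error = t - o  # +1: should have fired, -1: should have stayed off
        if error == 0:
            continue
        for i, a in enumerate(inputs):
            weights[j][i] += eta * error * a  # only active inputs contribute
        thetas[j] -= eta * error  # lower the threshold if the unit should fire


if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical toy data: (root pattern, past-tense pattern) pairs.
    training_set = [
        ([1, 0, 1], [1, 0]),
        ([0, 1, 1], [0, 1]),
        ([1, 1, 0], [1, 1]),
    ]
    weights = [[0.0] * 3 for _ in range(2)]
    thetas = [0.0, 0.0]
    for epoch in range(50):
        for root, past in training_set:
            produced = sample_output(weights, thetas, root, T=1.0, rng=rng)
            perceptron_update(weights, thetas, root, produced, past)
    # Sampling at a low temperature makes the units close to deterministic.
    for root, past in training_set:
        print(root, past, sample_output(weights, thetas, root, T=0.2, rng=rng))
```

Because the outputs are sampled, a unit can produce an error even when its weights are already nearly right, so training at T = 1 keeps making occasional corrections. Lowering T at test time, as in the last loop, pushes each unit toward the step-function behavior described in section 2.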