Notes


REU 2013: Apprentice Program

Summer 2013

Lecture 6: July 5, 2013

Madhur Tulsiani

Scribe: David Kim

1 Eigenvalues & Eigenvectors

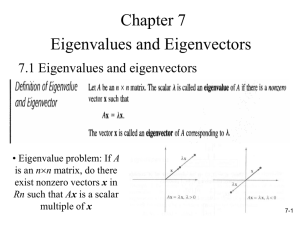

Definition 1.1 (Eigenvalue & Eigenvector) Let $A \in \mathbb{C}^{n \times n}$. Then $\lambda \in \mathbb{C}$ is said to be an eigenvalue of $A$ if there exists $v \neq 0$ such that

$$Av = \lambda v \iff (A - \lambda I)v = 0.$$

Such a $v$ is called an eigenvector of $A$ with eigenvalue $\lambda$.

Exercise 1.2 Prove: $\lambda$ is an eigenvalue of $A$ if and only if $\det(\lambda I - A) = 0$.

Exercise 1.3 Let $U_\lambda = \{v : Av = \lambda v\}$. Prove: $U_\lambda$ is a subspace of $\mathbb{C}^n$.

Definition 1.4 (Characteristic Polynomial) $f_A(t) = \det(tI - A)$ is called the characteristic polynomial of $A$.

From the above, we know that $\lambda$ is an eigenvalue of $A$ if and only if $\lambda$ is a root of the characteristic polynomial $f_A(t)$.
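As a minimal sketch of this fact in the $2 \times 2$ case (not part of the lecture; the helper names `eigenvalues_2x2` and `char_poly_at` and the sample matrix are illustrative assumptions), the characteristic polynomial expands to $t^2 - \operatorname{tr}(A)\,t + \det(A)$, so its roots can be found with the quadratic formula and then checked against $\det(tI - A)$:

```python
import cmath

def eigenvalues_2x2(a):
    """Roots of f_A(t) = t^2 - tr(A) t + det(A) for a 2x2 matrix (nested lists)."""
    tr = a[0][0] + a[1][1]
    det = a[0][0] * a[1][1] - a[0][1] * a[1][0]
    # cmath.sqrt returns a complex root, so a negative discriminant is fine.
    disc = cmath.sqrt(tr * tr - 4 * det)
    return (tr + disc) / 2, (tr - disc) / 2

def char_poly_at(a, t):
    """Evaluate det(tI - A) directly from the 2x2 determinant formula."""
    return (t - a[0][0]) * (t - a[1][1]) - a[0][1] * a[1][0]

A = [[2, 1], [1, 2]]  # hypothetical sample matrix, not from the lecture
lam1, lam2 = eigenvalues_2x2(A)
print(lam1, lam2)
# Both values should be (numerically) zero, since eigenvalues are roots of f_A.
print(abs(char_poly_at(A, lam1)), abs(char_poly_at(A, lam2)))
```

For this sample matrix the two roots are 3 and 1, returned as Python complex numbers.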

Example 1.5 Consider $A = \begin{pmatrix} 1 & 0 \\ 0 & 1 \end{pmatrix}$. The characteristic polynomial is $f_A(t) = \det(tI - A) = (t - 1)^2$. The only eigenvalue is $\lambda = 1$, and $U_\lambda = \mathbb{C}^2$, since $Av = Iv = v$ for all $v \in \mathbb{C}^2$.

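Example 1.6 Consider $A = \begin{pmatrix} 1 & 1 \\ 0 & 1 \end{pmatrix}$. The characteristic polynomial is still $f_A(t) = \det(tI - A) = (t - 1)^2$ and the only eigenvalue is $\lambda = 1$. However, $U_\lambda$ is now only the one-dimensional space

$$U_\lambda = \left\{ \alpha \cdot \begin{pmatrix} 1 \\ 0 \end{pmatrix} \;\middle|\; \alpha \in \mathbb{C} \right\}.$$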

Exercise 1.7 Calculate the eigenvalues of $A = \begin{pmatrix} 0 & 1 \\ -1 & 0 \end{pmatrix}$.

Note that the eigenvalues of a real matrix may be complex. Also, any polynomial $p(t)$ of degree $n$ over $\mathbb{C}$ can be factored as $c(t - \lambda_1) \cdots (t - \lambda_n)$ for $c, \lambda_1, \ldots, \lambda_n \in \mathbb{C}$. Thus, the characteristic polynomial of an $n \times n$ matrix always has $n$ (not necessarily distinct) complex roots.


Definition 1.8 (Algebraic Multiplicity) The algebraic multiplicity of an eigenvalue $\lambda$ is the number of times $t - \lambda$ appears as a factor in the characteristic polynomial $f_A(t)$.

Definition 1.9 (Geometric Multiplicity) The geometric multiplicity of an eigenvalue $\lambda$ is $\dim(U_\lambda)$.

Exercise 1.10 Prove: for every eigenvalue, algebraic multiplicity ≥ geometric multiplicity.

Note that the matrix $\begin{pmatrix} 1 & 1 \\ 0 & 1 \end{pmatrix}$ gives an example where the algebraic multiplicity is strictly greater than the geometric multiplicity of an eigenvalue.
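The gap between the two multiplicities can be checked numerically. The sketch below is not from the lecture: it assumes the rank-nullity theorem, which gives $\dim(U_\lambda) = 2 - \operatorname{rank}(A - \lambda I)$ for a $2 \times 2$ matrix, and the helper names `rank_2x2` and `geometric_multiplicity` are hypothetical.

```python
def rank_2x2(m, tol=1e-12):
    """Rank of a 2x2 matrix given as nested lists."""
    det = m[0][0] * m[1][1] - m[0][1] * m[1][0]
    if abs(det) > tol:
        return 2  # invertible
    if all(abs(x) <= tol for row in m for x in row):
        return 0  # zero matrix
    return 1      # singular but nonzero

def geometric_multiplicity(a, lam):
    """dim U_lam = 2 - rank(A - lam*I), assuming rank-nullity."""
    shifted = [[a[0][0] - lam, a[0][1]],
               [a[1][0], a[1][1] - lam]]
    return 2 - rank_2x2(shifted)

identity = [[1, 0], [0, 1]]  # the matrix of Example 1.5
shear = [[1, 1], [0, 1]]     # the matrix of Example 1.6

print(geometric_multiplicity(identity, 1))  # 2
print(geometric_multiplicity(shear, 1))     # 1
```

Both matrices have characteristic polynomial $(t - 1)^2$, so the algebraic multiplicity of $\lambda = 1$ is 2 in each case; only the identity matrix attains it geometrically.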

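Exercise 1.11 Let $Av = \lambda v$ for $v \in \mathbb{C}^n$, $A \in \mathbb{R}^{n \times n}$, $\lambda \in \mathbb{R}$. Then $\mathrm{Re}(v)$ and $\mathrm{Im}(v)$, whenever they are nonzero, are also eigenvectors with eigenvalue $\lambda$.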

Thus, if a real matrix A has an eigenvector with a real eigenvalue λ ∈ R, then it also has a real

eigenvector with the same eigenvalue.

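Example 1.12 Calculate the eigenvalues, eigenvectors, and the characteristic polynomial for the rotation matrix $R_\theta = \begin{pmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{pmatrix}$.

$f_{R_\theta}(t) = (t - \cos\theta)^2 + (\sin\theta)^2 = 0$ gives $t = \cos\theta \pm i\sin\theta = e^{\pm i\theta}$. Let $\lambda_1 = \cos\theta + i\sin\theta$, $\lambda_2 = \cos\theta - i\sin\theta$. Then

$$U_{\lambda_1} = \left\{ \alpha \begin{pmatrix} i \\ 1 \end{pmatrix} : \alpha \in \mathbb{C} \right\}, \qquad U_{\lambda_2} = \left\{ \alpha \begin{pmatrix} 1 \\ i \end{pmatrix} : \alpha \in \mathbb{C} \right\},$$

as can be verified by computing $R_\theta \begin{pmatrix} i \\ 1 \end{pmatrix}$ and $R_\theta \begin{pmatrix} 1 \\ i \end{pmatrix}$ directly. Note that the eigenvectors do not depend on $\theta$.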
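A quick numerical check of this example, as a sketch rather than part of the lecture: the helper names `rotate` and `is_eigenpair` and the sample angles are assumptions, and the pairing of eigenvectors with eigenvalues is the one obtained by multiplying out $R_\theta v$ by hand, namely $(i, 1)^T$ with $e^{i\theta}$ and $(1, i)^T$ with $e^{-i\theta}$.

```python
import cmath
import math

def rotate(theta, v):
    """Apply R_theta = [[cos, -sin], [sin, cos]] to a vector v in C^2."""
    c, s = math.cos(theta), math.sin(theta)
    return (c * v[0] - s * v[1], s * v[0] + c * v[1])

def is_eigenpair(theta, lam, v, tol=1e-12):
    """Check R_theta v = lam * v componentwise, up to rounding error."""
    w = rotate(theta, v)
    return all(abs(w[k] - lam * v[k]) < tol for k in range(2))

for theta in (0.3, 1.0, 2.5):      # arbitrary sample angles
    lam1 = cmath.exp(1j * theta)   # cos(theta) + i sin(theta)
    lam2 = cmath.exp(-1j * theta)  # cos(theta) - i sin(theta)
    print(theta,
          is_eigenpair(theta, lam1, (1j, 1)),
          is_eigenpair(theta, lam2, (1, 1j)))
```

The same two vectors pass the check for every angle tried, which illustrates that the eigenvectors do not depend on $\theta$ even though the eigenvalues do.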

Exercise 1.13 Prove: if $v_1, \ldots, v_n$ are eigenvectors with distinct eigenvalues $\lambda_1, \ldots, \lambda_n$, then $v_1, \ldots, v_n$ are linearly independent.

Definition 1.14 (Similar Matrices) $A$ and $B$ are similar, $A \sim B$, if there exists an invertible $S \in \mathbb{C}^{n \times n}$ such that $A = S^{-1}BS$.

Definition 1.15 (Diagonalizable Matrices) $A$ is diagonalizable if $A = S^{-1}DS$ where $D$ is a diagonal matrix (that is, $A$ is similar to a diagonal matrix).

Exercise 1.16 Prove that similarity between matrices is an equivalence relation.

Exercise 1.17 Prove: If $A \sim B$, then $f_A(t) = f_B(t)$. Thus, if $A \sim B$, then they have the same eigenvalues and each eigenvalue has the same algebraic multiplicity for both $A$ and $B$.

Exercise 1.18 Let $A \sim B$. Then for each $\lambda$ which is an eigenvalue of $A$ (and hence also of $B$), show that $U_\lambda^{(A)}$ is isomorphic to $U_\lambda^{(B)}$. Thus, each eigenvalue also has the same geometric multiplicity for both $A$ and $B$.

This follows by noting that $S^{-1}BSv = \lambda v \Rightarrow BSv = \lambda Sv$. Hence, $v \mapsto Sv$ is a bijective linear map from $U_\lambda^{(A)}$ to $U_\lambda^{(B)}$, with inverse $w \mapsto S^{-1}w$.
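The map $v \mapsto Sv$ can be traced on a concrete pair of similar matrices. This is a minimal sketch, not part of the lecture: the matrices `B` and `S` are hypothetical choices, and the $2 \times 2$ helpers are written out by hand so the block stays self-contained.

```python
def det(m):
    """Determinant of a 2x2 matrix given as nested lists."""
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]

def trace(m):
    """Trace of a 2x2 matrix."""
    return m[0][0] + m[1][1]

def matmul(x, y):
    """Product of two 2x2 matrices."""
    return [[sum(x[i][k] * y[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def inverse(m):
    """Inverse of a 2x2 matrix with nonzero determinant."""
    d = det(m)
    return [[m[1][1] / d, -m[0][1] / d], [-m[1][0] / d, m[0][0] / d]]

def apply(m, v):
    """Matrix-vector product for a 2x2 matrix and a length-2 vector."""
    return [m[0][0] * v[0] + m[0][1] * v[1], m[1][0] * v[0] + m[1][1] * v[1]]

B = [[2, 0], [0, 3]]                  # hypothetical diagonal matrix
S = [[1, 1], [1, 2]]                  # hypothetical invertible matrix (det = 1)
A = matmul(inverse(S), matmul(B, S))  # A = S^{-1} B S, so A ~ B

# For 2x2 matrices f(t) = t^2 - tr*t + det, so equal trace and det mean f_A = f_B.
print(trace(A), trace(B), det(A), det(B))

lam = 2
v = apply(inverse(S), [1, 0])         # chosen so that S v = (1, 0), an eigenvector of B
Sv = apply(S, v)
print(apply(A, v), [lam * x for x in v])    # A v = lam v
print(apply(B, Sv), [lam * x for x in Sv])  # B (S v) = lam (S v)
```

Here $A$ is diagonalizable by construction, and $v$ lies in $U_\lambda^{(A)}$ exactly because $Sv$ lies in $U_\lambda^{(B)}$.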


2 Inner Products and Unitary Matrices

Definition 2.1 (Inner Product) Let $u, v \in \mathbb{C}^n$. Then the Hermitian inner product of $u$ and $v$ is defined as

$$\langle u, v \rangle = \sum_{i=1}^{n} \overline{u_i}\, v_i,$$

where $\overline{u_i}$ denotes the conjugate of $u_i$. Note that this is the same as the usual dot product if $u, v \in \mathbb{R}^n$.

Note that the quantity $\langle u, u \rangle = \sum_i |u_i|^2$ is always non-negative and is zero only when $u = 0$. We define $\|u\| = \sqrt{\langle u, u \rangle}$, which extends the notion of the length of a vector to vectors in $\mathbb{C}^n$.
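A small sketch of these two definitions using Python's built-in complex numbers (the function names `inner` and `norm` and the sample vectors are illustrative assumptions, not from the lecture). The conjugate is taken on the first argument, matching the definition above:

```python
import math

def inner(u, v):
    """Hermitian inner product <u, v> = sum_i conj(u_i) * v_i."""
    return sum(complex(a).conjugate() * b for a, b in zip(u, v))

def norm(u):
    """||u|| = sqrt(<u, u>); <u, u> is real and non-negative."""
    return math.sqrt(inner(u, u).real)

u = [1 + 2j, 3j]   # hypothetical sample vectors in C^2
v = [2, 1 - 1j]

print(inner(u, v))   # conj(1+2j)*2 + conj(3j)*(1-1j)
print(inner(u, u))   # |1+2j|^2 + |3j|^2, with zero imaginary part
print(norm(u))

# On real vectors the conjugation does nothing, so this is the usual dot product.
print(inner([1, 2, 3], [4, 5, 6]))
```

By hand, $\langle u, v \rangle = (1 - 2i) \cdot 2 + (-3i)(1 - i) = -1 - 7i$ and $\langle u, u \rangle = 5 + 9 = 14$ for these sample vectors.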

Definition 2.2 (Orthogonal Vectors) Two vectors $u, v$ are said to be orthogonal if $\langle u, v \rangle = 0$.

Definition 2.3 (Orthonormal Basis) $\{v_1, \ldots, v_k\}$ is an orthonormal basis for $V$ if it is a basis such that

$$\forall i, j \quad \langle v_i, v_j \rangle = \begin{cases} 0 & \text{if } i \neq j \\ 1 & \text{if } i = j \end{cases}.$$

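Example 2.4 For the rotation matrix $R_\theta$, the eigenvectors $\begin{pmatrix} 1 \\ i \end{pmatrix}$ and $\begin{pmatrix} i \\ 1 \end{pmatrix}$ are orthogonal. After scaling the vectors to $\frac{1}{\sqrt{2}} \cdot \begin{pmatrix} 1 \\ i \end{pmatrix}$ and $\frac{1}{\sqrt{2}} \cdot \begin{pmatrix} i \\ 1 \end{pmatrix}$, we obtain an orthonormal basis of $\mathbb{C}^2$ consisting of eigenvectors of $R_\theta$.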

Definition 2.5 (Unitary Matrix) A matrix $U \in \mathbb{C}^{n \times n}$ is called a unitary matrix if the columns of $U$ form an orthonormal basis of $\mathbb{C}^n$.

Definition 2.6 (Adjoint of a matrix) For a matrix $A \in M_n(\mathbb{C})$, its adjoint, denoted $A^*$, is the matrix defined by $(A^*)_{ij} = \overline{a_{ji}}$, i.e., $A^* = \overline{A}^T$.

Note that since $(AB)^T = B^T A^T$, we have $(AB)^* = B^* A^*$.

Exercise 2.7 $U$ is unitary if and only if $U^*U = UU^* = I$.

Note that $(U^*U)_{ij} = \langle U^{(i)}, U^{(j)} \rangle$, where $U^{(i)}$ denotes the $i$th column of $U$. Hence $U^*U = I$ if and only if the columns form an orthonormal basis. Also, since a one-sided inverse of a square matrix is a two-sided inverse, we have that

$$U^*U = I \iff UU^* = I.$$
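As a sketch of this criterion (not part of the lecture; the helper names are hypothetical), one can take the matrix whose columns are the normalized eigenvectors of the rotation matrix, assumed here to be $\frac{1}{\sqrt{2}}(1, i)^T$ and $\frac{1}{\sqrt{2}}(i, 1)^T$, and check both products numerically:

```python
import math

def adjoint(m):
    """Conjugate transpose: (A*)_{ij} = conj(a_{ji})."""
    n = len(m)
    return [[complex(m[j][i]).conjugate() for j in range(n)] for i in range(n)]

def matmul(x, y):
    """Product of two square matrices given as nested lists."""
    n = len(x)
    return [[sum(x[i][k] * y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def is_identity(m, tol=1e-12):
    """True if m equals the identity matrix up to rounding error."""
    n = len(m)
    return all(abs(m[i][j] - (1 if i == j else 0)) < tol
               for i in range(n) for j in range(n))

r = 1 / math.sqrt(2)
U = [[r, 1j * r],
     [1j * r, r]]   # columns: (1, i)/sqrt(2) and (i, 1)/sqrt(2)

print(is_identity(matmul(adjoint(U), U)))  # U*U = I
print(is_identity(matmul(U, adjoint(U))))  # UU* = I
```

A tolerance is used in `is_identity` because $1/\sqrt{2}$ is not exactly representable in floating point, so the diagonal entries are only approximately 1.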

Exercise 2.8 Show that if $U_1, U_2$ are unitary, then so is $U_1 U_2$.
