Chapters 8 and 9: Review

advertisement

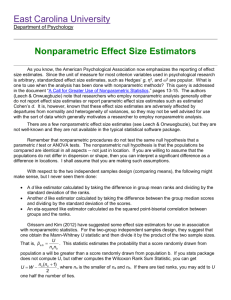

1

One Sample and Two Sample Procedures. Chapters 8 & 9

Review of one-sample t-procedures. Suppose that a random sample of size n is drawn from a population with unknown mean μ. Then a confidence interval for μ is given by

$$\bar{x} \pm t_{\alpha/2}\,\mathrm{SE}, \qquad \mathrm{SE} = \frac{s}{\sqrt{n}},$$

where $t_{\alpha/2} = t_{\alpha/2}(n-1)$ is the appropriate critical point of the t(n-1) distribution.

A test of H0: μ = μ0 is based on the statistic

$$T = \frac{\bar{X} - \mu_0}{S/\sqrt{n}}$$

which, under H0, has a t(n-1) distribution. P-values can thus be calculated for the usual alternatives:

Ha: μ ≠ μ0

Ha: μ > μ0

Ha: μ < μ0

Both procedures are valid for a normal population, and are asymptotically valid (that is, for large n) in all other

cases.

Example. The yield (in thousands of kilograms per hectare) of wheat for 100 farms in the Murray Valley was

recorded.

13.39  13.37  13.30  13.29  13.46  13.46  13.34  13.59  13.38  13.28
13.54  13.24  13.40  13.44  13.42  13.32  13.50  13.46  13.30  13.58
13.40  13.53  13.51  13.28  13.41  13.29  13.48  13.43  13.32  13.26
13.40  13.39  13.52  13.47  13.50  13.44  13.37  13.50  13.39  13.28
13.42  13.51  13.40  13.62  13.53  13.43  13.54  13.43  13.34  13.32
13.32  13.39  13.23  13.20  13.45  13.43  13.36  13.41  13.50  13.43
13.28  13.51  13.48  13.43  13.29  13.24  13.23  13.42  13.42  13.33
13.46  13.62  13.62  13.56  13.45  13.58  13.38  13.37  13.35  13.31

Estimate the mean yield μ. Is it significantly greater than 13.4?

MINITAB: Stats > Basic Statistics > Normality Test

[Figure: Normal Probability Plot of YIELD (probability scale against yield). Average: 13.4164; StDev: 0.100740; N: 100. Anderson-Darling Normality Test: A-Squared: 0.362; P-Value: 0.436.]

MTB > TInterval 95.0 'yield'.

Confidence Intervals

Variable      N      Mean   SE Mean         95.0 % C.I.
yield       100   13.4164    0.0101   (13.3964,13.4364)

Yield data, continued (the remaining 20 of the 100 values):

13.38  13.57  13.40  13.47  13.55  13.31  13.66  13.38  13.57  13.39
13.52  13.51  13.45  13.29  13.31  13.43  13.40  13.42  13.40  13.41

MTB > TTest 13.4 'yield';
SUBC> Alternative 1.

T-Test of the Mean

Test of mu = 13.4000 vs mu > 13.4000

Variable      N      Mean   SE Mean      T   P-Value
yield       100   13.4164    0.0101   1.63     0.053

We retain H0: μ = 13.4 at the 5% level (but only just).
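The test statistic and P-value in the output above can be checked from the summary statistics alone. The following is a minimal sketch in Python rather than MINITAB; the helper `t_upper_tail` is a hypothetical name introduced here, and it obtains the tail area by numerically integrating the t density (Simpson's rule), which is an illustrative choice and not how MINITAB computes it.

```python
import math

def t_upper_tail(t, df, steps=2000):
    """P(T > t) for Student's t with df degrees of freedom, for t >= 0.

    Integrates the t density over [0, t] with Simpson's rule
    (steps must be even) and subtracts the result from 1/2.
    """
    log_c = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
             - 0.5 * math.log(df * math.pi))

    def pdf(x):
        return math.exp(log_c - (df + 1) / 2 * math.log1p(x * x / df))

    h = t / steps
    total = pdf(0.0) + pdf(t)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * pdf(i * h)
    return 0.5 - total * h / 3

# Summary statistics reported for the wheat-yield sample.
n, xbar, s, mu0 = 100, 13.4164, 0.100740, 13.4

se = s / math.sqrt(n)                  # standard error of the mean
t_stat = (xbar - mu0) / se             # one-sample t statistic
p_value = t_upper_tail(t_stat, n - 1)  # one-sided P-value for Ha: mu > mu0

print(f"SE = {se:.4f}, T = {t_stat:.2f}, P = {p_value:.3f}")
```

The printed values should agree with the SE Mean, T and P-Value columns of the output to the precision shown there.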

Example (paired sample). An experiment was performed to test whether a chemical treatment increases the

strength of wrapping paper. Each of ten standard sheets was tested for strength before and after treatment.

Sheet   Before   After
  1      1.21     1.49
  2      1.43     1.37
  3      1.35     1.67
  4      1.51     1.50
  5      1.39     1.31
  6      1.17     1.29
  7      1.48     1.52
  8      1.42     1.37
  9      1.29     1.44
 10      1.40     1.53

MTB > Let 'diff' = 'after' - 'before'

Normal quantile plot of differences:

[Figure: Normal Probability Plot of Diff (probability scale against difference). Average: 0.084; StDev: 0.140728; N: 10. Anderson-Darling Normality Test: A-Squared: 0.334; P-Value: 0.434.]

MTB > TTest 0 'diff';

SUBC> Alternative 1.

T-Test of the Mean

Test of mu = 0.0000 vs mu > 0.0000

Variable N Mean SE Mean T P-Value

diff 10 0.0840 0.0445 1.89 0.046

At the 5% level we favour the hypothesis that there is an increase (but only just).
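As a cross-check on the paired analysis, the sketch below recomputes the differences and the t statistic from the raw data in Python. The pairing of "before" and "after" values is the one that reproduces the reported mean (0.084) and standard deviation (0.140728) of the differences; the variable names are illustrative only.

```python
import math
import statistics

# Strength of each of the ten sheets before and after treatment.
before = [1.21, 1.43, 1.35, 1.51, 1.39, 1.17, 1.48, 1.42, 1.29, 1.40]
after = [1.49, 1.37, 1.67, 1.50, 1.31, 1.29, 1.52, 1.37, 1.44, 1.53]

# Paired analysis: reduce to a one-sample problem on the differences.
diff = [round(a - b, 2) for a, b in zip(after, before)]

n = len(diff)
mean_diff = statistics.mean(diff)
sd_diff = statistics.stdev(diff)   # sample standard deviation (n - 1 divisor)
se = sd_diff / math.sqrt(n)
t_stat = mean_diff / se            # test of H0: mean difference = 0, df = n - 1

print(f"mean = {mean_diff:.4f}, SE = {se:.4f}, T = {t_stat:.2f}")
```

Because each sheet serves as its own control, only the ten differences enter the calculation, and the statistic is referred to the t(9) distribution.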

Robustness. The above procedures are robust to departures from normality, but not to outliers. They can safely be used when n > 15 for a nonnormal population, unless an outlier or clear skewness is evident. For clearly skewed data we can safely use these procedures for n > 40.


Nonnormal populations. Here are three strategies for dealing with small samples:

If the population distribution is identified, use an appropriate model. (Ask an expert!)

Remove skewness with a log transformation (say), but remember that you will now be performing

inference on the mean of log(X), where X is the quantity sampled; this is not the same as log(mean of X).

However, it is okay to invert the transformation at the confidence limits, but the resulting interval will

usually be nonsymmetric.

Use a nonparametric test, e.g. the sign test.
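The remark about inverting a log transformation can be written out as follows. This is a sketch only; the symbols a and b (the limits of the t-interval computed from the log-transformed sample) are introduced here for illustration.

```latex
% (a, b): confidence limits for E[log X], computed from the logged data.
% Since exp is increasing, the limits can be mapped back to the original scale:
\[
  a \le \mathrm{E}[\log X] \le b
  \quad\Longleftrightarrow\quad
  e^{a} \le e^{\mathrm{E}[\log X]} \le e^{b}.
\]
% The back-transformed interval covers the geometric mean of X,
% which by Jensen's inequality is no larger than the ordinary mean:
\[
  e^{\mathrm{E}[\log X]} \le \mathrm{E}[X].
\]
```

So the interval (e^a, e^b) is a valid interval on the original scale, but for the geometric mean of X rather than for the mean of X, and it is not symmetric about its point estimate.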

Sign test. This nonparametric (or distribution-free) test concerns the median of a population. Consider the sample

of differences in the paper-strength example. Firstly, discard zero differences; there are none:

0.28, -0.06, 0.32, -0.01, -0.08, 0.12, 0.04, -0.05, 0.15, 0.13.

Let n+ be the number of positive differences. We shall reject H0: M = 0, where M is the (population) median

difference, in favour of Ha : M > 0 if n+ is too large. Under H0 , n+ has a binomial B(n,½) distribution (where

here n=10), so a P-value can easily be calculated.

MTB > STest 0.0 'diff';
SUBC> Alternative 1.

Sign Test for Median

Sign test of median = 0.0000 vs GT 0.0000

          N   BELOW   EQUAL   ABOVE   P-VALUE    MEDIAN
diff     10       4       0       6    0.3770   0.08000

At the 5% level we retain the hypothesis that there is no increase.
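The P-value of the sign test is an exact binomial tail probability, so it can be verified by hand. A minimal Python sketch, using only the ten differences listed above (variable names are illustrative):

```python
from math import comb

diff = [0.28, -0.06, 0.32, -0.01, -0.08, 0.12, 0.04, -0.05, 0.15, 0.13]

# Discard zero differences (there are none here), then count the positives.
nonzero = [d for d in diff if d != 0]
n = len(nonzero)
n_plus = sum(d > 0 for d in nonzero)

# Under H0: M = 0, n_plus ~ B(n, 1/2); the one-sided P-value for Ha: M > 0
# is P(n_plus >= observed value).
p_value = sum(comb(n, k) for k in range(n_plus, n + 1)) / 2 ** n

print(f"n+ = {n_plus} of {n}, P = {p_value:.4f}")
```

With six positive differences out of ten, the tail probability is 386/1024, matching the P-VALUE column of the output.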

Review of two-sample t-procedures. Suppose we have two independent samples, of sizes n1 and n2, from two distinct normal populations. Then, in an obvious notation, a confidence interval for μ1 - μ2 is given by

$$(\bar{x}_1 - \bar{x}_2) \pm t_{\alpha/2}\,\mathrm{SE}, \qquad \mathrm{SE} = \sqrt{\frac{s_1^2}{n_1} + \frac{s_2^2}{n_2}},$$

where $t_{\alpha/2}$ is the appropriate critical point of the t(k) distribution and k is the smaller of n1 - 1 and n2 - 1.

A test of H0: μ1 - μ2 = D0 is based on the statistic

$$T = \frac{(\bar{x}_1 - \bar{x}_2) - D_0}{\mathrm{SE}}$$

which, under H0, has a t(k) distribution. P-values can thus be calculated for μ1 - μ2 < D0, or μ1 - μ2 > D0. There is a similar test for μ1 - μ2 ≠ D0.

Two-sample procedures are highly robust. When the population distributions have similar shape, we only require

n1 , n2 > 5. Otherwise, use the one-sample guidelines with n replaced by n1 + n2 – 2. Also, n1 = n2 is

recommended.

Pooling. If the populations have the same standard deviation σ, we can use the pooled estimator of σ², namely

$$s_p^2 = \frac{(n_1 - 1)s_1^2 + (n_2 - 1)s_2^2}{n_1 + n_2 - 2}.$$


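The above procedures can then be made more powerful using

$$\mathrm{SE}_p = \sqrt{s_p^2\left(\frac{1}{n_1} + \frac{1}{n_2}\right)} \quad\text{in place of}\quad \mathrm{SE} = \sqrt{\frac{s_1^2}{n_1} + \frac{s_2^2}{n_2}}.$$

All procedures are then based on the t(n1 + n2 - 2) distribution.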

Warning. Pooled procedures are not robust to departures from the equal variance assumption, particularly when

the sample sizes are markedly different.

Example. Consider the wheat yield example. Suppose now that the yield was also recorded for 100 different farms

which used a new fertilizer treatment. Does the new treatment provide any significant improvement in yield?

13.46  13.36  13.51  13.61  13.47  13.35  13.51  13.51  13.57  13.61
13.67  13.16  13.60  13.59  13.32  13.51  13.42  13.43  13.21  13.41
13.58  13.56  13.43  13.62  13.42  13.28  13.46  13.29  13.49  13.40
13.29  13.60  13.59  13.49  13.51  13.63  13.32  13.41  13.38  13.42
13.53  13.37  13.37  13.57  13.37  13.54  13.36  13.37  13.40  13.23
13.35  13.50  13.40  13.41  13.45  13.41  13.49  13.27  13.64  13.37
13.55  13.51  13.51  13.38  13.41  13.31  13.47  13.58  13.35  13.36

Normal quantile plot of new yield:

[Figure: Normal Probability Plot of NEW YIELD (probability scale against new yield). Average: 13.4464; StDev: 0.115115; N: 100. Anderson-Darling Normality Test: A-Squared: 0.240; P-Value: 0.770.]

MTB > TwoSample 95.0 'yield' 'newyield';
SUBC> Alternative -1.

Twosample T for yield vs newyield

            N     Mean   StDev   SE Mean
yield     100   13.416   0.101     0.010
newyield  100   13.446   0.115     0.012

95% CI for mu_yield-mu_newyield:(-0.06,0.00)

T-Test (= vs <): T= -1.96 P=0.026 DF=194

MTB > TwoSample 95.0 'yield' 'newyield';
SUBC> Alternative -1;
SUBC> Pooled.

95% CI for mu_yield-mu_newyield:(-0.06,0.00)

T-Test (= vs <): T= -1.96 P=0.026 DF=198

Both use Pooled StDev = 0.108
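The two-sample quantities can be reproduced from the summary statistics of the two normal probability plots. The sketch below is in Python, with illustrative variable names. It also computes the Welch-Satterthwaite degrees of freedom, which is not described in these notes; it is included on the assumption that it explains the DF=194 reported in the unpooled output, where the conservative rule would give k = 99.

```python
import math

# Summary statistics for the two independent samples.
n1, mean1, s1 = 100, 13.4164, 0.100740   # yield
n2, mean2, s2 = 100, 13.4464, 0.115115   # newyield

# Unpooled standard error and t statistic for H0: mu1 - mu2 = 0.
v1, v2 = s1 ** 2 / n1, s2 ** 2 / n2
se = math.sqrt(v1 + v2)
t_stat = (mean1 - mean2) / se

# Degrees of freedom: conservative rule from the notes, and the
# Welch-Satterthwaite approximation (an assumption about the software).
df_conservative = min(n1 - 1, n2 - 1)
df_welch = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))

# Pooled procedure: pooled SD, pooled SE, and its degrees of freedom.
sp = math.sqrt(((n1 - 1) * s1 ** 2 + (n2 - 1) * s2 ** 2) / (n1 + n2 - 2))
se_pooled = sp * math.sqrt(1 / n1 + 1 / n2)
t_pooled = (mean1 - mean2) / se_pooled
df_pooled = n1 + n2 - 2

print(f"unpooled: T = {t_stat:.2f}, df = {df_conservative} or {df_welch:.1f}")
print(f"pooled:   T = {t_pooled:.2f}, df = {df_pooled}, sp = {sp:.3f}")
```

Because n1 = n2 here, the pooled and unpooled standard errors coincide, which is why both outputs report the same T; only the degrees of freedom differ.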

New-treatment yield data, continued (the remaining 30 of the 100 values):

13.66  13.61  13.38  13.54  13.53  13.33  13.30  13.44  13.43  13.48
13.47  13.36  13.39  13.43  13.36  13.48  13.29  13.17  13.34  13.48
13.56  13.43  13.51  13.23  13.72  13.47  13.55  13.55  13.39  13.48

Power and Tests for Proportions

(i) Power

Consider a normal population with unknown mean μ; to be specific, take our wheat-yield example, where the yield for 100 farms in the Murray Valley was recorded. We shall test

H0: μ = 13.4

Ha: μ > 13.4

Two tests are proposed. The first is a t-test which rejects H0 if X̄ > 13.4173. The second is a sign test which

rejects H0 if n+ ≥ 59, where n+ is the number of data values which exceed 13.4 (note that since the population is normal,

the population mean and median are the same). It is claimed that the t-test is more powerful than the sign test. Can

we make this statement more precise?

The power of a test is the probability of correctly rejecting H0; the greater the power, the better the test does what

it is meant to. More precisely, the power (function) p of a test (concerning μ) is given by

p(μ0) = P(Reject H0 when μ = μ0)

So, in claiming that the t-test is more powerful than the sign test, we are saying that the probability of correctly

rejecting H0 is greater for the t-test, no matter what the value of μ (for μ > 13.4); the t-test always does better

under Ha.

When comparing the power of two or more tests, we should ensure that they have the same significance level (the

probability of incorrectly rejecting H0). In our example, the tests have been constructed so that the significance

level for both is approximately 4.5% :

α = p(13.4) = 0.045
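The comparison of the two power functions can be sketched numerically. The Python code below makes two simplifying assumptions that are not in the notes: the population standard deviation is treated as known and equal to 0.1 (close to the sample value 0.1007), and the t-test is approximated by a normal calculation on the sample mean. Under these assumptions the sizes of the two tests are only approximately 4.5%. The function names are hypothetical.

```python
from math import comb, sqrt
from statistics import NormalDist

N = 100          # sample size
MU0 = 13.4       # null value of the mean (and median)
SIGMA = 0.1      # ASSUMED known population SD (hypothetical)
CUTOFF = 13.4173 # t-test rejects H0 when the sample mean exceeds this
N_PLUS_MIN = 59  # sign test rejects H0 when n+ >= this

def power_t(mu):
    """P(sample mean > CUTOFF) when the true mean is mu (normal approximation)."""
    return 1 - NormalDist(mu, SIGMA / sqrt(N)).cdf(CUTOFF)

def power_sign(mu):
    """P(n+ >= N_PLUS_MIN) when the true mean is mu; n+ ~ B(N, p)."""
    p = 1 - NormalDist(mu, SIGMA).cdf(MU0)  # chance one observation exceeds MU0
    return sum(comb(N, k) * p ** k * (1 - p) ** (N - k)
               for k in range(N_PLUS_MIN, N + 1))

for mu in (13.40, 13.41, 13.42, 13.43, 13.44):
    print(f"mu = {mu:.2f}: t-test {power_t(mu):.3f}, sign test {power_sign(mu):.3f}")
```

At μ = 13.4 both functions return the significance level of the corresponding test; for each alternative value shown, the first column should exceed the second, which is the sense in which the t-test is more powerful.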

Inference as decision. So far we have adopted a philosophy that significance tests provide methods for assessing

the strength of evidence against the null hypothesis by way of a P-value. We think of the predominant significance

level of 5% (α = 0.05) as a description of good evidence against H0.

Another philosophy regards the goal of inference as being a decision between H0 and Ha. This is clearly

appropriate in the case of acceptance sampling. For example, on the basis of testing a small sample of ball

bearings, we decide whether or not to accept the entire shipment:

H0 : shipment meets our standards

Ha : shipment doesn’t meet our standards

We hope our decision will be correct, but sometimes we will be wrong.

From this "decision theory" standpoint, there are two types of errors: a Type I error, where we reject H0 when it is

true, and a Type II error, where we reject Ha when it is true. We always choose H0 so that the Type I error is the

more serious, and we fix the significance level to be the probability of a Type I error. Among all tests with the

same significance level, we try to choose one which makes the probability of a Type II error as small as possible;

this is equivalent to choosing the test with greatest power. We can always reduce the probability of a Type II error

by choosing a larger sample.

(ii) Review of Tests for Proportions

We wish to estimate the proportion p of individuals in a large population that have some specified characteristic.

Suppose that, of a random sample of size n from the population, there are X with the specified characteristic. Then p̂ = X/n is an unbiased estimator for p and a confidence interval for p is given by

$$\hat{p} \pm z_{\alpha/2}\,\mathrm{SE}, \qquad \mathrm{SE} = \sqrt{\frac{\hat{p}(1 - \hat{p})}{n}},$$

where $z_{\alpha/2}$ is the appropriate critical point of the standard normal distribution.


A test of H0: p = p0 is based on the statistic

$$Z = \frac{\hat{p} - p_0}{\mathrm{SE}}$$

which, under H0, has an approximate standard normal distribution. P-values can thus be calculated for the usual alternatives p < p0, p > p0, or p ≠ p0.
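A small numerical illustration of the interval and the test statistic, in Python. The data (58 individuals with the characteristic in a sample of 100) and the null value 0.5 are hypothetical, chosen only to show the arithmetic. The sketch uses the standard error based on p̂, as defined above, for both the interval and Z; note that many texts instead use the standard error evaluated at p0 for the test.

```python
from math import sqrt
from statistics import NormalDist

# HYPOTHETICAL data: x individuals with the characteristic out of n sampled.
x, n = 58, 100
p0 = 0.5        # hypothetical null value
conf = 0.95

p_hat = x / n
se = sqrt(p_hat * (1 - p_hat) / n)

# Confidence interval: p_hat +/- z * SE, with z the upper alpha/2 point.
z_crit = NormalDist().inv_cdf(1 - (1 - conf) / 2)
lower, upper = p_hat - z_crit * se, p_hat + z_crit * se

# Test statistic and one-sided P-value for the alternative p > p0.
z_stat = (p_hat - p0) / se
p_value = 1 - NormalDist().cdf(z_stat)

print(f"p_hat = {p_hat:.2f}, 95% CI = ({lower:.3f}, {upper:.3f})")
print(f"Z = {z_stat:.3f}, one-sided P = {p_value:.4f}")
```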

Choosing a sample size. In choosing n to obtain a prescribed margin of error B, we would like to set

$$B = z_{\alpha/2}\sqrt{\frac{\hat{p}(1 - \hat{p})}{n}}$$

and solve for n. But p̂ is not known before the data are gathered. We might therefore guess the population proportion as p* and then set

$$n = \left(\frac{z_{\alpha/2}}{B}\right)^2 p^*(1 - p^*).$$

However, since the maximum of p*(1 - p*) over 0 < p* < 1 is 1/4, attained when p* = 1/2, the margin of error will be less than or equal to B if p* is chosen to be 1/2; this gives the conservative estimate

$$n = \frac{z_{\alpha/2}^2}{(2B)^2}.$$

If p is far from 1/2, this procedure may select a sample size which is much larger than needed.
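The sample-size rule is easy to put into code. The following Python sketch rounds up to the next whole observation; the function name `sample_size` and the example margin of 0.03 are illustrative choices, not taken from the notes.

```python
from math import ceil
from statistics import NormalDist

def sample_size(margin, conf=0.95, p_guess=0.5):
    """Smallest n with z * sqrt(p_guess * (1 - p_guess) / n) <= margin.

    p_guess = 0.5 gives the conservative estimate (z / (2 * margin)) ** 2.
    """
    z = NormalDist().inv_cdf(1 - (1 - conf) / 2)
    return ceil((z / margin) ** 2 * p_guess * (1 - p_guess))

# Conservative choice versus a guessed proportion far from 1/2.
n_conservative = sample_size(0.03)
n_guess = sample_size(0.03, p_guess=0.1)
print(n_conservative, n_guess)
```

For a 3% margin at 95% confidence, the conservative rule asks for nearly three times as many observations as a guess of p* = 0.1 would, which illustrates the closing remark above.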

If we have a basic knowledge of the underlying distribution of a variable, then we can make predictions about

how, in repeated samples of equal size, this particular statistic will "behave," that is, how it is distributed. For

example, if we draw 100 random samples of 100 adults each from the general population, and compute the mean

height in each sample, then the distribution of the standardized means across samples will likely approximate the

normal distribution (to be precise, Student's t distribution with 99 degrees of freedom). Now imagine that we take

an additional sample in Sydney where we suspect that people are taller than the average population. If the mean

height in that sample falls outside the upper 95% tail area of the t distribution then we conclude that, indeed, the

people of Sydney are taller than the average population.

In the above example we relied on our knowledge that, in repeated samples of equal size, the standardized means

(for height) will be distributed following the t distribution (with a particular mean and variance). However, this

will only be true if in the population the variable of interest (height in our example) is normally distributed, that is,

if the distribution of people of particular heights follows the normal distribution (the bell-shape distribution).

For many variables of interest, we simply do not know for sure that this is the case. For example, is income

distributed normally in the population? -- probably not. The incidence rates of rare diseases are not normally

distributed in the population, the number of car accidents is also not normally distributed, and neither are very

many other variables in which a researcher might be interested.

Sample size. Another factor that often limits the applicability of tests based on the assumption that the sampling

distribution is normal is the size of the sample of data available for the analysis (sample size; n). We can assume

that the sampling distribution is normal even if we are not sure that the distribution of the variable in the

population is normal, as long as our sample is large enough (e.g., 100 or more observations). However, if our

sample is very small, then those tests can be used only if we are sure that the variable is normally distributed, and

there is no way to test this assumption if the sample is small.

Problems in measurement. Applications of tests that are based on the normality assumptions are further limited

by a lack of precise measurement. For example, let us consider a study where grade point average (GPA) is

measured as the major variable of interest. Is an A average twice as good as a C average? Is the difference between

a B and an A average comparable to the difference between a D and a C average? Somehow, the GPA is a crude

measure of scholastic accomplishments that only allows us to establish a rank ordering of students from "good"

students to "poor" students. This general measurement issue is usually discussed in statistics textbooks in terms of

types of measurement or scale of measurement. Without going into too much detail, most common statistical

techniques such as analysis of variance (and t-tests), regression, etc. assume that the underlying measurements are at least of interval scale, meaning that equally spaced intervals on the scale can be compared in a meaningful manner (e.g., B minus A is equal to D minus C). However, as in our example, this assumption is very often not tenable, and the data represent a rank ordering of observations (ordinal) rather than precise measurements.

Parametric and nonparametric methods. Hopefully, after this somewhat lengthy introduction, the need is

evident for statistical procedures that allow us to process data of "low quality," from small samples, on variables

about which nothing is known (concerning their distribution). Specifically, nonparametric methods were

developed to be used in cases when the researcher knows nothing about the parameters of the variable of interest

in the population (hence the name nonparametric). In more technical terms, nonparametric methods do not rely on

the estimation of parameters (such as the mean or the standard deviation) describing the distribution of the variable

of interest in the population. Therefore, these methods are also sometimes (and more appropriately) called

parameter-free methods or distribution-free methods.

Brief Overview of Nonparametric Methods

Basically, there is at least one nonparametric equivalent for each parametric general type of test. In general, these

tests fall into the following categories:

Tests of differences between groups (independent samples);

Tests of differences between variables (dependent samples);

Tests of relationships between variables.

Differences between independent groups. Usually, when we have two samples that we want to compare

concerning their mean value for some variable of interest, we would use the t-test for independent samples;

nonparametric alternatives for this test are the Wald-Wolfowitz runs test, the Mann-Whitney U test, and the

Kolmogorov-Smirnov two-sample test. If we have multiple groups, we would use analysis of variance (ANOVA); the nonparametric equivalents to this method are the Kruskal-Wallis analysis of ranks and the Median test.

Differences between dependent groups. If we want to compare two variables measured in the same sample we

would customarily use the t-test for dependent samples (for example, if we wanted to compare students' math skills

at the beginning of the semester with their skills at the end of the semester). Nonparametric alternatives to this test

are the Sign test and Wilcoxon's matched pairs test. If the variables of interest are dichotomous in nature (i.e.,

"pass" vs. "no pass") then McNemar's Chi-square test is appropriate. If there are more than two variables that were

measured in the same sample, then we would customarily use repeated measures ANOVA. Nonparametric

alternatives to this method are Friedman's two-way analysis of variance and Cochran Q test (if the variable was

measured in terms of categories, e.g., "passed" vs. "failed"). Cochran Q is particularly useful for measuring

changes in frequencies (proportions) across time.

When to Use Which Method

It is not easy to give simple advice concerning the use of nonparametric procedures. Each nonparametric

procedure has its peculiar sensitivities and blind spots. For example, the Kolmogorov-Smirnov two-sample test is

not only sensitive to differences in the location of distributions (for example, differences in means) but is also

greatly affected by differences in their shapes. The Wilcoxon matched pairs test assumes that one can rank order

the magnitude of differences in matched observations in a meaningful manner. If this is not the case, one should

rather use the Sign test. In general, if the result of a study is important (e.g., does a very expensive and painful

drug therapy help people get better?), then it is always advisable to run different nonparametric tests; should

discrepancies in the results occur contingent upon which test is used, one should try to understand why some tests

give different results. On the other hand, nonparametric statistics are less statistically powerful (sensitive) than

their parametric counterparts, and if it is important to detect even small effects (e.g., is this food additive harmful

to people?) one should be very careful in the choice of a test statistic.

Large data sets and nonparametric methods. Nonparametric methods are most appropriate when the sample

sizes are small. When the data set is large (e.g., n > 100) it often makes little sense to use nonparametric statistics

at all. When the samples become very large, then the sample means will follow the normal distribution even if the

respective variable is not normally distributed in the population, or is not measured very well. Thus, parametric

methods, which are usually much more sensitive (i.e., have more statistical power) are in most cases appropriate

for large samples. However, the tests of significance of many of the nonparametric statistics described here are

based on asymptotic (large sample) theory; therefore, meaningful tests can often not be performed if the sample

sizes become too small.


Nonparametric Correlations

Relationships between variables. To express a relationship between two variables one usually computes the

correlation coefficient. Nonparametric equivalents to the standard correlation coefficient are Spearman R, Kendall

Tau, and coefficient Gamma. If the two variables of interest are categorical in

nature (e.g., "passed" vs. "failed" by "male" vs. "female") appropriate nonparametric statistics for testing the

relationship between the two variables are the Chi-square test, the Phi coefficient, and the Fisher exact test. In

addition, a simultaneous test for relationships between multiple cases is available: Kendall coefficient of

concordance. This test is often used for expressing inter-rater agreement among independent judges who are rating

(ranking) the same stimuli.

The following are three types of commonly used nonparametric correlation coefficients (Spearman R, Kendall

Tau, and Gamma coefficients). Note that the chi-square statistic computed for two-way frequency tables also

provides a careful measure of a relation between the two (tabulated) variables, and unlike the correlation measures

listed below, it can be used for variables that are measured on a simple nominal scale.

Spearman R. Spearman R (Siegel & Castellan, 1988) assumes that the variables under consideration were

measured on at least an ordinal (rank order) scale, that is, that the individual observations can be ranked into two

ordered series. Spearman R can be thought of as the regular Pearson product moment correlation coefficient, that

is, in terms of proportion of variability accounted for, except that Spearman R is computed from ranks.

Kendall tau. Kendall tau is equivalent to Spearman R with regard to the underlying assumptions. It is also

comparable in terms of its statistical power. However, Spearman R and Kendall tau are usually not identical in

magnitude because their underlying logic as well as their computational formulas are very different. Siegel and

Castellan (1988) express the relationship of the two measures in terms of the inequality:

-1 ≤ 3 * Kendall tau - 2 * Spearman R ≤ 1

More importantly, Kendall tau and Spearman R imply different interpretations: Spearman R can be thought of as

the regular Pearson product moment correlation coefficient, that is, in terms of proportion of variability accounted

for, except that Spearman R is computed from ranks. Kendall tau, on the other hand, represents a probability, that

is, it is the difference between the probability that in the observed data the two variables are in the same order

versus the probability that the two variables are in different orders.
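To make the two interpretations concrete, here is a minimal Python sketch that computes both coefficients for a small data set without ties. The data are hypothetical, and the functions use the textbook no-ties formulas (rank differences for Spearman R, concordant minus discordant pairs for Kendall tau); they are not intended for data with tied values.

```python
def ranks(values):
    """Ranks 1..n of the values, assuming there are no ties."""
    order = sorted(range(len(values)), key=values.__getitem__)
    result = [0] * len(values)
    for rank, index in enumerate(order, start=1):
        result[index] = rank
    return result

def spearman_r(x, y):
    """Spearman R via 1 - 6 * sum(d^2) / (n (n^2 - 1)), valid without ties."""
    n = len(x)
    d2 = sum((a - b) ** 2 for a, b in zip(ranks(x), ranks(y)))
    return 1 - 6 * d2 / (n * (n * n - 1))

def kendall_tau(x, y):
    """(concordant pairs - discordant pairs) / (number of pairs), no ties."""
    n = len(x)
    score = 0
    for i in range(n):
        for j in range(i + 1, n):
            product = (x[i] - x[j]) * (y[i] - y[j])
            score += (product > 0) - (product < 0)
    return score / (n * (n - 1) / 2)

# HYPOTHETICAL data: two rankings of five items.
x = [1, 2, 3, 4, 5]
y = [2, 1, 4, 3, 5]

r = spearman_r(x, y)
tau = kendall_tau(x, y)
print(f"Spearman R = {r:.2f}, Kendall tau = {tau:.2f}, 3*tau - 2*R = {3 * tau - 2 * r:.2f}")
```

Here 8 of the 10 pairs are in the same order and 2 are reversed, so tau is the difference of the two proportions, 0.8 - 0.2; the two coefficients differ in magnitude but satisfy the inequality quoted above.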

Gamma. The Gamma statistic (Siegel & Castellan, 1988) is preferable to Spearman R or Kendall tau when the

data contain many tied observations. In terms of the underlying assumptions, Gamma is equivalent to Spearman R

or Kendall tau; in terms of its interpretation and computation it is more similar to Kendall tau than Spearman R. In

short, Gamma is also a probability; specifically, it is computed as the probability that the rank orderings of the two variables agree minus the probability that they disagree, divided by 1 minus the

probability of ties. Thus, Gamma is basically equivalent to Kendall tau, except that ties are explicitly taken into

account.
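The description of Gamma translates directly into a count over pairs of observations. The Python sketch below uses hypothetical data with several ties; the function name `gamma_statistic` is illustrative. Pairs tied on either variable are simply left out of the denominator, which is equivalent to dividing by 1 minus the probability of ties.

```python
def gamma_statistic(x, y):
    """(C - D) / (C + D), where C and D count concordant and discordant pairs.

    Pairs tied on x or on y contribute to neither count.
    """
    concordant = discordant = 0
    n = len(x)
    for i in range(n):
        for j in range(i + 1, n):
            product = (x[i] - x[j]) * (y[i] - y[j])
            if product > 0:
                concordant += 1
            elif product < 0:
                discordant += 1
    return (concordant - discordant) / (concordant + discordant)

# HYPOTHETICAL ordinal data with many tied values.
x = [1, 1, 2, 2, 3, 3]
y = [2, 1, 1, 3, 2, 3]

g = gamma_statistic(x, y)
print(f"Gamma = {g:.4f}")
```

Of the 15 pairs, 7 agree in order, 2 disagree and 6 are tied, so Gamma is (7/15 - 2/15) / (1 - 6/15) = 5/9.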