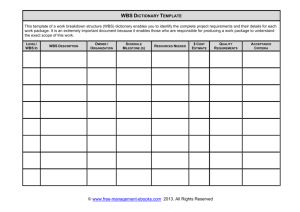

Work breakdown structure

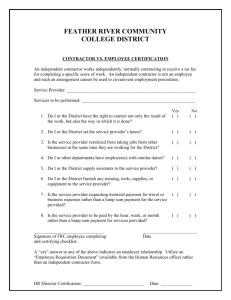

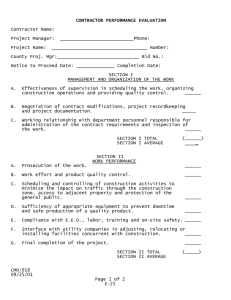
