1. INTRODUCTION

1.1 A Brief Introduction to Cryptography:

Cryptography is the science of using mathematics to encrypt and decrypt

data. Cryptography enables you to store sensitive information or transmit it across

insecure networks (like the Internet) so that it cannot be read by anyone except the

intended recipient.

Cryptography might be summed up as the study of techniques and

applications that depend on the existence of difficult problems. Cryptology (from

the Greek kryptós lógos, meaning “hidden word”) is the discipline of

cryptography and cryptanalysis combined. To most people, cryptography is

concerned with keeping communications private. Indeed, the protection of

sensitive communications has been the emphasis of cryptography throughout much

of its history. However, this is only one part of today’s cryptography.

As we move into an information society, the technological means for global

surveillance of millions of individual people are becoming available to major

governments. Cryptography has become one of the main tools for privacy, trust,

access control, electronic payments, corporate security, and countless other fields.

Cryptography is no longer a purely military matter reserved for specialists.

Encryption is the transformation of data into a form that is as close to

impossible as possible to read without the appropriate knowledge (a key). Its

purpose is to ensure privacy by keeping information hidden from anyone for whom

it is not intended, even those who have access to the encrypted data.

Decryption is the reverse of encryption; it is the transformation of encrypted

data back into an intelligible form.

Encryption and decryption generally require the use of some secret

information, referred to as a key. For some encryption mechanisms, the same key

is used for both encryption and decryption; for other mechanisms, the keys used

for encryption and decryption are different.

Today’s cryptography is more than encryption and decryption.

Authentication is as fundamentally a part of our lives as privacy. We use

authentication throughout our everyday lives – when we sign our name to some

document for instance – and, as we move to a world where our decisions and

agreements are communicated electronically, we need to have electronic

techniques for providing authentication.

Cryptography provides mechanisms for such procedures. A digital signature

binds a document to the possessor of a particular key, while a digital timestamp

binds a document to its creation at a particular time. These cryptographic

mechanisms can be used to control access to a shared disk drive, a high security

installation, or a pay-per-view TV channel.

The field of cryptography encompasses other uses as well. With just a few

basic cryptographic tools, it is possible to build elaborate schemes and protocols

that allow us to pay using electronic money, to prove we know certain information

without revealing the information itself, and to share a secret quantity in such a

way that a subset of the shares can reconstruct the secret.

While modern cryptography is growing increasingly diverse, cryptography

is fundamentally based on problems that are difficult to solve. A problem may be

difficult because its solution requires some secret knowledge, such as decrypting

an encrypted message or signing some digital document. The problem may also be

hard because it is intrinsically difficult to complete, such as finding a message that

produces a given hash value.

1.2 History of cryptography and Data Encryption:

The origin of cryptography probably goes back to the very beginning of

human existence, as people tried to learn how to communicate. They consequently

had to find means to guarantee secrecy as part of their communications. However,

the first deliberate use of technical methods to encipher messages may be

attributed to the ancient Greeks, around the 6th century BC: a stick named the “scytale” was

used. The sender would roll a strip of paper around the stick and write his message

longitudinally on it. Then, he’d unfold the paper and send it over to the addressee.

Decrypting the message without knowledge of the stick’s width – acting here as a

secret key – was meant to be impossible. Later, Roman armies used Caesar’s

cipher code to communicate (a three-letter alphabet shift).

The next 19 centuries were devoted to creating more or less

clever experimental enciphering techniques, whose security actually relied on how

much trust users would grant them. During the 19th century, Kerckhoffs wrote the

principles of modern cryptography. One of those principles stated that the security of a

cryptographic system should not rely on the secrecy of the cryptographic process itself but on the

key that was used.

So, from that point, cryptographic systems were expected to meet those

requirements. However, existing systems still lacked mathematical background,

and therefore tools to measure or benchmark their resistance to attacks. Better still

would be to reach cryptography’s ultimate goal and find a 100%

unconditionally safe system. In 1948 and 1949, a scientific foundation was added

to cryptography by two papers of Claude Shannon: “A Mathematical Theory of

Communication” and, above all, “Communication Theory of Secrecy Systems”.

Those articles swept away hopes and prejudices. Shannon proved that Vernam’s cipher,

proposed some thirty years earlier and also known as the One-Time Pad, was

the only unconditionally safe system that could ever exist. Unfortunately, that

system is impractical for most uses, because it needs a truly random key that is as long as the message and is never reused. This is the reason why, nowadays, evaluation of

security systems is based on computational security instead. One claims a secret

key cipher is safe if no known attack’s complexity is any better than a full search

on all possible keys.

1.3 Importance of cryptography in Modern World:

Cryptography allows people to carry over the confidence found in the

physical world to the electronic world, thus allowing people to do business

electronically without worries of deceit and deception. Every day hundreds of

thousands of people interact electronically, whether it is through e-mail, e-commerce (business conducted over the Internet), ATMs, or cellular

phones. The perpetual increase of information transmitted electronically has led

to an increased reliance on cryptography.

Cryptography on the Internet: The Internet, composed of millions of

interconnected computers, allows nearly instantaneous communication and transfer

of information around the world. People use e-mail to correspond with one

another. The World Wide Web is used for online business, data distribution,

marketing, research, learning, and a myriad of other activities.

Cryptography makes secure web sites and electronic safe transmissions

possible. For a web site to be secure all of the data transmitted between the

computers where the data is kept and where it is received must be encrypted. This

allows people to do online banking, online trading, and make online purchases

with their credit cards, without worrying that any of their account information is

being compromised. Cryptography is very important to the continued growth of

the Internet and electronic commerce.

E-mail: It is transmitted in plain text over unknown pathways and resides

for various periods of time on computer files over which you have no control.

Whether you’re planning a political campaign, discussing your finances, having an

affair, completing a business deal, or engaging in some totally innocuous activity,

your messages have less privacy than if you sent all of your written

correspondence on postcards.

The nature of the Internet and the electronic medium allows effective

scanning of message contents using sophisticated filtering software. Electronic

mail is gradually replacing conventional paper mail and messages can be easily

and automatically intercepted and scanned for interesting keywords.

Another problem with e-mail is that it is very easy to forge the identity of

the sender. The solution to these problems is to use cryptography. However, there

are restrictions on the export and use of strong cryptography, particularly in the

USA, but now gaining momentum in other countries. Furthermore, some

governments, and again the USA is the most prominent, want decryption keys

lodged with escrow agents, so that law enforcement agencies can, with appropriate

authorization, intercept and decrypt private messages. It is often claimed that this

facility is no different from powers that the government has always possessed to

wiretap telephones. There is, however, a vital difference. Citizens are now being

asked to take action to make themselves available for surveillance.

Cryptography today involves more than encryption and decryption of

messages. It also provides mechanisms for authenticating documents using a

digital signature, which binds a document to the possessor of a particular key,

while a digital timestamp binds a document to its creation at a particular time.

These are important functions, which must take the place of equivalent manual

authentication procedures as we move into the digital age. Cryptography also plays

an important part in the developing field of digital cash and electronic funds

transfer.

The major applications for encryption may then be summarized as:

To protect privacy and confidentiality.

To transmit secure information (e.g. credit card details)

To provide authentication of the sender of a message.

To provide authentication of the time a message was sent.

E-commerce:

It is increasing at a very rapid rate. By the turn of the century, commercial

transactions on the Internet are expected to total hundreds of billions of dollars a

year. This level of activity could not be supported without cryptographic security.

It has been said that one is safer using a credit card over the Internet than within a

store or restaurant. It requires much more work to seize credit card numbers over

computer networks than it does to simply walk by a table in a restaurant and lay

hold of a credit card receipt. These levels of security, though not yet widely used,

give the means to strengthen the foundation with which e-commerce can grow.

People use e-mail to conduct personal and business matters on a daily basis.

E-mail has no physical form and may exist electronically in more than one place at

a time. This poses a potential problem as it increases the opportunity for an

eavesdropper to get a hold of the transmission. Encryption protects e-mail by

rendering it very difficult to read by any unintended party. Digital signatures can

also be used to authenticate the origin and the content of an e-mail message.

Authentication:

In some cases, cryptography allows you to have more confidence in your

electronic transactions than you do in real life transactions. For example, signing

documents in real life still leaves one vulnerable to the following scenario. After

signing your will, agreeing to what is put forth in the document, someone can

change that document and your signature is still attached. In the electronic world

this type of falsification is much more difficult because digital signatures are built

using the contents of the document being signed.

Access Control:

Cryptography is also used to regulate access to satellite and cable TV.

Cable TV is set up so people can watch only the channels they pay for. Since there

is a direct line from the Cable Company to each individual subscriber’s home, the

Cable Company will only send those channels that are paid for. Many companies

offer pay-per-view channels to their subscribers. Pay-per-view cable allows cable

subscribers to “rent” a movie directly through the cable box. What the cable box

does is decode the incoming movie, but not until the movie has been “rented.” If

a person wants to watch a pay-per-view movie, he/she calls the Cable Company

and requests it. In return, the Cable Company sends out a signal to the subscriber’s

cable box, which unscrambles (decrypts) the requested movie.

Satellite TV works slightly differently since the satellite TV companies do

not have a direct connection to each individual subscriber’s home. This means that

anyone with a satellite dish can pick up the signals. To alleviate the problem of

people getting free TV, they use cryptography. The trick is to allow only those

who have paid for their service to unscramble the transmission; this is done with

receivers (“unscramblers”). Each subscriber is given a receiver; the satellite

transmits signals that can only be unscrambled by such a receiver (ideally). Pay-per-view works in essentially the same way as it does for regular cable TV.

As seen, cryptography is widely used. Not only is it used over the Internet,

but also it is used in phones, televisions, and a variety of other common household

items. Without cryptography, hackers could get into our e-mail, listen in on our

phone conversations, tap into our cable companies and acquire free cable service,

or break into our bank/brokerage accounts.

2. BASIC TECHNIQUES AND ALGORITHMS

A cryptographic algorithm, or cipher, is a mathematical function used in

the encryption and decryption process. A cryptographic algorithm works in

combination with a key (a word, number, or phrase) to encrypt the plain text.

The same plain text encrypts to different cipher text with different keys. The

security of encrypted data is entirely dependent on two things: the strength of the

cryptographic algorithm and the secrecy of the key. A cryptographic algorithm,

plus all possible keys and all the protocols that make it work comprise a

cryptosystem.

The method of encryption and decryption is called a cipher. Some

cryptographic methods rely on the secrecy of the algorithms; such algorithms are

only of historical interest and are not adequate for real-world needs. All modern

algorithms use a key to control encryption and decryption; a message can be

decrypted only if the key matches the encryption key.

2.1 Symmetric Key Vs. Asymmetric Key Ciphers :

There are two classes of key-based encryption algorithms, symmetric (or

secret-key) and asymmetric (or public-key) algorithms. The difference is that

symmetric algorithms use the same key for encryption and decryption (or the

decryption key is easily derived from the encryption key), whereas asymmetric

algorithms use a different key for encryption and decryption, and the decryption

key cannot be derived from the encryption key.

Symmetric algorithms can be divided into stream ciphers and block

ciphers. Stream ciphers can encrypt a single bit of plain text at a time, whereas

block ciphers take a number of bits (typically 64 or 128 bits in modern ciphers), and

encrypt them as a single unit.

Asymmetric ciphers (also called public-key algorithms or generally public-key

cryptography) permit the encryption key to be public (it can even be published in

a newspaper), allowing anyone to encrypt with the key, whereas only the proper

recipient (who knows the decryption key) can decrypt the message. The encryption

key is also called the public key and the decryption key the private key or secret

key.

Modern cryptographic algorithms are no longer pencil-and-paper ciphers.

Strong cryptographic algorithms are designed to be executed by computers or

specialized hardware devices. In most applications, cryptography is done in

computer software.

Generally, symmetric algorithms are much faster to execute on a computer

than asymmetric ones. In practice they are often used together, so that a public-key

algorithm is used to encrypt a randomly generated encryption key, and the random

key is used to encrypt the actual message using a symmetric algorithm. This is

sometimes called hybrid encryption.
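
The hybrid scheme described above can be sketched in a few lines. This is only an illustration under loud assumptions: the RSA modulus is built from two small Mersenne primes, no padding is used, and the symmetric step is a toy keystream derived from SHA-256. All function names are hypothetical; a real system would rely on a vetted library.

```python
import hashlib
import secrets

# ASSUMPTION: toy RSA key from two Mersenne primes. Far too small and too
# structured for real use, and without the padding real RSA requires.
P, Q = 2**61 - 1, 2**89 - 1
N = P * Q
E = 65537                              # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))      # private exponent (Python 3.8+)


def keystream_xor(key: bytes, data: bytes) -> bytes:
    """Toy symmetric cipher: XOR the data with a SHA-256 based keystream."""
    out = bytearray()
    for offset in range(0, len(data), 32):
        pad = hashlib.sha256(key + offset.to_bytes(8, "big")).digest()
        chunk = data[offset:offset + 32]
        out.extend(b ^ p for b, p in zip(chunk, pad))
    return bytes(out)


def hybrid_encrypt(message: bytes):
    """Encrypt a fresh random key with the public key, the message with that key."""
    session_key = secrets.token_bytes(16)                     # random 128-bit key
    wrapped = pow(int.from_bytes(session_key, "big"), E, N)   # public-key step
    return wrapped, keystream_xor(session_key, message)       # symmetric step


def hybrid_decrypt(wrapped: int, ciphertext: bytes) -> bytes:
    """Recover the session key with the private key, then undo the XOR."""
    session_key = pow(wrapped, D, N).to_bytes(16, "big")
    return keystream_xor(session_key, ciphertext)
```

Only the short session key passes through the slow public-key operation; the message itself, whatever its length, is handled by the fast symmetric step.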

The most studied symmetric cipher is probably

DES; AES has since replaced it as the most widely used encryption

algorithm. RSA is probably the best-known asymmetric encryption algorithm.

2.2 Digital Signatures :

Some public-key algorithms can be used to generate digital signatures. A

digital signature is a small amount of data that was created using some secret key,

and there is a public key that can be used to verify that the signature was really

generated using the corresponding private key. The algorithm used to generate the

signature must be such that without knowing the secret key it is not possible to

create a signature that would verify as valid.

Digital signatures are used to verify that a message really comes from the

claimed sender (assuming only the sender knows the secret key corresponding to

his/her public key). They can also be used to timestamp documents: a trusted

party signs the document and its timestamp with his/her secret key, thus testifying

that the document existed at the stated time.

Digital signatures can also be used to testify (or certify) that a public key

belongs to a particular person. This is done by signing the combination of the key

and the information about its owner with a trusted key. The digital signature by a

third party (owner of the trusted key), the public key, and information about the

owner of the public key together are often called a certificate.

The reason for trusting that third party key may again be that it was signed

by another trusted key. Eventually some key must be a root of the trust hierarchy

(that is, it is not trusted because it was signed by somebody, but because you

believe a priori that the key can be trusted). In a centralized key infrastructure

there are very few roots in the trust network (e.g., trusted government agencies;

such roots are also called certification authorities). In a distributed

infrastructure there need not be any universally accepted roots, and each party

may have different trusted roots (such as the party’s own key and any keys signed

by it). This is the web of trust concept used in, for example, PGP.

A digital signature of an arbitrary document is typically created by

computing a message digest from the document, and concatenating it with

information about the signer, a timestamp, etc. The resulting string is then

encrypted using the private key of the signer using a suitable algorithm. The

resulting encrypted block of bits is the signature. It is often distributed together

with information about the public key that was used to sign it. To verify a

signature, the recipient first determines whether it trusts that the key belongs to the

person it is supposed to belong to (using the web of trust or a priori knowledge),

and then decrypts the signature using the public key of the person. If the signature

decrypts properly and the information matches that of the message (proper

message digest etc.), the signature is accepted as valid.
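
The sign-then-verify procedure just described can be illustrated with a toy RSA key. This is a sketch, not a usable signature scheme: the modulus (a product of two Mersenne primes) is an assumption chosen for readability, the digest is simply reduced modulo N, and no padding or signer information is included. The function names are hypothetical.

```python
import hashlib

# ASSUMPTION: toy RSA parameters, far too small for real signatures.
P, Q = 2**61 - 1, 2**89 - 1
N, E = P * Q, 65537
D = pow(E, -1, (P - 1) * (Q - 1))   # signer's private exponent (Python 3.8+)


def digest_as_int(document: bytes) -> int:
    """Message digest of the document, reduced so that it fits below N."""
    return int.from_bytes(hashlib.sha256(document).digest(), "big") % N


def sign(document: bytes) -> int:
    """Apply the private key to the digest; the result is the signature."""
    return pow(digest_as_int(document), D, N)


def verify(document: bytes, signature: int) -> bool:
    """Apply the public key to the signature and compare with a fresh digest."""
    return pow(signature, E, N) == digest_as_int(document)
```

Because the signature is computed from the digest, changing even one byte of the document makes verification fail.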

Several methods for making and verifying digital signatures are freely available.

The most widely known algorithm is RSA.

2.3 Cryptographic Hash Functions :

Cryptographic hash functions are used in various contexts, for example to

compute the message digest when making a digital signature. A hash function

compresses the bits of a message to a fixed-size hash value in a way that

distributes the possible messages evenly among the possible hash values. A

cryptographic hash function does this in a way that makes it extremely difficult to

come up with a message that would hash to a particular hash value.

Cryptographic hash functions typically produce hash values of 128 or more

bits. The number of possible values (2^128) is vastly larger than the number of different messages

likely to ever be exchanged in the world. The reason for requiring more than 128

bits is based on the birthday paradox. The birthday paradox roughly states that

given a hash function mapping any message to a 128-bit hash digest, we can

expect that the same digest will be computed twice when about 2^64 randomly selected

messages have been hashed. As cheaper memory chips for computers become

available it may become necessary to require larger than 128 bit message digests

(such as 160 bits as has become standard recently).
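
The birthday effect is easy to observe on a deliberately shortened digest. The sketch below is an illustration only: it truncates SHA-256 to a small number of bits (an assumption made so that the search finishes quickly) and hashes the strings "0", "1", "2", ... until two of them collide. With a 24-bit digest a collision is expected after a few thousand messages, in line with the square-root estimate, rather than after millions.

```python
import hashlib
from itertools import count


def truncated_hash(message: bytes, bits: int) -> int:
    """Keep only the top `bits` bits of the SHA-256 digest (toy digest)."""
    digest = hashlib.sha256(message).digest()
    return int.from_bytes(digest, "big") >> (256 - bits)


def find_collision(bits: int):
    """Hash "0", "1", "2", ... until two messages share a truncated digest.

    Returns the two colliding messages and the number of messages hashed.
    """
    seen = {}
    for i in count():
        message = str(i).encode()
        h = truncated_hash(message, bits)
        if h in seen:
            return seen[h], message, i + 1
        seen[h] = message
```

Calling `find_collision(24)` returns two distinct messages with the same 24-bit digest; each extra pair of digest bits roughly doubles the work.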

Many good cryptographic hash functions are freely available. The most

famous cryptographic hash functions are those of the MD family, in particular

MD4 and MD5. MD4 has been broken, and MD5, although still in widespread use,

should be considered insecure as well. SHA-1 and RIPEMD-160 are two examples

that were long considered state of the art, although practical collisions have since been found for SHA-1.

2.4 Cryptographic Random Number Generators :

Cryptographic random number generators generate random numbers for use

in cryptographic applications, such as for keys. Conventional random number

generators available in most programming languages or programming

environments are not suitable for use in cryptographic applications (they are

designed for statistical randomness, not to resist prediction by cryptanalysts).
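
In Python, for instance, the distinction looks roughly as follows. The `random` module is a conventional statistical generator whose output is fully determined by its seed, while the `secrets` module draws from the operating system's cryptographic source. The two function names are hypothetical and exist only for this comparison.

```python
import random
import secrets


def predictable_key(seed: int) -> bytes:
    """16 'random' bytes from a conventional generator.

    Anyone who learns or guesses the seed can regenerate the same key.
    """
    rng = random.Random(seed)
    return bytes(rng.getrandbits(8) for _ in range(16))


def unpredictable_key() -> bytes:
    """16 bytes from the operating system's cryptographic generator."""
    return secrets.token_bytes(16)
```

Calling `predictable_key` twice with the same seed yields the same bytes, which is exactly the property a key generator must not have.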

In the optimal case, random numbers are based on true physical sources of

randomness that cannot be predicted. Such sources may include the noise from a

semiconductor device, the least significant bits of an audio input, or the intervals

between device interrupts or user keystrokes. The noise obtained from a physical

source is then “distilled” by a cryptographic hash function to make every bit

depend on every other bit. Quite often a large pool (several thousand bits) is used

to contain randomness, and every bit of the pool is made to depend on every bit of

input noise and every other bit of the pool in a cryptographically strong way.

When true physical randomness is not available, pseudo-random numbers

must be used. This situation is undesirable, but often arises on general purpose

computers. It is always desirable to obtain some environmental noise – even from

device latencies, resource utilization statistics, network statistics, keyboard

interrupts, or whatever. The point is that the data must be unpredictable for any

external observer; to achieve this, the random pool must contain at least 128 bits of

true entropy.

Cryptographic pseudo-random number generators typically have a large pool

(“seed value”) containing randomness. Bits are returned from this pool by taking

data from the pool, optionally running the data through a cryptographic hash

function to avoid revealing the contents of the pool. When more bits are needed,

the pool is stirred by encrypting its contents by a suitable cipher with a random key

(that may be taken from an unreturned part of the pool) in a mode which makes

every bit of the pool depend on every other bit of the pool. New environmental

noise should be mixed into the pool before stirring to make predicting previous or

future values even harder.
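
The pool idea can be sketched as follows. This is a toy model, not a vetted generator: the class name and layout are hypothetical, and the pool is stirred with a hash function (SHA-512) instead of the keyed cipher mentioned above, purely to keep the example within the standard library.

```python
import hashlib


class EntropyPool:
    """Toy randomness pool: mix noise in, read hashed output, then stir."""

    def __init__(self) -> None:
        self._pool = bytes(64)   # pool contents, initially all zero
        self._counter = 0

    def mix(self, noise: bytes) -> None:
        """Fold new environmental noise into every bit of the pool."""
        self._pool = hashlib.sha512(self._pool + noise).digest()

    def read(self, n: int) -> bytes:
        """Return n bytes derived from the pool without revealing it."""
        out = b""
        while len(out) < n:
            self._counter += 1
            block = b"out" + self._pool + self._counter.to_bytes(8, "big")
            out += hashlib.sha256(block).digest()
        # Stir (here: a one-way hash, an assumption of this sketch) so that
        # the new pool state does not directly expose how earlier output
        # was produced.
        self._pool = hashlib.sha512(b"stir" + self._pool).digest()
        return out[:n]
```

In use, `mix` would be fed timings, interrupt data, or similar noise before each `read`; the output is only as unpredictable as the noise that went in.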

Even though cryptographically strong random number generators are not very

difficult to build if designed properly, they are often overlooked. The importance

of the random number generator must thus be emphasized – if done badly, it will

easily become the weakest point of the system.

2.5 Strength of Cryptographic Algorithms :

Good cryptographic systems should always be designed so that they are as

difficult to break as possible. It is possible to build systems that cannot be broken

in practice (though this cannot usually be proved). This does not significantly

increase system implementation effort; however, some care and expertise are

required. There is no excuse for a system designer to leave the system breakable.

Any mechanisms that can be used to circumvent security must be made explicit,

documented, and brought to the attention of the end users.

In theory, any cryptographic method with a key can be broken by trying all

possible keys in sequence. If using brute force to try all keys is the only option,

the required computing power increases exponentially with the length of the key.

A 32 bit key takes 2^32 (about 4 × 10^9) steps. This is something anyone can do on

his/her home computer. A system with 40 bit keys takes 2^40 steps – this kind of

computation requires something like a week (depending on the efficiency of the

algorithm) on a modern home computer. A system with 56 bit keys (such as DES)

takes a substantial effort (with a large number of home computers using distributed

effort, it has been shown to take just a few months), but is easily breakable with

special hardware. The cost of the special hardware is substantial but easily within

reach of organized criminals, major companies, and governments. Keys with 64

bits are probably breakable now by major governments, and within reach of

organized criminals, major companies, and lesser governments in a few years. Keys

with 80 bits appear good for a few years, and keys with 128 bits will probably

remain unbreakable by brute force for the foreseeable future. Even larger keys are

sometimes used.
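
The exponential growth is easiest to appreciate numerically. The sketch below assumes a hypothetical attacker testing 10^9 keys per second; that rate is an illustrative assumption, not a measurement, and the absolute times shift with it. What does not change is that each additional key bit doubles the work.

```python
KEYS_PER_SECOND = 10**9            # ASSUMPTION: illustrative attacker speed
SECONDS_PER_YEAR = 365 * 24 * 3600


def years_to_search(key_bits: int, rate: int = KEYS_PER_SECOND) -> float:
    """Years needed to try every key of the given length at the given rate."""
    return 2**key_bits / rate / SECONDS_PER_YEAR


for bits in (32, 40, 56, 64, 80, 128):
    print(f"{bits:3d}-bit key: {2**bits:.2e} keys, "
          f"{years_to_search(bits):.2e} years to try them all")
```

On average the right key is found after searching half the key space, so the expected effort is half of each figure printed.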

However, key length is not the only relevant issue. Many ciphers can be

broken without trying all possible keys. In general, it is very difficult to design

ciphers that could not be broken more effectively using other methods. Designing

your own ciphers may be fun, but it is not recommended for real applications

unless you are a true expert and know exactly what you are doing.

One should generally be very wary of unpublished or secret algorithms.

Quite often the designer is then not sure of the security of the algorithm, or its

security depends on the secrecy of the algorithm. Generally, no algorithm that

depends on the secrecy of the algorithm is secure. Particularly in software, anyone

can hire someone to disassemble and reverse-engineer the algorithm. Experience

has shown that the vast majority of secret algorithms that have become public

knowledge later have been pitifully weak in reality.

The key lengths used in public-key cryptography are usually much longer than

those used in symmetric ciphers. This is caused by the extra structure that is

available to the cryptanalyst. There the problem is not that of guessing the right

key, but deriving the matching secret key from the public key. In the case of RSA,

this could be done by factoring a large integer that has two large prime factors. In

the case of some other cryptosystems it is equivalent to computing the discrete

logarithm modulo a large integer (which is believed to be roughly comparable to

factoring when the modulus is a large prime number). There are public key

cryptosystems based on yet other problems.
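
For RSA, the link between factoring and key recovery can be shown on a textbook-sized example. The numbers below (n = 3233, e = 17) are toy values chosen as an assumption for illustration; with a modulus of realistic size the trial-division loop would never finish, which is the whole point.

```python
def factor(n: int):
    """Factor n by trial division (only feasible for toy moduli)."""
    f = 2
    while f * f <= n:
        if n % f == 0:
            return f, n // f
        f += 1
    raise ValueError("n is prime")


# Public key, as seen by the attacker (toy values).
n, e = 3233, 17
ciphertext = pow(65, e, n)           # someone encrypts the message 65

# The attacker factors n and rebuilds the private exponent from the factors.
p, q = factor(n)
d = pow(e, -1, (p - 1) * (q - 1))    # modular inverse (Python 3.8+)
recovered = pow(ciphertext, d, n)    # decrypts without ever being given d
```

Here `factor` returns 53 and 61, from which the private exponent follows immediately.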

To give some idea of the complexity for the RSA cryptosystem, a 256 bit

modulus is easily factored at home, and 512 bit keys can be broken by university

research groups within a few months. Keys with 768 bits are probably not secure

in the long term. Keys with 1024 bits and more should be safe for now unless

major cryptanalytic advances are made against RSA; keys of 2048 bits are

considered by many to be secure for decades.

It should be emphasized that the strength of a cryptographic system is

usually equal to its weakest link. No aspect of the system design should be

overlooked, from the choice of algorithms to the key distribution and usage policies.

2.6 Cryptanalysis and Attacks on Cryptosystems :

Cryptanalysis is the art of deciphering encrypted communications without

knowing the proper keys. There are many cryptanalytic techniques. Some of the

more important ones for a system implementer are described below.

Ciphertext-only attack: This is the situation where the attacker does not

know anything about the contents of the message, and must work from

ciphertext only. In practice it is quite often possible to make guesses about

the plaintext, as many types of messages have fixed format headers. Even

ordinary letters and documents begin in a very predictable way. For

example, many classical attacks use frequency analysis of the ciphertext;

however, this does not work well against modern ciphers.

Modern cryptosystems are not weak against ciphertext-only attacks,

although sometimes they are considered with the added assumption that the

message contains some statistical bias.
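
As an illustration of a classical ciphertext-only attack, the sketch below breaks a Caesar shift using letter frequencies alone. The frequency table holds approximate percentages for English text, and the scoring rule (sum of the frequencies of the decrypted letters) is one simple choice among several; both are assumptions of this example.

```python
import string

# Approximate English letter frequencies in percent, a..z (assumed values).
FREQ = [8.2, 1.5, 2.8, 4.3, 12.7, 2.2, 2.0, 6.1, 7.0, 0.15, 0.77, 4.0, 2.4,
        6.7, 7.5, 1.9, 0.095, 6.0, 6.3, 9.1, 2.8, 0.98, 2.4, 0.15, 2.0, 0.074]


def caesar(text: str, shift: int) -> str:
    """Shift lowercase letters by `shift` positions; leave the rest alone."""
    out = []
    for ch in text:
        if ch in string.ascii_lowercase:
            out.append(chr((ord(ch) - 97 + shift) % 26 + 97))
        else:
            out.append(ch)
    return "".join(out)


def english_score(text: str) -> float:
    """Higher when the letters of `text` are common English letters."""
    return sum(FREQ[ord(ch) - 97] for ch in text if ch in string.ascii_lowercase)


def crack(ciphertext: str) -> int:
    """Guess the shift whose decryption looks most like English."""
    return max(range(26), key=lambda s: english_score(caesar(ciphertext, -s)))
```

The attacker needs no known plaintext, only the assumption that the message is ordinary English of reasonable length. Against a modern cipher the ciphertext letter statistics carry no such signal.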

Known-plaintext attack: The attacker knows or can guess the plaintext for

some parts of the ciphertext. The task is to decrypt the rest of the ciphertext

blocks using this information. This may be done by determining the key

used to encrypt the data, or via some shortcut.

One of the best known modern known-plaintext attacks is linear

cryptanalysis against block ciphers.

Chosen-plaintext attack: The attacker is able to have any text he likes

encrypted with the unknown key. The task is to determine the key used for

encryption. A good example of this attack is the differential cryptanalysis

which can be applied against block ciphers (and in some cases also against

hash functions).

Some cryptosystems, particularly RSA, are vulnerable to chosen-plaintext

attacks. When such algorithms are used, care must be taken to design the

application (or protocol) so that an attacker can never have chosen plaintext

encrypted.

Man-in-the-middle attack: This attack is relevant for cryptographic

communication and key exchange protocols. The idea is that when two

parties, A and B, are exchanging keys for secure communication (e.g., using

Diffie-Hellman), an adversary positions himself between A and B on the

communication line. The adversary then intercepts the signals that A and B

send to each other, and performs a key exchange with A and B separately.

A and B will end up using a different key, each of which is known to the

adversary. The adversary can then decrypt any communication from A with

the key he shares with A, and then resends the communication to B by

encrypting it again with the key he shares with B. Both A and B will think

that they are communicating securely, but in fact the adversary is hearing

everything.

The usual way to prevent the man-in-the-middle attack is to use a public

key cryptosystem capable of providing digital signatures. For set up, the

parties must know each other’s public keys in advance. After the shared

secret has been generated, the parties send digital signatures of it to each

other. The man-in-the-middle can attempt to forge these signatures, but fails

because he does not hold the parties’ private signing keys. This solution is sufficient in the

presence of a way to securely distribute public keys. One such way is a

certificate hierarchy such as X.509. It is used for example in IPSec.
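
The unauthenticated exchange that the attack exploits can be traced with toy numbers. The parameters below (prime 23, generator 5, and the three secret exponents) are assumptions chosen so the arithmetic can be checked by hand; real Diffie-Hellman uses far larger values, and the names Alice, Bob, and Mallory stand for A, B, and the adversary.

```python
# ASSUMPTION: toy public parameters, small enough to verify by hand.
P, G = 23, 5


def public_value(secret: int) -> int:
    """Value sent over the wire: G^secret mod P."""
    return pow(G, secret, P)


def shared_key(their_public: int, my_secret: int) -> int:
    """Key derived from the other side's public value and one's own secret."""
    return pow(their_public, my_secret, P)


alice_secret, bob_secret, mallory_secret = 6, 15, 13

alice_public = public_value(alice_secret)
bob_public = public_value(bob_secret)
mallory_public = public_value(mallory_secret)

# Mallory intercepts both public values and substitutes her own,
# so each victim unknowingly completes the exchange with her.
alice_key = shared_key(mallory_public, alice_secret)
bob_key = shared_key(mallory_public, bob_secret)

# Mallory can compute both keys and relay traffic between the two.
mallory_key_with_alice = shared_key(alice_public, mallory_secret)
mallory_key_with_bob = shared_key(bob_public, mallory_secret)
```

Alice and Bob end up with different keys, each shared with Mallory. Had they exchanged signatures over what they sent and received, the substitution would have been detected.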

Correlation between the secret key and the output of the cryptosystem is

the main source of information to the cryptanalyst. In the easiest case, the

information about the secret key is directly leaked by the cryptosystem.

More complicated cases require studying the correlation (basically, any

relation that would not be expected on the basis of chance alone) between

the observed (or measured) information about the cryptosystem and the

guessed key information.

For example, in linear (resp. differential) attacks against block ciphers the

cryptanalyst studies the known (resp. chosen) plaintext and the observed

ciphertext. Guessing some of the key bits of the cryptosystem the analyst

determines by correlation between the plaintext and the ciphertext whether

she guessed correctly. This can be repeated, and has many variations.

The differential cryptanalysis introduced by Eli Biham and Adi Shamir in

the late 1980s was the first attack that fully utilized this idea against block

ciphers (especially against DES). Later Mitsuru Matsui came up with linear

cryptanalysis which was even more effective against DES. More recently,

new attacks using similar ideas have been developed.

Perhaps the best introduction to this material is the proceedings of

EUROCRYPT and CRYPTO throughout the 1990s. There one can find

Mitsuru Matsui’s discussion of linear cryptanalysis of DES, and the ideas of

truncated differentials by Lars Knudsen (for example, IDEA cryptanalysis).

The book by Eli Biham and Adi Shamir about the differential cryptanalysis

of DES is the “classical” work on this subject.

The correlation idea is fundamental to cryptography and several researchers

have tried to construct cryptosystems which are provably secure against

such attacks. For example, Knudsen and Nyberg have studied provable

security against differential cryptanalysis.

Attack against or using the underlying hardware: in the last few years as

more and more small mobile crypto devices have come into widespread use,

a new category of attacks has become relevant which aims directly at the

hardware implementation of the cryptosystem.

The attacks use the data from very fine measurements of the crypto device

doing, say, encryption and compute key information from these

measurements. The basic ideas are then closely related to those in other

correlation attacks. For instance, the attacker guesses some key bits and

attempts to verify the correctness of the guess by studying correlation

against her measurements.

Several attacks have been proposed such as using careful timings of the

device, fine measurements of the power consumption, and radiation

patterns. These measurements can be used to obtain the secret key or other

kinds of information stored on the device.

This kind of attack is generally independent of the cryptographic algorithm used

and can be applied to any device that is not explicitly protected against it.

Faults in cryptosystems can lead to cryptanalysis and even the discovery

of the secret key. The interest in cryptographical devices led to the

discovery that some algorithms behaved very badly with the introduction of

small faults in the internal computation.

For example, the usual implementation of RSA private key operations is

very susceptible to fault attacks. It has been shown that causing one bit

of error at a suitable point can reveal the factorization of the modulus (i.e. it

reveals the private key).
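
The fault attack on RSA can be illustrated with a small sketch. It assumes that the "usual implementation" refers to the common speed-up based on the Chinese Remainder Theorem (CRT), and it uses toy primes and a hypothetical helper sign_crt chosen purely for illustration. A single flipped bit in one half of the computation lets anyone who sees the faulty result factor the modulus with one gcd.

```python
from math import gcd

# Toy parameters, assumed for illustration only (real moduli are far larger).
p, q = 1009, 1013
n = p * q
e = 65537
d = pow(e, -1, (p - 1) * (q - 1))   # private exponent

def sign_crt(m, fault=False):
    """RSA private-key operation using the CRT speed-up.

    If fault is True, one bit of the mod-q half is flipped to
    simulate a hardware fault during the computation.
    """
    s_p = pow(m, d % (p - 1), p)
    s_q = pow(m, d % (q - 1), q)
    if fault:
        s_q ^= 1                      # single-bit error
    # Garner recombination of the two halves.
    h = (pow(q, -1, p) * (s_p - s_q)) % p
    return s_q + q * h

m = 123456
good = sign_crt(m)
bad = sign_crt(m, fault=True)

# The faulty result is still correct modulo p but wrong modulo q,
# so bad**e - m is a multiple of p only.
recovered = gcd((pow(bad, e, n) - m) % n, n)
print("recovered factor:", recovered)
```

A common countermeasure, not shown here, is to verify the result with the public exponent before releasing it.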

Similar ideas have been applied to a wide range of algorithms and devices.

It is thus necessary that cryptographical devices are designed to be highly

resistant against faults (and against malicious introduction of faults by

cryptanalysts).

Quantum computing: Peter Shor’s paper on polynomial time factoring and

discrete logarithm algorithms with quantum computers has caused growing

interest in quantum computing. Quantum computing is a recent field of

research that uses quantum mechanics to build computers that are, in

theory, more powerful than modern serial computers. The power is derived

from the inherent parallelism of quantum mechanics. So instead of doing

tasks one at a time, as serial machines do, quantum computers can perform

them all at once. Thus it is hoped that with quantum computers we can

solve problems infeasible with serial machines.

Shor’s results imply that if quantum computers could be implemented

effectively, then most of public key cryptography would become history.

However, they are much less effective against secret key cryptography.

Current state of the art of quantum computing does not appear alarming, as

only very small machines have been implemented. The theory of quantum

computation gives much promise for better performance than serial

computers; however, whether it will be realized in practice is an open

question.

Quantum mechanics is also a source for new ways of data hiding and secure

communication with the potential of offering unbreakable security; this is

the field of quantum cryptography. Unlike quantum computing, many

successful experimental implementations of quantum cryptography have

already been achieved. However, quantum cryptography is still some way

off from being realized in commercial applications.

DNA cryptography: Leonard Adleman (one of the inventors of RSA) came

up with the idea of using DNA as computers. DNA molecules could be

viewed as a very large computer capable of parallel execution. This parallel

nature could give DNA computers exponential speed-up against modern

serial computers.

There are unfortunately problems with DNA computers, one being that the

exponential speed-up requires also exponential growth in the volume of the

material needed. Thus in practice DNA computers would have limits on

their performance. Also, it is not very easy to build one.

There are many other cryptographic attacks and cryptanalysis techniques.

However, these are probably the most important ones for an application designer.

Anyone contemplating the design of a new cryptosystem should have a much deeper

understanding of these issues.

3. DATA ENCRYPTION AND ITS APPLICATIONS

This chapter covers some of the most important protocols and

systems made possible by cryptography. In particular, it discusses the issues

involved in establishing a cryptographic infrastructure, and it gives a brief

overview of some of the electronic commerce techniques available today. The

topics are:

Key Management

Electronic Commerce

3.1 Key Management:

3.1.1 Key management – an Introduction:

Key management deals with the secure generation, distribution, and storage

of keys. Secure methods of key management are extremely important. Once a key

is randomly generated, it must remain secret to avoid unfortunate mishaps (such as

impersonation). In practice, most attacks on public-key systems will probably be

aimed at the key management level, rather than at the cryptographic algorithm

itself.

Users must be able to securely obtain a key pair suited to their efficiency

and security needs. There must be a way to look up other people’s public keys and

to publicize one’s own public key. Users must be able to legitimately obtain

others’ public keys; otherwise, an intruder can either change public keys listed in a

directory, or impersonate another user. Certificates are used for this purpose.

Certificates must be unforgeable. The issuance of certificates must proceed in a

secure way, impervious to attack. In particular, the issuer must authenticate the

identity and the public key of an individual before issuing a certificate to that

individual.

If someone’s private key is lost or compromised, others must be made

aware of this, so that they will no longer encrypt messages under the invalid public

key nor accept messages signed with the invalid private key. Users must be able to

store their private keys securely, so no intruder can obtain them, yet the keys must

be readily accessible for legitimate use. Keys need to be valid only until a

specified expiration date but the expiration date must be chosen properly and

publicized in an authenticated channel.

3.1.2 The size of the key :

The key size that should be used in a particular application of cryptography

depends on two things. First of all, the value of the key is an important

consideration. Secondly, the actual key size depends on what cryptographic

algorithm is being used.

Due to the rapid development of new technology and cryptanalytic

methods, the correct key size for a particular application is continuously changing.

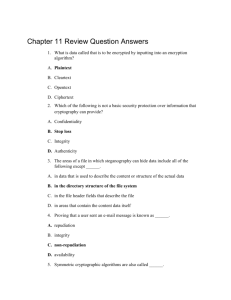

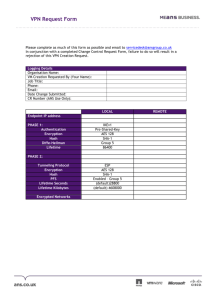

The table below contains key size limits and recommendations from different

sources for block ciphers, the RSA system, the elliptic curve system, and DSA.

                              Block Cipher   RSA    Elliptic Curve   DSA

Export Grade                  56             512    112              512 / 112

Traditional recommendations   80             1024   160              1024 / 160

                              112            2048   224              2048 / 224

Lenstra/Verheul 2000          70             952    132              952 / 125

Lenstra/Verheul 2010          78             1369   146 / 160        1369 / 138

Minimal key lengths in bits for different grades.

3.1.3 Finding Random Numbers for keys :

Whether using a secret-key cryptosystem or a public-key cryptosystem, one

needs a good source of random numbers for key generation. The main features of a

good source are that it produces numbers that are unknown and unpredictable by

potential adversaries. Random numbers obtained from a physical process are in

principle the best, since many physical processes appear truly random. One could

use a hardware device, such as a noisy diode; some are sold commercially on

computer add-in boards for this purpose. Another idea is to use physical

movements of the computer user, such as inter-key stroke timings measured in

microseconds. Techniques using the spinning of disks to generate random data are

not truly random, as the movement of the disk platter cannot be considered truly

random. A negligible-cost alternative is available; Davis et al. designed a random

number generator based on the variation of a disk drive motor’s speed. This

variation is caused by air turbulence, which has been shown to be unpredictable.

By whichever method they are generated, the random numbers may still contain

some correlation, thus preventing sufficient statistical randomness. Therefore, it is

best to run them through a good hash function before actually using them.
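
The advice to hash raw random data before use can be sketched as follows. The raw source here (the low bits of a high-resolution timer) and the function names collect_raw_samples and condition are assumptions made for illustration, and SHA-256 is simply one choice of "a good hash function". This is not a vetted entropy source; in practice the operating system's generator (for example os.urandom) would normally be preferred.

```python
import hashlib
import time

def collect_raw_samples(count=64):
    """Hypothetical raw source: low 8 bits of a nanosecond timer.

    The samples may be biased or correlated, which is exactly why
    they are conditioned with a hash function afterwards.
    """
    samples = []
    for _ in range(count):
        samples.append(time.perf_counter_ns() & 0xFF)
    return bytes(samples)

def condition(raw: bytes) -> bytes:
    """Compress possibly correlated raw samples into 32 bytes."""
    return hashlib.sha256(raw).digest()

key_material = condition(collect_raw_samples())
print(key_material.hex())
```

The hash cannot create randomness; it only spreads whatever unpredictability the raw samples contain over the whole output.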

Another approach is to use a pseudo-random number generator fed by a

random seed. The primary difference between random and pseudo-random

numbers is that pseudo-random numbers are necessarily periodic whereas truly

random numbers are not. Since pseudo-random number generators are

deterministic algorithms, it is important to find one that is cryptographically secure

and also to use a good random seed; the generator effectively acts as an

``expander’’ from the seed to a larger amount of pseudo-random data. The seed

must be sufficiently variable to deter attacks based on trying all possible seeds.

It is not sufficient for a pseudo-random number generator just to pass a

variety of statistical tests, as described in Knuth and elsewhere, because the output

of such generators may still be predictable. Rather, it must be computationally

infeasible for an attacker to determine any bit of the output sequence, even if all

the others are known, with probability better than ½. Blum and Micali’s generator

based on the discrete logarithm problem satisfies this stronger definition, assuming

that computing discrete logarithm is difficult. Other generators perhaps based on

DES or a hash function can also be considered to satisfy this definition, under

reasonable assumptions.
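
A minimal sketch of a Blum and Micali style generator is given below. The prime p = 2^31 - 1 and the generator g = 7 are toy values assumed for illustration and are far too small for real use. The output convention (emit 1 when the state lies in the lower half of the range) is one of several found in the literature, and the function name blum_micali_bits is hypothetical.

```python
def blum_micali_bits(seed, count, p=2147483647, g=7):
    """Generate `count` pseudo-random bits from `seed`.

    State update: x <- g**x mod p (inverting this step is a
    discrete logarithm problem). One bit is emitted per step.
    """
    if not 1 <= seed < p:
        raise ValueError("seed must be in the range 1..p-1")
    half = (p - 1) // 2
    x = seed
    bits = []
    for _ in range(count):
        x = pow(g, x, p)
        bits.append(1 if x <= half else 0)
    return bits

stream = blum_micali_bits(seed=123456789, count=32)
print("".join(str(b) for b in stream))
```

The generator acts as the "expander" described above: a short seed determines an arbitrarily long bit stream, so the seed must be both random and secret.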

3.1.4 Life cycle of a key :

Keys have limited lifetimes for a number of reasons. The most important

reason is protection against cryptanalysis. Each time the key is used, it generates a

number of ciphertexts. Using a key repetitively allows an attacker to build up a

store of ciphertexts (and possibly plaintexts) which may prove sufficient for a

successful cryptanalysis of the key value. Thus keys should have a limited lifetime.

If you suspect that an attacker may have obtained your key, the key should be

considered compromised, and its use discontinued.

Research in cryptanalysis can lead to possible attacks against either the key

or the algorithm. For example, recommended RSA key lengths are increased every

few years to ensure that the improved factoring algorithms do not compromise the

security of messages encrypted with RSA. The recommended key length depends

on the expected lifetime of the key. Temporary keys, which are valid for a day or

less, may be as short as 512 bits. Keys used to sign long-term contracts for

example, should be longer, say, 1024 bits or more.

Another reason for limiting the lifetime of a key is to minimize the damage

from a compromised key. It is unlikely a user will discover an attacker has

compromised his or her key if the attacker remains ``passive.’’ Relatively frequent

key changes will limit any potential damage from compromised keys.

The life cycle of a key can be described as:

1. Key generation and possibly registration (for a public key).

2. Key distribution.

3. Key activation/deactivation.

4. Key replacement or key update.

5. Key revocation.

6. Key termination, involving destruction or possibly archival.
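
The stages above can be modelled as a small state machine. The state names and the set of allowed transitions below are assumptions made for illustration; the text does not prescribe them. Replacement or update (stage 4) is treated here as generating a fresh key that starts its own life cycle.

```python
from enum import Enum, auto

class KeyState(Enum):
    GENERATED = auto()
    DISTRIBUTED = auto()
    ACTIVE = auto()
    DEACTIVATED = auto()
    REVOKED = auto()
    TERMINATED = auto()

# Assumed transition table: which state may follow which.
ALLOWED = {
    KeyState.GENERATED: {KeyState.DISTRIBUTED},
    KeyState.DISTRIBUTED: {KeyState.ACTIVE},
    KeyState.ACTIVE: {KeyState.DEACTIVATED, KeyState.REVOKED},
    KeyState.DEACTIVATED: {KeyState.ACTIVE, KeyState.REVOKED,
                           KeyState.TERMINATED},
    KeyState.REVOKED: {KeyState.TERMINATED},
    KeyState.TERMINATED: set(),
}

def advance(current, target):
    """Return the new state, or raise if the step is not allowed."""
    if target not in ALLOWED[current]:
        raise ValueError(f"cannot go from {current.name} to {target.name}")
    return target

state = KeyState.GENERATED
for step in (KeyState.DISTRIBUTED, KeyState.ACTIVE, KeyState.REVOKED,
             KeyState.TERMINATED):
    state = advance(state, step)
print(state.name)
```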

3.2 Electronic Commerce :

Cryptography is extremely useful to electronic commerce in the areas of

payment systems and transactions over open networks. While several protocols

and payment systems exist, the most widely used protocol for Internet

transactions is SSL.

3.2.1 Electronic money :

Electronic money (also called electronic cash or digital cash) is a term that

is still fairly vague and undefined. It refers to transactions carried out

electronically with a net result of funds transferred from one party to another.

Electronic money may be either debit or credit. Digital cash per se is basically

another currency, and digital cash transactions can be visualized as a foreign

exchange market. This is because we need to convert an amount of money to

digital cash before we can spend it. The conversion process is analogous to

purchasing foreign currency.

Digital cash in its precise definition may be anonymous or identified.

Anonymous schemes do not reveal the identity of the customer and are based on

blind signature schemes. Identified spending schemes always reveal the identity of

the customer and are based on more general forms of signature schemes.

Anonymous schemes are the electronic analog of cash, while identified schemes

are the electronic analog of a debit or credit card. There are other approaches:

payments can be anonymous with respect to the merchant but not the bank, or

anonymous to everyone, but traceable (a sequence of purchases can be related, but

not linked directly to the spender’s identity).
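
The blind signature idea behind anonymous schemes can be sketched with textbook RSA. The toy primes, the blinding factor r and the function names are assumptions made for illustration; real schemes add padding and much larger keys. The bank signs a blinded value without learning the coin m, yet the unblinded result is a valid signature on m.

```python
from math import gcd

# Toy RSA key of the bank (assumed values, illustration only).
p, q = 1009, 1013
n = p * q
e = 65537
d = pow(e, -1, (p - 1) * (q - 1))

def blind(m, r):
    """Customer hides the message m with the random factor r."""
    return (m * pow(r, e, n)) % n

def sign(x):
    """Bank signs whatever value it is given, using its private key."""
    return pow(x, d, n)

def unblind(s_blind, r):
    """Customer removes the factor r from the blinded signature."""
    return (s_blind * pow(r, -1, n)) % n

m = 4242          # the "coin" (hypothetical value)
r = 777           # blinding factor, must be coprime to n
assert gcd(r, n) == 1

blinded = blind(m, r)
signature = unblind(sign(blinded), r)

# Anyone can verify the coin with the bank's public key (n, e).
print(pow(signature, e, n) == m)
```

Because the bank only ever sees the blinded value, it cannot later link the coin to the withdrawal, which is what makes the scheme anonymous.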

Since digital cash is merely an electronic representation of funds, it is

possible to easily duplicate and spend a certain amount of money more than once.

Therefore, digital cash schemes have been structured so that it is not possible to

spend the same money more than once without getting caught immediately or

within a short period of time. Another approach is to have the digital cash stored in

a secure device, which prevents the user from double spending. Electronic money

also encompasses payment systems that are analogous to traditional credit cards

and checks. Here, cryptography protects conventional transaction data such as an

account number and amount; a digital signature can replace a handwritten

signature or a credit-card authorization, and public-key encryption can provide

confidentiality.

There are a variety of systems for this type of electronic money, ranging

from those that are strict analogs of conventional paper transactions with a typical

value of several dollars or more, to those (not digital cash per se) that offer a form

of “micropayments” where the transaction value may be a few pennies or less. The

main difference is that for extremely low-value transactions even the limited

overhead of public-key encryption and digital signatures is too much, not to

mention the cost of ``clearing’’ the transaction with the bank. As a result, ``batching’’

of transactions is required, with the public key operations done only occasionally.

3.2.2 The iKP :

The Internet Keyed Payments Protocol (iKP) is an architecture for secure

payments involving three or more parties. Developed at IBM’s T.J. Watson

Research Center and Zurich Research Laboratory, the protocol defines transactions

of a “credit card” nature, where a buyer and seller interact with a third party

“acquirer”, such as a credit-card system or a bank, to authorize transactions. The

protocol is based on public-key cryptography.

iKP is no longer widely in use; however, it is the foundation on which SET was built.

3.2.3 SET:

Visa and MasterCard have jointly developed the Secure Electronic

Transaction (SET) protocol as a method for secure, cost effective bankcard

transactions over open networks. SET includes protocols for purchasing goods and

services electronically, requesting authorization of payment, and requesting

“credentials” (that is, certificates) binding public keys to identities, among other

services. Once SET is fully adopted, the necessary confidence in secure electronic

transactions will be in place, allowing merchants and customers to partake in

electronic commerce.

SET supports DES for bulk data encryption and RSA for signatures and

public-key encryption of data encryption keys and bankcard numbers. The RSA

public-key encryption employs Optimal Asymmetric Encryption Padding. SET is

being published as open specifications for the industry, which may be used by

software vendors to develop applications.

4. EXISTING SYSTEMS – PROS AND CONS

Theoretically speaking, no cryptosystem is completely unbreakable.

However, as the complexity of the cryptographic algorithm increases, it becomes

practically impossible for the cryptanalyst to break it using even the most modern

and powerful computing hardware. As the power of the hardware increases with

time, and more computing power becomes available to the cryptanalyst, the

robustness of the cipher should also be increased.

Traditional private key algorithms resist brute force attacks by increasing

the length of the cipher key. For example, some 10 years ago, when a typical

high-end computer system ran at a speed on the order of 100 MHz, DES with its

64-bit key (56 effective bits) was considered pretty secure. But against today’s

high-end systems, which typically operate at around 1.5 GHz, the old DES key

is no longer secure. We need a 128-bit or longer key to withstand brute

force attacks using modern high-end systems.
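
The effect of key length on brute force attacks can be made concrete with a rough calculation. The search rate of one billion keys per second is a hypothetical figure assumed for illustration, not a measurement, and the function name years_to_search is likewise an assumption.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600

def years_to_search(key_bits, keys_per_second):
    """Expected brute force time in years.

    On average the attacker finds the key after trying half of
    the 2**key_bits possible keys.
    """
    expected_trials = 2 ** key_bits / 2
    return expected_trials / keys_per_second / SECONDS_PER_YEAR

rate = 1e9  # hypothetical attacker speed, keys per second
for bits in (56, 64, 128):
    print(f"{bits:3d}-bit key: about {years_to_search(bits, rate):.3g} years")
```

Each additional key bit doubles the work, so moving from 56 to 128 bits multiplies the effort by 2^72, far more than any realistic increase in clock speed.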

Though increasing the key length is a simple way to make the algorithm

practically impossible to break, it comes at a price. As the length of the

key increases, the time to encrypt and decrypt the input also increases.

Moreover, additional secure methods are needed to store and transport such

lengthy keys. All these side effects effectively reduce the overall security the

cipher offers and also hamper the performance of the cryptosystem.

Public key cryptosystems like RSA can solve some of the

traditional problems associated with Private key cryptographic systems like DES.

Irrespective of the length of the key, these systems offer a reasonably good degree

of security. However, these algorithms are formidably complex and tediously slow

when compared to symmetric key algorithms. Thus they are not very suitable for

developing Block Cipher based crypto systems.

In particular, for a static data encryption system, such as one that

handles databases, images, audio and video files, or other binary data, a public key

based algorithm is impractical because it is very slow. Symmetric key crypto

systems like DES, with some degree of sophistication, are very well suited for

such applications.

Many such applications use a hybrid of symmetric and asymmetric ciphers.

For example, a system can use RSA techniques to establish the key, which in

turn will be used by a DES cipher to encrypt the data. Though this solution seems

to be a sound one to avoid the many performance bottlenecks associated with the

traditional Asymmetric key crypto systems, it can’t be an effective and universal

alternative for all cases. There are many tradeoffs in this approach.

To overcome these tradeoffs, crypto experts devised several other

techniques. The proposed cipher is one such system that aims at using the basic

DES routines for core encryption. However, the key that the system uses will be

generated using a complex set of discrete mathematical functions.

5. CRYPTOGRAPHIC HASH FUNCTIONS

Hash functions were introduced in cryptology in the late seventies as a tool

to protect the authenticity of information. Soon it became clear that they were a

very useful building block to solve other security problems in telecommunication

and computer networks. This chapter sketches the history of the concept, discusses

the applications of hash functions, and presents the approaches which have been

followed to construct hash functions. An overview of practical constructions and

their performance is given and some attacks are discussed. Special attention is paid

to standards dealing with hash functions.

5.1 Introduction

During the last decades, the nature of telecommunications has changed

completely. Telecommunications more and more pervades every aspect of society.

Recent developments in mobile telecommunications like the GSM system make it

possible to reach a person anywhere in the world, regardless of whether he is at

home, in his office, or on the road. Electronic mail has become the preferred way

of communication between researchers all over the world, and many companies

have introduced this service. At the same time EDI (Electronic Data Interchange)

is being introduced in order to extend the automatic information processing within

a company to suppliers and clients into a single system.

Home banking is becoming more and more popular and is the first step

towards shopping from the home. This evolution of telecommunications presents

new security requirements, posing new challenges to the cryptologists.

Handwritten letters offer reasonable privacy protection and the receiver can be

sure of the authenticity, which encompasses two aspects: he knows who the sender

is and he knows that the contents have not been modified. Voice communications

can be eavesdropped easily, but at least they offer a guarantee of the authenticity

of the communication: one is sure that one is talking to a specific person, and that

the conversation is not being modified.

Electronic data communications however offer no protection of privacy or

authenticity. An additional challenge is that it is not sufficient to design solutions

for closed user groups, since one often requires worldwide systems that work in

a wide variety of environments. In this chapter, we will discuss hash functions,

which form an important cryptographic technique to protect the authenticity of

information.

5.2 Authentication and Privacy

This section discusses the basic concepts of cryptography, and clarifies the

importance of hash functions in the protection of information authentication. At

the end of this section, other applications of hash functions are presented.

5.2.1 Privacy protection with symmetric cryptology

Cryptology has been used for thousands of years to protect communications

of kings, soldiers, and diplomats. Until recently, the protection of communications

was almost a synonym for the protection of the secrecy of the information, which

is achieved by encryption. In the encryption operation, the sender transforms the

message to be sent, which is called the plain text, into the cipher text. The

encryption algorithm uses as parameter a secret key; the algorithm itself is public,

which is known as Kerckhoffs’s principle. The receiver can use the decryption

algorithm and the same secret key to transform the cipher text back into the plain

text. The main concept of encryption is to replace the secrecy of a large amount of

data by the secrecy of a short secret key which can be communicated via a secure

channel. Because the keys for encryption and decryption are equal, this approach is

called symmetric cryptography.

It was widely believed that protection of the authenticity would follow

automatically from protection of the secrecy: if the receiver obtains a

“meaningful” plaintext, he can be sure that the sender with whom he shares the

key has actually sent this message. This belief is wrong: in general “meaningful”

plaintext can only be distinguished from ‘random’ plaintext based on redundancy,

which is not always present. Even if the plaintext has redundancy, modifications

can sometimes be made which will escape detection. This holds especially for

additive ciphers, where the cipher text is obtained by adding a key stream modulo 2

to the plaintext: complementing a cipher text bit results in a complementation of

the corresponding plain text bit. However, in the old days the authenticity was

protected by the intrinsic properties of the communication channel.
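
The weakness of additive ciphers can be shown in a few lines. The key stream below is a fixed stand-in assumed for illustration (a real cipher would derive it from a secret key), and the message is hypothetical. The attacker changes the amount without knowing the key stream, simply by complementing the right cipher text bits.

```python
def xor_bytes(data: bytes, keystream: bytes) -> bytes:
    """Addition modulo 2 of data and key stream, byte by byte."""
    return bytes(a ^ b for a, b in zip(data, keystream))

plaintext = b"PAY 100 TO BOB"
# Stand-in key stream, assumed for illustration only.
keystream = bytes(range(17, 17 + len(plaintext)))
ciphertext = xor_bytes(plaintext, keystream)

# The attacker knows the message layout but not the key stream.
# Flipping the bits in which '1' and '9' differ at position 4
# turns the amount 100 into 900.
tampered = bytearray(ciphertext)
tampered[4] ^= ord("1") ^ ord("9")

recovered = xor_bytes(bytes(tampered), keystream)
print(recovered)  # b'PAY 900 TO BOB'
```

The receiver obtains a perfectly "meaningful" plaintext, which is why secrecy alone does not protect authenticity.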

The advent of electronic computers and telecommunication networks

created the need for a widespread commercial encryption algorithm. In this

respect, the publication in 1977 of the Data Encryption Standard (DES) by the U.S.

National Bureau of Standards was without any doubt an important milestone. The

DES was designed by IBM in cooperation with the National Security Agency

(NSA). It later became an ANSI banking standard. Soon the need for specific

measures to protect the authenticity of the information became obvious, since

authenticity does not come for free together with secrecy protection. The first idea

to solve this problem was to add a simple form of redundancy to the plaintext

before encryption, namely the sum modulo 2 of all plaintext blocks. This proved to

be insufficient, and techniques to construct redundancy which is a complex

function of the complete message were proposed. It is not surprising that the first

constructions were based on the DES.
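
One reason why the sum modulo 2 of all plaintext blocks is too simple can be seen directly: the sum does not depend on the order of the blocks. The sketch below uses hypothetical 8-byte blocks and an assumed helper xor_checksum; whether an attacker can actually reorder blocks in transit depends on the encryption mode in use, which is not modelled here.

```python
from functools import reduce

def xor_checksum(blocks):
    """Sum modulo 2 (XOR) of equally sized blocks."""
    return reduce(lambda a, b: bytes(x ^ y for x, y in zip(a, b)), blocks)

# Hypothetical message split into 8-byte blocks.
blocks = [b"PAY ALIC", b"E 100 US", b"D TODAY."]

# Swapping two blocks changes the message but not the checksum.
reordered = [blocks[1], blocks[0], blocks[2]]

print(xor_checksum(blocks) == xor_checksum(reordered))  # True
```

A useful redundancy must therefore be a more complex function of the complete message, which is what the later constructions aim for.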

5.2.2 Authentication with symmetric cryptology

In the military world it was known for some time that modern

telecommunication channels like radio require additional protection of the

authenticity. One of the techniques applied was to append a secret key to the

plaintext before encryption. The protection then relies on the error propagating

properties of the encryption algorithm and on the fact that the secret key for

authentication is used only once. In the banking environment, there is a strong

requirement for protecting the authenticity of transactions.

Before the advent of modern cryptology, this was achieved as follows: the

sender computes a function of the transaction totals and a secret key; the result,

which was called the test key, is appended to the transaction. This allows the

receiver of the message, who is also privy to the secret key, to verify the

authenticity of the transaction. Although neither solution is suited for a wider

and less restrictive environment, they form the embryonic stage of the concept

of hash functions. New techniques were proposed to produce redundancy in the

form of a short string which is a complex function of the complete message.

A function that compresses its input was already in use in computer science

to allocate as uniformly as possible storage for the records of a file. It was called a

hash function, and its result was called a hash code. If a hash function has to be

useful for cryptographic applications, it has to satisfy some additional conditions.

Informally, one has to impose that the hash function is one-way (hard to invert)

and that it is hard to find two colliding inputs, i.e., two inputs with the same

output. If the information is to be linked with an originator, a secret key has to be

involved in the hashing process (this assumes a coupling between the person and

his key), or a separate integrity channel has to be provided. Hence two basic

methods can be identified:

The first approach is analogous to the approach of a symmetric cipher,

where the secrecy of large data quantities is based on the secrecy and

authenticity of a short key. In this case the authentication of the information

will also rely on the secrecy and authenticity of a key. To achieve this goal,

the information is compressed with a hash function, and the hash code is

appended to the information. The basic idea of the protection of the

integrity is to add redundancy to the information. The presence of this

redundancy allows the receiver to make the distinction between authentic

information and bogus information. In order to guarantee the origin of the

data, a secret key that can be associated to the origin has to intervene in the

process. The secret key can be involved in the compression process; the

hash function is then called a Message Authentication Code or MAC. A

MAC is recommended if authentication without secrecy is required. If the

hash function uses no secret key, it is called a Manipulation Detection Code

or MDC; in this case it is necessary to encrypt the hash code and/or the

information with a secret key. In addition, the encryption algorithm must

have a strong error propagation: the cipher text must depend on all previous

plaintext bits in a complex way. Additive stream ciphers can definitely not

be used for this purpose.

The second approach consists of basing the authenticity (both integrity and

origin authentication) of the information on the authenticity of a

Manipulation Detection Code or MDC. A typical example for this approach

is an accountant who will send the payment instructions of his company

over an insecure computer network to the bank. He computes an MDC on

the file, and communicates the MDC over the telephone to the bank

manager. The bank manager computes the MDC on the received message

and verifies whether it has been modified. The authenticity of the telephone

channel is offered here by voice identification. Note that the addition of

redundancy is necessary but not sufficient. Special care has to be taken

against high level attacks, like a replay of an authenticated message.
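
The difference between the two approaches can be sketched with Python's standard library. SHA-256 stands in for an MDC and HMAC-SHA-256 for a MAC; both are modern choices swapped in for illustration and are not the constructions discussed in this text. The key and message are hypothetical.

```python
import hashlib
import hmac

message = b"transfer 100 to account 42"
key = b"shared secret key (hypothetical)"

# MDC: no key involved, so anyone can recompute it. Its value must
# reach the receiver over an authentic channel (e.g. the telephone).
mdc = hashlib.sha256(message).hexdigest()

# MAC: the secret key takes part in the computation, so only the
# holders of the key can produce or check the tag.
mac = hmac.new(key, message, hashlib.sha256).digest()

def verify_mac(key: bytes, message: bytes, tag: bytes) -> bool:
    """Recompute the tag and compare in constant time."""
    expected = hmac.new(key, message, hashlib.sha256).digest()
    return hmac.compare_digest(expected, tag)

print(verify_mac(key, message, mac))
print(verify_mac(key, b"transfer 900 to account 42", mac))
```

Neither value protects against the replay of an authentic message; that requires additional measures such as sequence numbers or time stamps.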

5.2.3 Asymmetric or public-key cryptology

From a scientific viewpoint, the most important breakthrough of the last

decades is certainly the invention of public-key cryptology in the mid seventies

by W. Diffie and M. Hellman, and independently by R. Merkle. Public key

cryptology has brought two important insights:

Sender and receiver do not need to share a secret key: it is sufficient that

they use an authentic channel to communicate a key.

One can produce an electronic equivalent of a handwritten signature: the

digital signature. As a by-product of their results, it became clear that

secrecy and authenticity are two independent properties of a cryptosystem:

if the encryption key is public, anyone can use it to send an enciphered

message to a certain receiver. Protection of the authenticity of the

information is possible, but this requires a second independent operation.

There are several reasons why conventional techniques are still widely used

in spite of the development of public-key cryptology. The most important

one is certainly that no efficient public-key cryptosystems are known. In the

first years after the invention of public-key crypto systems, serious doubts

were raised about their security. A good example is the rise and fall of

the knapsack-based schemes. These systems were very attractive because of

their good performance. Unfortunately, almost all public-key cryptosystems

based on knapsacks were shown to be insecure. It took more than 10

years before two schemes of the late seventies reached the market. The

Diffie-Hellman scheme, proposed in 1976, is widely used for key

agreement, and the RSA scheme proposed by R. Rivest, A. Shamir, and L.

Adleman in 1978 is used for both digital signatures and public-key

encryption. The disadvantages of both schemes are that they are two to

three orders of magnitude slower than all conventional systems, and that the

key and block size are about 10 times larger. Soon it was realized that one

could have the best of both worlds, i.e., more flexibility, a less cumbersome

key management, and a high performance, by using hybrid schemes. One

uses public key techniques for key establishment, and subsequently a

conventional algorithm like DES or triple-DES to encipher large quantities

of data. If one wants to take a similar approach to authenticity protection,

one can use cryptographic hash functions as follows: one first compresses

the data with a fast hash function to a short string of fixed length. The slow

digital signature scheme is then used to protect the authenticity of the hash

code.
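
The hash-then-sign approach can be sketched as follows. The example uses textbook RSA without padding, two Mersenne primes as a toy key, and SHA-256 as the fast hash function; all of these are assumptions chosen for illustration and do not form a secure signature scheme. The point is only that the slow public-key operation is applied to a short hash code instead of the whole document.

```python
import hashlib

# Toy key built from two Mersenne primes (illustration only).
p = (1 << 61) - 1
q = (1 << 89) - 1
n = p * q
e = 65537
d = pow(e, -1, (p - 1) * (q - 1))

def digest_as_int(data: bytes) -> int:
    """Compress data to a hash code, reduced to fit the toy modulus."""
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % n

def sign(data: bytes) -> int:
    """One slow private-key operation on the short hash code."""
    return pow(digest_as_int(data), d, n)

def verify(data: bytes, signature: int) -> bool:
    """Recompute the hash code and check it with the public key."""
    return pow(signature, e, n) == digest_as_int(data)

document = b"A long contract text ... " * 1000
sig = sign(document)
print(verify(document, sig))
print(verify(document + b"!", sig))
```

However long the document is, the signature operation works on a value of fixed length, so the cost of signing is dominated by the fast hash.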

5.2.4 Other applications of hash functions

Hash functions have been designed in the first place to protect the

authenticity of information. When efficient and secure hash functions became

available, it was realized that under certain assumptions they can be used for many

other applications. For some applications it is required that the hash function

behaves as a “random” function. This implies that there is no correlation between

input and output bits, no correlation between output bits, etc. The most important

applications are the following:

Protection of pass-phrases: pass-phrases are passwords of arbitrary length.

One will store the MDC corresponding to the pass-phrase in the computer

rather than the pass-phrase itself.

Construction of efficient digital signature schemes: this comprises the

construction of efficient signature schemes based on hash functions only, as

well as the construction of digital signature schemes from zero-knowledge

protocols.

Building block in practical protocols including entity authentication

protocols, key distribution protocols, and bit commitment.

Construction of encryption algorithms: while the first hash functions were

based on block ciphers, the advent of fast hash functions has led to the

construction of encryption algorithms based on hash functions.
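
As an illustration of the first application in the list above (protection of pass-phrases), the following sketch stores only a hash code and compares hash codes at login. SHA-256 is used here as a stand-in MDC, and the names mdc, stored and check are hypothetical; the sketch shows only the basic idea described in the text.

```python
import hashlib
import hmac


def mdc(pass_phrase: str) -> bytes:
    """Hash code of a pass-phrase of arbitrary length (SHA-256 as stand-in MDC)."""
    return hashlib.sha256(pass_phrase.encode("utf-8")).digest()


# The system stores only the hash code, never the pass-phrase itself.
stored = mdc("correct horse battery staple")


def check(candidate: str) -> bool:
    """Accept a login attempt if its hash code matches the stored one."""
    return hmac.compare_digest(mdc(candidate), stored)


print(check("correct horse battery staple"))  # True
print(check("wrong guess"))                   # False
```

Someone who reads the stored value still has to invert the one-way function to recover the pass-phrase.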

5.3 Definitions

In the previous section two classes of hash functions have been introduced,

namely Message Authentication Codes or MAC’s (which use a secret key), and

Manipulation Detection Codes or MDC’s, which do not make use of a secret key.

According to their properties, the class of MDC’s will be further divided into one-way hash functions (OWHF) and collision resistant hash functions (CRHF). In the

following the hash function will be denoted with h, and its argument, i.e., the

information to be protected with X. The image of X under the hash function h will

be denoted with h(X). The general requirements are that the computation of the

hash code is “easy” if all arguments are known. Moreover it is assumed that the

description of the hash function is public; for MAC’s the only secret information

lies in the secret key.

5.3.1 One-way hash function (OWHF)

The first informal definition of a OWHF was given by R. Merkle and M. Rabin.

Definition: A one-way hash function is a function h satisfying the following

conditions:

1. The argument X can be of arbitrary length and the result h(X) has a fixed length

of n bits (with n ≥ 64).

2. The hash function must be one-way in the sense that given a Y in the image of

h, it is “hard” to find a message X such that h(X) = Y , and given X and h(X) it is

“hard” to find a message X' ≠ X such that h(X') = h(X).

The first part of the second condition corresponds to the intuitive concept of

one-wayness, namely that it is “hard” to find a preimage of a given value in the

range. In the case of permutations or injective functions only this concept is

relevant. The second part of this condition, namely that finding a second preimage

should be hard, is a stronger condition that is relevant for most applications. The

meaning of “hard” still has to be specified. In the case of “ideal security”,

introduced by X. Lai and J. Massey, producing a (second) preimage requires 2^n

operations. However, it may be that an attack requires a number of operations that

is smaller than 2^n, but is still computationally infeasible.
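
To make the cost of finding a (second) preimage concrete, the sketch below runs an exhaustive search against a deliberately weak toy hash. The toy hash (the first 16 bits of SHA-256), the candidate message format and the function names are assumptions chosen so that the search finishes in a fraction of a second; for an ideal n-bit hash the expected number of trials is on the order of 2^n.

```python
import hashlib
from itertools import count

N_BITS = 16  # deliberately tiny hash length so the search finishes quickly


def h(message: bytes) -> int:
    """Toy n-bit hash: the first N_BITS bits of SHA-256."""
    digest = hashlib.sha256(message).digest()
    return int.from_bytes(digest, "big") >> (256 - N_BITS)


def find_preimage(target: int):
    """Try candidate messages one by one until one hashes to the target."""
    for trials in count(1):
        candidate = b"guess-%d" % trials
        if h(candidate) == target:
            return candidate, trials


target = h(b"original message")
preimage, trials = find_preimage(target)
print(preimage, trials)  # trials is expected to be on the order of 2**16
```

Each extra bit of hash length doubles the expected work, which is why the same search is hopeless for n = 64 or more.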

5.3.2 Collision resistant hash function (CRHF)

The first formal definition of a CRHF was given by I. Damgard; an informal

definition was given by R. Merkle.

Definition: A collision resistant hash function is a function h satisfying the

following conditions:

1. The argument X can be of arbitrary length and the result h(X) has a fixed

length of n bits (with n ≥ 128).

2. The hash function must be one-way in the sense that given a Y in the image of

h, it is “hard” to find a message X such that h(X) = Y , and given X and h(X) it

is “hard” to find a message X' ≠ X such that h(X') = h(X).

3. The hash function must be collision resistant: this means that it is “hard” to find

two distinct messages that hash to the same result.

Under certain conditions one can argue that the first part of the one-way property

follows from the collision resistant property. Again several options are available to

specify the word “hard”. In the case of “ideal security”, producing a (second)

preimage requires 2^n operations and producing a collision requires O(2^(n/2))

operations. This can explain why both conditions have been stated separately. One

can however also consider the case where producing a (second) preimage and a

collision requires at least O(2^(n/2)) operations, and finally the case where one or

both attacks require less than O(2^(n/2)) operations, but the number of operations is

still computationally infeasible (e.g., if a larger value of n is selected).

The choice between a OWHF and a CRHF is application dependent. A CRHF is

stronger than a OWHF, which implies that using a CRHF is playing safe. A

OWHF can only be used if the opponent cannot exploit the collisions, e.g., if

the argument is randomized before the hashing operation. On the other hand, it

should be noted that designing a OWHF is easier, and that the storage for the

hash code can be halved (64 bits instead of 128 bits). A disadvantage of a

OWHF is that the security level decreases with the number of applications of h:

an outsider who knows s hash codes has increased his probability of finding an X

by a factor of s. This limitation can be overcome through the use of a

parameterized OWHF.

5.3.3 Message Authentication Code (MAC)

Message Authentication Codes have developed from the test keys in the banking

community. However, those early algorithms did not satisfy the strong definition given below.

Definition: A MAC is a function satisfying the following conditions:

1. The argument X can be of arbitrary length and the result h(K,X) has a fixed

length of n bits (with n = 32...64).

2. Given h and X, it is “hard” to determine h(K,X) with a probability of success

“significantly higher” than 1/2^n. Even when a large number of pairs

{X_i, h(K,X_i)} are known, where the X_i have been selected by the opponent, it is

“hard” to determine the key K or to compute h(K,X') for any X' ≠ X_i. This last

attack is called an adaptive chosen text attack. Note that this last property

implies that the MAC should be both one-way and collision resistant for

someone who does not know the secret key K. This definition leaves open

whether or not a MAC should be one-way or collision resistant for someone

who knows K. An example where this property could be useful is the

authentication of multi-destination messages.
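
The interface of a MAC, h(K,X), can be illustrated with the short sketch below. HMAC with SHA-256, truncated to 64 bits, is used purely as a readily available stand-in; it is not a construction discussed in this text, and the names mac, verify, key and tag are assumptions for the example.

```python
import hashlib
import hmac

N_BYTES = 8  # n = 64 bits, the upper end of the range quoted in the definition


def mac(key: bytes, message: bytes) -> bytes:
    """h(K, X): keyed hash of X under the secret key K, truncated to n bits."""
    return hmac.new(key, message, hashlib.sha256).digest()[:N_BYTES]


def verify(key: bytes, message: bytes, tag: bytes) -> bool:
    """Recompute the MAC with the shared key and compare in constant time."""
    return hmac.compare_digest(mac(key, message), tag)


key = b"shared secret key K"
tag = mac(key, b"transfer 100")
print(verify(key, b"transfer 100", tag))  # True
print(verify(key, b"transfer 900", tag))  # False
```

Only the key is secret: the function itself is public, and anyone who holds K can both compute and check the tag.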

5.4 Attacks on hash functions

The discussion of attacks will be restricted to attacks which depend only on

the size of the external parameters (size of hash code and possibly size of key);

they are thus independent of the nature of the algorithm. In order to assess the

feasibility of these attacks, it is important to know that for the time being

2^56 operations is considered to be on the edge of feasibility.

In view of the fact that the speed of computers is multiplied by four every

three years, 2^64 operations is sufficient for the next 10 years, but it will be only

marginally secure within 20 years. For applications with a time frame of 20 years

or more, one should try to design the scheme such that an attack requires at least

2^80 operations.

Random attack: The opponent selects a random message and hopes

that the change will remain undetected.

In case of a good hash function, his probability of success equals 1/2^n, with n

the number of bits of the hash code. The feasibility of this attack depends on the

action taken in case of detection of an erroneous result, on the expected value of a

successful attack, and on the number of attacks that can be carried out. For most

applications this implies that n = 32 bits is not sufficient.

Birthday attack: This attack can only be used to produce collisions. The idea behind the birthday attack

is that for a group of 23 people the probability that at least two people have a

common birthday exceeds 1/2. Intuitively one would expect that the group should

be significantly larger. This can be exploited to attack a hash function in the

following way: an adversary generates r1 variations on a bogus message and

r2 variations on a genuine message. The probability of finding a bogus message

and a genuine message that hash to the same result is given by

1 - exp(-r1·r2 / 2^n),

which is about 63% when r = r1 = r2 = 2^(n/2). Note that in case of a MAC the

opponent is unable to generate the MAC of a message. He could however obtain

these MAC’s with a chosen plaintext attack.
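
The birthday attack can be tried out on a deliberately weak toy hash, as in the sketch below. The 16-bit truncation of SHA-256, the message texts and the function names are assumptions for the illustration. The sketch tabulates r2 genuine variations and then keeps generating bogus variations until one matches, so it always terminates with a colliding pair; a dictionary lookup is used for the matching step here in place of sorting.

```python
import hashlib
import math
from itertools import count

N_BITS = 16  # toy hash length; real hash codes are far longer


def h(message: bytes) -> int:
    """Toy n-bit hash: the first N_BITS bits of SHA-256."""
    digest = hashlib.sha256(message).digest()
    return int.from_bytes(digest, "big") >> (256 - N_BITS)


def success_probability(r1: int, r2: int, n: int) -> float:
    """1 - exp(-r1*r2 / 2^n), the estimate quoted in the text."""
    return 1.0 - math.exp(-r1 * r2 / 2**n)


def birthday_attack(r2: int):
    """Tabulate r2 genuine variations, then try bogus ones until one matches."""
    genuine = {h(b"I owe Bob 10 dollars #%d" % i): i for i in range(r2)}
    for j in count():
        bogus = b"I owe Bob 9000 dollars #%d" % j
        if h(bogus) in genuine:
            i = genuine[h(bogus)]
            return b"I owe Bob 10 dollars #%d" % i, bogus, j + 1


print(round(success_probability(2**8, 2**8, 16), 3))  # 0.632
genuine_msg, bogus_msg, tried = birthday_attack(2**8)
print(genuine_msg, bogus_msg, tried)
```

With r1 = r2 = 2^(n/2) = 256 the estimate evaluates to 1 - exp(-1), which matches the 63% figure given above.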

A second possibility is that he collects a large number of messages and

corresponding MAC’s and divides them into two categories, which corresponds to

a known plaintext attack. The involved comparison problem does not require

r^2 operations: after sorting the data, which requires O(r log r) operations,

comparison is easy. Jueneman has shown in 1986 that for n = 64 the processing

and storage requirements were feasible in reasonable time with the computer

power available in every large organization. A time-memory-processor trade-off is

possible. If the function can be called as a black box, one can use the collision

search algorithm proposed by J.-J. Quisquater, that requires about 2·sqrt(π/2) ·

2^(n/2) operations and negligible storage.
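
A collision search with negligible storage can be sketched with generic cycle finding. The code below uses Floyd's tortoise-and-hare method on the iteration x -> h(x); it is not necessarily the exact algorithm referred to above, and the 24-bit toy hash and the function names are assumptions for the example. Only two values are kept in memory at any time.

```python
import hashlib

N_BYTES = 3  # toy 24-bit hash, so a few thousand steps are typically enough


def h(message: bytes) -> bytes:
    """Toy hash: the first N_BYTES bytes of SHA-256."""
    return hashlib.sha256(message).digest()[:N_BYTES]


def collision_search(start: bytes):
    """Floyd cycle finding on x -> h(x); stores only two values at a time."""
    # A start longer than the hash cannot itself lie on the cycle,
    # so the walk has a tail and a collision is guaranteed to exist.
    assert len(start) > N_BYTES
    tortoise, hare = h(start), h(h(start))
    while tortoise != hare:        # phase 1: meet somewhere on the cycle
        tortoise, hare = h(tortoise), h(h(hare))
    tortoise = start               # phase 2: walk both towards the cycle entry
    while h(tortoise) != h(hare):
        tortoise, hare = h(tortoise), h(hare)
    return tortoise, hare          # distinct inputs with the same hash


a, b = collision_search(b"any starting message")
print(a, b, h(a) == h(b))
```

The running time stays on the order of 2^(n/2) hash evaluations, as for the table-based attack, but no table of hash codes has to be stored or sorted.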

To avoid this attack with a reasonable safety margin, n should be at least

128 bits. This explains the second condition in Definition 2 of a CRHF. In case of

digital signatures, a sender can attack his own signature, or the receiver or a third

party could offer the signer a message he is willing to sign and replace it later with

the bogus message. Only the last attack can be thwarted through randomizing the

message just prior to signing. If the sender attacks his own signature, the

occurrence of two messages that hash to the same value might cast suspicion on the

signer, but it will be very difficult to prove the denial to a third party.

Exhaustive key search: This attack is only relevant in case of a MAC. It is a known plaintext

attack, where an attacker knows M plaintext-MAC pairs for a given key and will