2010 Final Exam with Solution Sketches

Solution Sketches

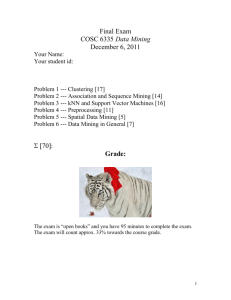

Final Exam

COSC 6335 Data Mining

December 7, 2010

Your Name:

Your student id:

Problem 1 --- Clustering [17]

Problem 2 --- Association and Sequence Mining [19]

Problem 3 --- kNN, Support Vector Machines and Ensembles [14]

Problem 4 --- Preprocessing [10]

Problem 5 --- Spatial Data Mining/Analysis [5]

Problem 6 --- PageRank [5]

:

Grade:

The exam is “open books” and you have 95 minutes to complete the exam.

The exam will count approx. 33% towards the course grade.

1) Clustering [17]

a) The DENCLUE algorithm uses density functions to form clusters. How are density

functions created by the DENCLUE algorithm from datasets? What are density

attractors? What role do density attractors play when forming clusters? [4]

The density for a query point is computed by summing up the influences of the points in the dataset on the query point; the influence of a point on the query point decreases as the point's distance to the query point increases. Density attractors are local maxima of the density function. Points that are associated with the same density attractor belong to the same cluster; hill climbing is used to find this association.
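The answer above can be illustrated with a minimal sketch. It assumes a Gaussian influence function with a hypothetical bandwidth `sigma`, and it uses a mean-shift style update for the hill climbing instead of a fixed-step gradient ascent; the function names and the toy data are made up for illustration.

```python
import math

def influence(x, p, sigma):
    """Gaussian influence of data point p on location x (assumed kernel)."""
    return math.exp(-sum((a - b) ** 2 for a, b in zip(x, p)) / (2 * sigma ** 2))

def density(query, data, sigma=1.0):
    """Density at the query point: sum of the influences of all data points."""
    return sum(influence(query, p, sigma) for p in data)

def climb(start, data, sigma=1.0, iters=100):
    """Hill-climb from start towards a local maximum (density attractor)."""
    x = tuple(start)
    for _ in range(iters):
        weights = [influence(x, p, sigma) for p in data]
        total = sum(weights)
        # Move to the influence-weighted mean of the data points.
        x = tuple(
            sum(w * p[d] for w, p in zip(weights, data)) / total
            for d in range(len(x))
        )
    return x

data = [(0.0, 0.0), (0.0, 1.0), (1.0, 1.0), (8.0, 8.0), (8.0, 9.0)]
# Points that reach the same attractor (after rounding) form one cluster.
attractors = [tuple(round(c, 2) for c in climb(p, data)) for p in data]
print(attractors)
```

In this toy dataset the first three points climb to one attractor and the last two to another, which yields two clusters.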

b) How do hierarchical clustering algorithms, such as AGNES, form dendrograms? [2]

By repeatedly merging the two closest clusters, starting with single-object clusters.
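The merging process can be sketched as follows. The sketch assumes single-link distance between clusters (the answer does not fix a linkage) and records the merge history, which is the information a dendrogram displays; `agnes` and the sample values are illustrative names and data.

```python
def agnes(points, dist):
    """Return the list of merges performed by single-link agglomeration."""
    clusters = [(p,) for p in points]  # start with one cluster per object
    merges = []
    while len(clusters) > 1:
        # Find the pair of clusters with the smallest single-link distance.
        i, j = min(
            ((i, j) for i in range(len(clusters)) for j in range(i + 1, len(clusters))),
            key=lambda ij: min(
                dist(p, q) for p in clusters[ij[0]] for q in clusters[ij[1]]
            ),
        )
        merges.append((clusters[i], clusters[j]))
        merged = clusters[i] + clusters[j]
        clusters = [c for k, c in enumerate(clusters) if k not in (i, j)] + [merged]
    return merges

merges = agnes([1, 2, 6, 7, 15], lambda a, b: abs(a - b))
for left, right in merges:
    print(left, "+", right)
```

Each printed line corresponds to one inner node of the dendrogram, from the bottom up.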

c) What are the key ideas of grid-based clustering? What applications benefit the most

from grid-based clustering? [3]

Objects in datasets are associated with grid-cells and the grid-cells themselves are clustered; therefore the complexity of grid-based clustering algorithms only depends on the number of grid-cells and not on the number of objects in the dataset [1.5]; moreover, it is known which grid-cells are neighboring [0.5], and no distance computations need to be performed [0.5].

Applications where a lot of objects have to be clustered [1]; at most 3 points!

d) Compute the Silhouette for the following clustering that consists of 2 clusters: {(0,0), (0,1), (1,1)}, {(1,2), (4,4)}; use Manhattan distance for distance computations. Compute each point’s silhouette; interpret the results (what do they say about the clustering of the 5 points; the overall clustering?)! [6]

(0,0): (5.5-1.5)/5.5 ≈ 0.73

(0,1): (4.5-1)/4.5 ≈ 0.78

(1,1): (3.5-1.5)/3.5 ≈ 0.57

(1,2): (2-5)/5 = -0.6

(4,4): (7-5)/7 ≈ 0.29

In general, the silhouette of the first 3 points is good; the silhouette of the 4th point is bad, because this point has been assigned to the wrong cluster; and the silhouette of the 5th point is mediocre because its intra-cluster distance is high due to the incorrect assignment of the point (1,2). The quality of the first cluster is decent, whereas the quality of the second cluster and the overall clustering is poor!
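The silhouette values can be re-checked with a short script. It assumes the usual definition s = (b - a) / max(a, b), where a is the average distance to the other points of the same cluster and b is the average distance to the points of the nearest other cluster, and it reads the second point of the first cluster as (0,1). The function names are illustrative; the sketch also assumes every cluster has at least two distinct points.

```python
def manhattan(p, q):
    return sum(abs(a - b) for a, b in zip(p, q))

def silhouettes(clusters):
    """Return {point: (a, b, s)} for a list of clusters (lists of points)."""
    result = {}
    for i, cluster in enumerate(clusters):
        for p in cluster:
            others = [q for q in cluster if q != p]
            a = sum(manhattan(p, q) for q in others) / len(others)
            b = min(
                sum(manhattan(p, q) for q in other) / len(other)
                for j, other in enumerate(clusters)
                if j != i
            )
            result[p] = (a, b, (b - a) / max(a, b))
    return result

clusters = [[(0, 0), (0, 1), (1, 1)], [(1, 2), (4, 4)]]
for point, (a, b, s) in silhouettes(clusters).items():
    print(point, f"a={a:.1f} b={b:.1f} s={s:.2f}")
```

Under this definition the point (4,4) has a = 5 and b = 7, so its silhouette is (7-5)/7, a small positive value.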

e) How does subspace clustering differ from traditional clustering algorithms, such

as k-means? [2]

K-means finds clusters in the complete attribute space, whereas subspace clustering finds clusters in subspaces of the complete attribute space. If someone mentions that subspace clustering returns overlapping clusters, you can give an extra single point for that!

2) Association Rule and Sequence Mining [19]

a) What is the anti-monotonicity property of frequent itemsets? How does APRIORI

take advantage of this property to create frequent itemsets efficiently? [4]

If A is frequent, every subset of A is frequent; equivalently, if A is infrequent, every superset of A is infrequent. [1.5]

1. By creating k-itemsets based on frequent (k-1)-itemsets [1.5]

2. By pruning k-itemsets for which some (k-1)-subset is not frequent [1]
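The two steps can be sketched as a small candidate generator. This is a simplified illustration, not the textbook's exact pseudocode; `apriori_gen` and the sample itemsets are illustrative, and items are assumed to be sortable.

```python
from itertools import combinations

def apriori_gen(frequent_prev):
    """Build k-itemset candidates from frequent (k-1)-itemsets, then prune."""
    prev = {tuple(sorted(s)) for s in frequent_prev}
    candidates = set()
    for a in prev:
        for b in prev:
            # Join step: merge two itemsets that agree on their first k-2 items.
            if a[:-1] == b[:-1] and a[-1] < b[-1]:
                candidates.add(a + (b[-1],))
    # Prune step: drop every candidate that has an infrequent (k-1)-subset.
    return {
        c for c in candidates
        if all(sub in prev for sub in combinations(c, len(c) - 1))
    }

frequent_2 = [("a", "b"), ("a", "c"), ("b", "c"), ("b", "d")]
print(apriori_gen(frequent_2))
```

Here the join step produces (a, b, c) and (b, c, d); the second candidate is pruned because its subset (c, d) is not frequent, so its support never has to be counted.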

b) Assume the Apriori-style sequence mining algorithm described on pages 429-435 of

our textbook is used and the algorithm generated 3-sequences listed below:

Frequent 3-sequences:

<(1) (2) (3)>

<(1) (2 3)>

<(1 2 4)>

<(1) (2) (4)>

<(1) (3) (4)>

<(1 2) (3)>

<(2 3) (5)>

<(2 3) (4)>

<(2) (3) (4)>

Candidate Generation:

<(1) (2) (3) (4)>

<(1) (2 3) (4)>

<(1) (2 3) (5)>

<(1 2) (3) (4)>

Candidates that survived pruning:

<(1) (2) (3) (4)>

<(1) (2 3) (4)>

What candidate 4-sequences are generated from this 3-sequence set? Which of the

generated 4-sequences survive the pruning step? Use format of Figure 7.6 in the textbook

on page 435 to describe your answer! [5]
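The pruning step for the four generated candidates can be re-checked with a small sketch. It assumes that a candidate survives only if every 3-subsequence obtained by deleting one item is frequent, and it reads the damaged entries of the list as <(1) (2 3)> and <(2 3) (5)>. Sequences are encoded as tuples of tuples; the helper names are illustrative.

```python
def drop_one_item(seq):
    """Yield every subsequence obtained by deleting a single item from seq."""
    for i, element in enumerate(seq):
        for item in element:
            rest = tuple(x for x in element if x != item)
            # An element that becomes empty disappears from the sequence.
            yield seq[:i] + ((rest,) if rest else ()) + seq[i + 1:]

def survives(candidate, frequent):
    return all(sub in frequent for sub in drop_one_item(candidate))

frequent_3 = {
    ((1,), (2,), (3,)), ((1,), (2, 3)), ((1, 2, 4),),
    ((1,), (2,), (4,)), ((1,), (3,), (4,)), ((1, 2), (3,)),
    ((2, 3), (5,)), ((2, 3), (4,)), ((2,), (3,), (4,)),
}
candidates = [
    ((1,), (2,), (3,), (4,)), ((1,), (2, 3), (4,)),
    ((1,), (2, 3), (5,)), ((1, 2), (3,), (4,)),
]
survivors = [c for c in candidates if survives(c, frequent_3)]
print(survivors)
```

With this encoding, <(1) (2 3) (5)> is pruned because <(1) (3) (5)> is not frequent, and <(1 2) (3) (4)> is pruned because <(1 2) (4)> is not frequent.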

c) What is the idea of hash-based/bucket-based implementations of APRIORI outlined in

the “Top 10 Algorithms…” paper? How do they speed up APRIORI? [5]

Itemsets are hashed to buckets using a hashing function, allowing itemsets to be found more quickly. More importantly, if the count in a bucket (that might contain different itemsets) is less than a threshold, then the itemsets in the bucket can be pruned…The dataset is subdivided into n separate partitions such that… each partition can be mined separately.

Remark: horizontal partitioning does not use hashing!
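The bucket-pruning idea can be sketched for 2-itemsets as follows. This is an illustration in the spirit of hash-based counting, not the paper's exact procedure: the hash function, the number of buckets, and the transactions are all made up, and items are assumed to be small integers.

```python
from collections import Counter
from itertools import combinations

def bucket_of(pair, n_buckets):
    a, b = pair
    return (a * 10 + b) % n_buckets  # illustrative hash function

def hashed_pair_candidates(transactions, minsup, n_buckets=7):
    """Count items and hashed pair buckets in one pass, then prune pairs."""
    item_counts, bucket_counts = Counter(), Counter()
    for t in transactions:
        item_counts.update(t)
        for pair in combinations(sorted(t), 2):
            bucket_counts[bucket_of(pair, n_buckets)] += 1
    frequent_items = sorted(i for i, c in item_counts.items() if c >= minsup)
    # A pair can only be frequent if its bucket count reaches minsup.
    return [
        pair for pair in combinations(frequent_items, 2)
        if bucket_counts[bucket_of(pair, n_buckets)] >= minsup
    ]

transactions = [{1, 2, 3}, {1, 2}, {2, 3}, {1, 2, 4}, {3, 4}]
print(hashed_pair_candidates(transactions, minsup=2))
```

In this example the pairs (1, 4) and (2, 4) are pruned without ever being counted individually. The pairs (1, 3) and (3, 4) collide in one bucket and stay as candidates although each occurs only once, which shows that bucket pruning is safe but not exact.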

d) Give an example (just one example please!) of a potential commercial application of mining sequential patterns! Be specific about how sequence mining would be used in the proposed application! [5]

No answer given

3) kNN, SVM, & Ensembles [14]

a) The soft margin support vector machine solves the following optimization problem:

What does the second term minimize? What does ξi measure (refer to the figure given below if helpful)? What is the advantage of the soft margin approach over the linear SVM approach? [5]

It minimizes the squared error. [1] ξi is 0 if a black point (white point) is at or above (below) the …=+1 (…=-1) line; otherwise, it takes the value of the distance of the black (white) point to the …=+1 (…=-1) line. [2] The soft margin approach can cope with problems that are not linearly separable. [2]
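The optimization problem itself is not reproduced in this copy. For orientation only, one common textbook formulation of the soft margin problem is given below; this is an assumption, and the exam's own formula may differ, for instance in the exponent applied to the slack variables. The symbols w, b, C, N and the slack variables ξ_i follow the usual convention.

```latex
\min_{w,\,b,\,\xi}\ \frac{1}{2}\lVert w\rVert^{2} + C\sum_{i=1}^{N}\xi_i
\quad\text{subject to}\quad
y_i\,(w\cdot x_i + b)\ \ge\ 1-\xi_i,\qquad \xi_i\ \ge\ 0,\qquad i=1,\dots,N
```

In this form the first term maximizes the margin, the second term penalizes margin violations, and at the optimum ξ_i = max(0, 1 - y_i (w · x_i + b)), which is zero for every example on the correct side of its margin line.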

b) Why are kNN (k-nearest-neighbor) approaches called lazy? [2]

They delay computing the model until it is needed, that is, until an example has to be classified.
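A minimal sketch makes the laziness visible: training only stores the examples, and all distance computations happen when a query arrives. The class name `LazyKNN`, the squared Euclidean distance, and the toy data are assumptions made for illustration.

```python
from collections import Counter

class LazyKNN:
    def __init__(self, k=3):
        self.k = k

    def fit(self, examples, labels):
        # "Training" just memorizes the data; no model is computed here.
        self.examples, self.labels = list(examples), list(labels)
        return self

    def predict(self, query):
        # All the work is deferred to prediction time.
        def sq_dist(i):
            return sum((a - b) ** 2 for a, b in zip(self.examples[i], query))
        nearest = sorted(range(len(self.examples)), key=sq_dist)[: self.k]
        return Counter(self.labels[i] for i in nearest).most_common(1)[0][0]

points = [(0, 0), (0, 1), (1, 0), (5, 5), (5, 6), (6, 5)]
labels = ["a", "a", "a", "b", "b", "b"]
model = LazyKNN(k=3).fit(points, labels)
print(model.predict((0.2, 0.1)), model.predict((5.5, 5.5)))
```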

c) Ensemble approaches obtained high accuracies for challenging classification tasks.

Explain why! [3]

If the base classifiers used are independent or at least partially independent, combining base classifiers and making decisions by majority vote enhances the accuracy significantly, even if the base classifiers are not very good. Other correct answers exist!
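The effect of independence can be quantified with a short calculation. The sketch assumes n fully independent base classifiers with the same error rate and an odd n, so that a majority vote is wrong exactly when more than half of the classifiers are wrong; the numbers 25 and 0.35 are illustrative.

```python
from math import comb

def majority_vote_error(n, eps):
    """Probability that more than half of n independent classifiers err."""
    return sum(
        comb(n, k) * eps ** k * (1 - eps) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )

single = 0.35
ensemble = majority_vote_error(25, single)
print(f"single classifier error: {single:.2f}, ensemble error: {ensemble:.3f}")
```

Under these assumptions the ensemble error is far below the error of a single classifier. With correlated classifiers the gain shrinks, which is why the independence condition matters.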

d) What role do example weights play for the AdaBoost algorithm? Why does AdaBoost

modify weights of training examples? [4]

The weight determines with what probability an example is picked as a training example. [1.5] The key idea behind modifying weights is to obtain high weights for examples that are misclassified, ultimately forcing the classification algorithm to learn a different classifier that classifies those examples correctly, but likely misclassifies other examples. [2.5]
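One round of the weight update can be sketched as follows. The sketch assumes the common formulation in which the classifier's importance is alpha = 0.5 * ln((1 - error) / error), misclassified examples are multiplied by exp(alpha), correctly classified ones by exp(-alpha), and the weights are then renormalized. It also assumes 0 < error < 1; the function name is illustrative.

```python
import math

def adaboost_reweight(weights, misclassified):
    """Return (alpha, new_weights) after one boosting round."""
    error = sum(w for w, wrong in zip(weights, misclassified) if wrong)
    alpha = 0.5 * math.log((1 - error) / error)
    updated = [
        w * math.exp(alpha if wrong else -alpha)
        for w, wrong in zip(weights, misclassified)
    ]
    total = sum(updated)  # normalization so the weights sum to 1
    return alpha, [w / total for w in updated]

weights = [0.2] * 5
alpha, new_weights = adaboost_reweight(weights, [False, False, True, False, False])
print(alpha, new_weights)
```

In this example the single misclassified example ends up with weight 0.5, so it is much more likely to be drawn into the next training sample than any of the four correctly classified examples.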

4) Preprocessing [10]

a) What is the goal of attribute normalization techniques, such as z-scores? Assume a

normalized attribute A of an object has a z-score of ; what does this say about the

object’s attribute value in relationship to the values of other objects? [3]

Make attributes equally important/make similarity assessment independent of how values of attributes are measured [2]; it has the mean value [1]
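A small sketch shows why z-scores make similarity assessment independent of the unit of measurement. It uses the population standard deviation, which is one possible choice; the height values are made up.

```python
from statistics import mean, pstdev

def z_scores(values):
    """Standardize values to zero mean and unit (population) deviation."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma for v in values]

heights_cm = [150, 160, 170, 180, 190]
heights_m = [h / 100 for h in heights_cm]

print(z_scores(heights_cm))
print(z_scores(heights_m))
```

Both calls yield the same z-scores up to rounding error, although the raw values differ by a factor of 100. An object whose value equals the attribute's mean (170 cm here) gets a z-score of 0.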

b) What does it mean if an attribute is redundant for a classification problem? Why are redundant attributes usually removed by preprocessing? [2]

Its values can be computed using the values of other attributes. [1] Reduce classification algorithm complexity/enhance accuracy! [1]

c) What is the goal of feature creation? Give an example where feature creation enhances the accuracy of a classification algorithm! [5]

No solution given! Many possible examples! If you do not provide any convincing evidence why the suggested feature creation example enhances accuracy: only 3 points.

5) Spatial Data Mining [5]

What are the main challenges in mining spatial datasets; how does mining spatial datasets differ from mining business datasets?

No answer given!

6) PageRank [5]

How does the PageRank algorithm measure the importance of a webpage? Give a sketch

of the computational methods it uses to compute the importance of a webpage!

The importance of a webpage is recursively defined by summing up the importance of the

webpages that point to that webpage[2]. …random walkthough[1.5]….
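The recursive definition and the random-walk view can be sketched with a power iteration. The damping factor 0.85, the fixed number of iterations, the even redistribution of rank from pages without outgoing links, and the four-page link graph are all assumptions made for illustration.

```python
def pagerank(links, damping=0.85, iters=50):
    """links maps each page to the list of pages it points to."""
    pages = list(links)
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iters):
        # Random jump: with probability 1 - damping the surfer teleports.
        new = {p: (1 - damping) / n for p in pages}
        for p, outs in links.items():
            targets = outs or pages  # dangling page: spread rank evenly
            for q in targets:
                # A page passes its importance on to the pages it points to.
                new[q] += damping * rank[p] / len(targets)
        rank = new
    return rank

links = {"A": ["B", "C"], "B": ["C"], "C": ["A"], "D": ["C"]}
ranks = pagerank(links)
print(sorted(ranks.items(), key=lambda kv: -kv[1]))
```

Page C receives links from three pages and ends up with the highest rank, while page D, which no page points to, keeps only the rank it gets from random jumps.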
