full_text

advertisement

Binary Classification Trees for Multi-class Classification Problems

Jin-Seon Lee* and Il-Seok Oh**

*

Department of Computer Engineering, Woosuk University, Korea

**

Department of Computer Science, Chonbuk National University, Korea

jslee@woosuk.ac.kr, isoh@moak.chonbuk.ac.kr

Abstract

This paper proposes a binary classification tree aiming at

solving multi-class classification problems using binary

classifiers. The tree design is achieved in a way that a

class group is partitioned into two distinct subgroups at a

node. The node adopts the class-modular scheme to

improve the binary classification capability. The

partitioning is formulated as an optimization problem and

a genetic algorithm is proposed to solve the optimization

problem. The binary classification tree is compared to the

conventional methods in terms of classification accuracy

and timing efficiency. Experiments were performed with

numeral recognition and touching-numeral pair

recognition.

1. Introduction

The pattern recognition problems involve the small-set

classifications like numeral or English alphabet

recognitions and the large-set classifications with

hundreds or thousands of classes such as touchingnumeral pair, Korean character, and Chinese character

recognitions. These multi-class classifications can be

solved using a set of binary classifiers [1].

This paper deals with designing a multi-class classifier

using a collection of binary classifiers. Firstly we should

have a method to decompose the original multi-class

classification into a set of binary classification subproblems. We mention on two conventional approaches:

class-pairwise and class-modular schemes.

Another approach can be found in the tree-structured

classifications [Chapter 8, 1]. The CART splits the

training samples into two groups at a node, and applies the

same process recursively to two children [2]. Perrone used

the confusion matrix to find out the best class partition [3].

Chae et al. used the linear decision function at a node to

partition the training samples. They designed the trees

using a genetic algorithm [4].

In this paper, a new binary classification tree (BCT) is

proposed. In our BCT, the tree design is achieved in a

way that a class group is partitioned into two distinct

subgroups at a node. The node has a binary classifier that

assigns a sample pattern to one of two class subsets. To

gain a higher accuracy, the class-modular scheme is

adopted for implementing the binary classification at a

node. An optimization problem is formulated in order to

search for the best partition, i.e. the one showing the

largest separability. A genetic algorithm that searches for

the best partition will be presented. The same process is

applied to each of the two children recursively until a leaf

node with only one class is reached.

Our BCT method is compared to the conventional

methods in terms of classification accuracy and timing

efficiency. Experiments were performed with numeral

recognition and touching numeral pair recognition. A

promising preliminary result was obtained.

2. Class-decomposed Classifications

Assume there are K classes and k-th class is denoted

by ωk. X represents a feature vector of a sample to be

recognized.

2.1 Class-pairwise scheme

This method constructs a binary classifier for each of

the class pairs. So totally KC2 binary classifiers are needed

to solve K-class classification problems. For example, 45

binary classifiers are made for the numeral recognition.

When K is large, the number of binary classifiers is large

and this method is unpractical. The decision rules are as

follow. The dij is a binary classifier that discriminates the

classes ωi and ωj.

dij(X) = 1 if Xωi, 0 if Xωj

pi(X) = j=1,K and ji dij(X)

Classify X into ωk if k = argmaxi=1,K pi(X)

2.2 Class-modular scheme

This method decomposes the K-class classification

problem into K binary classification sub-problems. A

binary classifier for the class ωi discriminates ωi from the

remaining K-1 classes, {ωj | j=1,K and ji}. So totally K

binary classifiers are required. For the numerals, 10 binary

classifiers are made and for a large-set classification

problem this method is not unpractical. The decision rules

are as follow. The di is a binary classifier for the class ωi.

di(X) = 1 if Xωi, 0 if X{ωj| j=1,K and ji}

Classify X into ωk if k = argmax i=1,K di(X)

3. Binary Classification Trees

This section proposes the binary classification tree

(BCT) as a multi-class classification method using binary

classifiers. An internal node has a class set and also a

binary classifier that discriminates a sample pattern into

one of two class subsets. A leaf node has only one class.

3.1 Structures

The root node is allocated the original class set,

S={ω1, ω2,… ωK}. In the node, S is partitioned into two

class subsets, Sleft and Sright where Sleft, Sright and

S=SleftSright. The Sleft is given to the left child and the

Sright to the right child. This process is applied to each of

the left and right children recursively. When the class set

has only one class, the node becomes a leaf node and the

recursive process terminates.

If the following condition with b=1 is adopted in the

BCT design process, a balanced tree is resulted in. When

no condition is used, a skewed tree is likely to be

generated.

abs(|Sleft|–|Sright|)b where|.| represents the set size ---- (1)

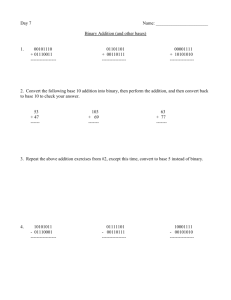

Figure 1 shows a BCT for the numeral recognition

problem (K=10). The numbers of leaf and internal nodes

are K and K-1, respectively [5]. Since only the internal

node has a binary classifier, K-1 binary classifiers are

made.

(a) Balanced BCT

(b) Skewed BCT

Figure 1. An example BCT (K=10 for numerals).

In the above design process, the most important

problem is to search for the best partition in the internal

nodes. In searching for the best partition, the search space

grows exponentially as S becomes large. So we should

develop a sub-optimal algorithm that is computationally

practical and guarantees a solution near the optimum

point.

The above problem of partitioning the class set is

similar to the feature selection problem. The searching

process needs an objective function that evaluates a

partition. Our objective function can be formulated in

terms of the separability, i.e. how well the two class

subsets are discriminated in the feature space. At the

internal nodes near the root, the confusing class pairs are

enforced to be put into the same class subsets. The

discrimination of the confusing pairs is delayed and the

task is given to the nodes near the leaf node. This strategy

is adopted since the nodes near the root should deal with a

larger number of classes than ones near the leaf.

The measurement of the separability can be done in

the wrapper or filter approaches [6]. In the wrapper

approach, specific classifier and database are used to

evaluate the partition. The actual recognition rates or the

confusion matrices can be used. In the filter approach, a

statistic is used like the measurement of overlapping of the

training samples of Sleft and Sright in the feature space. Let

us denote the separability function by J(Sleft, Sright).

3.2 Binary classification at nodes

Each internal node should implement a binary

classifier that classifies a sample X into Sleft or Sright. The

performance of the binary classifiers at the internal nodes

directly influences the final performance of the BCT.

One simple rule follows. It constructs one binary

classifier d(X) at a node.

Rule 1:

Training:

For X from class ωi,

if(ωiSleft) d(X)=0, otherwise d(X)=1.

Recognition:

For X,

if(d(X)0.5) move X to the left,

otherwise move X to the right.

Another rule adopts the class-modular scheme as

shown below. It constructs |S| binary classifiers where |S|

represents the number of classes in S.

Rule 2:

Training:

For X from class ωi,

di(X)=1, dj(X)=0 for j=1,K and ji.

Recognition:

For X,

k = argmax i=1,K di(X)

if(wkSleft) move X to the left,

otherwise move X to the right.

3.3 Genetic algorithm for optimal partitions

The GA is a stochastic algorithm that mimics the

natural evolution. The most distinct aspect of the

algorithm is that it maintains a set of solutions (called

individuals or chromosomes) in a population. Like the

biological evolution, it has a mechanism of selecting fitter

chromosomes at each generation. To simulate the

evolution, the selected chromosomes undergo genetic

operations such as the crossover and mutation.

The steady-state procedure is described below.

Detailed specification of our GA follows. The algorithm

was designed relying on the feature selection algorithm

proposed in [7].

steady_state_GA() {

initialize population P;

repeat {

select two parents p1 and p2 from P;

offspring = crossover(p1,p2);

mutation(offspring);

replace(P, offspring);

} until (stopping condition);

}

A string with K binary digits is used where K

represents the number of classes in S. A binary digit

represents a class; values 0 and 1 mean the membership of

Sleft and Sright, respectively. As an example, chromosome

01101001 means a partition of Sleft = {ω1,ω4,ω6,ω7} and

Sright = {ω2,ω3,ω5,ω8}. The generation of initial population

is straightforward. For each gene, if a random floating

number within [0,1] is less than 0.5, the gene is set to be

0. Otherwise the gene is set to be 1.

The fitness of a chromosome is evaluated by the

separability function J(Sleft, Sright). The chromosome

selection for the next generation is done on the basis of

the fitness. The selection mechanism should ensure that

fitter chromosomes have higher probability of survival.

Our design adopts the rank-based roulette-wheel selection

scheme. The chromosomes in population are sorted nonincreasingly in terms of fitness, and the i-th chromosome

is assigned the probability of selection by a nonlinear

function, P(i) = q(1-q)i-1. A larger value of q enforces

evidently a stronger selection pressure. The actual

selection is done using the roulette wheel procedure.

We use the standard crossover and mutation operators

with a slight modification. An m-point crossover operator

is used which chooses m cutting points at random and

alternately copies each segment out of the two parents.

The crossover may result in offspring that break the

balance requirement because the exchanged gene

segments may have different numbers of 1. The mutation

is applied to the offspring by converting 0-bit to 1–bit or

1-bit to 0-bit with the mutation probability pm. After

mutation, some bits are chosen randomly and converted to

make the chromosome satisfy the tree balancing condition

in (1). This gives further the mutation effect.

If the mutated chromosome is superior to both parents,

it replaces the similar parent; if it is inbetween the two

parents, it replaces the inferior parent; otherwise, the most

inferior chromosome in the population is replaced. The

GA stops when the number of generations reaches the

preset maximum generation T.

No systematic parameter optimization process has

been attempted, but the following parameter set was used

in our experiments: 20 for the population size, 0.1 for the

mutation rate (pm), and 0.25 for q in rank-based selection.

3.4 Exhaustive and sequential search algorithms

each internal node. We prepare the training set by

removing the samples that do not belong to S.

4.2 Recognition

The following two algorithms are designed by

modifying the feature selection algorithms in [8].

(1) Exhaustive search

Every possible partition is generated and evaluated,

and the best partition is chosen as the solution. This

algorithm guarantees the optimal solution. However, the

search space size is O(2K), so this algorithm is unpractical

for the large K.

(2) Sequential search algorithms

These algorithms are very similar to SFS, PTA, and

SFFS algorithms for the feature selection. The following

is a SFS-like algorithm.

Sleft=; Sright=S; max=-1;

while(! Sright) {

k=argmax ωjSright J(Sleftωj, Sright-ωj);

Sleft=Sleftωk; Sright=Sright-ωk;

if(J(Sleft, Sright)>max) {

max = J(Sleft, Sright);

store Sleft and Sright as new optimum;

}

}

return(optimal Sleft and Sright);

4. Training and Recognition

4.1 Training

When we use the Rule 1 in Section 3.2, the training of

a BCT is straightforward. The internal nodes have their

own binary classifiers that are to be trained independently

of other nodes. What we should do is to prepare the

training set by re-organizing the samples in the original

training set into two groups, one for Sleft and the other for

Sright. In our implementation, we use the binary MLP

(Multiple-Layer Perceptron) proposed in [9]. The backpropagation learning algorithm is used to train the binary

MLP classifiers. The expected outputs for the samples in

Sleft and Sright are set to 0 and 1, respectively.

If the Rule 2 is used to implement the node classifiers,

a class-modular classifier is constructed and trained at

In the recognition phase, the root node of BCT

receives the input pattern and attempts to classify the

pattern by using its binary classifier. The pattern moves to

one of the left or right child nodes according to the

classification result.

Let us first introduce the recognition method for the

BCT designed with Rule 1. Assuming d to be the output

of the binary classifier and to be between 0 and 1, the

sample moves to the left child node if the value of d

satisfies the condition 0d0.5. Otherwise the sample

moves to the right child node. This process is applied

recursively. When the sample reaches to the leaf node, the

recognition process terminates by reporting the class label

in the leaf node. We can allow the rejection by adjusting

the rejection parameter α defined in the decision space as

in Figure 2.

Sleft

Sright

reject

α

α

Figure 2. Rejection parameter in decision space.

If we design the BCT using the Rule 2, another

recognition method should be used. Following the Rule 2,

the sample moves left or right at internal nodes. When the

sample reaches to the leaf node, the recognizer reports the

class label in the leaf node.

4.3 Timing analysis of recognition

The timing efficiency of the recognition stage is

measured in terms of the number of binary classifiers to

be executed. The best case arises when the BCT is well

balanced, i.e. when every internal node satisfies the

condition in (1) with b=1. In this case, the depth of BCT is

not greater than ceil(log2K) and the number of binary

classifiers for the balanced BCT using the Rule 1 is the

same as the tree depth. The worst case is when every

partition is such that |Sleft|=1 and |Sright|=|S|-1. The tree is

skewed and the maximum number of binary classifiers is

K-1. For the balanced BCT using the Rule 2, the number

of binary classifiers is about double of K. Table 1

compares those numbers with the ones for class-modular

and class-modular.

Table 1. Comparison of timing efficiency (unit: number of

binary classifiers)

Numerals

(K=10)

Balanced BCT with Rule 1

4

Balanced BCT with Rule 2

20

Class-modular

10

Class-pairwise

45

Touching numeral pairs

(K=100)

7

200

100

4950

5. Experiments

Two different character sets, numerals (K=10) and

touching-numeral pairs (K=100), were chosen for the

experiments. For the numerals, CENPARMI database was

used. For the touching-numeral pairs, the database in [10]

was chosen.

For training the binary MLP, we used the error backpropagation algorithm. A constant learning rate, 0.2, was

used. The input samples are fed after shuffling their order

at every epoch. The initial weight values are obtained by

generating random numbers ranging from -0.5 to +0.5.

The number of hidden nodes was 4.

Table 2 compares the recognition performances of

BCT and class-modular for the numeral and touchingnumeral pair recognitions. The rejection was not allowed

and the recognition rates are for the test sets. The BCT

using the Rule 1 is inferior to the class-modular by 1.25%

for the numerals. However the BCT using the Rule 2 is

superior to class-modular by 0.45%. For the recognition

time, BCT with the Rule 1 is more than two times faster

than class-modular. On the contrary, BCT with the Rule 2

is about two times slower than class-modular.

The TNP recognition is regarded as a large-set

classification problem. For the problem, BCT using the

Rule 2 produced a superior result to the class-modular. It

improves the rate by about 1%. Like the numerals, BCT

using the Rule 1 is inferior to class-modular. For timing

efficiency, BCT with the Rule 1 is dramatically faster than

the others. The BCT with the Rule 2 used about twice the

computation time of class-modular as the analysis in

Table 1.

Table 2. Recognition performance of BCT and classmodular (no rejection)

BCT

Classmodular

Rule 1

Rule 2

Numerals

96.05% 97.75%

97.30%

TNP

72.93%

76.36%

75.47%

6. Concluding Remarks

The binary classification tree was proposed and some

experimental results using the character recognition

problems including the large-set classification were

presented. The recognition accuracies were improved by

the BCT using the Rule 2. The BCT consumed about

twice the computation time of the class-modular.

Our future works are focused on improving the

classification accuracy of the BCT. Several approaches

are under considerations. Currently the BCT design uses a

local optimization. A global optimization method that

searches simultaneously the partitions of the parent and

children would improve the performance. Another

improvement can be made by using the class-dependent

feature sets and classifiers. In this framework, some nodes

may use MLP while others use SVM. And each node

designs its own feature set that provides the best

classification.

7. References

[1]

R.O. Duda, P.E. Hart, and D.G. Stork, Pattern

Classification, 2nd Ed., Wiley-interscience, 2001.

[2] L. Breiman, J.H. Friedman, R.A. Olshen, and C.J. Stone,

Classification and Regression Trees, Chapman & Hall/CRC,

1984.

[3] M.P. Perrone, “A soft-competitive splitting rule for adaptive

tree-structured neural networks,” Proc. of IEEE

International Joint Conf. on Neural Networks, pp.689-693,

1992.

[4] B.-B. Chae, T. Huang, X. Zhuang, Y. Zhao, J. Sklansky,

“Piecewise linear classifiers using binary tree structure and

genetic algorithm,” Pattern Recognition, Vol.29, No.11,

pp.1905-1917, 1996.

[5] E. Horowitz, S. Sahni, and D. Mehta, Fundamentals of Data

Structures in C++, Computer Science Press, 1995.

[6] P. Langley, “Selection of relevant features in machine

learning,” Proc. of AAAI Fall Symposium on Relevance,

pp.1-5, 1994.

[7] I.-S. Oh, J.-S. Lee, and B.-R. Moon, “Hybrid genetic

algorithms for feature selection,” IEEE Tr. on PAMI,

(submitted).

[8] J. Kittler, "Feature selection and extraction," in Handbook of

Pattern Recognition and Image Processing, Academic Press

(Edited by T.Y. Young and K.S. Fu), pp.59-83, 1986.

[9] I.-S. Oh and C.Y. Suen, “A class-modular feedforward

neural network for handwriting recognition,” Pattern

Recognition, Vol.35, pp.229-244, 2002.

[10] S.-M. Choi, “Segmentation-free recognition of handwritten

numeral strings using neural networks,” Ph.D. thesis,

Chonbuk National University, 2000 (in Korean).