A History of Vector Analysis


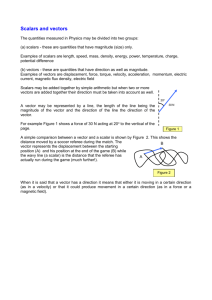

VECTOR ANALYSIS

5 MARKS

1 History of Vector Analysis

A History of Vector Analysis (1967) is a book on the history of vector analysis by Michael J. Crowe, originally published by the University of Notre Dame Press. As a scholarly treatment of a reformation in technical communication, the text is a contribution to the history of science. In 2002, Crowe gave a talk[1] summarizing the book, including an entertaining introduction in which he covered its publication history and related the award of a Jean Scott prize of $4000. Crowe had entered the book in a competition for "a study on the history of complex and hypercomplex numbers" twenty-five years after his book was first published.

Summary of book

The book has eight chapters: the first on the origins of vector analysis, including Ancient Greek and 16th and 17th century influences; the second on the 19th century William Rowan Hamilton and quaternions; the third on other 18th and 19th century vectorial systems; the fourth on the general interest in vectorial systems in the 19th century, including analysis of journal publications as well as sections on major figures and their views (e.g., Peter Guthrie Tait as an advocate of quaternions and James Clerk Maxwell as a critic of quaternions); the fifth on Josiah Willard Gibbs and Oliver Heaviside and their development of a modern system of vector analysis.

In chapter six, "Struggle for existence", Michael J. Crowe delves into the zeitgeist that pruned down quaternion theory into vector analysis on three-dimensional space. He makes clear the ambition of this effort by considering five major texts as well as a couple dozen articles authored by participants in "The Great Vector Debate".
These are the books:

- Elementary Treatise on Quaternions (1890), Peter Guthrie Tait
- Elements of Vector Analysis (1881, 1884), Josiah Willard Gibbs
- Electromagnetic Theory (1893, 1899, 1912), Oliver Heaviside
- Utility of Quaternions in Physics (1893), Alexander McAulay
- Vector Analysis and Quaternions (1906), Alexander Macfarlane

Twenty of the ancillary articles appeared in Nature; others were in Philosophical Magazine, the London or Edinburgh Proceedings of the Royal Society, Physical Review, and Proceedings of the American Association for the Advancement of Science. The authors included the book authors, Cargill Gilston Knott, and a half-dozen other hands.

The "struggle for existence" is a phrase from Charles Darwin's Origin of Species, and Crowe quotes Darwin: "…young and rising naturalists,…will be able to view both sides of the question with impartiality." After 1901, with the Gibbs/Wilson/Yale publication Vector Analysis, the question was decided in favour of the vectorialists with separate dot and cross products. The pragmatic temper of the times set aside the four-dimensional source of vector algebra.

Crowe's chapter seven is a survey of "Twelve major publications in Vector Analysis from 1894 to 1910". Of these twelve, seven are in German, two in Italian, one in Russian, and two in English. Whereas the previous chapter examined a debate in English, this chapter notes the influence of Heinrich Hertz's results with radio and the rush of German research using vectors. Joseph George Coffin of MIT and Clark University published his Vector Analysis in 1909; it too leaned heavily into applications. Thus Crowe provides a context for Gibbs and Wilson's famous textbook of 1901.

The eighth chapter is the author's summary and conclusions.[2] The book relies on references in chapter endnotes instead of a bibliography section. Crowe also states that the Bibliography of the Quaternion Society, and its supplements to 1912, already listed all the primary literature for the study.
2 Vector operations

Algebraic operations

The basic algebraic (non-differential) operations in vector calculus are referred to as vector algebra, being defined for a vector space and then globally applied to a vector field, and consist of:

- scalar multiplication: multiplication of a scalar field and a vector field, yielding a vector field: $a\,\mathbf{v}$;
- vector addition: addition of two vector fields, yielding a vector field: $\mathbf{v}_1 + \mathbf{v}_2$;
- dot product: multiplication of two vector fields, yielding a scalar field: $\mathbf{v}_1 \cdot \mathbf{v}_2$;
- cross product: multiplication of two vector fields, yielding a vector field: $\mathbf{v}_1 \times \mathbf{v}_2$.

There are also two triple products:

- scalar triple product: the dot product of a vector and a cross product of two vectors: $\mathbf{v}_1 \cdot (\mathbf{v}_2 \times \mathbf{v}_3)$;
- vector triple product: the cross product of a vector and a cross product of two vectors: $\mathbf{v}_1 \times (\mathbf{v}_2 \times \mathbf{v}_3)$ or $(\mathbf{v}_3 \times \mathbf{v}_2) \times \mathbf{v}_1$;

although these are less often used as basic operations, as they can be expressed in terms of the dot and cross products.

Differential operations

Vector calculus studies various differential operators defined on scalar or vector fields, which are typically expressed in terms of the del operator ($\nabla$), also known as "nabla". The four most important differential operations in vector calculus are the gradient ($\nabla f$), the divergence ($\nabla \cdot \mathbf{F}$), the curl ($\nabla \times \mathbf{F}$), and the Laplacian ($\nabla^2 f = \nabla \cdot \nabla f$).

3 Generalizations

Nonlinear generalizations

Linear PCA versus nonlinear Principal Manifolds[22] for visualization of breast cancer microarray data: a) Configuration of nodes and 2D Principal Surface in the 3D PCA linear manifold. The dataset is curved and cannot be mapped adequately on a 2D principal plane; b) The distribution in the internal 2D non-linear principal surface coordinates (ELMap2D) together with an estimation of the density of points; c) The same as b), but for the linear 2D PCA manifold (PCA2D). The "basal" breast cancer subtype is visualized more adequately with ELMap2D and some features of the distribution become better resolved in comparison to PCA2D. Principal manifolds are produced by the elastic maps algorithm.
Data are available for public competition.[23] Software is available for free non-commercial use.[24]

Most of the modern methods for nonlinear dimensionality reduction find their theoretical and algorithmic roots in PCA or K-means. Pearson's original idea was to take a straight line (or plane) which will be "the best fit" to a set of data points. Principal curves and manifolds[25] give the natural geometric framework for PCA generalization and extend the geometric interpretation of PCA by explicitly constructing an embedded manifold for data approximation, and by encoding using standard geometric projection onto the manifold, as illustrated in the figure. See also the elastic map algorithm and principal geodesic analysis.

Multilinear generalizations

In multilinear subspace learning, PCA is generalized to multilinear PCA (MPCA), which extracts features directly from tensor representations. MPCA is solved by performing PCA in each mode of the tensor iteratively. MPCA has been applied to face recognition, gait recognition, etc. MPCA is further extended to uncorrelated MPCA, non-negative MPCA and robust MPCA. Higher order N-way principal component analysis may be performed with models such as Tucker decomposition, PARAFAC, multiple factor analysis, co-inertia analysis, STATIS, and DISTATIS.

Robustness - Weighted PCA

While PCA finds the mathematically optimal method (as in minimizing the squared error), it is sensitive to outliers in the data, which produce the large errors that PCA tries to avoid. It is therefore common practice to remove outliers before computing PCA. However, in some contexts, outliers can be difficult to identify. For example, in data mining algorithms like correlation clustering, the assignment of points to clusters and outliers is not known beforehand. A recently proposed generalization of PCA[26] based on a Weighted PCA increases robustness by assigning different weights to data objects based on their estimated relevancy.
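The effect of outliers and of per-point weights can be seen in a small sketch. This is not the method of [26]; it is a generic weighted covariance in two dimensions, with the leading principal direction taken from the closed-form angle of a symmetric 2×2 matrix. The function name `weighted_pca_2d`, the data and the weights are all hypothetical choices made for illustration.

```python
import math

def weighted_pca_2d(points, weights):
    """Return a unit vector along the first principal axis of weighted 2D points."""
    total = sum(weights)
    # Weighted mean of each coordinate.
    mx = sum(w * x for (x, _), w in zip(points, weights)) / total
    my = sum(w * y for (_, y), w in zip(points, weights)) / total
    # Entries of the weighted 2x2 covariance matrix.
    cxx = sum(w * (x - mx) ** 2 for (x, _), w in zip(points, weights)) / total
    cyy = sum(w * (y - my) ** 2 for (_, y), w in zip(points, weights)) / total
    cxy = sum(w * (x - mx) * (y - my) for (x, y), w in zip(points, weights)) / total
    # Angle of the leading eigenvector of a symmetric 2x2 matrix.
    theta = 0.5 * math.atan2(2.0 * cxy, cxx - cyy)
    return (math.cos(theta), math.sin(theta))

# Four points on the line y = x plus one outlier (hypothetical data).
points = [(0.0, 0.0), (1.0, 1.0), (2.0, 2.0), (3.0, 3.0), (0.0, 6.0)]

# Equal weights: the single outlier swings the principal axis towards the y-axis.
uniform = weighted_pca_2d(points, [1.0] * 5)

# Zero weight on the outlier: the axis follows the line y = x again.
robust = weighted_pca_2d(points, [1.0, 1.0, 1.0, 1.0, 0.0])
```

A weight of zero is equivalent to removing the point; intermediate weights would down-weight suspected outliers without discarding them. How the weights are estimated is exactly the part this sketch leaves out.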
20 MARKS

1 Definition

A vector space over a field F is a set V together with two binary operations that satisfy the eight axioms listed below. Elements of V are called vectors. Elements of F are called scalars. In this article, vectors are distinguished from scalars by boldface.[nb 1] In the two examples above, our set consists of the planar arrows with fixed starting point and of pairs of real numbers, respectively, while our field is the real numbers. The first operation, vector addition, takes any two vectors v and w and assigns to them a third vector which is commonly written as v + w, and called the sum of these two vectors. The second operation takes any scalar a and any vector v and gives another vector av. In view of the first example, where the multiplication is done by rescaling the vector v by a scalar a, the multiplication is called scalar multiplication of v by a.

To qualify as a vector space, the set V and the operations of addition and multiplication must adhere to a number of requirements called axioms.[1] In the list below, let u, v and w be arbitrary vectors in V, and a and b scalars in F.

| Axiom | Meaning |
|---|---|
| Associativity of addition | u + (v + w) = (u + v) + w |
| Commutativity of addition | u + v = v + u |
| Identity element of addition | There exists an element 0 ∈ V, called the zero vector, such that v + 0 = v for all v ∈ V. |
| Inverse elements of addition | For every v ∈ V, there exists an element −v ∈ V, called the additive inverse of v, such that v + (−v) = 0. |
| Distributivity of scalar multiplication with respect to vector addition | a(u + v) = au + av |
| Distributivity of scalar multiplication with respect to field addition | (a + b)v = av + bv |
| Compatibility of scalar multiplication with field multiplication | a(bv) = (ab)v [nb 2] |
| Identity element of scalar multiplication | 1v = v, where 1 denotes the multiplicative identity in F. |

These axioms generalize properties of the vectors introduced in the above examples.
Indeed, the result of addition of two ordered pairs (as in the second example above) does not depend on the order of the summands: $(x_v, y_v) + (x_w, y_w) = (x_w, y_w) + (x_v, y_v)$. Likewise, in the geometric example of vectors as arrows, v + w = w + v, since the parallelogram defining the sum of the vectors is independent of the order of the vectors. All other axioms can be checked in a similar manner in both examples. Thus, by disregarding the concrete nature of the particular type of vectors, the definition incorporates these two and many more examples in one notion of vector space.

Subtraction of two vectors and division by a (non-zero) scalar can be defined as v − w = v + (−w) and v/a = (1/a)v.

When the scalar field F is the real numbers R, the vector space is called a real vector space. When the scalar field is the complex numbers, it is called a complex vector space. These two cases are the ones used most often in engineering. The most general definition of a vector space allows scalars to be elements of any fixed field F. The notion is then known as an F-vector space or a vector space over F. A field is, essentially, a set of numbers possessing addition, subtraction, multiplication and division operations.[nb 3] For example, rational numbers also form a field.

In contrast to the intuition stemming from vectors in the plane and higher-dimensional cases, there is, in general vector spaces, no notion of nearness, angles or distances. To deal with such matters, particular types of vector spaces are introduced; see below.

Alternative formulations and elementary consequences

The requirement that vector addition and scalar multiplication be binary operations includes (by definition of binary operations) a property called closure: that u + v and av are in V for all a in F, and u, v in V. Some older sources mention these properties as separate axioms.[2]

In the parlance of abstract algebra, the first four axioms can be subsumed by requiring the set of vectors to be an abelian group under addition. The remaining axioms give this group an F-module structure. In other words, there is a ring homomorphism ƒ from the field F into the endomorphism ring of the group of vectors. Then scalar multiplication av is defined as (ƒ(a))(v).[3]

There are a number of direct consequences of the vector space axioms. Some of them derive from elementary group theory, applied to the additive group of vectors: for example, the zero vector 0 of V and the additive inverse −v of any vector v are unique. Other properties follow from the distributive law, for example av equals 0 if and only if a equals 0 or v equals 0.

Examples

Main article: Examples of vector spaces

Coordinate spaces

The simplest example of a vector space over a field F is the field itself, equipped with its standard addition and multiplication. More generally, a vector space can be composed of n-tuples (sequences of length n) of elements of F, such as $(a_1, a_2, \ldots, a_n)$, where each $a_i$ is an element of F.[12] A vector space composed of all the n-tuples of a field F is known as a coordinate space, usually denoted $F^n$. The case n = 1 is the above-mentioned simplest example, in which the field F is also regarded as a vector space over itself. The case F = R and n = 2 was discussed in the introduction above.

The complex numbers and other field extensions

The set of complex numbers C, i.e., numbers that can be written in the form x + i y for real numbers x and y, where i is the imaginary unit, forms a vector space over the reals with the usual addition and multiplication: (x + i y) + (a + i b) = (x + a) + i(y + b) and c · (x + i y) = (c · x) + i(c · y) for real numbers x, y, a, b and c. The various axioms of a vector space follow from the fact that the same rules hold for complex number arithmetic.
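As a rough illustration, the eight axioms can be spot-checked for the complex numbers viewed as a real vector space. The sketch below uses Python's built-in `complex` type; the sample vectors and scalars are arbitrary choices (an assumption of this example), picked so that every floating-point operation is exact. Passing the check is evidence on a finite sample, not a proof.

```python
from itertools import product

# Sample vectors (complex numbers with small integer parts) and real scalars.
# The values are chosen so that all sums and products below are exact in floating point.
vectors = [complex(x, y) for x, y in product([-2, 0, 1, 3], repeat=2)]
scalars = [-3.0, 0.0, 0.5, 1.0, 2.0]

def check_axioms(vectors, scalars):
    """Assert the eight vector space axioms on the given samples."""
    zero = complex(0, 0)
    for u, v, w in product(vectors, repeat=3):
        assert u + (v + w) == (u + v) + w        # associativity of addition
        assert u + v == v + u                    # commutativity of addition
    for v in vectors:
        assert v + zero == v                     # identity element of addition
        assert v + (-v) == zero                  # inverse elements of addition
        assert 1.0 * v == v                      # identity element of scalar multiplication
    for a, b in product(scalars, repeat=2):
        for u, v in product(vectors, repeat=2):
            assert a * (u + v) == a * u + a * v  # distributivity over vector addition
            assert (a + b) * v == a * v + b * v  # distributivity over field addition
            assert a * (b * v) == (a * b) * v    # compatibility with field multiplication
    return True

all_axioms_hold = check_axioms(vectors, scalars)
```

With arbitrary floating-point samples some of these equalities would fail by rounding error, which is a property of the number representation rather than of the vector space.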
In fact, the example of complex numbers is essentially the same as (i.e., it is isomorphic to) the vector space of ordered pairs of real numbers mentioned above: if we think of the complex number x + i y as representing the ordered pair (x, y) in the complex plane, then we see that the rules for sum and scalar product correspond exactly to those in the earlier example.

More generally, field extensions provide another class of examples of vector spaces, particularly in algebra and algebraic number theory: a field F containing a smaller field E is an E-vector space, by the given multiplication and addition operations of F.[13] For example, the complex numbers are a vector space over R, and any field extension of Q is a vector space over Q.

Function spaces

Functions from any fixed set Ω to a field F also form vector spaces, by performing addition and scalar multiplication pointwise. That is, the sum of two functions ƒ and g is the function (ƒ + g) given by (ƒ + g)(w) = ƒ(w) + g(w), and similarly for multiplication. Such function spaces occur in many geometric situations, when Ω is the real line or an interval, or other subsets of R. Many notions in topology and analysis, such as continuity, integrability or differentiability, are well-behaved with respect to linearity: sums and scalar multiples of functions possessing such a property still have that property.[14] Therefore, the sets of such functions are vector spaces. They are studied in greater detail using the methods of functional analysis, see below. Algebraic constraints also yield vector spaces: the vector space F[x] is given by polynomial functions: $f(x) = r_0 + r_1 x + \cdots + r_{n-1} x^{n-1} + r_n x^n$, where the coefficients $r_0, \ldots, r_n$ are in F.[15]

Linear equations

Main articles: Linear equation, Linear differential equation, and Systems of linear equations

Systems of homogeneous linear equations are closely tied to vector spaces.[16] For example, the solutions of

a + 3b + c = 0
4a + 2b + 2c = 0

are given by triples with arbitrary a, b = a/2, and c = −5a/2. They form a vector space: sums and scalar multiples of such triples still satisfy the same ratios of the three variables; thus they are solutions, too. Matrices can be used to condense multiple linear equations as above into one vector equation, namely Ax = 0, where $A = \begin{bmatrix} 1 & 3 & 1 \\ 4 & 2 & 2 \end{bmatrix}$ is the matrix containing the coefficients of the given equations, x is the vector (a, b, c), Ax denotes the matrix product and 0 = (0, 0) is the zero vector. In a similar vein, the solutions of homogeneous linear differential equations form vector spaces. For example, ƒ''(x) + 2ƒ'(x) + ƒ(x) = 0 yields $f(x) = a e^{-x} + b x e^{-x}$, where a and b are arbitrary constants, and $e^x$ is the natural exponential function.

2 Applications

Chemistry

See also: Analytical chemistry and List of chemical analysis methods

The field of chemistry uses analysis in at least three ways: to identify the components of a particular chemical compound (qualitative analysis), to identify the proportions of components in a mixture (quantitative analysis), and to break down chemical processes and examine chemical reactions between elements of matter. For an example of its use, analysis of the concentration of elements is important in managing a nuclear reactor, so nuclear scientists will analyze neutron activation to develop discrete measurements within vast samples. A matrix can have a considerable effect on the way a chemical analysis is conducted and the quality of its results. Analysis can be done manually or with a device.
Chemical analysis is an important element of national security among the major world powers with materials measurement and signature intelligence (MASINT) capabilities.

Isotopes

See also: Isotope analysis and Isotope geochemistry

Chemists can use isotope analysis to assist analysts with issues in anthropology, archeology, food chemistry, forensics, geology, and a host of other questions of physical science. Analysts can discern the origins of natural and man-made isotopes in the study of environmental radioactivity.

Business

- Financial statement analysis – the analysis of the accounts and the economic prospects of a firm
- Fundamental analysis – a stock valuation method that uses financial analysis
- Technical analysis – the study of price action in securities markets in order to forecast future prices
- Business analysis – involves identifying the needs and determining the solutions to business problems
- Price analysis – involves the breakdown of a price to a unit figure
- Market analysis – consists of suppliers and customers, and price is determined by the interaction of supply and demand

Computer science

- Requirements analysis – encompasses those tasks that go into determining the needs or conditions to meet for a new or altered product, taking account of the possibly conflicting requirements of the various stakeholders, such as beneficiaries or users
- Competitive analysis (online algorithm) – shows how online algorithms perform and demonstrates the power of randomization in algorithms
- Lexical analysis – the process of processing an input sequence of characters and producing as output a sequence of symbols
- Object-oriented analysis and design – à la Booch
- Program analysis (computer science) – the process of automatically analyzing the behavior of computer programs
- Semantic analysis (computer science) – a pass by a compiler that adds semantical information to the parse tree and performs certain checks
- Static code analysis – the analysis of computer software that is performed without actually executing programs built from that software
- Structured systems analysis and design methodology – à la Yourdon
- Syntax analysis – a process in compilers that recognizes the structure of programming languages, also known as parsing
- Worst-case execution time – determines the longest time that a piece of software can take to run

Economics

- Agroecosystem analysis
- Input-output model – if applied to a region, it is called the Regional Impact Multiplier System
- Principal components analysis – a technique that can be used to simplify a dataset

Engineering

See also: Engineering analysis and Systems analysis

Analysts in the field of engineering look at requirements, structures, mechanisms, systems and dimensions. Electrical engineers analyze systems in electronics. Life cycles and system failures are broken down and studied by engineers. Engineers also look at the different factors incorporated within a design.

Intelligence

See also: Intelligence analysis

The field of intelligence employs analysts to break down and understand a wide array of questions. Intelligence agencies may use heuristics, inductive and deductive reasoning, social network analysis, dynamic network analysis, link analysis, and brainstorming to sort through problems they face. Military intelligence may explore issues through the use of game theory, Red Teaming, and wargaming. Signals intelligence applies cryptanalysis and frequency analysis to break codes and ciphers. Business intelligence applies theories of competitive intelligence analysis and competitor analysis to resolve questions in the marketplace. Law enforcement intelligence applies a number of theories in crime analysis.

Linguistics

See also: Linguistics

Linguistics began with the analysis of Sanskrit and Tamil; today it looks at individual languages and language in general. It breaks language down and analyzes its component parts: theory, sounds and their meaning, utterance usage, word origins, the history of words, the meaning of words and word combinations, sentence construction, basic construction beyond the sentence level, stylistics, and conversation. It examines the above using statistics and modeling, and semantics. It analyzes language in the context of anthropology, biology, evolution, geography, history, neurology, psychology, and sociology. It also takes the applied approach, looking at individual language development and clinical issues.

Literature

Literary theory is the analysis of literature. Some say that literary criticism is a subset of literary theory. The focus can be as diverse as the analysis of Homer or Freud. This is mainly to do with the breaking up of a topic to make it easier to understand.

Mathematics

Main article: Mathematical analysis

Mathematical analysis is the study of infinite processes. It is the branch of mathematics that includes calculus. It can be applied in the study of classical concepts of mathematics, such as real numbers, complex variables, trigonometric functions, and algorithms, or of nonclassical concepts like constructivism, harmonics, infinity, and vectors.

Music

- Musical analysis – a process attempting to answer the question "How does this music work?"
- Schenkerian analysis

Philosophy

- Philosophical analysis – a general term for the techniques used by philosophers
- Analysis is the name of a prominent journal in philosophy.
Psychotherapy

- Psychoanalysis – seeks to elucidate connections among unconscious components of patients' mental processes
- Transactional analysis

Signal processing

- Finite element analysis – a computer simulation technique used in engineering analysis
- Independent component analysis
- Link quality analysis – the analysis of signal quality
- Path quality analysis

Statistics

In statistics, the term analysis may refer to any method used for data analysis. Among the many such methods, some are:

- Analysis of variance (ANOVA) – a collection of statistical models and their associated procedures which compare means by splitting the overall observed variance into different parts
- Boolean analysis – a method to find deterministic dependencies between variables in a sample, mostly used in exploratory data analysis
- Cluster analysis – techniques for grouping objects into a collection of groups (called clusters), based on some measure of proximity or similarity
- Factor analysis – a method to construct models describing a data set of observed variables in terms of a smaller set of unobserved variables (called factors)
- Meta-analysis – combines the results of several studies that address a set of related research hypotheses
- Multivariate analysis – analysis of data involving several variables, such as by factor analysis, regression analysis, or principal component analysis
- Principal component analysis – transformation of a sample of correlated variables into uncorrelated variables (called principal components), mostly used in exploratory data analysis
- Regression analysis – techniques for analyzing the relationships between several variables in the data
- Scale analysis (statistics) – methods to analyse survey data by scoring responses on a numeric scale
- Sensitivity analysis – the study of how the variation in the output of a model depends on variations in the inputs
- Sequential analysis – evaluation of sampled data as it is collected, until the criterion of a stopping rule is met
- Spatial analysis – the study of entities using geometric or geographic properties
- Time-series analysis – methods that attempt to understand a sequence of data points spaced apart at uniform time intervals

Other

- Aura analysis – a technique in which supporters of the method claim that the body's aura, or energy field, is analyzed
- Bowling analysis – a notation summarizing a cricket bowler's performance
- Lithic analysis – the analysis of stone tools using basic scientific techniques
- Protocol analysis – a means for extracting persons' thoughts while they are performing a task

3 Scalar potential

A scalar potential is a fundamental concept in vector analysis and physics (the adjective scalar is frequently omitted if there is no danger of confusion with vector potential). The scalar potential is an example of a scalar field. Given a vector field F, the scalar potential P is defined such that:

$\mathbf{F} = -\nabla P = -\left( \frac{\partial P}{\partial x}, \frac{\partial P}{\partial y}, \frac{\partial P}{\partial z} \right)$,[1]

where ∇P is the gradient of P and the second part of the equation is minus the gradient for a function of the Cartesian coordinates x, y, z.[2] In some cases, mathematicians may use a positive sign in front of the gradient to define the potential.[3] Because of this definition of P in terms of the gradient, the direction of F at any point is the direction of the steepest decrease of P at that point, and its magnitude is the rate of that decrease per unit length.

In order for F to be described in terms of a scalar potential only, the following have to be true:

1. $-\int_a^b \mathbf{F} \cdot d\mathbf{l} = P(\mathbf{b}) - P(\mathbf{a})$, where the integration is over a Jordan arc passing from location a to location b and P(b) is P evaluated at location b.
2. $\oint \mathbf{F} \cdot d\mathbf{l} = 0$, where the integral is over any simple closed path, otherwise known as a Jordan curve.
3. $\nabla \times \mathbf{F} = 0$.
The first of these conditions represents the fundamental theorem of the gradient and is true for any vector field that is a gradient of a differentiable single-valued scalar field P. The second condition is a requirement of F so that it can be expressed as the gradient of a scalar function. The third condition re-expresses the second condition in terms of the curl of F using the fundamental theorem of the curl. A vector field F that satisfies these conditions is said to be irrotational (conservative).

Scalar potentials play a prominent role in many areas of physics and engineering. The gravity potential is the scalar potential associated with the gravity per unit mass, i.e., the acceleration due to the field, as a function of position. The gravity potential is the gravitational potential energy per unit mass. In electrostatics the electric potential is the scalar potential associated with the electric field, i.e., with the electrostatic force per unit charge. The electric potential is in this case the electrostatic potential energy per unit charge. In fluid dynamics, irrotational lamellar fields have a scalar potential only in the special case when it is a Laplacian field. Certain aspects of the nuclear force can be described by a Yukawa potential. The potential plays a prominent role in the Lagrangian and Hamiltonian formulations of classical mechanics. Further, the scalar potential is the fundamental quantity in quantum mechanics.

Not every vector field has a scalar potential. Those that do are called conservative, corresponding to the notion of conservative force in physics. Examples of non-conservative forces include frictional forces, magnetic forces, and in fluid mechanics a solenoidal velocity field. By the Helmholtz decomposition theorem, however, all vector fields can be described in terms of a scalar potential and a corresponding vector potential.
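The difference between a conservative and a non-conservative field can be checked numerically with a line integral. The sketch below is illustrative only: the potential P(x, y) = x² + y², the rotational field (−y, x), the paths and the helper `line_integral` are assumptions made for this example. It evaluates the circulation of each field around the unit circle and compares the integral along an open path with the difference in potential.

```python
import math

def line_integral(field, path, t0, t1, steps=2000):
    """Approximate the integral of field . dl along path(t) for t0 <= t <= t1."""
    total = 0.0
    dt = (t1 - t0) / steps
    for k in range(steps):
        ta = t0 + k * dt
        tb = ta + dt
        xa, ya = path(ta)
        xb, yb = path(tb)
        xm, ym = path(0.5 * (ta + tb))   # evaluate the field mid-segment
        fx, fy = field(xm, ym)
        total += fx * (xb - xa) + fy * (yb - ya)
    return total

def potential(x, y):
    """Hypothetical scalar potential P(x, y) = x^2 + y^2."""
    return x * x + y * y

def conservative(x, y):
    """F = -grad P for the potential above."""
    return (-2.0 * x, -2.0 * y)

def rotational(x, y):
    """F = (-y, x), a field with nonzero curl and therefore no scalar potential."""
    return (-y, x)

def unit_circle(t):
    return (math.cos(t), math.sin(t))

def straight(t):
    """Straight path from a = (1, 0) at t = 0 to b = (0, 2) at t = 1."""
    return (1.0 - t, 2.0 * t)

# Circulation around a closed path: zero only for the conservative field.
circ_conservative = line_integral(conservative, unit_circle, 0.0, 2.0 * math.pi)
circ_rotational = line_integral(rotational, unit_circle, 0.0, 2.0 * math.pi)

# Minus the integral along an open path should equal P(b) - P(a).
drop = -line_integral(conservative, straight, 0.0, 1.0)
```

For the rotational field the circulation comes out close to 2π, so no single-valued potential can reproduce it; for the conservative field the open-path integral depends only on the end points.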
In electrodynamics the electromagnetic scalar and vector potentials are known together as the electromagnetic four-potential.

Integrability conditions

If F is a conservative vector field (also called irrotational, curl-free, or potential), and its components have continuous partial derivatives, the potential of F with respect to a reference point $\mathbf{r}_0$ is defined in terms of the line integral:

$V(\mathbf{r}) = -\int_C \mathbf{F}(\mathbf{r}) \cdot d\mathbf{r} = -\int_a^b \mathbf{F}(\mathbf{r}(t)) \cdot \mathbf{r}'(t)\, dt,$

where C is a parametrized path from $\mathbf{r}_0$ to $\mathbf{r}$, that is, $\mathbf{r}(t)$ with $a \le t \le b$, $\mathbf{r}(a) = \mathbf{r}_0$ and $\mathbf{r}(b) = \mathbf{r}$.

The fact that the line integral depends on the path C only through its terminal points $\mathbf{r}_0$ and $\mathbf{r}$ is, in essence, the path independence property of a conservative vector field. The fundamental theorem of calculus for line integrals implies that if V is defined in this way, then $\mathbf{F} = -\nabla V$, so that V is a scalar potential of the conservative vector field F. The scalar potential is not determined by the vector field alone: indeed, the gradient of a function is unaffected if a constant is added to it. If V is defined in terms of the line integral, the ambiguity of V reflects the freedom in the choice of the reference point $\mathbf{r}_0$.

Altitude as gravitational potential energy

An example is the (nearly) uniform gravitational field near the Earth's surface. It has a potential energy

$U = m g h,$

where U is the gravitational potential energy and h is the height above the surface. This means that gravitational potential energy on a contour map is proportional to altitude. On a contour map, the two-dimensional negative gradient of the altitude is a two-dimensional vector field, whose vectors are always perpendicular to the contours and also perpendicular to the direction of gravity. But on the hilly region represented by the contour map, the three-dimensional negative gradient of U always points straight downwards in the direction of the gravitational force F. However, a ball rolling down a hill cannot move directly downwards due to the normal force of the hill's surface, which cancels out the component of gravity perpendicular to the hill's surface.
The component of gravity that remains to move the ball is parallel to the surface:

$F_S = -m g \sin\theta,$

where θ is the angle of inclination, and the component of $F_S$ perpendicular to gravity is

$F_P = -m g \sin\theta \cos\theta = -\tfrac{1}{2} m g \sin 2\theta.$

This force $F_P$, parallel to the ground, is greatest when θ is 45 degrees.

Let Δh be the uniform interval of altitude between contours on the contour map, and let Δx be the distance between two contours. Then

$\theta = \tan^{-1} \frac{\Delta h}{\Delta x},$

so that

$F_P = -m g \, \frac{\Delta x \, \Delta h}{\Delta x^2 + \Delta h^2}.$

However, on a contour map, the gradient is inversely proportional to Δx, which is not similar to force $F_P$: altitude on a contour map is not exactly a two-dimensional potential field. The magnitudes of forces are different, but the directions of the forces are the same on a contour map as well as on the hilly region of the Earth's surface represented by the contour map.

Pressure as buoyant potential

In fluid mechanics, a fluid in equilibrium, but in the presence of a uniform gravitational field, is permeated by a uniform buoyant force that cancels out the gravitational force: that is how the fluid maintains its equilibrium. This buoyant force is the negative gradient of pressure:

$\mathbf{f}_B = -\nabla p.$

Since the buoyant force points upwards, in the direction opposite to gravity, pressure in the fluid increases downwards. Pressure in a static body of water increases proportionally to the depth below the surface of the water. The surfaces of constant pressure are planes parallel to the ground. The surface of the water can be characterized as a plane with zero pressure.

If the liquid has a vertical vortex (whose axis of rotation is perpendicular to the ground), then the vortex causes a depression in the pressure field. The surfaces of constant pressure are parallel to the ground far away from the vortex, but near and inside the vortex the surfaces of constant pressure are pulled downwards, closer to the ground. This also happens to the surface of zero pressure. Therefore, inside the vortex, the top surface of the liquid is pulled downwards into a depression, or even into a tube (a solenoid).
The buoyant force due to a fluid on a solid object immersed in and surrounded by that fluid can be obtained by integrating the pressure over the surface S of the object or, equivalently, the negative pressure gradient over its volume V:

$\mathbf{F}_B = -\oint_S p \, d\mathbf{S} = -\int_V \nabla p \, dV.$

A moving airplane wing makes the air pressure above it decrease relative to the air pressure below it. This creates enough buoyant force to counteract gravity.

Calculating the scalar potential

Given a vector field E, its scalar potential Φ can be calculated to be

$\Phi(\mathbf{R}_0) = \frac{1}{4\pi} \int_\tau \frac{\nabla \cdot \mathbf{E}(\tau)}{\left\| \mathbf{R}(\tau) - \mathbf{R}_0 \right\|} \, d\tau,$

where τ is volume. Then, if E is irrotational (conservative),

$\mathbf{E} = -\nabla \Phi = -\frac{1}{4\pi} \nabla \int_\tau \frac{\nabla \cdot \mathbf{E}(\tau)}{\left\| \mathbf{R}(\tau) - \mathbf{R}_0 \right\|} \, d\tau.$

This formula is known to be correct if E is continuous and vanishes asymptotically to zero towards infinity, decaying faster than 1/r, and if the divergence of E likewise vanishes towards infinity, decaying faster than $1/r^2$.

4 Applications

Computational geometry

The cross product can be used to calculate the normal for a triangle or polygon, an operation frequently performed in computer graphics. For example, the winding of a polygon (clockwise or anticlockwise) about a point within the polygon (e.g. the centroid or midpoint) can be calculated by triangulating the polygon (like spoking a wheel) and summing the angles (between the spokes) using the cross product to keep track of the sign of each angle.

In computational geometry of the plane, the cross product is used to determine the sign of the acute angle defined by three points $p_1 = (x_1, y_1)$, $p_2 = (x_2, y_2)$ and $p_3 = (x_3, y_3)$. It corresponds to the direction of the cross product of the two coplanar vectors defined by the pairs of points $p_1, p_2$ and $p_1, p_3$, i.e., by the sign of the expression $P = (x_2 - x_1)(y_3 - y_1) - (y_2 - y_1)(x_3 - x_1)$. In the "right-handed" coordinate system, if the result is 0, the points are collinear; if it is positive, the three points constitute a negative angle of rotation around $p_2$ from $p_1$ to $p_3$, otherwise a positive angle. From another point of view, the sign of $P$ tells whether $p_3$ lies to the left or to the right of line $p_1, p_2$.

Mechanics

The moment of a force $\mathbf{F}_B$ applied at point B around point A is given as:

$\mathbf{M}_A = \mathbf{r}_{AB} \times \mathbf{F}_B.$

Other

The cross product occurs in the formula for the vector operator curl.
The cross product is also used to describe the Lorentz force experienced by a moving electrical charge in a magnetic field. The definitions of torque and angular momentum also involve the cross product. The trick of rewriting a cross product in terms of a matrix multiplication appears frequently in epipolar and multi-view geometry, in particular when deriving matching constraints.

Cross product as an exterior product

The cross product can be viewed in terms of the exterior product. This view allows for a natural geometric interpretation of the cross product. In exterior algebra the exterior product (or wedge product) of two vectors is a bivector. A bivector is an oriented plane element, in much the same way that a vector is an oriented line element. Given two vectors a and b, one can view the bivector a ∧ b as the oriented parallelogram spanned by a and b. The cross product is then obtained by taking the Hodge dual of the bivector a ∧ b, mapping 2-vectors to vectors:

$$\mathbf{a} \times \mathbf{b} = \star(\mathbf{a} \wedge \mathbf{b}).$$

This can be thought of as the oriented multi-dimensional element "perpendicular" to the bivector. Only in three dimensions is the result an oriented line element (a vector), whereas, for example, in 4 dimensions the Hodge dual of a bivector is two-dimensional: another oriented plane element. So, only in three dimensions is the cross product of a and b the vector dual to the bivector a ∧ b: it is perpendicular to the bivector, with orientation dependent on the coordinate system's handedness, and has the same magnitude relative to the unit normal vector as a ∧ b has relative to the unit bivector; these are precisely the properties described above.

Cross product and handedness

When measurable quantities involve cross products, the handedness of the coordinate systems used cannot be arbitrary. However, when physics laws are written as equations, it should be possible to make an arbitrary choice of the coordinate system (including handedness).
To avoid problems, one should be careful never to write down an equation whose two sides do not behave equally under all transformations that need to be considered. For example, if one side of the equation is a cross product of two vectors, one must take into account that when the handedness of the coordinate system is not fixed a priori, the result is not a (true) vector but a pseudovector. Therefore, for consistency, the other side must also be a pseudovector.

More generally, the result of a cross product may be either a vector or a pseudovector, depending on the type of its operands (vectors or pseudovectors). Namely, vectors and pseudovectors are interrelated in the following ways under application of the cross product:

vector × vector = pseudovector
pseudovector × pseudovector = pseudovector
vector × pseudovector = vector
pseudovector × vector = vector

So by the above relationships, the unit basis vectors i, j and k of an orthonormal, right-handed (Cartesian) coordinate frame must all be pseudovectors (if a basis of mixed vector types is disallowed, as it normally is), since i × j = k, j × k = i and k × i = j.

Because the cross product may also be a (true) vector, it may not change direction with a mirror image transformation. This happens, according to the above relationships, if one of the operands is a (true) vector and the other one is a pseudovector (the latter being, for example, itself the cross product of two vectors). For instance, a vector triple product involving three (true) vectors is a (true) vector.

A handedness-free approach is possible using exterior algebra.
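The first rule in the list above and the triple-product remark can be checked numerically. The sketch below uses point inversion (negating every coordinate) as the handedness-reversing transformation; that choice, the helper names and the sample vectors are assumptions made only for illustration.

```python
def cross(a, b):
    """Cross product of two 3-component tuples."""
    ax, ay, az = a
    bx, by, bz = b
    return (ay * bz - az * by, az * bx - ax * bz, ax * by - ay * bx)


def invert(v):
    """Point inversion: a (true) vector changes sign in every component."""
    return tuple(-c for c in v)


# Three arbitrary (true) vectors, chosen only for the demonstration.
a = (1.0, 2.0, 3.0)
b = (-2.0, 0.5, 4.0)
c = (0.0, 1.0, -1.0)

# vector x vector: inverting both operands leaves the result unchanged,
# so the product does not transform like a true vector (it is a pseudovector).
axial = cross(a, b)
axial_after = cross(invert(a), invert(b))

# vector x (vector x vector): the result changes sign with its operands,
# so the triple product of three true vectors behaves as a true vector.
triple = cross(a, cross(b, c))
triple_after = cross(invert(a), cross(invert(b), invert(c)))

if __name__ == "__main__":
    print(axial, axial_after)
    print(triple, triple_after)
```

Here `axial_after` equals `axial`, while `triple_after` equals `invert(triple)`, matching the rules vector × vector = pseudovector and vector × pseudovector = vector.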