
advertisement

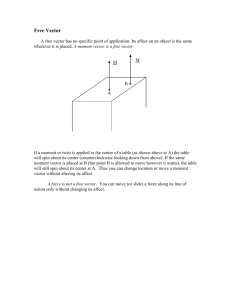

Jackie Libby
Mechanics of Manipulation
Spring 2009
Paper review

This review discusses the paper “On the Representation and Estimation of Spatial Uncertainty” by Randall C. Smith and Peter Cheeseman (1). I will attempt to summarize the main concepts discussed in the paper, as well as clarify and flesh out some of the details that the authors leave out. This is a pivotal paper in robotics, because it laid the foundations for using error approximations in estimating the relative position and orientation between objects. The paper treats such an object in a very general sense, where an object is anything with its own coordinate frame. Because of this generality, the results can be applied to many problems.

One such problem is estimating the pose of an end effector on a robotic arm relative to its base. This is the main problem that the paper looks at, and it is the example most relevant to the manipulation topics covered in this class. Another representative problem comes from the field of mobile robots, where the related objects are the successive poses of a moving robot as it travels through space over time. This is the example most relevant to my own research in SLAM (Simultaneous Localization and Mapping). The first part of the SLAM problem is localization, where I am using an Extended Kalman Filter to estimate a new pose of a robot relative to a previous pose, based on internal and external sensor readings. The underlying theory behind Kalman filters relies heavily on the ideas discussed in this paper, where error approximations are compounded and merged over time.

If we examine the relative position and orientation between two objects, then we are looking at the transformation between the coordinate frames of the two objects. If we are in two-dimensional Cartesian space, then the axes of these coordinate frames are X, Y, and θ. If we’re dealing with three dimensions, then the axes are X, Y, Z, roll, pitch and yaw.
The example used in this paper looks at an X, Y, θ coordinate system. The authors mention that the same theory can easily be extended to other dimensions and other domains. It is important for the reader to make this generalization. Not only can the theory be extended to a three-dimensional Cartesian space, but even further to any space whose axes are simply the parameters of interest. I want to stress this point before moving on, because it is important that the reader visualize the transformation from coordinate frame A to coordinate frame B as simply a vector that starts at the origin of A and ends at the origin of B. Appreciating such a general vector not only helps the reader abstract the ideas presented in this paper to other domains; even more so, it helps in understanding the very details of the example presented here. I will continue to gripe about this general vector throughout the rest of this review, so be prepared.

Figure 1 shows a relational map of relative coordinate frames. One can think of each coordinate frame as a node in a graph, and of the edges linking the nodes as the transformations between one frame and the next. These edges are the vectors I speak of above. For example, edge A in the figure is the transformation from frame W to frame L1. Edge B is the transformation from L1 to L2. Edge E is the transformation from W to L2. Edge E, along with many other edges in this network, is drawn with a curved line, to indicate that it was derived from a combination of other edges. I think it would be much clearer to keep all edge lines straight, to emphasize the fact that these are simply Euclidean vectors, no matter what space you are talking about. The tip of the vector is the coordinates of the new frame. These coordinates can represent any transformation you want, but they are still simply the values along the axes of the original frame.
The tail of the vector is at the original frame that it is coming from, and so by turning the point into a free vector, it is clear that it exists only in relation to some frame. The vector is Euclidean, because it preserves length. This is unclear when the vector is drawn as a curved, general edge of a graph.

The ellipses around each coordinate frame node in figure 1 represent the uncertainty of those coordinates. One glaring mistake in the paper is that it switches the dashed and solid lines. Each ellipse is centered on a node, and the reason there is more than one ellipse at each node is that each ellipse corresponds to an edge leading into that node. The dashed ellipses belong to the solid edges, and the solid ellipses belong to the dashed edges. This is the mistake the paper makes. The paper says at the beginning of section 2.1: “The solid error ellipses express the relative uncertainty of the robot with respect to its last position, while the dashed ellipses express the uncertainty with respect to W.” It’s actually the opposite.

With all of this being said, let’s try to appreciate the figure for what it’s worth and keep going. Treating A, B, and E as vectors, we can see that A + B = E. One can think of a mobile robot moving from frame W to frame L1 to frame L2. This ends with the same net result as if the robot had moved directly from frame W to L2. It is a little confusing, though, because if the robot is moving along a two-dimensional plane, with X, Y, and θ as its degrees of freedom, then the space of the vectors A, B, and E is really three-dimensional, with axes X, Y, and θ. So the nodes L1, L2, etc., should not be mistaken for the X, Y positions of the robot. The paper denotes the transformation A with the coordinates (X1, Y1, θ1), B with the coordinates (X2, Y2, θ2), and E with the coordinates (X3, Y3, θ3).
Since A and E both start from frame W, (X1, Y1, θ1) and (X3, Y3, θ3) are in world coordinates, whereas (X2, Y2, θ2) is with respect to frame L1. Below is a plot of what’s happening here on an X, Y coordinate system, with the origin at W. Notice in this figure where I wrote “not A” and “not B”. This is to denote that the edges in figure 1 are in a three-dimensional X, Y, θ space, and not in a two-dimensional (X, Y) plane. (X1, Y1) and (X3, Y3) can be plotted in my drawing, because they are in world coordinates, but (X2, Y2) are drawn in as lengths, since they are relative to a different frame. Now that I’ve drawn out the geometry, it is easy to see how the paper arrives at the functions f, g, and h in equation (1):

$$
\begin{aligned}
X_3 &= f(X_1, Y_1, \theta_1, X_2, Y_2, \theta_2) = X_1 + X_2\cos\theta_1 - Y_2\sin\theta_1 \\
Y_3 &= g(X_1, Y_1, \theta_1, X_2, Y_2, \theta_2) = Y_1 + X_2\sin\theta_1 + Y_2\cos\theta_1 \\
\theta_3 &= h(X_1, Y_1, \theta_1, X_2, Y_2, \theta_2) = \theta_1 + \theta_2
\end{aligned}
\tag{1}
$$

It is a little counterintuitive to think of the inputs and outputs as being in different coordinate frames, but that is indeed what is happening here. (X1, Y1, θ1) is with respect to W, (X2, Y2, θ2) is with respect to L1, and (X3, Y3, θ3) is again with respect to W.

Now let’s get back to the ellipses. The ellipses around each node, as mentioned previously, represent the uncertainty associated with that node. It is easier to think of each one as the uncertainty of an edge, with the ellipse centered on the end of that edge. The ellipse around L1 corresponds to the uncertainty from moving along edge A. If this is a mobile robot, this might be the uncertainty related to the odometry controls, whereas if this is a robot arm, this might be the uncertainty related to the internal torque sensors at the joints. Similarly, the small ellipse around L2 is the uncertainty corresponding to the movement along edge B. The two uncertainties are compounded together to get the larger (solid) ellipse around L2. This corresponds to the (dashed) edge E. Equations (2), (3), and (4) go through the process of deriving this larger ellipse, and I’ll flesh it out here in more detail. We can ignore the details of the geometry above and just take the functions f, g, and h as general functions from equation (1).
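Before the geometry is abstracted away, it may help to see the functions f, g, and h as code. The following is only a minimal sketch, assuming the standard planar head-to-tail composition of two poses; the function name `compound` and the numbers chosen for A and B are hypothetical and are not taken from the paper.

```python
import math

def compound(pose1, pose2):
    """Compose pose1 (frame L1 relative to W) with pose2 (frame L2 relative to L1).

    Returns the pose of L2 relative to W. The three output lines play the
    roles of the functions f, g, and h.
    """
    x1, y1, th1 = pose1
    x2, y2, th2 = pose2
    x3 = x1 + x2 * math.cos(th1) - y2 * math.sin(th1)  # f
    y3 = y1 + x2 * math.sin(th1) + y2 * math.cos(th1)  # g
    th3 = th1 + th2                                    # h
    return (x3, y3, th3)

# Hypothetical motion: the robot ends up 1 m along W's x axis, turned by
# 90 degrees (edge A), then drives 1 m along its own x axis (edge B).
A = (1.0, 0.0, math.pi / 2)
B = (1.0, 0.0, 0.0)
E = compound(A, B)
```

With these numbers E comes out at approximately (1, 1, π/2), not the component-wise sum (2, 0, π/2). This is the sense in which (X2, Y2) are only lengths relative to L1: they have to be rotated by θ1 before they can be added to world coordinates.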
The paper proceeds in a very hand-wavy way to use the words “Taylor series” and “Jacobian”, so let me try to explain what they are really talking about. (Please excuse my bitterness. In general, I understand that papers aren’t meant to derive every detail, but it bothers me that this paper is so often used as an example of how to derive these basic concepts, if that’s not what it’s doing.) The Taylor series is a way of decomposing a function, f(x), into an infinite sum centered around some point, x0. The figure below depicts this. The equation for the Taylor series is:

$$f(x) = f(x_0) + \frac{f'(x_0)}{1!}(x - x_0) + \frac{f''(x_0)}{2!}(x - x_0)^2 + \dots$$

In our case, the variable x is really the vector pose $[X, Y, \theta]^T$. We have some estimate of our pose, and we assume that the uncertainty of our estimate follows a Gaussian distribution, with the estimate as the mean of the distribution. So we can replace $x_0$ with $\hat{x}$ in the Taylor series:

$$f(x) = f(\hat{x}) + \frac{f'(\hat{x})}{1!}(x - \hat{x}) + \frac{f''(\hat{x})}{2!}(x - \hat{x})^2 + \dots$$

In this case, the input x is really two poses, (X1, Y1, θ1, X2, Y2, θ2). The output f(x) is really the vector function [f, g, h]. But to keep things general, we can just talk about the input as x and the output as f(x). Now we take a first-order approximation, which means we chop off everything in the Taylor series after the first derivative:

$$f(x) \approx f(\hat{x}) + f'(\hat{x})(x - \hat{x})$$

Subtracting $f(\hat{x})$ from both sides, we get:

$$f(x) - f(\hat{x}) \approx f'(\hat{x})(x - \hat{x})$$

The paper makes another hand-wavy statement: “The mean values of the functions (to first order) are the functions applied to variables means”. I could go ahead and derive this too, but I won’t. What it means is that:

$$f(\hat{x}) = \widehat{f(x)}$$

Substituting this into the equation above it, we get:

$$f(x) - \widehat{f(x)} \approx f'(\hat{x})(x - \hat{x})$$

The paper again, in a rather hand-wavy way, uses the word “deviate”.
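What they mean is that they are using the delta sign, ∆, to signify how much a variable deviates from its mean value; that is, the difference between some random value of the variable and its mean value. Substituting this into the equation above, we get:

$$\Delta f(x) \approx f'(\hat{x})\,\Delta x$$

Now it makes sense to go back to the more specific vector form for this example. Since f(x) is now a vector function, f′(x) is the Jacobian, J. Now we have equations (2) and (3):

$$
\begin{bmatrix} \Delta X_3 \\ \Delta Y_3 \\ \Delta\theta_3 \end{bmatrix}
\approx J
\begin{bmatrix} \Delta X_1 \\ \Delta Y_1 \\ \Delta\theta_1 \\ \Delta X_2 \\ \Delta Y_2 \\ \Delta\theta_2 \end{bmatrix}
\tag{2}
$$

$$
J =
\begin{bmatrix}
1 & 0 & -(\hat{Y}_3 - \hat{Y}_1) & \cos\hat{\theta}_1 & -\sin\hat{\theta}_1 & 0 \\
0 & 1 & \hat{X}_3 - \hat{X}_1 & \sin\hat{\theta}_1 & \cos\hat{\theta}_1 & 0 \\
0 & 0 & 1 & 0 & 0 & 1
\end{bmatrix}
= \begin{bmatrix} H & K \end{bmatrix}
\tag{3}
$$

They split up J into the submatrices H (its first three columns) and K (its last three columns) to distinguish between the (X1, Y1, θ1) and (X2, Y2, θ2) inputs. It will become clear soon why this is useful. We now multiply both sides of equation (2) by their respective transposes to get:

$$
\begin{bmatrix} \Delta X_3 \\ \Delta Y_3 \\ \Delta\theta_3 \end{bmatrix}
\begin{bmatrix} \Delta X_3 \\ \Delta Y_3 \\ \Delta\theta_3 \end{bmatrix}^T
= J
\begin{bmatrix} \Delta X_1 \\ \Delta Y_1 \\ \Delta\theta_1 \\ \Delta X_2 \\ \Delta Y_2 \\ \Delta\theta_2 \end{bmatrix}
\left( J
\begin{bmatrix} \Delta X_1 \\ \Delta Y_1 \\ \Delta\theta_1 \\ \Delta X_2 \\ \Delta Y_2 \\ \Delta\theta_2 \end{bmatrix}
\right)^T
= J
\begin{bmatrix} \Delta X_1 \\ \Delta Y_1 \\ \Delta\theta_1 \\ \Delta X_2 \\ \Delta Y_2 \\ \Delta\theta_2 \end{bmatrix}
\begin{bmatrix} \Delta X_1 \\ \Delta Y_1 \\ \Delta\theta_1 \\ \Delta X_2 \\ \Delta Y_2 \\ \Delta\theta_2 \end{bmatrix}^T
J^T
$$

Writing out the products:

$$
\begin{bmatrix}
\Delta X_3 \Delta X_3 & \Delta X_3 \Delta Y_3 & \Delta X_3 \Delta\theta_3 \\
\Delta Y_3 \Delta X_3 & \Delta Y_3 \Delta Y_3 & \Delta Y_3 \Delta\theta_3 \\
\Delta\theta_3 \Delta X_3 & \Delta\theta_3 \Delta Y_3 & \Delta\theta_3 \Delta\theta_3
\end{bmatrix}
= J
\begin{bmatrix}
\Delta X_1 \Delta X_1 & \Delta X_1 \Delta Y_1 & \Delta X_1 \Delta\theta_1 & \Delta X_1 \Delta X_2 & \Delta X_1 \Delta Y_2 & \Delta X_1 \Delta\theta_2 \\
\Delta Y_1 \Delta X_1 & \Delta Y_1 \Delta Y_1 & \Delta Y_1 \Delta\theta_1 & \Delta Y_1 \Delta X_2 & \Delta Y_1 \Delta Y_2 & \Delta Y_1 \Delta\theta_2 \\
\Delta\theta_1 \Delta X_1 & \Delta\theta_1 \Delta Y_1 & \Delta\theta_1 \Delta\theta_1 & \Delta\theta_1 \Delta X_2 & \Delta\theta_1 \Delta Y_2 & \Delta\theta_1 \Delta\theta_2 \\
\Delta X_2 \Delta X_1 & \Delta X_2 \Delta Y_1 & \Delta X_2 \Delta\theta_1 & \Delta X_2 \Delta X_2 & \Delta X_2 \Delta Y_2 & \Delta X_2 \Delta\theta_2 \\
\Delta Y_2 \Delta X_1 & \Delta Y_2 \Delta Y_1 & \Delta Y_2 \Delta\theta_1 & \Delta Y_2 \Delta X_2 & \Delta Y_2 \Delta Y_2 & \Delta Y_2 \Delta\theta_2 \\
\Delta\theta_2 \Delta X_1 & \Delta\theta_2 \Delta Y_1 & \Delta\theta_2 \Delta\theta_1 & \Delta\theta_2 \Delta X_2 & \Delta\theta_2 \Delta Y_2 & \Delta\theta_2 \Delta\theta_2
\end{bmatrix}
J^T
$$

Now we take the expectation of both sides. The expectation, E[x], of a random variable x is the mean of the distribution of that variable. So the expectation of ∆x, E[∆x], equals 0, because ∆x is defined as the deviation from the mean:

$$E[\Delta x] = E[x - \hat{x}] = E[x] - E[\hat{x}] = \hat{x} - \hat{x} = 0$$

But if we’re taking the expectation of the product of two delta variables, then it does not in general reduce to 0:

$$E[\Delta x^2] \neq 0$$

We now make the assumption that the variables of the first transformation, (X1, Y1, θ1), are independent of those of the second, (X2, Y2, θ2). This means that the uncertainties associated with transformations A and B are uncorrelated.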
Then we can use the fact:

$$E[\Delta X_1 \Delta X_2] = E[\Delta X_1]\,E[\Delta X_2] = 0 \times 0 = 0$$

So when we take the expectation of both sides of the large equation above, we get zeros for the top-right and bottom-left 3×3 blocks of the large 6×6 matrix. The non-zero 3×3 blocks left on its diagonal are in terms of (X1, Y1, θ1) and (X2, Y2, θ2) alone, and the 3×3 matrix on the left-hand side is in terms of (X3, Y3, θ3). We call these C1, C2, and C3, respectively. The C stands for covariance. Now we have equation (4):

$$
C_3 \approx J \begin{bmatrix} C_1 & 0 \\ 0 & C_2 \end{bmatrix} J^T = H C_1 H^T + K C_2 K^T
\tag{4}
$$

This is now the full derivation for a compounding procedure. Going back to figure 1, the chain of edges can be followed along from A to B to C to D, getting to L4. All of these can be compounded recursively into G.

Compounding is the first of two steps used in a Kalman filter. The merging step is the second step. As if the paper weren’t hand-wavy enough already, it gets even more hand-wavy now, because it doesn’t even attempt to derive the merging step. It just lists equations (8), (9), and (10), and then refers to Nahi 1976 (2) for the derivation. A good point the paper makes, though, is the analogy to electric circuit theory, looking at resistances in series or in parallel. Compounding can be thought of as adding resistances in series, while resistances in parallel would be combined with equation (11), which is the one-dimensional form of the merging equation:

$$C_3 = \frac{C_1 C_2}{C_1 + C_2} \tag{11}$$

Looking back at the network in figure 1, a merging operation would involve two parallel edges, such as G and S. S is formed from a sensing operation, while G is formed from a recursive compounding of motions. First, the direction of the arrow for S must be reversed, so that it points in the same direction as G. This inverse operation is explained with figure 2 and equations (5), (6), and (7). Here, the B arrow is being reversed. Equation (5) is the geometrical interpretation of reversing the arrow, assuming we’re talking about X, Y, θ space:

$$
\begin{aligned}
X' &= -X\cos\theta - Y\sin\theta \\
Y' &= X\sin\theta - Y\cos\theta \\
\theta' &= -\theta
\end{aligned}
\tag{5}
$$

This is assuming B is the vector (X, Y, θ), and the reverse of B is the vector (X’, Y’, θ’).
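The compounding and merging steps discussed above can be sketched numerically. This is only a minimal sketch under stated assumptions: the Jacobian blocks H and K are the analytic derivatives of the planar composition with respect to the first and second pose, the merge is the scalar (one-dimensional) gain form, and all function names and numbers are hypothetical rather than taken from the paper.

```python
import math

def matmul(a, b):
    """Product of two matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def transpose(a):
    return [list(row) for row in zip(*a)]

def madd(a, b):
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

def compound_cov(pose1, pose2, c1, c2):
    """First-order covariance of the compounded pose: H C1 H^T + K C2 K^T.

    Assumes the errors of the two poses are uncorrelated.
    """
    x1, y1, th1 = pose1
    x2, y2, th2 = pose2
    s, c = math.sin(th1), math.cos(th1)
    # H: derivatives of (x3, y3, th3) with respect to (x1, y1, th1)
    H = [[1.0, 0.0, -x2 * s - y2 * c],
         [0.0, 1.0, x2 * c - y2 * s],
         [0.0, 0.0, 1.0]]
    # K: derivatives of (x3, y3, th3) with respect to (x2, y2, th2)
    K = [[c, -s, 0.0],
         [s, c, 0.0],
         [0.0, 0.0, 1.0]]
    return madd(matmul(matmul(H, c1), transpose(H)),
                matmul(matmul(K, c2), transpose(K)))

def merge_1d(x1, v1, x2, v2):
    """Merge two scalar estimates of the same quantity (variances v1, v2)."""
    k = v1 / (v1 + v2)                     # scalar gain
    return x1 + k * (x2 - x1), v1 - k * v1

# Hypothetical diagonal covariances for edges A and B.
C1 = [[0.01, 0.0, 0.0], [0.0, 0.01, 0.0], [0.0, 0.0, 0.04]]
C2 = [[0.02, 0.0, 0.0], [0.0, 0.03, 0.0], [0.0, 0.0, 0.05]]
C3 = compound_cov((1.0, 0.0, math.pi / 2), (1.0, 0.0, 0.0), C1, C2)

merged_x, merged_v = merge_1d(0.0, 4.0, 2.0, 4.0)  # gives (1.0, 2.0)
```

In this example the X variance of the compounded pose grows to about 0.08, more than the sum of the two X variances, because the heading uncertainty of the first pose swings the second motion sideways. The merged variance, 2.0, is smaller than either input variance, which is the parallel-resistance behaviour the paper points to.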
This is another example where it is absolutely crucial to think of these transformations as vectors. The first time I looked at equation (5), it was difficult for me to figure out what they meant by the variables X, Y, θ, X’, Y’, and θ’. I wasn’t sure which of these were in world or relative coordinates, and if relative, relative to what? Once the edges in figure 2 are viewed as Euclidean vectors, it is clear that these variables are just relative to the start of the vector, wherever that may be.

Towards the end of the paper, they give a mobile robot example. They discuss mainly the sensing procedure. They bring up the point that uncertainty estimates can be used to decide ahead of time whether sensing is even feasible. Appendix A goes through a very nice formulation of the parameter k, which is known as the Mahalanobis distance:

$$(x - \hat{X})^T C_x^{-1} (x - \hat{X}) = k^2$$

The threshold for this Mahalanobis distance can be set according to the specifications of the problem at hand, in order to accept or reject potential sensing steps. For example, in a localization problem, you have some estimate of the robot’s location, $\hat{X}$, and its covariance, $C_x$. You also know the locations of landmarks in your environment. Let’s say there’s a landmark close by at location x. The covariance is visualized as some ellipse around the robot location estimate, and the equation above tells you how far out, in units of that ellipse, the landmark location is from the estimate. If it’s not too far out, then you would decide to take the sensor reading to better correct the estimate. Once the sensor reading has been taken, you would then use the same equation to decide whether the reading is reasonable. It’s possible that there was a lot of noise in the reading, and it gave you something false. But now the variables in the equation would be in sensor space, representing the actual and estimated sensor readings.

I learned a lot from this paper, even though I bitterly whined about some of its hand-waviness.
I don’t know; maybe it would have been better to learn some of the fundamentals from a textbook, but I haven’t been able to find a textbook so far that covers this material in any sort of depth. Everyone keeps telling me to read the Probabilistic Robotics textbook, but I feel that the explanations in that text start out with even more assumptions. Alas, the life of a grad student living on the cutting edge of research. People like to criticize me and tell me I’m too detail oriented, that I should be leaving the details to the textbooks, but they rarely have any textbooks to back up their claims. If you’ve gotten to the end of this review, and you think I’m full of it, then please, give me some reading to do.

References:

(1) Smith, R. C., and Cheeseman, P. 1985. On the Representation and Estimation of Spatial Uncertainty. SRI Robotics Lab. Tech. Paper, and to appear Int. J. Robotics Res. 5(4): Winter 1987.

(2) Nahi, N. E. 1976. Estimation theory and applications. New York: R. E. Krieger.