Diagonally Dominant Matrix

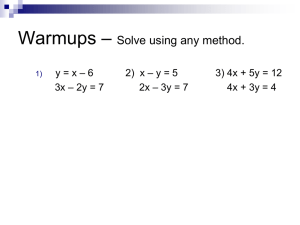

advertisement

Chapter I

Computational Linear Algebra

1. Introduction

Many of the problems of the numerical analysis can be reduced to the problem

of solving linear systems of equations. Among the problems, which could be treated

are the solution of ordinary and/or partial differential equations by using finite

difference methods, the solution of systems of equations, the eigen-value problems of

mathematical physics, least squares fitting of data and polynomial approximation.

In this course will restrict us to solve linear system of equations, including:

To solve a linear system A x b , where A is a given non-singular matrix of

order n (real or complex) b is a given column vector of n components, and x

is an unknown column vector of n components

In the above problem there are many several different right hand sides b - for

example, k of them – and therefore k unknown vectors x to be found. If we let

B be the n-rowed, k-column matrix of right-hand sided, and let X be the

corresponding n–rowed, k –column matrix of solution vectors, then we are to

solve the linear system AX = B.

To find the inverse A-1of a non-singular matrix A.

2. Direct Methods For Solving Linear System of Equations

By a direct method, we mean a method, which after a certain finite number of steps

gives the exact solution, disregarding rounding errors. For systems

Axb

where the matrix A is full (that is, most of the elements of A are non-zero), direct

elimination methods are almost always the most efficient, but when A is sparse (that

is, a large proportion of the elements of A are zeros), iterative methods offer certain

advantages. Iterative methods give a sequence of approximate solutions, converging

when the number of steps tends to infinity. Thus the choice between direct and

iterative methods depends on the proportion and distribution as well as sign and size

of non-zero elements of A.

2.1. Triangular Systems

Linear system of equations where the matrix is triangular are particularly simple to

solve. A system

Uxb,

where matrix U is upper triangular, has the form

u 11 x 1 u 12 x 2 ...

u 1n x n b1

u 22 x 2 ...

u 2n x n b 2

....

u n -1,n -1 x n -1 u n -1,n x n b n -1

u n,n x n b n

If we assume that ui,i 0, for i=1 (1) n, then the unknowns could be computed in the

order xn, xn-1, …, x1 (backward order) as follows

xn

bn

u n,n

x n -1

b n -1 u n -1,n x n

u n -1,n -1

....

bi

xi

n

u

j i 1

i, j

xj

u i,i

...

n

x1

b1 u i, j x j

j 2

u 1,1

Now we are ready to write a simple algorithm to find xi, i=n (-1) 1

x (n) b(n)/u(n, n);

for i (n - 1) : (-1) : 1

{s 0;

for j i 1 : n

{s s u(i, j) * x(j); }

x(i) b(i) - s)/u(i, i) ;

}

It is very simply to convert the above algorithm to MATLAB code (m file to solve the

a linear system of equations with an upper triangle matrix form - save the file as

uppersys.m)

function [x]= uppersys(n,u,b)

x(n)=b(n)/u(n,n);

for i=n-1:-1:1

s=0;

for j=i+1:n

s=s+u(i,j)*x(j);

end;

x(i)=(b(i)-s)/u(i,i);

end;

x=x';

the following is a MATLAB session to solve such systems for n=3;

» n=3;

» b=[1;2;3]

b =

1

2

3

» u=[ 12 2.4 3; 0 20 3;0 0 9]

u =

12.0000

2.4000

3.0000

0

20.0000

3.0000

0

0

9.0000

» x=uppersys(n,u,b)

x =

-0.0100

0.0500

0.3333

» check = u*x-b

check =

1.0e-015 *

-0.1110

0

0

It is easy to show that the number of division and multiplication is 0.5*(n2 + n), we

left the proof as an exercise.

2.2. Full Matrix and Gaussian Elimination

The most important among the direct methods for solving a general linear system of

equations is Gaussian elimination. The idea behind this method is to eliminate the

unknowns in a systematic way, in such a way that we end up with a triangular system,

which we know how to solve. Consider the system,

A n,n x n,1 b n,1 ,

We assume in the following that the matrix A is non-singular (det(A) 0), so the

system A x b has a unique solution (only one solution).

Now we assume a1,1 0.Then we could eliminate x1 from the last (n-1) equations by

subtracting from the ith equation the multiple

m i,1

a i,1

a 1,1

,

i 2 (1) n

of the first equation. So the pattern for the last (n-1) equations become

(2)

(2)

(2)

a (2)

2,2 x 2 a 2,3 x 3 ... a 2,n x n b 1

.

.

.

.

a

.

.

(2)

n,2

(2)

x n a x 3 ... a (2)

n,n x n b n

(2)

n,3

where the new coefficient are given by

a i,(2)j a i, j mi,1a 1, j

,

b i(2) b i mi,1b1 , i 2 (1) n .

This is a system of (n-1) equations in the (n-1) unknowns xi , i =2 (1) n. If the a 2( 2, 2) 0,

in a similar way, as in the elimination of x1from equations 2 (1) n, one could eliminate

x2 from the last (n-2) of these equations (n-1 equations). We get a system of (n-2)

equations in the unknowns xi , i= 3 (1) n, if we let

m i,2

(2)

a i,2

a (2)

2,2

i 3 (1) n

,

The coefficients of this system are given by

a i,(3)j a i,(2)j mi,2 a (2)

2, j

,

b i(3) b i(2) mi,2 b i(2) , i 3 (1) n .

(n 1)

The elements a 1,1 , a (2)

2,2 ,..., a n 1,n 1 , which appear during the elimination are called

pivotal elements. If all these are non-zero, we can continue until after (n-1) steps, we

get a single equation in one unknown, which is xn

(n)

a (n)

n,n x n b n

Now, we gather the first equation from each step to get the following new system

(1)

(1)

a 1,1

x 1 a 1,2

x 2 ... a 1,(1)n x n b1(1)

(2)

(2)

a (2)

2,2 x 2 ... a 2,n x n b 2

...

(n)

a (n)

n,n x n b n

Thus we have reduced the original system to triangular system, which as we have

seen could be easily solved.

The algorithm of the above-mentioned method (the triangularization of the system)

could be written as follows

for k 1 : (n - 1)

for i k 1 : n

{m i,k

a i,(k)k

a (k)

k,k

;

b i(k 1) b i(k) - m i,k b (k)

k ;

for j k 1 : n

a i,(kj1) a i,(k)j m i,k a (k)

k, j ;

}

The triangular decomposition algorithm could be simplified some what if we assume

that we are dealing with a copy of the given system of equations and therefore can use

the memory occupied by the ai,j for the storage of mi,k and a i,(k)j . The mi,k (called

multipliers) could be stored where the zeros occur, and the a i,(k)j could be written over

the old ai,j . The modified algorithm (minimizing the storage used) could be written as

follows

for k 1 : (n - 1)

for i k 1 : n

{a i,k

a i,k

a k,k

;

b i b i - a i,k b k ;

for j k 1 : n

a i, j a i, j a i,k a k, j ;

}

the MATLAB code for the modified algorithm is as follows (save with fmatsys.m)

function [a,b]=fmatsys (n,a,b)

for k=1:n-1

for i=k+1:n

a(i,k)=a(i,k)/a(k,k);

b(i)=b(i)-a(i,k)*b(k);

for j=k+1:n

a(i,j)=a(i,j)-a(i,k)*a(k,j);

end;

end;

end;

the MATLAB session to test the code is:

» n=3;

» a=magic(n)

a=

8

3

4

1

5

9

» b=[1;2;3]

b=

1

2

6

7

2

3

» [a,b]=fmatsys(n,a,b)

a=

8.0000

0.3750

0.5000

1.0000 6.0000

4.6250 4.7500

1.8378 -9.7297

b=

1.0000

1.6250

-0.4865

to find the unknown xi , i = 1 (1) n; we combine the code of triangularization and the

code of solving the upper triangular system, and the MATLAB code (save it as

gausseli.m) is as follows

function [x]=gausseli(n,a,b)

for k=1:n-1

for i=k+1:n

a(i,k)=a(i,k)/a(k,k);

b(i)=b(i)-a(i,k)*b(k);

for j=k+1:n

a(i,j)=a(i,j)-a(i,k)*a(k,j);

end;

end;

end;

x(n)=b(n)/a(n,n);

for i=n-1:-1:1

s=0;

for j=i+1:n

s=s+a(i,j)*x(j);

end;

x(i)=(b(i)-s)/a(i,i);

end;

x=x';

and the MATLAB session for testing the code is:

» n=3;

» a=magic(n)

a=

8

3

4

1

5

9

» b=[1;2;3]

6

7

2

b=

1

2

3

» x=gausseli(n,a,b)

x=

0.0500

0.3000

0.0500

» check=a*x-b

check =

1.0e-015 *

0

-0.2220

-0.4441

The estimate number of arithmetic operations required to reduce a system to

triangular form using Gauss elimination method is of order n3, and is denoted

mathematically as O(n3) and approximately equal to 1/3 n3, and we already know that

the of operations to solve upper triangular system, so we can calculate (or estimate )

the total number of operation to solve a system of linear equation with full matrix

form as a polynomial of n.

2.3. Pivoting Strategies

We have seen that, if in Gaussian elimination the pivotal elements a (k)

k,k = 0, for some

k, then the method breaks down. As a simple example for such case, let consider the

following

x1 x 2 x 3 1

x 1 x 2 2 x 3 2

x 1 2 x 2 2 x 3 1

(*)

This system is non-singular and has the unique solution x1 = - x2 = x3 = 1. However,

after the first step in the elimination processes, we get

x2

x3 1

x 3 0

Which means that a (2)

2,2 = 0, and we could not proceed as usual. The way to proceed

obviously is to interchange equations 2 and 3 before the next step, which in this case

actually gives us the triangular system directly.

Now we consider the general case that in step k we have a (k)

k,k = 0, but for some other

element a i,(k)k , i = k+1 (1) n in column k must be non-zero, otherwise the matrix is

singular (det(A) = 0), assume that a (k)

r,k , so we could interchange rows k and r and

proceed with the elimination. Using the above technique, we can conclude that any

non-singular matrix could be reduced to triangular form using Gaussian elimination

combined with row interchange.

To ensure numerical stability, it will be necessary to perform row interchange not

only a pivotal element is exactly zero, but also when it nearly zero. Suppose that in

the system of equations (*) the element a2,2 is changed to 0.0001 and Gaussian

elimination carried through without row interchanging. The resulting triangular

system becomes

x1 x 2 x 3 1

0.0001 x 2 2 x 3 1

9999 x 3 10000

(**)

If we perform the back-substitution using three-figure floating-point arithmetic, the

computed solution becomes

x1 0 , x 2 0

, x 3 1.000

where the true solution rounded to four decimals is

x 1 1.0000 , x 2 - 1.0001

, x 3 1.0001

It is left to the reader to verify that if we interchange rows 2 and 3, then using same

precision the computed solution becomes

x 1 1.000 , x 2 - 1.000

, x 3 1.000

which is correct to the three decimals.

To prevent possible catastrophic errors as illustrated by the example mentioned above,

it is usually necessary to choose pivotal element in the step k, by the following

strategy:

Partial Pivoting: Choose r as the smallest integer for which

(k)

a (k)

r, k max a i,k , k i n .

and interchange rows k and r, or interchange the order of reading

p(i)

k

k

or

r

r

In practice, partial pivoting is generally satisfactory with Gaussian elimination, to

convert any full matrix system to upper triangular system.

There are two important cases when Gaussian elimination could be carried without

partial pivoting (row interchanging). These are systems where the matrix A is of one

of the following types:

Diagonally Dominant Matrix

n

a i,i a i, j , i 1 (1) n

j 1

j i

Symmetric and positive definite

T

A T A and x A x 0, x 0

Now, we are ready to write the algorithm for Gaussian elimination (with partial

pivoting – permutation vector) and back-substitution

for k 1 : n

{p(k) k; }

for k 1 : n - 1

{max0 a k,k ;

locmax p(k);

for i k 1 : n

{ if a i,k max

{max0 a i,k ;

locmax i; }

}

if p(k) k

{r p(k); p(k) p(locmax); p(locmax) r; }

for i k 1 : n

{ a p(i),k

a p(i),k

a p(k),k

;

for j k 1 : n 1

a p(i), j a p(i), j a p(i),k * a p(k), j ;

}

}

xn

a p(n),n 1

a p(n),n

;

for k n - 1 : -1 : 1

{s 0;

for j k 1 : n

s s a p(k), j * x j ;

xk

a

p(k), j

s

a p(k),k

;

}

Now, it is easy to write the MATLAB code for the general direct algorithm to solve

system of linear equations, a possible code for the above algorithm is as follows (save

the file with gepartpb.m, we augment the Matrix A to include b as the (n+1)th column

function [x] = gepartpb (n,a)

% initialization of permutation vector

for k=1:n

p(k)=k;

end;

%apply partial pivoting associated with gaussian elimination

for k=1:n-1

max0=abs(a(k,k));

locmax=p(k);

for i=k+1:n

if abs(a(i,k)) > max0

max0=abs(a(i,k));

locmax=i;

end;

end;

if k ~= locmax

r=p(k);

p(k)=p(locmax);

p(locmax)=r;

end;

for i=k+1:n

a(p(i),k)=a(p(i),k)/a(p(k),k);

for j=k+1:n+1

a(p(i),j)=a(p(i),j)-a(p(i),k)*a(p(k),j);

end;

end;

end;

% back substitution to get the solution

x(n)=a(p(n),n+1)/a(p(n),n);

for k=(n-1):-1:1

s=0;

for j=k+1:n

s=s+a(p(k),j)*x(j);

end;

x(k)=(a(p(k),n+1)-s)/a(p(k),k);

end;

x=x’;

The MATLAB session for testing the code is provided in the following

» n=3;

» a=[1 1 1 1; 1 1 2 2; 1 2 2 1]

a=

1

1

1

1

1

2

1

2

2

1

2

1

» x=gepartpb(n,a)

locmax =

3

x=

1

-1

1

» check=a(:,1:3)*x-a(:,4)

check =

0

0

0

Now we test the code for, when we change the value of a(2,2) to 1.0001)

» a(2,2)=1.0001

a=

1.0000

1.0000

1.0000

1.0000 1.0000 1.0000

1.0001 2.0000 2.0000

2.0000 2.0000 1.0000

» x=gepartpb(n,a)

locmax =

3

x=

1.0000

-1.0001

1.0001

» check=a(:,1:3)*x-a(:,4)

check =

0

0

0

2.3. Tri-diagonal Matrix and Thomas Algorithm

We consider very important special case of system of linear equation, in which the

elements distributed on the diagonal and above and below that diagonal, and the

system has the following form ( for a system of n = 4)

b1

a

2

0

0

c1

0

b2

a3

0

c2

b3

a4

0

0

c3

b4

x1

x

2

x 3

x 4

d1

d

2

d 3

d 4

Instead of storing the above system in matrix form with a very large number of zero

elements (especially in case of large n), we store the system in vector format: the

diagonal elements in vector b of order n, and the elements below the diagonal a vector

a also of order n and the elements above the diagonal in a vector c of order n with a(1)

= c(n) = 0, and we apply the elimination process of one row below the diagonal. The

benefits for solving such systems using this technique (Thomas algorithm) instead of

using the general elimination (Gauss elimination on a full matrix) is to reduce the

number of arithmetic operation form O(n3) to O(n), and we sketch the algorithm in the

following steps

1. in row 1: divide the elements by b1: so b1=1; c1=c1/b1; d1=d1/b1.

2. start the elimination process to the second row: so a2 = 0; b2=b2- c1*a2; d2=d2a2*d1;(c2 is unchanged);

3. before we continue elimination process to the third row; we divide the second

row by b2, and so on till we reach to the nth row.

Now we are ready to develop the algorithm to solve the tri-diagonal system (Thomas

algorithm), as follows

c1 c1 /b 1 ; d 1 d 1 /b 1 ;

for i 2 : n

{ b i b i c i 1 * a i ;

c i c i /b i ;

d i d i d i 1 * a i /b i ;

}

xn d n ;

for k n - 1 : -1 : 1

{x k d k c k * x k 1 ; }

the MATLAB code:

function x=thomas(n,a,b,c,d)

c(1)=c(1)/b(1);

d(1)=d(1)/b(1);

for i=2:n

b(i)=b(i)-a(i)*c(i-1);

c(i)=c(i)/b(i);

d(i)=(d(i)-d(i-1)*a(i))/b(i);

end;

x(n)=d(n);

for k=n-1:-1:1

x(k)=d(k)-c(k)*x(k+1);

end;

x=x';

the MATLAB session to test the code

» n=5;

» a=[0;1;1;1;1]

a =

0

1

1

1

1

» b=[-4;-4;-4;-4;-4]

b =

-4

-4

-4

-4

-4

» c=[1;1;1;1;0]

c =

1

1

1

1

0

» d=[1;1;1;1;1]

d =

1

1

1

1

1

» x=thomas(n,a,b,c,d)

x =

-0.3654

-0.4615

-0.4808

-0.4615

-0.3654

» check=a(2)*x(1)+b(2)*x(2)+c(2)*x(3)-d(2)

check =

-1.1102e-016