Particle_filter

advertisement

Particle Filter based Localization for RoboCup

Prepared for Prof. Chew

This program is written in the main strategy. The name of the function is localization().

Now the function is called every two steps, when the length of the left leg equals the right

leg. We consider it is the time when the swing leg and the stance leg changing.

The following is the main flow of the program.

Vision

start

Start Up

Initialize()

Landmark

Recognition

Odometry

Two

Steps?

Image

data

Motion

Model

Update the

Poses of

Particles

Shared

Memory

Landmark

perceived?

Update

Weight

Robot

Pose

(Output)

Choose the

best particle

Resample

the particles

Layout of the field

y

o

x

We define the coordinate system for the field. The x axis is along the length of the field

and y axis is along the width of the field. The rotation will be considered positive when

the robot turns to its own left.

Initialization of the particles

We create NUM_PARTICLES (which is 50 now) particles, each particle contains four

components: x, y, theta and w.

In global robot localization, the initial belief is a set of poses drawn according to a

uniform distribution over the robot’s universe, annotated by the uniform importance

factor 1/m. (Particle filters for Mobile robot localization, dieter fox, Sebastian thrun, et.

al.)

We randomly put the particles in the field. And assign the weight of the each particle 1/

NUM_PARTICLES.

for (i=0;i<NUM_PARTICLES;i++)

{

particle[i].x = FIELD_WIDTH * rand()/RAND_MAX;

particle[i].y = FIELD_LENGTH * rand()/RAND_MAX;

particle[i].theta = 360 * rand()/RAND_MAX - 180;

particle[i].w = 1/NUM_PARTICLES;

}

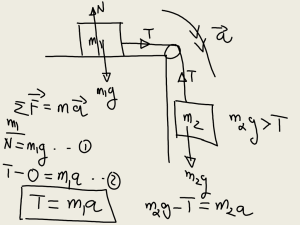

Motion Model

The motion model is using kinematics to calculate the translation and rotation of the

robot. It is quite different from the mobile robot because the movement of the humanoid

robot can be considered as discrete movement. Therefore, we only take the double

support phase into consideration. The distance and angle between the two feet will be

calculated and added in the odometry.

z

O

hip_pitch

hip_roll

E

B

A

D

B’

C

knee_pitch

This is a simplified model for one leg. We constrain the hip_yaw to zero and obtain:

OE OB cos hip _ pitch

ED BC cos hip _ pitch knee _ pitch

OD OE ED

z OA OD cos hip _ roll

y AD OD sin hip _ roll

x CD CB BD OB sin hip _ pitch BC sin hip _ pitch knee _ pitch

y

(x’, y’)

θ

(x, y)

C

x

0 A

Then we take hip_yaw into consideration. This is a projection of the leg to the x-y plane.

We can obtain:

hip _ yaw

x cos

y sin

z' z

sin x x cos y sin

cos y x sin y cos

y

Δ(x,y,θ)

0

x

Every step, we can identify the stance leg and swing leg through the length of each leg.

During the double support phase we calculate the position of each foot respectively. For

example the right leg is the stance leg, the left leg is the swing leg. When the left foot just

touch the ground, we update the odometry:

odom_x=Lx-Rx+stance_x;

odom_y=Ly-Ry+stance_y;

odom_theta=LEFTJA[HIP_YAW]+RIGHTJA[HIP_YAW]+stance_theta;

This algorithm is very suitable for the omni-direction walking gait. The function

odometry() is done in the function jointPositions(), which is calculating the angle of joint

to rotate to the servo unit. We also add in some noise in the motion model. The array

gaussian[i] is a pre-calculate array with Gaussian distribution

(http://www.dspguru.com/howto/tech/wgn.htm, How to generate White Gaussian Noise,

Matt Donadio), generated from the pseudo random number.

The motion model is very noisy. Dx, Dy and Dtheta are obtained from experiments. After

two steps, we will update the poses of all the particles.

for (i=0;i<NUM_PARTICLES;i++)

{

particle[i].x += odom_x + gaussian[rand()%100]* Dx ;

particle[i].y += odom_y + gaussian[rand()%100]* Dy;

particle[i].theta += odom_theta + gaussian[rand()%100]* Dtheta;

}

Sensor Model

Sensor model is for updating the weight of each particle. The information we can get

from the vision program is the x, y coordinates of the four corners of the bounding box in

the image. Because now we are using the fish eye lens for landmark recognition, the

distortion is considerable. However, we can simply make use of the raw image

information without using undistortion algorithm.

We can observe that the position of the landmark in the image and the size of the

landmark in the image can provide the angle information and the distance information.

Because we are using the fish eye lens, the straight line will be distorted significantly, but

the wide angle of view provides a higher chance to see the landmarks. The undistortion

algorithm and the line detection algorithm is computation expensive, so we only use the

landmarks that are ‘standing’ in the field.

From the following images, we can observe significant difference when the pole is at

different angles and distances.

50cm

200cm

100cm 45˚

200cm 45˚

We update the weight of each particle according to the angle and the distance

respectively.

Angle

We are using a single camera at one time. Due to the projection principle, we need to

choose some unchanged landmarks to be the reference. Now we are using the middle of

the two poles and the sides of the goals. These are ‘unchanged’ landmarks.

Robot

Robot

y

r

M

C Pole

O

x

The dash line is the angle bisector. This is to show that only the center of the pole is an

‘unchanged’ landmark. If we use the side of the pole, it is tangent point on a circle. This

point will change if the robot is at different locations.

Robot

Robot

The dash line is the angle bisector. This is to show that the side of the goal is an

‘unchanged’ landmark. But the center of the goal will be different seen from different

locations.

Expected Theta

When the camera detects any landmark, the function updateWeightAngle() will be called.

And the angle from the landmark to the norm of the image plane can be calculated

through a camera model.

Pole

(landmarkX, landmarkY)

expectedTheta

headOrientation

y

landmarkDirection

particle[i].theta

(particle[i].x, particle[i].y)

Particle (Predicted Pose)

0

x

For example, a BYB pole is seen by the robot, from the camera information, we can get

the angle from the landmark to the norm of the image plane. Compare this value to the

predicted value calculated from each particle, from where we can update the weight of

the particle.

From this graph, we can easily calculate the expected angle for each particle. This is the

predicted value, saved in expectedTheta.

dX = landmarkX - particle[i].x;

dY = landmarkY - particle[i].y;

landmarkDirection = atan2(dX,dY) * RAD_TO_DEG;

expectedTheta = landmarkDirection - headOrientation- particle[i].theta;

Perceived Theta

From the camera we can calculate the angle from the landmark to the norm of the image

plane. Because of the focal length of the fisheye lens is only 1.55mm, so the geometry

model is quite different from the normal pinhole lens. I have not found out what exact the

model is, but from experiment, it roughly fit in the rules:

sin (perceivedTheta) = 160 – poleX

160 is the middle of the image along x axis, poleX is the x coordinate of the perceived

landmark on the image. So

perceivedTheta = asin(160 - poleX)

Update Weight

Now we have the perceivedTheta and the expectedTheta, a standard normal distribution

is employed to calculate the weight and update the weight for each particle.

deltaTheta = expectedTheta - perceivedTheta;

u = deltaTheta / 15;

//belief = ( 1/sqrt(2*PI) ) * exp( -0.5 * u * u);

// 1/sqrt(2*PI) = 0.39894228040143267793994605993438

belief = 0.39894228 * exp( -0.5 * u * u);

particle[i].w = particle[i].w + belief;

We divide the deltaTheta by 15 because we want to give a high possibility to all the

particles with a deltaTheta within 15 degree. We can tune this parameter to give a proper

weight to the perceived angle.

(I will plot the distribution in Matlab. Now I give some numerical example.

If deltaTheta = 15, belief = 0.242;

deltaTheta = 0, belief = 0.399;

deltaTheta = 45, belief = 0.004;)

Distance

The distance from the landmark to the robot can be estimated through the size of the

landmark on the image. Though the optic geometry of the fisheye lens is unlike the

normal lens, we can still find out some basic relationship between the object and the

image through experiment.

Because the focal length of the lens is only 1.55mm, so we can consider that all the

landmarks are far away that the image is on the focal plane.

Perceived Distance

(Now it is still estimation. I am not so sure about the model of the fisheye lens. The

following table records some experiment data.

We take one pole for example. The diameter of the pole: D = 11cm.

Distance from the pole to

Num of pixels of the pole

L * pixel

the robot: L

width on image: pixel

20cm

60

1200

50cm

29

1450

100cm

13

1300

200cm

8

1600

300cm

5

1500

From the table, we can see that the product of the L and pixel can be considered as a

constant. It is easy for us to calculate the expectedDistance from the size of the perceived

landmark.

We use the width of the pole and the height of the goal for distance estimation. The width

of the goal is including in the angle estimation.

)

Expected Distance

Expected distance is the distance from the particle to the landmark.

dX = particle[i].x - landmarkX;

dY = particle[i].y - landmarkY;

expectedDistance = sqrt(dX*dX + dY*dY);

Update Weight

Now we have the perceived distance and the expected distance. A standard normal

distribution is employed to calculate the belief and update the weight. Previous we use

the absolute error for angle error update, now we use the relative error to update the

distance error.

deltaRate = (expectedDistance - perceivedDistance) / perceivedDistance;

u = deltaRate;

belief = 0.39894228 * exp( -0.5 * u * u);

particle[i].w = particle[i].w + belief;

Best Particle ( Output of the localization() )

We update the weight for each particle according to the image information. Now we can

compare all the weights, and choose the particle with the highest weight to be the final

estimation of the robot pose.

(From the code of the B-Human 2008, there are three algorithm to do the final estimation,

this is the most effective one. The other algorithms can be examined to see whether it will

improve the localization result.)

Resampling

There are also several dominant resampling algorithms. The following algorithm is the

most popular one:

We also adopt this algorithm but including the normalization in the resampling.

Future Work

Now the main part of the localization program is finished, but there are several

parameters and some different algorithms for estimate and resampling will affect the

result of the localization. I will try to figure out a good combination of the parameters and

the algorithms. A calibration program is also needed for the convenience of the tuning

work.

For the master thesis, I need to make the camera model more precise. Because the neural

network must be trained for each robot, we will try the NN method in the worst case.

Now the localization program is written within the strategy part and only use the image

before the localization() is called. We can make use of all the images in the interval of the

localization(). The random sensor error will be reduced.