3 Continuous Probability


3.1 Probability Density Functions.

So far we have been considering examples where the outcomes form a finite or countably infinite set. In many situations it is more natural to allow the outcomes to be any real number or any vector of real numbers. In these situations we usually model probabilities by means of integrals.

Example 1. A bank is doing a study of the amount of time, $T$, between arrivals of successive customers. Assume we are measuring time in minutes. The bank is interested in the probability that $T$ will have various values. If we imagine that $T$ can be any non-negative real number, then the sample space is the set of all non-negative real numbers, i.e. $S = \{t : t \ge 0\}$. This is an uncountable set. In situations such as this, the probability that $T$ assumes any particular value may be 0, so we are interested in the probability that the outcome lies in various intervals. For example, what is the probability that $T$ will be between 2 and 3 minutes?

A common way to describe probabilities in situations such as this is by means of an integral. We try to find a function $f(t)$ such that the probability that $T$ lies in any interval $a \le t \le b$ is equal to $\int_a^b f(t)\,dt$, i.e.

$$\Pr\{a \le T \le b\} = \int_a^b f(t)\,dt. \tag{1}$$

A function $f(t)$ with this property is called a probability density function for the outcomes of the experiment. We can regard $T$ as a random variable, and $f(t)$ is also called the probability density function for the random variable $T$.

Since $\Pr\{T = a\} = 0$ and $\Pr\{T = b\} = 0$, one has $\Pr\{a < T \le b\}$, $\Pr\{a \le T < b\}$, and $\Pr\{a < T < b\}$ all equal to this integral. In order that the non-negativity property and normalization axiom hold (formulas (1.10) and (1.11) in section 1.1), one should have

$$f(t) \ge 0 \quad \text{for all } t, \tag{2}$$

$$\int_{-\infty}^{\infty} f(t)\,dt = 1. \tag{3}$$

For example, suppose after some study the bank has come up with the following model. They feel that

$$\Pr\{a \le T \le b\} = \int_a^b \frac{e^{-t/2}}{2}\,dt \quad \text{for } 0 \le a \le b. \tag{4}$$


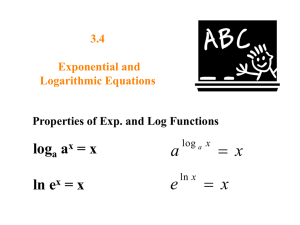

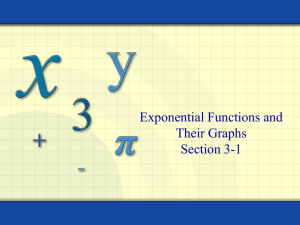

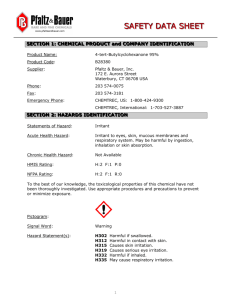

This is an example of an exponential density. In this case $f(t) = \frac{1}{2} e^{-t/2}$ for $t \ge 0$ and $f(t) = 0$ for $t < 0$. Note that $f(t)$ satisfies (2) and (3). The integral (4) can be evaluated in terms of elementary functions, so we could just as well say

$$\Pr\{a \le T \le b\} = e^{-a/2} - e^{-b/2}.$$

However, it is useful to keep in mind the integral representation (4).

For example, the probability that $T$ would be between 2 and 3 minutes would be

$$\Pr\{2 \le T \le 3\} = e^{-2/2} - e^{-3/2} \approx 0.145.$$
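The agreement between the integral representation and the closed form can be checked numerically. The following is a minimal sketch, assuming the bank's density $f(t) = \frac{1}{2}e^{-t/2}$; the function names `density` and `interval_probability` and the choice of a midpoint Riemann sum are illustrative assumptions, not part of the original notes.

```python
import math

def density(t, rate=0.5):
    """Exponential density f(t) = rate * exp(-rate * t) for t >= 0, else 0."""
    return rate * math.exp(-rate * t) if t >= 0 else 0.0

def interval_probability(a, b, steps=10_000):
    """Approximate Pr{a <= T <= b} by a midpoint Riemann sum of the density."""
    width = (b - a) / steps
    return sum(density(a + (k + 0.5) * width) for k in range(steps)) * width

# Probability that T lies between 2 and 3 minutes, computed two ways.
numeric = interval_probability(2, 3)
closed_form = math.exp(-2 / 2) - math.exp(-3 / 2)
print(round(numeric, 3), round(closed_form, 3))  # prints 0.145 0.145
```

Both values round to 0.145, which matches the hand computation.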

It turns out the countable additivity property (1.11) holds when probabilities are given by integrals of the

form (1.14). This can be used to find the probability of sets which are not intervals.

Example 2. An insurance agency models the amount of time, $T$, in hours, between arrivals of successive customers as a continuous random variable with density function $f(t) = 2e^{-2t}$ for $t \ge 0$ and $f(t) = 0$ for $t < 0$. Suppose there are 2 insurance agents, Paul and Mary, who take turns handling customers as follows. Paul takes any customers who arrive in the first hour, Mary takes any customers who arrive in the second hour, Paul takes any who arrive in the third hour, etc. What is the probability that Paul gets the next customer? This is the event that $T$ is between 0 and 1, or between 2 and 3, or between 4 and 5, etc. So we want to find $\Pr\{E\}$ where

$$E = \{t : 2n \le t < 2n+1 \text{ for some } n = 0, 1, 2, \ldots\}.$$

$E$ is the union of the disjoint intervals $2n \le t < 2n+1$ for $n = 0, 1, 2, \ldots$, so we can use the countable additivity formula (1.13) to get

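$$\Pr\{E\} = \sum_{n=0}^{\infty} \Pr\{2n \le T < 2n+1\} = \sum_{n=0}^{\infty} \left( e^{-2(2n)} - e^{-2(2n+1)} \right) = \sum_{n=0}^{\infty} \left( e^{-4n} - e^{-4n-2} \right)$$

$$= (1 - e^{-2}) \sum_{n=0}^{\infty} e^{-4n} = \frac{1 - e^{-2}}{1 - e^{-4}} \approx 0.8808.$$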
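The series in Example 2 converges very quickly, so a short partial sum already reproduces the closed form. The sketch below assumes the density $2e^{-2t}$ from the example; the function name `paul_probability` and the cutoff of 50 terms are illustrative choices.

```python
import math

def paul_probability(terms=50):
    """Partial sum of Pr{2n <= T < 2n+1} for n = 0, ..., terms - 1,
    where T has density 2 * exp(-2t) for t >= 0."""
    return sum(
        math.exp(-2 * (2 * n)) - math.exp(-2 * (2 * n + 1))
        for n in range(terms)
    )

partial = paul_probability()
# Closed form obtained from the geometric series sum of exp(-4n).
closed_form = (1 - math.exp(-2)) / (1 - math.exp(-4))
print(round(partial, 4), round(closed_form, 4))  # prints 0.8808 0.8808
```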

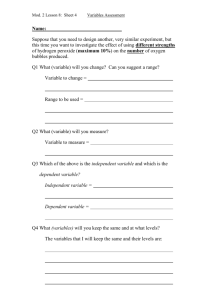

Cumulative distribution functions. Just as with discrete random variables, it is sometimes convenient to describe a continuous random variable by its cumulative distribution function $F(t)$. If the random variable is $T$ then

$$F(t) = \Pr\{T \le t\} = \int_{-\infty}^{t} f(s)\,ds. \tag{5}$$

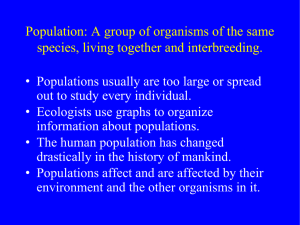

Note that $F'(t) = f(t)$. In the example above where $f(t) = \frac{1}{2} e^{-t/2}$ for $t \ge 0$ and $f(t) = 0$ for $t < 0$, one has $F(t) = 1 - e^{-t/2}$ for $t \ge 0$ and $F(t) = 0$ for $t < 0$.
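The relation $F'(t) = f(t)$ can be illustrated with a finite-difference check. This is a minimal sketch for the bank example, assuming the cumulative distribution function $F(t) = 1 - e^{-t/2}$; the helper names `cdf`, `density`, and `central_difference`, and the step size `h`, are illustrative assumptions.

```python
import math

def cdf(t, rate=0.5):
    """Cumulative distribution function F(t) = 1 - exp(-rate * t) for t >= 0."""
    return 1 - math.exp(-rate * t) if t >= 0 else 0.0

def density(t, rate=0.5):
    """Density f(t) = rate * exp(-rate * t) for t >= 0."""
    return rate * math.exp(-rate * t) if t >= 0 else 0.0

def central_difference(func, t, h=1e-5):
    """Approximate the derivative of func at t by a symmetric difference quotient."""
    return (func(t + h) - func(t - h)) / (2 * h)

# At each sample point the numerical slope of F should be close to f.
for t in (0.5, 2.0, 5.0):
    print(t, central_difference(cdf, t), density(t))
```

The same function gives interval probabilities as differences, for example `cdf(3) - cdf(2)` for the probability that $T$ is between 2 and 3 minutes.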


Exponential random variables. The example above is an example of an exponential random variable. These are random variables whose density function has the form $f(t) = \lambda e^{-\lambda t}$ for $t \ge 0$ and $f(t) = 0$ for $t < 0$, where $\lambda > 0$ is a parameter.

They are often used to model the time between successive events of a certain type, for example

1. the times between breakdowns of a computer,
2. the times between arrivals of customers at a store,
3. the times between sign-ons of users on a computer network,
4. the times between incoming phone calls,
5. the times it takes to serve customers at a store,
6. the times users stay connected to a computer network,
7. the lengths of phone calls,
8. the times it takes for parts to break, e.g. light bulbs to burn out.


Exponential random variables have an interesting property called the memoryless property. If $T$ is an exponential random variable, then the conditional probability that $T$ is greater than some value $t + s$ given that $T$ is greater than $s$ is the same as the probability that $T$ is greater than $t$. In symbols

$$\Pr\{T > t + s \mid T > s\} = \Pr\{T > t\}.$$

This follows from the fact that $\Pr\{T > t\} = e^{-\lambda t}$, since $\Pr\{T > t + s \mid T > s\} = \Pr\{T > t + s\}/\Pr\{T > s\} = e^{-\lambda(t+s)}/e^{-\lambda s} = e^{-\lambda t}$. Suppose, for example, the time between arrivals of bank customers is an exponential random variable and it has been ten minutes since the arrival of the last customer. Then the probability that the next customer will arrive in the next two minutes is the same as if a customer had just arrived.
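The memoryless property can be checked both from the formula for $\Pr\{T > t\}$ and by simulation. The sketch below assumes the bank example's rate of 0.5 per minute with a wait of 10 minutes so far and a further 2 minutes; the function names, the seed, and the sample size are illustrative assumptions. It uses the standard library's `random.expovariate`, whose argument is the rate.

```python
import math
import random

def survival(t, rate):
    """Pr{T > t} = exp(-rate * t) for an exponential random variable."""
    return math.exp(-rate * t)

def conditional_survival(t, s, rate):
    """Pr{T > t + s | T > s}, from the definition of conditional probability."""
    return survival(t + s, rate) / survival(s, rate)

rate, s, t = 0.5, 10.0, 2.0
exact_conditional = conditional_survival(t, s, rate)
exact_unconditional = survival(t, rate)

# Simulation: among the sampled waiting times that exceed s,
# what fraction also exceed t + s?
rng = random.Random(0)
samples = [rng.expovariate(rate) for _ in range(200_000)]
survivors = [x for x in samples if x > s]
simulated = sum(x > t + s for x in survivors) / len(survivors)

print(exact_conditional, exact_unconditional, simulated)
```

The two exact values agree up to rounding, and the simulated fraction should be close to them. Only a small share of samples exceed 10 minutes, so the simulated value is noisier than the sample size might suggest.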

The parameter $\lambda$ in the exponential distribution has a natural interpretation as a rate. Suppose we have $N_0$ identical systems each of which will undergo a transition in time $T_i$ for $i = 1, \ldots, N_0$. Suppose the $T_i$ are independent and all have exponential distribution with parameter $\lambda$. We are interested in the rate at which transitions are occurring at time $t$. The probability that any one of the systems will not have undergone a transition in the time interval $0 \le s \le t$ is $\int_t^{\infty} \lambda e^{-\lambda s}\,ds = e^{-\lambda t}$. So the expected number of systems that have not yet undergone a transition at time $t$ is $N = N_0 e^{-\lambda t}$. So the rate at which they are undergoing transitions at time $t$ is $-\frac{dN}{dt} = \lambda N_0 e^{-\lambda t} = \lambda N$. So $\lambda = -\frac{1}{N}\frac{dN}{dt}$ is the specific rate at which the systems are undergoing transitions.

Uniform random variables. A random variable with a constant density function is said to be a uniformly

distributed random variable.

Example 3. Suppose the time John arrives at work is uniformly distributed between 8:00 and 8:30. What is the probability that John arrives no later than 8:10?

Suppose we let $T$ be the time in minutes past 8:00 that John arrives. Then the probability density function is given by $f(t) = 1/30$ for $0 \le t \le 30$ and $f(t) = 0$ for other values of $t$. The probability that John arrives no later than 8:10 is given by

$$\Pr\{T \le 10\} = \int_0^{10} \frac{1}{30}\,dt = \frac{1}{3}.$$

Means or expected values. For a random variable $X$ that only takes on a discrete set of values $x_1, \ldots, x_n$ with probabilities $\Pr\{X = x_k\} = f(x_k)$, its mean or expected value is

$$\mu = E(X) = x_1 f(x_1) + \cdots + x_n f(x_n). \tag{6}$$

For a continuous random variable $T$ with density function $f(t)$ we replace the sum by an integral to calculate its mean, i.e.

$$\mu = E(T) = \int_{-\infty}^{\infty} t\,f(t)\,dt. \tag{7}$$


Let's see why (7) is a reasonable generalization of (6) to the continuous case. Suppose for simplicity that $T$ only takes on values between two finite numbers $a$ and $b$, i.e. $f(t) = 0$ for $t < a$ and $t > b$. Divide the interval $a \le t \le b$ into $m$ equal sized subintervals by points $t_0, t_1, \ldots, t_m$ where $t_k = a + k\,\Delta t$ with $\Delta t = (b - a)/m$. Suppose we have a sequence $T_1, T_2, \ldots, T_n, \ldots$ of repeated independent trials where each $T_n$ has density function $f(t)$. Suppose $s_1, s_2, \ldots, s_n, \ldots$ are the values we actually observe for the random variables $T_1, T_2, \ldots, T_n, \ldots$ In our computation of $\bar{s} = \frac{s_1 + s_2 + \cdots + s_n}{n}$ let's approximate all the values of $s_j$ that are between $t_{k-1}$ and $t_k$ by $t_k$ and then group all the approximate values that equal $t_k$ together. Then we have

$$\bar{s} = \frac{s_1 + s_2 + \cdots + s_n}{n} \approx \frac{(t_1 + \cdots + t_1) + (t_2 + \cdots + t_2) + \cdots + (t_m + \cdots + t_m)}{n}$$

$$= \frac{g_1 t_1 + g_2 t_2 + \cdots + g_m t_m}{n} = \frac{g_1}{n} t_1 + \frac{g_2}{n} t_2 + \cdots + \frac{g_m}{n} t_m$$

where $g_k$ is the number of observed values $s_j$ that lie between $t_{k-1}$ and $t_k$. As $n \to \infty$ one has

$$\frac{g_k}{n} \to \Pr\{t_{k-1} < T \le t_k\} = \int_{t_{k-1}}^{t_k} f(t)\,dt \approx f(t_k)\,\Delta t.$$

So as $n$ gets large it is not unreasonable to suppose that $\bar{s} \approx \sum_{k=1}^{m} t_k f(t_k)\,\Delta t$. If we let $m \to \infty$ then all the above approximations get better and $\sum_{k=1}^{m} t_k f(t_k)\,\Delta t \to \int_{-\infty}^{\infty} t\,f(t)\,dt$, which leads to (7).
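The argument above can be tried out on a concrete bounded density. The sketch below uses, as an assumed illustration, the uniform density $f(t) = 1/30$ on $0 \le t \le 30$ from Example 3. It compares the sum $\sum_k t_k f(t_k)\,\Delta t$ for increasing $m$ with the average of simulated observations. The function names, the seed, and the sample size are hypothetical choices.

```python
import random

def uniform_density(t):
    """Density of Example 3: 1/30 on [0, 30] and 0 elsewhere."""
    return 1 / 30 if 0 <= t <= 30 else 0.0

def riemann_mean(density, a, b, m):
    """Sum of t_k * f(t_k) * dt over the right endpoints t_k, k = 1, ..., m."""
    dt = (b - a) / m
    total = 0.0
    for k in range(1, m + 1):
        t_k = a + (b - a) * k / m  # right endpoint of the k-th subinterval
        total += t_k * density(t_k) * dt
    return total

# Average of simulated observations s_1, ..., s_n of the random variable.
rng = random.Random(1)
observations = [rng.uniform(0, 30) for _ in range(100_000)]
sample_mean = sum(observations) / len(observations)

for m in (10, 100, 1000):
    print(m, riemann_mean(uniform_density, 0, 30, m))
print("sample mean:", sample_mean)
```

As $m$ grows the sums approach 15, the value of $\int_0^{30} t/30\,dt$, and the sample mean lands near the same number.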

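For an exponential random variable we have

$$\mu = \int_0^{\infty} t\,\lambda e^{-\lambda t}\,dt = \left. -t e^{-\lambda t} \right|_0^{\infty} + \int_0^{\infty} e^{-\lambda t}\,dt = -0 + 0 - \left. \frac{e^{-\lambda t}}{\lambda} \right|_0^{\infty} = \frac{1}{\lambda}. \tag{8}$$

We used integration by parts with $u = t$ and $dv = \lambda e^{-\lambda t}\,dt$ so that $du = dt$ and $v = -e^{-\lambda t}$.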

In the example above where the time between arrivals of bank customers was an exponential random variable with $\lambda = 1/2$, the average time between arrivals is $1/\lambda = 2$ min.
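The value $1/\lambda$ for the mean can also be confirmed by integrating $t\,\lambda e^{-\lambda t}$ numerically. This is a rough sketch rather than a definitive method: the function name `exponential_mean`, the truncation of the integral at `upper`, and the midpoint rule are all assumptions made for illustration.

```python
import math

def exponential_mean(rate, upper=200.0, steps=200_000):
    """Midpoint Riemann sum for the integral of t * rate * exp(-rate * t)
    over [0, upper]; the tail beyond `upper` is assumed negligible."""
    width = upper / steps
    total = 0.0
    for k in range(steps):
        t = (k + 0.5) * width
        total += t * rate * math.exp(-rate * t)
    return total * width

# Bank example: rate 1/2 per minute, so the mean should be close to 2 minutes.
bank_mean = exponential_mean(0.5)
print(bank_mean)
```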

Problem 3.1.1. Taxicabs pass by at an average rate of 20 per hour. Assume the time between taxicabs is an exponential random variable. What is the probability that a taxicab will pass by in the next minute?
