Stability Analysis for VAR systems

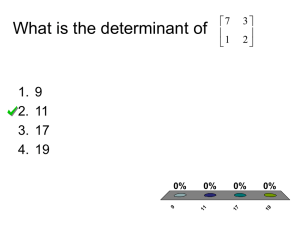

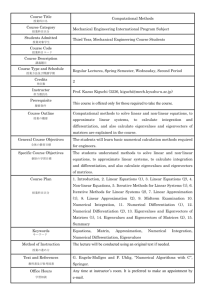

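For a set of $n$ time series variables $y_t = (y_{1t}, y_{2t}, \ldots, y_{nt})'$, a VAR model of order $p$ (VAR($p$)) can be written as:

$$y_t = A_1 y_{t-1} + A_2 y_{t-2} + \cdots + A_p y_{t-p} + u_t \qquad (1)$$

where the $A_i$'s are $(n \times n)$ coefficient matrices and $u_t = (u_{1t}, u_{2t}, \ldots, u_{nt})'$ is an unobservable i.i.d. zero-mean error term.

I. Stability of the Stationary VAR system (Glaister, Mathematical Methods for Economists)

The stability of a VAR can be examined by calculating the roots of:

$$(I_n - A_1 L - A_2 L^2 - \cdots)\, y_t = A(L)\, y_t$$

The characteristic polynomial is defined as:

$$\Pi(z) = I_n - A_1 z - A_2 z^2 - \cdots$$

The roots of $|\Pi(z)| = 0$ give the necessary information about the stationarity or nonstationarity of the process. The necessary and sufficient condition for stability is that all characteristic roots lie outside the unit circle. Then $\Pi = \Pi(1) = I_n - A_1 - \cdots - A_p$ is of full rank and all variables are stationary. In this section, we assume this is the case. Later we allow for less than full rank matrices (Johansen methodology).

Calculation of the eigenvalues and eigenvectors

Given an $(n \times n)$ square matrix $A$, we are looking for a scalar $\lambda$ and a vector $c \neq 0$ such that

$$Ac = \lambda c .$$

Then $\lambda$ is an eigenvalue (or characteristic value or latent root) of $A$. There will be up to $n$ eigenvalues, which will give up to $n$ linearly independent associated eigenvectors such that

$$Ac - \lambda I c = 0 \quad \text{or} \quad [A - \lambda I]\,c = 0 .$$

For there to be a nontrivial solution, the matrix $[A - \lambda I]$ must be singular. Then $\lambda$ must be such that $|A - \lambda I| = 0$.

Ex:

$$A = \begin{bmatrix} 3 & 8 & 1 \\ 0 & 4 & 3 \\ 0 & 3 & 4 \end{bmatrix}, \qquad |A - \lambda I| = \left| \begin{bmatrix} 3 & 8 & 1 \\ 0 & 4 & 3 \\ 0 & 3 & 4 \end{bmatrix} - \begin{bmatrix} \lambda & 0 & 0 \\ 0 & \lambda & 0 \\ 0 & 0 & \lambda \end{bmatrix} \right| = \begin{vmatrix} 3-\lambda & 8 & 1 \\ 0 & 4-\lambda & 3 \\ 0 & 3 & 4-\lambda \end{vmatrix} .$$

Expanding the determinant of this matrix gives the characteristic equation:

$$(3 - \lambda)(\lambda^2 - 8\lambda + 7) = 0 \;\Rightarrow\; \lambda_1 = 3,\; \lambda_2 = 7,\; \lambda_3 = 1 .$$

Reminder: for a characteristic equation of the type $a\lambda^2 + b\lambda + c = 0$,

$$\lambda_1, \lambda_2 = \frac{-b}{2a} \pm \frac{\sqrt{b^2 - 4ac}}{2a} .$$

Real roots (when $b^2 \geq 4ac$): $\lambda_1, \lambda_2 = \alpha \pm \beta$ with $\alpha = -b/2a$ and $\beta = \sqrt{b^2 - 4ac}/2a$.

Imaginary roots (when $b^2 < 4ac$): $\lambda_1, \lambda_2 = \alpha \pm \beta i$ with $\beta = \sqrt{4ac - b^2}/2a$ and $i = \sqrt{-1}$, where Modulus $= \sqrt{\alpha^2 + \beta^2}$.

Note: an eigenvector is only determined up to a scalar multiple. If $c$ is an eigenvector, then $\mu c$ is also an eigenvector, where $\mu$ is a nonzero scalar: $\mu(Ac) = \mu(\lambda c) \Rightarrow A(\mu c) = \lambda(\mu c)$.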
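The characteristic-equation calculation and the scaling note above can be checked numerically. The following is a minimal sketch using NumPy, assuming the example matrix has the entries 3, 8, 1 / 0, 4, 3 / 0, 3, 4 as read from the worked example; the scalar `mu` is an arbitrary illustrative value, not something from the notes.

```python
import numpy as np

# Example matrix from the text (entries as read from the worked example).
A = np.array([[3.0, 8.0, 1.0],
              [0.0, 4.0, 3.0],
              [0.0, 3.0, 4.0]])

# Eigenvalues solve |A - lambda*I| = 0; eig also returns eigenvectors as columns.
eigenvalues, eigenvectors = np.linalg.eig(A)
print(np.sort(eigenvalues.real))

# Each eigenvalue makes A - lambda*I singular (determinant numerically zero).
for lam in eigenvalues:
    assert abs(np.linalg.det(A - lam * np.eye(3))) < 1e-8

# Scaling: if c is an eigenvector, so is mu*c for any nonzero scalar mu.
mu = 4.0  # arbitrary illustrative scalar (assumption)
for lam, c in zip(eigenvalues, eigenvectors.T):
    assert np.allclose(A @ c, lam * c)
    assert np.allclose(A @ (mu * c), lam * (mu * c))
```

Note that `np.linalg.eig` normalizes each eigenvector to unit length, so its columns will generally be scalar multiples of eigenvectors obtained by hand with a different normalization.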
The associated eigenvectors are those that satisfy the equation $[A - \lambda I]c = 0$ for the three distinct values of the eigenvalues. The eigenvector associated with $\lambda_1 = 3$ is found from

$$\begin{bmatrix} 0 & 8 & 1 \\ 0 & 1 & 3 \\ 0 & 3 & 1 \end{bmatrix} \begin{bmatrix} c_{11} \\ c_{12} \\ c_{13} \end{bmatrix} = \begin{bmatrix} 0 \\ 0 \\ 0 \end{bmatrix} .$$

Notice that only columns 2 and 3 are linearly independent (rank = 2), so we can choose the first element of the $c$ vector arbitrarily. Set $c_{11} = 1$; the other two elements are then 0:

$$c_1 = \begin{bmatrix} c_{11} \\ c_{12} \\ c_{13} \end{bmatrix} = \begin{bmatrix} 1 \\ 0 \\ 0 \end{bmatrix} .$$

Similarly, the eigenvector associated with $\lambda_2 = 7$ is found from

$$\begin{bmatrix} -4 & 8 & 1 \\ 0 & -3 & 3 \\ 0 & 3 & -3 \end{bmatrix} \begin{bmatrix} c_{21} \\ c_{22} \\ c_{23} \end{bmatrix} = \begin{bmatrix} 0 \\ 0 \\ 0 \end{bmatrix} .$$

Notice that $\mathrm{rk}(A - 7I) = 2$ again, because this time the last two rows are linearly dependent. Thus only the upper-left $2 \times 2$ matrix on the LHS is nonsingular. We can delete the last row and move $c_{23}$ multiplied by the last column to the RHS. The first two elements are then expressed in terms of the last element. We can fix $c_{23}$ arbitrarily and solve for the two others: assume $c_{23} = 4$. Then $c_2 = (9, 4, 4)'$ is an eigenvector corresponding to the eigenvalue $\lambda_2 = 7$. We can find similarly the last eigenvector, corresponding to $\lambda_3 = 1$, to be $c_3 = (7, -2, 2)'$.

Jordan Canonical Form

Form a new matrix $C$ whose columns are the three eigenvectors:

$$C = \begin{bmatrix} 1 & 9 & 7 \\ 0 & 4 & -2 \\ 0 & 4 & 2 \end{bmatrix} .$$

You can calculate the matrix product $C^{-1}AC$ to find that

$$C^{-1} A C = \begin{bmatrix} 3 & 0 & 0 \\ 0 & 7 & 0 \\ 0 & 0 & 1 \end{bmatrix} = \begin{bmatrix} \lambda_1 & 0 & 0 \\ 0 & \lambda_2 & 0 \\ 0 & 0 & \lambda_3 \end{bmatrix} .$$

Thus:

(i) For any square matrix $A$ with distinct eigenvalues, there is a nonsingular matrix $C$ such that $C^{-1}AC$ is diagonal with the eigenvalues on the diagonal.

(ii) The eigenvectors corresponding to distinct eigenvalues of a symmetric matrix are orthogonal (and hence linearly independent).

II. Stability Conditions for Stationary and Nonstationary VAR Systems (Johnston and DiNardo, Ch 9 + Appendix)

To discuss these conditions we start with simple models and generalize. We will see:

- VAR(1) with 2 variables
- VAR(2) with k variables (ex: VAR(2) with 2 variables)
- VAR(p) with k variables

1. VAR(1) with two variables (p=1, k=2).
$$y_{1t} = b_{10} + a_{11} y_{1,t-1} + a_{12} y_{2,t-1} + \varepsilon_{1t} \qquad (1)$$

$$y_{2t} = b_{20} + a_{21} y_{1,t-1} + a_{22} y_{2,t-1} + \varepsilon_{2t} \qquad (2)$$

or:

$$y_t = b + A y_{t-1} + \varepsilon_t \qquad (3)$$

which can be written with the lag operator as

$$(I - AL)\, y_t = b + \varepsilon_t \qquad (4)$$

Each variable is expressed as a linear combination of lagged values of itself and of all other variables (plus intercepts, dummies, time trends). The dynamics of the system depend on the properties of the $A$ matrix.

The error term is a vector white noise process with $E(\varepsilon_t) = 0$ and

$$E(\varepsilon_t \varepsilon_s') = \begin{cases} \Omega & s = t \\ 0 & s \neq t \end{cases}$$

where the covariance matrix $\Omega$ is assumed to be positive definite: the errors are serially uncorrelated but can be contemporaneously correlated.

Solution to (4):

(i) Omit the error term: $y_t = b + A y_{t-1}$. The simplest solution is the constant one, $y_t = y_{t-1} = \cdots = \bar{y}$. Then

$$\bar{y} = \Pi^{-1} b \quad \text{if } \Pi = (I - A) \text{ is nonsingular.} \qquad (5)$$

For the homogeneous equation $y_t = A y_{t-1}$, try $y_t = d\lambda^t$ as a solution. Substituting it in gives $d\lambda^t = A d \lambda^{t-1}$, that is,

$$(\lambda I - A)\, d = 0 .$$

Apart from the trivial solution $d = 0$, a nontrivial solution requires the determinant to be zero: $|\lambda I - A| = 0$. Solve this for the eigenvalues ($\lambda$'s).

(ii) Substitute the eigenvalues into the homogeneous system to get the corresponding eigenvectors ($c$'s).

(iii) Adding the solution of the homogeneous equation to the particular solution $\bar{y}$, we obtain the complete solution (in matrix form):

$$y_t = c_1 \lambda_1^t + c_2 \lambda_2^t + \bar{y} \qquad (6)$$

$y_t \to \bar{y}$ (the LR value) as $t$ rises if the two eigenvalues have modulus < 1.

We can rewrite $(I - AL)\, y_t = b$ in (4) as a polynomial to see the stability conditions in terms of the eigenvalues: $B(L)\, y_t = b$ where $B(L) = I - AL$ and $|B(L)| = (1 - \lambda_1 L)(1 - \lambda_2 L)$.

The stability condition:

(i) Modulus of all $\lambda$'s < 1: $\Pi$ is nonsingular, the determinant is not 0, and the system is stationary. In (6), $y$ converges to $\bar{y}$.

(ii) Modulus of some $\lambda_i$ > 1: $\Pi$ is nonsingular, but the system is explosive and there is no convergence. This is because one or more of the $\lambda_i^t$'s grows without bound as $t$ increases, and so does $y$ from (6). This is not a typical process observed in macro/finance series, therefore we do not consider this case.

(iii) Modulus of some $\lambda_i$ = 1: unit root, $\Pi$ is singular, the determinant is 0, and $y$ is nonstationary. We need to look into the VECM specification.
Many macro/finance models fall into this category.

(iv) Modulus of $\lambda_1 = \lambda_2 = 1$: I(2) variables, VAR is I(1).

In general $A$ is not symmetric. Look for cointegrating vectors.

Relation between VAR variables and eigenvalues

Define the eigenvalues and the corresponding eigenvectors of the matrix $A$ as:

$$\Lambda = \begin{bmatrix} \lambda_1 & 0 \\ 0 & \lambda_2 \end{bmatrix} \quad \text{and} \quad C = \begin{bmatrix} c_{11} & c_{12} \\ c_{21} & c_{22} \end{bmatrix} .$$

If the eigenvalues are distinct then the eigenvectors are linearly independent, and $C$ is nonsingular, so that $C^{-1}AC = \Lambda$ and $A = C\Lambda C^{-1}$.

Theorem: (i) For any square matrix $A$ with distinct eigenvalues, there is a nonsingular matrix $C$ such that $C^{-1}AC$ is diagonal with the eigenvalues on the diagonal. (ii) The eigenvectors corresponding to distinct eigenvalues of a symmetric matrix are orthogonal (and hence linearly independent).

Define a new vector of variables $w$ such that $y_t = C w_t$ or $w_t = C^{-1} y_t$: each $y$ is a linear combination of the $w$'s (and each $w$ is a linear combination of the $y$'s). Multiply (3), $y_t = b + A y_{t-1} + \varepsilon_t$, by $C^{-1}$ and use $C^{-1}A = \Lambda C^{-1}$:

$$C^{-1} y_t = C^{-1} b + \Lambda C^{-1} y_{t-1} + C^{-1} \varepsilon_t$$

$$w_t = b^* + \Lambda w_{t-1} + e_t, \qquad b^* = C^{-1} b, \quad \Lambda = C^{-1} A C, \quad e_t = C^{-1} \varepsilon_t ,$$

or:

$$\begin{aligned} w_{1t} &= b_1^* + \lambda_1 w_{1,t-1} + e_{1t} \\ w_{2t} &= b_2^* + \lambda_2 w_{2,t-1} + e_{2t} \end{aligned} \qquad (7)$$

(i) $|\lambda_i| < 1$ for $i = 1, 2$

Both eigenvalues have modulus < 1. Each $w$ is therefore I(0), and since the $y$'s are linear combinations of the $w$'s, each $y$ is I(0). You can therefore apply the standard inference procedures and estimate each equation separately. As we saw above, $\Pi = I - A$ is nonsingular, it is of full rank (= 2 here), and a unique static equilibrium exists: $\bar{y} = (I - A)^{-1} b = \Pi^{-1} b$. The values of $\lambda$ are such that any shock dies out and deviations from equilibrium are transitory.

(ii) $|\lambda_i| > 1$ for $i = 1$ or $2$

One of the eigenvalues has modulus > 1. Since each $y$ is a linear combination of both $w$'s, $y$ is unbounded and the process is explosive.

(iii) $\lambda_1 = 1$ and $|\lambda_2| < 1$

Now $w_1$ is a random walk with drift, or I(1), and $w_2$ is I(0). Each $y$ is I(1) since each $y$ is a linear combination of both $w$'s, therefore the VAR is nonstationary. Is there a linear combination of $y_{1t}$ and $y_{2t}$ that removes the stochastic trend and is I(0), i.e. are the two variables cointegrated?
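Consider again

$$w_t = C^{-1} y_t = \begin{bmatrix} c_{11}^* y_{1t} + c_{12}^* y_{2t} \\ c_{21}^* y_{1t} + c_{22}^* y_{2t} \end{bmatrix}$$

where the $c^*$'s represent the coefficients in the $C^{-1}$ matrix. We know that $w_2$ is I(0), thus $[c_{21}^* \;\; c_{22}^*]$ is a cointegrating vector. Look for a relation between the CI vector $[c_{21}^* \;\; c_{22}^*]$ and the $\Pi$ matrix such that $\Pi = [\,\cdot\,]\,[c_{21}^* \;\; c_{22}^*]$.

Reparameterize equation (3) to give:

$$\Delta y_t = b - \Pi y_{t-1} + \varepsilon_t \quad \text{where } \Pi = I - A . \qquad (8)$$

The eigenvalues of $\Pi$ are the complements of the eigenvalues of $A$: $\mu_i = 1 - \lambda_i$. Since $\lambda_1 = 1$, the eigenvalues of $\Pi$ are 0 and $1 - \lambda_2$. Thus, it is a singular matrix with rank 1.

Let us decompose $\Pi$. Since $\Pi = I - A$ and $A = C \Lambda C^{-1}$, we can write $\Pi = I - C\Lambda C^{-1} = (CI - C\Lambda)C^{-1} = C(I - \Lambda)C^{-1}$. Thus:

$$\Pi = C \begin{bmatrix} 0 & 0 \\ 0 & 1-\lambda_2 \end{bmatrix} C^{-1} = \begin{bmatrix} c_{11} & c_{12} \\ c_{21} & c_{22} \end{bmatrix} \begin{bmatrix} 0 & 0 \\ 0 & 1-\lambda_2 \end{bmatrix} \begin{bmatrix} c_{11}^* & c_{12}^* \\ c_{21}^* & c_{22}^* \end{bmatrix} = \begin{bmatrix} c_{12}(1-\lambda_2) \\ c_{22}(1-\lambda_2) \end{bmatrix} \begin{bmatrix} c_{21}^* & c_{22}^* \end{bmatrix} = \alpha\beta' \qquad (9)$$

So $\Pi$, which has rank 1, is factorized into the product of a column vector and a row vector, called an outer product:

- The row vector $= \beta' = [c_{21}^* \;\; c_{22}^*] =$ the cointegrating vector.
- The column vector $= \alpha = [c_{12}(1-\lambda_2) \;\; c_{22}(1-\lambda_2)]' =$ the loading matrix = the weights with which the CI vector enters into each equation of the VAR.

Note: compare (9) to the case where $\Pi$ is full rank with $1 - \lambda_1 \neq 0$:

$$\Pi = C \begin{bmatrix} 1-\lambda_1 & 0 \\ 0 & 1-\lambda_2 \end{bmatrix} C^{-1} = \begin{bmatrix} c_{11}(1-\lambda_1) & c_{12}(1-\lambda_2) \\ c_{21}(1-\lambda_1) & c_{22}(1-\lambda_2) \end{bmatrix} \begin{bmatrix} c_{11}^* & c_{12}^* \\ c_{21}^* & c_{22}^* \end{bmatrix} .$$

You can see why $\Pi = \alpha\beta'$ is said to be of reduced rank.

Combining (8) and (9) we get the vector error correction model (VECM) of the VAR:

$$\begin{aligned} \Delta y_{1t} &= b_1 - c_{12}(1-\lambda_2)(c_{21}^* y_{1,t-1} + c_{22}^* y_{2,t-1}) + \varepsilon_{1t} \\ \Delta y_{2t} &= b_2 - c_{22}(1-\lambda_2)(c_{21}^* y_{1,t-1} + c_{22}^* y_{2,t-1}) + \varepsilon_{2t} \end{aligned} \qquad (10)$$

or, equivalently,

$$\begin{aligned} \Delta y_{1t} &= b_1 - c_{12}(1-\lambda_2)\, w_{2,t-1} + \varepsilon_{1t} \\ \Delta y_{2t} &= b_2 - c_{22}(1-\lambda_2)\, w_{2,t-1} + \varepsilon_{2t} \end{aligned}$$

All variables here are I(0): the $y$'s in first differences and $w_2$. The $w$ (EC term) measures the extent to which the $y$'s deviate from their equilibrium LR values. Although all the variables are I(0), the standard inference procedures are not valid. (This is similar to the univariate case where, in order to test whether a series is I(1), we have to use an ADF test rather than the usual t-test on the AR coefficient.) See the example below.

(iv) Repeated unitary eigenvalues: $\lambda_1 = \lambda_2 = 1$

We can no longer have a diagonal eigenvalue matrix as before.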
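But it is possible to find a nonsingular matrix $P$ such that $P^{-1}AP = J$ and $A = PJP^{-1}$, where

$$J = \begin{bmatrix} 1 & 1 \\ 0 & 1 \end{bmatrix} \quad \text{(the Jordan matrix).}$$

The problem with this case is that although $\Pi$ is still of rank 1, the transformation of the $y$'s into $w$'s leads to I(2) variables; the cointegrating vector gives a linear combination of I(2) variables that is I(1) and not I(0). Thus $y$ is CI(2,1), the variables in the VAR are all I(1), but the inference procedures are nonstandard.

Example of a case with $\lambda_1 = 1$ and $|\lambda_2| < 1$

Find the matrices $\alpha$ and $\beta$ from a VAR(1) with k=2:

$$\begin{aligned} y_{1t} &= 1.2\, y_{1,t-1} - 0.2\, y_{2,t-1} + e_{1t} \\ y_{2t} &= 0.6\, y_{1,t-1} + 0.4\, y_{2,t-1} + e_{2t} \end{aligned} \qquad (11)$$

Reparametrizing the VAR into a VECM gives us:

$$\begin{aligned} \Delta y_{1t} &= 0.2\, y_{1,t-1} - 0.2\, y_{2,t-1} + e_{1t} \\ \Delta y_{2t} &= 0.6\, y_{1,t-1} - 0.6\, y_{2,t-1} + e_{2t} \end{aligned}$$

in matrix form:

$$\begin{bmatrix} \Delta y_{1t} \\ \Delta y_{2t} \end{bmatrix} = \begin{bmatrix} 0.2 & -0.2 \\ 0.6 & -0.6 \end{bmatrix} \begin{bmatrix} y_{1,t-1} \\ y_{2,t-1} \end{bmatrix} + \begin{bmatrix} e_{1t} \\ e_{2t} \end{bmatrix} \qquad (12)$$

or: $\Delta Y_t = -\Pi Y_{t-1} + u_t$.

But we cannot infer the loading matrix $\alpha$ and the cointegrating matrix $\beta$ separately from this. To find $\alpha$ and $\beta$ separately, we need to calculate the eigenvector matrix. Get the eigenvalues from the solution to $|A - \lambda I| = 0$:

$$|A - \lambda I| = \begin{vmatrix} a_{11} - \lambda & a_{12} \\ a_{21} & a_{22} - \lambda \end{vmatrix} = \begin{vmatrix} 1.2 - \lambda & -0.2 \\ 0.6 & 0.4 - \lambda \end{vmatrix} = 0 \;\Rightarrow\; \lambda_1 = 1,\; \lambda_2 = 0.6 .$$

Eigenvector corresponding to $\lambda_1 = 1$:

$$\begin{bmatrix} 1.2 - 1 & -0.2 \\ 0.6 & 0.4 - 1 \end{bmatrix} \begin{bmatrix} c_{11} \\ c_{21} \end{bmatrix} = \begin{bmatrix} 0 \\ 0 \end{bmatrix} \;\Rightarrow\; \begin{bmatrix} 0.2 & -0.2 \\ 0.6 & -0.6 \end{bmatrix} \begin{bmatrix} c_{11} \\ c_{21} \end{bmatrix} = \begin{bmatrix} 0 \\ 0 \end{bmatrix}$$

There is linear dependency, so set $c_{11} = 1 \Rightarrow c_1 = [1 \;\; 1]'$.

Eigenvector corresponding to $\lambda_2 = 0.6$:

$$\begin{bmatrix} 0.6 & -0.2 \\ 0.6 & -0.2 \end{bmatrix} \begin{bmatrix} c_{12} \\ c_{22} \end{bmatrix} = \begin{bmatrix} 0 \\ 0 \end{bmatrix}$$

There is linear dependency, so set $c_{12} = 1 \Rightarrow c_2 = [1 \;\; 3]'$.

The eigenvector matrix and its inverse are:

$$C = \begin{bmatrix} 1 & 1 \\ 1 & 3 \end{bmatrix}, \qquad C^{-1} = \begin{bmatrix} 1.5 & -0.5 \\ -0.5 & 0.5 \end{bmatrix} .$$

Now we can write the VAR as a VECM by decomposing $\Pi$:

$$\Delta Y_t = -\Pi Y_{t-1} + u_t = -C \begin{bmatrix} 0 & 0 \\ 0 & 1 - \lambda_2 \end{bmatrix} C^{-1} Y_{t-1} + u_t$$

$$\Delta Y_t = -\begin{bmatrix} 0.4 \\ 1.2 \end{bmatrix} \begin{bmatrix} -0.5 & 0.5 \end{bmatrix} \begin{bmatrix} y_{1,t-1} \\ y_{2,t-1} \end{bmatrix} + u_t$$

This is the same expression as in (12), but now we have both the loading and the cointegrating matrices:

$$\alpha = \begin{bmatrix} 0.4 \\ 1.2 \end{bmatrix} \quad \text{and} \quad \beta' = \begin{bmatrix} -0.5 & 0.5 \end{bmatrix} .$$

2. VAR(2) with k variables:

$$y_t = b + A_1 y_{t-1} + A_2 y_{t-2} + \varepsilon_t \qquad (13)$$

Note: you can also add any deterministic terms such as trends or breaks by specifying the model as $y_t = A_1 y_{t-1} + A_2 y_{t-2} + \Phi D_t + \varepsilon_t$.

Set the error term = 0 and examine the properties of the system. We still have the LR solution (or the particular solution) as in (5), $\bar{y} = \Pi^{-1} b$, but now $\Pi = I - A_1 - A_2$. $\bar{y}$ exists if $\Pi^{-1}$ exists, i.e. if $|\Pi|$ is nonzero.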
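To see this, look at the eigenvalues. We again try the same solution for the homogeneous equation, $y_t = c\lambda^t$, and substitute it in to get the characteristic equation

$$|\lambda^2 I - \lambda A_1 - A_2| = 0 .$$

The number of roots = pk, where p = order of the VAR and k = number of variables. Here we will have 2k roots. If all eigenvalues have modulus < 1 then $\Pi$ is nonsingular and the solution

$$y_t = \sum_{i=1}^{2k} c_i \lambda_i^t + \bar{y}$$

will converge to $\bar{y}$ as $t$ grows. The analysis with respect to the modulus of the roots (<1, =1, >1) is the same as in the VAR(1) case.

If the process is stationary then we can invert the VAR model and express $y$ as a function of present and past shocks and of the exogenous (deterministic) components. This gives the impulse responses.

Ex: Calculate the roots of a 2-dimensional VAR(2), n=p=2, and find the effect of a shock on a dependent variable (Juselius Ch. 3).

The characteristic function $\Pi(z) = I - A_1 z - A_2 z^2$, where $z = \lambda^{-1}$ and $\pi_{i,jk}$ denotes element $(j,k)$ of $A_i$, is

$$\Pi(z) = I - \begin{bmatrix} \pi_{1,11} & \pi_{1,12} \\ \pi_{1,21} & \pi_{1,22} \end{bmatrix} z - \begin{bmatrix} \pi_{2,11} & \pi_{2,12} \\ \pi_{2,21} & \pi_{2,22} \end{bmatrix} z^2 = I - \begin{bmatrix} \pi_{1,11} z + \pi_{2,11} z^2 & \pi_{1,12} z + \pi_{2,12} z^2 \\ \pi_{1,21} z + \pi_{2,21} z^2 & \pi_{1,22} z + \pi_{2,22} z^2 \end{bmatrix}$$

$$= \begin{bmatrix} 1 - \pi_{1,11} z - \pi_{2,11} z^2 & -\pi_{1,12} z - \pi_{2,12} z^2 \\ -\pi_{1,21} z - \pi_{2,21} z^2 & 1 - \pi_{1,22} z - \pi_{2,22} z^2 \end{bmatrix}$$

Therefore

$$|\Pi(z)| = (1 - \pi_{1,11} z - \pi_{2,11} z^2)(1 - \pi_{1,22} z - \pi_{2,22} z^2) - (\pi_{1,12} z + \pi_{2,12} z^2)(\pi_{1,21} z + \pi_{2,21} z^2) .$$

Regrouping similar terms:

$$|\Pi(z)| = 1 - a_1 z - a_2 z^2 - a_3 z^3 - a_4 z^4 = (1 - \lambda_1 z)(1 - \lambda_2 z)(1 - \lambda_3 z)(1 - \lambda_4 z) .$$

The determinant is a 4th order polynomial in $z$ giving 4 characteristic roots: $z_1 = 1/\lambda_1$; $z_2 = 1/\lambda_2$; $z_3 = 1/\lambda_3$; $z_4 = 1/\lambda_4$.

Effects of a shock (or structural change dummy) on a dependent variable:

If $\Pi(L)$ is invertible (all eigenvalue roots $\lambda_i$ inside the unit circle), we can write $y_t = \Pi^{-1}(L)\,\varepsilon_t$. We can then calculate the effect of a shock $\varepsilon_{jt}$ on $y_{it}$ from

$$y_t = \frac{\Pi^a(L)}{|\Pi(L)|}\, \varepsilon_t = \frac{\Pi^a(L)}{(1 - \lambda_1 L)(1 - \lambda_2 L)(1 - \lambda_3 L)(1 - \lambda_4 L)}\, \varepsilon_t \quad \text{for } t = 1, \ldots, T,$$

where $\Pi^a(L)$ is the adjoint matrix of $\Pi(L)$. We are assuming that all roots $\lambda_i$ have modulus less than 1. The characteristic roots give information about the dynamic behavior of the process. To see how the shock is propagated, expand the last component:

$$(1 - \lambda_1 L)^{-1} \varepsilon_{jt} = (1 + \lambda_1 L + \lambda_1^2 L^2 + \cdots)\, \varepsilon_{jt} .$$

You will have to do the same thing with each root. Thus, each shock will affect current and future values of $y_i$.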
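The geometric expansion of a single factor can be sketched numerically. This minimal example assumes a hypothetical real root `lam` with modulus below one (an illustrative value, not taken from the derivation) and compares the response of $x_t = \lambda x_{t-1} + \varepsilon_t$ to a one-time unit shock with the weights $1, \lambda, \lambda^2, \ldots$ from the expansion.

```python
# Hypothetical root with modulus below one (illustrative assumption).
lam = 0.6
horizon = 10

# Response of x_t = lam * x_{t-1} + e_t to a unit shock at h = 0, by recursion.
response = []
x = 0.0
for h in range(horizon + 1):
    shock = 1.0 if h == 0 else 0.0
    x = lam * x + shock
    response.append(x)

# Weights from the expansion (1 - lam*L)^{-1} = 1 + lam*L + lam^2*L^2 + ...
weights = [lam ** h for h in range(horizon + 1)]

for h, (r, w) in enumerate(zip(response, weights)):
    print(h, round(r, 6), round(w, 6))
```

The two columns coincide, and the response shrinks geometrically because the root has modulus below one. Setting `lam = 1.0` instead would leave the response at 1 for every horizon, which is the permanent-shock case.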
The persistence of the shock depends on the magnitude of the roots. The larger they are, the more persistent the shocks will be.

- If the roots $\lambda_i$ are real with modulus < 1, the shock will die out exponentially.
- If one or more roots $\lambda_i$ are complex (with modulus < 1), then a shock will be cyclical but exponentially declining.
- If one or more roots $\lambda_i$ lie on the unit circle, the shock will be permanent and $y_i$ will show nonstationary behavior. The VAR is not invertible, and we then need to look into the VECM.

We can also calculate the roots by reformulating the VAR(p) into the companion matrix VAR(1) form and solving for the eigenvalues.

Alternative approach: companion matrix.

A VAR(p) can be transformed into a VAR(1). Consider the VAR(2) model again. We can rewrite it as:

$$\begin{aligned} y_t &= A_1 y_{t-1} + A_2 y_{t-2} + u_t \\ y_{t-1} &= y_{t-1} \end{aligned}$$

In matrix form:

$$\begin{bmatrix} y_t \\ y_{t-1} \end{bmatrix} = \begin{bmatrix} A_1 & A_2 \\ I & 0 \end{bmatrix} \begin{bmatrix} y_{t-1} \\ y_{t-2} \end{bmatrix} + \begin{bmatrix} u_t \\ 0 \end{bmatrix}$$

where $I$ is the identity matrix of the same dimension as $A_1$. Calculate the eigenvalues $\lambda_i$ from the coefficient matrix:

$$\left| \begin{bmatrix} A_1 & A_2 \\ I & 0 \end{bmatrix} - \lambda \begin{bmatrix} I & 0 \\ 0 & I \end{bmatrix} \right| = \begin{vmatrix} A_1 - \lambda I & A_2 \\ I & -\lambda I \end{vmatrix} = |\lambda^2 I - \lambda A_1 - A_2| = 0 .$$

Now we get the roots $\lambda_i$ directly instead of the $z$'s, which were the inverses of the roots, obtained by solving the characteristic polynomial. Johansen and Juselius refer to the $\lambda$'s as eigenvalue roots and to the $z$'s as characteristic roots. The companion matrix of a VAR(p) with k variables has pk eigenvalues (four for a bivariate VAR(2)). If the roots of the characteristic polynomial are outside the unit circle, then the eigenvalues of the companion matrix are inside the unit circle and the system is stable.

To recap:

- The solution to $|I - zA| = 0$ gives the stationary roots (characteristic roots) outside the unit circle.
- The solution to $|\lambda I - A| = 0$ gives the stationary roots (eigenvalues) inside the unit circle.
- If the roots of $|\Pi(z)| = 0$ are all outside the unit circle, or equivalently the eigenvalues of the companion matrix are all inside the unit circle, the process is stationary.
- If one or more of the roots of $|\Pi(z)| = 0$, or of the eigenvalues of the companion matrix, are on the unit circle, then the process is nonstationary.
- If one or more roots of $|\Pi(z)| = 0$ lie inside the unit circle, or equivalently one or more eigenvalues of the companion matrix lie outside the unit circle, the process is explosive.
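The recap can be illustrated with a short numerical sketch of the companion-matrix approach. The coefficient matrices `A1` and `A2` below are hypothetical values chosen only for illustration (they do not come from the notes), and NumPy is assumed to be available. The code builds the companion matrix of a bivariate VAR(2), checks the moduli of its eigenvalues, and confirms that each $z = 1/\lambda$ is a root of $|I - A_1 z - A_2 z^2| = 0$.

```python
import numpy as np

# Hypothetical VAR(2) coefficient matrices for k = 2 variables (illustrative only).
A1 = np.array([[0.5, 0.1],
               [0.2, 0.3]])
A2 = np.array([[0.2, 0.0],
               [0.1, 0.1]])
k = A1.shape[0]

# Companion (VAR(1)) form of the VAR(2): [[A1, A2], [I, 0]].
companion = np.block([[A1, A2],
                      [np.eye(k), np.zeros((k, k))]])

# Eigenvalues of the companion matrix; stationarity requires all moduli < 1.
eigenvalues = np.linalg.eigvals(companion)
moduli = np.abs(eigenvalues)
is_stable = bool(np.all(moduli < 1.0))

# Each z = 1/lambda solves |I - A1 z - A2 z^2| = 0 (a root outside the unit circle).
for lam in eigenvalues:
    z = 1.0 / lam
    assert abs(np.linalg.det(np.eye(k) - A1 * z - A2 * z**2)) < 1e-8

print(moduli, is_stable)
```

With p = 2 and k = 2 the companion matrix is 4 x 4, so there are four eigenvalues. Working with the companion matrix avoids expanding the determinant polynomial by hand, which becomes tedious as p and k grow.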