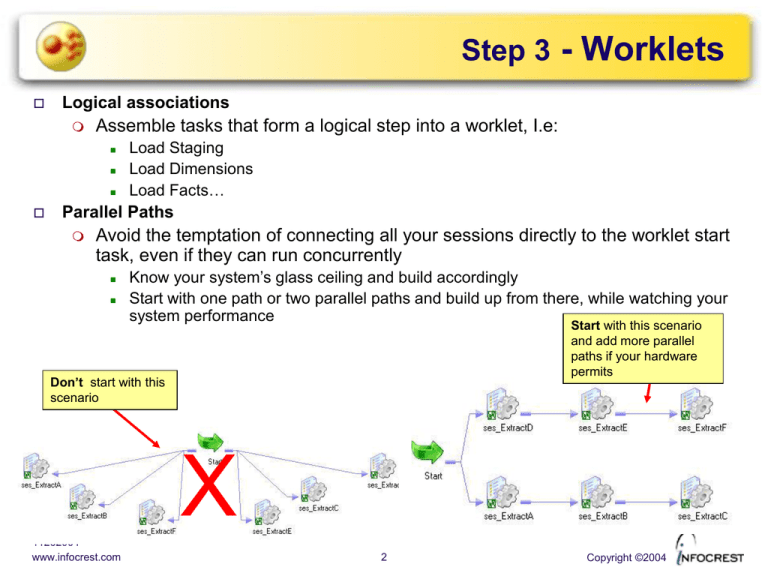

Step 3 - Worklets

Logical associations

Assemble tasks that form a logical step into a worklet, e.g.:

Load Staging

Load Dimensions

Load Facts…

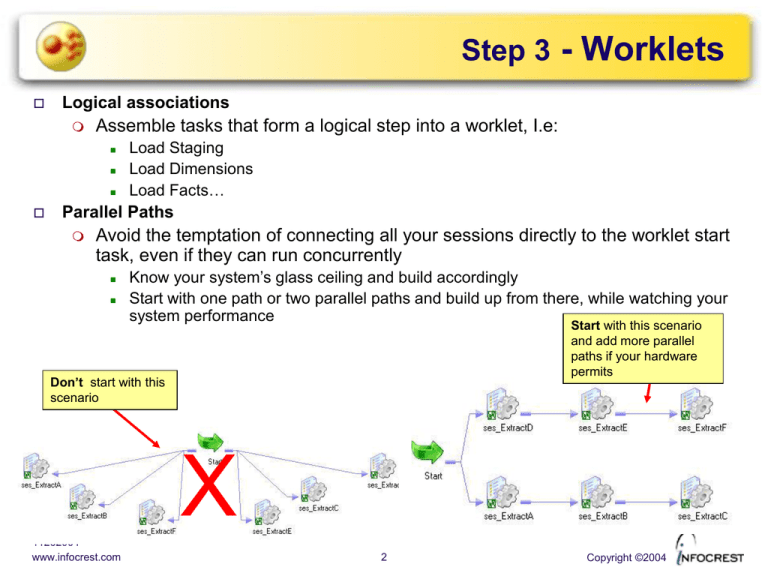

Parallel Paths

Avoid the temptation of connecting all your sessions directly to the worklet start task, even if they can run concurrently

Know your system’s glass ceiling and build accordingly

Start with one path or two parallel paths and build up from there, while watching your system performance

Start with this scenario and add more parallel paths if your hardware permits

Don’t start with this scenario

11262004

www.infocrest.com

2

Copyright ©2004

Step 3 - Worklets

Usability

Build your worklets as restartable units

Nest worklets

For groups of interdependent sessions

For sessions that must be executed in a prescribed order

For instance, a session doing a batch delete that must precede a session doing the batch insert

Variables

A worklet-level variable can be set to the value of a workflow-level variable

A workflow-level variable cannot be set to the value of a worklet-level variable

Worklet task, Parameters: assign the value of a workflow variable to a worklet variable


Step 4 - Workflow

Flow

Join your worklets to form the master workflow

Link worklets in series when

Worklets already contain parallel paths

Worklets must be executed in sequence

Functionality

Add workflow level functionality

Timed notifications

Success email

Suspend on error email

Modify default links between worklets


Set links to return false on error


Step 4 - Workflow

Error handling

Suspend on Error

Stops the workflow if a task errors

Only stops the tasks in the same execution path; other paths still run

Can send an email on suspension

Restart workflow from the Workflow monitor after error fix

The task that suspended the workflow is restarted from the top, unless you specified recovery for the failed session. In that case you can use ‘Recover Workflow From Task’

Works well with fully restartable mappings

Email task must be reusable. Only one email is sent per suspension.


Step 4 - Workflow

Error handling

Using tasks properties and link conditions

Link condition between a failed task and the next task must evaluate to FALSE if you don’t want the workflow to continue

If a link evaluates to FALSE in a Workflow with multiple execution branches, only the branch affected by the error is stopped

‘Fail parent if this task fails’ is set

PrevTaskStatus=SUCCEEDED

Stop the execution of the top branch if the first worklet fails and mark the workflow as failed when the bottom branch completes
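The behavior described on this slide can be illustrated with a small simulation. This is only a sketch in Python, not Informatica code: the task names, the status strings and the `run_branch` / `run_workflow` helpers are hypothetical, and every link is assumed to carry a `PrevTaskStatus=SUCCEEDED` condition.

```python
from typing import Dict, List, Set, Tuple

SUCCEEDED, FAILED, NOT_RUN = "SUCCEEDED", "FAILED", "NOT_RUN"


def run_branch(tasks: List[str], outcome: Dict[str, bool]) -> Dict[str, str]:
    """Run tasks in series; each link requires the previous task to succeed."""
    status: Dict[str, str] = {}
    prev_ok = True  # the link leaving the Start task is always true
    for task in tasks:
        if not prev_ok:
            status[task] = NOT_RUN  # link evaluated to FALSE: branch stops here
            continue
        status[task] = SUCCEEDED if outcome[task] else FAILED
        prev_ok = outcome[task]
    return status


def run_workflow(
    branches: List[List[str]],
    outcome: Dict[str, bool],
    fail_parent: Set[str],
) -> Tuple[Dict[str, str], str]:
    """Run every branch independently, then derive the workflow status."""
    status: Dict[str, str] = {}
    for tasks in branches:  # a failure in one branch does not stop the others
        status.update(run_branch(tasks, outcome))
    # The workflow is only marked failed if a failed task has 'fail parent' set.
    failed = any(s == FAILED and t in fail_parent for t, s in status.items())
    return status, (FAILED if failed else SUCCEEDED)
```

With two branches and a failing first worklet in the top branch, the rest of the top branch is skipped, the bottom branch still completes, and the workflow ends up marked as failed.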


Step 4 - Workflow

Error handling

Using link conditions and control tasks

Use a control task if you want to stop or abort the entire Workflow or an entire Worklet.

As with session properties, setting the Control task to Fail Parent… only marks the enclosing object as failed but does not stop or abort it.

PrevTaskStatus=SUCCEEDED

Set to Stop top-level workflow

PrevTaskStatus=FAILED

Stop the execution of both branches, but set the final status of the workflow to stopped, not failed


Step 5 - Triggers

Are the sources available?

You will probably need to wait for some kind of triggering event before you can start the workflow

Event Wait

This task is the easiest way to implement triggers

Trigger files must be directed to the Informatica server machine

Removes the trigger file if checked

If you want to archive trigger files instead of deleting them, clear the ‘Delete Filewatch file’ property and add a command task that archives the file
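To make the wait-then-archive idea concrete, here is a minimal sketch in Python of what the Event Wait task and the follow-up command task do together. It is an illustration only: the function names, the polling interval and the timestamp suffix are assumptions, and in a real workflow the archiving would be a shell command run by the command task.

```python
import shutil
import time
from pathlib import Path


def wait_for_trigger(trigger: Path, poll_seconds: float = 1.0,
                     timeout: float = 60.0) -> bool:
    """Poll until the trigger file exists or the timeout expires."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        if trigger.exists():
            return True
        time.sleep(poll_seconds)
    return trigger.exists()


def archive_trigger(trigger: Path, archive_dir: Path) -> Path:
    """Move the trigger file to an archive folder instead of deleting it."""
    archive_dir.mkdir(parents=True, exist_ok=True)
    stamp = time.strftime("%Y%m%d_%H%M%S")  # keeps one copy per run
    target = archive_dir / f"{trigger.name}.{stamp}"
    shutil.move(str(trigger), str(target))
    return target
```

Moving the file also clears the trigger, so the next run waits for a fresh file.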


Step 5 - Triggers

Waiting for Multiple Source Systems

One workflow branch for each system, each with an event wait task

Re-join the branches at a Decision task or Event raise task (optional but cleaner)

Make sure the task where all branches rejoin has the ‘Treat links as’ property set to ‘AND’ (default)
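The difference between the two ‘Treat links as’ settings can be sketched in a few lines of Python. The `can_start` helper and the system names are hypothetical; the point is only that ‘AND’ waits for every source system while ‘OR’ would start as soon as one trigger arrives.

```python
from typing import Dict


def can_start(link_results: Dict[str, bool], treat_links_as: str = "AND") -> bool:
    """Decide whether the task where the branches rejoin may run."""
    if treat_links_as == "AND":
        return all(link_results.values())  # every incoming link must be true
    if treat_links_as == "OR":
        return any(link_results.values())  # one true link is enough
    raise ValueError(f"unknown setting: {treat_links_as!r}")
```

For example, with triggers received from two of three systems, `can_start` returns False under ‘AND’ and True under ‘OR’.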


Step 5 - Scheduler

Availability

Reusable, as a special object

Non-reusable, under Workflows -> Edit Scheduler


Step 5 - Scheduler

Basic Properties

Runs every 15 minutes, starting 3/7/03 15:21 and ending 3/24/03

Start when server starts

Default mode, not scheduled

Run again as soon as previous run is completed

Calendar based run windows


Step 5 - Scheduler

Custom Repeats

Repeat frequency

Repeat any day of the month, or several days a month

Repeat any day of the week, or several days a week

Here, repeats every last Saturday of the month
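To check a custom repeat such as "last Saturday of the month" against a calendar, a small helper like the one below can be used. This is a sketch in Python with a hypothetical function name; it only computes the date and does not interact with the scheduler.

```python
import calendar
from datetime import date


def last_weekday_of_month(year: int, month: int,
                          weekday: int = calendar.SATURDAY) -> date:
    """Return the last given weekday (Monday=0 ... Sunday=6) of a month."""
    last_day = calendar.monthrange(year, month)[1]  # number of days in month
    end = date(year, month, last_day)
    offset = (end.weekday() - weekday) % 7  # days to step back from month end
    return end.replace(day=last_day - offset)
```

For March 2003, the month used in the scheduler example, this gives March 29.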

Step 5 - Scheduler

Custom Repeats

The scheduler cannot specify a time window within a day (e.g. run every day between 8PM and 11PM)

For this, use a link condition between the start task and the next task and schedule the Workflow to run continuously or every (n) minutes

Runs if workflow started between 8 and 10:59 PM
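The test performed by such a link condition can be sketched as follows. This is Python, not the Informatica expression language, and `in_run_window` is a hypothetical name; it assumes the window is expressed in whole hours, so hours 20 through 22 cover 8:00 PM to 10:59 PM.

```python
from datetime import datetime


def in_run_window(started: datetime, first_hour: int = 20,
                  last_hour: int = 22) -> bool:
    """True if the workflow start time falls inside the daily window."""
    return first_hour <= started.hour <= last_hour
```

A workflow scheduled every few minutes would then do real work only when this check is true, and end immediately otherwise.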


Step 6 - Testing

One piece at a time

Verify the functionality of your worklets using the ‘Start Task’ command

Start task

Testing Worklet Tasks

In order to test and run individual tasks within a worklet, you can copy all the tasks in the worklet and paste them into a new empty workflow

These test workflows can also be used in production, if you have to rerun a worklet or part of a worklet while the main workflow is still running


Step 6 - Testing

Gantt Chart

Monitor this view and the server performance at the same time

Identify workflow bottlenecks quickly (candidates for partitioning)

Monitor session performance when sessions run concurrently


Session and Server Variables


Session & Server Variables

Session Variables

Some session properties can be parameterized with the following variable names:

$DBConnection_Name

$BadFile_Name

$InputFile_Name

$OutputFile_Name

$LookupFile_Name

$PMSessionLogFile

Use parameters in session properties to override

Source, target, lookup or stored procedure connections

Source, target or lookup file names

Reject file names

Session log file names

Session parameters do not have default values

You must provide a value in a parameter file or the session will fail


Session & Server Variables

Using Session Variables in Session Properties

1- use parameter names in the session properties

2- specify a parameter file name in the general properties

3- add an entry for each parameter used in the session properties

[ses_BrowserReport]

$InputFile_dailylog_part1=daily_1.log

$InputFile_dailylog_part2=daily_2.log

Session & Server Variables

Server Variables

Specify the default location of various folders on the Informatica server machine such as

Root directory

Session log directory

Cache and Temp directories

Source, target and lookup file directories

External procedures directory

Also provide default values for the following properties

Success or failure email user

Session and workflow log count

Session error threshold

These variables are set at the server level and cannot be overridden with a parameter file


Session & Server Variables

Using Session and Server Variables in Session Components

1- select a session component, either non-reusable or reusable

2- use either session or server parameters within the command

3- add an entry for each session parameter used

[ses_BrowserReport]

$InputFile_dailylog_part1=daily_1.log

$InputFile_dailylog_part2=daily_2.log

$PMSessionLogFile=ses_BrowserReport.log

Parameter Files

Workflow Parameter Files

Use to override workflow or worklet user-defined variables

Can also contain values for parameters and variables used in sessions and

mappings within the workflow

The path to the parameter file can be set in the workflow properties or provided at the ‘pmcmd’ command line

If you use both, the command line argument has precedence

Workflow properties


Parameter Files

Format

[Heading]

parameterName = parameterValue

Heading format

Workflow:

[folderName.WF:workflowName]

Worklet:

[folderName.WF:workflowName.WT:workletName]

Nested Worklet:

[folderName.WF:workflowName.WT:workletName.WT:nestedWorkletName]

Session:

[folderName.WF:workflowName.ST:sessionName]

Workflow name required if session name is not unique in folder

[folderName.sessionName]

Folder name required if session name is not unique in repository

[sessionName]

Names in heading are case-sensitive. Parameter and variable names are not

Example

[tradewind.WF:WKF_BrowserReport.ST:SES_loadFacts]

$$lastLoadDate=10/2/2003 23:34:56

$$Filter=California,Nevada

Fact_Mapplet.$$maxValues=78694.2

Strings are not quoted

Default date formats:

•mm/dd/yyyy hh24:mi:ss

•mm/dd/yyyy

‘Fact_Mapplet.’ is a mapplet variable prefix
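Since the format is just headings followed by name=value lines, it is easy to read a parameter file from a script, for instance to check it before a run. The parser below is a minimal sketch in Python, not an Informatica utility: `parse_parameter_file` is a hypothetical name, and the sample text is the example from this slide.

```python
from typing import Dict, Optional

SAMPLE = """\
[tradewind.WF:WKF_BrowserReport.ST:SES_loadFacts]
$$lastLoadDate=10/2/2003 23:34:56
$$Filter=California,Nevada
Fact_Mapplet.$$maxValues=78694.2
"""


def parse_parameter_file(text: str) -> Dict[str, Dict[str, str]]:
    """Return {heading: {parameterName: parameterValue}}."""
    sections: Dict[str, Dict[str, str]] = {}
    current: Optional[Dict[str, str]] = None
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue
        if line.startswith("[") and line.endswith("]"):
            # Headings are case-sensitive, so they are kept exactly as written.
            current = sections.setdefault(line[1:-1], {})
        elif "=" in line and current is not None:
            # Split on the first '=' only; values are unquoted strings.
            name, value = line.split("=", 1)
            current[name.strip()] = value.strip()
    return sections
```

Calling `parse_parameter_file(SAMPLE)` yields one section keyed by the session heading, with the three values stored as plain strings.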

Incremental Load

Using Mapping Variables for Incremental Load

Speeds up the load by processing only the rows added or changed since the last load

Requires a good knowledge of the source systems to figure out exactly what a new or changed row is

You can use Informatica’s mapping variable to implement incremental load parameters

For added safety, save the variables in parameter files:

Our process only updates values in the parameter file if the data is valid (balanced). If not, we can rerun the load with the old parameter values

Informatica updates the variables in the repository upon completion of the load process
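The "only update the parameter file if the data is balanced" rule can be sketched as below. This is a Python illustration with hypothetical names: `is_balanced` stands for the result of your own validation step, and the date string uses the default mm/dd/yyyy hh24:mi:ss format.

```python
from datetime import datetime

DATE_FORMAT = "%m/%d/%Y %H:%M:%S"  # mm/dd/yyyy hh24:mi:ss


def next_last_load_date(previous: str, load_started: datetime,
                        is_balanced: bool) -> str:
    """Value to store for the incremental-load variable after a run."""
    if is_balanced:
        # Data validated: the next load starts where this one began.
        return load_started.strftime(DATE_FORMAT)
    # Data not validated: keep the old value so the load can be rerun.
    return previous
```

Keeping the old value on failure is what makes the rerun pick up exactly the same rows.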


Partitioning


Informatica Server Architecture

1 - session is started

2 - load manager finds a slot for session

3 - load manager starts DTM

4 - DTM fetches session’s mapping in Repository

5 - DTM creates and starts stage threads


DTM Architecture

Efficient, multi-threaded architecture

Data is processed in stages

- Each stage is buffered

- Stage processes overlap

At least one thread per reader, transformation and writer stage

Other threads to control and monitor the overall process

There is one DTM process for every running session (process runs as pmdtm)

Data is being transformed as more data is being read

Data is being written as more data is being transformed

User control

As a user you have control over many performance aspects

- Memory usage per session and, in some cases, per transformation

- Buffer block size (data is moved in memory by chunks)

- Disk allocations, for caches, indexes and logs

- Server allocation, with PowerCenter
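The idea of buffered, overlapping stages can be illustrated with three threads connected by bounded queues. This is a toy model in Python, not the DTM itself: `run_pipeline`, the integer rows and the `buffer_blocks` parameter are assumptions made for the example.

```python
import queue
import threading
from typing import Callable, Iterable, List

_DONE = object()  # sentinel marking the end of the data


def run_pipeline(rows: Iterable[int], transform: Callable[[int], int],
                 buffer_blocks: int = 4) -> List[int]:
    """Reader, transformation and writer stages running concurrently."""
    read_buf: queue.Queue = queue.Queue(maxsize=buffer_blocks)
    write_buf: queue.Queue = queue.Queue(maxsize=buffer_blocks)
    written: List[int] = []

    def reader() -> None:
        for row in rows:
            read_buf.put(row)  # blocks when the buffer is full
        read_buf.put(_DONE)

    def transformer() -> None:
        while True:
            row = read_buf.get()  # blocks (idle) when no data is available
            if row is _DONE:
                write_buf.put(_DONE)
                return
            write_buf.put(transform(row))

    def writer() -> None:
        while True:
            row = write_buf.get()
            if row is _DONE:
                return
            written.append(row)

    threads = [threading.Thread(target=f) for f in (reader, transformer, writer)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return written
```

Because each stage has its own thread and buffer, rows are transformed while later rows are still being read, and written while later rows are still being transformed. A slow stage shows up as a full buffer upstream and an idle thread downstream.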


Partitioning Guidelines

When to do it

After unit-testing

Mapping should be free of error and optimized

Only mappings seen as bottlenecks during volume testing should be candidates for partitioning

How to do it

Look at the big picture

What sessions are running concurrently with the session you want to partition

What other processes may be running at the same time as the partitioned session

A monster session you wish to partition should not be scheduled to run concurrently with other sessions

Reserve some time on the server to do your initial partitioning tests

If you have to compete with other processes, the test results may be skewed or meaningless

Add one partition at a time

Monitor the system closely. Look at RAM usage, CPU usage and disk I/O

Monitor the session closely. Re-compute the total throughput at each step

Each partition adds a new set of concurrent threads and a new set of memory caches

Sooner or later, you will hit your server’s glass ceiling, at which point performance will degrade

Add partition points at transformations where you suspect there is a bottleneck

Partition points redistribute the data among partitions and allow for process overlap


Definitions

Source Pipeline

Data flowing from a source qualifier to transformations and targets

There are two pipelines per joiner transformation. The master source pipeline stops at the joiner transformation

Pipelines are processed sequentially by the Informatica Server

Partition Point

Set at the transformation object level

Define the boundaries between DTM threads

Default partition points are created for

Source Qualifier or Normalizer transformations (reader threads)

Target instances (writer threads)

Rank and unsorted Aggregator transformations

Data can be redistributed between partitions at each partition point

Pipeline Stage

Area between partition points, where the partition threads operate

3 default stages

Reader stage, reads the source data and brings it in to the Source Qualifier

Transformation stage, moves data from the Source Qualifier up to a Target instance

Writer stage, writes data to the target(s)

Adding a partition point creates one more transformation stage

The processing in each stage can overlap, resulting in improved performance


Flow Example

2 partitions, 5 stages, 10 threads

Partition points

Stage 1, Stage 2, Stage 3, Stage 4, Stage 5

Open one concurrent connection per partition, for relational sources and targets

Data can be redistributed between partition threads at partition points, using these methods:

•Pass through

•Round robin

•Hash keys

•Key/Value range

Partition Methods

You can change the partitioning method at each partition point to redistribute data between stage threads more efficiently

All methods but pass-through come at the cost of some performance

Round Robin

Distributes data evenly between stage threads

Use in the transformation stage, when reading from unevenly partitioned sources

Hash Key

Keeps data belonging to the same group in the same partition so the data is aggregated or sorted properly

Use with Aggregator, Sorter, Joiner and Rank transformations

Hash auto keys

Hash keys generated by the server engine, based on ‘group by’ and ‘order by’ ports in transformations

Hash user keys

Define the ports you want to group by


Partition Methods

Key/Value Range

Define a key (one or more ports)

Define a range of values for each partition

Use with relational sources or targets

You can specify additional SQL filters for relational sources or override the SQL entirely

Workflow Manager does not validate key ranges (missing or overlapping)

Pass Through

Use when you want to create a new pipeline stage without redistributing data.

If you want to set a partition point at an aggregator with sorted input, pass-through is the only method available

DB Target Partitioning

Only available for DB2 targets

Queries system tables and distributes output to the appropriate nodes
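The row distribution performed by the round robin, hash key and key range methods can be sketched in Python as below. This is an illustration of the general techniques, not the server's implementation: the function names are hypothetical, CRC32 is used as a stand-in hash, and the key ranges are assumed to include their start value and exclude their end value.

```python
import zlib
from typing import Any, Dict, List, Sequence, Tuple

Row = Dict[str, Any]


def round_robin(rows: Sequence[Row], n: int) -> List[List[Row]]:
    """Deal rows out one by one so every partition gets an even share."""
    parts: List[List[Row]] = [[] for _ in range(n)]
    for i, row in enumerate(rows):
        parts[i % n].append(row)
    return parts


def hash_keys(rows: Sequence[Row], n: int, key: str) -> List[List[Row]]:
    """Send all rows sharing the same key value to the same partition."""
    parts: List[List[Row]] = [[] for _ in range(n)]
    for row in rows:
        digest = zlib.crc32(str(row[key]).encode("utf-8"))
        parts[digest % n].append(row)
    return parts


def key_range(rows: Sequence[Row], ranges: Sequence[Tuple[int, int]],
              key: str) -> List[List[Row]]:
    """One partition per (start, end) range; start inclusive, end exclusive."""
    parts: List[List[Row]] = [[] for _ in ranges]
    for row in rows:
        for i, (start, end) in enumerate(ranges):
            if start <= row[key] < end:
                parts[i].append(row)  # overlapping ranges: first match wins
                break
        else:
            # A gap in the ranges: nothing validated this up front.
            raise ValueError(f"no key range covers {row[key]!r}")
    return parts
```

Round robin balances volume but scatters groups; hash keys keep groups together but may be uneven; key ranges depend entirely on how well the ranges were chosen.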


Partitions and Caches

Partitioned Cache Files

Each partitioned cache only holds the data needed to process that partition

Caches are partitioned automatically for Aggregator and Rank transformations

Joiner caches will be partitioned if you set a partition point at the Joiner transformation

When using a joiner with sorted input and multiple partitions for both master and detail sides, make sure all the data before the joiner is kept in one partition to maintain the sort order, then use the hash auto-key partition method at the joiner.

To keep the data in one partition:

Flat files: use a pass-through partition point at the source qualifier with the flat file source connected to the first partition and dummy (empty) files connected to the other partitions

Relational: use a key range partition point at the source qualifier to bring the entire data set into the first partition

Lookup caches will be partitioned if

You set a hash auto key partition point at the Lookup transformation

You use only equality operators in the lookup condition

The database is set for case sensitive comparisons

Sorter caches are not partitioned.


Limitations

Partition points

Cannot delete default partition points at the reader or writer stages

Cannot delete default partition points at Rank or unsorted Aggregator transformation unless

There is only one partition for the session

There is a partition point upstream that uses hash keys

Cannot add a partition point at a Sequence Generator or an unconnected transformation

A transformation can only receive input from one pipeline stage. You cannot add a partition point if it violates this rule.

XML sources

You cannot partition a pipeline that contains an XML source

Joiners

You cannot partition the pipeline that contains the Master source unless you create a partition point at the Joiner Transformation

Mapping changes

After you partition a session, you could make some changes to the underlying mapping that would violate the partitioning rules above. These changes would not get validated in the Workflow Manager and the session would fail.


Limitations

Hash Auto Keys

Make sure the row grouping stays the same when you have one auto key partition point feeding data to several ‘grouping’ transformations, such as a Sorter followed by an Aggregator. If the grouping is different, you may not get the results you expect.

External Loaders

You cannot partition a session that feeds an external loader

The session may validate but the server will fail the session

The exception is Oracle external loader, under certain conditions

One potential solution is to load the target data into a flat file then use an external loader to push the data to the database

On UNIX, the server pipes data through to the external loader as the output data is produced and you would lose this advantage with this solution

Debugger

You cannot run a session with multiple partitions in the debugger

Resources

Partitioning can be a great help in speeding up sessions but it can use up resources very quickly

Review the session’s performance in the production environment to make sure you are not hitting your system’s glass ceiling


Partitioning Demo

You have a mapping that reads data from web log files and aggregates values by user session in a daily table

In addition, you run a top 10 most active sessions report file

You have three web servers, each dedicated to its own subject area

Data for one user session can be spread across several log files

Log file sizes vary between servers

You have a persistent session id in the logs

Log file reader

Filter out unwanted transactions

Sort input by session ID

Aggregate log data per session

Rank the top 10 most active sessions

Partition Demo – Strategy

Define your strategy

Using partitions, you can process several log files concurrently, one log per partition

Because the log files vary in size, you need to re-balance the data load across partitions

To keep the sorter and aggregator working properly, you need to group the data load by session id across partitions. This way, data that belongs to the same user session will always be processed in the same partition

For the rank transformation, you need all the data to be channeled through one partition, so it can extract the top 10 sessions from the entire data set

3 partitions: each will read a separate log file

Partition point #1: Pass-through, to read each log file entirely

Partition point #2: Round-robin, to even out the load between partitions

Partition point #3: Hash auto keys, the server will channel data based on session id, the ‘sort by’ port

Partition point #4: Hash auto keys, this ranker does not use a ‘group by’ port. All data will be lumped into one default group and one partition
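The strategy can be tried out on toy data before touching a session. The sketch below is a Python simulation under stated assumptions: log rows are reduced to hypothetical (session id, value) pairs, CRC32 stands in for the server's hash, and the function names are invented for the example.

```python
import heapq
import zlib
from collections import Counter
from typing import Dict, List, Tuple

LogRow = Tuple[str, int]  # (session id, value to aggregate): hypothetical fields


def rebalance(partitions: List[List[LogRow]]) -> List[List[LogRow]]:
    """Partition point #2: round robin over the same number of partitions."""
    out: List[List[LogRow]] = [[] for _ in partitions]
    i = 0
    for part in partitions:
        for row in part:
            out[i % len(out)].append(row)
            i += 1
    return out


def regroup(partitions: List[List[LogRow]]) -> List[List[LogRow]]:
    """Partition point #3: hash on session id so a session stays together."""
    out: List[List[LogRow]] = [[] for _ in partitions]
    for part in partitions:
        for row in part:
            out[zlib.crc32(row[0].encode("utf-8")) % len(out)].append(row)
    return out


def aggregate(partition: List[LogRow]) -> Dict[str, int]:
    """Sum the values per session inside one partition."""
    totals: Counter = Counter()
    for session_id, value in partition:
        totals[session_id] += value
    return dict(totals)


def top_sessions(aggregated: List[Dict[str, int]], n: int = 10) -> List[LogRow]:
    """Partition point #4: funnel everything into one group and rank it."""
    merged = [item for part in aggregated for item in part.items()]
    return heapq.nlargest(n, merged, key=lambda item: item[1])
```

Starting from three log files of very different sizes, `rebalance` evens out the row counts, `regroup` guarantees each session id is aggregated in exactly one partition, and `top_sessions` ranks over the whole data set.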

Partition Demo – Implementation I

Create one partition per source file

1 Edit session task properties in workflow manager

2 Select Mapping tab

3 Select Partitions sub-tab

4 Select your source qualifier

5 Click ‘Edit Partition Point’

6 Click ‘Add’ twice

Source qualifier partition point can only be ‘Pass Through’ for flat file sources or ‘Pass Through’ and ‘Key Range’ for relational sources

Partition Demo – Implementation II

Specify Source Files

1 Select Transformations sub-tab

2 Select your source qualifier

3 Type filenames


Partition Demo – Implementation III

Re-balance the data load

1 Select Partitions sub-tab

2 Select your filter

3 Click ‘Add Partition Point’

4 Select ‘Round Robin’


Partition Demo – Implementation IV

Re-group the data for the Sorter and Aggregator transformation

1 Select your sorter

2 Click ‘Add Partition Point’

3 Select ‘Hash Auto Keys’

The Aggregator transformation has the ‘Sorted Input’ property set and therefore does not have a default partition point. Since we added a partition point at the Sorter, we don’t need one at the Aggregator.


Partition Demo – Implementation V

Ensure the default Rank transformation partition point is set correctly

Every Rank transformation gets a default partition point set to hash auto keys, and this is the behavior we want


Partition Demo – Implementation VI

Set the defaults for your target Top 10 file

1 Select Transformations sub-tab

2 Select your flat file target

When you write to a partitioned flat file target, data for each partition ends up in its own file. Click ‘Merge Partitioned Files’ to have the server merge those files into one.


Partition Demo – Implementation VII

Set Session performance parameters

1 Select Properties tab

2 Increase total DTM buffer size if needed. Depends on the number of partitions and the number of sources and targets.

3 Check ‘Collect Performance Data’ box for a test run to see how your partitioning strategy is performing


Partition Demo – Test Run I

Monitor Session statistics through the Workflow Monitor

Select Transformation Statistics tab

Number of input rows for each log file

Number of output rows for the relational target table. Load is spread evenly across partitions

Output rows sent to the flat file top 10 target, confined to one partition


Partition Demo – Test Run II

Monitor Session performance through the Workflow Monitor

Select Performance tab, only visible while the session is running and until you close the window. These numbers are saved in the ‘.perf’ file

Round-robin evens out the load at the Filter transformation

# output rows in Filter != # input rows in Sorter. Data was redistributed.

Partition [1]: all output rows from the aggregator end up in this partition to be ranked. A single group is created.


Partition Demo – Test Run III

Examine Pipeline stage threads performance in session log

Thread by thread performance, for each pipeline stage

Total run time

Idle time

Busy percentage

High idle time means a thread is waiting for data; look for a bottleneck upstream

Scroll down to the Run Info section


Performance & Tuning


Informatica Tuning 101

Collect base performance data

Establish reference points for your particular system

- Your goal is to measure optimal I/O performance on your system

Create pass through mappings for each main source/target combination

Make notes of the read and write throughput counters in the session statistics

Time these sessions and compute Mb/hour or Gb/hour numbers

Do this for various combinations of file and relational sources and targets

Try and have the system to yourself when you run your benchmarks

Collect performance data for your existing mappings

Before tuning them

Collect read and write throughput data

- Collect Mb/hour or Gb/hour data

Identify and remove the bottlenecks in your mappings

Keep notes of what you do and how it affects the performance

Go after one problem at a time and re-check performance after each change

If a fix does not provide speed improvement, revert to your previous configuration
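The throughput numbers and the keep-or-revert decision can be computed with a couple of helpers. This is a minimal sketch in Python with hypothetical function names; it assumes you record the bytes moved and the elapsed time of each benchmark run yourself.

```python
def throughput_mb_per_hour(bytes_moved: int, elapsed_seconds: float) -> float:
    """Convert a timed run into megabytes per hour."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    megabytes = bytes_moved / (1024 * 1024)
    return megabytes / (elapsed_seconds / 3600)


def improvement_pct(baseline: float, candidate: float) -> float:
    """Relative change of a tuned run against the recorded baseline."""
    return (candidate - baseline) / baseline * 100


def keep_change(baseline: float, candidate: float) -> bool:
    """Keep a fix only if it is faster; otherwise revert it."""
    return candidate > baseline
```

For example, 500 MB moved in 30 minutes is 1000 MB/hour; a tuned run at 1250 MB/hour is a 25% improvement and is worth keeping.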


Collecting Reference Data

Use a pass-through mapping

a source definition

a source qualifier

a target definition

No transformations

no transformation thread

best possible engine performance for this source and target combination


Identifying Bottlenecks

1-Writing to a slow target ?

2-Reading from a slow source ?

3-Transformation inefficiencies ?

4-Session inefficiencies ?

5-System not optimized ?


Target Bottleneck

Change session’s writer to a file writer


Target Bottleneck

Common sources of problems

Indexes or key constraints

Database commit points too high or too low

Common Solutions

Drop indexes and key constraints before loading, rebuild after loading

Use bulk loading or external loaders when practical

Experiment with the frequency of database commit points


Source Bottleneck

OR


Source Bottleneck

Common sources of problems

Inefficient SQL query

Table partitioning does not fit the query

Common Solutions

analyze the query issued by the Source Qualifier. It appears in the session log. Most SQL interpreter tools allow you to view an execution plan for your query.

consider using database optimizer hints to make sure correct indexes are used

consider indexing tables when you have order by or group by clauses

try database parallel queries if supported

try partitioning the session if appropriate

If you have table partitioning, make sure your query does not pull data across partition lines

If you have a query filter on non-indexed columns, try moving the filter outside of the query, into a Filter Transformation


Mapping Bottleneck

Under Properties -> Performance


Mapping Bottleneck

Common sources of problems

too many transforms

unused links between ports

too many input/output or output ports connected out of Aggregator, Rank and Lookup transformations

unnecessary data-type conversions

Common solutions

eliminate transformation errors

if several mappings read from the same source, try single pass reading

optimize datatypes, use integers for comparisons.

don’t convert back and forth between datatypes

optimize lookups and lookup tables, using cache and indexing tables

put your filters early in the data flow, use a simple filter condition

for aggregators, use sorted input, integer columns to group by and simplify expressions

if you use reusable sequence generators, increase number of cached values

if you use the same logic in different data streams, apply it before the streams branch off

optimize expressions:

- isolate slow and complex expressions

- reduce or simplify aggregate functions

- use local variables to encapsulate repeated computations

- integer computations are faster than character computations

- use operators rather than the equivalent function, ‘||’ is faster than CONCAT().


Session Bottleneck


Session Bottleneck

Common sources of problems

inappropriate memory allocation settings

under-utilized or over-utilized resources (CPU and RAM)

error tracing override set to high level

Common solutions

experiment with DTM buffer pool and buffer block size

- A good starting point is 25MB for DTM buffer and 64K for buffer block size

make sure to keep data caches and indexes in memory

- Avoid paging to disk, but be aware of your RAM limits

run sessions in parallel, in parallel workflow execution paths, whenever possible

- Here also, be cautious not to hit your glass ceiling

if your mapping allows it, use partitioning

experiment with database commit interval

turn off decimal arithmetic (it is off by default)

use debugger rather than high error tracing, reduce your tracing level for production runs

- Create a reusable session configuration object to store tracing level and block buffer size

don’t stage your data if you can avoid it, read directly from original sources

look at the performance of your session components (run each separately)


System Bottleneck


System Bottleneck

Common sources of problems

slow network connections

overloaded or under-powered servers

slow disk performance

Common Solutions

get the best machines to run your server. Better yet, use several servers against the same repository (PowerCenter only).

use multiple CPUs and session partitioning

make sure you have good network connections between Informatica server and database servers

Locate the Repository database on the Informatica server machine

shutdown unneeded processes or network services on your servers

use 7 bit ASCII data movement (the default) if you don’t need Unicode

evaluate hard disk performance, try locating sources and targets on different drives

Use different drives for transformation caches, if they don’t fit in memory

get as much RAM as you can for your servers


Using Statistics Counters

View Session statistics through the Workflow Monitor

These numbers are available in real time; they are updated every few seconds.

Select Transformation Statistics tab

Number of input rows for each source file

Number of output rows for the relational target table. Load is spread evenly across partitions

Output rows sent to the flat file top 10 target, confined to one partition


Using Performance Counters

Turning it on

In the Workflow Manager, edit session

Collecting Performance data requires an additional 200K of memory per session

1 - Select Properties tab

2 - Select Performance section

3 - Check ‘Collect Performance Data’ box for a test run to see how your partitioning strategy is performing


Using Performance Counters

Monitor Session performance through the Workflow Monitor

Select Performance tab, only visible while the session is running and until you close the window. These numbers are saved in the ‘.perf’ file

Input rows and output rows counters for each transformation

Error rows counters for each transformation

Read from disk/cache, Write to disk/cache counters for ranks, aggregators and joiners

Using Performance Counters

How to use the counters

Input & output rows

Verify data integrity

Check how rows are redistributed at a partition point

Error rows

Did you expect this transformation to reject rows due to errors?

Read/Write to disk

If the counters have non-zero values, your transformation is paging to disk

Read/Write to cache

Use in conjunction with read/write to disk to estimate the size of the cache needed to hold everything in RAM

New group key

Aggregator and Rank transformations

Number of groups created

Does this number seem right? If not, your grouping condition may be wrong

Old group key

Aggregator and Rank transformations

Number of times a group was reused

Rows in Lookup Cache

Lookup only

Use to estimate the total cache size


Using Run Info Counters

Using the session log’s Run Info

Only available when the session is finished

One entry per stage per partition

Counters:

Run time: total run time for the thread

Idle time: total time the thread spent doing nothing (included in total run time)

Busy percentage: a function of the two counters above

Replaces the V5 buffer efficiency counters

Scroll down to the Run Info section
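
The relationship between the three Run Info counters can be sketched in Python. This assumes the usual definition of busy percentage (run time minus idle time, divided by run time); the function name and the sample values are illustrative and do not come from a real session log.

```python
def busy_percentage(run_time: float, idle_time: float) -> float:
    """Return the share of a thread's run time spent working, in percent.

    Assumes idle time is included in run time, as stated on the slide.
    """
    if run_time <= 0:
        return 0.0  # no measurable run time, nothing to report
    if not 0 <= idle_time <= run_time:
        raise ValueError("idle_time must lie between 0 and run_time")
    return (run_time - idle_time) / run_time * 100.0


# Hypothetical reader thread: 120 seconds of run time, 90 of them idle.
print(busy_percentage(120.0, 90.0))
```

A thread that is idle for most of its run time gets a low busy percentage, which usually means it is waiting on another stage.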


Using Run Info Counters

Run Info Busy Percentage

You need to compare the values for each stage to properly evaluate where the bottleneck may be

Look for a high (busy) value that stands out. This indicates a problem area.

High values across the board indicate an optimized session

The stage with the high busy percentage is the bottleneck:

Bottleneck   Reader   Transform   Writer
Reader       High %   Low %       Low %
Transform    Low %    High %      Low %
Writer       Low %    Low %       High %
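
The comparison described on this slide can be sketched as a small Python helper. The function name, the 30-point margin and the sample percentages are assumptions made for illustration; pick a threshold that suits your own sessions.

```python
from typing import Dict, Optional


def find_bottleneck(busy: Dict[str, float], margin: float = 30.0) -> Optional[str]:
    """Return the stage whose busy percentage stands out, or None.

    `margin` is an arbitrary threshold chosen for this sketch: the busiest
    stage must exceed the next one by at least that many percentage points.
    """
    ranked = sorted(busy.items(), key=lambda item: item[1], reverse=True)
    if len(ranked) < 2:
        return None
    (top_stage, top_value), (_, runner_up) = ranked[0], ranked[1]
    return top_stage if top_value - runner_up >= margin else None


# Hypothetical busy percentages read from a session log's Run Info section.
print(find_bottleneck({"reader": 12.0, "transform": 95.0, "writer": 20.0}))
# High values across the board: no single stage stands out.
print(find_bottleneck({"reader": 90.0, "transform": 92.0, "writer": 88.0}))
```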

Review Quiz

1. What is a benefit of buffered processing stages?

a) Safety net against network errors

b) Lower memory requirements

c) Overlapping data processing

2. How do you identify a target bottleneck?

a) By changing the output of the session to point to a flat file instead of a relational target

b) By reading the Run Info section of the session log and looking for a low busy percentage at the writer stage

c) By replacing the mapping with a pass-through mapping connected to the same target

3. Is the ‘Collect Performance Data’ option enabled by default?

a) No, never

b) Yes, always

c) No, unless you run a debugging session


Review Quiz

4. You have a shared session memory set to 25MB and a buffer block size set to 64K. How many rows of data can the server move to memory in a single operation?

a) 40,000 rows if average row size is 655 bytes

b) 100 rows if average row size is 655 bytes

c) 2,500 rows if average row size is 64K

5. The Aggregator transformation’s ‘Write To Cache’ counter tells the number of rows written to the disk cache?

a) TRUE

b) FALSE


Command Line Utilities


Overview

Pmcmd

Communicates with the Informatica Server

Use with external scheduler tools or server scripts

Use for remote administration when the Workflow Manager or Monitor GUI is not accessible

Located in the server install directory

On Windows, in the ‘bin’ folder

On Unix, at the parent level

Pmrep & Pmrepagent

Communicate with the Repository Server

Use to back up the repository

Use to change database connection parameters or server variables ($PM…)

Use to perform security-related tasks

Located in the repository server install directory

On Windows, in the ‘bin’ folder

On Unix, at the parent level


Working with pmcmd & pmrep (I)

Two modes

Command line

Pass the entire command and parameters to the utility

Use when writing server scripts that automate server or repository functions

Example

>>pmcmd startworkflow -u User -p Password -s InfaServer:4001 wkf_LoadFactTables

startworkflow is the main command

Connection parameters need flags (-u, -p,…)

wkf_LoadFactTables is the main parameter

Interactive

Maintain a connection to the server or repository until typing exit

Use to enter a series of commands, when operating the server or repository remotely

Example:

Just type the utility name at the console to start interactive mode

>>pmcmd
>>
>>Informatica™PMCMD 7.1 (1119)
>>Copyright © Informatica Corporation 1994-2004
>>All Rights Reserved
>>
>>Invoked at Fri Apr 25 13:14:23 2003
>>
>>pmcmd>connect
>>username:User
>>password:
>>server address <[host:]portno>: InfaServer:4001

Type a command name without parameters and the utility prompts for the parameters (not available for all commands)

Working with pmcmd & pmrep (II)

Getting help

Command line

Type >>pmcmd help | more to get a paged list of all pmcmd or pmrep commands and arguments

Type >>pmcmd help <commandname> to get help on a specific command

Interactive

Type help or help <commandname> at the pmcmd or pmrep prompt

Example

Getting help on the repository server’s backup command, in non-interactive mode

>>pmrep help backup
>>backup
>>  -o <output file name>
>>  -f (override existing output file)
>>help completed successfully

Terminating an interactive session

Type exit at the pmcmd or pmrep prompt

You can also type quit at the pmcmd prompt


Using pmcmd (I)

Running Workflows and Tasks

Commands for starting, stopping and aborting tasks and workflows

starttask

startworkflow

stoptask

stopworkflow

aborttask

abortworkflow

scheduleworkflow

unscheduleworkflow

You can start a process in wait or nowait mode. You can also specify a parameter file

Commands to resume a workflow or worklet

resumeworkflow

resumeworklet

Commands to wait for a process to finish

waittask

waitworkflow

The utility returns control to the user when the given process terminates

Task names are fully qualified. If a task is within a worklet, use the syntax workletname.taskname

Specify the folder (-f) and workflow (-w) hosting the task

Example

>>pmcmd starttask -u joe -p 1234 -s InfaServer:4001 -f prodFolder -w wkf_loadFacts ses_LoadTrans
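
When pmcmd is driven from a script, the starttask command line above can be assembled programmatically. The Python sketch below only builds the argument list; it uses the flags shown on this slide (-u, -p, -s, -f, -w) and the workletname.taskname syntax. The function name and the worklet name wkl_Facts are hypothetical.

```python
from typing import List, Optional


def build_starttask_args(user: str, password: str, server: str, folder: str,
                         workflow: str, task: str,
                         worklet: Optional[str] = None) -> List[str]:
    """Build a pmcmd starttask argument list without running it."""
    # Tasks nested in a worklet are addressed as workletname.taskname.
    qualified_task = f"{worklet}.{task}" if worklet else task
    return ["pmcmd", "starttask",
            "-u", user, "-p", password, "-s", server,
            "-f", folder, "-w", workflow, qualified_task]


# Same values as the example above.
args = build_starttask_args("joe", "1234", "InfaServer:4001",
                            "prodFolder", "wkf_loadFacts", "ses_LoadTrans")
print(" ".join(args))

# Hypothetical worklet name, to show the fully qualified task syntax.
nested = build_starttask_args("joe", "1234", "InfaServer:4001", "prodFolder",
                              "wkf_loadFacts", "ses_LoadTrans", worklet="wkl_Facts")
print(nested[-1])
```

Keeping the arguments as a list rather than a single string avoids quoting problems if the list is later handed to a process launcher.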

Using pmcmd (II)

Server Administration

Both modes

pingserver

shutdownserver

version

Interactive mode

connect

disconnect

Need a user name, password and a server address and port to connect

Gathering Information About

Server

getserverdetails: server and workflow status. You can get info about all, running or scheduled workflows

getserverproperties: server name, type and version, repository name

Repository

getsessionstatistics

gettaskdetails

getworkflowdetails

getrunningsessionsdetails

Interactive Mode Only

Setting defaults and properties

setfolder, unsetfolder

setwait, setnowait

Set a default folder or a run mode valid for the entire session

Using pmcmd (III)

Return Codes

In command-line mode, ‘pmcmd’ returns a value to indicate the status of the last command

Zero

Command was successful.

If starting a workflow or task in wait mode, zero indicates successful completion of the process.

In no-wait mode, zero indicates the server successfully received and processed the request

Non zero

Error status, such as invalid user name or password, or wrong command parameters

See your Informatica documentation for a list of the latest return codes

Catching return codes

Within a DOS batch file, use the ERRORLEVEL variable

IF ERRORLEVEL n is true when the return code is greater than or equal to n, so check the highest value first, as in:

pmcmd pingserver Infa61:4001
IF ERRORLEVEL 1 GOTO error
IF ERRORLEVEL 0 GOTO success

Within a Perl script, you can use the $? variable shifted right by 8 bits, as in:

system('pmcmd pingserver Infa61:4001');
$returnVal = $? >> 8;
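
The same zero / non-zero check can be sketched in Python. This is only an illustration: the function name is made up, and the commands below are stand-ins that exit with 0 and 1 so the sketch runs without pmcmd installed. On a real server you would pass a pmcmd argument list instead.

```python
import subprocess
import sys
from typing import List, Tuple


def run_and_classify(args: List[str]) -> Tuple[int, str]:
    """Run a command and classify its return code as success or error."""
    completed = subprocess.run(args)
    # Unlike Perl's $?, returncode is already the plain exit status.
    code = completed.returncode
    return code, "success" if code == 0 else "error"


# Stand-in commands (assumption): they mimic a zero and a non-zero return code.
ok_cmd = [sys.executable, "-c", "raise SystemExit(0)"]
bad_cmd = [sys.executable, "-c", "raise SystemExit(1)"]

print(run_and_classify(ok_cmd))
print(run_and_classify(bad_cmd))
```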


PMREP Commands

Change Management Commands

CreateDeploymentGroup

AddToDeploymentGroup

ClearDeploymentGroup

DeployDeploymentGroup

DeleteDeploymentGroup

Deployment group functions to create, add to, deploy, clear or delete a group. Groups can be either static or dynamic.

CreateLabel

ApplyLabel

DeleteLabel

Label functions to create, apply or delete a label.

Checkin

UndoCheckout

DeployFolder: folder copy

ExecuteQuery: executes an existing query

FindCheckout

Validate


PMREP Commands

Persistent Input Files

You can create reusable input files for some repository and versioning commands

These files describe the objects that will be affected by these operations

Input files can be created manually or by using repository commands

Operations that support input files:

Add to Deployment Group

Apply Label

Validate

Object Export

List Object Dependencies

Operations that can create a persistent input file:

Execute Query

List Object Dependencies

Deployment Control Files

XML files written to specify deployment options such as ‘Replace Folder’ or ‘Retain Mapping Persistent Values’

Used with:

Deploy Folder

Deploy Deployment Group

PMREP Commands

Repository Commands

ListObjects

Lists repository objects for a given type and folder

Listtablesbysess

Lists source and target table instance names for a given session

ListObjectDependencies

Lists objects dependent on another object (or objects if you use an input file) for a given type and folder

ObjectExport

ObjectImport

Import and export repository objects as XML files

Updateseqgenvals

Changes values for non-reusable sequence generators in a mapping

For instance, you can reset dimension key generator start values to 1 when reloading data from scratch (second initial load) in a data mart

Updatesrcprefix

Updatetargprefix

Change the value of a source or target owner name for a given table in a given session

PMREPAGENT Commands

Repository Commands

Backup

Backup a repository to a file. Repository must be stopped

Create

Create a new repository in a pre-configured database

Delete

Delete repository tables from a database

Restore

Restore a repository from a backup file to an empty database

Upgrade

Upgrade an existing repository to the latest version


Repository MX Views


Repository Views

Summary

Provided for reporting on the Repository

Historical load performance

Documentation

Dependencies

These views take most of the complexity out of the production repository tables

Use them whenever possible rather than going against the production repository tables

Never modify the production repository tables themselves


Repository Views

Accessing repository MX views

Cannot be accessed by Informatica directly

Direct access to these tables is prohibited by Informatica

You cannot import these table definitions in the Source designer either

This can however be circumvented:

Create a copy of the views using a different name in the production repository (potentially dangerous)

Create a copy of the views in a different database (safer but slower)

» use different view names

» create with an account that has read permission on the production repository views

Can be queried by other database tools

SQL*plus for Oracle or SQL query analyzer for MS SQL server

Perl scripts using DBI-DBD modules

PHP scripts


Repository Views

All views at a glance

REP_DATABASE_DEFS: a list of source subfolders for each folder

REP_SCHEMA: list of folders and version info

REP_SESSION_CNXS: info about database connections in reusable sessions

REP_SESSION_INSTANCES: info about session instances in workflows or worklets

REP_SRC_FILE_FLDS: detailed info about flat file, ISAM & XML source fields

REP_SRC_FILES: detailed info about flat file, ISAM & XML source definitions

REP_SRC_FLD_MAP: info about data transformations at the field level for relational sources

REP_SRC_MAPPING: sources for each mapping

REP_SRC_TBL_FLDS: detailed info about relational source fields

REP_SRC_TBLS: info about relational sources for each folder

REP_TARG_FLD_MAP: info about data transformations at the field level for relational targets

REP_TARG_MAPPING: targets for each mapping

REP_TARG_TBL_COLS: detailed info about relational target columns

REP_TARG_TBL_JOINS: primary/foreign key relationships between targets, per folder

REP_TARG_TBLS: info about relational targets per folder

REP_TBL_MAPPING: list of sources & targets per mapping, with filters, group bys and SQL overrides

REP_WORKFLOWS: limited info about workflows

REP_SESS_LOG: historical data about session runs

REP_SESS_TBL_LOG: historical load info for targets

REP_FLD_MAPPING: describes the data path from source field to target field

Repository Views

Usage

Dependencies

You are changing a source or a target table and need to know what mappings are affected

You are changing a database connection and need to know which sessions are affected

Useful Views

Source dependencies: REP_SRC_MAPPING

Target dependencies: REP_TARG_MAPPING

Connection dependencies: REP_SESSION_INSTANCES

REP_TARG_MAPPING columns: TARGET_NAME, TARG_BUSNAME, SUBJECT_AREA, MAPPING_NAME, VERSION_ID, VERSION_NAME, SOURCE_FILTER, CONDITIONAL_LOAD, GROUP_BY_CLAUSE, SQL_OVERRIDE, DESCRIPTION, MAPPING_COMMENT, MAPPING_LAST_SAVED

REP_SRC_MAPPING columns: SOURCE_NAME, SRC_BUSNAME, SUBJECT_AREA, MAPPING_NAME, VERSION_ID, VERSION_NAME, MAPPING_COMMENT, MAPPING_LAST_SAVED

REP_SESSION_INSTANCES columns: SUBJECT_AREA, WORKFLOW_NAME, SESSION_INSTANCE_NAME, IS_TARGET, VERSION_ID, CONNECTION_NAME, CONNECTION_ID

Subject area: name for folder in repository tables and views

VERSION_ID and VERSION_NAME refer to folder versioning

REP_TARG_MAPPING explains how the data is transformed from the source to the target

Repository Views

Dependencies

Example queries

Display all mappings in the TradeWind folder that have a source or a target named ‘customers’

select distinct mapping_name from rep_src_mapping
where source_name = 'customers' and subject_area = 'TradeWind'
union
select distinct mapping_name from rep_targ_mapping
where target_name like 'customers' and subject_area = 'TradeWind'

Display all workflows and worklets using a server target connection named ‘Target_DB’

select distinct workflow_name from rep_session_instances
where connection_name = 'Target_DB' and is_target = 1
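
To try the first dependency query without touching a real repository, it can be run against an in-memory SQLite stand-in. Everything in this Python sketch is an assumption made for illustration: the two tables only mimic the three view columns the query uses, the rows are made up, and a real repository would be queried through your own database driver.

```python
import sqlite3

# In-memory stand-ins for the MX views (only the columns the query needs).
conn = sqlite3.connect(":memory:")
conn.executescript("""
    create table rep_src_mapping (source_name text, subject_area text, mapping_name text);
    create table rep_targ_mapping (target_name text, subject_area text, mapping_name text);
""")
# Made-up sample rows.
conn.executemany("insert into rep_src_mapping values (?, ?, ?)", [
    ("customers", "TradeWind", "m_LoadCustomers"),
    ("orders", "TradeWind", "m_LoadOrders"),
])
conn.executemany("insert into rep_targ_mapping values (?, ?, ?)", [
    ("customers", "TradeWind", "m_CleanCustomers"),
    ("customers", "Sandbox", "m_Other"),
])

QUERY = """
    select distinct mapping_name from rep_src_mapping
    where source_name = ? and subject_area = ?
    union
    select distinct mapping_name from rep_targ_mapping
    where target_name = ? and subject_area = ?
"""


def mappings_using(table: str, folder: str) -> list:
    """Return the sorted names of mappings that read from or write to a table."""
    rows = conn.execute(QUERY, (table, folder, table, folder)).fetchall()
    return sorted(row[0] for row in rows)


print(mappings_using("customers", "TradeWind"))
```

Bound parameters replace the literal table and folder names, so the same query can be reused for any table.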


Repository Views

Usage

Session Performance

Run a report on historical load performance for given targets

Run a post-load check on warning, errors and rejected rows for all sessions within a folder

Useful Views

Target performance: REP_SESS_TBL_LOG

Session performance: REP_SESS_LOG

REP_SESS_LOG columns: SUBJECT_AREA, SESSION_NAME, SESSION_INSTANCE, SUCCESSFUL_ROWS, FAILED_ROWS, FIRST_ERROR_CODE, FIRST_ERROR_MSG, LAST_ERROR_CODE, LAST_ERROR, ACTUAL_START, SESSION_TIMESTAMP, SESSION_LOG_FILE, BAD_FILE_LOCATION

Both first and last error messages

Time when server received the start session request

A post-load process can access session logs and reject files

REP_SESS_TBL_LOG columns: SUBJECT_AREA, SESSION_NAME, SESSION_INSTANCE, TABLE_NAME, TABLE_BUSNAME, TABLE_INSTANCE_NAME, SUCCESSFUL_ROWS, FAILED_ROWS, LAST_ERROR, LAST_ERROR_CODE, START_TIME, END_TIME, SESSION_TIMESTAMP, BAD_FILE_LOCATION

START_TIME and END_TIME: start and end times for the writer stage

Repository Views

Session Performance

Example queries

Display historical load data and elapsed load times for a target called ‘T_Orders’ in the folder ‘TradeWind’

The method to compute the elapsed time is database dependent

select successful_rows, failed_rows, <end_time - start_time>
from rep_sess_tbl_log
where table_name = 'T_Orders' and subject_area = 'TradeWind'
order by session_timestamp desc
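
As one illustration of a database-dependent elapsed-time expression, the Python sketch below uses SQLite, where strftime('%s', ...) converts a timestamp to seconds. The table is an in-memory stand-in with made-up rows and only the columns the query needs; Oracle, MS SQL Server and others each have their own date arithmetic, so treat the expression as an example, not as the syntax for your repository database.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Stand-in for the REP_SESS_TBL_LOG view (assumption: timestamps stored as text).
conn.execute("""
    create table rep_sess_tbl_log (
        subject_area text, table_name text,
        successful_rows integer, failed_rows integer,
        start_time text, end_time text, session_timestamp text)
""")
# Made-up sample rows.
conn.executemany("insert into rep_sess_tbl_log values (?, ?, ?, ?, ?, ?, ?)", [
    ("TradeWind", "T_Orders", 1100, 0,
     "2004-11-25 02:00:00", "2004-11-25 02:04:00", "2004-11-25 02:00:00"),
    ("TradeWind", "T_Orders", 1200, 3,
     "2004-11-26 02:00:00", "2004-11-26 02:05:30", "2004-11-26 02:00:00"),
])

# SQLite flavour of <end_time - start_time>, expressed in seconds.
rows = conn.execute("""
    select successful_rows, failed_rows,
           strftime('%s', end_time) - strftime('%s', start_time) as elapsed_seconds
    from rep_sess_tbl_log
    where table_name = ? and subject_area = ?
    order by session_timestamp desc
""", ("T_Orders", "TradeWind")).fetchall()

for successful, failed, elapsed in rows:
    print(successful, failed, elapsed)
```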

Display a post-load report showing sessions that have error or warning messages

The load start time is taken from the production repository table opb_wflow_run

This query assumes there is only one workflow called ‘DailyDatawarehouseLoad’ in the repository

select session_instance_name, successful_rows, failed_rows, first_error_msg
from rep_sess_log
where subject_area = 'TradeWind'
and first_error_msg != 'No errors encountered.'
and session_timestamp >= (select max(start_time) from opb_wflow_run
where workflow_name = 'DailyDatawarehouseLoad')


Repository Views

Documentation

Usage

Document the schema (sources and targets with their respective fields) for a given folder or the entire repository

Useful Views

REP_SRC_TBLS

REP_TARG_TBLS

REP_SRC_TBL_FLDS

REP_TARG_TBL_COLS

REP_SRC_TBLS columns: TABLE_NAME, TABLE_BUSNAME, TABLE_ID, SUBJECT_AREA, DATABASE_TYPE, DATABASE_NAME, SCHEMA_NAME, FIRST_FIELD_ID, SOURCE_DESCRIPTION, VERSION_ID, VERSION_NAME, LAST_SAVED

REP_TARG_TBLS columns: SUBJECT_AREA, TABLE_NAME, BUSNAME, VERSION_ID, VERSION_NAME, DESCRIPTION, FIRST_COLUMN_ID, TABLE_CONSTRAINT, CREATE_OPTIONS, FIRST_INDEX_ID, LAST_SAVED

REP_SRC_TBL_FLDS columns: COLUMN_NAME, COLUMN_BUSNAME, COLUMN_ID, SUBJECT_AREA, TABLE_ID, TABLE_NAME, TABLE_BUSNAME, COLUMN_NUMBER, COLUMN_DESCRIPTION, KEY_TYPE, SOURCE_TYPE, DATA_PRECISION, DATA_SCALE, NEXT_COLUMN_ID, VERSION_ID, VERSION_NAME

REP_TARG_TBL_COLS columns: SUBJECT_AREA, TABLE_NAME, TABLE_BUSNAME, COLUMN_NAME, COLUMN_BUSNAME, COLUMN_NUMBER, COLUMN_ID, VERSION_ID, VERSION_NAME, DESCRIPTION, COLUMN_KEYTYPE, DATA_TYPE, DATA_TYPE_GROUP, DATA_PRECISION, DATA_SCALE, NEXT_COLUMN, IS_NULLABLE, SOURCE_COLUMN_ID

Repository Views

Documentation

Usage

Document the path from each source field to each target column, with the data transformations in between

For each mapping, document the source and target objects, including SQL overrides and group conditions

Useful Views

Source & Target level: REP_TBL_MAPPING

Field level: REP_FLD_MAPPING

REP_TBL_MAPPING columns: SOURCE_NAME, SRC_BUSNAME, TARGET_NAME, TARG_BUSNAME, SUBJECT_AREA, MAPPING_NAME, VERSION_ID, VERSION_NAME, SOURCE_FILTER, CONDITIONAL_LOAD, GROUP_BY_CLAUSE, SQL_OVERRIDE, DESCRIPTION, MAPPING_COMMENT, MAPPING_LAST_SAVED

SOURCE_FILTER, CONDITIONAL_LOAD, GROUP_BY_CLAUSE and SQL_OVERRIDE hold conditions defined in Source Qualifier properties, in Filter transformations and in Aggregator transformations

REP_FLD_MAPPING columns: SOURCE_FIELD_NAME, SRC_FLD_BUSNAME, SOURCE_NAME, SRC_BUSNAME, TARGET_COLUMN_NAME, TARG_COL_BUSNAME, SUBJECT_AREA, MAPPING_NAME, VERSION_ID, VERSION_NAME, TRANS_EXPRESSION, USER_COMMENT, DBA_COMMENT, MAPPING_COMMENT, MAPPING_LAST_SAVED

TRANS_EXPRESSION: digest of all transformations that occur between the source field and the target column. Sometimes cryptic and hard to read…

Repository Views - Documentation

Example queries

Display the source schema (all source definitions and field properties) for the folder ‘TradeWind’

The output is sorted by column number to keep it in sync with the field order of each source definition

select table_name, column_name, source_type, data_precision, data_scale
from rep_src_tbl_flds where subject_area = 'TradeWind'
and version_name = '010000' and table_name in
(select table_name from rep_src_tbls where subject_area = 'TradeWind')
order by table_name, column_number

Display the path of data from source field to target column, each with its concatenated transformation expression, for the mapping ‘OrdersTimeMetric’ in the folder ‘TradeWind’

select source_field_name, target_column_name, trans_expression
from rep_fld_mapping
where mapping_name = 'OrdersTimeMetric' and subject_area = 'TradeWind'


Repository Views - Documentation

Sample output

source_field_name: ShipCountry
target_column_name: Country
trans_expression: :SD.Orders.ShipCountry

source_field_name: RequiredDate
target_column_name: OnTime_Orders
trans_expression: SUM(IIF (DATE_COMPARE(iif (isnull(:SD.Orders.RequiredDate), :SD.Orders.ShippedDate,:SD.Orders.RequiredDate), :SD.Orders.ShippedDate) >= 0, 1, 0))

source_field_name: ShippedDate
target_column_name: OnTime_Orders
trans_expression: SUM(IIF (DATE_COMPARE(iif (isnull(:SD.Orders.RequiredDate), :SD.Orders.ShippedDate,:SD.Orders.RequiredDate), :SD.Orders.ShippedDate) >= 0, 1, 0))

source_field_name: OrderID
target_column_name: Late_Orders
trans_expression: COUNT(:SD.Orders.OrderID) SUM(IIF (DATE_COMPARE(iif (isnull(:SD.Orders.RequiredDate), :SD.Orders.ShippedDate,:SD.Orders.RequiredDate), :SD.Orders.ShippedDate) >= 0, 1, 0))

The RequiredDate and ShippedDate source fields both feed the OnTime_Orders column

:SD. is the prefix for a source definition