Memory Management

Programming Languages

Memory Management

Chapter 11

1

Definitions

• Memory management: the process of binding

values to memory locations.

• The memory accessible to a program is its

address space, represented as a set of values

{0, 1, …, n}.

– The numbers represent memory locations.

– These are logical addresses – do not always

correspond to physical addresses at runtime.

• The exact organization of the address space

depends on the operating system and the

programming language being used.

2

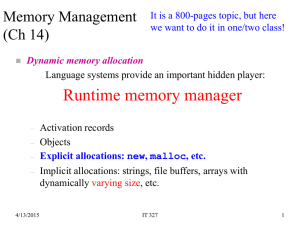

• Runtime memory management is an

important part of program meaning.

– The language run-time system creates &

deletes stack frames, creates & deletes

dynamically allocated heap objects – in

cooperation with the operating system

• Whether done automatically (as in Java or

Python), or partially by the programmer

(as in C/C++), dynamic memory

management is an important part of

programming language design.

3

Review Definitions

• Method: any subprogram (function,

procedure, subroutine) – depends on

language terminology.

• Environment of an active method: the

variables it can currently access plus their

addresses (a set of ordered pairs)

• State of an active method: variable/value

pairs

4

Three Categories of Memory

(for Data Store)

• Static: storage requirements are known

prior to run time; lifetime is the entire

program execution

• Run-time stack: memory associated with

active functions

– Structured as stack frames (activation records)

• Heap: dynamically allocated storage; the

least organized and most dynamic storage

area

5

Static Data Memory

• Simplest type of memory to manage.

• Consists of anything that can be completely

determined at compile time; e.g., global

variables, constants (perhaps), code.

• Characteristics:

– Storage requirements known prior to execution

– Size of static storage area is constant throughout

execution

6

Run-Time Stack

• The stack is a contiguous memory region

that grows and shrinks as a program runs.

• Its purpose: to support method calls

• It grows (storage is allocated) when the

activation record (or stack frame) is

pushed on the stack at the time a method

is called (activated).

• It shrinks when the method terminates and

storage is de-allocated.
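The push-on-call, pop-on-return behaviour can be imitated with an explicit list of activation records. This is only a sketch: the names `call`, `ret`, `stack`, and `depths` are hypothetical, and a real run-time system lays frames out in raw memory rather than in dictionaries.

```python
stack = []            # toy run-time stack of activation records
depths = []           # stack depth observed after each push

def call(name, **local_vars):
    """Push an activation record when a method is called."""
    stack.append({"method": name, "locals": local_vars})
    depths.append(len(stack))

def ret():
    """Pop the activation record when the method terminates."""
    stack.pop()

def factorial(n):
    call("factorial", n=n)
    result = 1 if n <= 1 else n * factorial(n - 1)
    ret()
    return result

print(factorial(4), max(depths), len(stack))   # 24 4 0
```

The stack grows to four frames while the recursion is active and is empty again once the outermost call returns.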

7

Run-Time Stack

• The stack frame has storage for local

variables, parameters, and return linkage.

• The size and structure of a stack frame are known at compile time, but its actual contents and time of allocation are unknown until runtime.

• How is variable lifetime affected by stack

management techniques?

8

Heap Memory

• Heap objects are allocated/deallocated dynamically as the program runs (not associated with a specific event such as function entry/exit).

• The kind of data found on the heap

depends on the language

– Strings, dynamic arrays, objects, and linked

structures are typically located here.

– Java and C/C++ have different policies.

9

Heap Memory

• Special operations (e.g., malloc, new)

may be needed to allocate heap storage.

• When a program deallocates storage

(free, delete) the space is returned to

the heap to be re-used.

• Space is allocated in variable sized blocks,

so deallocation may leave “holes” in the

heap (fragmentation).

– Compare to deallocation of stack storage

10

Heap Management

• Some languages (e.g. C, C++) leave heap

storage deallocation to the programmer

– free in C, delete in C++

• Others (e.g., Java, Perl, Python, list-processing languages) employ garbage collection to reclaim unused heap space.

11

The Structure of Run-Time Memory

Figure 11.1

These two areas grow

towards each other as

program events

require.

12

Stack Overflow

• The following relation must hold:

0 ≤ a ≤ h ≤ n

• Here a is the top of the stack, h is the first heap address, and n is the last address. If the stack top bumps into the heap (a > h), or if the beginning of the heap is greater than the end (h > n), there are problems!
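The relation can be written as a one-line check. This sketch assumes a is the stack top, h the first heap address, and n the last address; the function name `memory_ok` is hypothetical.

```python
def memory_ok(a: int, h: int, n: int) -> bool:
    """Return True when 0 <= a <= h <= n holds."""
    return 0 <= a <= h <= n

print(memory_ok(a=40, h=60, n=100))   # True: stack and heap are apart
print(memory_ok(a=61, h=60, n=100))   # False: the stack top has passed the heap start
```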

13

Heap Storage States

• For simplicity, we assume that memory

words in the heap have one of three

states:

– Unused: not allocated to the program yet

– Undef: allocated, but not yet assigned a value

by the program

– Contains some actual value

14

Heap Management Functions

• new returns the start address of a block of k

words of unused heap storage and changes

the state of the words from unused to undef.

– n ≤ k, where n is the number of words of storage needed; e.g., suppose a Java class Point has data members x, y, z, which are floats.

– If floats require 4 bytes of storage, then

Point firstCoord = new Point();

calls for at least 3 × 4 = 12 bytes to be allocated and initialized to some predetermined state.

15

Heap Overflow

• Heap overflow occurs when a call to new

occurs and the heap does not have a

contiguous block of k unused words

• So new either fails, in the case of heap

overflow, or returns a pointer to the new

block

16

Heap Management Functions

• delete returns a block of storage to the

heap

• The state of the returned words is reset to unused, and they are available to be allocated in response to a future new call.

• One cause of heap overflow is a failure on

the part of the program to return unused

storage.
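A toy model of the three word states and of new and delete might look like the following. It is a sketch under assumptions: the class `ToyHeap` is hypothetical, the heap is a Python list of words, and new scans from the low end for the first run of k unused words.

```python
UNUSED, UNDEF = "unused", "undef"

class ToyHeap:
    """Word-addressed heap; each word is UNUSED, UNDEF, or holds a value."""

    def __init__(self, size):
        self.words = [UNUSED] * size

    def new(self, k):
        """Return the start address of k contiguous unused words, marking them undef."""
        run = 0
        for addr, word in enumerate(self.words):
            run = run + 1 if word == UNUSED else 0
            if run == k:
                start = addr - k + 1
                self.words[start:addr + 1] = [UNDEF] * k
                return start
        raise MemoryError("heap overflow: no contiguous block of %d words" % k)

    def delete(self, start, k):
        """Return k words beginning at start to the unused state."""
        self.words[start:start + k] = [UNUSED] * k


heap = ToyHeap(8)
p = heap.new(5)          # words 0..4 go from unused to undef
heap.words[p] = 3.0      # one word now contains an actual value
try:
    heap.new(5)          # only 3 unused words remain
except MemoryError as err:
    print(err)
heap.delete(p, 5)        # the block is returned to the heap
q = heap.new(5)          # and can satisfy a later request
```

If the program never called delete, the second request would keep failing. That is the heap-overflow cause named in the last bullet.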

17

The New (5) Heap Allocation Function Call: Before and After

Figure 11.2

A before and after view of the heap. The “after” shows the effect of an operation requesting a size-5 block.

(Note difference between “undef” and “unused”.)

Deallocation reverses the process.

18

Heap Allocation

• Heap space isn’t necessarily allocated

and deallocated from one end (like the

stack) because the memory is not

allocated and deallocated in a predictable

(first-in, first-out or last-in, first-out) order.

• As a result, the location of the specific

memory cells depends on what is

available at the time of the request.

19

Choosing a Free Block

• The memory manager can adopt either a

first-fit or best-fit policy.

• Free list = a list of all the free space on the

heap: 4 bytes, 32 bytes, 1024 bytes, 16

bytes, …

• A request for 14 bytes could be satisfied:

– First-fit: from the 32-byte block (the first block on the list that is large enough)

– Best-fit: from the 16-byte block (the smallest block that is large enough)
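The two policies can be compared on the free list from the slide. This is a minimal sketch: the function names are hypothetical, and a real allocator would also split the chosen block and update the list.

```python
def first_fit(free_list, request):
    """Return the first block size that can hold the request, or None."""
    for size in free_list:
        if size >= request:
            return size
    return None

def best_fit(free_list, request):
    """Return the smallest block size that can hold the request, or None."""
    candidates = [size for size in free_list if size >= request]
    return min(candidates) if candidates else None

free_list = [4, 32, 1024, 16]        # block sizes in bytes, in list order
print(first_fit(free_list, 14))      # 32
print(best_fit(free_list, 14))       # 16
```

First-fit stops at the first adequate block, so it is cheaper per request. Best-fit examines the whole list but wastes only 2 bytes here instead of 18.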

20

Virtual versus Physical

• The view of a process address space as a

contiguous set of bytes consisting of static,

stack, and heap storage, is a view of the logical

(virtual) address space.

• The physical address space is managed by the

operating system, and may not resemble this

view at all.

– OS is responsible for mapping virtual memory to

physical memory and determining how much physical

memory a program can have at a time.

– Language is responsible for managing virtual/logical

memory

21

Pointers

• Pointers are addresses; i.e., the value of a

pointer variable is an address.

• Memory that is accessed through a pointer is usually dynamically allocated in the heap (in C/C++ a pointer can also hold the address of a static or stack variable).

• Java doesn’t have explicit pointers, but

reference types are represented by their

addresses and their storage is allocated on

the heap (although the reference is on the

stack).

22

11.2: Dynamic Arrays

• In addition to simple variables (ints, floats,

etc.) most imperative languages support

structured data types.

– Arrays: “[finite] ordered sequences of values

that all share the same type”

– Records (structs): “finite collections of values

that have different types”

23

Java versus C/C++/etc.

• In Java, arrays are always allocated

dynamically from heap memory.

• In many other languages:

– Globally defined arrays are in static memory.

– Arrays local to a function are in stack storage.

– Dynamically allocated arrays are in heap storage.

• Dynamically allocated arrays also have

storage on the stack – a reference (pointer)

to the heap block that holds the array.

24

Declaring Arrays

• Typical Java array declarations:

– int[] arr = new int[5];

– float[][] arr1 = new float [10][5];

– Object[] arr2 = new Object[100];

• Typical C/C++ array declarations

– int arr[5];

– float arr1[10][15];

– int *intPtr;

intPtr = new int[5]; // C++ only; C would call malloc

25

When Heap Allocation is Needed

• Consider the declaration

int A(n);

• Since array size isn’t known until runtime,

storage for the array can’t be allocated in

static storage or on the run-time stack.

• The stack contains the dope vector for the

array, including a pointer to its base

address, and the heap holds the array

values, in contiguous locations.

26

Array Allocation and

Referencing

• The dope vector has information needed to

interpret array references:

– Array base address

– Array size (number of elements)

for multi-dimensioned arrays, size of each dimension

– Element type (which indicates the amount of storage

required for each element)

• For dynamically allocated arrays, this information

should be stored in memory to access at runtime.

27

Allocation of Stack and Heap

Space for Array A Figure 11.3

28

Program Semantics for Arrays

skip to slide 39

• Semantics = program meaning

• If State is the set of all program states, the

meaning M of an abstract Clite Program is

defined by

– M: Program → State

– M: Statement × State → State

– M: Expression × State → Value

29

Program Semantics

• M: Program → State

– The meaning of a program is a function that produces a state.

• M: Statement × State → State

– The meaning of a statement is a function that, given a current state, yields a new state.

• M: Expression × State → Value

– The meaning of an expression is a function that, given a current state, yields a value.

30

Example

• For the Clite abstract syntax rule

Program = Declarations decpart;

Block body

the meaning rule is as follows:

“The meaning of a Program is defined to be the

meaning of its body when given an initial state

consisting of the variables of the decpart, each

initialized to the undef value corresponding to its

declared type.” (page 200)

31

1-D Array Semantics Notation

• From Chapter 5, page 116:

– addr(a[i]) = addr(a[0]) + e∙i where e is element

size

• From Chapter 2, page 53, abstract syntax

– ArrayRef = String id; Expression index;

– ArrayDecl = Variable v; Type t; Integer size;

– For ArrayDecl ad, ad.size = # of elements

– For ArrayRef ar, ar.index = value of index

expression
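As a worked instance of the address formula, here is a small sketch; the function name and the sample base address are hypothetical.

```python
def element_address(base, elem_size, i):
    """addr(a[i]) = addr(a[0]) + e * i for a 0-based, one-dimensional array."""
    return base + elem_size * i

# An array of 4-byte elements starting at address 1000:
print(element_address(base=1000, elem_size=4, i=3))   # 1012
```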

32

Array Semantics

• Assume:

– Array is dynamically declared

– Array is one dimension only

– Array element size is 1 (word)

– Array indexing is 0-based

• ad is the array declaration, ar is an array

reference.

33

Meaning Rule 11.1: The meaning of an

ArrayDecl ad is:

1. Compute addr(ad[0]) = new(ad.size).

(Allocate enough storage to hold size elements

of ad.type. addr(ad[0])= start address of

the block of storage)

2. Push addr(ad[0]) onto the stack.

3. Push ad.size onto the stack.

4. Push ad.type onto the stack.

Step 1 creates a heap block for ad.

Steps 2-4 create the dope vector for ad in the

stack.
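The four steps can be traced with a toy heap and stack. This is a sketch only: `heap`, `stack`, `new`, and `declare_array` are hypothetical stand-ins, and the toy `new` simply appends words at the end of the heap.

```python
heap = []     # toy heap: one list cell per word
stack = []    # toy run-time stack

def new(k):
    """Allocate k undef words at the end of the toy heap; return the start address."""
    start = len(heap)
    heap.extend(["undef"] * k)
    return start

def declare_array(size, elem_type):
    base = new(size)          # step 1: create the heap block
    stack.append(base)        # step 2: push addr(ad[0])
    stack.append(size)        # step 3: push ad.size
    stack.append(elem_type)   # step 4: push ad.type
    return base

declare_array(10, "int")
print(stack)                  # [0, 10, 'int']  (the dope vector)
print(len(heap))              # 10 words reserved for the elements
```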

34

Implementing the Meaning Rule

• The compiler generates code to perform

the steps outlined in the meaning rule and

incorporates them into the object code

wherever there is an array declaration.

• If ‘new’ fails, an exception is generated.

35

Meaning Rule 11.2 The meaning of an

ArrayRef ar for an array declaration ad is:

(assume element size is 1)

1. Compute

addr(ad[ar.index]) = addr(ad[0]) + (ar.index - 1)

(where ar.index - 1 is the offset of the referenced element from addr(ad[0]))

2. If addr(ad[0]) ≤ addr(ad[ar.index]) < addr(ad[0]) + ad.size,

then return the value at addr(ad[ar.index])

3. Otherwise, signal an index-out-of-range error.

36

Array Assignments: a[i] = Expr

Meaning Rule 11.3 The meaning of an array

Assignment as is:

1. Compute addr(ad[ar.index]) = addr(ad[0]) + (ar.index - 1)

2. If addr(ad[0]) ≤ addr(ad[ar.index]) < addr(ad[0]) + ad.size,

then assign the value of as.source to addr(ad[ar.index]) (the target)

3. Otherwise, signal an index-out-of-range error.

37

Example

The assignment A[5]=3 changes the value at

heap address addr(A[0])+4 to 3, since

ar.index=5 and addr(A[5])=addr(A[0])+4.

This assumes that the size of an int is one

word.
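The address computation and range check behind this example can be sketched as follows. The code uses the offset index - 1 exactly as the rules on the slides state it, with one-word elements. The function name and the dope-vector values (base 8, size 10) are hypothetical.

```python
def element_addr(base, size, index):
    """Offset is index - 1, as on the slides; then check the address range."""
    addr = base + (index - 1)
    if base <= addr < base + size:
        return addr
    raise IndexError("index out of range")

heap = ["undef"] * 20
base, size = 8, 10                      # hypothetical dope-vector contents for A
heap[element_addr(base, size, 5)] = 3   # A[5] = 3
print(heap.index(3) - base)             # 4, i.e. the value lands at addr(A[0]) + 4
```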

38

Alternative Storage Allocation

for Arrays and Structs

• C/C++ support static (globally defined) arrays

• C/C++ also have fixed stack-dynamic arrays

– Arrays declared in functions are allocated storage on

the stack, just like other local variables.

– Index range and element type are static

• Ada also permits (variable) stack-dynamic arrays

– Index range can be specified as a variable

Get(List_Len);

Declare

List: array (1 .. List_Len) of Integer;

39

11.2.1 Memory Leaks and

Garbage Collection

• The increasing popularity of OO

programming has meant more emphasis

on heap storage management.

• Active objects: can be accessed through a

pointer or reference.

• Inactive objects: blocks that cannot be

accessed; no reference exists.

• (Accessible and inaccessible may be more

descriptive.)

40

Review

• Three types of storage

– Static

– Stack

– Heap

• Problems with heap storage:

– Memory leaks (garbage): failure to free storage when

pointers (references) are reassigned

– Dangling pointers: when storage is freed, but

references to the storage still exist.

41

Allocation of Stack and Heap

Space for Array A Figure 11.3

42

Garbage

• Garbage: any block of heap memory that the program can no longer access (no pointer on the stack leads to it) but that the runtime system still treats as in use.

• Garbage is created in several ways:

– A function ends without returning the space

allocated to a local array or other dynamic

variable. The pointer (dope vector) is gone.

– A node is deleted from a linked data structure,

but isn’t freed

–…

43

Another Problem

• A second type of problem can occur when

a program assigns more than one pointer

to a block of heap memory

• The block may be deleted and one of the

pointers set to null, but the other pointers

still exist.

• If the runtime system reassigns the

memory to another object, the original

pointers pose a danger.

44

Terminology

• A dangling pointer (or dangling reference, or

widow) is a pointer (reference) that still contains

the address of heap space that has been

deallocated (returned to the free list).

• An orphan (garbage) is a block of allocated heap

memory that is no longer accessible through any

pointer.

• A memory leak is a gradual loss of available

memory due to the creation of garbage.

45

Widows and Orphans

Consider this code:

class node {

int value;

node next; }

. . .

node p, q;

p = new node();

q = new node();

. . .

q = p;

delete(p);

• The statement q = p;

creates a memory leak.

• The node originally

pointed to by q is no

longer accessible – it’s an

orphan (garbage).

• Now, add the statement

delete(p);

• The pointer p is correctly

set to null, but q is now a

dangling pointer (or

widow)

46

Creating Widows and Orphans: A Simple Example

Figure 11.4

(a): after new(p); new(q); (b): after q = p;

(c): after delete(p); q still points to a location in the heap,

which could be allocated to another request in the future.

The node originally pointed to by q is now garbage.
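Python has no delete operation on heap blocks, so the three steps can only be imitated. In this sketch a dictionary stands in for the heap and integers stand in for addresses. All names are hypothetical, and `orphan` exists only so the lost block can be inspected.

```python
import itertools

heap = {}                         # address -> node; a stand-in for allocated blocks
_addresses = itertools.count()    # hands out fresh toy addresses

def new_node():
    addr = next(_addresses)
    heap[addr] = {"value": 0, "next": None}
    return addr

def delete(addr):
    del heap[addr]                # the block goes back to the free list

p = new_node()
q = new_node()
orphan = q                        # remembered only so the sketch can inspect it
q = p                             # (b) the block q used to name is now unreferenced
delete(p)                         # (c) the shared block is freed
p = None                          # p is cleared, but q is not

print(orphan in heap)             # True: still allocated, yet unreachable (garbage)
print(q in heap)                  # False: q holds the address of a freed block (dangling)
```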

47

Python Memory Allocation

Variables contain references to data values

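(Diagram: A first refers to the value 3.5; after A = A*2, A refers to a new value, 7.0; after A = “cat”, A refers to the string cat.)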

Python may allocate new storage with each assignment, so it

handles memory management automatically. It will create

new objects and store them in memory; it will also execute

garbage collection algorithms to reclaim any inaccessible

memory locations.
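The rebinding behaviour can be observed directly. In this short sketch `alias` is a hypothetical second name, introduced so the original object stays visible.

```python
a = 3.5
alias = a          # a second reference to the same float object
a = a * 2          # builds a new object and rebinds a; the old object is untouched
print(a, alias)    # 7.0 3.5
a = "cat"          # a now refers to a string; nothing refers to the 7.0 object any more
print(a, alias)    # cat 3.5
```

Once no name refers to the 7.0 object, it is eligible to be reclaimed by the runtime.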

48

11.3 Garbage Collection

• All inaccessible blocks of storage are

identified and returned to the free list.

• The heap may also be compacted at this

time: allocated space is compressed into

one end of the heap, leaving all free space

in a large block at the other end.

49

Garbage Collection

• C & C++ leave it to the programmer – if an unused block

of storage isn’t explicitly freed by the program, it

becomes garbage.

– You can get C++ garbage collectors, but they aren’t standard

• Java, Python, Perl, (and other scripting languages) are

examples of languages with garbage collection

– Python, etc. also provide automatic allocation: no need for “new” statements

• Garbage collection was pioneered by languages like

Lisp, which constantly creates and destroys linked lists.

50

Implementing Automated

Garbage Collection

• If programmers were perfect, garbage

collection wouldn’t be needed. However,

...

• There are three major approaches to

automating the process:

– Reference counting

– Mark-sweep

– Copy collection

51

Reference Counting

• Initially, the heap is structured as a linked list

(free list) of nodes.

• Each node has a reference count field; initially 0.

• When a block is allocated it’s removed from the

free list and its reference count is set to 1.

• When pointers are assigned or freed the count

is incremented or decremented.

• When a block’s count goes back to zero, return

it to the free list and reduce the reference count

of any node it points to.

52

A Simple Illustration

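(Diagram: P and Q both point to the first node of a three-node linked list; the nodes' reference counts are 2, 1, and 1, and the last node's link is null.)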

“P = null” reduces the reference count of the first node to 1

“Q = null” reduces the reference count of the first node to 0, which

triggers the reduction of the reference count in node 2 to 0,

recursively reduces the ref. count in node 3 to 0, and then returns

all three nodes to the free list.
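The illustration can be replayed with explicit counters. This sketch assumes a hypothetical `Node` class, and the helper functions `add_ref` and `drop_ref` stand in for the bookkeeping a runtime would do on every pointer assignment.

```python
class Node:
    def __init__(self, name):
        self.name = name
        self.count = 0      # reference count
        self.next = None

free_list = []

def add_ref(node):
    if node is not None:
        node.count += 1

def drop_ref(node):
    """Decrement a count; at zero, free the node and release what it points to."""
    if node is None:
        return
    node.count -= 1
    if node.count == 0:
        free_list.append(node.name)
        drop_ref(node.next)
        node.next = None

n1, n2, n3 = Node("n1"), Node("n2"), Node("n3")
n1.next, n2.next = n2, n3
add_ref(n2); add_ref(n3)          # links inside the list
P = Q = n1
add_ref(n1); add_ref(n1)          # P and Q both refer to the first node

drop_ref(P); P = None             # count of n1 goes 2 -> 1
print(n1.count, free_list)        # 1 []
drop_ref(Q); Q = None             # 1 -> 0, which cascades through n2 and n3
print(free_list)                  # ['n1', 'n2', 'n3']
```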

53

Node Structure and Example Heap for Reference Counting

Figure 11.5

•There’s a block at the bottom whose reference count is 0.

What does this represent?

•What would happen if delete is performed on p and q?

54

Reference Counting: Pros and

Cons

• Advantage: the algorithm is performed

whenever there is an assignment or

other heap action. Overhead is

distributed over program lifetime

• Disadvantages are:

– Can’t detect inaccessible circular lists.

– Extra overhead due to reference counts

(storage and time).
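The circular-list problem shows up with just two nodes. In this sketch the counts are adjusted by hand, and the `Node` class is hypothetical.

```python
class Node:
    def __init__(self):
        self.count = 0
        self.next = None

a, b = Node(), Node()
a.count = b.count = 1      # one external pointer to each node
a.next, b.next = b, a      # the nodes also point to each other
a.count += 1
b.count += 1

# Drop both external pointers; nothing outside the pair refers to it now.
a.count -= 1
b.count -= 1
print(a.count, b.count)    # 1 1: neither count reaches zero, so neither node is freed
```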

55

Mark and Sweep

• Runs when the heap is full (free list is

empty or cannot satisfy a request).

• Two-pass process:

– Pass 1: All active references on the stack are

followed and the blocks they point to are

marked (using a special mark bit set to 1).

– Pass 2: The entire heap is swept, looking for

unmarked blocks, which are then returned to

the free list. At the same time, the mark bits

are turned off (set to 0).

56

Mark Algorithm

Mark(R): // R is a reference found on the stack
    if (R.MB == 0) {
        R.MB = 1; // mark the node
        if (R.next != null)
            Mark(R.next); // follow the link into the heap
    }

All reachable nodes are marked.

Starts in the stack, moves to the heap.

57

Sweep Algorithm

Sweep( ):
    i = h; // h = first heap address
    while (i <= n) { // n = last heap address
        if (i.MB == 0)
            free(i); // add node i to free list
        else
            i.MB = 0; // clear the mark for the next collection
        i++;
    }

Operates only on the heap.
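The two passes can be run on a small linked heap. This is a sketch under assumptions: the `Node` class and the five-node heap are hypothetical, each node has a single next link, and the mark pass is written as a loop rather than recursively.

```python
class Node:
    def __init__(self, name):
        self.name = name
        self.mb = 0         # mark bit
        self.next = None

def mark(node):
    """Pass 1: mark every node reachable from one stack reference."""
    while node is not None and node.mb == 0:
        node.mb = 1
        node = node.next

def sweep(heap):
    """Pass 2: free unmarked nodes and clear the mark bit on the rest."""
    free_list = []
    for node in heap:
        if node.mb == 0:
            free_list.append(node.name)
        else:
            node.mb = 0
    return free_list

heap = [Node(c) for c in "abcde"]
heap[0].next = heap[2]              # a -> c
heap[3].next = heap[4]              # d -> e
heap[4].next = heap[3]              # e -> d: an unreachable cycle
roots = [heap[0]]                   # the only reference held on the stack

for r in roots:
    mark(r)
freed = sweep(heap)
print(freed)                        # ['b', 'd', 'e']
```

The unreachable cycle d, e is reclaimed here because nothing on the stack leads to it.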

58

Node Structure and Example for Mark-Sweep Algorithm

Figure 11.6

Before the mark-sweep algorithm begins

59

Heap after Pass I of Mark-Sweep

Figure 11.7

After the first (mark) pass, accessible nodes are

marked, others aren’t

60

Heap after Pass II of Mark-Sweep

Figure 11.8

After the 2nd (sweep) pass: All inaccessible nodes are

linked into a free list; all accessible nodes have their mark

bits returned to 0

61

Mark and Sweep: Pros and

Cons

• Advantages:

– It may never run (it only runs when the heap

is full).

– It finds and frees all unused memory blocks.

• Disadvantage: It is very computation-intensive when it does run. Long, unpredictable delays are unacceptable for some applications.

62

Copy Collection

• Similar to mark and sweep in that it runs

when the heap is full.

• Faster than mark and sweep because it

only makes one pass through the heap.

• No extra reference count or mark bit

needed.

• The heap is divided into two halves,

from_space and to_space.

63

Copy Collection

(Stop and Copy)

• While garbage collection isn’t needed,

– From_space contains allocated nodes and nodes on

the free list.

– To_space is unusable.

• When there are no more un-allocated nodes in

from_space, “flip” the two spaces, and pack all

accessible nodes in the old from_space into the

new from_space. Any left-over space is the free

space.

64

Initial Heap Organization for Copy Collection

Figure 11.9

Not available

65

Result of a Copy Collection Activation

Figure 11.9

After “flipping” and repacking into

the former to_space.

(The accessible nodes are packed, orphans

are returned to the free_list, and the two

halves reverse roles.)

66

Discussion

• When an active object is copied to the

to_space, update any references

contained in the objects

• When copying is completed, the new

to_space contains only active objects, and

they are tightly packed into the space.

• Consequently, the heap is automatically

compacted (defragmented).
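The copy-and-update step can be sketched with list indices playing the role of addresses. The names `copy_collect` and `forward` and the object layout are hypothetical. The sketch copies recursively for brevity, which is not how a production collector would traverse the heap.

```python
def copy_collect(from_space, roots):
    """Copy every object reachable from roots into a fresh to_space.

    Objects are dicts whose 'next' field holds an address (list index) or None.
    Returns (to_space, new_roots).
    """
    to_space = []
    forward = {}                        # old address -> new address

    def copy(addr):
        if addr is None:
            return None
        if addr not in forward:
            forward[addr] = len(to_space)
            clone = dict(from_space[addr])
            to_space.append(clone)
            clone["next"] = copy(clone["next"])   # update the reference it contains
        return forward[addr]

    new_roots = [copy(r) for r in roots]
    return to_space, new_roots

from_space = [
    {"value": "a", "next": 3},
    {"value": "garbage", "next": None},
    {"value": "more garbage", "next": 1},
    {"value": "b", "next": 0},          # b points back to a
]
to_space, roots = copy_collect(from_space, roots=[0])
print(to_space)   # [{'value': 'a', 'next': 1}, {'value': 'b', 'next': 0}]
print(roots)      # [0]
```

Only the two reachable objects are copied. They end up adjacent, and their next fields hold the new addresses.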

67

Analysis

• Automatic compaction is the main

advantage of this method when compared

to mark-and-sweep.

• Disadvantages:

– All active objects must be copied: may take a

lot of time (not necessarily as much as the

two-pass algorithm).

– Requires twice as much space for the heap

68

Copy Collection v Mark-Sweep

• If r, the ratio of active heap blocks to heap size, is significantly less than 1/2, copy collection is more efficient

– Efficiency = amount of memory reclaimed per unit of time

• As r approaches 1/2, mark-sweep becomes more efficient

• Based on a study reported in Jones and Lins, 1996.

69

Garbage Collection Analysis

• Different languages and implementations will

probably use some variation or combination of one

of the above strategies.

• Java runs garbage collection as a background

process when demand on the system is low, hoping

that the heap will never be full.

• Java also allows programmers to explicitly request garbage collection (System.gc()), without waiting for the system to do it automatically; the request is only a hint to the JVM.

• Functional languages (Lisp, Scheme, …) also have

built-in garbage collectors

• C/C++ do not.

70

Garbage Collection

Summarized

• Some commercial applications divide

nodes into categories according to how

long they’ve been in memory

– The assumption is that long-resident nodes

are likely to be permanent – don’t examine

them

– New nodes are less likely to be permanent –

consider them first

– There may be several “aging” levels

71