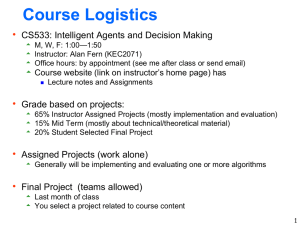

ppt - Kavraki Lab


An Incremental Sampling-based Algorithm for Stochastic Optimal Control

Martha Witick

Department of Computer Science

Rice University

Vu Anh Huynh, Sertac Karaman, Emilio Frazzoli. ICRA 2012.

Motion Planning!

• Continuous time

• Continuous state space

• Continuous controls

• Noisy

MDPs?


Motivation

• Continuous-time, continuous-space stochastic optimal control problems: no closed-form or exact algorithmic solutions

• Approximate the continuous problem with a discrete MDP and compute its solution

• ... but the computation grows exponentially with the dimension of the state and control spaces

• Sampling-based methods: fast and effective, but...

• RRT: not optimal

• RRT*: does not handle systems with uncertain dynamics

Overview

• Have a continuous time/space stochastic optimal control problem

• Want an optimal cost function J* and ultimately an optimal policy μ*

• Create a discrete-state Markov Decision Process and refine it, iterating until the current cost-to-go Ji* is close enough to J*

• This is what the incremental Markov Decision Process (iMDP) algorithm does.

Outline

• Continuous Stochastic Optimal Control Problem Definition

• Discrete Markov Chain approximation

• iMDP Algorithm

• iMDP Results

• Conclusion

Continuous Stochastic Dynamics

S ⊂ ℝ^dx, with interior S0 and smooth boundary δS

state x(t) ∊ S

control u(t)

time t ≥ 0

Consider a stochastic dynamical system whose evolution combines the robot's dynamics with a noise term.
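In symbols, and assuming the standard Itô form that matches the functions f and F listed later in the deck (drift f, diffusion F, and w(t) a standard Brownian motion; this notation is ours, not the slide's):

```latex
dx(t) = \underbrace{f\big(x(t), u(t)\big)\,dt}_{\text{robot's dynamics}}
      + \underbrace{F\big(x(t), u(t)\big)\,dw(t)}_{\text{noise}},
\qquad x(t) \in S,\quad u(t) \in U,\quad t \ge 0
```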


Continuous Stochastic Dynamics

[Figure: a sample path x(t) starting at x(0) in S0 and running until it reaches δS]

Solution paths look like this until x(t) hits δS.
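A sample path like the one in the figure can be generated numerically with the Euler-Maruyama scheme, which steps the SDE dx = f(x, u) dt + F(x, u) dw forward using Gaussian increments of variance dt. A minimal sketch, assuming a 1-D state space S = [0, 1], drift f(x, u) = u, constant noise F = 0.3, and a constant policy u = 1; these choices and the function name `simulate_until_exit` are hypothetical:

```python
import math
import random

def simulate_until_exit(x0, policy, f, F, in_interior, dt=1e-3, t_max=10.0, seed=0):
    """Euler-Maruyama path of dx = f(x, u) dt + F(x, u) dw, stopped at the first exit from S0."""
    rng = random.Random(seed)
    x, t = x0, 0.0
    path = [(t, x)]
    while in_interior(x) and t < t_max:     # t_max only guards against endless runs
        u = policy(x)                         # Markov control: depends on the state only
        dw = rng.gauss(0.0, math.sqrt(dt))    # Brownian increment ~ N(0, dt)
        x = x + f(x, u) * dt + F(x, u) * dw
        t += dt
        path.append((t, x))
    return path

# Hypothetical 1-D example: S = [0, 1], always push right with u = 1, noise level 0.3.
path = simulate_until_exit(
    x0=0.5,
    policy=lambda x: 1.0,
    f=lambda x, u: u,
    F=lambda x, u: 0.3,
    in_interior=lambda x: 0.0 < x < 1.0,
)
t_exit, x_exit = path[-1]
print(f"left S0 at t = {t_exit:.3f}, x = {x_exit:.3f}")
```

Every point except the last lies in S0; the last one is the first sample past δS, which approximates the first exit time.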


Continuous Stochastic Dynamics

A Markov control (or policy) μ: S → U needs only the current state: the control applied at time t ≥ 0 is u(t) = μ(x(t)).

Continuous Stochastic Dynamics

The expected cost-to-go function under policy μ is built from:

• the first exit time of x(t) from S0

• the discount rate α ∊ [0,1)

• the cost rate function g

• the terminal cost function h
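Assembled from the labels above (a reconstruction, not copied from the slide), and assuming the discount enters as α raised to the elapsed time since α ∊ [0,1), the cost-to-go of policy μ from an initial state z and the optimal cost-to-go J* are:

```latex
J_\mu(z) = \mathbb{E}\left[\int_0^{T_\mu} \alpha^{t}\, g\big(x(t), \mu(x(t))\big)\,dt
          + \alpha^{T_\mu}\, h\big(x(T_\mu)\big) \,\middle|\, x(0) = z\right],
\qquad T_\mu = \inf\{\, t \ge 0 : x(t) \notin S^0 \,\}

J^*(z) = \inf_{\mu} J_\mu(z)
```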

Continuous Stochastic Dynamics

• We want the optimal cost-to-go function J*:

• We want to compute J* so we can get its optimal policy μ*

• But solving this continuous problem is hard.
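For one fixed policy, J_μ(z) can at least be estimated by simulation; the hard part is the infimum over all policies. A minimal Monte Carlo sketch, assuming the same hypothetical 1-D setting as before (S = [0, 1], drift u, noise 0.3), cost rate g = 1, terminal cost 0 when exiting at x ≥ 1 and 5 otherwise, and discount α = 0.9 applied as α^t; the function name and all parameters are illustrative:

```python
import math
import random

def mc_cost_to_go(z, policy, f, F, g, h, in_interior, alpha,
                  dt=1e-3, runs=200, t_max=20.0, seed=0):
    """Monte Carlo estimate of J_mu(z) with discount alpha ** t, alpha in [0, 1)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(runs):
        x, t, cost = z, 0.0, 0.0
        while in_interior(x) and t < t_max:     # t_max truncates pathological runs
            u = policy(x)
            cost += alpha ** t * g(x, u) * dt      # discounted running cost
            x += f(x, u) * dt + F(x, u) * rng.gauss(0.0, math.sqrt(dt))
            t += dt
        cost += alpha ** t * h(x)                  # discounted terminal cost at exit
        total += cost
    return total / runs

# Hypothetical 1-D problem: S = [0, 1], exiting at x >= 1 is free, exiting at x <= 0 costs 5.
common = dict(f=lambda x, u: u, F=lambda x, u: 0.3, g=lambda x, u: 1.0,
              h=lambda x: 0.0 if x >= 1.0 else 5.0,
              in_interior=lambda x: 0.0 < x < 1.0, alpha=0.9)
J_right = mc_cost_to_go(0.5, policy=lambda x: 1.0, **common)
J_left = mc_cost_to_go(0.5, policy=lambda x: -1.0, **common)
# Each estimate bounds J*(0.5) from above; J* itself needs the infimum over all policies.
print(round(J_right, 2), round(J_left, 2))
```

Comparing a handful of policies like this only gives upper bounds on J*, which is why a systematic approximation scheme is needed.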

Solving this is hard

• Let's make a discrete model!


Markov Chain Approximation

Approximate the stochastic dynamics with a sequence of MDPs {Mn}, n = 0, 1, 2, ...

Discretize states: take a finite set of states Sn from S and assign transition probabilities Pn

Discretize time: assign a non-negative holding time Δtn(z) to each state z

Controls do not need to be discretized.
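To make the pieces of Mn concrete, the sketch below builds the transition probabilities Pn(· | z, v) for one state and one control from a Gaussian centred on z + f(z, v)Δt with variance F(z, v)²Δt, then checks that the mean and variance of the chain's step, divided by the holding time, recover f and F². It assumes a hypothetical 1-D model (f = v, F = 0.2) and uses a uniform grid instead of random samples so the check is deterministic; the function name is ours:

```python
import math

def gaussian_transitions(states, z, v, f, F, dt):
    """P_n(. | z, v): Gaussian weights centred at z + f(z, v) dt, variance F^2 dt, normalised over S_n."""
    mean, var = z + f(z, v) * dt, F(z, v) ** 2 * dt
    w = [math.exp(-(s - mean) ** 2 / (2.0 * var)) for s in states]
    total = sum(w)
    return [wi / total for wi in w]

# Hypothetical 1-D chain: S_n is a uniform grid on [0, 1], one holding time dt for all states.
states = [i / 100 for i in range(101)]
z, v, dt = 0.5, 0.5, 0.01
P = gaussian_transitions(states, z, v, f=lambda x, u: u, F=lambda x, u: 0.2, dt=dt)

mean_step = sum(p * (s - z) for p, s in zip(P, states))               # E[step | z, v]
var_step = sum(p * (s - z - mean_step) ** 2 for p, s in zip(P, states))  # Var[step | z, v]
print(f"{sum(P):.6f} {mean_step / dt:.4f} {var_step / dt:.4f}")
```

Here the two ratios come out at f(z, v) = 0.5 and F(z, v)² = 0.04, which is the kind of matching the local consistency property asks for.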

Markov Chain Approximation

Local Consistency Property:

• For all states z ∊ S,

• For all states z ∊ S and all controls v ∊ U,
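The conditions themselves did not survive extraction. Assuming they are the standard local consistency conditions of the Markov chain approximation method, with ξ_i^n the i-th state visited by the chain of Mn, u_i^n the control applied there, and Δξ_i^n = ξ_{i+1}^n - ξ_i^n (notation ours), they read:

```latex
% For all states z \in S:
\lim_{n\to\infty} \Delta t_n(z) = 0

% For all states z \in S and all controls v \in U:
\lim_{n\to\infty} \frac{\mathbb{E}\big[\Delta\xi_i^n \mid \xi_i^n = z,\; u_i^n = v\big]}{\Delta t_n(z)} = f(z, v)

\lim_{n\to\infty} \frac{\operatorname{Cov}\big[\Delta\xi_i^n \mid \xi_i^n = z,\; u_i^n = v\big]}{\Delta t_n(z)} = F(z, v)\,F(z, v)^{\mathsf{T}}

\lim_{n\to\infty} \sup_{i} \big\lVert \Delta\xi_i^n \big\rVert_2 = 0
```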


Markov Chain Approximation

Approximate the stochastic dynamics with a sequence of MDPs {Mn}, n = 0, 1, 2, ...

The control problem is analogous, so define a discrete discounted cost:

Continuous for comparison:
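A reconstruction of the discrete discounted cost, assuming the discount enters as α raised to the accumulated holding time t_i^n, with I_n the first step at which the chain reaches a boundary state. The integral of the continuous cost becomes a sum of g·Δtn terms, and the cost induces a Bellman equation whose right-hand side combines the same four ingredients (holding time, cost rate g, discount α, expected cost-to-go) that Update() uses:

```latex
J_{n,\mu}(z) = \mathbb{E}\left[\sum_{i=0}^{I_n - 1} \alpha^{t_i^n}\, g\big(\xi_i^n, \mu(\xi_i^n)\big)\,\Delta t_n(\xi_i^n)
             + \alpha^{t_{I_n}^n}\, h\big(\xi_{I_n}^n\big) \,\middle|\, \xi_0^n = z\right],
\qquad t_i^n = \sum_{j=0}^{i-1} \Delta t_n(\xi_j^n)

% Bellman equation for interior states z (boundary states keep J_n(z) = h(z)):
J_n(z) = \min_{v \in U}\Big[\, g(z, v)\,\Delta t_n(z)
       + \alpha^{\Delta t_n(z)} \sum_{y \in S_n} P_n(y \mid z, v)\, J_n(y) \Big]
```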


Discontinuity and Remarks

• f, F, g, and h may be discontinuous and the approximation still works

• The controlled Markov chain has a discrete state space and the stochastic dynamical system has a continuous one, but BOTH have a continuous control space.



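iMDP Algorithm

[Figure: sampled state zs, its nearest state znearest, the trajectory x:[0,t] from znearest toward zs, and the new state z, inside S0 with boundary δS]

Set the 0th MDP M0 to empty

while n < N do

Mn <- Mn-1 (the nth MDP starts as a copy of the (n-1)th)

Sample a state from δS and add it to Mn

Sample zs from S0

Set znearest to the nearest state in Sn

Compute a trajectory x:[0,t] with control u from znearest toward zs

Set z to x(t) and add it to Sn

Compute the cost of z and save it

Update() the new state z and Kn states in Sn

n = n+1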
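A compact, runnable sketch of the loop above, under loudly stated assumptions: a hypothetical 1-D problem (S = [0, 1], dx = u dt + 0.2 dw with u ∈ [-1, 1], g = 1, h(0) = 5, h(1) = 0, α = 0.9), a hypothetical holding-time schedule, Kn = ⌈|Sn|^θ⌉ with θ = 0.5, and a Gaussian transition model over the ⌈log |Sn|⌉ nearest states. The helper names (`holding_time`, `nearest`, `update`, `imdp`) are ours, and the steering rule and initial cost estimate are simple stand-ins rather than the paper's exact choices:

```python
import math
import random

# Hypothetical 1-D problem (not from the slides): S = [0, 1], boundary {0, 1},
# dx = u dt + SIGMA dw with u in [-1, 1], cost rate g = 1, discount ALPHA ** t.
SIGMA, ALPHA = 0.2, 0.9

def h(x):
    return 0.0 if x >= 0.5 else 5.0          # terminal cost: 0 at x = 1, 5 at x = 0

def holding_time(n_states):
    # Hypothetical schedule that shrinks as the MDP grows.
    return 0.5 * math.sqrt(math.log(n_states + 1) / (n_states + 1))

def nearest(states, x, k, exclude=None):
    idx = [i for i in range(len(states)) if i != exclude]
    return sorted(idx, key=lambda i: abs(states[i] - x))[:k]

def update(m, i, rng):
    """Bellman backup at state i over ceil(log |S_n|) sampled controls."""
    n = len(m["z"])
    z, tau = m["z"][i], holding_time(n)
    k = max(1, math.ceil(math.log(n)))
    near = nearest(m["z"], z, k, exclude=i)                   # Z_near
    for _ in range(k):
        v = rng.uniform(-1.0, 1.0)
        mean, var = z + v * tau, SIGMA ** 2 * tau              # Gaussian transition model
        w = [math.exp(-(m["z"][j] - mean) ** 2 / (2 * var)) for j in near]
        if sum(w) == 0.0:
            continue
        expected = sum(wj * m["J"][j] for wj, j in zip(w, near)) / sum(w)
        J_new = 1.0 * tau + ALPHA ** tau * expected            # g * tau + discounted cost-to-go
        if J_new < m["J"][i]:
            m["J"][i], m["mu"][i], m["dt"][i] = J_new, v, tau

def imdp(N, theta=0.5, seed=0):
    rng = random.Random(seed)
    m = {"z": [], "J": [], "mu": [], "dt": [], "bd": []}      # M_0 is empty

    def add(z, J, u, dt, bd):
        for key, val in zip(("z", "J", "mu", "dt", "bd"), (z, J, u, dt, bd)):
            m[key].append(val)
        return len(m["z"]) - 1

    for _ in range(N):
        zb = rng.choice([0.0, 1.0])                            # sample from the boundary
        if zb not in m["z"]:                                   # 1-D boundary has only two points
            add(zb, h(zb), 0.0, 0.0, True)
        zs = rng.uniform(0.0, 1.0)                             # sample from the interior
        zn = nearest(m["z"], zs, 1)[0]
        tau = holding_time(len(m["z"]))
        u = max(-1.0, min(1.0, (zs - m["z"][zn]) / tau))       # steer toward zs
        # z = end of a noise-free steering step, clamped inside S0
        z = min(max(m["z"][zn] + u * tau, 1e-9), 1.0 - 1e-9)
        i = add(z, tau + ALPHA ** tau * m["J"][zn], u, tau, False)  # simple initial cost
        interior = [j for j in range(len(m["z"])) if not m["bd"][j]]
        K = math.ceil(len(m["z"]) ** theta)                    # K_n = ceil(|S_n| ** theta)
        for j in [i] + rng.sample(interior, min(K, len(interior))):
            update(m, j, rng)
    return m

m = imdp(200)
print(len(m["z"]), round(max(m["J"]), 3))
```

Because a state's cost is only overwritten when the new value is lower, each stored estimate is non-increasing across iterations, and `m["mu"]` holds the current per-state controls.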

iMDP Algorithm: Update()

Update() the new state z and Kn states in Sn. For each such state z:

Uniformly sample or create Cn controls Un from z toward its nearest Cn states

For each control v in Un:

Compute new transition probabilities to the nearest log(|Sn|) states Znear

Compute a new cost J from the holding time τ, the cost rate function g, the discount rate α, and the expected cost-to-go to Znear under the nth policy

If the new cost J is less than the old cost:

Update z's cost, policy, holding time, and number of states in Sn
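To isolate a single Update() call, the sketch below runs one Bellman backup on a hand-built five-state MDP. It assumes a hypothetical 1-D model (drift f(z, v) = v, noise 0.2, cost rate g = 1, α = 0.9 applied as α^τ), passes the candidate controls explicitly (the "create" option) so the result is deterministic, and uses Gaussian transition probabilities over the ⌈log |Sn|⌉ nearest states; all names and values are illustrative:

```python
import math

# Hypothetical 1-D model: drift f(z, v) = v, noise F = SIGMA, cost rate g = 1,
# discount ALPHA in [0, 1) applied as ALPHA ** tau.
SIGMA, ALPHA = 0.2, 0.9

def update(states, J, mu, dt, i, controls, tau):
    """One Update() call at states[i]; returns True if its cost-to-go improved."""
    n = len(states)
    k = max(1, math.ceil(math.log(n)))                   # |Z_near| = ceil(log |S_n|)
    others = [j for j in range(n) if j != i]
    z_near = sorted(others, key=lambda j: abs(states[j] - states[i]))[:k]
    improved = False
    for v in controls:
        # Gaussian transition probabilities centred on z + f(z, v) * tau, variance SIGMA^2 * tau.
        mean, var = states[i] + v * tau, SIGMA ** 2 * tau
        w = [math.exp(-(states[j] - mean) ** 2 / (2 * var)) for j in z_near]
        total = sum(w)
        if total == 0.0:
            continue
        expected = sum(wj * J[j] for wj, j in zip(w, z_near)) / total
        J_new = 1.0 * tau + ALPHA ** tau * expected       # g * tau + discounted expected cost-to-go
        if J_new < J[i]:                                   # keep only improvements
            J[i], mu[i], dt[i] = J_new, v, tau
            improved = True
    return improved

states = [0.0, 0.25, 0.5, 0.75, 1.0]
J = [5.0, 3.0, 2.0, 1.0, 0.0]           # hypothetical current cost-to-go estimates
mu, dt = [0.0] * 5, [0.0] * 5
improved = update(states, J, mu, dt, 2, controls=[-1.0, 0.0, 1.0], tau=0.1)
print(improved, mu[2], round(J[2], 2))  # True 1.0 1.09
```

Moving toward the cheaper right-hand neighbour (v = 1) is the only control that beats the old cost of 2, so it becomes the state's new policy.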


iMDP: Generating a Policy
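The details of this slide did not survive extraction. One simple way to turn the per-state controls μn(z) into a feedback policy for the continuous system, assumed here rather than taken from the slide, is to apply the control stored at the nearest state in Sn, giving a piecewise-constant policy. A 1-D sketch with hypothetical names and values:

```python
import bisect

def make_policy(states, controls):
    """Piecewise-constant feedback policy: apply the control stored at the nearest state (1-D)."""
    order = sorted(range(len(states)), key=lambda i: states[i])
    xs = [states[i] for i in order]
    us = [controls[i] for i in order]

    def policy(x):
        k = bisect.bisect_left(xs, x)         # index of the first stored state >= x
        if k == 0:
            return us[0]
        if k == len(xs):
            return us[-1]
        # choose the closer of the two neighbouring stored states
        return us[k] if xs[k] - x < x - xs[k - 1] else us[k - 1]

    return policy

# Hypothetical states S_n and their stored controls mu_n(z).
policy = make_policy([0.1, 0.9, 0.5], [1.0, -1.0, 0.0])
print([policy(x) for x in (0.2, 0.6, 0.95)])  # [1.0, 0.0, -1.0]
```

Sorting once keeps each query at O(log |Sn|); in higher dimensions a k-d tree or similar nearest-neighbour structure would play the same role.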

iMDP Time Complexity

• |Sn|^θ states updated and log(|Sn|) controls processed per iteration, with θ ∈ (0, 1]

• Time complexity when Pn is constructed with linear equations: O(n |Sn|^θ log |Sn|)

• Time complexity when Pn is constructed with a Gaussian distribution: O(n |Sn|^θ (log |Sn|)^2)

• Space complexity: O(|Sn|)

• State space size: |Sn| = Θ(n)
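Reading the per-iteration cost off the first bound as O(|S_k|^θ log |S_k|) and using |S_k| = Θ(k), the total over n iterations follows by bounding every term by the largest one (a worked step, not from the slides):

```latex
\sum_{k=1}^{n} O\big(|S_k|^{\theta}\log|S_k|\big)
  = \sum_{k=1}^{n} O\big(k^{\theta}\log k\big)
  \le n \cdot O\big(n^{\theta}\log n\big)
  = O\big(n^{1+\theta}\log n\big)
```

The Gaussian construction carries one extra log factor per iteration, giving O(n^{1+θ} (log n)^2) by the same argument.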

iMDP Analysis



Results

Experiment (a): convergence of iMDP applied to a stochastic LQR problem

With dynamics dx =


Results

Experiment (b): a system with stochastic single-integrator dynamics reaching a goal region (upper right) with free ending time in a cluttered environment.

Results

• Experiment (c): noise-free versus noisy, σ = 0.37

Note that (b) failed to find a valid trajectory.

Conclusions

• The iMDP algorithm:

• incrementally builds discrete MDPs to approximate continuous problems

• improves the cost every iteration (value iteration), with a novel approach to computing Bellman updates

• has a fast total processing time, O(k^(1+θ) log k) for k states

• removes the need for the point-to-point steering used by RRT-style planners (e.g. RRT*)