Crowdsourcing Complexity: Lessons for Software Engineering

Lydia Chilton

2 June 2014 ICSE Crowdsourcing Workshop

Clarification: Human Computation

Mechanical Turk

Microtasks:

2007: JavaScript Calculator

Evolution of Complexity in Human Computation

Task Decomposition: Cascade & Frenzy

Evolution of Complexity

1. Collective Intelligence

1906: 787 aggregated votes averaged 1197 lbs.

Actual answer: 1198 lbs.

1. Collective Intelligence

Principles:

– Small tasks

– Intuitive tasks

– Independent answers

– Simple aggregation

Application:

- ESP Game
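The "simple aggregation" principle can be illustrated with a minimal Python sketch: independent estimates are combined with nothing more than a mean or a median. The function name and the sample guesses are hypothetical and chosen only for illustration; they are not the 1906 data.

```python
from statistics import mean, median


def aggregate_estimates(estimates):
    """Combine independent numeric guesses with simple statistics."""
    if not estimates:
        raise ValueError("need at least one estimate")
    return {"mean": mean(estimates), "median": median(estimates)}


# Hypothetical guesses from five independent workers (illustrative only).
guesses = [1100, 1250, 1180, 1300, 1170]
summary = aggregate_estimates(guesses)
print(summary)
```

No worker sees another worker's guess, so the aggregation step is the only place where the answers meet.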

2. Iterative Workflows

work → improve → vote → improve → vote

Collective Intelligence

2. Iterative Workflows

Principles:

– Use fresh eyes

– Vote to ensure improvement

Application:

- Bug finding

“given enough eyeballs, all bugs are shallow”
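A minimal sketch of an improve-then-vote loop, assuming the workflow keeps a revision only when voters prefer it to the current best. The `improve` and `prefers_new` callables are hypothetical stand-ins for crowd tasks; here they are simulated with simple rules.

```python
def iterate(initial, improve, prefers_new, rounds=3):
    """Run improve/vote rounds; keep a revision only if voters prefer it."""
    best = initial
    for _ in range(rounds):
        candidate = improve(best)         # a fresh worker revises the current best
        if prefers_new(best, candidate):  # other workers vote: old vs. new
            best = candidate
    return best


# Simulated workers (assumptions): each revision appends a word, and
# voters prefer the longer text as long as it stays within 20 characters.
improve = lambda text: text + " more"
prefers_new = lambda old, new: len(old) < len(new) <= 20

final = iterate("draft", improve, prefers_new, rounds=5)
print(final)
```

The vote acts as a guard: a revision that voters reject never replaces the current best, so quality cannot regress between rounds.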

3. Psychological Boundaries

Applications:

• Manager / programmer

• Writer / editor

• Write code / test code

• Addition / subtraction

Principle:

– Task switching is hard

– Natural boundaries for tasks

4. Task Decomposition

Legion:Scribe: Real-Time Audio Captioning on MTurk

4. Task Decomposition

Principles:

– Must be able to break apart tasks AND put them back together.

– Complex aggregation

– Hint: Solve backwards. Find what people can do, and build up from there.

5. Worker Choice

Mobi: Trip Planning on MTurk with an open UI.

5. Worker Choice

Applications:

• Trip planning

• Conference time table

• Conference session-making

Principles:

- Giving workers freedom relieves requesters’ burden of task decomposition.

- Workers feel more involved and empowered.

- BUT the interface is complex and difficult to scale.

6. Learning and Doing

Applications:

• Peer assessment

• Do grading assignments before you do your own assignment

• Task Feedback

Principles:

- Teaching workers makes them better.

- How long will they stay?

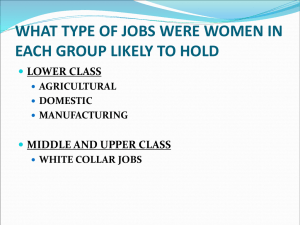

Lessons for Software Engineering

1. Propose and vote.

2. Find natural psychological boundaries between tasks.

3. Find the tasks people can do, then assemble them using complex aggregation techniques.

4. Teach.

[Figure: arithmetic examples, 221 + 473 and -221 + 473]

Evolution of Complexity in Human Computation

Task Decomposition: Cascade & Frenzy

Task decomposition is the key to crowdsourcing software engineering

Cascade: Crowdsourcing Taxonomy Creation

Lydia Chilton (UW), Greg Little (oDesk), Darren Edge (MSR Asia), Dan Weld (UW), James Landay (UW)

Problem

• 1000 eGovernment suggestions

• 50 top product reviews

• 100 employee feedback comments

• 1000 answers to “Why did you decide to major in Computer Science?”

Machines can’t analyze it

People don’t have time to analyze it

1. time consuming

2. overwhelming

3. no right answer

Solution

Solution: Crowdsourced Taxonomies

Toy Application: Colors

Initial Prototypes

Iterative Improvement

Problems

1. The hierarchy grows and becomes overwhelming

2. Workers have to decide what to do

Lesson

Break up the task more

Initial Approach 2: Category Comparison

Problem

Without context, it’s hard to judge relationships

• flying vs. flights

• TSA liquids vs. removing liquids

• Packing vs. what to bring

Lesson

Don’t compare abstractions to abstractions.

Instead, compare data to abstractions.

Use Lesson #3

Find the tasks people can do. Assemble them using complex aggregation techniques.

Generate Labels

Select Best Labels

Categorize

Cascade Algorithm

For a subset of items:

Generate Labels

Select Best Labels → {good labels}: Blue, Light Blue, Green, Greenish, Red, Gold

For all items, for all good labels:

Categorize

Then recurse
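The three steps can be sketched as a single pass in Python. This is a minimal illustration under stated assumptions, not the Cascade implementation: `suggest_label`, `is_good_label`, and `fits` are hypothetical callables standing in for the three crowd tasks, the color names are made up, and the "then recurse" step is omitted.

```python
def cascade_pass(items, suggest_label, is_good_label, fits, subset_size=4):
    """One generate / select / categorize pass over a flat list of items."""
    # Generate Labels: ask for one label per item in a small subset.
    suggested = []
    for item in items[:subset_size]:
        label = suggest_label(item)
        if label not in suggested:
            suggested.append(label)
    # Select Best Labels: keep only the labels that voters accept.
    good_labels = [label for label in suggested if is_good_label(label)]
    # Categorize: for all items, for all good labels, ask "does it fit?"
    return {label: [item for item in items if fits(item, label)]
            for label in good_labels}


# Simulated crowd answers (assumptions, for illustration only).
colors = ["navy", "sky blue", "forest green", "lime green", "crimson"]
result = cascade_pass(
    colors,
    suggest_label=lambda item: item.split()[-1],
    is_good_label=lambda label: label in {"blue", "green"},
    fits=lambda item, label: label in item,
)
print(result)
```

Items that match no good label (here "navy" and "crimson") are the ones a further pass would need to pick up.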

Aggregate Data into Taxonomy

Labels: Blue, Light Blue, Green, Greenish, Red, Gold

Label relationships: redundant, nested, singletons

Resulting taxonomy: Blue, Light Blue, Green, Other
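One plausible reading of this aggregation step, sketched in Python. The rules are assumptions made for illustration: labels covering the same items count as redundant, a label whose items are a strict subset of another's counts as nested, and labels with at most one item are folded into "Other". The item sets are hypothetical.

```python
def build_taxonomy(categories):
    """categories maps label -> set of items; returns (parents, other_items)."""
    kept, other = {}, set()
    for label, members in categories.items():
        if len(members) <= 1:
            other |= members                      # singleton: goes to "Other"
        elif any(members == seen for seen in kept.values()):
            continue                              # redundant: same items as a kept label
        else:
            kept[label] = set(members)
    parents = {}
    for label, members in kept.items():
        # Nested: the parent is the smallest kept label that strictly contains this one.
        supersets = [p for p, pm in kept.items() if members < pm]
        parents[label] = min(supersets, key=lambda p: len(kept[p])) if supersets else None
    return parents, other


# Hypothetical categorization results (illustrative only).
categories = {
    "Blue": {"navy", "sky", "powder"},
    "Light Blue": {"sky", "powder"},
    "Green": {"lime", "forest"},
    "Greenish": {"lime", "forest"},
    "Red": {"crimson"},
    "Gold": {"gold"},
}
parents, other = build_taxonomy(categories)
print(parents, other)
```

With these inputs the sketch places "Light Blue" under "Blue", drops "Greenish" as a duplicate of "Green", and moves the single-item labels into "Other".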

Cascade Results: 100 Colors

How can we get a global picture from workers who see only subsets of the data?

Propose, Vote, Test

1. Workers have good heuristics.

2. Let them propose categories.

3. Vote on categories to weed out bad ones.

4. Test the heuristics by verifying them on data.

Propose

Vote

Test

Lesson

Propose, Vote, Test.
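A minimal Python sketch of the propose, vote, test pattern, assuming fixed thresholds for both the vote and the test. The function name, thresholds, vote counts, and item strings are all hypothetical.

```python
def propose_vote_test(proposals, vote_counts, items, fits, min_votes=2, min_items=2):
    """Keep proposed categories that win enough votes AND hold up on real data."""
    # Vote: weed out proposals that too few workers endorse.
    voted = [p for p in proposals if vote_counts.get(p, 0) >= min_votes]
    # Test: verify each surviving category against every item.
    tested = {p: [item for item in items if fits(item, p)] for p in voted}
    return {p: members for p, members in tested.items() if len(members) >= min_items}


# Simulated crowd input (assumptions, for illustration only).
proposals = ["video", "gaze", "misc"]
vote_counts = {"video": 3, "gaze": 2, "misc": 0}
items = ["video scrubbing", "video navigation", "gaze selection"]

accepted = propose_vote_test(
    proposals, vote_counts, items, fits=lambda item, label: label in item
)
print(accepted)
```

In this run "misc" fails the vote and "gaze" passes the vote but fails the test, because only one item fits it.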

Deploy Cascade to Real Needs

1. CHI 2013 Program Committee

Organize 430 accepted papers to help session making

2. 40 CrowdCamp Hack-a-thon Participants

Organize 100 hack-a-thon ideas to help organize teams

430 CHI Papers: Good Results, but…

Category labels (with paper counts):

• Visualization (19)

• evaluating infovis (9)

• text (2)

• video (6)

• visualizing time data (5)

• gaze (4)

• gaze tracking (3)

• user requirements (3)

• color schemes (2)

Paper titles shown:

• Patina: Dynamic Heatmaps for Visualizing Application Usage

• Effects of Visualization and Note-Taking on Sensemaking and Analysis

• Contextifier: Automatic Generation of Messaged Visualizations

• Interactive Horizon Graphs: Improving the Compact Visualization of Time Series

• Quantity Estimation in Visualizations of Tagged Text

• Motif Simplification: Improving Network Visualization Readability with Fan, Connector, and Clique

• Evaluation of Alternative Glyph Designs for Time Series Data in a Small Multiple Setting

• Individual User Characteristics and Information Visualization: Connecting the Dots through Eye Tra

• "Without the Clutter of Unimportant Words": Descriptive Keyphrases for Text Visualization

• Direct Space-Time Trajectory Control for Visual Media Editing

• Your eyes will go out of the face: Adaptation for virtual eyes in video see-through HMDs

• Swifter: Improved Online Video Scrubbing

• Direct Manipulation Video Navigation in 3D

• NoteVideo: Facilitating Navigation of Blackboard-style Lecture Videos

• Ownership and Control of Point of View in Remote Assistance

• EyeContext: Recognition of High-level Contextual Cues from Human Visual Behaviour

• Still Looking: Investigating Seamless Gaze-supported Selection, Positioning, and Manipulation of D

“Don’t treat me like a Turker.”

“I just want to see all the data”

Lesson

Authority and responsibility should be aligned.

Frenzy: Collaborative Data Organization for Creating Conference Sessions

Lydia Chilton (UW), Juho Kim (MIT), Paul Andre (CMU), Felicia Cordeiro (UW), James Landay (Cornell?), Dan Weld (UW), Steven Dow (CMU), Rob Miller (MIT), Haoqi Zhang (NW)

Groupware

Creating conference sessions is a social process.

Grudin: Social processes are often guided by personalities, tradition, and convention.

Challenge: support the process without seeking to replace these behaviors.

Challenge: remain flexible and do not impose rigid structures.

DEMO

Light-weight contributions

Label

Vote

Categorize

2-Stage Workflow

Stage 1

Set-up

Collect Meta Data

• 60 PC members

• Low authority

• Low responsibility

Stage 2

Session Making

• 11 PC members

• High authority

• High responsibility

Goals

Collect data: labels, votes

Session making

Results

Sessions created in a record-setting 88 minutes.

Lessons for Software Engineering

1. Propose and vote.

2. Find natural psychological boundaries between tasks.

3. Find the tasks people can do, then assemble them using complex aggregation techniques.

4. Teach.

5. Propose, vote, test.

6. Align authority and responsibility.