HPC105 - Advanced Research Computing at UM (ARC)

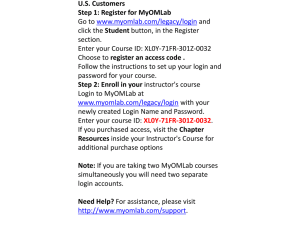

Flux for PBS Users
HPC 105
Dr. Charles J Antonelli
LSAIT ARS
August, 2013

Flux
Flux is a university-wide shared computational discovery / high-performance computing service.
• Interdisciplinary
• Provided by Advanced Research Computing at U-M (ARC)
• Operated by CAEN HPC
• Hardware procurement, software licensing, billing support by U-M ITS
• Used across campus
• Collaborative since 2010
  • Advanced Research Computing at U-M (ARC)
  • College of Engineering’s IT Group (CAEN)
  • Information and Technology Services
  • Medical School
  • College of Literature, Science, and the Arts
  • School of Information
http://arc.research.umich.edu/resources-services/flux/
cja 2013 8/13

The Flux cluster …

Flux node
• 12 Intel cores
• 48 GB RAM
• Local disk
• Ethernet
• InfiniBand

Flux Large Memory node
• 40 Intel cores
• 1 TB RAM
• Local disk
• Ethernet
• InfiniBand

Flux hardware
• 8,016 Intel cores, 632 Flux nodes
• 200 Intel Large Memory cores, 5 Flux Large Memory nodes
• 48/64 GB RAM/node; 1 TB RAM/Large Memory node
• 4 GB RAM/core (allocated); 25 GB RAM/Large Memory core
• 4X InfiniBand network (interconnects all nodes)
  • 40 Gbps, <2 µs latency
  • Latency an order of magnitude less than Ethernet
• Lustre filesystem
  • Scalable, high-performance, open
  • Supports MPI-IO for MPI jobs
  • Mounted on all login and compute nodes

Flux software
• Licensed software: http://cac.engin.umich.edu/resources/software/flux-software, et al.
• Compilers & Libraries:
  • Intel, PGI, GNU
  • OpenMP
  • OpenMPI

Using Flux
Three basic requirements to use Flux:
1. A Flux account
2. An MToken (or a Software Token)
3. A Flux allocation

Using Flux
1.
A Flux account
• Allows login to the Flux login nodes
• Develop, compile, and test code
• Available to members of the U-M community, free
• Get an account by visiting https://www.engin.umich.edu/form/cacaccountapplication

Flux Account Policies
To qualify for a Flux account:
• You must have an active institutional role on the Ann Arbor campus
  • Not a Retiree or Alumni role
• Your uniqname must have a strong identity type
  • Not a friend account
• You must be able to receive email sent to uniqname@umich.edu
• You must have run a job in the last 13 months
http://cac.engin.umich.edu/resources/systems/user-accounts

Using Flux
2.
An MToken (or a Software Token)
• Required for access to the login nodes
• Improves cluster security by requiring a second means of proving your identity
• You can use either an MToken or an application for your mobile device (called a Software Token) for this
• Information on obtaining and using these tokens at http://cac.engin.umich.edu/resources/login-nodes/tfa

Using Flux
3.
A Flux allocation
• Allows you to run jobs on the compute nodes
• Current rates (through June 30, 2016):
  • $18 per core-month for Standard Flux
  • $24.35 per core-month for Large Memory Flux
  • $8 cost-share per core-month for LSA, Engineering, and Medical School
• Details at http://arc.research.umich.edu/resources-services/flux/flux-pricing/
• To inquire about Flux allocations please email fluxsupport@umich.edu

Flux Allocations
To request an allocation send email to fluxsupport@umich.edu with:
• the type of allocation desired (Regular or Large-Memory)
• the number of cores needed
• the start date and number of months for the allocation
• the shortcode for the funding source
• the list of people who should have access to the allocation
• the list of people who can change the user list and augment or end the allocations
http://arc.research.umich.edu/resources-services/flux/managing-a-flux-project/

Flux Allocations
• An allocation specifies resources that are consumed by running jobs
  • Explicit core count
  • Implicit memory usage (4 or 25 GB per core)
• When any resource is fully in use, new jobs are blocked
• An allocation may be ended early, on the monthly anniversary
• You may have multiple active allocations
  • Jobs draw resources from all active allocations

lsa_flux Allocation
• LSA funds a shared allocation named lsa_flux
  • Usable by anyone in the College
  • 60 cores
  • For testing, experimentation, exploration
  • Not for production runs
• Each user is limited to 30 concurrent jobs
https://sites.google.com/a/umich.edu/fluxsupport/support-for-users/lsa_flux

Monitoring Allocations
• Visit https://mreports.umich.edu/mreports/pages/Flux.aspx
• Select your allocation from the list at upper left
  • You’ll see all allocations you can submit jobs against
• Four sets of outputs
  • Allocation details (start & end date, cores, shortcode)
  • Financial overview (cores allocated vs.
    used, by month)
  • Usage summary table (core-months by user and month)
    • Drill down for individual job run data
  • Usage charts (by user)
• Details & screenshots: http://arc.research.umich.edu/resources-services/flux/check-my-flux-allocation/

Storing data on Flux
• Lustre filesystem mounted on /scratch on all login, compute, and transfer nodes
  • 640 TB of short-term storage for batch jobs
  • Pathname depends on your allocation and uniqname, e.g., /scratch/lsa_flux/cja
  • Can share through UNIX groups
  • Large, fast, short-term
  • Data deleted 60 days after allocation expires
  • http://cac.engin.umich.edu/resources/storage/flux-high-performance-storage-scratch
• NFS filesystems mounted on /home and /home2 on all nodes
  • 80 GB of storage per user for development & testing
  • Small, slow, long-term

Storing data on Flux
• Flux does not provide large, long-term storage
• Alternatives:
  • LSA Research Storage
  • ITS Value Storage
  • Departmental server
• CAEN HPC can mount your storage on the login nodes
  • Issue the df -kh command on a login node to see what other groups have mounted

Storing data on Flux
• LSA Research Storage
  • 2 TB of secure, replicated data storage
  • Available to each LSA faculty member at no cost
  • Additional storage available at $30/TB/yr
  • Turn in existing storage hardware for additional storage
• Request by visiting https://sharepoint.lsait.lsa.umich.edu/Lists/Research%20Storage%20Space/NewForm.aspx?RootFolder=
  • Authenticate with Kerberos login and password
  • Select NFS as the method for connecting to your storage

Copying data to Flux
• Using the transfer host:
  rsync -avz /your/cluster1/directory fluxxfer.engin.umich.edu:newdirname
  rsync -avz /your/cluster1/directory fluxxfer.engin.umich.edu:/scratch/youralloc/youruniqname
• Or use scp, sftp, WinSCP, Cyberduck, FileZilla
• http://cac.engin.umich.edu/resources/login-nodes/transfer-hosts

Globus Online
• Features
  • High-speed data transfer, much faster than SCP or SFTP
  • Reliable & persistent
  • Minimal
    client software: Mac OS X, Linux, Windows
• GridFTP Endpoints
  • Gateways through which data flow
  • Exist for XSEDE, OSG, …
  • UMich: umich#flux, umich#nyx
  • Add your own server endpoint: contact flux-support@umich.edu
  • Add your own client endpoint!
• More information: http://cac.engin.umich.edu/resources/login-nodes/globus-gridftp

Connecting to Flux
  ssh flux-login.engin.umich.edu
• Log in with token code, uniqname, and Kerberos password
• You will be randomly connected to a Flux login node
  • Currently flux-login1 or flux-login2
• Do not run compute- or I/O-intensive jobs here
  • Processes are killed automatically after 30 minutes
• Firewalls restrict access to flux-login. To connect successfully, either:
  • Physically connect your ssh client platform to the U-M campus wired or MWireless network, or
  • Use VPN software on your client platform, or
  • Use ssh to log in to an ITS login node (login.itd.umich.edu), and ssh to flux-login from there

Lab 1
Task: Use the multicore package
• The multicore package allows you to use multiple cores on the same node
  module load R
• Copy sample code to your login directory
  cd
  cp ~cja/hpc-sample-code.tar.gz .
  tar -zxvf hpc-sample-code.tar.gz
  cd ./hpc-sample-code
• Examine Rmulti.pbs and Rmulti.R
• Edit Rmulti.pbs with your favorite Linux editor
  • Change the #PBS -M email address to your own

Lab 1
Task: Use the multicore package
• Submit your job to Flux
  qsub Rmulti.pbs
• Watch the progress of your job
  qstat -u uniqname
  where uniqname is your own uniqname
• When complete, look at the job’s output
  less Rmulti.out

Lab 2
Task: Run an MPI job on 8 cores
• Compile c_ex05
  cd ~/cac-intro-code
  make c_ex05
• Edit the file run with your favorite Linux editor
  • Change the #PBS -M address to your own
    • I don’t want Brock to get your email!
  • Change #PBS -A allocation to FluxTraining_flux, or to your own allocation, if desired
  • Change #PBS -l allocation to flux
• Submit your job
  qsub run

PBS resources (1)
A resource (-l) can specify:
• Request wallclock (that is, running) time
  -l walltime=HH:MM:SS
• Request C MB of memory per core
  -l pmem=Cmb
• Request T MB of memory for the entire job
  -l mem=Tmb
• Request M cores on arbitrary node(s)
  -l procs=M
• Request a token to use licensed software
  -l gres=stata:1
  -l gres=matlab
  -l gres=matlab%Communication_toolbox

PBS resources (2)
A resource (-l) can specify:
• For multithreaded code:
  • Request M nodes with at least N cores per node
    -l nodes=M:ppn=N
  • Request M cores with exactly N cores per node (note the difference vis-à-vis ppn syntax and semantics!)
    -l nodes=M,tpn=N
    (you’ll only use this for specific algorithms)

Interactive jobs
• You can submit jobs interactively:
  qsub -I -V -l procs=2 -l walltime=15:00 -A youralloc_flux -l qos=flux -q flux
• This queues a job as usual
• Your terminal session will be blocked until the job runs
• When it runs, you will be connected to one of your nodes
• Invoked serial commands will run on that node
• Invoked parallel commands (e.g., via mpirun) will run on all of your nodes
• When you exit the terminal session, your job is deleted
• Interactive jobs allow you to
  • Test your code on cluster node(s)
  • Execute GUI tools on a cluster node with output on your local platform’s X server
  • Utilize a parallel debugger interactively

Lab 3
Task: compile and execute an MPI program on a compute node
• Copy sample code to your login directory:
  cd
  cp ~brockp/cac-intro-code.tar.gz .
  tar -xvzf cac-intro-code.tar.gz
  cd ./cac-intro-code
• Start an interactive PBS session
  qsub -I -V -l procs=2 -l walltime=30:00 -A FluxTraining_flux -l qos=flux -q flux
• On the compute node, compile & execute MPI parallel code:
  cd $PBS_O_WORKDIR
  mpicc -O3 -ipo -no-prec-div -xHost -o c_ex01 c_ex01.c
  mpirun -np 2 ./c_ex01

Lab 4
Task: Run Matlab interactively
  module load matlab
• Start an interactive PBS session
  qsub -I -V -l procs=2 -l walltime=30:00 -A FluxTraining_flux -l qos=flux -q flux
• Run Matlab in the interactive PBS session
  matlab -nodisplay

The Scheduler (1/3)
Flux scheduling policies:
• The job’s queue determines the set of nodes you run on (flux, fluxm)
• The job’s account determines the allocation to be charged
  • If you specify an inactive allocation, your job will never run
• The job’s resource requirements help determine when the job becomes eligible to run
  • If you ask for unavailable resources, your job will wait until they become free
• There is no pre-emption

The Scheduler (2/3)
Flux scheduling policies:
• If there is competition for resources among eligible jobs in the allocation or in the cluster, two things help determine when you run:
  • How long you have waited for the resource
  • How much of the resource you have used so far
  • This is called “fairshare”
• The scheduler will reserve nodes for a job with sufficient priority
  • This is intended to prevent starving jobs with large resource requirements

The Scheduler (3/3)
Flux scheduling policies:
• If there is room for shorter jobs in the gaps of the schedule, the scheduler will fit smaller jobs in those gaps
  • This is called “backfill”
[Figure: backfill diagram, cores vs. time]

Job monitoring
There are several commands you can run to get some insight into your jobs’ execution:
• freenodes: shows the number of free nodes and cores currently available
• mdiag -a youralloc_name: shows resources defined for your allocation and who can run against it
• showq -w acct=yourallocname: shows
  jobs using your allocation (running/idle/blocked)
• checkjob jobid: can show why your job might not be starting
• showstart -e all jobid: gives you a coarse estimate of job start time; use the smallest value returned

Job Arrays
• Submit copies of identical jobs
• Invoked via qsub -t:
  qsub -t array-spec pbsbatch.txt
  where array-spec can be
    m-n
    a,b,c
    m-n%slotlimit
  e.g.
  qsub -t 1-50%10
  Fifty jobs, numbered 1 through 50, only ten can run simultaneously
• $PBS_ARRAYID records the array identifier

Dependent scheduling
• Submit jobs whose execution scheduling depends on other jobs
• Invoked via qsub -W:
  qsub -W depend=type:jobid[:jobid]…
  where type can be
    after        Schedule after jobids have started
    afterok      Schedule after jobids have finished, only if no errors
    afternotok   Schedule after jobids have finished, only if errors
    afterany     Schedule after jobids have finished, regardless of status
• Inverted semantics for before, beforeok, beforenotok, beforeany

Some Flux Resources
• http://arc.research.umich.edu/resources-services/flux/
  U-M Advanced Research Computing Flux pages
• http://cac.engin.umich.edu/
  CAEN HPC Flux pages
• http://www.youtube.com/user/UMCoECAC
  CAEN HPC YouTube channel
• For assistance: flux-support@umich.edu
  • Read by a team of people including unit support staff
  • Cannot help with programming questions, but can help with operational Flux and basic usage questions

Any Questions?
Charles J. Antonelli
LSAIT Advocacy and Research Support
cja@umich.edu
http://www.umich.edu/~cja
734 763 0607
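The "A Flux allocation" slide prices allocations per core-month, and the "Flux Allocations" slide says memory is implied by the core count (4 GB per core, or 25 GB per Large Memory core). The charge therefore works out as cores × months × rate, and the memory as cores × GB per core. The sketch below assumes the rates listed on that slide still apply and ignores the cost-share. `flux_cost` and `flux_mem_gb` are hypothetical helper names, not Flux commands.

```bash
#!/bin/bash
# Hypothetical helper (not a Flux tool): estimated allocation charge in dollars,
# computed as cores x months x rate, where rate is dollars per core-month.
flux_cost() {
    local cores=$1 months=$2 rate=$3
    # awk handles fractional rates such as 24.35, which bash integer math cannot
    awk -v c="$cores" -v m="$months" -v r="$rate" \
        'BEGIN { printf "%.2f\n", c * m * r }'
}

# Hypothetical helper: memory implied by an allocation, in GB.
# Use 4 GB/core for standard Flux, 25 GB/core for Large Memory Flux.
flux_mem_gb() {
    local cores=$1 gb_per_core=$2
    echo $(( cores * gb_per_core ))
}

flux_cost 12 6 18        # 12 standard cores for 6 months
flux_cost 40 1 24.35     # 40 Large Memory cores for 1 month
flux_mem_gb 12 4         # memory implied by 12 standard cores
```

The first call prints 1296.00 and the last prints 48.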
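Labs 1 and 2 submit prepared scripts (Rmulti.pbs, run) whose contents are not shown in the slides. The following is a minimal sketch of such a batch script, written under assumptions. It combines the -A, -q, -l qos and -l resource options from the "PBS resources" and "Interactive jobs" slides. The job name, email address, youralloc_flux and the 4000 MB pmem value are placeholders. `-N`, `-m abe` (mail on abort, begin and end) and `-j oe` (merge stderr into stdout) are standard Torque qsub options that the slides do not cover.

```bash
#!/bin/bash
# Sketch of a Flux batch script; every value below is a placeholder.
#PBS -N example_job
#PBS -M uniqname@umich.edu
#PBS -m abe
#PBS -A youralloc_flux
#PBS -q flux
#PBS -l qos=flux
#PBS -l procs=4
#PBS -l walltime=01:00:00
#PBS -l pmem=4000mb
#PBS -j oe
#PBS -V

# Batch jobs start in $HOME; move to the directory qsub was run from.
# The ":-." fallback lets the script also run outside PBS for testing.
cd "${PBS_O_WORKDIR:-.}" || exit 1

# Under Torque, $PBS_NODEFILE lists one hostname per allocated core.
if [ -n "${PBS_NODEFILE:-}" ] && [ -r "$PBS_NODEFILE" ]; then
    ncores=$(wc -l < "$PBS_NODEFILE")
    ncores=$((ncores))   # normalize any padding wc adds
else
    ncores=1
fi
echo "Job ${PBS_JOBID:-local} on ${HOSTNAME:-unknown} with $ncores core(s)"

# Launch the real work here, e.g. the MPI program from Lab 3:
# mpirun -np "$ncores" ./c_ex01
```

Saved as, say, example.pbs, it would be submitted with `qsub example.pbs` and watched with `qstat -u uniqname`, as in Lab 1.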
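The "Job Arrays" slide notes that each copy of an array job sees its own index in $PBS_ARRAYID. A common pattern is to map that index to a per-task input. In the sketch below, the input_NN.txt naming, the `input_for_task` helper and the commented-out program are hypothetical. The allocation and queue values are placeholders, as in the earlier slides.

```bash
#!/bin/bash
#PBS -N array_example
#PBS -A youralloc_flux
#PBS -q flux
#PBS -l qos=flux
#PBS -l procs=1
#PBS -l walltime=00:30:00
# Submit from a login node with, e.g.:  qsub -t 1-50%10 array_example.pbs

# Hypothetical naming scheme: array index 7 -> input_07.txt
input_for_task() {
    printf 'input_%02d.txt\n' "$1"
}

cd "${PBS_O_WORKDIR:-.}" || exit 1

# $PBS_ARRAYID holds this copy's index; default to 1 when run outside PBS
task=${PBS_ARRAYID:-1}
input=$(input_for_task "$task")
echo "Task $task processing $input"
# ./myprogram "$input"   # hypothetical program
```

With `-t 1-50%10`, the fifty copies would read input_01.txt through input_50.txt, at most ten at a time.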
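The "Dependent scheduling" slide gives the `depend=type:jobid[:jobid]…` syntax. A minimal sketch of chaining two jobs relies on the fact that Torque's qsub prints the new job's ID on stdout. The `depend_opt` helper and the script names preprocess.pbs and analyze.pbs are hypothetical. The submission step runs only where qsub exists, such as a Flux login node.

```bash
#!/bin/bash
# Hypothetical helper: build a "-W" value in the form type:jobid[:jobid]...
# e.g. depend_opt afterok 101 102  ->  depend=afterok:101:102
depend_opt() {
    local dtype=$1
    shift
    local IFS=:            # "$*" joins the remaining job IDs with ':'
    echo "depend=${dtype}:$*"
}

# Only submit when qsub is available (i.e. on a Flux login node).
if command -v qsub >/dev/null 2>&1; then
    pre=$(qsub preprocess.pbs)                             # qsub prints the job ID
    qsub -W "$(depend_opt afterok "$pre")" analyze.pbs     # runs only if $pre succeeds
fi
```

Swapping `afterok` for `afterany` would run the analysis step even if preprocessing fails.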