Machine Learning: Decision Tree Learning & ML Paradigms Guide

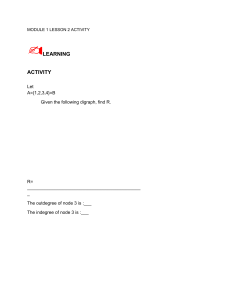

Machine Learning: Decision Tree Learning

Introduction

Decision tree learning is a fundamental component of data science and machine learning. Decision trees make a useful baseline model in machine learning projects because they are easy to interpret, work for both classification and regression tasks, handle data efficiently, and can capture non-linear relationships.

We will address open-ended questions such as:

1. What are decision trees and how do they work? What are the essential elements of a decision tree (root, nodes, branches, and leaves)? How do feature selection and splitting criteria operate in the tree-growing process?
2. What are the different machine learning paradigms, which paradigm does this model belong to, and why?
3. What are the pros and cons of decision trees? What are their benefits for data processing and interpretability? What are the typical pitfalls, such as instability and overfitting, and how can they be corrected?
4. What are the advanced decision tree techniques? Which tree-based ensemble techniques, such as Random Forests and Gradient Boosting, improve performance? How do methods like cross-validation and pruning help build stronger decision trees?
5. How can decision trees be used to solve real-world problems? What are some specific decision tree applications across different industries? What real-world analytical problem could be addressed by the decision tree learning method?

This presentation aims to give an in-depth explanation of decision tree learning by going over its core ideas, real-world applications, and advanced methods. By the end of the presentation, the audience will have a thorough understanding of decision tree learning, including its benefits and limitations, how it operates, its significance in data science, and its potential real-world applications.
We will first introduce the different machine learning paradigms and explain what they are. We will then describe which paradigm this model belongs to and why, how the model works, and which components it consists of. We will conclude with an example of its application to a real-world analytical problem.

Introducing the different Machine learning paradigms

Machine learning paradigms are the broad approaches used to train a model on data. The three paradigms covered here are supervised, unsupervised, and semi-supervised learning. Each paradigm is different and suits different applications and datasets.

Supervised learning

In the supervised learning paradigm, the model is trained on a labelled dataset, meaning that every training example comes with an output label. To predict labels accurately for new, unseen data, the model must learn the mapping from inputs to outputs. Example algorithms include linear regression, logistic regression, decision trees, random forests, k-nearest neighbours (k-NN), support vector machines (SVM), and neural networks.

This paradigm can be used for:

- Classification: predicting discrete labels (e.g., image classification, spam detection).
- Regression: predicting continuous values (e.g., stock price forecasting, housing price prediction).

Unsupervised learning

Unsupervised learning trains models on unlabelled data without any explicit supervision or direction. The aim is to find patterns, connections, or structures in the data without the use of labels or categories. Example algorithms include K-means clustering, hierarchical clustering, principal component analysis (PCA), autoencoders, and t-distributed stochastic neighbour embedding (t-SNE).
This paradigm can be applied to:

- Clustering: dividing data items into discrete groups (e.g., market basket analysis, consumer segmentation).
- Dimensionality reduction: lowering the feature count while keeping the most important information (e.g., data visualization, noise reduction).

Semi-supervised learning

Semi-supervised learning falls between supervised and unsupervised learning. Training uses a relatively small amount of labelled data together with a large amount of unlabelled data: the labelled data directs the learning process, while the unlabelled data supplies additional structure. Example algorithms include self-training, co-training, graph-based techniques, transductive learning approaches, and semi-supervised support vector machines (SVMs).

This paradigm is useful when labelled data is scarce but unlabelled data is abundant, such as medical diagnosis with few labelled samples or natural language processing with partially labelled text.

Which Paradigm this model belongs to and what it is

Decision tree learning is a supervised machine learning method used for classification and regression. It builds a model that learns simple decision rules from the data features in order to predict the value of a target variable. The model is depicted as a tree structure in which each interior node represents a feature (attribute), each branch represents a decision rule, and each leaf node represents an outcome (label).

The model belongs to the supervised learning paradigm because it uses labelled training data to learn how to map input features to the target variable (class labels for classification, continuous values for regression).
The goal of the model is to use the rules it learned during training to predict the output label for new, unseen input data.

Key Components

- Root Node: The topmost node of the decision tree. It holds the feature that best divides the full dataset into smaller groups.
- Internal Nodes: Nodes that represent a decision based on an input feature. Every internal node corresponds to a test on a feature, such as "Is age > 30?"
- Branches: The edges that connect the nodes. Each branch represents one outcome of the test at its parent node.
- Leaf Nodes: Terminal nodes that hold the predicted result, either a class label or a regression value.

Types of Decision Trees

- Classification Trees: Used when the target variable is categorical. The leaves represent class labels, and the branches represent the conjunctions of feature tests that lead to those labels.
- Regression Trees: Used when the target variable is continuous. The leaves hold the predicted continuous value.

How the Decision Tree Method works

Decision tree learning uses the data attributes to learn decision rules and builds from them a model that predicts the value of a target variable. Conceptually, the method works as follows:

1. Initialization: Begin with the complete dataset at the root of the tree. Each observation in the dataset has several features and a target variable (label).
2. Selecting the Best Feature for Splitting: At each node, choose the best feature on which to split the data. The aim is to find the most homogeneous subgroups, that is, subsets in which the target variable is as similar as possible. Candidate splits are scored with a criterion such as information gain (for ID3), Gini impurity (for CART), or another metric.
3. Splitting the Node: Divide the data into subsets according to the selected feature and its values. Every subset creates a branch that extends from the node.
Continuous features may require a threshold (such as "Is age > 30?"). For categorical features, this could mean creating a branch for every category.
4. Creating Sub-Nodes and Recursive Splitting: Repeat the selection and splitting steps for each branch (subset): choose the best feature, divide the data, and form sub-nodes. Split every new node recursively until a stopping condition is satisfied.
5. Stopping Conditions:
   - Pure Nodes: All the observations in a node belong to the same class (classification) or have very similar values (regression).
   - Maximum Depth: The tree reaches a predetermined maximum depth.
   - Minimum Samples per Leaf: A further split would leave a leaf with fewer observations than the required minimum.
   - No Improvement: Additional splitting does not appreciably improve the homogeneity of the nodes.
6. Assigning Labels to Leaf Nodes: Give a node a label when it becomes a leaf (i.e., when it can no longer be split). For classification, the label is usually the class shared by most observations in the leaf. For regression, the label is usually the mean or median of the target values in the leaf.

Advantages and Limitations of the Decision Tree

Advantages:

- Simple to Visualize and Interpret: The tree structure is easy to read and understand.
- Needs Minimal Data Preprocessing: Normalization and feature scaling are not required.
- Handles Both Categorical and Numerical Data: A variety of data types can be used.
- Non-linear Relationships: Able to capture non-linear relationships between the features and the target variable.

Limitations:

- Overfitting: Overly complex trees fit the training set too closely and generalize poorly.
- Instability: A slight change in the data can produce an entirely different tree.
- Bias: Decision trees can become biased when a single feature dominates the splits.

Strategies to mitigate Limitations

1. Pruning
   Pruning removes branches of the tree that contribute little predictive power for the target variable.
   Types:
   - Pre-pruning (Early Stopping): Stop growing the tree once it reaches a certain depth or once additional splitting no longer yields an appreciable improvement in performance.
   - Post-pruning: Let the tree grow fully, then use a validation set to cut off branches that contribute little.
   Benefits: Simplifies the model and improves its generalization to new data, which reduces overfitting.
2. Cross-Validation
   Description: Apply cross-validation techniques to assess the decision tree's performance on various data subsets.
   Types:
   - K-Fold Cross-Validation: Divide the data into k subsets, train on k-1 subsets, and validate on the remaining subset. Repeat k times so that each subset is used once for validation.
   - Leave-One-Out Cross-Validation (LOOCV): For each data point in turn, train on all the other points and validate on that one point.
   Benefits: Estimates how the model will generalize to an independent dataset, which helps detect overfitting.
3. Ensemble Methods
   Gradient Boosting
   Description: Construct trees one after the other, with each one aiming to fix the mistakes of the previous ones. Every tree is trained on the residual errors of the preceding trees.
   Benefits: Increases prediction accuracy by adding trees step by step; the number of trees must still be limited, since adding too many can itself lead to overfitting.
   Random Forests
   Description: Combine several decision trees into a "forest". Each tree is built on a random subset of the data and features, and the final prediction is the average of all the trees' predictions (or the majority vote, for classification).
   Benefits: Combining the predictions improves robustness, decreases overfitting, and raises model accuracy.
4. Feature selection and Engineering
   Description: To enhance model performance, carefully select and engineer features.
   Strategies:
   - Eliminate Irrelevant Features: Find and remove features that do not improve the model's predictive ability.
   - Feature Transformation: Apply operations (such as normalization and log transformation) to features so that they better represent the underlying patterns.
   Benefits: Reduces model complexity while improving speed and interpretability.
5. Balanced Datasets
   Description: Balance the dataset so that the model is not skewed towards the majority class.
   Strategies:
   - Oversampling: Increase the number of samples in the minority class.
   - Undersampling: Reduce the number of samples in the majority class.
   - Generating Synthetic Data: Construct synthetic instances for the minority class.

Adopting these strategies can greatly improve the performance and robustness of decision tree models, mitigating their inherent limitations and making them more useful in real-world scenarios.

Real-world Application

A Decision Tree for Credit Risk Assessment

Scenario: A bank wants to assess the likelihood that borrowers will miss payments on their loans. It categorizes borrowers into three risk groups (low, medium, and high) based on factors such as income, employment status, loan amount, credit score, and loan purpose. This can be modelled with a decision tree.

Steps to Create the Decision Tree

1. Define the Features and Target Variable:
   Features:
   - Credit Score (numerical)
   - Loan Amount (numerical)
   - Income (numerical)
   - Employment Status (categorical: employed, self-employed, unemployed)
   - Loan Purpose (categorical: home, car, education, personal)
   Target Variable:
   - Risk Category (categorical: low, medium, high)
2. Gather and Prepare the Data: Assemble historical data on borrowers with the attributes above. Where necessary, normalize numerical features, handle missing values, and encode categorical variables.
3. Train the Decision Tree Model: Divide the data into training and test sets.
Using the relevant algorithm (e.g., CART), train the decision tree model on the training set.
4. Evaluate the Model: Assess the model's performance on the test set using metrics such as accuracy, precision, recall, and F1 score.

Application Example: Example Decision Tree Structure

1. Root Node:
   - Feature: Credit Score
   - Split Criterion: Credit Score < 650
2. Node 1 (Credit Score < 650):
   - Feature: Employment Status
   - Split Criterion: Employment Status = Unemployed
3. Leaf Node (Credit Score < 650, Employment Status = Unemployed):
   - Risk Category: High
4. Node 2 (Credit Score < 650, Employment Status ≠ Unemployed):
   - Feature: Income
   - Split Criterion: Income < $50,000
5. Leaf Node (Credit Score < 650, Employment Status ≠ Unemployed, Income < $50,000):
   - Risk Category: Medium
6. Leaf Node (Credit Score < 650, Employment Status ≠ Unemployed, Income ≥ $50,000):
   - Risk Category: Medium
7. Node 3 (Credit Score ≥ 650):
   - Feature: Loan Amount
   - Split Criterion: Loan Amount < $20,000
8. Leaf Node (Credit Score ≥ 650, Loan Amount < $20,000):
   - Risk Category: Low
9. Node 4 (Credit Score ≥ 650, Loan Amount ≥ $20,000):
   - Feature: Loan Purpose
   - Split Criterion: Loan Purpose = Personal
10. Leaf Node (Credit Score ≥ 650, Loan Amount ≥ $20,000, Loan Purpose = Personal):
   - Risk Category: Medium
11. Leaf Node (Credit Score ≥ 650, Loan Amount ≥ $20,000, Loan Purpose ≠ Personal):
   - Risk Category: Low

    Credit Score < 650
    ├── Employment Status = Unemployed: High Risk
    └── Employment Status ≠ Unemployed
        ├── Income < $50,000: Medium Risk
        └── Income ≥ $50,000: Medium Risk
    Credit Score ≥ 650
    ├── Loan Amount < $20,000: Low Risk
    └── Loan Amount ≥ $20,000
        ├── Loan Purpose = Personal: Medium Risk
        └── Loan Purpose ≠ Personal: Low Risk

This diagram represents a decision tree for assessing the risk level of loan applicants based on their credit score, employment status, income, loan amount, and loan purpose.
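The example tree can also be written as nested conditionals, which makes it easy to check a given applicant by hand. The following is a minimal sketch, not a trained model: the function name `classify_risk`, its parameter names, and the lowercase string encodings are hypothetical choices made for illustration, while the thresholds and outcomes are the ones listed in the example structure.

```python
def classify_risk(credit_score, employment_status, income, loan_amount, loan_purpose):
    """Walk the example credit-risk tree and return 'low', 'medium', or 'high'.

    Parameter names and string values are illustrative assumptions;
    the thresholds (650, $50,000, $20,000) come from the example tree.
    """
    if credit_score < 650:
        if employment_status == "unemployed":
            return "high"
        # The example tree assigns "medium" on both sides of the income
        # split, so the test is kept only to mirror the tree's structure.
        if income < 50_000:
            return "medium"
        return "medium"

    # Credit score is 650 or more.
    if loan_amount < 20_000:
        return "low"
    if loan_purpose == "personal":
        return "medium"
    return "low"


# Made-up applicants, one per path of interest.
applicants = [
    (600, "unemployed", 30_000, 10_000, "car"),
    (620, "employed", 40_000, 15_000, "home"),
    (700, "employed", 60_000, 10_000, "car"),
    (700, "self-employed", 60_000, 25_000, "personal"),
    (700, "employed", 60_000, 25_000, "education"),
]
for applicant in applicants:
    print(applicant, "->", classify_risk(*applicant))
```

A tree learned from real borrower data would choose its own features and thresholds; the hard-coded values here only restate the illustrative example.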
In words, the tree reads as follows:

- If the credit score is less than 650:
  - If the employment status is unemployed, the applicant is categorized as high risk.
  - If the employment status is not unemployed:
    - If the income is less than $50,000, the applicant is categorized as medium risk.
    - If the income is $50,000 or more, the applicant is categorized as medium risk.
- If the credit score is 650 or more:
  - If the loan amount is less than $20,000, the applicant is categorized as low risk.
  - If the loan amount is $20,000 or more:
    - If the loan purpose is personal, the applicant is categorized as medium risk.
    - If the loan purpose is not personal, the applicant is categorized as low risk.

Conclusion and presenter's remarks

In summary, decision tree learning is an effective and adaptable machine learning tool. Its simple structure and its ability to handle various data types make it especially useful for practitioners and researchers alike. Despite the drawbacks of single trees, their robustness and predictive power have been greatly increased by pruning, cross-validation, and ensemble methods.

As we have seen, decision tree learning helps solve problems in a variety of sectors and offers a basis for understanding more sophisticated machine learning algorithms. Its use in industries including marketing, banking, and healthcare shows its impact and wide range of applications.

Adopting the most recent developments in decision tree approaches will help us develop our analytical skills and open new possibilities in the future. Decision tree learning remains an area full of opportunities for those who wish to apply it or study it further.

Algorithms: To create a decision tree that best classifies or regresses the data, particular algorithms are used to identify the ideal split at each node. Common splitting criteria are:

- Information Gain: Calculates the change in entropy from the pre-split state to the post-split state. Used in ID3.
- Gain Ratio: Normalizes information gain by the split's intrinsic information. Used in C4.5.
- Gini Impurity: Calculates the probability that a randomly selected element would be classified incorrectly. Used for classification in CART.
- Mean Squared Error: Calculates the mean squared difference between the predicted values and the actual values. Used for regression in CART.
- Chi-Square: Evaluates the statistical significance of the relationship between feature categories and the target variable. Used in CHAID.
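To make the splitting criteria above concrete, the sketch below computes entropy, information gain, gain ratio, Gini impurity, and mean squared error in plain Python, and then uses information gain to pick a threshold on one numeric feature. All function names and the toy credit-score data are assumptions made for illustration, and the threshold search (midpoints between sorted distinct values) is one common simplification rather than the exact procedure of any particular library. The MSE function assumes the node predicts the mean of its target values.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * log2(c / n) for c in Counter(labels).values())


def gini(labels):
    """Gini impurity: chance of mislabelling a randomly drawn element."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def information_gain(parent, children):
    """Entropy before the split minus the size-weighted entropy after it."""
    n = len(parent)
    after = sum(len(child) / n * entropy(child) for child in children if child)
    return entropy(parent) - after


def gain_ratio(parent, children):
    """Information gain divided by the split's intrinsic information."""
    n = len(parent)
    split_info = -sum(
        len(child) / n * log2(len(child) / n) for child in children if child
    )
    return information_gain(parent, children) / split_info if split_info > 0 else 0.0


def mse(values):
    """Mean squared error of a node that predicts the mean of its values."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)


def best_threshold(values, labels):
    """Return (threshold, gain) for the split 'value <= threshold' with the
    highest information gain, trying midpoints between distinct values."""
    distinct = sorted(set(values))
    best = (None, 0.0)
    for low, high in zip(distinct, distinct[1:]):
        threshold = (low + high) / 2
        left = [y for x, y in zip(values, labels) if x <= threshold]
        right = [y for x, y in zip(values, labels) if x > threshold]
        gain = information_gain(labels, [left, right])
        if gain > best[1]:
            best = (threshold, gain)
    return best


# Toy data (made up): credit scores and their risk labels.
scores = [580, 600, 640, 660, 700, 720]
risk = ["high", "high", "high", "low", "low", "low"]

print("entropy:", entropy(risk))
print("gini:", gini(risk))
print("best split:", best_threshold(scores, risk))
print("mse:", mse([1.0, 2.0, 3.0]))
```

On this toy data the labels separate perfectly between 640 and 660, so the search selects the midpoint between them and the information gain equals the full entropy of the parent node.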
