Uploaded by

Samar Badr

Efficient Offloading & Task Scheduling in IoT-Cloud-Fog Environments

Research Discussion: Efficient Offloading and Task Scheduling in Internet of Things-Cloud-Fog Environment

Table of contents
• Introduction
• Problem Definition
• Related Work
• System Model
• Proposed Methods
• Simulation & Results
• Conclusions & Future Works
• Publication

Introduction / Background

Task Offloading in IoT-Cloud-Fog Environment

In the IoT ecosystem, Fog Computing (FC) extends cloud services to the network edge, enabling faster data processing near the source without requiring high bandwidth. By combining FC and cloud computing, IoT systems achieve reduced latency, improved efficiency, and lower costs while still using cloud resources when necessary.

Fog computing benefits real-time systems by providing high-speed internet connectivity, but its limited resources make it hard to meet dynamic real-time demands. A key issue in fog environments is the optimal assignment of tasks to fog nodes to ensure efficient resource utilization and performance.

Background / Cont.

IoT-Cloud-Fog offloading refers to the process of distributing computational tasks across IoT devices, fog nodes, and cloud servers to optimize performance, reduce latency, and enhance resource utilization. In this paradigm:
• IoT devices collect and generate data, often with limited processing power, and may offload tasks to nearby fog nodes for faster processing.
• Fog nodes are positioned closer to IoT devices and provide intermediate computing resources, reducing latency and bandwidth usage compared to cloud servers.
• Cloud servers handle more complex and resource-intensive tasks that fog nodes cannot process, providing scalable storage and computational power when needed.

Effective offloading strategies improve task execution time, energy efficiency, and overall system responsiveness in IoT-fog-cloud environments.

Introduction / Scientific Workflow

A scientific workflow is a series of computational or data manipulation steps designed to achieve a specific scientific objective.
A workflow application is modeled as a Directed Acyclic Graph (DAG), where tasks (vertices) are represented by T and task dependencies (edges) by E. Each edge indicates a data transfer between tasks, with d_{i,j} representing the size of the output data sent from task t_i to task t_j. Task t_j can begin only after t_i has completed. Starting tasks have no parent, and ending tasks have no child. Tasks at the same level can run concurrently.

In an IoT-cloud-fog environment, workflow application offloading and scheduling aim to assign tasks to different computing resources, optimizing for completion time (makespan), energy consumption, and overall cost.

Introduction / Scientific Workflow

This study uses three scientific workflow applications: Montage, Epigenomics, and CyberShake.
• Montage creates customized sky mosaics for astronomical data.
• CyberShake evaluates earthquake hazards for the Southern California Earthquake Center.
• Epigenomics automates genome sequence handling, in collaboration with the USC Epigenome Center and the Pegasus Team.

Introduction / Offloading

Offloading involves deciding where a task or set of tasks should be processed: locally, on a fog node, or on remote cloud servers.

Steps:
1. Task Identification: Identify which tasks can or need to be offloaded from a local device (IoT, fog node) to more powerful cloud resources.
2. Resource Evaluation: Evaluate the available resources, such as local fog nodes or cloud infrastructure. This includes checking parameters like latency, bandwidth, computation power, and energy consumption.
3. Decision Making: Determine whether to offload the task to the fog (nearby) or the cloud (remote) based on factors like response time, resource availability, energy efficiency, or processing capacity.
4. Offload Execution: Transfer the task (and the necessary data) to the selected resource (fog or cloud) for processing.
5. Task Completion: Retrieve the results from the cloud or fog resource and return them to the originating device.
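As an illustration of the DAG workflow model described in this section, the following is a minimal sketch that groups tasks into levels so that tasks within one level have no dependencies on each other and could run concurrently. The task names, the data sizes, and the function name `task_levels` are hypothetical and are not taken from the slides or from FogWorkflowSim.

```python
from collections import defaultdict, deque


def task_levels(tasks, edges):
    """Group DAG tasks into levels using a topological traversal.

    tasks: list of task names (the vertex set T).
    edges: dict mapping (parent, child) -> output data size d_{i,j}
           (the edge set E); only the keys are needed here.
    Returns a list of levels; tasks in the same level are independent.
    """
    children = defaultdict(list)
    indegree = {t: 0 for t in tasks}
    for parent, child in edges:
        children[parent].append(child)
        indegree[child] += 1

    level = {t: 0 for t in tasks}
    # Starting tasks have no parent, so their in-degree is zero.
    queue = deque(t for t in tasks if indegree[t] == 0)
    processed = 0
    while queue:
        t = queue.popleft()
        processed += 1
        for c in children[t]:
            # A task sits one level below its deepest parent.
            level[c] = max(level[c], level[t] + 1)
            indegree[c] -= 1
            if indegree[c] == 0:
                queue.append(c)

    if processed != len(tasks):
        raise ValueError("graph has a cycle, so it is not a DAG")

    grouped = defaultdict(list)
    for t in tasks:
        grouped[level[t]].append(t)
    return [grouped[k] for k in sorted(grouped)]


# Hypothetical five-task workflow; data sizes are in arbitrary units.
tasks = ["t1", "t2", "t3", "t4", "t5"]
edges = {
    ("t1", "t2"): 10,
    ("t1", "t3"): 20,
    ("t2", "t4"): 5,
    ("t3", "t4"): 5,
    ("t4", "t5"): 8,
}
levels = task_levels(tasks, edges)
print(levels)  # [['t1'], ['t2', 't3'], ['t4'], ['t5']]
```

Here `t2` and `t3` share a level because both depend only on `t1`, so a scheduler would be free to place them on different resources at the same time.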
Introduction / Workflow Scheduling

Our research also addresses workflow scheduling: offloading decides where a task executes, while task scheduling manages the timing and sequencing of task execution across the available resources. Using offloading and workflow scheduling together offers a comprehensive approach to optimizing task execution in cloud-fog computing environments. The combined advantages are:
➢ Optimized Resource Utilization
➢ Enhanced Performance and Reduced Latency
➢ Cost and Energy Efficiency
➢ Improved Quality of Service
➢ Comprehensive Task Management

Introduction / Challenge

• Resource Allocation and Management
  o Complex Decision-Making: Deciding where to offload tasks (fog, cloud, or local) and scheduling them efficiently involves complex decision-making processes that must consider multiple factors such as resource availability, task requirements, and performance metrics.
  o Resource Heterogeneity: Different resources (fog nodes, cloud servers, etc.) have varying capabilities, costs, and constraints, making it challenging to match tasks with the most appropriate resources.

Introduction / Motivation and Objectives

The main motivation of this article lies in optimizing performance, resource utilization, cost, and energy efficiency, while enhancing scalability and quality of service.
➢ From this motivation, we identify the main objectives of this thesis as follows:
  o Optimize Task Execution: reduce the total completion time.
  o Achieve Cost Efficiency: offload tasks to cost-effective resources and schedule them to optimize resource usage and reduce costs.
  o Improve Energy Efficiency: optimize task scheduling to minimize energy usage, and leverage local or fog resources to save energy compared to cloud-based solutions.
  o Enhance System Scalability.
Introduction / Problem Definition

The problem in cloud-fog computing is the complexity of integrating and managing resources across cloud and fog environments: optimizing task execution, resource allocation, cost, and energy efficiency while ensuring high performance, scalability, and security.
• Integration of Cloud and Fog Computing
• Resource Management and Allocation
• Task Offloading and Scheduling
• The existing problem of application-awareness
• Cost and Energy Efficiency

Related Work / The baseline method

The Simple offloading algorithm proposed in FogWorkflowSim is a straightforward approach that primarily considers task deadlines and execution times when making offloading decisions. It prioritizes meeting deadlines over energy consumption.

Simple offloading / Cont.

The algorithm calculates execution times on different devices based on factors such as network bandwidth and device capabilities. The offloading decision is made based on the task's deadline and the calculated execution times. The algorithm is relatively simple and easy to implement.

Simple offloading / Limitations

The limitations of Simple offloading:
➢ The algorithm may not be optimal in scenarios where energy consumption is a critical factor.
➢ It does not consider other factors such as task complexity or resource availability.

This simple offloading algorithm provides a baseline for understanding the concept of offloading in IoT-fog-cloud environments. More advanced algorithms may incorporate additional factors and optimization techniques to improve performance and energy efficiency.

Related Work / Cont.

The methods used in this article are derived from the following:
1. The idea of the paper “An energy consumption oriented offloading algorithm for fog computing” and the Simple offloading strategy proposed in “FogWorkflowSim: An automated simulation toolkit for workflow performance evaluation in fog computing”.
2. The requirements of real-time applications.

System Model / Architecture

The system model in FogWorkflowSim is a three-tier architecture consisting of:
1. End devices (EDs): IoT devices or mobile devices that generate tasks and may execute some tasks locally. They have limited computational resources and may offload tasks to fog nodes or cloud servers.
2. Fog nodes: Intermediate devices located at the network edge. They have more computational resources than end devices but fewer than cloud servers. Fog nodes can process tasks locally or offload them to cloud servers.
3. Cloud servers: Powerful servers located in data centers. They have the highest computational resources and can handle complex tasks.

System Model / Assumption

❖ Deterministic task execution times.
❖ Tasks can be offloaded between any of the three tiers.
❖ Perfect knowledge of network conditions.
❖ The network bandwidth and latency between devices are known and constant.
❖ The cost of offloading tasks to fog nodes and cloud servers is known.

The system model also includes parameters for:
• Task execution times on different devices.
• Network bandwidth between devices.
• Energy consumption of devices.
• Cost of computation and communication.

Proposed Methods

Three offloading strategies are proposed in this article. They are formulated as an optimization problem that aims to minimize the total execution time, cost, and energy consumption.

Goal:
❖ Develop an offloading algorithm that optimizes execution time, cost, and energy consumption.
❖ Create methods that can effectively handle diverse scenarios, especially real-time applications.
Latency Centric Offloading (LCO)

LCO is an offloading strategy that prioritizes minimizing task completion time (latency). It aims to offload tasks to the tier (fog or cloud) that can execute them most quickly, even if this incurs higher costs or energy consumption. This strategy is particularly suitable for applications with strict latency requirements, such as real-time video streaming or online gaming.

Decision-Making Process:
❖ Calculate execution times: For each task, calculate the estimated execution time on the end device, fog node, and cloud server.
❖ Select the fastest tier: Choose the tier with the shortest estimated execution time for the task.
❖ Offload if necessary: If the fastest tier is not the end device, offload the task to that tier.

LCO / Cont.

Advantages of LCO:
➢ Low latency: Ensures that tasks are executed quickly, meeting the requirements of latency-sensitive applications.
➢ Simple implementation: The decision-making process is straightforward and easy to implement.

Disadvantages of LCO:
➢ High energy consumption: Offloading tasks to fog or cloud servers can consume more energy than executing them locally on the end device.

Energy Based Offloading (EBO)

EBO is an offloading strategy that prioritizes energy conservation on IoT devices. It aims to offload tasks to the tier (fog or cloud) that can execute them with the least energy consumption, even if this sacrifices some latency or incurs higher costs. This strategy is particularly suitable for applications where battery life or energy efficiency is a critical concern.

Decision-Making Process:
❖ Calculate energy consumption: For each task, calculate the estimated energy consumption on the end device, fog node, and cloud server.
❖ Select the most energy-efficient tier: Choose the tier with the lowest estimated energy consumption for the task.
❖ Offload if necessary: If the most energy-efficient tier is not the end device, offload the task to that tier.

EBO / Cont.

Advantages of EBO:
➢ Energy conservation: Helps to prolong the battery life of IoT devices and reduce energy costs.
➢ Reduced environmental impact: Contributes to a more sustainable and environmentally friendly computing environment.

Disadvantages of EBO:
➢ Increased latency: Offloading tasks to fog or cloud servers may increase their execution time, leading to higher latency.
➢ Higher costs: Offloading tasks to fog or cloud servers can incur higher costs due to data transmission and computation expenses.

Efficient Offloading (EO)

EO is an offloading strategy that aims to strike a balance between task completion time, energy consumption, and cost. It leverages the hybrid IoT-fog-cloud architecture to exploit the cost differentials between different resource types.

Decision-Making Process:
❖ Calculate execution times and energy consumption: For each task, calculate the estimated execution time and energy consumption on the end device, fog node, and cloud server.
❖ Normalize execution times and energy consumption: Normalize the execution time and energy consumption values to a common scale.
❖ Calculate fitness function: Combine the normalized execution time and energy consumption values into a fitness function.
❖ Select the optimal tier: Choose the tier with the lowest fitness value. This tier represents the best balance between execution time and energy consumption for the task.
❖ Offload if necessary: If the optimal tier is not the end device, offload the task to that tier.

EO / Cont.

Advantages of EO:
➢ Balanced approach: Considers both execution time and energy consumption, providing a more comprehensive optimization.
➢ Improved efficiency: Can achieve better overall system performance by using resources effectively.

Disadvantages of EO:
➢ Complexity: The decision-making process is more complex than in LCO and EBO.
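The three decision rules described above (LCO, EBO, and EO) can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the per-tier time and energy estimates are hypothetical inputs, normalization divides each value by the maximum across tiers, and the EO fitness is an equally weighted sum. The slides do not specify the normalization scheme or the weights, so both are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Estimate:
    """Estimated cost of running one task on one tier (hypothetical units)."""
    time_s: float    # estimated execution time, including any transfer
    energy_j: float  # estimated energy consumption


def lco(estimates):
    """Latency Centric Offloading: pick the tier with the shortest time."""
    return min(estimates, key=lambda tier: estimates[tier].time_s)


def ebo(estimates):
    """Energy Based Offloading: pick the tier with the lowest energy."""
    return min(estimates, key=lambda tier: estimates[tier].energy_j)


def eo(estimates, w_time=0.5, w_energy=0.5):
    """Efficient Offloading: pick the tier with the lowest combined fitness.

    Assumes all estimates are positive. Values are scaled by the maximum
    across tiers so that time and energy share a common [0, 1] scale; the
    weights are an assumption, not taken from the source.
    """
    max_time = max(e.time_s for e in estimates.values())
    max_energy = max(e.energy_j for e in estimates.values())

    def fitness(tier):
        e = estimates[tier]
        return w_time * e.time_s / max_time + w_energy * e.energy_j / max_energy

    return min(estimates, key=fitness)


# Hypothetical estimates for a single task on each tier.
estimates = {
    "end_device": Estimate(time_s=10.0, energy_j=4.0),
    "fog": Estimate(time_s=6.0, energy_j=5.0),
    "cloud": Estimate(time_s=4.0, energy_j=12.0),
}

print(lco(estimates))  # cloud
print(ebo(estimates))  # end_device
print(eo(estimates))   # fog
```

With these made-up numbers the three strategies disagree: the cloud is fastest, the end device uses the least energy, and the fog node gives the lowest combined fitness, which is the kind of trade-off EO is meant to capture. The task would be offloaded only when the selected tier is not `end_device`.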
Task Scheduling using Genetic Algorithm

The offloading strategies are integrated with a genetic algorithm (GA) for task scheduling in IoT-fog-cloud environments. The goal is to optimize overall system performance by considering both offloading decisions and task scheduling.

Integration Approach
1. Offloading Decision
   • EO, EBO, or LCO: Apply one of the proposed offloading strategies to determine the optimal tier for each task based on the desired objective (latency, energy, or balance).
2. Task Scheduling
   • GA: Use a GA to generate a population of potential task schedules. Each individual in the population represents a specific allocation of tasks to available resources.
   • Fitness Function: Define a fitness function that evaluates the quality of each schedule based on the chosen objectives. The fitness function can incorporate factors such as makespan, cost, and energy consumption.
   • Genetic Operators: Apply genetic operators such as selection, crossover, and mutation to generate new individuals and explore the solution space.
3. Iterative Process
   • Repeat the above steps for multiple generations until a satisfactory solution is found or a termination criterion is met.

Simulation & Results / SW & HW Configuration

➢ Operating system: Windows 10 Enterprise.
➢ The FogWorkflowSim simulator runs on the Eclipse Java IDE.
➢ Processor: Intel Core i7 CPU @ 2.80 GHz.
➢ Installed memory (RAM): 16.00 GB.
➢ System type: 64-bit operating system, x64-based processor.

Simulation & Results / Simulation Parameters

Parameters                   End device   Fog VM   Cloud VM
Number of servers/devices    10           6        3
Processing rate (MIPS)       1000         1300     1600
Task execution cost ($)      0            0.48     0.96
Communication cost ($)       0            0.01     0.02
Working power (mW)           700          800      1600
Idle power (mW)              30           40       1300
Uplink bandwidth (Mbps)      20           10       1
Downlink bandwidth (Mbps)    40           10       10

Simulation & Results / Performance Metrics

The results of the proposed methods are compared with the Simple offloading strategy in terms of:
• Total completion time (makespan).
• Average energy consumption.
• Total cost.

Simulation & Results / Makespan
[Figure]

Simulation & Results / Cost
[Figure]

Simulation & Results / Energy Consumption
[Figure]

Conclusions

➢ This study proposes three offloading strategies for IoT-fog-cloud environments, LCO, EBO, and EO, with a focus on real-time applications.
➢ LCO is best for time-sensitive tasks but can be more energy-intensive.
➢ EBO focuses on energy efficiency, which is beneficial for long-running and computationally demanding tasks.
➢ EO seeks a balanced trade-off between time and energy, which suits using resources more effectively.
➢ This work employs genetic algorithms for task scheduling because they excel at handling complex solution spaces and dynamic situations.
➢ Combining offloading techniques with task scheduling algorithms provides efficient task execution.
➢ Overall, these strategies contribute to the optimization and reliability of IoT-fog-cloud systems, offering tailored solutions for different task requirements and environmental constraints.

Future Works

▪ Future work could incorporate additional workflow types and increase the number of tasks in each workflow.
▪ The impact of dynamic resource provisioning and adaptive offloading strategies could also be investigated.

Publication

Gamal, M., Awad, S., Abdel-Kader, R. F., & Abd El Salam, K. (2024). Efficient offloading and task scheduling in internet of things-cloud-fog environment. International Journal of Electrical and Computer Engineering (IJECE), 14(4), 4445-4455.

Thank you

0

0

advertisement

Related documents

Download

advertisement

Add this document to collection(s)

You can add this document to your study collection(s)

Sign in Available only to authorized usersAdd this document to saved

You can add this document to your saved list

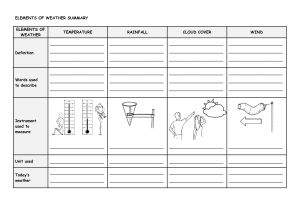

Sign in Available only to authorized users