Uploaded by

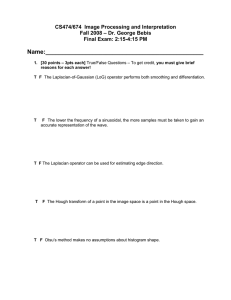

Miner Mod

Digital Image Processing: Motivation, Applications & Fundamentals - Module 1

Module 1

1. Motivation and Perspective:

• Definition of digital image processing:

• Digital image processing refers to the manipulation and analysis of digital images using

computer algorithms and techniques.

• It involves the transformation of an image into a numerical representation and the

performance of various operations on it.

• Importance and applications of digital image processing:

• Enhancing image quality and improving visual perception

• Extracting relevant information from images for various purposes (e.g., medical diagnosis,

remote sensing, surveillance)

• Automating image-related tasks and decision-making processes

• Enabling efficient storage, transmission, and retrieval of image data

• Advancing fields such as computer vision, pattern recognition, and artificial intelligence

• Historical development and evolution of digital image processing:

• Early beginnings in the 1960s with the advent of digital computers and the need for image

processing in scientific and military applications

• Significant advancements in hardware and software technologies, leading to improved image

quality, resolution, and processing capabilities

• Emergence of dedicated image processing algorithms and techniques, such as image

enhancement, segmentation, and feature extraction

• Rapid growth of digital image processing applications in various domains, driven by

technological progress and increasing computational power

• Emerging trends and future prospects:

• Advancements in deep learning and artificial intelligence for image analysis and interpretation

• Integration of digital image processing with other technologies (e.g., Internet of Things, edge

computing, cloud computing)

• Increasing use of mobile devices and computational photography techniques

• Expansion of applications in areas like autonomous vehicles, medical imaging, and intelligent

surveillance systems

• Interdisciplinary nature of digital image processing:

• Collaboration with fields like computer science, electrical engineering, mathematics, and

physics

• Utilization of knowledge from areas such as signal processing, pattern recognition, and image

sensor design

2. Applications:

• Medical imaging (e.g., X-ray, CT, MRI, ultrasound):

• Enhancing image quality for improved diagnosis and treatment planning

• Automated detection and segmentation of abnormalities or lesions

• Quantitative analysis of medical images for monitoring and disease tracking

• Integration with computer-aided diagnosis (CAD) systems

• Telemedicine and remote healthcare applications

• Remote sensing (e.g., satellite imagery, aerial photography):

• Mapping and monitoring of land use, vegetation, and environmental changes

• Detection and classification of objects or features (e.g., roads, buildings, water bodies)

• Change detection and temporal analysis of satellite or aerial images

• Geospatial data analysis and integration with geographic information systems (GIS)

• Urban planning, resource management, and disaster monitoring applications

• Biometrics (e.g., fingerprint recognition, facial recognition):

• Automated identification and verification of individuals based on unique physical or

behavioral characteristics

• Enhancing the accuracy and reliability of biometric systems through image processing

techniques

• Liveness detection and anti-spoofing measures to prevent fraudulent access

• Integration with security and surveillance systems for access control and identification

• Emerging applications in mobile devices and Internet of Things (IoT) environments

3. Components of Image Processing System:

• Image acquisition (e.g., digital cameras, scanners):

• Conversion of physical scenes or objects into digital image data

• Factors affecting image quality, such as sensor resolution, dynamic range, and noise

• Calibration and color management techniques for accurate image capture

• Integration of image acquisition devices with computer systems

• Image preprocessing (e.g., noise reduction, image enhancement):

• Techniques to improve the quality and clarity of the acquired image

• Removal of unwanted artifacts, such as noise, blur, and distortions

• Enhancement of image features, such as contrast, sharpness, and color balance

• Preparation of the image for subsequent processing and analysis

• Image segmentation (e.g., object detection, edge detection):

• Partitioning the image into meaningful regions or objects of interest

• Techniques for detecting and delineating object boundaries, such as edge detection and region-based segmentation

• Addressing challenges like overlapping objects, varying illumination, and complex

backgrounds

• Integration of segmentation with higher-level image analysis and interpretation

• Feature extraction and representation:

• Identification and extraction of relevant visual features from the image

• Representation of image features in a compact and efficient manner

• Techniques like shape analysis, texture analysis, and color analysis

• Dimensionality reduction and feature selection for efficient processing

• Image analysis and interpretation (e.g., pattern recognition, object classification):

• Applying machine learning and computer vision algorithms to analyze and interpret the image

content

• Techniques for object recognition, scene understanding, and image-based decision-making

• Integration of image analysis with domain-specific knowledge and applications

• Addressing challenges like occlusion, viewpoint changes, and environmental variations

4. Fundamentals: Elements of Visual Perception:

• The human visual system and its characteristics:

• Structure of the human eye: The eye includes the cornea, lens, and retina; the

optic nerve carries its output to the visual cortex of the brain. These components work

together to convert light into electrical signals that the brain can interpret.

• Visual perception process: Light enters the eye, is focused by the lens, and strikes

the retina, which contains photoreceptor cells (rods and cones). These cells convert

the light into electrical signals that are transmitted through the optic nerve to the

visual cortex of the brain, where the image is perceived and interpreted.

• Limitations of the visual system: The human visual system has limitations in terms

of visual acuity, field of view, and sensitivity to certain wavelengths of light. These

limitations are important considerations in digital image processing.

• Perception of brightness, contrast, and color:

• Brightness perception: The perceived brightness of an object or scene is influenced

by factors such as the intensity of the light, the reflectance of the surface, and the

adaptation level of the visual system.

• Contrast perception: Contrast is the difference in luminance or color that makes an

object distinguishable from its surroundings. The human visual system is highly

sensitive to changes in contrast, which is a crucial aspect of image quality.

• Color perception: The perception of color is a complex process that involves the

interaction of different types of color-sensitive cones in the retina. The brain

processes these signals to interpret the color of objects.

• The concept of visual acuity and visual resolution:

• Visual acuity: Visual acuity is a measure of the sharpness or clarity of vision. It is

often measured using Snellen charts and is influenced by factors such as eye health,

age, and illumination.

• Visual resolution: Visual resolution refers to the ability of the visual system to

distinguish between two closely spaced objects or details. It is related to the spatial

frequency content of an image and has important implications for digital image

processing.

• Factors affecting visual perception (e.g., environmental conditions, individual differences):

• Environmental factors: Factors such as lighting conditions, viewing distance, and

background can significantly influence visual perception. For example, glare from

bright light sources can reduce contrast and make it harder to perceive details.

• Individual differences: Visual perception can vary among individuals due to factors

like age, eye health, and cognitive abilities. This is an important consideration in

the design and evaluation of digital imaging systems.

• Applications of visual perception in digital image processing:

• Image quality enhancement: Understanding visual perception can guide the

development of image processing techniques that improve the visual appeal and

interpretability of digital images, such as contrast adjustment and color correction.

• Optimizing image display: Knowledge of visual perception characteristics can help

in the design of effective image display and presentation systems, ensuring that the

displayed images are optimized for human viewing.

• Automated visual tasks: Insights from visual perception can inform the

development of algorithms for automated visual tasks, such as object recognition

and scene understanding, which are essential in many digital image processing

applications.

5. A Simple Image Model:

• Definition and properties of a digital image:

• Digital image: A digital image is a two-dimensional array of pixels, where each

pixel represents the intensity or color value at a specific spatial location.

• Image attributes: Key attributes of a digital image include the spatial dimensions

(height and width), pixel depth (number of bits per pixel), and the number of color

channels (e.g., grayscale, RGB, CMYK).

• Representation of a digital image (e.g., matrix, pixel):

• Pixel representation: Pixels are the fundamental building blocks of a digital image,

and they are typically represented using a matrix structure, where each element

corresponds to a pixel value.

• Pixel indexing: Pixels are typically indexed using row and column coordinates,

with the top-left pixel having coordinates (0, 0) and the bottom-right pixel having

coordinates (height-1, width-1).

• Relationship between pixel values and physical properties: The pixel values in a

digital image are related to the physical properties of the imaged object or scene,

such as the intensity of light or the reflectance of surfaces.
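As a minimal sketch of the matrix view described above, a small grayscale image can be held as a list of rows in plain Python. The 3x4 pixel values and the helper name `image_shape` are made up for illustration; in practice an array library such as NumPy would normally be used.

```python
# A hypothetical 3x4 8-bit grayscale image stored row by row.
image = [
    [12, 50, 50, 200],
    [12, 80, 120, 200],
    [0, 80, 255, 180],
]


def image_shape(img):
    """Return (height, width) of a row-major image."""
    return len(img), len(img[0])


height, width = image_shape(image)
top_left = image[0][0]                       # pixel at (row 0, column 0)
bottom_right = image[height - 1][width - 1]  # pixel at (height-1, width-1)
print(height, width, top_left, bottom_right)  # 3 4 12 180
```

The first index selects the row and the second the column, which matches the (0, 0) to (height-1, width-1) convention used in these notes.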

• Spatial and intensity resolutions:

• Spatial resolution: Spatial resolution refers to the number of pixels per unit length

(e.g., pixels per inch or pixels per centimeter) and determines the level of detail that

can be captured in the image.

• Intensity resolution: Intensity resolution refers to the number of discrete intensity

levels that each pixel can represent. It is set by the bit depth: k bits per pixel give

2^k levels (e.g., 256 levels for 8 bits). Higher bit depths allow for more precise representation of intensity variations.

• Tradeoffs between spatial and intensity resolutions: There is often a tradeoff

between spatial and intensity resolutions, as increasing one may require reducing

the other to maintain a reasonable file size or meet hardware constraints.

• Relationship between spatial and intensity resolutions:

• Balancing resolutions: The choice of spatial and intensity resolutions involves a

careful balance to achieve the desired image quality and file size, considering the

application requirements and hardware capabilities.

• Factors influencing resolution choices: Factors such as the nature of the imaging

task, the characteristics of the imaged objects or scenes, and the available storage

and processing resources can affect the selection of appropriate spatial and intensity

resolutions.
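The trade-off between the two resolutions can be made concrete with a little arithmetic. The sketch below assumes an uncompressed image whose size is height x width x channels x bits per sample; the function names are illustrative and real file formats add headers and usually compression.

```python
def intensity_levels(bits_per_sample):
    """Number of distinct intensity levels for a given bit depth (2**k)."""
    return 2 ** bits_per_sample


def storage_bytes(height, width, bits_per_sample, channels=1):
    """Uncompressed size in bytes, rounded up to a whole byte."""
    bits = height * width * channels * bits_per_sample
    return (bits + 7) // 8


print(intensity_levels(8))                      # 256
print(storage_bytes(1024, 1024, 8))             # 1048576
print(storage_bytes(512, 512, 8))               # 262144 (half the spatial resolution per axis)
print(storage_bytes(1024, 1024, 4))             # 524288 (half the bit depth)
print(storage_bytes(1024, 1024, 8, channels=3)) # 3145728 (RGB)
```

Halving the spatial resolution along each axis cuts storage by a factor of four, while halving the bit depth only halves it, which is one reason the two choices are weighed separately.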

• Limitations and assumptions of the simple image model:

• Simplifications: The simple image model assumes a straightforward representation

of digital images, which may not capture the full complexity of real-world images.

• Advanced image models: In practice, more advanced image models may be

required to represent and process complex images, accounting for factors such as

noise, non-uniform illumination, and various image transformations.

6. Sampling and Quantization:

• Sampling and its importance in digital image processing:

• Analog-to-digital conversion: Sampling converts a signal that is continuous in its spatial coordinates (such as an analog image) into a grid of discrete samples.

Sampling alone leaves the amplitudes continuous; quantization then makes them discrete, and together the two steps yield a digital image.

• Preserving spatial information: Sampling is crucial in digital image processing as it

allows the spatial information present in the original analog image to be captured

and represented in the digital domain.

• The sampling theorem and its implications:

• Nyquist-Shannon sampling theorem: This theorem states that a band-limited continuous

signal can be perfectly reconstructed from its samples if the sampling rate is greater than

twice the highest frequency present in the signal. For images, the frequencies are spatial (cycles per unit distance).

• Avoiding aliasing: The sampling theorem is essential in digital image processing to

prevent aliasing, which occurs when the sampling rate is insufficient to capture the

high-frequency components of the image, leading to distortions.

• Practical considerations: In practice, satisfying the sampling theorem can be

challenging due to factors such as image bandwidth, hardware limitations, and

computational complexity.

• Factors affecting sampling (e.g., spatial resolution, frequency content):

• Spatial resolution: The required sampling rate is directly related to the desired

spatial resolution of the digital image. Higher spatial resolutions generally require

higher sampling rates to avoid aliasing.

• Frequency content: The frequency content of the image, determined by the spatial

characteristics of the imaged objects or scenes, also affects the choice of sampling

rate to prevent aliasing.

• Anti-aliasing techniques: Techniques such as the use of optical low-pass filters can

help mitigate aliasing by limiting the high-frequency content of the image before

sampling.
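A one-dimensional sketch can illustrate aliasing. It assumes a cosine pattern of 9 cycles per unit length sampled at only 10 samples per unit length, well below the more than 18 samples the sampling theorem calls for. The function names are illustrative.

```python
import math


def sample_cosine(freq, sample_rate, n_samples):
    """Sample cos(2*pi*freq*x) at positions x = n / sample_rate."""
    return [math.cos(2 * math.pi * freq * n / sample_rate) for n in range(n_samples)]


def alias_frequency(freq, sample_rate):
    """Apparent frequency after sampling, folded into [0, sample_rate / 2]."""
    return abs(freq - sample_rate * round(freq / sample_rate))


undersampled = sample_cosine(9, 10, 20)  # 9 cycles/unit at 10 samples/unit
slow_pattern = sample_cosine(1, 10, 20)  # 1 cycle/unit at the same rate

# The two sample sequences agree to within floating-point error,
# so the fine pattern is indistinguishable from a coarse one.
print(max(abs(a - b) for a, b in zip(undersampled, slow_pattern)))
print(alias_frequency(9, 10))  # 1
```

After sampling, the 9-cycle pattern cannot be told apart from a 1-cycle pattern. An optical low-pass filter prevents this by removing the 9-cycle component before it reaches the sensor.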

• Quantization and its role in digital image representation:

• Quantization: Quantization is the process of converting the continuous-amplitude

pixel values into a finite set of discrete levels, typically represented by integer

values.

• Impact on image quality: Quantization introduces a form of distortion, known as

quantization noise, which affects the fidelity of the digital image representation.

Higher bit depths (more quantization levels) generally result in better image quality.

• Quantization techniques: Various quantization techniques, such as uniform and non-uniform (e.g., logarithmic) quantization, can be employed to balance image quality

and storage requirements.
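The following is a minimal sketch of uniform quantization, assuming input amplitudes normalized to the range [0, max_value]. The function names and the midpoint reconstruction rule are illustrative choices, not something the notes prescribe.

```python
def quantize_uniform(value, bits, max_value=1.0):
    """Map a value in [0, max_value] to one of 2**bits integer levels."""
    levels = 2 ** bits
    clipped = min(max(value, 0.0), max_value)
    index = int(clipped / max_value * levels)
    return min(index, levels - 1)  # value == max_value falls in the top level


def reconstruct(index, bits, max_value=1.0):
    """Return the midpoint of the quantization interval for a level index."""
    levels = 2 ** bits
    return (index + 0.5) * max_value / levels


level = quantize_uniform(0.5, bits=3)
print(level, reconstruct(level, bits=3))  # 4 0.5625
```

With midpoint reconstruction the quantization error is at most half a step, i.e. max_value / 2**(bits + 1), so each extra bit halves the worst-case error.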

• Relationship between sampling and quantization:

• Synergistic effects: Sampling and quantization work together to represent the

spatially continuous, continuous-amplitude analog image in the digital domain: sampling

discretizes the spatial coordinates and quantization discretizes the amplitudes. Their

combined effects determine the overall quality and characteristics of the digital image.

• Balancing resolutions: The choice of spatial and intensity resolutions (through

sampling and quantization) involves a trade-off to achieve the desired image quality

and file size, considering the application requirements and hardware constraints.

7. Image Enhancement in Spatial Domain Introduction:

• Concept of image enhancement:

• Image enhancement: Image enhancement refers to the process of improving the

visual quality and interpretability of digital images by manipulating the pixel values

in the spatial domain.

• Objectives: The main objectives of image enhancement include improving contrast,

sharpening edges, reducing noise, and highlighting specific features of interest.

• Spatial domain techniques (e.g., histogram equalization, contrast stretching):

• Histogram equalization: This technique redistributes the pixel intensity values to

improve the overall contrast of the image by flattening the histogram.

• Contrast stretching: Contrast stretching is a technique that expands the dynamic

range of pixel values to enhance the contrast of the image, making dark regions

darker and bright regions brighter.

• Spatial filtering: Techniques such as unsharp masking and

adaptive filtering can be used to selectively enhance or suppress certain image

features based on local characteristics.

• Intensity transformations and their effects:

• Intensity transformation functions: Various intensity transformation functions, such

as logarithmic, power-law, and piecewise linear functions, can be applied to the

pixel values to achieve different enhancement effects.

• Impact on image characteristics: The choice of intensity transformation function

can significantly affect the brightness, contrast, and dynamic range of the resulting

image.

• Application-specific transformations: The selection of appropriate intensity

transformation functions depends on the specific image characteristics and the

desired enhancement goals.
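The logarithmic and power-law transformations mentioned above can be sketched for 8-bit gray levels as follows. The scaling constants are chosen here so that the maximum input maps to the maximum output; that normalization is an assumption of this sketch, as are the function names.

```python
import math

L = 256  # number of gray levels in an 8-bit image


def log_transform(r):
    """s = c * log(1 + r), with c chosen so that r = L-1 maps to s = L-1."""
    c = (L - 1) / math.log(L)
    return round(c * math.log(1 + r))


def gamma_transform(r, gamma):
    """Power-law transform s = (L-1) * (r / (L-1)) ** gamma."""
    return round((L - 1) * (r / (L - 1)) ** gamma)


def apply_transform(image, func):
    """Apply a per-pixel intensity transformation to a row-major image."""
    return [[func(p) for p in row] for row in image]


dark = [[0, 10, 64], [128, 200, 255]]
print(apply_transform(dark, log_transform))
print(apply_transform(dark, lambda p: gamma_transform(p, 0.5)))
```

The log transform and gamma values below 1 brighten dark regions, while gamma values above 1 darken the image. Both leave the end points 0 and 255 fixed.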

• Smoothing and sharpening filters:

• Smoothing filters: Spatial filtering techniques, such as averaging and Gaussian

filters, can be used to reduce noise and smooth out unwanted details in the image.

• Sharpening filters: Filters like the Laplacian and unsharp masking can be employed

to enhance the edges and fine details in the image, effectively sharpening the visual

appearance.

• Balancing smoothing and sharpening: The appropriate choice and combination of

smoothing and sharpening filters depend on the specific image characteristics and

the desired enhancement objectives.

• Applications of spatial domain image enhancement:

• Improving visibility: Image enhancement techniques can be used to improve the

visibility of specific features or regions of interest in the image, facilitating better

interpretation and analysis.

• Preprocessing for further processing: Spatial domain enhancement can serve as a

preliminary step in the image processing pipeline, preparing the image for

subsequent tasks such as segmentation, object recognition, or feature extraction.

• Domain-specific applications: Image enhancement has a wide range of applications,

from medical imaging and remote sensing to industrial inspection and multimedia

processing, where improving the visual quality and interpretability of images is

crucial.

Basic Gray Level Transformation Functions:

• Piecewise-Linear Transformation Functions:

• Definition: Piecewise-linear transformation functions are a class of intensity

transformation functions that are linear within specific intervals of pixel values. These

functions are defined by a set of linear segments, each with its own slope and range of

input values.

• Representation: Piecewise-linear transformation functions can be represented

mathematically as:

g(x) = ax + b, for r1 ≤ x < r2

Where g(x) is the output pixel value, x is the input pixel value, a is the slope of the linear

segment, b is the y-intercept, and r1 and r2 are the lower and upper limits of the input value range for

that linear segment.

• Contrast Stretching:

• Objective: The main objective of contrast stretching is to expand the dynamic range of

pixel values in an image to enhance the overall contrast and improve the visibility of

details.

• Procedure:

1. Determine the minimum and maximum pixel values in the input image

(xmin and xmax).

2. Decide on the desired minimum and maximum output pixel values

(ymin and ymax).

3. Define a piecewise-linear transformation function with two linear segments:

• Segment 1: g(x) = (ymax - ymin) / (xmax - xmin) * (x - xmin) + ymin, for xmin ≤ x < xmax

• Segment 2: g(x) = ymax, for x ≥ xmax

• Parameters:

1. xmin and xmax: The minimum and maximum pixel values in the input image,

respectively.

2. ymin and ymax: The desired minimum and maximum output pixel values,

typically the full range of the image data type (e.g., 0-255 for 8-bit images).

• Applications:

1. Improving the visibility of details in under-exposed or over-exposed regions of an

image.

2. Enhancing the contrast of images with a limited dynamic range.

3. Preparing images for further processing, such as segmentation or feature extraction.
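The contrast-stretching procedure above can be sketched in a few lines. This version assumes integer gray levels in a list-of-rows image and rounds the result to the nearest integer; the handling of a completely flat image is a choice made for this sketch.

```python
def contrast_stretch(image, y_min=0, y_max=255):
    """Linearly map [x_min, x_max] of the input onto [y_min, y_max]."""
    flat = [p for row in image for p in row]
    x_min, x_max = min(flat), max(flat)
    if x_max == x_min:
        # A flat image has no range to stretch; map everything to y_min.
        return [[y_min for _ in row] for row in image]
    span_in = x_max - x_min
    span_out = y_max - y_min
    return [
        [round((p - x_min) * span_out / span_in + y_min) for p in row]
        for row in image
    ]


low_contrast = [[0, 20], [60, 100]]    # intensities only span 0..100
print(contrast_stretch(low_contrast))  # [[0, 51], [153, 255]]
```

Because x_min and x_max are taken from the image itself, no input pixel falls outside the first linear segment; the second segment only matters when the limits are fixed in advance.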

2. Histogram Specification:

• Histogram Equalization:

• Objective: The main objective of histogram equalization is to redistribute the pixel

intensity values in an image to achieve a more uniform histogram, effectively

enhancing the overall contrast of the image.

• Procedure:

1. Compute the histogram of the input image, which represents the frequency

of occurrence of each pixel value.

2. Calculate the cumulative distribution function (CDF) of the input histogram.

3. Normalize the CDF to the range of the desired output pixel values (typically

0 to 255 for 8-bit images).

4. Use the normalized CDF as a transformation function to map the input pixel

values to the new output pixel values.

• Benefits:

1. Histogram equalization can effectively enhance the contrast and reveal

details in both bright and dark regions of an image.

2. It is a global enhancement technique that can improve the overall visual

appearance of the image.
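The four steps of histogram equalization can be sketched as follows, assuming integer gray levels in the range 0 to levels-1. The mapping used here, round((levels-1) * CDF), is one common normalization; other variants subtract the minimum CDF value first.

```python
def equalize_histogram(image, levels=256):
    """Global histogram equalization of a row-major integer image."""
    flat = [p for row in image for p in row]

    # Step 1: histogram of the input image.
    hist = [0] * levels
    for p in flat:
        hist[p] += 1

    # Step 2: cumulative distribution function (as running counts).
    cdf, running = [], 0
    for count in hist:
        running += count
        cdf.append(running)

    # Step 3: normalize the CDF to the output range 0..levels-1.
    total = len(flat)
    mapping = [round((levels - 1) * c / total) for c in cdf]

    # Step 4: use the normalized CDF as the transformation function.
    return [[mapping[p] for p in row] for row in image]


# A 3-bit example (8 levels) whose values are crowded into 0..3.
crowded = [[0, 0, 0, 1], [1, 2, 3, 3]]
print(equalize_histogram(crowded, levels=8))  # [[3, 3, 3, 4], [4, 5, 7, 7]]
```

The output now reaches the top level 7, and the most frequent input values are pushed furthest apart.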

• Histogram Matching (Specification):

• Objective: The goal of histogram matching (or histogram specification) is to

transform the histogram of an input image to match a desired target histogram,

allowing for more precise control over the image enhancement.

• Procedure:

1. Obtain the target histogram that represents the desired pixel value

distribution.

2. Calculate the cumulative distribution functions (CDFs) of both the input

image histogram and the target histogram.

3. Derive a transformation function by mapping the input image CDF to the

target histogram CDF.

4. Apply the transformation function to the input image to obtain the output

image with the desired histogram.

• Applications:

1. Histogram matching can be useful for enhancing specific image features or

achieving a desired visual appearance based on the target histogram.

2. It allows for more targeted and controlled image enhancement compared to

global histogram equalization.
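Histogram matching can be sketched by comparing the two CDFs level by level. The version below assumes integer gray levels, a target histogram with one entry per level and at least one non-zero count, and maps each input level to the smallest target level whose CDF reaches the input CDF. That tie-breaking rule is one common choice, not the only one.

```python
def cumulative_distribution(hist):
    """Normalized CDF (values in 0..1) of a histogram."""
    total = sum(hist)
    cdf, running = [], 0
    for count in hist:
        running += count
        cdf.append(running / total)
    return cdf


def match_histogram(image, target_hist):
    """Map the image so that its histogram approximates target_hist."""
    levels = len(target_hist)
    src_hist = [0] * levels
    for row in image:
        for p in row:
            src_hist[p] += 1

    src_cdf = cumulative_distribution(src_hist)
    tgt_cdf = cumulative_distribution(target_hist)

    mapping = []
    for s in src_cdf:
        # Smallest target level whose CDF reaches the source CDF value
        # (the small tolerance guards against floating-point round-off).
        z = next(k for k, t in enumerate(tgt_cdf) if t >= s - 1e-12)
        mapping.append(z)
    return [[mapping[p] for p in row] for row in image]


dark_image = [[0, 0], [1, 1]]      # 2-bit image using only the dark levels
bright_target = [0, 0, 2, 2]       # target histogram concentrated in levels 2 and 3
print(match_histogram(dark_image, bright_target))  # [[2, 2], [3, 3]]
```

Passing a flat target histogram makes this behave like histogram equalization, which shows that equalization is the special case of a uniform target.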

• Local Enhancement:

• Objective: The aim of local enhancement is to apply histogram equalization or

other enhancement techniques within smaller, local regions of an image, rather than

globally across the entire image.

• Procedure:

1. Divide the input image into smaller, overlapping regions or blocks.

2. Apply histogram equalization (or other enhancement methods) to each local

region independently.

3. Combine the enhanced local regions to form the final output image.

• Benefits:

1. Local enhancement can better preserve important details and avoid over-enhancement in some regions while under-enhancing others.

2. It can adaptively enhance different parts of the image based on their local

characteristics, leading to more balanced and effective contrast

improvement.
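A small sketch of local enhancement follows. Note that it swaps in a sliding-window variant rather than the block-and-combine procedure listed above: each pixel is equalized using the histogram of its own neighborhood, which avoids having to blend block borders. The window is truncated at the image border, and the function name is illustrative.

```python
def local_equalize(image, radius=1, levels=256):
    """Sliding-window histogram equalization on a row-major integer image."""
    height, width = len(image), len(image[0])
    out = [[0] * width for _ in range(height)]
    for r in range(height):
        for c in range(width):
            # Neighborhood of the pixel, truncated at the image border.
            neighborhood = [
                image[i][j]
                for i in range(max(0, r - radius), min(height, r + radius + 1))
                for j in range(max(0, c - radius), min(width, c + radius + 1))
            ]
            # Local CDF value at the center pixel: fraction of neighbors
            # that are less than or equal to it.
            rank = sum(1 for v in neighborhood if v <= image[r][c])
            out[r][c] = round((levels - 1) * rank / len(neighborhood))
    return out


print(local_equalize([[10, 20], [30, 40]], radius=1, levels=5))  # [[1, 2], [3, 4]]
```

This brute-force form recomputes the neighborhood for every pixel, so it is only practical for small images. Practical systems typically use tile-based methods with interpolation between tiles.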

3. Enhancement using Arithmetic/Logic Operations:

• Image Subtraction:

• Objective: The main objective of image subtraction is to extract differences

between two images or to remove unwanted background information from an

image.

• Procedure:

1. Acquire two images, often referred to as the "input image" and the

"reference image."

2. Subtract the pixel values of the reference image from the corresponding

pixel values of the input image.

3. The resulting image will highlight the differences between the two input

images.

• Applications:

1. Change detection: Identifying changes between two images of the same

scene captured at different times.

2. Edge enhancement: Subtracting a blurred version of an image from the

original can enhance the edges and fine details.

3. Background removal: Subtracting a background image from a foreground

image can effectively isolate the object of interest.
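Image subtraction for change detection can be sketched as below. Taking the absolute difference (so the result stays non-negative) and thresholding it into a binary mask are assumptions of this sketch; the notes only specify the subtraction itself.

```python
def subtract_images(image, reference):
    """Absolute per-pixel difference |image - reference|."""
    return [
        [abs(a - b) for a, b in zip(row_a, row_b)]
        for row_a, row_b in zip(image, reference)
    ]


def change_mask(image, reference, threshold):
    """1 where the two images differ by more than threshold, else 0."""
    diff = subtract_images(image, reference)
    return [[1 if d > threshold else 0 for d in row] for row in diff]


before = [[10, 180], [90, 50]]
after = [[10, 200], [50, 50]]
print(subtract_images(after, before))            # [[0, 20], [40, 0]]
print(change_mask(after, before, threshold=25))  # [[0, 0], [1, 0]]
```

The threshold separates genuine changes from small differences caused by noise; its value depends on the noise level of the sensor.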

• Image Averaging:

• Objective: The objective of image averaging is to combine multiple images to

reduce noise and improve the overall image quality.

• Procedure:

1. Acquire multiple images of the same scene or object, typically under the

same conditions.

2. Calculate the average of the corresponding pixel values across all the input

images.

3. The resulting averaged image will have a higher signal-to-noise ratio

compared to the individual input images.

• Benefits:

1. Averaging can effectively reduce random noise, such as shot noise or sensor

noise, by exploiting the statistical properties of the noise.

2. It can improve the overall image quality and clarity by enhancing the

desired signal while suppressing the unwanted noise components.
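The effect of averaging can be checked with a small synthetic experiment. The sketch below assumes a flat scene of value 100 corrupted by independent zero-mean Gaussian noise with standard deviation 10; under that assumption, averaging K frames reduces the noise standard deviation by a factor of sqrt(K). The scene, noise level, and frame count are made up for illustration.

```python
import random


def average_images(images):
    """Per-pixel mean of a list of equally sized row-major images."""
    count = len(images)
    height, width = len(images[0]), len(images[0][0])
    return [
        [sum(img[r][c] for img in images) / count for c in range(width)]
        for r in range(height)
    ]


def rms_error(image, truth):
    """Root-mean-square difference between an image and the noise-free scene."""
    squared = [(a - b) ** 2 for ra, rb in zip(image, truth) for a, b in zip(ra, rb)]
    return (sum(squared) / len(squared)) ** 0.5


rng = random.Random(0)  # fixed seed so the run is repeatable
truth = [[100.0] * 10 for _ in range(10)]
frames = [
    [[100.0 + rng.gauss(0, 10) for _ in range(10)] for _ in range(10)]
    for _ in range(64)
]
averaged = average_images(frames)

# Error of a single frame versus the 64-frame average.
print(rms_error(frames[0], truth), rms_error(averaged, truth))
```

With 64 frames the expected improvement is about a factor of 8. The frames must show the same, correctly registered scene, otherwise averaging blurs the image instead of cleaning it.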

Basics of Spatial Filtering:

• Smoothing Filters:

• Mean Filter:

• Objective: The main objective of the mean filter is to remove noise and smooth out

details in an image by replacing each pixel value with the average of the pixel

values in a specified neighborhood.

• Procedure:

1. Define a 2D window or kernel (e.g., 3x3, 5x5) that represents the

neighborhood around each pixel.

2. For each pixel in the input image, compute the average of the pixel values

within the corresponding neighborhood.

3. Replace the original pixel value with the computed average to obtain the

output image.

• Mathematical Representation: The output pixel value g(x,y) at

position (x,y) is calculated as the average of the pixel values f(i,j) within the

neighborhood N(x,y):

g(x,y) = (1/|N(x,y)|) * Σ(i,j)∈N(x,y) f(i,j)

where |N(x,y)| is the number of pixels in the neighborhood.

• Benefits:

• The mean filter is simple and effective in reducing high-frequency noise, such as

Gaussian noise.

• It can smooth out unwanted details and provide a more uniform appearance to the

image.
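A direct sketch of the mean filter formula follows. It assumes a square (2*radius+1) window that is truncated at the image border, so |N(x,y)| is smaller near the edges; zero padding or edge replication are equally valid border conventions.

```python
def mean_filter(image, radius=1):
    """Replace each pixel with the average of its neighborhood."""
    height, width = len(image), len(image[0])
    out = [[0.0] * width for _ in range(height)]
    for r in range(height):
        for c in range(width):
            # Neighborhood N(x,y), truncated at the image border.
            window = [
                image[i][j]
                for i in range(max(0, r - radius), min(height, r + radius + 1))
                for j in range(max(0, c - radius), min(width, c + radius + 1))
            ]
            out[r][c] = sum(window) / len(window)
    return out


spike = [[0, 0, 0], [0, 90, 0], [0, 0, 0]]
print(mean_filter(spike))
# [[22.5, 15.0, 22.5], [15.0, 10.0, 15.0], [22.5, 15.0, 22.5]]
```

The isolated bright pixel is spread over its neighbors rather than removed, which is the blurring behavior that motivates order-statistic filters for impulse noise.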

• Ordered Statistic Filters:

• Objective: Ordered statistic filters, such as the median filter, aim to reduce noise while

preserving edges and details more effectively than the mean filter.

• Procedure:

• Define a 2D window or kernel (e.g., 3x3, 5x5) that represents the neighborhood

around each pixel.

• For each pixel in the input image, sort the pixel values within the corresponding

neighborhood in ascending order.

• Replace the original pixel value with the median (or other order statistic) of the

sorted values to obtain the output image.

• Mathematical Representation: The output pixel value g(x,y) at position (x,y) is the

median (or other order statistic) of the pixel values f(i,j) within the

neighborhood N(x,y):

g(x,y) = median {f(i,j) | (i,j) ∈ N(x,y)}

• Benefits:

• Ordered statistic filters, such as the median filter, are more effective in

preserving edges and details while removing impulse noise (e.g., salt-and-pepper noise).

• They can selectively smooth out noise without significantly blurring

important image features.
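The median filter can be sketched in the same style. This version replicates edge pixels at the border so that every window has an odd number of samples and a well-defined middle value; that border rule is an assumption of the sketch.

```python
def median_filter(image, radius=1):
    """Replace each pixel with the median of its (2*radius+1)^2 neighborhood."""
    height, width = len(image), len(image[0])

    def pixel(i, j):
        # Edge replication: clamp coordinates to the valid range.
        return image[min(max(i, 0), height - 1)][min(max(j, 0), width - 1)]

    out = []
    for r in range(height):
        row = []
        for c in range(width):
            window = sorted(
                pixel(i, j)
                for i in range(r - radius, r + radius + 1)
                for j in range(c - radius, c + radius + 1)
            )
            row.append(window[len(window) // 2])  # middle of the sorted values
        out.append(row)
    return out


salt = [[10, 10, 10], [10, 255, 10], [10, 10, 10]]
print(median_filter(salt))  # [[10, 10, 10], [10, 10, 10], [10, 10, 10]]

step_edge = [[0, 0, 100, 100]] * 3
print(median_filter(step_edge) == step_edge)  # True
```

The single "salt" pixel disappears completely, while the step edge passes through unchanged. A mean filter would have smeared both.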

• Sharpening Filters:

• The Laplacian:

• Objective: The primary objective of the Laplacian filter is to enhance the edges and

fine details in an image by amplifying the high-frequency components.

• Procedure:

• Define a Laplacian kernel, which is a 2D array of coefficients that represent

the discrete Laplacian operator.

• Convolve the input image with the Laplacian kernel to compute the second

derivative of the pixel values in the spatial domain.

• The resulting Laplacian image highlights the edges and fine details. To sharpen, combine it

with the original image: subtract it when the kernel's center coefficient is negative, add it when the center is positive.

• Mathematical Representation: The Laplacian operator can be represented as a

convolution of the input image f(x,y) with a Laplacian kernel L(x,y):

g(x,y) = f(x,y) * L(x,y)

Where * denotes the convolution operation, and a common Laplacian kernel is:

L(x,y) = [0  1  0]
         [1 -4  1]
         [0  1  0]

• Benefits:

• The Laplacian filter is effective in detecting and enhancing edges, which are important

features for many image processing tasks.

• It can be combined with other filters, such as the Gaussian filter, to create more

sophisticated sharpening techniques (e.g., unsharp masking).

• Limitations:

• The Laplacian filter can also amplify noise if not applied carefully, as it is sensitive to

high-frequency components.
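Laplacian filtering and the resulting sharpening step can be sketched as follows. The sketch assumes the 3x3 kernel with center -4, edge replication at the border, and clipping of the result to 0..255. Because this kernel has a negative center, the Laplacian is subtracted from the original to sharpen.

```python
LAPLACIAN_KERNEL = [
    [0, 1, 0],
    [1, -4, 1],
    [0, 1, 0],
]


def laplacian(image):
    """Convolve the image with the 3x3 Laplacian kernel (edges replicated)."""
    height, width = len(image), len(image[0])

    def pixel(i, j):
        return image[min(max(i, 0), height - 1)][min(max(j, 0), width - 1)]

    # The kernel is symmetric, so correlation and convolution coincide.
    return [
        [
            sum(
                LAPLACIAN_KERNEL[di + 1][dj + 1] * pixel(r + di, c + dj)
                for di in (-1, 0, 1)
                for dj in (-1, 0, 1)
            )
            for c in range(width)
        ]
        for r in range(height)
    ]


def sharpen(image, max_value=255):
    """Sharpen by subtracting the Laplacian, then clip to the valid range."""
    lap = laplacian(image)
    return [
        [min(max(p - l, 0), max_value) for p, l in zip(row, lap_row)]
        for row, lap_row in zip(image, lap)
    ]


soft_edge = [[10, 10, 50, 50]] * 3
print(laplacian(soft_edge)[0])  # [0, 40, -40, 0]
print(sharpen(soft_edge)[0])    # [10, 0, 90, 50]
```

The Laplacian is zero in flat regions and changes sign across the edge. Subtracting it darkens the dark side and brightens the bright side, which increases the local contrast at the edge. The same mechanism amplifies noise, as the limitation above points out.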

Basic Gray Level Functions:

Piecewise-Linear Transformation Functions - Contrast Stretching:

1. Introduction :

• Contrast stretching is a basic gray level function used to enhance the contrast of an image.

• It involves stretching the intensity values of an image to span the entire dynamic range.

2. Piecewise-Linear Transformation :

• This method divides the intensity range of the image into segments and applies different

linear transformations to each segment.

• Each segment aims to expand or compress the range of intensity values to achieve the

desired contrast enhancement.

3. Contrast Stretching :

• In contrast stretching, the lowest and highest intensity values in the image are mapped to

the minimum and maximum intensity values of the display.

• This expands the range of intensity values, making the dark areas darker and the bright

areas brighter, thereby enhancing the overall contrast.

4. Application :

• Contrast stretching is commonly used in image enhancement tasks where the contrast of

the image needs to be improved.

• It is particularly effective in enhancing images with low contrast or narrow intensity

ranges.

5. Example :

• For example, in a grayscale image, if the intensity values range from 0 to 100, contrast

stretching may map the intensity range to 0-255, thereby stretching the contrast for better

visualization.

Histogram Specification: Histogram Equalization, Local Enhancement, Enhancement using

Arithmetic/Logic Operations

1. Histogram Equalization :

• Histogram equalization is a technique used to adjust the contrast of an image by

redistributing intensity values to make the histogram more uniform.

• It aims to spread out the intensity values over the entire dynamic range, resulting in

improved contrast and visibility of details.

2. Local Enhancement :

• Local enhancement techniques focus on enhancing specific regions or features within an

image rather than the entire image.

• This is achieved by applying enhancement operations locally, based on the characteristics

of the neighboring pixels.

3. Enhancement using Arithmetic/Logic Operations :

• Arithmetic and logic operations are applied to manipulate pixel values directly for image

enhancement.

• Operations like addition, subtraction, multiplication, and logical AND, OR, XOR are used

to modify pixel values based on predefined criteria.

4. Application :

• Histogram equalization is widely used in medical imaging, satellite imagery, and digital

photography to enhance the visibility of structures and details.

• Local enhancement techniques are valuable in applications where specific features need to

be highlighted or enhanced.

• Arithmetic and logic operations are used for tasks like image blending, noise reduction,

and feature extraction.

5. Example :

• Histogram equalization can significantly improve the contrast of an underexposed image

by redistributing the intensity values to cover the entire dynamic range.

Image Subtraction, Image Averaging:

1. Image Subtraction :

• Image subtraction involves subtracting the pixel values of one image from another to

highlight the differences between them.

• It is commonly used for tasks like background subtraction, motion detection, and change

detection.

2. Image Averaging :

• Image averaging combines multiple images by taking the average pixel value at each pixel

location.

• It is used to reduce noise and enhance the signal-to-noise ratio when several

exposures of the same, unchanged scene are available.

3. Application :

• Image subtraction is useful in medical imaging for detecting abnormalities in sequential

images, such as X-rays or MRI scans.

• Image averaging is employed in astrophotography to enhance the visibility of faint

celestial objects by reducing the effects of noise.

4. Example :

• In medical imaging, subtracting a pre-contrast image from a post-contrast image can

highlight areas where contrast agent uptake has occurred, aiding in the detection of lesions

or tumors.

• Averaging multiple noisy images of a star field can reveal faint stars that would otherwise

be obscured by noise.

Basics of Spatial Filtering:

Smoothing - Mean filter:

1. Mean Filter :

• The mean filter is a type of spatial filter used for image smoothing or blurring.

• It replaces each pixel value with the average of its neighboring pixel values within a

defined kernel or window.

2. Smoothing :

• Smoothing filters reduce high-frequency noise in an image by averaging pixel values

within a local neighborhood.

• They are effective in removing random variations in intensity while preserving larger-scale features.

3. Kernel Operation :

• The mean filter operates by sliding a kernel window over the image and replacing each

pixel value with the average of the pixel values within the window.

• The size of the kernel determines the extent of smoothing; larger kernels result in more

pronounced blurring.

4. Application :

• Mean filtering is commonly used in image preprocessing to reduce noise before

performing more complex operations such as edge detection or segmentation.

• It is also employed in image compression to achieve smoother gradients and reduce file

size.

5. Example :

• Applying a mean filter to a noisy image of text can suppress the noise, but it also

softens the edges of the characters, so the kernel should be kept small.

Ordered Statistic Filter:

1. Ordered Statistic Filter :

• Ordered statistic filters, also known as rank filters, are non-linear filters that process image

pixels based on their order statistics within a neighborhood.

• They are effective in removing various types of noise while preserving edges and image

details.

2. Rank Selection :

• In ordered statistic filtering, the pixel values within the kernel window are sorted, and a

specific rank or percentile value is selected as the output for each pixel location.

• Common ranks include the minimum, maximum, median, and midpoint.

3. Adaptive Filtering :

• Adaptive variants of ordered statistic filters (e.g., the adaptive median filter) adjust their behavior based on the local

characteristics of the image.

• This makes them robust to different types of noise and suitable for applications where

noise levels vary across the image.

4. Application :

• Ordered statistic filters are used in image denoising, especially in scenarios where

traditional linear filters may blur edges or smooth out important details.

• They find applications in medical imaging, satellite imagery, and surveillance where noise

reduction is crucial for accurate analysis.

5. Example :

• Applying a median filter, a type of ordered statistic filter, to an image corrupted with salt-and-pepper noise can effectively remove the noise while preserving the edges and fine

details.

Sharpening – The Laplacian:

1. Laplacian Filter :

• The Laplacian filter is a spatial filter used for edge detection and image sharpening.

• It highlights regions of rapid intensity change in an image, which typically correspond to

edges or boundaries between objects.

2. Edge Enhancement :

• The Laplacian filter enhances edges by emphasizing the high-frequency components of an

image while suppressing low-frequency components.

• This results in increased contrast along edges, leading to sharper image appearance.

3. Kernel Operation :

• The Laplacian filter is based on the Laplacian operator, which calculates the second

derivative of the image intensity function.

• It is applied by convolving the image with a Laplacian kernel, such as the 3x3 or 5x5

Laplacian mask.

4. Application :

• Laplacian sharpening is commonly used in image editing software to enhance