Chapter 4

Introduction to Probability

Experiments, Counting Rules, and Assigning Probabilities

Events and Their Probability

Some Basic Relationships of Probability

Conditional Probability

Bayes’ Theorem

© 2011 Cengage Learning. All Rights Reserved. May not be scanned, copied

or duplicated, or posted to a publicly accessible website, in whole or in part.


Uncertainties

Managers often base their decisions on an analysis

of uncertainties such as the following:

What are the chances that sales will decrease

if we increase prices?

What is the likelihood that a new assembly method

will increase productivity?

What are the odds that a new investment will

be profitable?


Probability

Probability is a numerical measure of the likelihood

that an event will occur.

Probability values are always assigned on a scale

from 0 to 1.

A probability near zero indicates an event is quite

unlikely to occur.

A probability near one indicates an event is almost

certain to occur.


Probability as a Numerical Measure of the Likelihood of Occurrence

The likelihood of occurrence increases as the probability moves from 0 to 1:

Probability 0: The event is very unlikely to occur.

Probability .5: The occurrence of the event is just as likely as it is unlikely.

Probability 1: The event is almost certain to occur.


Statistical Experiments

In statistics, the notion of an experiment differs

somewhat from that of an experiment in the

physical sciences.

In statistical experiments, probability determines

outcomes.

Even though the experiment is repeated in exactly

the same way, an entirely different outcome may

occur.

For this reason, statistical experiments are sometimes called random experiments.


An Experiment and Its Sample Space

An experiment is any process that generates well-defined outcomes.

The sample space for an experiment is the set of

all experimental outcomes.

An experimental outcome is also called a sample

point.


An Experiment and Its Sample Space

Experiment              Experimental Outcomes
Toss a coin             Head, tail
Inspect a part          Defective, non-defective
Conduct a sales call    Purchase, no purchase
Roll a die              1, 2, 3, 4, 5, 6
Play a football game    Win, lose, tie


An Experiment and Its Sample Space

Example: Bradley Investments

Bradley has invested in two stocks, Markley Oil

and Collins Mining. Bradley has determined that the

possible outcomes of these investments three months

from now are as follows.

Investment Gain or Loss in 3 Months (in $000)

Markley Oil    Collins Mining
    10               8
     5              -2
     0
   -20


A Counting Rule for

Multiple-Step Experiments

If an experiment consists of a sequence of k steps

in which there are n1 possible results for the first step,

n2 possible results for the second step, and so on,

then the total number of experimental outcomes is

given by (n1)(n2) . . . (nk).

A helpful graphical representation of a multiple-step

experiment is a tree diagram.


A Counting Rule for

Multiple-Step Experiments

Example: Bradley Investments

Bradley Investments can be viewed as a two-step

experiment. It involves two stocks, each with a set of

experimental outcomes.

Markley Oil: n1 = 4

Collins Mining: n2 = 2

Total Number of Experimental Outcomes: n1n2 = (4)(2) = 8
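The counting rule can be checked by enumerating the sample space. The following is a minimal Python sketch, not part of the original slides; the names `markley_oil`, `collins_mining`, and `count_outcomes` are illustrative assumptions, while the gain and loss values come from the Bradley Investments example.

```python
from itertools import product
from math import prod

# Possible 3-month results (in $000) for each step, from the Bradley example.
markley_oil = [10, 5, 0, -20]   # step 1: n1 = 4 possible results
collins_mining = [8, -2]        # step 2: n2 = 2 possible results

def count_outcomes(*steps):
    """Counting rule: multiply the number of possible results at each step."""
    return prod(len(step) for step in steps)

# Enumerate the sample space directly; each tuple is one sample point.
sample_space = list(product(markley_oil, collins_mining))

print(count_outcomes(markley_oil, collins_mining))
for m, c in sample_space:
    print((m, c), "net (in $000):", m + c)
```

Enumerating with `itertools.product` mirrors walking every branch of a tree diagram, so the length of `sample_space` should agree with the product n1n2.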


Tree Diagram

Example: Bradley Investments

Markley Oil    Collins Mining    Experimental    Net Result
(Stage 1)      (Stage 2)         Outcome
Gain 10        Gain 8            (10, 8)         Gain $18,000
Gain 10        Lose 2            (10, -2)        Gain $8,000
Gain 5         Gain 8            (5, 8)          Gain $13,000
Gain 5         Lose 2            (5, -2)         Gain $3,000
Even           Gain 8            (0, 8)          Gain $8,000
Even           Lose 2            (0, -2)         Lose $2,000
Lose 20        Gain 8            (-20, 8)        Lose $12,000
Lose 20        Lose 2            (-20, -2)       Lose $22,000


Counting Rule for Combinations

Number of Combinations of N Objects

Taken n at a Time

A second useful counting rule enables us to count

the number of experimental outcomes when n objects

are to be selected from a set of N objects.

$$C_n^N = \binom{N}{n} = \frac{N!}{n!\,(N - n)!}$$

where:

N! = N(N - 1)(N - 2) . . . (2)(1)

n! = n(n - 1)(n - 2) . . . (2)(1)

0! = 1
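As a quick check of the combinations rule, here is a small Python sketch. The function name `combinations_count` and the values N = 5, n = 2 are illustrative assumptions, not taken from the slides; `math.comb` is the standard-library equivalent.

```python
from math import comb, factorial

def combinations_count(N, n):
    """Number of combinations of N objects taken n at a time: N! / (n! (N - n)!)."""
    return factorial(N) // (factorial(n) * factorial(N - n))

# Illustrative values (an assumption): choose 2 objects from a set of 5.
N, n = 5, 2
print(combinations_count(N, n), comb(N, n))
```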


Counting Rule for Permutations

Number of Permutations of N Objects

Taken n at a Time

A third useful counting rule enables us to count

the number of experimental outcomes when n

objects are to be selected from a set of N objects,

where the order of selection is important.

$$P_n^N = n!\binom{N}{n} = \frac{N!}{(N - n)!}$$

where:

N! = N(N - 1)(N - 2) . . . (2)(1)

n! = n(n - 1)(n - 2) . . . (2)(1)

0! = 1
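The permutations rule can be sketched the same way. The function name `permutations_count` and the values N = 5, n = 2 are illustrative assumptions; `math.perm` (Python 3.8 and later) computes the same quantity.

```python
from math import factorial, perm

def permutations_count(N, n):
    """Number of permutations of N objects taken n at a time: N! / (N - n)!."""
    return factorial(N) // factorial(N - n)

# Illustrative values (an assumption): ordered selections of 2 objects from 5.
N, n = 5, 2
print(permutations_count(N, n), perm(N, n))
```

Because order matters, each combination of n objects is counted n! times, which is why the permutation count is never smaller than the combination count for the same N and n.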


Assigning Probabilities

Basic Requirements for Assigning Probabilities

1. The probability assigned to each experimental

outcome must be between 0 and 1, inclusive.

0 ≤ P(Ei) ≤ 1 for all i

where:

Ei is the ith experimental outcome

and P(Ei) is its probability


Assigning Probabilities

Basic Requirements for Assigning Probabilities

2. The sum of the probabilities for all experimental

outcomes must equal 1.

P(E1) + P(E2) + . . . + P(En) = 1

where:

n is the number of experimental outcomes
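The two basic requirements can be expressed as a simple check. This is a hedged sketch: the function name `is_valid_assignment` and the tolerance parameter `tol` are assumptions introduced here to absorb floating-point rounding.

```python
import math

def is_valid_assignment(probs, tol=1e-9):
    """Check both requirements: each P(Ei) lies in [0, 1] and the P(Ei) sum to 1."""
    in_range = all(0.0 <= p <= 1.0 for p in probs)
    sums_to_one = math.isclose(sum(probs), 1.0, abs_tol=tol)
    return in_range and sums_to_one

print(is_valid_assignment([1 / 6] * 6))   # six equally likely outcomes
print(is_valid_assignment([0.5, 0.6]))    # violates requirement 2
print(is_valid_assignment([1.2, -0.2]))   # violates requirement 1
```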


Assigning Probabilities

Classical Method

Assigning probabilities based on the assumption

of equally likely outcomes

Relative Frequency Method

Assigning probabilities based on experimentation

or historical data

Subjective Method

Assigning probabilities based on judgment


Classical Method

Example: Rolling a Die

If an experiment has n possible outcomes, the

classical method would assign a probability of 1/n

to each outcome.

Experiment: Rolling a die

Sample Space: S = {1, 2, 3, 4, 5, 6}

Probabilities: Each sample point has a

1/6 chance of occurring


Relative Frequency Method

Example: Lucas Tool Rental

Lucas Tool Rental would like to assign probabilities

to the number of car polishers it rents each day.

Office records show the following frequencies of daily

rentals for the last 40 days.

Number of            Number
Polishers Rented     of Days
       0                4
       1                6
       2               18
       3               10
       4                2


Relative Frequency Method

Example: Lucas Tool Rental

Each probability assignment is given by dividing

the frequency (number of days) by the total frequency

(total number of days).

Number of            Number
Polishers Rented     of Days     Probability
       0                4           .10  (= 4/40)
       1                6           .15
       2               18           .45
       3               10           .25
       4                2           .05
                       40          1.00
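The relative frequency calculation for Lucas Tool Rental can be reproduced in a few lines. This is a sketch only; the variable names are assumptions, and the frequencies are the ones given in the example.

```python
# Days on which each number of polishers was rented (from the office records).
days_by_rentals = {0: 4, 1: 6, 2: 18, 3: 10, 4: 2}

total_days = sum(days_by_rentals.values())

# Relative frequency method: probability = frequency / total frequency.
probabilities = {k: days / total_days for k, days in days_by_rentals.items()}

for k, p in probabilities.items():
    print(f"{k} polishers: {p:.2f}")
```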


Subjective Method

When economic conditions and a company’s

circumstances change rapidly, it might be

inappropriate to assign probabilities based solely on

historical data.

We can use any data available as well as our

experience and intuition, but ultimately a probability

value should express our degree of belief that the

experimental outcome will occur.

The best probability estimates often are obtained by

combining the estimates from the classical or relative

frequency approach with the subjective estimate.


Subjective Method

Example: Bradley Investments

An analyst made the following probability estimates.

Exper. Outcome    Net Gain or Loss    Probability
(10, 8)           $18,000 Gain        .20
(10, -2)          $8,000 Gain         .08
(5, 8)            $13,000 Gain        .16
(5, -2)           $3,000 Gain         .26
(0, 8)            $8,000 Gain         .10
(0, -2)           $2,000 Loss         .12
(-20, 8)          $12,000 Loss        .02
(-20, -2)         $22,000 Loss        .06


Events and Their Probabilities

An event is a collection of sample points.

The probability of any event is equal to the sum of

the probabilities of the sample points in the event.

If we can identify all the sample points of an

experiment and assign a probability to each, we

can compute the probability of an event.


Events and Their Probabilities

Example: Bradley Investments

Event M = Markley Oil Profitable

M = {(10, 8), (10, -2), (5, 8), (5, -2)}

P(M) = P(10, 8) + P(10, -2) + P(5, 8) + P(5, -2)

= .20 + .08 + .16 + .26

= .70


Events and Their Probabilities

Example: Bradley Investments

Event C = Collins Mining Profitable

C = {(10, 8), (5, 8), (0, 8), (-20, 8)}

P(C) = P(10, 8) + P(5, 8) + P(0, 8) + P(-20, 8)

= .20 + .16 + .10 + .02

= .48
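The rule that an event's probability is the sum of its sample-point probabilities can be sketched as follows. The dictionary `probs` holds the analyst's estimates from the Bradley example; the helper name `event_probability` and the way the events are built are assumptions for illustration.

```python
# Sample points (Markley Oil result, Collins Mining result) and their probabilities.
probs = {
    (10, 8): 0.20, (10, -2): 0.08,
    (5, 8): 0.16,  (5, -2): 0.26,
    (0, 8): 0.10,  (0, -2): 0.12,
    (-20, 8): 0.02, (-20, -2): 0.06,
}

def event_probability(event):
    """Sum the probabilities of the sample points in the event."""
    return sum(probs[point] for point in event)

# Event M: Markley Oil profitable; event C: Collins Mining profitable.
M = {point for point in probs if point[0] > 0}
C = {point for point in probs if point[1] > 0}

print(round(event_probability(M), 2), round(event_probability(C), 2))
```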


Some Basic Relationships of Probability

There are some basic probability relationships that

can be used to compute the probability of an event

without knowledge of all the sample point probabilities.

Complement of an Event

Union of Two Events

Intersection of Two Events

Mutually Exclusive Events


Complement of an Event

The complement of event A is defined to be the event

consisting of all sample points that are not in A.

The complement of A is denoted by A^c.

[Venn diagram: sample space S divided into event A and its complement A^c]


Union of Two Events

The union of events A and B is the event containing

all sample points that are in A or B or both.

The union of events A and B is denoted by A ∪ B.

[Venn diagram: events A and B within sample space S; the union covers both regions]


Union of Two Events

Example: Bradley Investments

Event M = Markley Oil Profitable

Event C = Collins Mining Profitable

M ∪ C = Markley Oil Profitable

or Collins Mining Profitable (or both)

M ∪ C = {(10, 8), (10, -2), (5, 8), (5, -2), (0, 8), (-20, 8)}

P(M ∪ C) = P(10, 8) + P(10, -2) + P(5, 8) + P(5, -2)

+ P(0, 8) + P(-20, 8)

= .20 + .08 + .16 + .26 + .10 + .02

= .82


Intersection of Two Events

The intersection of events A and B is the set of all

sample points that are in both A and B.

The intersection of events A and B is denoted by A ∩ B.

[Venn diagram: events A and B within sample space S; the overlapping region is the intersection of A and B]


Intersection of Two Events

Example: Bradley Investments

Event M = Markley Oil Profitable

Event C = Collins Mining Profitable

M ∩ C = Markley Oil Profitable

and Collins Mining Profitable

M ∩ C = {(10, 8), (5, 8)}

P(M ∩ C) = P(10, 8) + P(5, 8)

= .20 + .16

= .36


Addition Law

The addition law provides a way to compute the

probability of event A, or B, or both A and B occurring.

The law is written as:

P(A ∪ B) = P(A) + P(B) - P(A ∩ B)


Addition Law

Example: Bradley Investments

Event M = Markley Oil Profitable

Event C = Collins Mining Profitable

M ∪ C = Markley Oil Profitable

or Collins Mining Profitable

We know: P(M) = .70, P(C) = .48, P(M ∩ C) = .36

Thus: P(M ∪ C) = P(M) + P(C) - P(M ∩ C)

= .70 + .48 - .36

= .82

(This result is the same as that obtained earlier

using the definition of the probability of an event.)
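The agreement between the addition law and the direct sum over sample points can be verified with set operations. This is a minimal sketch; the names `probs`, `prob`, `M`, and `C` are assumptions, and the probabilities are the analyst's estimates from the Bradley example.

```python
# Sample points (Markley Oil result, Collins Mining result) and their probabilities.
probs = {
    (10, 8): 0.20, (10, -2): 0.08,
    (5, 8): 0.16,  (5, -2): 0.26,
    (0, 8): 0.10,  (0, -2): 0.12,
    (-20, 8): 0.02, (-20, -2): 0.06,
}

def prob(event):
    """Probability of an event as the sum over its sample points."""
    return sum(probs[point] for point in event)

M = {point for point in probs if point[0] > 0}   # Markley Oil profitable
C = {point for point in probs if point[1] > 0}   # Collins Mining profitable

direct = prob(M | C)                           # sum over the union's sample points
by_law = prob(M) + prob(C) - prob(M & C)       # addition law

print(round(direct, 2), round(by_law, 2))
```

Subtracting P(M ∩ C) matters because the sample points (10, 8) and (5, 8) belong to both events and would otherwise be counted twice.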


Mutually Exclusive Events

Two events are said to be mutually exclusive if the

events have no sample points in common.

Two events are mutually exclusive if, when one event

occurs, the other cannot occur.

[Venn diagram: events A and B shown as non-overlapping regions of sample space S]


Mutually Exclusive Events

If events A and B are mutually exclusive, P(A ∩ B) = 0.

The addition law for mutually exclusive events is:

P(A ∪ B) = P(A) + P(B)

There is no need to include “- P(A ∩ B)”.


Conditional Probability

The probability of an event given that another event

has occurred is called a conditional probability.

The conditional probability of A given B is denoted

by P(A|B).

A conditional probability is computed as follows:

$$P(A \mid B) = \frac{P(A \cap B)}{P(B)}$$


Conditional Probability

Example: Bradley Investments

Event M = Markley Oil Profitable

Event C = Collins Mining Profitable

P(C | M) = probability that Collins Mining is profitable

given that Markley Oil is profitable

We know: P(M ∩ C) = .36, P(M) = .70

Thus:

$$P(C \mid M) = \frac{P(C \cap M)}{P(M)} = \frac{.36}{.70} = .5143$$
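The conditional probability calculation is a single division, sketched here with a guard for the case where the conditioning event has zero probability. The function name `conditional_probability` is an assumption introduced for illustration.

```python
def conditional_probability(p_joint, p_given):
    """P(A|B) = P(A ∩ B) / P(B); undefined when P(B) is zero."""
    if p_given <= 0:
        raise ValueError("the conditioning event must have positive probability")
    return p_joint / p_given

# Bradley example: P(M ∩ C) = .36 and P(M) = .70.
p_c_given_m = conditional_probability(0.36, 0.70)
print(round(p_c_given_m, 4))
```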


Multiplication Law

The multiplication law provides a way to compute the

probability of the intersection of two events.

The law is written as:

P(A ∩ B) = P(B)P(A|B)


Multiplication Law

Example: Bradley Investments

Event M = Markley Oil Profitable

Event C = Collins Mining Profitable

M ∩ C = Markley Oil Profitable

and Collins Mining Profitable

We know: P(M) = .70, P(C|M) = .5143

Thus: P(M ∩ C) = P(M)P(C|M)

= (.70)(.5143)

= .36

(This result is the same as that obtained earlier

using the definition of the probability of an event.)


Joint Probability Table

                          Collins Mining
Markley Oil            Profitable (C)   Not Profitable (C^c)   Total
Profitable (M)              .36               .34               .70
Not Profitable (M^c)        .12               .18               .30
Total                       .48               .52              1.00

Joint probabilities appear in the body of the table.

Marginal probabilities appear in the margins of the table.
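The marginal probabilities can be recovered from the joint probabilities by summing across a row or down a column. The sketch below assumes a dictionary layout keyed by (Markley label, Collins label); the labels "Mc" and "Cc" and the helper `marginal` are naming assumptions standing in for the complements.

```python
# Joint probabilities from the body of the table.
joint = {
    ("M", "C"): 0.36,  ("M", "Cc"): 0.34,
    ("Mc", "C"): 0.12, ("Mc", "Cc"): 0.18,
}

def marginal(joint, index, label):
    """Sum the joint probabilities whose key matches `label` at position `index`."""
    return sum(p for key, p in joint.items() if key[index] == label)

p_m = marginal(joint, 0, "M")   # row total for Markley Oil profitable
p_c = marginal(joint, 1, "C")   # column total for Collins Mining profitable

print(round(p_m, 2), round(p_c, 2))
```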


Independent Events

If the probability of event A is not changed by the

existence of event B, we would say that events A

and B are independent.

Two events A and B are independent if:

P(A|B) = P(A)

or

P(B|A) = P(B)


Multiplication Law

for Independent Events

The multiplication law also can be used as a test to see

if two events are independent.

The law is written as:

P(A ∩ B) = P(A)P(B)


Multiplication Law

for Independent Events

Example: Bradley Investments

Event M = Markley Oil Profitable

Event C = Collins Mining Profitable

Are events M and C independent?

Does P(M ∩ C) = P(M)P(C)?

We know: P(M ∩ C) = .36, P(M) = .70, P(C) = .48

But: P(M)P(C) = (.70)(.48) = .336 ≈ .34, not .36

Hence: M and C are not independent.
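The independence test amounts to comparing the joint probability with the product of the marginals. In this sketch the function name `are_independent` and the tolerance `tol` are assumptions; the tolerance only absorbs floating-point rounding and is not part of the definition.

```python
import math

def are_independent(p_joint, p_a, p_b, tol=1e-9):
    """Events are independent when P(A ∩ B) equals P(A)P(B)."""
    return math.isclose(p_joint, p_a * p_b, abs_tol=tol)

# Bradley example: P(M ∩ C) = .36, P(M) = .70, P(C) = .48.
print(are_independent(0.36, 0.70, 0.48))
# Illustrative contrast (an assumption): two fair coin tosses both landing heads.
print(are_independent(0.25, 0.5, 0.5))
```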


Mutual Exclusiveness and Independence

Do not confuse the notion of mutually exclusive

events with that of independent events.

Two events with nonzero probabilities cannot be

both mutually exclusive and independent.

If one mutually exclusive event is known to occur,

the other cannot occur; thus, the probability of the

other event occurring is reduced to zero (and they

are therefore dependent).

Two events that are not mutually exclusive might

or might not be independent.


Bayes’ Theorem

Often we begin probability analysis with initial or

prior probabilities.

Then, from a sample, special report, or a product

test we obtain some additional information.

Given this information, we calculate revised or

posterior probabilities.

Bayes’ theorem provides the means for revising the

prior probabilities.

Prior Probabilities → New Information → Application of Bayes’ Theorem → Posterior Probabilities


Bayes’ Theorem

Example: L. S. Clothiers

A proposed shopping center will provide strong

competition for downtown businesses like L. S.

Clothiers. If the shopping center is built, the owner

of L. S. Clothiers feels it would be best to relocate to

the shopping center.

The shopping center cannot be built unless a

zoning change is approved by the town council.

The planning board must first make a

recommendation, for or against the zoning change,

to the council.


Prior Probabilities

Example: L. S. Clothiers

Let:

A1 = town council approves the zoning change

A2 = town council disapproves the change

Using subjective judgment:

P(A1) = .7, P(A2) = .3


New Information

Example: L. S. Clothiers

The planning board has recommended against

the zoning change. Let B denote the event of a

negative recommendation by the planning board.

Given that B has occurred, should L. S. Clothiers

revise the probabilities that the town council will

approve or disapprove the zoning change?


Conditional Probabilities

Example: L. S. Clothiers

Past history with the planning board and the town

council indicates the following:

P(B|A1) = .2    P(B|A2) = .9

Hence:

P(B^c|A1) = .8    P(B^c|A2) = .1


Tree Diagram

Example: L. S. Clothiers

Town Council    Planning Board      Experimental Outcomes
P(A1) = .7      P(B|A1) = .2        P(A1 ∩ B) = .14
                P(B^c|A1) = .8      P(A1 ∩ B^c) = .56
P(A2) = .3      P(B|A2) = .9        P(A2 ∩ B) = .27
                P(B^c|A2) = .1      P(A2 ∩ B^c) = .03


Bayes’ Theorem

To find the posterior probability that event Ai will

occur given that event B has occurred, we apply

Bayes’ theorem.

$$P(A_i \mid B) = \frac{P(A_i)P(B \mid A_i)}{P(A_1)P(B \mid A_1) + P(A_2)P(B \mid A_2) + \cdots + P(A_n)P(B \mid A_n)}$$

Bayes’ theorem is applicable when the events for

which we want to compute posterior probabilities

are mutually exclusive and their union is the entire

sample space.
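Bayes’ theorem translates directly into a short function. The following is a sketch under the stated condition that the events Ai are mutually exclusive and exhaustive; the name `posterior_probabilities` and its list-based interface are assumptions, and the sample inputs are the L. S. Clothiers priors and conditional probabilities.

```python
def posterior_probabilities(priors, likelihoods):
    """Return P(Ai|B) for each i, given P(Ai) and P(B|Ai).

    Assumes the events Ai are mutually exclusive and their union is the
    entire sample space, so the joint probabilities sum to P(B).
    """
    joints = [p * l for p, l in zip(priors, likelihoods)]  # P(Ai ∩ B)
    p_b = sum(joints)                                      # P(B)
    if p_b == 0:
        raise ValueError("P(B) is zero; posterior probabilities are undefined")
    return [j / p_b for j in joints]

# L. S. Clothiers: P(A1) = .7, P(A2) = .3, P(B|A1) = .2, P(B|A2) = .9.
posteriors = posterior_probabilities([0.7, 0.3], [0.2, 0.9])
print([round(p, 4) for p in posteriors])
```

The intermediate list `joints` and the sum `p_b` correspond to the joint-probability column and its total in a tabular layout of the same calculation.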


Posterior Probabilities

Example: L. S. Clothiers

Given the planning board’s recommendation not

to approve the zoning change, we revise the prior

probabilities as follows:

$$P(A_1 \mid B) = \frac{P(A_1)P(B \mid A_1)}{P(A_1)P(B \mid A_1) + P(A_2)P(B \mid A_2)} = \frac{(.7)(.2)}{(.7)(.2) + (.3)(.9)} = .34$$


Posterior Probabilities

Example: L. S. Clothiers

The planning board’s recommendation is good

news for L. S. Clothiers. The posterior probability of

the town council approving the zoning change is .34

compared to a prior probability of .70.


Bayes’ Theorem: Tabular Approach

Example: L. S. Clothiers

• Step 1

Prepare the following three columns:

Column 1 - The mutually exclusive events for

which posterior probabilities are desired.

Column 2 - The prior probabilities for the events.

Column 3 - The conditional probabilities of the

new information given each event.


Bayes’ Theorem: Tabular Approach

Example: L. S. Clothiers

• Step 1

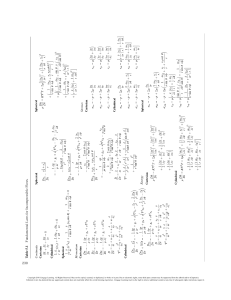

(1)

(2)

(3)

(4)

(5)

Conditional

Prior

Events Probabilities Probabilities

Ai

P(Ai)

P(B|Ai)

A1

.7

.2

A2

.3

.9

1.0


Bayes’ Theorem: Tabular Approach

Example: L. S. Clothiers

• Step 2

Prepare the fourth column:

Column 4

Compute the joint probabilities for each event and

the new information B by using the multiplication

law.

Multiply the prior probabilities in column 2 by

the corresponding conditional probabilities in

column 3. That is, P(Ai ∩ B) = P(Ai) P(B|Ai).


Bayes’ Theorem: Tabular Approach

Example: L. S. Clothiers

• Step 2

(1)       (2)              (3)              (4)                  (5)
          Prior            Conditional      Joint
Events    Probabilities    Probabilities    Probabilities
Ai        P(Ai)            P(B|Ai)          P(Ai ∩ B)
A1        .7               .2               .14  (= .7 x .2)
A2        .3               .9               .27
          1.0


Bayes’ Theorem: Tabular Approach

Example: L. S. Clothiers

• Step 2 (continued)

We see that there is a .14 probability of the town

council approving the zoning change and a

negative recommendation by the planning board.

There is a .27 probability of the town council

disapproving the zoning change and a negative

recommendation by the planning board.


Bayes’ Theorem: Tabular Approach

Example: L. S. Clothiers

• Step 3

Sum the joint probabilities in Column 4. The

sum is the probability of the new information,

P(B). The sum .14 + .27 shows an overall

probability of .41 of a negative recommendation

by the planning board.


Bayes’ Theorem: Tabular Approach

Example: L. S. Clothiers

• Step 3

(1)       (2)              (3)              (4)              (5)
          Prior            Conditional      Joint
Events    Probabilities    Probabilities    Probabilities
Ai        P(Ai)            P(B|Ai)          P(Ai ∩ B)
A1        .7               .2               .14
A2        .3               .9               .27
          1.0                               P(B) = .41


Bayes’ Theorem: Tabular Approach

Example: L. S. Clothiers

• Step 4

Prepare the fifth column:

Column 5

Compute the posterior probabilities using the

basic relationship of conditional probability.

$$P(A_i \mid B) = \frac{P(A_i \cap B)}{P(B)}$$

The joint probabilities P(Ai ∩ B) are in column 4

and the probability P(B) is the sum of column 4.


Bayes’ Theorem: Tabular Approach

Example: L. S. Clothiers

• Step 4

(1)       (2)              (3)              (4)              (5)
          Prior            Conditional      Joint            Posterior
Events    Probabilities    Probabilities    Probabilities    Probabilities
Ai        P(Ai)            P(B|Ai)          P(Ai ∩ B)        P(Ai|B)
A1        .7               .2               .14              .3415  (= .14/.41)
A2        .3               .9               .27              .6585
          1.0                               P(B) = .41       1.0000


Example: Incidence of a rare disease

Only 1 in 1000 adults is afflicted with a rare

disease for which a diagnostic test has been

developed. The test is such that when an

individual actually has the disease, a positive

result will occur 99% of the time, whereas an

individual without the disease will show a

positive test result only 2% of the time. If a

randomly selected individual is tested and the

result is positive, what is the probability that the

individual has the disease?
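One way to work this example is to apply Bayes’ theorem with two mutually exclusive events: the individual has the disease, or does not. The sketch below is an illustration rather than a solution given on the slides; the variable names are assumptions, and the three input rates are taken from the problem statement.

```python
# Inputs from the problem statement.
p_disease = 0.001              # prior: 1 in 1000 adults has the disease
p_pos_given_disease = 0.99     # positive result when the disease is present
p_pos_given_healthy = 0.02     # positive result when the disease is absent

# Joint probabilities of each state together with a positive result.
joint_disease = p_disease * p_pos_given_disease
joint_healthy = (1 - p_disease) * p_pos_given_healthy

# Overall probability of a positive result, then the posterior.
p_positive = joint_disease + joint_healthy
p_disease_given_pos = joint_disease / p_positive

print(round(p_disease_given_pos, 4))
```

The posterior stays small because the disease is so rare that positive results from the much larger disease-free group outnumber those from afflicted individuals.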


End of Chapter 4


Chapter 5

Discrete Probability Distributions

Random Variables

Discrete Probability Distributions

Expected Value and Variance

Binomial Probability Distribution

Poisson Probability Distribution

Hypergeometric Probability Distribution


Random Variables

A random variable is a numerical description of the

outcome of an experiment.

A discrete random variable may assume either a

finite number of values or an infinite sequence of

values.

A continuous random variable may assume any

numerical value in an interval or collection of

intervals.


Discrete Random Variable

with a Finite Number of Values

Example: JSL Appliances

Let x = number of TVs sold at the store in one day,

where x can take on 5 values (0, 1, 2, 3, 4)

We can count the TVs sold, and there is a finite

upper limit on the number that might be sold (which

is the number of TVs in stock).


Discrete Random Variable

with an Infinite Sequence of Values

Example: JSL Appliances

Let x = number of customers arriving in one day,

where x can take on the values 0, 1, 2, . . .

We can count the customers arriving, but there is

no finite upper limit on the number that might arrive.


Random Variables

Question                        Random Variable x                          Type
Family size                     x = Number of dependents reported          Discrete
                                on tax return
Distance from home to store     x = Distance in miles from home to         Continuous
                                the store site
Own dog or cat                  x = 1 if own no pet;                       Discrete
                                  = 2 if own dog(s) only;
                                  = 3 if own cat(s) only;
                                  = 4 if own dog(s) and cat(s)


Discrete Probability Distributions

The probability distribution for a random variable

describes how probabilities are distributed over

the values of the random variable.

We can describe a discrete probability distribution

with a table, graph, or formula.


Discrete Probability Distributions

The probability distribution is defined by a

probability function, denoted by f(x), which provides

the probability for each value of the random variable.

The required conditions for a discrete probability

function are:

$f(x) \geq 0$

$\sum f(x) = 1$
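The two required conditions (no negative probabilities, and probabilities that sum to 1) can be checked mechanically. The following is a minimal sketch; the function name `is_valid_pmf` and the fair-coin example are illustrative assumptions, not part of the slides.

```python
import math

def is_valid_pmf(f):
    """Check the two required conditions for a discrete probability function.

    `f` maps each value of the random variable to its probability.
    """
    nonnegative = all(p >= 0 for p in f.values())
    # isclose() tolerates floating-point rounding in the sum.
    sums_to_one = math.isclose(sum(f.values()), 1.0)
    return nonnegative and sums_to_one

# Hypothetical example: a fair coin coded as 0 = tails, 1 = heads.
coin = {0: 0.5, 1: 0.5}
print(is_valid_pmf(coin))               # both conditions hold
print(is_valid_pmf({0: 0.7, 1: 0.5}))   # fails: probabilities sum to 1.2
```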


Slide 70

Discrete Probability Distributions

Example: JSL Appliances

Using past data on TV sales, a tabular representation of the probability distribution for TV sales was developed.

Units Sold    Number of Days        x     f(x)
    0               80              0     .40    (= 80/200)
    1               50              1     .25
    2               40              2     .20
    3               10              3     .05
    4               20              4     .10
                   ---                   ----
                   200                   1.00
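Each probability in the table is a relative frequency: the number of days with a given sales count divided by the 200 days observed. A short sketch of that calculation follows; the variable names are illustrative.

```python
# Number of days on which 0, 1, 2, 3, or 4 TVs were sold (from the table above).
days = {0: 80, 1: 50, 2: 40, 3: 10, 4: 20}

total_days = sum(days.values())

# f(x) = (days with x units sold) / (total days observed)
f = {x: count / total_days for x, count in days.items()}

for x, p in f.items():
    print(f"f({x}) = {p:.2f}")
```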

Slide 71

Discrete Probability Distributions

Example: JSL Appliances

[Figure: graphical representation of the probability distribution. Vertical axis: Probability (0 to .50). Horizontal axis: Values of Random Variable x (TV sales), 0 to 4.]


Slide 72

Discrete Uniform Probability Distribution

The discrete uniform probability distribution is the

simplest example of a discrete probability

distribution given by a formula.

The discrete uniform probability function is

$f(x) = 1/n$

where:

n = the number of values the random variable may assume

The values of the random variable are equally likely.
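As an illustration of f(x) = 1/n, the sketch below builds a discrete uniform distribution for a fair six-sided die. The die is a hypothetical example that does not appear in the slides, and `discrete_uniform_pmf` is an illustrative name.

```python
from fractions import Fraction

def discrete_uniform_pmf(values):
    """Assign probability 1/n to each of the n possible values."""
    n = len(values)
    return {x: Fraction(1, n) for x in values}

# Hypothetical example: one roll of a fair die, n = 6.
die = discrete_uniform_pmf([1, 2, 3, 4, 5, 6])
print(die[3])              # probability of rolling a 3
print(sum(die.values()))   # the probabilities sum to 1
```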


Slide 73

Expected Value

The expected value, or mean, of a random variable

is a measure of its central location.

$E(x) = \mu = \sum x f(x)$

The expected value is a weighted average of the

values the random variable may assume. The

weights are the probabilities.

The expected value does not have to be a value the

random variable can assume.


Slide 74

Variance and Standard Deviation

The variance summarizes the variability in the

values of a random variable.

$\mathrm{Var}(x) = \sigma^2 = \sum (x - \mu)^2 f(x)$

The variance is a weighted average of the squared

deviations of a random variable from its mean. The

weights are the probabilities.

The standard deviation, $\sigma$, is defined as the positive

square root of the variance.


Slide 75

Expected Value

Example: JSL Appliances

 x      f(x)     xf(x)
 0      .40       .00
 1      .25       .25
 2      .20       .40
 3      .05       .15
 4      .10       .40
                 ----
       E(x) =    1.20    (expected number of TVs sold in a day)
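The expected value is the probability-weighted sum of the values. A minimal sketch using the JSL Appliances distribution; the variable names are illustrative.

```python
# JSL Appliances: probability distribution for daily TV sales.
f = {0: 0.40, 1: 0.25, 2: 0.20, 3: 0.05, 4: 0.10}

# E(x) = sum of x * f(x) over all values of x
expected_value = sum(x * p for x, p in f.items())

print(f"E(x) = {expected_value:.2f}")   # prints E(x) = 1.20
```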


Slide 76

Variance

Example: JSL Appliances

 x     $x - \mu$    $(x - \mu)^2$    f(x)    $(x - \mu)^2 f(x)$
 0       -1.2           1.44         .40          .576
 1       -0.2           0.04         .25          .010
 2        0.8           0.64         .20          .128
 3        1.8           3.24         .05          .162
 4        2.8           7.84         .10          .784

Variance of daily sales = $\sigma^2$ = 1.660 TVs squared

Standard deviation of daily sales = 1.2884 TVs
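The same table can be reproduced in a few lines: compute the mean, then the probability-weighted squared deviations, then the square root. This is a sketch with illustrative variable names.

```python
import math

# JSL Appliances: probability distribution for daily TV sales.
f = {0: 0.40, 1: 0.25, 2: 0.20, 3: 0.05, 4: 0.10}

mu = sum(x * p for x, p in f.items())

# Var(x) = sum of (x - mu)^2 * f(x); the unit is "TVs squared".
variance = sum((x - mu) ** 2 * p for x, p in f.items())

# The standard deviation is the positive square root of the variance.
std_dev = math.sqrt(variance)

print(f"variance = {variance:.3f}, standard deviation = {std_dev:.4f}")
```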


Slide 77

Binomial Probability Distribution

Four Properties of a Binomial Experiment

1. The experiment consists of a sequence of n

identical trials.

2. Two outcomes, success and failure, are possible

on each trial.

3. The probability of a success, denoted by p, does

not change from trial to trial (the stationarity

assumption).

4. The trials are independent.


Slide 78

Binomial Probability Distribution

Our interest is in the number of successes

occurring in the n trials.

We let x denote the number of successes

occurring in the n trials.


Slide 79

Binomial Probability Distribution

Binomial Probability Function

$f(x) = \frac{n!}{x!(n - x)!} p^x (1 - p)^{(n - x)}$

where:

x = the number of successes

p = the probability of a success on one trial

n = the number of trials

f(x) = the probability of x successes in n trials
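The binomial probability function translates directly into code. In this sketch `binomial_pmf` is an illustrative name, `math.comb` supplies n!/(x!(n - x)!), and the coin-toss call is a hypothetical example rather than one from the slides.

```python
from math import comb

def binomial_pmf(x, n, p):
    """Probability of exactly x successes in n independent trials."""
    # comb(n, x) counts the outcomes with x successes; the remaining
    # factor is the probability of any one such sequence.
    return comb(n, x) * p**x * (1 - p) ** (n - x)

# Hypothetical example: exactly 2 heads in 4 tosses of a fair coin.
print(binomial_pmf(2, 4, 0.5))   # 6 * 0.0625 = 0.375
```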


Slide 80

Binomial Probability Distribution

Binomial Probability Function

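$f(x) = \frac{n!}{x!(n - x)!} p^x (1 - p)^{(n - x)}$

The first factor, $\frac{n!}{x!(n - x)!}$, is the number of experimental outcomes providing exactly x successes in n trials.

The second factor, $p^x (1 - p)^{(n - x)}$, is the probability of a particular sequence of trial outcomes with x successes in n trials.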


Slide 81

Binomial Probability Distribution

Example: Evans Electronics

Evans Electronics is concerned about a low

retention rate for its employees. In recent years,

management has seen a turnover of 10% of the

hourly employees annually.

Thus, for any hourly employee chosen at random,

management estimates a probability of 0.1 that the

person will not be with the company next year.

Choosing 3 hourly employees at random, what is

the probability that exactly 1 of them will leave the

company this year?


Slide 82

Binomial Probability Distribution

Example: Evans Electronics

The probability of the first employee leaving and the

second and third employees staying, denoted (S, F, F)

with leaving counted as a success (S) and staying as a

failure (F), is given by

p(1 – p)(1 – p)

With a .10 probability of an employee leaving on any

one trial, the probability of an employee leaving on

the first trial and not on the second and third trials is

given by

$(.10)(.90)(.90) = (.10)(.90)^2 = .081$


Slide 83

Binomial Probability Distribution

Example: Evans Electronics

Two other experimental outcomes also result in one

success and two failures. The probabilities for all

three experimental outcomes involving one success

follow.

Experimental Outcome     Probability of Experimental Outcome
(S, F, F)                p(1 – p)(1 – p) = (.1)(.9)(.9) = .081
(F, S, F)                (1 – p)p(1 – p) = (.9)(.1)(.9) = .081
(F, F, S)                (1 – p)(1 – p)p = (.9)(.9)(.1) = .081
                                                  Total = .243


Slide 84

Binomial Probability Distribution

Example: Evans Electronics

Let: p = .10, n = 3, x = 1

Using the probability function:

$f(x) = \frac{n!}{x!(n - x)!} p^x (1 - p)^{(n - x)}$

$f(1) = \frac{3!}{1!(3 - 1)!} (0.1)^1 (0.9)^2 = 3(.1)(.81) = .243$


Slide 85

Binomial Probability Distribution

Example: Evans Electronics

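Using a tree diagram

1st Worker      2nd Worker      3rd Worker      x      Prob.
Leaves (.1)     Leaves (.1)     Leaves (.1)     3      .0010
Leaves (.1)     Leaves (.1)     Stays (.9)      2      .0090
Leaves (.1)     Stays (.9)      Leaves (.1)     2      .0090
Leaves (.1)     Stays (.9)      Stays (.9)      1      .0810
Stays (.9)      Leaves (.1)     Leaves (.1)     2      .0090
Stays (.9)      Leaves (.1)     Stays (.9)      1      .0810
Stays (.9)      Stays (.9)      Leaves (.1)     1      .0810
Stays (.9)      Stays (.9)      Stays (.9)      0      .7290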
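The tree diagram amounts to enumerating all 2^3 leave/stay sequences and adding up the path probabilities for each value of x. The sketch below does that enumeration; the labels "L" (leaves) and "S" (stays) follow the tree, and the variable names are illustrative.

```python
from itertools import product

p_leave = 0.10
prob = {"L": p_leave, "S": 1 - p_leave}   # L = leaves, S = stays

# dist[x] accumulates the probability that exactly x workers leave.
dist = {}
for outcome in product("LS", repeat=3):    # the 8 branches of the tree
    x = outcome.count("L")
    path_prob = 1.0
    for worker in outcome:
        path_prob *= prob[worker]          # trials are independent
    dist[x] = dist.get(x, 0.0) + path_prob

for x in sorted(dist):
    print(x, round(dist[x], 4))
```

The entry for x = 1 collects the three branches with probability .0810 each, which agrees with the .243 obtained from the probability function.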


Slide 86

Binomial Probabilities

and Cumulative Probabilities

Statisticians have developed tables that give

probabilities and cumulative probabilities for a

binomial random variable.

These tables can be found in some statistics

textbooks.

With modern calculators and the capability of

statistical software packages, such tables are

almost unnecessary.
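As one illustration of replacing a table lookup with software, a cumulative binomial probability is just a running sum of the probability function. This sketch uses only the Python standard library; `binomial_cdf` is an illustrative name, and the call reuses the Evans Electronics values n = 3 and p = .1.

```python
from math import comb

def binomial_cdf(x, n, p):
    """P(at most x successes in n trials): sum of f(0), f(1), ..., f(x)."""
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(x + 1))

# Evans Electronics: probability that at most 1 of 3 employees leaves,
# which is f(0) + f(1) = .729 + .243.
print(round(binomial_cdf(1, 3, 0.10), 3))
```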


Slide 87

Binomial Probability Distribution

Expected Value

$E(x) = \mu = np$

Variance

$\mathrm{Var}(x) = \sigma^2 = np(1 - p)$

Standard Deviation

$\sigma = \sqrt{np(1 - p)}$


Slide 88

Binomial Probability Distribution

Example: Evans Electronics

• Expected Value

E(x) = np = 3(.1) = .3 employees out of 3

• Variance

Var(x) = np(1 – p) = 3(.1)(.9) = .27

• Standard Deviation

$\sigma = \sqrt{3(.1)(.9)} = .52$ employees


Slide 89

Chapter 6

Continuous Probability Distributions

Uniform Probability Distribution

Normal Probability Distribution

Normal Approximation of Binomial Probabilities

Exponential Probability Distribution

[Figure: sketches of the uniform, normal, and exponential probability density functions, each plotting f(x) against x.]


Slide 90

Continuous Probability Distributions

A continuous random variable can assume any value

in an interval on the real line or in a collection of

intervals.

It is not possible to talk about the probability of the

random variable assuming a particular value.

Instead, we talk about the probability of the random

variable assuming a value within a given interval.


Slide 91

Continuous Probability Distributions

The probability of the random variable assuming a value within some given interval from x1 to x2 is defined to be the area under the graph of the probability density function between x1 and x2.

[Figure: uniform, normal, and exponential density curves f(x), each with the area between x1 and x2 shaded.]


Slide 92

Uniform Probability Distribution

A random variable is uniformly distributed

whenever the probability is proportional to the

interval’s length.

The uniform probability density function is:

f(x) = 1/(b – a)    for a < x < b
     = 0            elsewhere

where: a = smallest value the variable can assume
       b = largest value the variable can assume


Slide 93

Uniform Probability Distribution

Expected Value of x

E(x) = (a + b)/2

Variance of x

$\mathrm{Var}(x) = (b - a)^2/12$


Slide 94

Uniform Probability Distribution

Example: Slater's Buffet

Slater customers are charged for the amount of

salad they take. Sampling suggests that the amount

of salad taken is uniformly distributed between 5

ounces and 15 ounces.


Slide 95

Uniform Probability Distribution

Uniform Probability Density Function

f(x) = 1/10    for 5 < x < 15
     = 0       elsewhere

where:

x = salad plate filling weight


Slide 96

Uniform Probability Distribution

Expected Value of x

E(x) = (a + b)/2

= (5 + 15)/2

= 10

Variance of x

$\mathrm{Var}(x) = (b - a)^2/12$

$= (15 - 5)^2/12$

$= 8.33$
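Both formulas need only the endpoints a and b. A minimal sketch for Slater's Buffet, with a = 5 and b = 15 ounces; the variable names are illustrative.

```python
# Slater's Buffet: salad weight is uniform between a = 5 and b = 15 ounces.
a, b = 5, 15

mean = (a + b) / 2               # E(x) = (a + b)/2
variance = (b - a) ** 2 / 12     # Var(x) = (b - a)^2 / 12

print(f"E(x) = {mean:.0f} oz, Var(x) = {variance:.2f}")
```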


Slide 97

Uniform Probability Distribution

[Figure: Uniform Probability Distribution for Salad Plate Filling Weight. f(x) is constant at 1/10 for salad weights x between 5 and 15 oz. and 0 elsewhere.]


Slide 98

Uniform Probability Distribution

What is the probability that a customer

will take between 12 and 15 ounces of salad?

P(12 < x < 15) = (1/10)(3) = .3

[Figure: the uniform density f(x) = 1/10 over salad weights from 5 to 15 oz., with the area between 12 and 15 shaded.]
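For a uniform distribution the area over an interval is a rectangle: the width of the interval times the height 1/(b - a). The sketch below encodes that; `uniform_interval_prob` is an illustrative name, and clipping the interval to [a, b] is an added convenience not shown on the slide.

```python
def uniform_interval_prob(x1, x2, a, b):
    """P(x1 < x < x2) for a variable uniformly distributed between a and b."""
    # Only the part of (x1, x2) that overlaps [a, b] carries probability.
    lo = max(x1, a)
    hi = min(x2, b)
    if hi <= lo:
        return 0.0
    return (hi - lo) / (b - a)   # rectangle: width * height 1/(b - a)

# Slater's Buffet: probability of taking between 12 and 15 ounces of salad.
print(uniform_interval_prob(12, 15, 5, 15))   # (15 - 12)/10 = 0.3
```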


Slide 99

Area as a Measure of Probability

The area under the graph of f(x) and probability are

identical.

This is valid for all continuous random variables.

The probability that x takes on a value between some

lower value x1 and some higher value x2 can be found

by computing the area under the graph of f(x) over

the interval from x1 to x2.


Slide 100

Normal Probability Distribution

The normal probability distribution is the most

important distribution for describing a continuous

random variable.

It is widely used in statistical inference.

It has been used in a wide variety of applications

including:

• Heights of people

• Rainfall amounts

• Test scores

• Scientific measurements

Abraham de Moivre, a French mathematician and

author of The Doctrine of Chances, derived the

normal distribution in 1733.


Slide 101

Normal Probability Distribution

Normal Probability Density Function

$f(x) = \frac{1}{\sigma\sqrt{2\pi}} e^{-(x - \mu)^2 / 2\sigma^2}$

where:

$\mu$ = mean

$\sigma$ = standard deviation

$\pi$ = 3.14159

e = 2.71828
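The density function can be evaluated directly from its definition. The sketch below does so and cross-checks the result against `statistics.NormalDist` from the Python standard library; `normal_pdf` is an illustrative name, and the mean and standard deviation passed in are arbitrary illustrative values.

```python
import math
from statistics import NormalDist

def normal_pdf(x, mu, sigma):
    """Height of the normal curve at x for mean mu and std. deviation sigma."""
    coeff = 1 / (sigma * math.sqrt(2 * math.pi))
    exponent = -((x - mu) ** 2) / (2 * sigma ** 2)
    return coeff * math.exp(exponent)

# Illustrative values only: the curve's peak for mu = 0, sigma = 1,
# compared with the standard library's implementation.
print(normal_pdf(0, 0, 1))
print(NormalDist(0, 1).pdf(0))
```

Note that f(x) is the height of the curve, not a probability; probabilities come from areas under the curve.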


Slide 102

Normal Probability Distribution

Characteristics

The distribution is symmetric; its skewness

measure is zero.


Slide 103

Normal Probability Distribution

Characteristics

The entire family of normal probability

distributions is defined by its mean $\mu$ and its

standard deviation $\sigma$.

[Figure: normal curve over x with the mean $\mu$ and the standard deviation $\sigma$ marked.]


Slide 104

Normal Probability Distribution

Characteristics

The highest point on the normal curve is at the

mean, which is also the median and mode.


Slide 105

Normal Probability Distribution

Characteristics

The mean can be any numerical value: negative,

zero, or positive.

[Figure: normal curves along the x axis centered at -10, 0, and 25.]


Slide 106

Normal Probability Distribution

Characteristics

The standard deviation determines the width of the

curve: larger values result in wider, flatter curves.

[Figure: two normal curves over x, one with $\sigma = 15$ and a wider, flatter one with $\sigma = 25$.]


Slide 107

Normal Probability Distribution

Characteristics

Probabilities for the normal random variable are

given by areas under the curve. The total area

under the curve is 1 (.5 to the left of the mean and

.5 to the right).


Slide 108

Normal Probability Distribution

Characteristics (basis for the empirical rule)

68.26% of values of a normal random variable

are within +/- 1 standard deviation of its mean.

95.44% of values of a normal random variable

are within +/- 2 standard deviations of its mean.

99.72% of values of a normal random variable

are within +/- 3 standard deviations of its mean.
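These percentages are areas under the standard normal curve between -k and +k standard deviations, so they can be recomputed with `statistics.NormalDist` from the Python standard library. This is a sketch with illustrative variable names. Computed this way, the areas may differ from the figures above in the last digit, which is probably because the slide values were derived from a rounded four-decimal table.

```python
from statistics import NormalDist

z = NormalDist()   # standard normal: mean 0, standard deviation 1

# Area within +/- k standard deviations of the mean, for k = 1, 2, 3.
within = {k: z.cdf(k) - z.cdf(-k) for k in (1, 2, 3)}

for k, area in within.items():
    print(f"within +/- {k} standard deviation(s): {area:.2%}")
```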


Slide 109

Normal Probability Distribution

Characteristics (basis for the empirical rule)

[Figure: normal curve over x, with 68.26% of the area between $\mu - 1\sigma$ and $\mu + 1\sigma$, 95.44% between $\mu - 2\sigma$ and $\mu + 2\sigma$, and 99.72% between $\mu - 3\sigma$ and $\mu + 3\sigma$.]

Slide 110

Standard Normal Probability Distribution

Characteristics

A random variable having a normal distribution

with a mean of 0 and a standard deviation of 1 is

said to have a standard normal probability

distribution.


Slide 111

Standard Normal Probability Distribution

Characteristics

The letter z is used to designate the standard

normal random variable.

[Figure: standard normal curve over z, centered at 0 with $\sigma = 1$.]


Slide 112

Standard Normal Probability Distribution

Converting to the Standard Normal Distribution

$z = \frac{x - \mu}{\sigma}$

We can think of z as a measure of the number of

standard deviations x is from $\mu$.


Slide 113

Standard Normal Probability Distribution

Example: Pep Zone

Pep Zone sells auto parts and supplies including

a popular multi-grade motor oil. When the stock of

this oil drops to 20 gallons, a replenishment order is

placed.

The store manager is concerned that sales are

being lost due to stockouts while waiting for a

replenishment order.


Slide 114

Standard Normal Probability Distribution

Example: Pep Zone

It has been determined that demand during

replenishment lead-time is normally distributed

with a mean of 15 gallons and a standard deviation

of 6 gallons.

The manager would like to know the probability

of a stockout during replenishment lead-time. In

other words, what is the probability that demand

during lead-time will exceed 20 gallons?

P(x > 20) = ?


Slide 115

Standard Normal Probability Distribution

Solving for the Stockout Probability

Step 1: Convert x to the standard normal distribution.

$z = (x - \mu)/\sigma$

$= (20 - 15)/6$

$= .83$

Step 2: Find the area under the standard normal

curve to the left of z = .83.

see next slide


Slide 116

Standard Normal Probability Distribution

Cumulative Probability Table for

the Standard Normal Distribution

  z     .00    .01    .02    .03    .04    .05    .06    .07    .08    .09
  .      .      .      .      .      .      .      .      .      .      .
 .5    .6915  .6950  .6985  .7019  .7054  .7088  .7123  .7157  .7190  .7224
 .6    .7257  .7291  .7324  .7357  .7389  .7422  .7454  .7486  .7517  .7549
 .7    .7580  .7611  .7642  .7673  .7704  .7734  .7764  .7794  .7823  .7852
 .8    .7881  .7910  .7939  .7967  .7995  .8023  .8051  .8078  .8106  .8133
 .9    .8159  .8186  .8212  .8238  .8264  .8289  .8315  .8340  .8365  .8389
  .      .      .      .      .      .      .      .      .      .      .

P(z < .83) = .7967 (row .8, column .03)


Slide 117

Standard Normal Probability Distribution

Solving for the Stockout Probability

Step 3: Compute the area under the standard normal

curve to the right of z = .83.

P(z > .83) = 1 – P(z < .83)

= 1 – .7967

= .2033

The probability of a stockout is P(x > 20) = .2033.
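The three steps can be retraced with `statistics.NormalDist` from the Python standard library in place of the printed table. This is a sketch with illustrative variable names. It computes the probability twice: once with z rounded to two decimals as in the table lookup, and once without rounding, which gives a slightly smaller value.

```python
from statistics import NormalDist

# Pep Zone: lead-time demand is normal with mean 15 and std. deviation 6.
demand = NormalDist(mu=15, sigma=6)
reorder_point = 20

# Step 1: convert x = 20 to a z value.
z = (reorder_point - demand.mean) / demand.stdev

# Steps 2 and 3 with z rounded to two decimals, as in the table lookup.
p_stockout_table = 1 - NormalDist().cdf(round(z, 2))

# The same probability without rounding z.
p_stockout = 1 - demand.cdf(reorder_point)

print(f"z = {z:.2f}")
print(f"P(x > 20) with z rounded: {p_stockout_table:.4f}")
print(f"P(x > 20) without rounding: {p_stockout:.4f}")
```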


Slide 118

Standard Normal Probability Distribution

Solving for the Stockout Probability

[Figure: standard normal curve over z, with area .7967 to the left of z = .83 and area 1 - .7967 = .2033 to the right.]


Slide 119

Standard Normal Probability Distribution


If the manager of Pep Zone wants the probability

of a stockout during replenishment lead-time to be

no more than .05, what should the reorder point be?

(Hint: Given a probability, we can use the standard

normal table in an inverse fashion to find the

corresponding z value.)


Slide 120

Standard Normal Probability Distribution

Solving for the Reorder Point

[Figure: standard normal curve over z, with area .9500 to the left of $z_{.05}$ and area .0500 in the right tail.]


Slide 121

Standard Normal Probability Distribution

Solving for the Reorder Point

Step 1: Find the z-value that cuts off an area of .05

in the right tail of the standard normal

distribution.

  z     .00    .01    .02    .03    .04    .05    .06    .07    .08    .09
  .      .      .      .      .      .      .      .      .      .      .
 1.5   .9332  .9345  .9357  .9370  .9382  .9394  .9406  .9418  .9429  .9441
 1.6   .9452  .9463  .9474  .9484  .9495  .9505  .9515  .9525  .9535  .9545
 1.7   .9554  .9564  .9573  .9582  .9591  .9599  .9608  .9616  .9625  .9633
 1.8   .9641  .9649  .9656  .9664  .9671  .9678  .9686  .9693  .9699  .9706
 1.9   .9713  .9719  .9726  .9732  .9738  .9744  .9750  .9756  .9761  .9767
  .      .      .      .      .      .      .      .      .      .      .

We look up the complement of the tail area (1 - .05 = .95). The value .9500 falls midway between .9495 (z = 1.64) and .9505 (z = 1.65), so $z_{.05}$ = 1.645.


Slide 122

Standard Normal Probability Distribution

Solving for the Reorder Point

Step 2: Convert $z_{.05}$ to the corresponding value of x.

$x = \mu + z_{.05}\sigma$

$= 15 + 1.645(6)$

$= 24.87$ or 25

A reorder point of 25 gallons will place the probability

of a stockout during lead-time at (slightly less than) .05.
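Using the table "in an inverse fashion" corresponds to the inverse cumulative distribution function. The sketch below uses `NormalDist.inv_cdf` from the Python standard library; the variable names are illustrative.

```python
from statistics import NormalDist

# Pep Zone: lead-time demand is normal with mean 15 and std. deviation 6.
demand = NormalDist(mu=15, sigma=6)
max_stockout_prob = 0.05

# Step 1: z value with area .05 in the right tail (area .95 to its left).
z_05 = NormalDist().inv_cdf(1 - max_stockout_prob)

# Step 2: convert to gallons, x = mu + z * sigma.
reorder_point = demand.mean + z_05 * demand.stdev

# Stockout probability if the reorder point is rounded up to 25 gallons.
p_at_25 = 1 - demand.cdf(25)

print(f"z_.05 = {z_05:.3f}")
print(f"reorder point = {reorder_point:.2f} gallons")
print(f"P(x > 25) = {p_at_25:.4f}")
```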


Slide 123

Normal Probability Distribution

Solving for the Reorder Point

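[Figure: normal curve over x with mean 15. The area to the left of 24.87 is the probability of no stockout during replenishment lead-time (.95); the area to the right is the probability of a stockout during replenishment lead-time (.05).]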


Slide 124

Standard Normal Probability Distribution

Solving for the Reorder Point

By raising the reorder point from 20 gallons to

25 gallons on hand, the probability of a stockout

decreases from about .20 to .05.

This is a significant decrease in the chance that

Pep Zone will be out of stock and unable to meet a

customer’s desire to make a purchase.


Slide 125

Normal Approximation of Binomial Probabilities

When the number of trials, n, becomes large,

evaluating the binomial probability function by hand

or with a calculator is difficult.

The normal probability distribution provides an

easy-to-use approximation of binomial probabilities

where $np \geq 5$ and $n(1 - p) \geq 5$.

In the definition of the normal curve, set

$\mu = np$ and $\sigma = \sqrt{np(1 - p)}$


Slide 126

Normal Approximation of Binomial Probabilities

Add and subtract a continuity correction factor

because a continuous distribution is being used to

approximate a discrete distribution.

For example, P(x = 12) for the discrete binomial

probability distribution is approximated by

P(11.5 < x < 12.5) for the continuous normal

distribution.


Slide 127

Normal Approximation of Binomial Probabilities

Example

Suppose that a company has a history of making

errors in 10% of its invoices. A sample of 100

invoices has been taken, and we want to compute

the probability that exactly 12 invoices contain errors.

In this case, we want to find the binomial

probability of 12 successes in 100 trials. So, we set:

$\mu = np = 100(.1) = 10$

$\sigma = \sqrt{np(1 - p)} = [100(.1)(.9)]^{1/2} = 3$


Slide 128

Normal Approximation of Binomial Probabilities

Normal Approximation to a Binomial Probability

Distribution with n = 100 and p = .1

[Figure: normal curve over x with $\mu = 10$ and $\sigma = 3$. The shaded area P(11.5 < x < 12.5) approximates the probability of 12 errors.]


Slide 129

Normal Approximation of Binomial Probabilities

Normal Approximation to a Binomial Probability

Distribution with n = 100 and p = .1

P(x < 12.5) = .7967

[Figure: normal curve over x centered at 10, with the area to the left of 12.5 shaded.]


Slide 130

Normal Approximation of Binomial Probabilities

Normal Approximation to a Binomial Probability

Distribution with n = 100 and p = .1

P(x < 11.5) = .6915

[Figure: normal curve over x centered at 10, with the area to the left of 11.5 shaded.]


Slide 131

Normal Approximation of Binomial Probabilities

The Normal Approximation to the Probability

of 12 Successes in 100 Trials is .1052

P(x = 12) = .7967 - .6915 = .1052

[Figure: normal curve over x centered at 10, with the area between 11.5 and 12.5 shaded.]
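The approximation can be compared with the exact binomial probability it stands in for. The sketch below computes both with the Python standard library; the variable names are illustrative. Because it does not round z to two decimals, the normal-curve area comes out slightly different from the table-based .1052.

```python
from math import comb, sqrt
from statistics import NormalDist

n, p, x = 100, 0.10, 12

# Normal curve with the same mean and standard deviation as the binomial.
mu = n * p
sigma = sqrt(n * p * (1 - p))
approx = NormalDist(mu, sigma)

# Continuity correction: P(x = 12) is approximated by P(11.5 < x < 12.5).
p_normal = approx.cdf(x + 0.5) - approx.cdf(x - 0.5)

# Exact binomial probability of 12 successes in 100 trials.
p_exact = comb(n, x) * p**x * (1 - p) ** (n - x)

print(f"normal approximation: {p_normal:.4f}")
print(f"exact binomial:       {p_exact:.4f}")
```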


Slide 132