DA-IICT, Gandhinagar

Lecture Notes

Subject: Probability, Statistics and Information Theory (SC222)

Date: 07/04/2021

Reg. No.: 201901438

Name: Yash Mandaviya

Lecture No.: 21

1

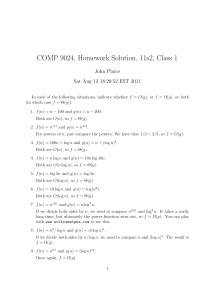

The Information Theory

Information theory is the study of the communication of information.

The foundational work in information theory was done by Harry Nyquist (an electrical engineer), Ralph Hartley (an electronics researcher) and Claude Shannon (a mathematician). Claude Shannon is known as the father of information theory because of his 1948 paper "A Mathematical Theory of Communication".

Two main parts of information theory are (1) source coding and (2) channel coding. Source coding is concerned with compressing data: it represents messages with as few bits as possible, so that they take the least possible space to store or transmit. Channel coding does roughly the opposite: it adds extra (redundant) bits to a message so that transmission errors can be detected and corrected, making the communication reliable.

1.1 Information

While studying information theory, a natural question is what information actually means in a mathematical sense. The answer is:

"Information is the amount of uncertainty."

In other words, the information gained by observing an outcome is the uncertainty that the observation removes. Since this is still abstract, here are some examples:

Example 1: Suppose that Ankit and Hiten play a series of chess games and carrom games against each other. Ankit wins 63 percent of the chess games and Hiten wins 37 percent; in carrom, Ankit wins only 6 percent of the games and Hiten wins 94 percent.

| | Chess | Carrom |
|---|---|---|
| Win rate for Ankit (%) | 63 | 6 |
| Win rate for Hiten (%) | 37 | 94 |

Looking at the chess column, we cannot confidently predict whether Ankit or Hiten will win the next game. Looking at the carrom column, however, we are almost certain that Hiten will win. So the result of a chess game is more uncertain than the result of a carrom game.

Example 2: The results published by the CBSE board in 2020 show that 88.78 percent of students passed the exams, while the report published by IIT Delhi in 2020 shows that only 28.64 percent of candidates passed the IIT entrance exam.

| | IIT-JEE (2020) | CBSE board (2020) |
|---|---|---|
| Pass percentage | 28.64 | 88.78 |
| Fail percentage | 71.36 | 11.22 |

From this table we can conclude that the pass/fail outcome of an IIT-JEE (2020) candidate is less certain than that of a CBSE board (2020) student.

Both tables show that the more uncertain an outcome is, the more information we gain by observing it. (The amount of information, in bits, is calculated in the entropy section.)

1.2 Entropy

Entropy is the central quantity that information theory uses to measure information. Since information is the amount of uncertainty, and more uncertainty means more information to store, the entropy of a random variable is the (average) amount of uncertainty in its outcome.

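The entropy of a discrete random variable X is calculated as

$$H(X) = -\sum_{i \in X} p(i) \log_2 p(i) \quad \text{bits}$$

where the sum runs over all possible values i of X and, by convention, 0 log2 0 = 0, so impossible outcomes contribute nothing.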

With base-2 logarithms, as in the formula above, entropy is measured in bits; with natural logarithms (base e), it is measured in nats.
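The formula translates directly into a few lines of Python. This is a minimal sketch: the function name `entropy` and its `base` argument are our own choices, and zero probabilities are skipped following the 0 log 0 = 0 convention. As a usage example it is applied to the pass/fail fractions of the IIT-JEE and CBSE table in Section 1.1, and to a fair coin measured in nats.

```python
import math


def entropy(probs, base=2.0):
    """Shannon entropy of a discrete distribution.

    probs: probabilities of the possible outcomes (assumed to sum to 1).
    base:  2 gives bits, math.e gives nats.
    Outcomes with probability 0 are skipped (convention: 0 * log 0 = 0).
    """
    return -sum(p * math.log(p, base) for p in probs if p > 0)


# Pass/fail fractions from the 2020 table in Section 1.1.
h_jee = entropy([0.2864, 0.7136])
h_cbse = entropy([0.8878, 0.1122])
print(f"IIT-JEE 2020: {h_jee:.2f} bits")   # 0.86
print(f"CBSE 2020:    {h_cbse:.2f} bits")  # 0.51

# A fair coin measured in nats: ln 2.
h_coin_nats = entropy([0.5, 0.5], base=math.e)
print(f"fair coin: {h_coin_nats:.4f} nats")  # 0.6931
```

The IIT-JEE outcome has the higher entropy, matching the observation that it is the less certain of the two.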

Example 1: Using the data from Example 1 of Section 1.1, the chess games have

- win rate for Ankit: 63 percent
- win rate for Hiten: 37 percent

so the entropy of a chess game is

$$H(\text{chess}) = -0.63 \log_2 0.63 - 0.37 \log_2 0.37 \approx 0.95 \text{ bits}$$

For the carrom games we have

- win rate for Ankit: 6 percent
- win rate for Hiten: 94 percent

so the entropy of a carrom game is

$$H(\text{carrom}) = -0.06 \log_2 0.06 - 0.94 \log_2 0.94 \approx 0.33 \text{ bits}$$

As expected, the more uncertain chess result carries more information than the nearly predictable carrom result.
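The two numbers above can be reproduced with a small helper for two-outcome events; `binary_entropy` is a hypothetical name used only in this sketch.

```python
import math


def binary_entropy(p):
    """Entropy in bits of a two-outcome game where one player wins with probability p."""
    return -sum(q * math.log2(q) for q in (p, 1 - p) if q > 0)


h_chess = binary_entropy(0.63)   # Ankit wins 63% of chess games
h_carrom = binary_entropy(0.06)  # Ankit wins 6% of carrom games
print(f"H(chess)  = {h_chess:.2f} bits")   # 0.95
print(f"H(carrom) = {h_carrom:.2f} bits")  # 0.33
```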


Example 2: Suppose that we have two coins, one biased and one unbiased, with the following results:

| Coin | Head percentage | Tail percentage |
|---|---|---|
| Biased coin (A) | 70 | 30 |
| Unbiased coin (B) | 50 | 50 |

$$H(A) = -0.7 \log_2 0.7 - 0.3 \log_2 0.3 \approx 0.88 \text{ bits}$$

$$H(B) = -0.5 \log_2 0.5 - 0.5 \log_2 0.5 = 1 \text{ bit}$$
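The coin entropies can be checked the same way. This minimal sketch (the helper name `entropy_bits` is ours) confirms that the biased coin is less uncertain than the fair one.

```python
import math


def entropy_bits(probs):
    """Entropy in bits; zero-probability outcomes contribute nothing."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


h_a = entropy_bits([0.7, 0.3])  # biased coin A: 70% heads, 30% tails
h_b = entropy_bits([0.5, 0.5])  # unbiased coin B
print(f"H(A) = {h_a:.2f} bits, H(B) = {h_b:.2f} bits")  # H(A) = 0.88 bits, H(B) = 1.00 bits
```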

Observation: if a random experiment has n different outcomes, each with the same probability 1/n, then after the experiment is done we gain

$$H = -\sum_{i=1}^{n} \frac{1}{n} \log_2 \frac{1}{n} = \log_2 n \text{ bits}$$

of information. The unbiased coin above (n = 2, 1 bit) is a special case.
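A quick numerical check of this observation: for n equally likely outcomes the entropy formula should return log2 n. The sketch below (helper name `entropy_bits` is ours) compares the two for a few values of n.

```python
import math


def entropy_bits(probs):
    """Entropy in bits; zero-probability outcomes contribute nothing."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


# n equally likely outcomes, e.g. a coin (2), a die (6), a deck of cards (52).
for n in (2, 6, 8, 52):
    uniform = [1 / n] * n
    print(f"n = {n:2d}: H = {entropy_bits(uniform):.4f} bits, log2 n = {math.log2(n):.4f}")
```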

From the examples analyzed so far we can conclude:

- Before the random event occurs, there is a certain amount of uncertainty, and no information has been gained yet.
- After the random event has occurred, a certain amount of information has been gained, and no uncertainty remains.

Example 3: Suppose that you toss an unbiased coin repeatedly until you get two tails one after another, and let X denote the number of tosses required to get two consecutive tails. Calculate the entropy of X using the following table.

| X | 2 | 3 | 4 | 5 | … | i |
|---|---|---|---|---|---|---|
| p(X) | 1/4 | 1/8 | 1/16 | 1/32 | … | 1/2^i |
| Event | TT | HTT | HHTT | HHHTT | … | (H…H)TT |

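$$H(X) = \sum_{i=2}^{\infty} \frac{1}{2^i} \log_2 \frac{1}{1/2^i} = \sum_{i=2}^{\infty} \frac{i}{2^i} = \frac{1/2}{(1 - 1/2)^2} - \frac{1}{2} = 1.5 \text{ bits}$$

where the last step uses the series for $\sum_{i \ge 1} i r^i$ listed below with r = 1/2, minus its i = 1 term.

Note: this calculation treats the listed events as the only possibilities, but they are not. Two consecutive tails can first appear at toss i after other patterns too; for example, THTT also first shows TT at toss 4, so P(X = 4) = 2/16 rather than 1/16. Consistently, the tabulated probabilities add up to only $\sum_{i \ge 2} 1/2^i = 1/2$, so they do not form a complete distribution, and 1.5 bits is the sum over the listed events only rather than the entropy of X. The full distribution is $P(X = i) = F_{i-1}/2^i$ for i ≥ 2, where $F_1 = F_2 = 1$ and $F_{k+1} = F_k + F_{k-1}$ are the Fibonacci numbers.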

Quick reference for the sums of some infinite series:

$$\sum_{i=0}^{\infty} r^i = \frac{1}{1-r}, \qquad |r| < 1$$

$$\sum_{i=1}^{\infty} r^i = \frac{r}{1-r}, \qquad |r| < 1$$

$$\sum_{i=1}^{\infty} i\, r^i = \frac{r}{(1-r)^2}, \qquad |r| < 1$$
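A numerical check of Example 3, written as a Python sketch. The first function sums over the tabulated events p(i) = 1/2^i (i ≥ 2): it reproduces the 1.5 bits, but it also shows that these probabilities total only 1/2. The second function uses the full distribution of the waiting time for the first TT, P(X = i) = F(i−1)/2^i with Fibonacci numbers F(1) = F(2) = 1 (a standard result, added here as our own cross-check), and truncates the infinite sum after 300 terms. The function names and the truncation point are our own choices.

```python
import math


def listed_events_sums(n_terms=200):
    """Total probability and sum of p * log2(1/p) over the tabulated events p(i) = 1/2**i, i >= 2."""
    total_p = total_h = 0.0
    for i in range(2, n_terms):
        p = 0.5 ** i
        total_p += p
        total_h += p * i  # log2(1/p) = i when p = 2**-i
    return total_p, total_h


def tt_waiting_time_stats(n_terms=300):
    """Total probability, mean and entropy (bits) of X = number of tosses until the first TT.

    Uses P(X = i) = F(i-1) / 2**i with Fibonacci numbers F(1) = F(2) = 1,
    truncated after n_terms terms (the remaining tail is negligible).
    """
    f_prev, f_curr = 0, 1  # F(0), F(1): f_curr holds F(i-1) at the start of each iteration
    total_p = mean = h = 0.0
    for i in range(2, n_terms):
        p = f_curr / 2 ** i  # Python ints are exact; the division rounds once to float
        total_p += p
        mean += i * p
        h -= p * math.log2(p)
        f_prev, f_curr = f_curr, f_prev + f_curr
    return total_p, mean, h


p_listed, h_listed = listed_events_sums()
print(f"listed events: total probability {p_listed:.4f}, sum {h_listed:.4f} bits")  # 0.5000, 1.5000

p_full, mean_full, h_full = tt_waiting_time_stats()
print(f"full distribution: total probability {p_full:.4f}, mean {mean_full:.4f} tosses")  # 1.0000, 6.0000
print(f"H(X) = {h_full:.4f} bits")
```

The full distribution sums to 1 and has the well-known mean of 6 tosses, which is a useful sanity check that the Fibonacci formula is being applied correctly.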

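Example 4: Suppose that a random event has two outcomes, 0 and 1, and let X ∈ {0, 1} be a random variable with the following probabilities:

$$P(X = 0) = 1 - P, \qquad P(X = 1) = 1 - P(X = 0) = P, \qquad 0 \le P \le 1.$$

Then

$$H(X) = -P \log_2 P - (1 - P) \log_2 (1 - P).$$

To maximize H(X) over P, we set its derivative with respect to P to zero:

$$\frac{dH(X)}{dP} = \log_2 \frac{1 - P}{P} = 0 \quad \Longrightarrow \quad P = \frac{1}{2}.$$

The second derivative, $-\frac{1}{P(1-P)\ln 2}$, is negative, so P = 1/2 is a maximum, with H(X) = 1 bit: a fair coin is the most uncertain two-outcome event.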
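A quick numerical sketch of Example 4: evaluate H(X) on a grid of P values and confirm that the maximum sits at P = 1/2, where the derivative log2((1 − P)/P) vanishes. The grid step of 0.001 and the function names are our own choices.

```python
import math


def binary_entropy(p):
    """H(X) in bits when P(X = 1) = p and P(X = 0) = 1 - p (0 log 0 taken as 0)."""
    return -sum(q * math.log2(q) for q in (p, 1 - p) if q > 0)


def d_binary_entropy(p):
    """Derivative dH/dP = log2((1 - p) / p), defined for 0 < p < 1."""
    return math.log2((1 - p) / p)


grid = [k / 1000 for k in range(1001)]  # P = 0.000, 0.001, ..., 1.000
p_best = max(grid, key=binary_entropy)
print(p_best, binary_entropy(p_best), d_binary_entropy(0.5))  # 0.5 1.0 0.0
```

The derivative is positive for P < 1/2 and negative for P > 1/2, so H(X) rises and then falls, as the second-derivative argument says.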

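1.2.1 JOINT ENTROPY:

The joint entropy of a pair of discrete random variables X and Y with joint distribution p(x, y) is

$$H(X, Y) = -\sum_{x \in X} \sum_{y \in Y} p(x, y) \log_2 p(x, y)$$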

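1.2.2 CONDITIONAL ENTROPY:

$$H(Y \mid X) = -\sum_{x \in X} \sum_{y \in Y} p(x, y) \log_2 p(y \mid x) = \sum_{x \in X} p(x)\, H(Y \mid X = x)$$

where $H(Y \mid X = x) = -\sum_{y \in Y} p(y \mid x) \log_2 p(y \mid x)$ is the entropy of Y once we know that X = x.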

Example 1: The joint probabilities of the random variables X and Y are given in the following table, together with the marginal distributions p(X) (row sums) and p(Y) (column sums):

| X \ Y | 1 | 2 | 3 | p(X) |
|---|---|---|---|---|
| 1 | 1/12 | 1/6 | 1/12 | 1/3 |
| 2 | 1/4 | 1/8 | 1/8 | 1/2 |
| 3 | 1/12 | 0 | 1/12 | 1/6 |
| p(Y) | 5/12 | 7/24 | 7/24 | total = 1 |


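1. H(X) ≈ 1.46 bits
2. H(Y) ≈ 1.56 bits
3. H(Y | X) = 17/12 ≈ 1.42 bits
4. H(X | Y) ≈ 1.31 bits
5. H(X, Y) ≈ 2.88 bits

For instance, dividing each row of the table by p(X) gives the conditional distributions of Y given X = 1, 2, 3 as (1/4, 1/2, 1/4), (1/2, 1/4, 1/4) and (1/2, 0, 1/2), so

$$H(Y \mid X) = \frac{1}{3}(1.5) + \frac{1}{2}(1.5) + \frac{1}{6}(1) = \frac{17}{12} \text{ bits}.$$

1.2.3 MUTUAL INFORMATION

The mutual information of two random variables A and B is denoted I(A; B); it is symmetric, so I(A; B) = I(B; A). It measures how much knowing one of the variables reduces the uncertainty about the other.

In the conditional entropy example above we can check that H(Y) − H(X) = H(Y | X) − H(X | Y), since both sides are about 0.10 bits. Rearranging gives H(Y) − H(Y | X) = H(X) − H(X | Y), and this common value is the mutual information:

$$I(A; B) = H(B) - H(B \mid A) = H(A) - H(A \mid B)$$

For Example 1 of the conditional entropy, I(X; Y) = H(Y) − H(Y | X) ≈ 1.5632 − 1.4167 ≈ 0.15 bits.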
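The values for Example 1 can be computed directly from the joint table. This sketch stores the table with Python's `fractions.Fraction` so the marginals are exact; the dictionary layout and the helper name `h` are our own choices.

```python
import math
from fractions import Fraction as Fr

# joint[x][y] = p(X = x, Y = y), copied from the table in Example 1.
joint = {
    1: {1: Fr(1, 12), 2: Fr(1, 6), 3: Fr(1, 12)},
    2: {1: Fr(1, 4), 2: Fr(1, 8), 3: Fr(1, 8)},
    3: {1: Fr(1, 12), 2: Fr(0), 3: Fr(1, 12)},
}


def h(probs):
    """Entropy in bits of a collection of probabilities (zeros are skipped)."""
    return -sum(float(p) * math.log2(float(p)) for p in probs if p > 0)


p_x = {x: sum(row.values()) for x, row in joint.items()}       # row sums: 1/3, 1/2, 1/6
p_y = {y: sum(joint[x][y] for x in joint) for y in (1, 2, 3)}  # column sums: 5/12, 7/24, 7/24

H_X = h(p_x.values())
H_Y = h(p_y.values())
H_XY = h(p for row in joint.values() for p in row.values())
# H(Y|X) = sum_x p(x) H(Y | X = x), with p(y | x) = p(x, y) / p(x); similarly for H(X|Y).
H_Y_given_X = sum(float(p_x[x]) * h(joint[x][y] / p_x[x] for y in joint[x]) for x in joint)
H_X_given_Y = sum(float(p_y[y]) * h(joint[x][y] / p_y[y] for x in joint) for y in p_y)
I_XY = H_Y - H_Y_given_X

for name, value in [("H(X)", H_X), ("H(Y)", H_Y), ("H(Y|X)", H_Y_given_X),
                    ("H(X|Y)", H_X_given_Y), ("H(X,Y)", H_XY), ("I(X;Y)", I_XY)]:
    print(f"{name:7s} = {value:.4f} bits")
```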

1.2.4 CHAIN RULE FOR ENTROPY

$$H(A, B) = H(A) + H(B \mid A) = H(B) + H(A \mid B)$$

These formulas show that the total information carried by the pair (A, B) equals the information carried by A plus the information carried by B given A, and vice versa.

From this we can derive yet another formula for I(A; B): substituting H(B | A) = H(A, B) − H(A) into I(A; B) = H(B) − H(B | A) gives

$$I(A; B) = H(A) + H(B) - H(A, B)$$
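These identities can be checked numerically. The sketch below (the helper names `h` and `identities` are ours) computes H(A, B) through both forms of the chain rule and I(A; B) through all three formulas, for the joint table of Example 1 in Section 1.2.2 (with A = X, B = Y) and for a made-up pair of independent variables, for which I(A; B) should come out as 0.

```python
import math
from fractions import Fraction as Fr


def h(probs):
    """Entropy in bits; zero probabilities are skipped."""
    return -sum(float(p) * math.log2(float(p)) for p in probs if p > 0)


def identities(joint):
    """Given joint[(a, b)] = p(a, b), return chain-rule and mutual-information quantities."""
    p_a, p_b = {}, {}
    for (a, b), p in joint.items():
        p_a[a] = p_a.get(a, 0) + p
        p_b[b] = p_b.get(b, 0) + p
    H_A, H_B, H_AB = h(p_a.values()), h(p_b.values()), h(joint.values())
    # Conditional entropies computed directly: -sum p(a, b) log2 p(b | a), and with roles swapped.
    H_B_given_A = -sum(float(p) * math.log2(float(p / p_a[a])) for (a, _), p in joint.items() if p > 0)
    H_A_given_B = -sum(float(p) * math.log2(float(p / p_b[b])) for (_, b), p in joint.items() if p > 0)
    return {
        "chain rule via A": H_A + H_B_given_A,  # should equal H(A, B)
        "chain rule via B": H_B + H_A_given_B,  # should equal H(A, B)
        "H(A,B)": H_AB,
        "I = H(B) - H(B|A)": H_B - H_B_given_A,
        "I = H(A) - H(A|B)": H_A - H_A_given_B,
        "I = H(A) + H(B) - H(A,B)": H_A + H_B - H_AB,
    }


example1 = {(1, 1): Fr(1, 12), (1, 2): Fr(1, 6), (1, 3): Fr(1, 12),
            (2, 1): Fr(1, 4), (2, 2): Fr(1, 8), (2, 3): Fr(1, 8),
            (3, 1): Fr(1, 12), (3, 2): Fr(0), (3, 3): Fr(1, 12)}
# Hypothetical independent pair: p(a, b) = p(a) p(b), so I(A;B) should be 0.
independent = {(a, b): pa * pb for a, pa in [(0, Fr(1, 4)), (1, Fr(3, 4))]
               for b, pb in [(0, Fr(1, 3)), (1, Fr(2, 3))]}

for name, joint in [("Example 1", example1), ("independent", independent)]:
    print(name, {k: round(v, 4) for k, v in identities(joint).items()})
```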

If we are dealing with more than two random variables, say $A_1, A_2, \dots, A_n$, then

$$H(A_1, A_2, \dots, A_n) = \sum_{j=1}^{n} H(A_j \mid A_1, A_2, \dots, A_{j-1})$$

Mutual information with more than two random variables satisfies a similar chain rule, in which each term is a conditional mutual information:

$$I(A_1, A_2, \dots, A_n; B) = \sum_{j=1}^{n} I(A_j; B \mid A_1, A_2, \dots, A_{j-1})$$
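The chain rule for entropy can be checked on any joint distribution. This sketch uses a hypothetical joint pmf of three binary variables, made up purely for illustration, and computes each term H(A_j | A_1, …, A_{j−1}) directly from conditional probabilities rather than by subtracting entropies. The helper names `marginal` and `cond_entropy_step` are ours.

```python
import itertools
import math

# Hypothetical joint pmf of three binary variables (A1, A2, A3), made up for illustration:
# outcome (a1, a2, a3) gets weight 1..8 in lexicographic order, normalized to sum to 1.
outcomes = list(itertools.product((0, 1), repeat=3))
total_weight = sum(range(1, 9))
pmf = {o: w / total_weight for o, w in zip(outcomes, range(1, 9))}


def marginal(pmf, k):
    """Joint pmf of the first k variables (A1, ..., Ak); k = 0 gives {(): 1}."""
    out = {}
    for outcome, p in pmf.items():
        out[outcome[:k]] = out.get(outcome[:k], 0.0) + p
    return out


def cond_entropy_step(pmf, j):
    """H(Aj | A1, ..., Aj-1) = -sum p(a1..aj) * log2 p(aj | a1..aj-1)."""
    joint_j, prefix = marginal(pmf, j), marginal(pmf, j - 1)
    return -sum(p * math.log2(p / prefix[a[:-1]]) for a, p in joint_j.items() if p > 0)


lhs = -sum(p * math.log2(p) for p in pmf.values() if p > 0)  # H(A1, A2, A3)
rhs = sum(cond_entropy_step(pmf, j) for j in (1, 2, 3))       # chain-rule sum
print(f"H(A1,A2,A3) = {lhs:.6f} bits, chain-rule sum = {rhs:.6f} bits")
```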


1.3 SOURCE CODING:

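A source code C for a random variable X is a mapping from the set of values of X to {0, 1}*, the set of all finite-length strings of 0s and 1s:

{0, 1}* = {ε, 0, 1, 00, 01, 10, 11, ...},

where ε is the empty string. C(x) denotes the codeword assigned to the value x, and l(x) denotes its length.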

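The average (expected) length L(C) of the code C is given by

$$L(C) = \sum_i p_i l_i$$

where $p_i$ is the probability of the i-th value of X and $l_i$ is the length of its codeword.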

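Example 1: Suppose that a random variable X has the following distribution and code C1:

| x | 1 | 2 | 3 |
|---|---|---|---|
| p(x) | 1/3 | 1/2 | 1/6 |
| C(x) | 10 | 011 | 1101 |
| l(x) | 2 | 3 | 4 |

$$L(C_1) = \frac{1}{3} \cdot 2 + \frac{1}{2} \cdot 3 + \frac{1}{6} \cdot 4 = \frac{17}{6} \approx 2.8333 \text{ bits}$$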
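The expected length is a weighted sum, so it can be computed exactly with Python's `fractions.Fraction`. This is a minimal sketch; the helper name `expected_length` is ours. Applied to Example 1 it reproduces 17/6 ≈ 2.8333 bits.

```python
from fractions import Fraction


def expected_length(probs, codewords):
    """L(C) = sum_i p_i * l_i, where l_i is the length of codeword i."""
    return sum(p * len(c) for p, c in zip(probs, codewords))


# Example 1: X in {1, 2, 3} with code C1.
L1 = expected_length([Fraction(1, 3), Fraction(1, 2), Fraction(1, 6)], ["10", "011", "1101"])
print(L1)  # 17/6
```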

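Example 2: Suppose A has the following properties:

| a | 1 | 2 | 3 | 4 |
|---|---|---|---|---|
| p(a) | 1/8 | 1/2 | 1/4 | 1/8 |
| C(a) | 001 | 01 | 0 | 1111 |
| l(a) | 3 | 2 | 1 | 4 |

$$L(C) = \frac{1}{8} \cdot 3 + \frac{1}{2} \cdot 2 + \frac{1}{4} \cdot 1 + \frac{1}{8} \cdot 4 = \frac{3 + 8 + 2 + 4}{8} = \frac{17}{8} = 2.125 \text{ bits}$$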
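A quick exact check of the Example 2 arithmetic, as a Python sketch using `fractions.Fraction`:

```python
from fractions import Fraction

# Example 2: A in {1, 2, 3, 4}, probabilities p(a) and codewords C(a) from the table.
probs = [Fraction(1, 8), Fraction(1, 2), Fraction(1, 4), Fraction(1, 8)]
codewords = ["001", "01", "0", "1111"]

L2 = sum(p * len(c) for p, c in zip(probs, codewords))  # sum of p(a) * l(a)
print(L2, float(L2))  # 17/8 2.125
```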

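Example 3: Find the average length of the code C3 for a random variable Y with the following properties:

| y | 1 | 2 | 3 | 4 |
|---|---|---|---|---|
| p(y) | 1/2 | 1/4 | 1/8 | 1/8 |
| C(y) | 0 | 10 | 110 | 111 |
| l(y) | 1 | 2 | 3 | 3 |

$$L(C_3) = \frac{1}{2} \cdot 1 + \frac{1}{4} \cdot 2 + \frac{1}{8} \cdot 3 + \frac{1}{8} \cdot 3 = 1.75 \text{ bits}$$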

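The entropy of Y is

$$H(Y) = \frac{1}{2} \log_2 2 + \frac{1}{4} \log_2 4 + \frac{1}{8} \log_2 8 + \frac{1}{8} \log_2 8 = 1.75 \text{ bits}$$

Here l(y) = −log2 p(y) for every y, so the expected code length equals the entropy: L(C3) = H(Y).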
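The equality L(C3) = H(Y) can be confirmed in a few lines. This sketch computes both sides and checks that every codeword length equals −log2 p(y); the variable names are ours.

```python
import math

# Example 3: p(y) and codewords C(y) for y = 1, 2, 3, 4.
p = [1 / 2, 1 / 4, 1 / 8, 1 / 8]
codewords = ["0", "10", "110", "111"]

L = sum(pi * len(c) for pi, c in zip(p, codewords))  # expected code length
H = -sum(pi * math.log2(pi) for pi in p)              # entropy H(Y)
print(L, H)  # 1.75 1.75

# Each codeword length equals -log2 p(y), which is why L(C3) = H(Y) here.
print(all(len(c) == -math.log2(pi) for pi, c in zip(p, codewords)))  # True
```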

2 CONCLUSION:

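Question: can we use the code from Example 2 of Section 1.3?

Answer: No.

Reason: the code is not uniquely decodable. The received string 001 could be the single codeword C(1) = 001, or the codeword C(3) = 0 followed by C(2) = 01, so the decoder cannot tell which message was sent. This happens because the codeword 0 is a prefix of the codewords 01 and 001.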
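The ambiguity can be exhibited by brute force: list every way of splitting a received bit string into codewords. The recursive helper `parses` below is our own illustrative sketch (its running time can grow exponentially with the string length, which is fine for short strings). For the code of Example 2 the string 001 has two parses; for the code of Example 3, where no codeword is a prefix of another, each string has at most one.

```python
def parses(bits, code):
    """Return every way to split `bits` into codewords of `code` (a dict symbol -> codeword)."""
    if bits == "":
        return [[]]  # the empty string has exactly one parse: no symbols
    results = []
    for symbol, word in code.items():
        if bits.startswith(word):
            # Use this codeword first, then parse whatever remains.
            for rest in parses(bits[len(word):], code):
                results.append([symbol] + rest)
    return results


code_ex2 = {1: "001", 2: "01", 3: "0", 4: "1111"}  # Example 2: 0 is a prefix of 01 and 001
code_ex3 = {1: "0", 2: "10", 3: "110", 4: "111"}   # Example 3: a prefix code

print(parses("001", code_ex2))      # [[1], [3, 2]]
print(parses("0110111", code_ex3))  # [[1, 3, 4]]
```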

