ENGINEERING OPTIMIZATION

Theory and Practice

Third Edition

SINGIRESU S. RAO

School of Mechanical Engineering

Purdue University

West Lafayette, Indiana

A Wiley-Interscience Publication

John Wiley & Sons, Inc.

New York • Chichester • Brisbane • Toronto • Singapore

This text is printed on acid-free paper.

Copyright © 1996 by John Wiley & Sons, Inc., Wiley Eastern Limited, Publishers, and New Age International Publishers, Ltd.

All rights reserved. Published simultaneously in Canada.

Reproduction or translation of any part of this work beyond that permitted by Section 107 or 108 of the 1976 United States Copyright Act without the permission of the copyright owner is unlawful. Requests for permission or further information should be addressed to the Permissions Department, John Wiley & Sons, Inc.

This publication is designed to provide accurate and authoritative information in regard to the subject matter covered. It is sold with the understanding that the publisher is not engaged in rendering professional services. If legal, accounting, medical, psychological, or any other expert assistance is required, the services of a competent professional person should be sought.

Library of Congress Cataloging in Publication Data:

ISBN 0-471-55034-5

Printed in the United States of America

10 9 8 7 6 5 4

PREFACE

The ever-increasing demand on engineers to lower production costs in the face of competition has prompted them to look for rigorous methods of decision making, such as optimization methods, to design and produce products both economically and efficiently. Optimization techniques, having reached a degree of maturity over the past several years, are being used in a wide spectrum of industries, including the aerospace, automotive, chemical, electrical, and manufacturing industries. As computers become more powerful, the size and complexity of the problems being solved using optimization techniques are also increasing. Optimization methods, coupled with modern tools of computer-aided design, are also being used to enhance the creative process of conceptual and detailed design of engineering systems.

The purpose of this textbook is to present the techniques and applications of engineering optimization in a simple manner. Essential proofs and explanations of the various techniques are given plainly, without sacrificing accuracy. New concepts are illustrated with the help of numerical examples. Although most engineering design problems can be solved using nonlinear programming techniques, there are a variety of engineering applications for which other optimization methods, such as linear, geometric, dynamic, integer, and stochastic programming techniques, are most suitable. This book presents the theory and applications of all of these optimization techniques in a comprehensive manner. Some of the recently developed methods of optimization, such as genetic algorithms, simulated annealing, neural-network-based methods, and fuzzy optimization, are also discussed in the book.

A large number of solved examples, review questions, problems, figures, and references are included to enhance the presentation of the material. Although emphasis is placed on engineering design problems, examples and problems are taken from several fields of engineering to make the subject appealing to all branches of engineering.

This book can be used in optimum design or engineering optimization courses at either the junior/senior or the first-year graduate level. At Purdue University, I cover Chapters 1, 2, 3, 5, 6, and 7 and parts of Chapters 8, 10, 12, and 13 in a dual-level course entitled Optimal Design: Theory with Practice. In this course, a design project is also assigned to each student in which the student identifies, formulates, and solves a practical engineering problem of his or her interest by applying or modifying an optimization technique. This design project gives the student a feel for how optimization methods work in practice. The book can also be used, with some supplementary material, for a second course on engineering optimization, optimum design, or structural optimization. The relative simplicity with which the various topics are presented makes the book useful both to students and to practicing engineers for purposes of self-study. The book also serves as a reference source for different engineering optimization applications. Although the emphasis of the book is on engineering applications, it would also be useful in other areas, such as operations research and economics. A knowledge of matrix theory and differential calculus is assumed on the part of the reader.

The book consists of thirteen chapters and two appendices. Chapter 1 provides an introduction to engineering optimization and optimum design and an overview of optimization methods. The concepts of design space, constraint surfaces, and contours of the objective function are introduced here. In addition, the formulation of various types of optimization problems is illustrated through a variety of examples taken from various fields of engineering. Chapter 2 reviews the essentials of differential calculus useful in finding the maxima and minima of functions of several variables. The methods of constrained variation and Lagrange multipliers are presented for solving problems with equality constraints. The Kuhn-Tucker conditions for inequality-constrained problems are given along with a discussion of convex programming problems.

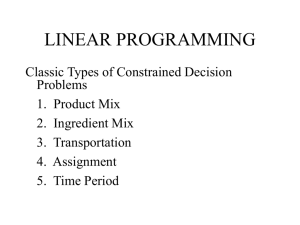

Chapters 3 and 4 deal with the solution of linear programming problems. The characteristics of a general linear programming problem and the development of the simplex method of solution are given in Chapter 3. Some advanced topics in linear programming, such as the revised simplex method, duality theory, the decomposition principle, and postoptimality analysis, are discussed in Chapter 4. The extension of linear programming to solve quadratic programming problems is also considered in Chapter 4.

Chapters 5 through 7 deal with the solution of nonlinear programming problems. In Chapter 5, numerical methods of finding the optimum solution of a function of a single variable are given. Chapter 6 deals with the methods of unconstrained optimization. The algorithms for various zeroth-, first-, and second-order techniques are discussed along with their computational aspects. Chapter 7 is concerned with the solution of nonlinear optimization problems in the presence of inequality and equality constraints. Both the direct and indirect methods of optimization are discussed. The methods presented in this chapter can be treated as the most general techniques for the solution of any optimization problem.

Chapter 8 presents the techniques of geometric programming. The solution techniques for problems with mixed inequality constraints and complementary geometric programming are also considered. In Chapter 9, computational procedures for solving discrete and continuous dynamic programming problems are presented. The problem of dimensionality is also discussed. Chapter 10 introduces integer programming and gives several algorithms for solving integer and discrete linear and nonlinear optimization problems. Chapter 11 reviews basic probability theory and presents techniques of stochastic linear, nonlinear, geometric, and dynamic programming. The theory and applications of calculus of variations, optimal control theory, multiple objective optimization, optimality criteria methods, genetic algorithms, simulated annealing, neural-network-based methods, and fuzzy system optimization are discussed briefly in Chapter 12. The various approximation techniques used to speed up the convergence of practical mechanical and structural optimization problems are outlined in Chapter 13. Appendix A presents the definitions and properties of convex and concave functions. Finally, a brief discussion of the computational aspects and some of the commercial optimization programs is given in Appendix B.

ACKNOWLEDGMENTS

I wish to thank my wife, Kamala, and daughters, Sridevi and Shobha, for their patience, understanding, encouragement, and support in preparing the manuscript.

S. S. RAO

March 1995

Contents

Preface ..... vii

Acknowledgments ..... xi

1. Introduction to Optimization ..... 1

1.1 Introduction ..... 1

1.2 Historical Development ..... 3

1.3 Engineering Applications of Optimization ..... 4

1.4 Statement of an Optimization Problem ..... 5

1.4.1 Design Vector ..... 6

1.4.2 Design Constraints ..... 7

1.4.3 Constraint Surface ..... 8

1.4.4 Objective Function ..... 9

1.4.5 Objective Function Surfaces ..... 10

1.5 Classification of Optimization Problems ..... 15

1.5.1 Classification Based on the Existence of Constraints ..... 15

1.5.2 Classification Based on the Nature of the Design Variables ..... 15

1.5.3 Classification Based on the Physical Structure of the Problem ..... 17

1.5.4 Classification Based on the Nature of the Equations Involved ..... 20

This page has been reformatted by Knovel to provide easier navigation.


1.5.5 Classification Based on the Permissible Values of the Design Variables ..... 31

1.5.6 Classification Based on the Deterministic Nature of the Variables ..... 32

1.5.7 Classification Based on the Separability of the Functions ..... 34

1.5.8 Classification Based on the Number of Objective Functions ..... 36

1.6 Optimization Techniques ..... 38

1.7 Engineering Optimization Literature ..... 39

References and Bibliography ..... 40

Review Questions ..... 44

Problems ..... 46

2. Classical Optimization Techniques ..... 65

2.1 Introduction ..... 65

2.2 Single-Variable Optimization ..... 65

2.3 Multivariable Optimization with No Constraints ..... 71

2.3.1 Semidefinite Case ..... 77

2.3.2 Saddle Point ..... 77

2.4 Multivariable Optimization with Equality Constraints ..... 80

2.4.1 Solution by Direct Substitution ..... 80

2.4.2 Solution by the Method of Constrained Variation ..... 82

2.4.3 Solution by the Method of Lagrange Multipliers ..... 91

2.5 Multivariable Optimization with Inequality Constraints ..... 100

2.5.1 Kuhn-Tucker Conditions ..... 105

2.5.2 Constraint Qualification ..... 105

2.6 Convex Programming Problem ..... 112


References and Bibliography ..... 112

Review Questions ..... 113

Problems ..... 114

3. Linear Programming I: Simplex Method ..... 129

3.1 Introduction ..... 129

3.2 Applications of Linear Programming ..... 130

3.3 Standard Form of a Linear Programming Problem ..... 132

3.4 Geometry of Linear Programming Problems ..... 135

3.5 Definitions and Theorems ..... 139

3.6 Solution of a System of Linear Simultaneous Equations ..... 146

3.7 Pivotal Reduction of a General System of Equations ..... 148

3.8 Motivation of the Simplex Method ..... 152

3.9 Simplex Algorithm ..... 153

3.9.1 Identifying an Optimal Point ..... 154

3.9.2 Improving a Nonoptimal Basic Feasible Solution ..... 154

3.10 Two Phases of the Simplex Method ..... 164

References and Bibliography ..... 172

Review Questions ..... 172

Problems ..... 174

4. Linear Programming II: Additional Topics and Extensions ..... 193

4.1 Introduction ..... 193

4.2 Revised Simplex Method ..... 194

4.3 Duality in Linear Programming ..... 210

4.3.1 Symmetric Primal-Dual Relations ..... 211

4.3.2 General Primal-Dual Relations ..... 211


4.3.3 Primal-Dual Relations When the Primal Is in Standard Form ..... 212

4.3.4 Duality Theorems ..... 214

4.3.5 Dual Simplex Method ..... 214

4.4 Decomposition Principle ..... 219

4.5 Sensitivity or Postoptimality Analysis ..... 228

4.5.1 Changes in the Right-Hand-Side Constants b_i ..... 229

4.5.2 Changes in the Cost Coefficients c_j ..... 235

4.5.3 Addition of New Variables ..... 237

4.5.4 Changes in the Constraint Coefficients a_ij ..... 238

4.5.5 Addition of Constraints ..... 241

4.6 Transportation Problem ..... 243

4.7 Karmarkar’s Method ..... 246

4.7.1 Statement of the Problem ..... 248

4.7.2 Conversion of an LP Problem into the Required Form ..... 248

4.7.3 Algorithm ..... 251

4.8 Quadratic Programming ..... 254

References and Bibliography ..... 261

Review Questions ..... 262

Problems ..... 263

5. Nonlinear Programming I: One-Dimensional Minimization Methods ..... 272

5.1 Introduction ..... 272

5.2 Unimodal Function ..... 278

Elimination Methods ..... 279

5.3 Unrestricted Search ..... 279

5.3.1 Search with Fixed Step Size ..... 279

5.3.2 Search with Accelerated Step Size ..... 280


5.4 Exhaustive Search ..... 281

5.5 Dichotomous Search ..... 283

5.6 Interval Halving Method ..... 286

5.7 Fibonacci Method ..... 289

5.8 Golden Section Method ..... 296

5.9 Comparison of Elimination Methods ..... 298

Interpolation Methods ..... 299

5.10 Quadratic Interpolation Method ..... 301

5.11 Cubic Interpolation Method ..... 308

5.12 Direct Root Methods ..... 316

5.12.1 Newton Method ..... 316

5.12.2 Quasi-Newton Method ..... 319

5.12.3 Secant Method ..... 321

5.13 Practical Considerations ..... 324

5.13.1 How to Make the Methods Efficient and More Reliable ..... 324

5.13.2 Implementation in Multivariable Optimization Problems ..... 325

5.13.3 Comparison of Methods ..... 325

References and Bibliography ..... 326

Review Questions ..... 326

Problems ..... 327

6. Nonlinear Programming II: Unconstrained Optimization Techniques ..... 333

6.1 Introduction ..... 333

6.1.1 Classification of Unconstrained Minimization Methods ..... 336

6.1.2 General Approach ..... 337

6.1.3 Rate of Convergence ..... 337

6.1.4 Scaling of Design Variables ..... 339


Direct Search Methods ..... 343

6.2 Random Search Methods ..... 343

6.2.1 Random Jumping Method ..... 343

6.2.2 Random Walk Method ..... 345

6.2.3 Random Walk Method with Direction Exploitation ..... 347

6.3 Grid Search Method ..... 348

6.4 Univariate Method ..... 350

6.5 Pattern Directions ..... 353

6.6 Hooke and Jeeves’ Method ..... 354

6.7 Powell’s Method ..... 357

6.7.1 Conjugate Directions ..... 358

6.7.2 Algorithm ..... 362

6.8 Rosenbrock’s Method of Rotating Coordinates ..... 368

6.9 Simplex Method ..... 368

6.9.1 Reflection ..... 369

6.9.2 Expansion ..... 372

6.9.3 Contraction ..... 373

Indirect Search (Descent) Methods ..... 376

6.10 Gradient of a Function ..... 376

6.10.1 Evaluation of the Gradient ..... 379

6.10.2 Rate of Change of a Function Along a Direction ..... 380

6.11 Steepest Descent (Cauchy) Method ..... 381

6.12 Conjugate Gradient (Fletcher-Reeves) Method ..... 383

6.12.1 Development of the Fletcher-Reeves Method ..... 384

6.12.2 Fletcher-Reeves Method ..... 386

6.13 Newton’s Method ..... 389

6.14 Marquardt Method ..... 392

6.15 Quasi-Newton Methods ..... 394


6.15.1 Rank 1 Updates ..... 395

6.15.2 Rank 2 Updates ..... 397

6.16 Davidon-Fletcher-Powell Method ..... 399

6.17 Broyden-Fletcher-Goldfarb-Shanno Method ..... 405

6.18 Test Functions ..... 408

References and Bibliography ..... 411

Review Questions ..... 413

Problems ..... 415

7. Nonlinear Programming III: Constrained Optimization Techniques ..... 428

7.1 Introduction ..... 428

7.2 Characteristics of a Constrained Problem ..... 428

Direct Methods ..... 432

7.3 Random Search Methods ..... 432

7.4 Complex Method ..... 433

7.5 Sequential Linear Programming ..... 436

7.6 Basic Approach in the Methods of Feasible Directions ..... 443

7.7 Zoutendijk’s Method of Feasible Directions ..... 444

7.7.1 Direction-Finding Problem ..... 446

7.7.2 Determination of Step Length ..... 449

7.7.3 Termination Criteria ..... 452

7.8 Rosen’s Gradient Projection Method ..... 455

7.8.1 Determination of Step Length ..... 459

7.9 Generalized Reduced Gradient Method ..... 465

7.10 Sequential Quadratic Programming ..... 477

7.10.1 Derivation ..... 477

7.10.2 Solution Procedure ..... 480

Indirect Methods ..... 485

7.11 Transformation Techniques ..... 485


7.12 Basic Approach of the Penalty Function Method ..... 487

7.13 Interior Penalty Function Method ..... 489

7.14 Convex Programming Problem ..... 501

7.15 Exterior Penalty Function Method ..... 502

7.16 Extrapolation Technique in the Interior Penalty Function Method ..... 507

7.16.1 Extrapolation of the Design Vector X ..... 508

7.16.2 Extrapolation of the Function f ..... 510

7.17 Extended Interior Penalty Function Methods ..... 512

7.17.1 Linear Extended Penalty Function Method ..... 512

7.17.2 Quadratic Extended Penalty Function Method ..... 513

7.18 Penalty Function Method for Problems with Mixed Equality and Inequality Constraints ..... 515

7.18.1 Interior Penalty Function Method ..... 515

7.18.2 Exterior Penalty Function Method ..... 517

7.19 Penalty Function Method for Parametric Constraints ..... 517

7.19.1 Parametric Constraint ..... 517

7.19.2 Handling Parametric Constraints ..... 519

7.20 Augmented Lagrange Multiplier Method ..... 521

7.20.1 Equality-Constrained Problems ..... 521

7.20.2 Inequality-Constrained Problems ..... 523

7.20.3 Mixed Equality-Inequality Constrained Problems ..... 525

7.21 Checking Convergence of Constrained Optimization Problems ..... 527

7.21.1 Perturbing the Design Vector ..... 527

7.21.2 Testing the Kuhn-Tucker Conditions ..... 528


7.22 Test Problems ..... 529

7.22.1 Design of a Three-Bar Truss ..... 530

7.22.2 Design of a Twenty-Five-Bar Space Truss ..... 531

7.22.3 Welded Beam Design ..... 534

7.22.4 Speed Reducer (Gear Train) Design ..... 536

7.22.5 Heat Exchanger Design ..... 537

References and Bibliography ..... 538

Review Questions ..... 540

Problems ..... 543

8. Geometric Programming ..... 556

8.1 Introduction ..... 556

8.2 Posynomial ..... 556

8.3 Unconstrained Minimization Problem ..... 557

8.4 Solution of an Unconstrained Geometric Programming Problem Using Differential Calculus ..... 558

8.5 Solution of an Unconstrained Geometric Programming Problem Using Arithmetic-Geometric Inequality ..... 566

8.6 Primal-Dual Relationship and Sufficiency Conditions in the Unconstrained Case ..... 567

8.7 Constrained Minimization ..... 575

8.8 Solution of a Constrained Geometric Programming Problem ..... 576

8.9 Primal and Dual Programs in the Case of Less-Than Inequalities ..... 577

8.10 Geometric Programming with Mixed Inequality Constraints ..... 585

8.11 Complementary Geometric Programming ..... 588

8.12 Applications of Geometric Programming ..... 594


References and Bibliography ..... 609

Review Questions ..... 611

Problems ..... 612

9. Dynamic Programming ..... 616

9.1 Introduction ..... 616

9.2 Multistage Decision Processes ..... 617

9.2.1 Definition and Examples ..... 617

9.2.2 Representation of a Multistage Decision Process ..... 618

9.2.3 Conversion of a Nonserial System to a Serial System ..... 620

9.2.4 Types of Multistage Decision Problems ..... 621

9.3 Concept of Suboptimization and the Principle of Optimality ..... 622

9.4 Computational Procedure in Dynamic Programming ..... 626

9.5 Example Illustrating the Calculus Method of Solution ..... 630

9.6 Example Illustrating the Tabular Method of Solution ..... 635

9.7 Conversion of a Final Value Problem into an Initial Value Problem ..... 641

9.8 Linear Programming as a Case of Dynamic Programming ..... 644

9.9 Continuous Dynamic Programming ..... 649

9.10 Additional Applications ..... 653

9.10.1 Design of Continuous Beams ..... 653

9.10.2 Optimal Layout (Geometry) of a Truss ..... 654

9.10.3 Optimal Design of a Gear Train ..... 655

9.10.4 Design of a Minimum-Cost Drainage System ..... 656


References and Bibliography ..... 658

Review Questions ..... 659

Problems ..... 660

10. Integer Programming ..... 667

10.1 Introduction ..... 667

Integer Linear Programming ..... 668

10.2 Graphical Representation ..... 668

10.3 Gomory’s Cutting Plane Method ..... 670

10.3.1 Concept of a Cutting Plane ..... 670

10.3.2 Gomory’s Method for All-Integer Programming Problems ..... 672

10.3.3 Gomory’s Method for Mixed-Integer Programming Problems ..... 679

10.4 Balas’ Algorithm for Zero-One Programming Problems ..... 685

Integer Nonlinear Programming ..... 687

10.5 Integer Polynomial Programming ..... 687

10.5.1 Representation of an Integer Variable by an Equivalent System of Binary Variables ..... 688

10.5.2 Conversion of a Zero-One Polynomial Programming Problem into a Zero-One LP Problem ..... 689

10.6 Branch-and-Bound Method ..... 690

10.7 Sequential Linear Discrete Programming ..... 697

10.8 Generalized Penalty Function Method ..... 701

References and Bibliography ..... 707

Review Questions ..... 708

Problems ..... 709


11. Stochastic Programming ............................................... 715

11.1 Introduction ......................................................................... 715

11.2 Basic Concepts of Probability Theory ................................ 716

11.2.1 Definition of Probability .................................... 716

11.2.2 Random Variables and Probability Density

Functions ......................................................... 717

11.2.3 Mean and Standard Deviation .......................... 719

11.2.4 Function of a Random Variable ........................ 722

11.2.5 Jointly Distributed Random Variables .............. 723

11.2.6 Covariance and Correlation ............................. 724

11.2.7 Functions of Several Random

Variables .......................................................... 725

11.2.8 Probability Distributions ................................... 727

11.2.9 Central Limit Theorem ..................................... 732

11.3 Stochastic Linear Programming ......................................... 732

11.4 Stochastic Nonlinear Programming .................................... 738

11.4.1 Objective Function ........................................... 738

11.4.2 Constraints ...................................................... 739

11.5 Stochastic Geometric Programming .................................. 746

11.6 Stochastic Dynamic Programming ..................................... 748

11.6.1 Optimality Criterion .......................................... 748

11.6.2 Multistage Optimization .................................... 749

11.6.3 Stochastic Nature of the Optimum

Decisions ......................................................... 753

References and Bibliography ........................................................ 758

Review Questions ......................................................................... 759

Problems ....................................................................................... 761

12. Further Topics in Optimization ...................................... 768

12.1 Introduction ......................................................................... 768


12.2 Separable Programming .................................................... 769

12.2.1 Transformation of a Nonlinear Function to

Separable Form ............................................... 770

12.2.2 Piecewise Linear Approximation of a

Nonlinear Function ........................................... 772

12.2.3 Formulation of a Separable Nonlinear

Programming Problem ..................................... 774

12.3 Multiobjective Optimization ................................................. 779

12.3.1 Utility Function Method .................................... 780

12.3.2 Inverted Utility Function Method ....................... 781

12.3.3 Global Criterion Method ................................... 781

12.3.4 Bounded Objective Function Method ............... 781

12.3.5 Lexicographic Method ...................................... 782

12.3.6 Goal Programming Method .............................. 782

12.4 Calculus of Variations ......................................................... 783

12.4.1 Introduction ...................................................... 783

12.4.2 Problem of Calculus of Variations .................... 784

12.4.3 Lagrange Multipliers and Constraints ............... 791

12.4.4 Generalization .................................................. 795

12.5 Optimal Control Theory ...................................................... 795

12.5.1 Necessary Conditions for Optimal

Control ............................................................. 796

12.5.2 Necessary Conditions for a General

Problem ........................................................... 799

12.6 Optimality Criteria Methods ................................................ 800

12.6.1 Optimality Criteria with a Single

Displacement Constraint .................................. 801

12.6.2 Optimality Criteria with Multiple

Displacement Constraints ................................ 802

12.6.3 Reciprocal Approximations .............................. 803


12.7 Genetic Algorithms ............................................................. 806

12.7.1 Introduction ...................................................... 806

12.7.2 Representation of Design Variables ................. 808

12.7.3 Representation of Objective Function and

Constraints ...................................................... 809

12.7.4 Genetic Operators ........................................... 810

12.7.5 Numerical Results ............................................ 811

12.8 Simulated Annealing ........................................................... 811

12.9 Neural-Network-Based Optimization .................................. 814

12.10 Optimization of Fuzzy Systems .......................................... 818

12.10.1 Fuzzy Set Theory ............................................. 818

12.10.2 Optimization of Fuzzy Systems ........................ 821

12.10.3 Computational Procedure ................................ 823

References and Bibliography ........................................................ 824

Review Questions ......................................................................... 827

Problems ....................................................................................... 829

13. Practical Aspects of Optimization ................................. 836

13.1 Introduction ......................................................................... 836

13.2 Reduction of Size of an Optimization Problem .................. 836

13.2.1 Reduced Basis Technique ............................... 836

13.2.2 Design Variable Linking Technique .................. 837

13.3 Fast Reanalysis Techniques .............................................. 839

13.3.1 Incremental Response Approach ..................... 839

13.3.2 Basis Vector Approach .................................... 845

13.4 Derivatives of Static Displacements and Stresses ............ 847

13.5 Derivatives of Eigenvalues and Eigenvectors .................... 848

13.5.1 Derivatives of λi ............................................... 848

13.5.2 Derivatives of Yi ............................................... 849

13.6 Derivatives of Transient Response .................................... 851


13.7 Sensitivity of Optimum Solution to Problem

Parameters ......................................................................... 854

13.7.1 Sensitivity Equations Using Kuhn-Tucker

Conditions ........................................................ 854

13.7.2 Sensitivity Equations Using the Concept of

Feasible Direction ............................................ 857

13.8 Multilevel Optimization ........................................................ 858

13.8.1 Basic Idea ........................................................ 858

13.8.2 Method ............................................................. 859

13.9 Parallel Processing ............................................................. 864

References and Bibliography ........................................................ 867

Review Questions ......................................................................... 868

Problems ....................................................................................... 869

Appendices ............................................................................ 876

Appendix A: Convex and Concave Functions .............................. 876

Appendix B: Some Computational Aspects of Optimization ........ 882

Answers to Selected Problems ............................................ 888

Index ....................................................................................... 895


1

INTRODUCTION TO OPTIMIZATION

1.1 INTRODUCTION

Optimization is the act of obtaining the best result under given circumstances.

In design, construction, and maintenance of any engineering system, engineers

have to take many technological and managerial decisions at several stages.

The ultimate goal of all such decisions is either to minimize the effort required

or to maximize the desired benefit. Since the effort required or the benefit

desired in any practical situation can be expressed as a function of certain

decision variables, optimization can be defined as the process of finding the

conditions that give the maximum or minimum value of a function. It can be

seen from Fig. 1.1 that if a point $x^*$ corresponds to the minimum value of

function $f(x)$, the same point also corresponds to the maximum value of the

negative of the function, $-f(x)$. Thus, without loss of generality, optimization

can be taken to mean minimization since the maximum of a function can be

found by seeking the minimum of the negative of the same function. There is

no single method available for solving all optimization problems efficiently.

Hence a number of optimization methods have been developed for solving

different types of optimization problems.
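The equivalence between maximizing a function and minimizing its negative can be checked numerically. The following is a minimal sketch, not a method from this book: the grid search, the helper names, and the sample function are all assumptions chosen only for illustration.

```python
def minimize_on_grid(func, lo, hi, steps=2000):
    """Return the grid point in [lo, hi] with the smallest func value."""
    best_x = lo
    best_val = func(lo)
    for k in range(1, steps + 1):
        x = lo + (hi - lo) * k / steps
        val = func(x)
        if val < best_val:
            best_x, best_val = x, val
    return best_x


def maximize_on_grid(func, lo, hi, steps=2000):
    """Maximize func by minimizing its negative, as described in the text."""
    return minimize_on_grid(lambda x: -func(x), lo, hi, steps)


def f(x):
    # Illustrative function (an assumption): its minimum is at x = 1.
    return (x - 1.0) ** 2 + 3.0


# The minimizer of f and the maximizer of -f should be the same point.
x_min = minimize_on_grid(f, -2.0, 4.0)
x_max_of_neg = maximize_on_grid(lambda x: -f(x), -2.0, 4.0)
print(x_min, x_max_of_neg)
```

A grid search is used here only because it needs no derivative information; any minimization routine could be substituted without changing the argument.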

The optimum seeking methods are also known as mathematical programming techniques and are generally studied as a part of operations research.

Operations research is a branch of mathematics concerned with the application

of scientific methods and techniques to decision making problems and with

establishing the best or optimal solutions. Table 1.1 lists various mathematical

programming techniques together with other well-defined areas of operations

research. The classification given in Table 1.1 is not unique; it is given mainly

for convenience.

Figure 1.1 Minimum of $f(x)$ is the same as maximum of $-f(x)$.

Mathematical programming techniques are useful in finding the minimum

of a function of several variables under a prescribed set of constraints. Stochastic process techniques can be used to analyze problems described by a set

of random variables having known probability distributions. Statistical methods enable one to analyze the experimental data and build empirical models to

obtain the most accurate representation of the physical situation. This book

deals with the theory and application of mathematical programming techniques

suitable for the solution of engineering design problems.

TABLE 1.1 Methods of Operations Research

Mathematical Programming Techniques   Stochastic Process Techniques   Statistical Methods
-----------------------------------   -----------------------------   ---------------------------------------
Calculus methods                      Statistical decision theory     Regression analysis
Calculus of variations                Markov processes                Cluster analysis, pattern recognition
Nonlinear programming                 Queueing theory                 Design of experiments
Geometric programming                 Renewal theory                  Discriminant analysis (factor analysis)
Quadratic programming                 Simulation methods
Linear programming                    Reliability theory
Dynamic programming
Integer programming
Stochastic programming
Separable programming
Multiobjective programming
Network methods: CPM and PERT
Game theory
Simulated annealing
Genetic algorithms
Neural networks

1.2 HISTORICAL DEVELOPMENT

The existence of optimization methods can be traced to the days of Newton,

Lagrange, and Cauchy. The development of differential calculus methods of

optimization was possible because of the contributions of Newton and Leibnitz

to calculus. The foundations of calculus of variations, which deals with the

minimization of functionals, were laid by Bernoulli, Euler, Lagrange, and

Weierstrass. The method of optimization for constrained problems, which involves the addition of unknown multipliers, became known by the name of its

inventor, Lagrange. Cauchy made the first application of the steepest descent

method to solve unconstrained minimization problems. Despite these early

contributions, very little progress was made until the middle of the twentieth

century, when high-speed digital computers made implementation of the optimization procedures possible and stimulated further research on new methods. Spectacular advances followed, producing a massive literature on optimization techniques. This advancement also resulted in the emergence of

several well-defined new areas in optimization theory.

It is interesting to note that the major developments in the area of numerical

methods of unconstrained optimization were made in the United Kingdom,

and only in the 1960s. The development of the simplex method by Dantzig in 1947

for linear programming problems and the enunciation of the principle of optimality in 1957 by Bellman for dynamic programming problems paved the

way for development of the methods of constrained optimization. Work by

Kuhn and Tucker in 1951 on the necessary and sufficient conditions for the

optimal solution of programming problems laid the foundations for a great deal

of later research in nonlinear programming. The contributions of Zoutendijk

and Rosen to nonlinear programming during the early 1960s have been very

significant. Although no single technique has been found to be universally

applicable for nonlinear programming problems, work of Carroll and Fiacco

and McCormick allowed many difficult problems to be solved by using the

well-known techniques of unconstrained optimization. Geometric programming was developed in the 1960s by Duffin, Zener, and Peterson. Gomory did

pioneering work in integer programming, one of the most exciting and rapidly

developing areas of optimization. The reason for this is that most real-world

applications fall under this category of problems. Dantzig and Charnes and

Cooper developed stochastic programming techniques and solved problems by

assuming design parameters to be independent and normally distributed.

The desire to optimize more than one objective or goal while satisfying the

physical limitations led to the development of multiobjective programming

methods. Goal programming is a well-known technique for solving specific

types of multiobjective optimization problems. Goal programming was

originally proposed for linear problems by Charnes and Cooper in 1961. The

foundations of game theory were laid by von Neumann in 1928 and since then

the technique has been applied to solve several mathematical economics and

military problems. Only during the last few years has game theory been applied

to solve engineering design problems.

Simulated annealing, genetic algorithms, and neural network methods represent a new class of mathematical programming techniques that have come

into prominence during the last decade. Simulated annealing is analogous to

the physical process of annealing of solids. The genetic algorithms are search

techniques based on the mechanics of natural selection and natural genetics.

Neural network methods are based on solving the problem using the efficient

computing power of the network of interconnected "neuron" processors.

1.3 ENGINEERING APPLICATIONS OF OPTIMIZATION

Optimization, in its broadest sense, can be applied to solve any engineering

problem. To indicate the wide scope of the subject, some typical applications

from different engineering disciplines are given below.

1. Design of aircraft and aerospace structures for minimum weight

2. Finding the optimal trajectories of space vehicles

3. Design of civil engineering structures such as frames, foundations,

bridges, towers, chimneys, and dams for minimum cost

4. Minimum-weight design of structures for earthquake, wind, and other

types of random loading

5. Design of water resources systems for maximum benefit

6. Optimal plastic design of structures

7. Optimum design of linkages, cams, gears, machine tools, and other

mechanical components

8. Selection of machining conditions in metal-cutting processes for minimum production cost

9. Design of material handling equipment such as conveyors, trucks, and

cranes for minimum cost

10. Design of pumps, turbines, and heat transfer equipment for maximum

efficiency

11. Optimum design of electrical machinery such as motors, generators,

and transformers

12. Optimum design of electrical networks

13. Shortest route taken by a salesperson visiting various cities during one

tour

14. Optimal production planning, controlling, and scheduling

15. Analysis of statistical data and building empirical models from experimental results to obtain the most accurate representation of the physical

phenomenon

16. Optimum design of chemical processing equipment and plants

17. Design of optimum pipeline networks for process industries

18. Selection of a site for an industry

19. Planning of maintenance and replacement of equipment to reduce operating costs

20. Inventory control

21. Allocation of resources or services among several activities to maximize the benefit

22. Controlling the waiting and idle times and queueing in production lines

to reduce the costs

23. Planning the best strategy to obtain maximum profit in the presence of

a competitor

24. Optimum design of control systems

1.4 STATEMENT OF AN OPTIMIZATION PROBLEM

An optimization or a mathematical programming problem can be stated as follows.

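$$
\text{Find } \mathbf{X} = \begin{Bmatrix} x_1 \\ x_2 \\ \vdots \\ x_n \end{Bmatrix} \text{ which minimizes } f(\mathbf{X})
$$

subject to the constraints

$$
\begin{aligned}
g_j(\mathbf{X}) &\le 0, \quad j = 1,2,\ldots,m \\
l_j(\mathbf{X}) &= 0, \quad j = 1,2,\ldots,p
\end{aligned}
\tag{1.1}
$$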

where $\mathbf{X}$ is an n-dimensional vector called the design vector, $f(\mathbf{X})$ is termed the objective function, and $g_j(\mathbf{X})$ and $l_j(\mathbf{X})$ are known as inequality and equality constraints, respectively. The number of variables $n$ and the number of constraints $m$ and/or $p$ need not be related in any way. The problem stated in Eq. (1.1) is called a constrained optimization problem.¹ Some optimization problems do not involve any constraints and can be stated as:

$$
\text{Find } \mathbf{X} = \begin{Bmatrix} x_1 \\ x_2 \\ \vdots \\ x_n \end{Bmatrix} \text{ which minimizes } f(\mathbf{X})
\tag{1.2}
$$

Such problems are called unconstrained optimization problems.

¹ In the mathematical programming literature, the equality constraints $l_j(\mathbf{X}) = 0$, $j = 1,2,\ldots,p$ are often neglected, for simplicity, in the statement of a constrained optimization problem, although several methods are available for handling problems with equality constraints.

1.4.1 Design Vector

Any engineering system or component is defined by a set of quantities some

of which are viewed as variables during the design process. In general, certain

quantities are usually fixed at the outset and these are called preassigned parameters. All the other quantities are treated as variables in the design process

and are called design or decision variables $x_i$, $i = 1,2,\ldots,n$. The design variables are collectively represented as a design vector $\mathbf{X} = \{x_1, x_2, \ldots, x_n\}^T$. As an example, consider the design of the gear pair shown in Fig. 1.2, characterized by its face width $b$, number of teeth $T_1$ and $T_2$, center distance $d$, pressure angle $\psi$, tooth profile, and material. If center distance $d$, pressure angle $\psi$, tooth profile, and material of the gears are fixed in advance, these quantities can be called preassigned parameters. The remaining quantities can be collectively represented by a design vector $\mathbf{X} = \{x_1, x_2, x_3\}^T = \{b, T_1, T_2\}^T$. If there are no restrictions on the choice of $b$, $T_1$, and $T_2$, any set of three numbers will constitute a design for the gear pair. If an n-dimensional Cartesian space with each coordinate axis representing a design variable $x_i$ ($i = 1,2,\ldots,n$) is considered, the space is called the design variable space or simply, design space. Each point in the n-dimensional design space is called a design point and represents either a possible or an impossible solution to the design problem. In the case of the design of a gear pair, the design point $\{1.0, 20, 40\}^T$, for example, represents a possible solution, whereas the design point $\{1.0, -20, 40.5\}^T$ represents an impossible solution since it is not possible to have either a negative value or a fractional value for the number of teeth.

Figure 1.2 Gear pair in mesh.
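The distinction between possible and impossible design points for the gear pair can be written as a small check. This is a sketch under stated assumptions: the function name is hypothetical, the requirement of a positive face width is an added assumption, and the two sample points are illustrative values.

```python
def is_possible_gear_design(b, t1, t2):
    """Return True if (b, T1, T2) could describe a physical gear pair.

    Tooth counts must be positive whole numbers, as stated in the text.
    Requiring a positive face width b is an assumption added here.
    """
    def valid_tooth_count(t):
        return t > 0 and float(t).is_integer()

    return b > 0 and valid_tooth_count(t1) and valid_tooth_count(t2)


# Illustrative design points (b, T1, T2).
print(is_possible_gear_design(1.0, 20, 40))     # positive integer tooth counts
print(is_possible_gear_design(1.0, -20, 40.5))  # negative and fractional tooth counts
```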

1.4.2 Design Constraints

In many practical problems, the design variables cannot be chosen arbitrarily;

rather, they have to satisfy certain specified functional and other requirements.

The restrictions that must be satisfied to produce an acceptable design are collectively called design constraints. Constraints that represent limitations on the

behavior or performance of the system are termed behavior or functional constraints. Constraints that represent physical limitations on design variables such

as availability, fabricability, and transportability are known as geometric or

side constraints. For example, for the gear pair shown in Fig. 1.2, the face

width $b$ cannot be taken smaller than a certain value, due to strength requirements. Similarly, the ratio of the numbers of teeth, $T_1/T_2$, is dictated by the

speeds of the input and output shafts, $N_1$ and $N_2$. Since these constraints depend

on the performance of the gear pair, they are called behavior constraints. The

values of $T_1$ and $T_2$ cannot be any real numbers but can only be integers.

Further, there can be upper and lower bounds on T1 and T2 due to manufacturing limitations. Since these constraints depend on the physical limitations,

they are called side constraints.

1.4.3 Constraint Surface

For illustration, consider an optimization problem with only inequality constraints $g_j(\mathbf{X}) \le 0$. The set of values of $\mathbf{X}$ that satisfy the equation $g_j(\mathbf{X}) = 0$ forms a hypersurface in the design space and is called a constraint surface. Note that this is an $(n-1)$-dimensional subspace, where $n$ is the number of design variables. The constraint surface divides the design space into two regions: one in which $g_j(\mathbf{X}) < 0$ and the other in which $g_j(\mathbf{X}) > 0$. Thus the points lying on the hypersurface will satisfy the constraint $g_j(\mathbf{X})$ critically, whereas the points lying in the region where $g_j(\mathbf{X}) > 0$ are infeasible or unacceptable, and the points lying in the region where $g_j(\mathbf{X}) < 0$ are feasible or acceptable. The collection of all the constraint surfaces $g_j(\mathbf{X}) = 0$, $j = 1,2,\ldots,m$, which separates the acceptable region is called the composite constraint surface.

Figure 1.3 shows a hypothetical two-dimensional design space where the

infeasible region is indicated by hatched lines. A design point that lies on one

or more than one constraint surface is called a bound point, and the associated

constraint is called an active constraint. Design points that do not lie on any

constraint surface are known as free points. Depending on whether a particular

design point belongs to the acceptable or unacceptable region, it can be identified as one of the following four types:

1. Free and acceptable point

2. Free and unacceptable point

3. Bound and acceptable point

4. Bound and unacceptable point

All four types of points are shown in Fig. 1.3.

Figure 1.3 Constraint surfaces in a hypothetical two-dimensional design space.
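The four point types can be distinguished by evaluating every constraint at the design point. The sketch below assumes constraints written in the form g_j(X) <= 0; the function name, the numerical tolerance, and the three sample constraints are hypothetical and are not taken from Fig. 1.3.

```python
def classify_point(x, constraints, tol=1e-9):
    """Classify design point x against inequality constraints g_j(x) <= 0.

    A point is 'bound' if it lies on at least one constraint surface
    (some g_j is zero within tol) and 'acceptable' if no g_j is positive.
    """
    values = [g(x) for g in constraints]
    bound = any(abs(v) <= tol for v in values)
    acceptable = all(v <= tol for v in values)
    kind = "bound" if bound else "free"
    region = "acceptable" if acceptable else "unacceptable"
    return kind + " and " + region


# Hypothetical two-variable problem: one behavior and two side constraints.
constraints = [
    lambda x: x[0] + x[1] - 4.0,  # behavior constraint: x1 + x2 <= 4
    lambda x: -x[0],              # side constraint: x1 >= 0
    lambda x: -x[1],              # side constraint: x2 >= 0
]

for point in [(1, 1), (5, 5), (2, 2), (5, 0)]:
    print(point, classify_point(point, constraints))
```

The tolerance is needed because a computed constraint value is rarely exactly zero in floating-point arithmetic; its size is a judgment call for the problem at hand.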

1.4.4 Objective Function

The conventional design procedures aim at finding an acceptable or adequate

design which merely satisfies the functional and other requirements of the

problem. In general, there will be more than one acceptable design, and the

purpose of optimization is to choose the best one of the many acceptable designs available. Thus a criterion has to be chosen for comparing the different

alternative acceptable designs and for selecting the best one. The criterion with

respect to which the design is optimized, when expressed as a function of the

design variables, is known as the criterion or merit or objective function. The

choice of objective function is governed by the nature of problem. The objective function for minimization is generally taken as weight in aircraft and aerospace structural design problems. In civil engineering structural designs, the

objective is usually taken as the minimization of cost. The maximization of

mechanical efficiency is the obvious choice of an objective in mechanical engineering systems design. Thus the choice of the objective function appears to

be straightforward in most design problems. However, there may be cases

where the optimization with respect to a particular criterion may lead to results

that may not be satisfactory with respect to another criterion. For example, in

mechanical design, a gearbox transmitting the maximum power may not have

the minimum weight. Similarly, in structural design, the minimum-weight design may not correspond to minimum stress design, and the minimum stress

design, again, may not correspond to maximum frequency design. Thus the

selection of the objective function can be one of the most important decisions

in the whole optimum design process.

In some situations, there may be more than one criterion to be satisfied

simultaneously. For example, a gear pair may have to be designed for minimum weight and maximum efficiency while transmitting a specified horsepower. An optimization problem involving multiple objective functions is

known as a multiobjective programming problem. With multiple objectives

there arises a possibility of conflict, and one simple way to handle the problem

is to construct an overall objective function as a linear combination of the

conflicting multiple objective functions. Thus if $f_1(\mathbf{X})$ and $f_2(\mathbf{X})$ denote two

objective functions, construct a new (overall) objective function for optimization as

$$
f(\mathbf{X}) = \alpha_1 f_1(\mathbf{X}) + \alpha_2 f_2(\mathbf{X})
\tag{1.3}
$$

where $\alpha_1$ and $\alpha_2$ are constants whose values indicate the relative importance

of one objective function relative to the other.
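The effect of the weights in Eq. (1.3) can be seen on a one-variable toy problem. This is only a sketch: the two objective functions, the grid search helper, and the weight values are assumptions made for illustration.

```python
def weighted_sum(f1, f2, alpha1, alpha2):
    """Build the overall objective alpha1*f1 + alpha2*f2 of Eq. (1.3)."""
    def f(x):
        return alpha1 * f1(x) + alpha2 * f2(x)
    return f


def f1(x):
    # Hypothetical objective that prefers x = 0.
    return x ** 2


def f2(x):
    # Hypothetical conflicting objective that prefers x = 2.
    return (x - 2.0) ** 2


def argmin_on_grid(func, lo, hi, steps=2000):
    """Return the grid point in [lo, hi] with the smallest func value."""
    points = [lo + (hi - lo) * k / steps for k in range(steps + 1)]
    return min(points, key=func)


# Equal weights give a compromise midway between the two preferences.
x_equal = argmin_on_grid(weighted_sum(f1, f2, 1.0, 1.0), 0.0, 2.0)
# A larger weight on f1 pulls the compromise toward x = 0.
x_favor_f1 = argmin_on_grid(weighted_sum(f1, f2, 3.0, 1.0), 0.0, 2.0)
print(x_equal, x_favor_f1)
```

For these two quadratics the minimizer of the weighted sum is 2*alpha2/(alpha1 + alpha2), so changing the weights moves the compromise continuously between the two individual optima.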

1.4.5 Objective Function Surfaces

The locus of all points satisfying /(X) = c = constant forms a hypersurface

in the design space, and for each value of c there corresponds a different member of a family of surfaces. These surfaces, called objective function surfaces,

are shown in a hypothetical two-dimensional design space in Fig. 1.4.

Once the objective function surfaces are drawn along with the constraint

surfaces, the optimum point can be determined without much difficulty. But

the main problem is that as the number of design variables exceeds two or

three, the constraint and objective function surfaces become complex even for

visualization and the problem has to be solved purely as a mathematical problem. The following example illustrates the graphical optimization procedure.

Figure 1.4 Contours of the objective function.

Example 1.1 Design a uniform column of tubular section (Fig. 1.5) to carry a compressive load $P = 2500$ kgf for minimum cost. The column is made up of a material that has a yield stress ($\sigma_y$) of 500 kgf/cm², modulus of elasticity ($E$) of $0.85 \times 10^6$ kgf/cm², and density ($\rho$) of 0.0025 kgf/cm³. The length of the column is 250 cm. The stress induced in the column should be less than the buckling stress as well as the yield stress. The mean diameter of the column is restricted to lie between 2 and 14 cm, and columns with thicknesses outside the range 0.2 to 0.8 cm are not available in the market. The cost of the column includes material and construction costs and can be taken as $5W + 2d$, where $W$ is the weight in kilograms force and $d$ is the mean diameter of the column in centimeters.

SOLUTION The design variables are the mean diameter ($d$) and tube thickness ($t$):

$$
\mathbf{X} = \begin{Bmatrix} x_1 \\ x_2 \end{Bmatrix} = \begin{Bmatrix} d \\ t \end{Bmatrix}
\tag{E1}
$$

The objective function to be minimized is given by

$$
f(\mathbf{X}) = 5W + 2d = 5\rho l \pi d t + 2d = 9.82 x_1 x_2 + 2 x_1
\tag{E2}
$$

Figure 1.5 Tubular column under compression.

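The behavior constraints can be expressed as

stress induced ≤ yield stress

stress induced ≤ buckling stress

The induced stress is given by

$$
\text{induced stress} = \sigma_i = \frac{P}{\pi d t} = \frac{2500}{\pi x_1 x_2}
\tag{E3}
$$

The buckling stress for a pin-connected column is given by

$$
\text{buckling stress} = \sigma_b = \frac{\text{Euler buckling load}}{\text{cross-sectional area}} = \frac{\pi^2 E I}{l^2} \, \frac{1}{\pi d t}
\tag{E4}
$$

where

$$
\begin{aligned}
I &= \text{second moment of area of the cross section of the column} \\
  &= \frac{\pi}{64}\left(d_o^4 - d_i^4\right) = \frac{\pi}{64}\left(d_o^2 + d_i^2\right)(d_o + d_i)(d_o - d_i) \\
  &= \frac{\pi}{64}\left[(d + t)^2 + (d - t)^2\right]\left[(d + t) + (d - t)\right]\left[(d + t) - (d - t)\right] \\
  &= \frac{\pi}{8}\, d t \left(d^2 + t^2\right) = \frac{\pi}{8}\, x_1 x_2 \left(x_1^2 + x_2^2\right)
\end{aligned}
\tag{E5}
$$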
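As an intermediate check, substituting the second moment of area from Eq. (E5) into the buckling stress of Eq. (E4) cancels the common factor $\pi d t$ and leaves an expression in the design variables alone. The step is written out here using the data of the example ($E = 0.85 \times 10^6$ kgf/cm², $l = 250$ cm):

$$
\sigma_b = \frac{\pi^2 E}{l^2} \cdot \frac{\frac{\pi}{8}\, d t \left(d^2 + t^2\right)}{\pi d t}
= \frac{\pi^2 E \left(d^2 + t^2\right)}{8 l^2}
= \frac{\pi^2 (0.85 \times 10^6)\left(x_1^2 + x_2^2\right)}{8(250)^2}
$$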

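Thus the behavior constraints can be restated as

$$
g_1(\mathbf{X}) = \frac{2500}{\pi x_1 x_2} - 500 \le 0
\tag{E6}
$$

$$
g_2(\mathbf{X}) = \frac{2500}{\pi x_1 x_2} - \frac{\pi^2 (0.85 \times 10^6)\left(x_1^2 + x_2^2\right)}{8(250)^2} \le 0
\tag{E7}
$$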

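The side constraints are given by

$$
2 \le d \le 14
$$

$$
0.2 \le t \le 0.8
$$

which can be expressed in standard form as

$$
g_3(\mathbf{X}) = -x_1 + 2.0 \le 0
\tag{E8}
$$

$$
g_4(\mathbf{X}) = x_1 - 14.0 \le 0
\tag{E9}
$$

$$
g_5(\mathbf{X}) = -x_2 + 0.2 \le 0
\tag{E10}
$$

$$
g_6(\mathbf{X}) = x_2 - 0.8 \le 0
\tag{E11}
$$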
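The complete formulation of the column problem, that is, the objective function and the six constraints in the standard form g_j(X) <= 0, can be collected into a few functions. This is a sketch using the data given in the example; the function names, the constant names, and the feasibility tolerance are assumptions.

```python
import math

# Data of the column example (units: kgf and cm).
P = 2500.0        # compressive load
SIGMA_Y = 500.0   # yield stress
E = 0.85e6        # modulus of elasticity
RHO = 0.0025      # density
LENGTH = 250.0    # column length


def objective(x1, x2):
    """Cost 5W + 2d with W = rho * l * pi * d * t, d = x1, t = x2."""
    weight = RHO * LENGTH * math.pi * x1 * x2
    return 5.0 * weight + 2.0 * x1


def constraints(x1, x2):
    """Return the values of the six constraints, each required to be <= 0."""
    induced = P / (math.pi * x1 * x2)
    buckling = math.pi ** 2 * E * (x1 ** 2 + x2 ** 2) / (8.0 * LENGTH ** 2)
    return [
        induced - SIGMA_Y,   # yield constraint
        induced - buckling,  # buckling constraint
        -x1 + 2.0,           # lower bound on mean diameter
        x1 - 14.0,           # upper bound on mean diameter
        -x2 + 0.2,           # lower bound on thickness
        x2 - 0.8,            # upper bound on thickness
    ]


def is_feasible(x1, x2, tol=1e-9):
    """A design is feasible when no constraint value is positive."""
    return all(g <= tol for g in constraints(x1, x2))


print(is_feasible(6.0, 0.5), objective(6.0, 0.5))
print(is_feasible(3.0, 0.3))
```

The coefficient of the first term of the objective evaluates to about 9.8175, which the text rounds to 9.82.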

Since there are only two design variables, the problem can be solved graphically as shown below.

First, the constraint surfaces are to be plotted in a two-dimensional design space where the two axes represent the two design variables $x_1$ and $x_2$. To plot the first constraint surface, we have

$$
g_1(\mathbf{X}) = \frac{2500}{\pi x_1 x_2} - 500 \le 0
$$

that is,

$$
x_1 x_2 \ge 1.593
$$

Thus the curve $x_1 x_2 = 1.593$ represents the constraint surface $g_1(\mathbf{X}) = 0$. This curve can be plotted by finding several points on the curve. The points on the curve can be found by giving a series of values to $x_1$ and finding the corresponding values of $x_2$ that satisfy the relation $x_1 x_2 = 1.593$:

x1    2.0      4.0      6.0      8.0      10.0     12.0     14.0
x2    0.7965   0.3983   0.2655   0.1990   0.1593   0.1328   0.1140

These points are plotted and a curve $P_1Q_1$ passing through all these points is drawn as shown in Fig. 1.6, and the infeasible region, represented by $g_1(\mathbf{X}) > 0$ or $x_1 x_2 < 1.593$, is shown by hatched lines.† Similarly, the second constraint $g_2(\mathbf{X}) \le 0$ can be expressed as $x_1 x_2 (x_1^2 + x_2^2) \ge 47.3$ and the points lying on the constraint surface $g_2(\mathbf{X}) = 0$ can be obtained as follows [for $x_1 x_2 (x_1^2 + x_2^2) = 47.3$]:

x1    2      4       6       8        10       12       14
x2    2.41   0.716   0.219   0.0926   0.0473   0.0274   0.0172

These points are plotted as curve P2Q2, the feasible region is identified, and

the infeasible region is shown by hatched lines as shown in Fig. 1.6. The

plotting of side constraints is very simple since they represent straight lines.

After plotting all the six constraints, the feasible region can be seen to be given

by the bounded area ABCDEA.
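The points tabulated for the two behavior constraint curves can be regenerated numerically. The sketch below uses the rounded constants 1.593 and 47.3 from the text; the function names and the choice of bisection for the buckling curve are assumptions, since the text does not say how the tabulated values were computed.

```python
def yield_curve_x2(x1):
    """x2 on the yield constraint surface x1 * x2 = 1.593."""
    return 1.593 / x1


def buckling_curve_x2(x1, rhs=47.3, lo=1e-6, hi=10.0, iters=100):
    """Solve x1 * x2 * (x1**2 + x2**2) = rhs for x2 by bisection.

    For x1 > 0 the left-hand side increases with x2, so a single root
    lies between lo and hi (the bracket is an assumption valid for
    2 <= x1 <= 14).
    """
    def residual(x2):
        return x1 * x2 * (x1 ** 2 + x2 ** 2) - rhs

    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if residual(mid) > 0.0:
            hi = mid
        else:
            lo = mid
    return 0.5 * (lo + hi)


for x1 in [2.0, 4.0, 6.0, 8.0, 10.0, 12.0, 14.0]:
    print(x1, round(yield_curve_x2(x1), 4), round(buckling_curve_x2(x1), 4))
```

Small differences in the last digit relative to the tabulated values can arise from rounding.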

tr

The infeasible region can be identified by testing whether the origin lies in the feasible or infeasible region.

Figure 1.6 Graphical optimization of Example 1.1. [Plot labels: yield constraint $g_1(\mathbf{X}) = 0$ (curve $P_1Q_1$); buckling constraint $g_2(\mathbf{X}) = 0$ (curve $P_2Q_2$); ABCDEA = feasible region; optimum point (5.44, 0.293).]

Next, the contours of the objective function are to be plotted before finding the optimum point. For this, we plot the curves given by

$$f(\mathbf{X}) = 9.82 x_1 x_2 + 2 x_1 = c = \text{constant}$$

for a series of values of $c$. By giving different values to $c$, the contours of $f$ can be plotted with the help of the following points.

For $9.82 x_1 x_2 + 2 x_1 = 50.0$:

$x_2$    0.1     0.2     0.3     0.4    0.5    0.6    0.7    0.8
$x_1$    16.77   12.62   10.10   8.44   7.24   6.33   5.64   5.07

For $9.82 x_1 x_2 + 2 x_1 = 40.0$:

$x_2$    0.1     0.2     0.3    0.4    0.5    0.6    0.7    0.8
$x_1$    13.40   10.10   8.08   6.75   5.79   5.06   4.51   4.05

For $9.82 x_1 x_2 + 2 x_1 = 31.58$ (passing through the corner point C):

$x_2$    0.1     0.2    0.3    0.4    0.5    0.6    0.7    0.8
$x_1$    10.57   7.96   6.38   5.33   4.57   4.00   3.56   3.20

For $9.82 x_1 x_2 + 2 x_1 = 26.53$ (passing through the corner point B):

$x_2$    0.1    0.2    0.3    0.4    0.5    0.6    0.7    0.8
$x_1$    8.88   6.69   5.36   4.48   3.84   3.36   2.99   2.69

For $9.82 x_1 x_2 + 2 x_1 = 20.0$:

$x_2$    0.1    0.2    0.3    0.4    0.5    0.6    0.7    0.8
$x_1$    6.70   5.05   4.04   3.38   2.90   2.53   2.26   2.02
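Each contour table follows from solving $9.82 x_1 x_2 + 2 x_1 = c$ for $x_1$, which gives $x_1 = c / (9.82 x_2 + 2)$. The following is a minimal sketch of that calculation; the function name `contour_x1` is illustrative, and recomputed values may differ from the printed tables in the last digit.

```python
def contour_x1(c, x2):
    """Return x1 on the contour 9.82*x1*x2 + 2*x1 = c for a given x2."""
    return c / (9.82 * x2 + 2.0)


if __name__ == "__main__":
    x2_values = (0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8)
    for c in (50.0, 40.0, 31.58, 26.53, 20.0):
        row = "  ".join(f"{contour_x1(c, x2):6.2f}" for x2 in x2_values)
        print(f"c = {c:5.2f}:  {row}")
```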

These contours are shown in Fig. 1.6 and it can be seen that the objective function cannot be reduced below a value of 26.53 (corresponding to point B) without violating some of the constraints. Thus the optimum solution is given by point B with $d^* = x_1^* = 5.44$ cm and $t^* = x_2^* = 0.293$ cm with $f_{\min} = 26.53$.
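The graphical result can be cross-checked numerically. The following is a minimal sketch that replaces the graphical construction with a brute-force grid search over the side-constraint box; it is not the method used in the text. It assumes the objective $f = 9.82 x_1 x_2 + 2 x_1$ and the reduced constraint forms quoted earlier, and the grid spacing (0.01 in $x_1$, 0.001 in $x_2$) is an arbitrary choice.

```python
def cost(x1, x2):
    """Objective function of Example 1.1."""
    return 9.82 * x1 * x2 + 2.0 * x1


def satisfies_behavior_constraints(x1, x2):
    """Yield and buckling constraints in their reduced forms."""
    return x1 * x2 >= 1.593 and x1 * x2 * (x1 ** 2 + x2 ** 2) >= 47.3


def grid_search():
    """Return (f, x1, x2) for the cheapest feasible grid point.

    The grid covers 2 <= x1 <= 14 and 0.2 <= x2 <= 0.8, so the side
    constraints hold by construction.
    """
    best = None
    for i in range(200, 1401):          # x1 = 2.00, 2.01, ..., 14.00
        x1 = i / 100.0
        for j in range(200, 801):       # x2 = 0.200, 0.201, ..., 0.800
            x2 = j / 1000.0
            if satisfies_behavior_constraints(x1, x2):
                f = cost(x1, x2)
                if best is None or f < best[0]:
                    best = (f, x1, x2)
                # Both constraints and the cost increase with x2, so the
                # first feasible x2 is the cheapest one for this x1.
                break
    return best


if __name__ == "__main__":
    f, x1, x2 = grid_search()
    print(f"x1 = {x1:.2f}, x2 = {x2:.3f}, f = {f:.2f}")
```

On this grid the search lands at the intersection of the yield and buckling curves, in agreement with point B.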

1.5

CLASSIFICATION OF OPTIMIZATION PROBLEMS

Optimization problems can be classified in several ways, as described below.

1.5.1

Classification Based on the Existence of Constraints

As indicated earlier, any optimization problem can be classified as constrained

or unconstrained, depending on whether or not constraints exist in the problem.

1.5.2

Classification Based on the Nature of the Design Variables

Based on the nature of design variables encountered, optimization problems

can be classified into two broad categories. In the first category, the problem

is to find values to a set of design parameters that make some prescribed function of these parameters minimum subject to certain constraints. For example,

the problem of minimum-weight design of a prismatic beam shown in Fig.

1.7a subject to a limitation on the maximum deflection can be stated as follows.

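Find $\mathbf{X} = \begin{Bmatrix} b \\ d \end{Bmatrix}$ which minimizes

$$f(\mathbf{X}) = \rho l b d \tag{1.4}$$

subject to the constraints

$$\delta_{\text{tip}}(\mathbf{X}) \le \delta_{\max}$$

$$b \ge 0$$

$$d \ge 0$$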

where $\rho$ is the density and $\delta_{\text{tip}}$ is the tip deflection of the beam. Such problems are called parameter or static optimization problems. In the second category of problems, the objective is to find a set of design parameters, which are all continuous functions of some other parameter, that minimizes an objective function subject to a set of constraints. If the cross-sectional dimensions of the rectangular beam are allowed to vary along its length as shown in Fig. 1.7b, the optimization problem can be stated as:

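Find $\mathbf{X}(t) = \begin{Bmatrix} b(t) \\ d(t) \end{Bmatrix}$ which minimizes

$$f[\mathbf{X}(t)] = \rho \int_0^l b(t)\, d(t)\, dt \tag{1.5}$$

subject to the constraints

$$\delta_{\text{tip}}[\mathbf{X}(t)] \le \delta_{\max}, \quad 0 \le t \le l$$

$$b(t) \ge 0, \quad 0 \le t \le l$$

$$d(t) \ge 0, \quad 0 \le t \le l$$

Figure 1.7 Cantilever beam under concentrated load.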

Here the design variables are functions of the length parameter t. This type of

problem, where each design variable is a function of one or more parameters,

is known as a trajectory or dynamic optimization problem [1.40].
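The difference between the two categories can be seen by evaluating the objective in Eq. (1.5) numerically. The following is a minimal sketch that approximates $\rho \int_0^l b(t)\,d(t)\,dt$ with the trapezoidal rule; the function name `beam_weight` and the sample dimensions are hypothetical and chosen only for illustration. When $b$ and $d$ are constant, the result reduces to $\rho l b d$ of Eq. (1.4).

```python
def beam_weight(b, d, length, rho, n=1000):
    """Approximate rho * integral_0^length b(t)*d(t) dt (trapezoidal rule).

    b and d are callables giving the width and depth at position t.
    """
    h = length / n
    total = 0.5 * (b(0.0) * d(0.0) + b(length) * d(length))
    for k in range(1, n):
        t = k * h
        total += b(t) * d(t)
    return rho * h * total


if __name__ == "__main__":
    # Hypothetical data: length 10, density 0.5, width 2.
    uniform = beam_weight(lambda t: 2.0, lambda t: 3.0, 10.0, 0.5)
    # Depth tapering linearly from 3 at the support to 2 at the tip.
    tapered = beam_weight(lambda t: 2.0, lambda t: 3.0 - 0.1 * t, 10.0, 0.5)
    print(uniform, tapered)
```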

1.5.3

Classification Based on the Physical Structure of the Problem

Depending on the physical structure of the problem, optimization problems

can be classified as optimal control and nonoptimal control problems.

Optimal Control Problem. An optimal control (OC) problem is a mathematical programming problem involving a number of stages, where each stage

evolves from the preceding stage in a prescribed manner. It is usually described

by two types of variables: the control (design) and the state variables. The

control variables define the system and govern the evolution of the system

from one stage to the next, and the state variables describe the behavior or

status of the system in any stage. The problem is to find a set of control or

design variables such that the total objective function (also known as the performance index, PI) over all the stages is minimized subject to a set of constraints on the control and state variables. An OC problem can be stated as

follows [1.40]:

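Find $\mathbf{X}$ which minimizes

$$f(\mathbf{X}) = \sum_{i=1}^{l} f_i(x_i, y_i) \tag{1.6}$$

subject to the constraints

$$q_i(x_i, y_i) + y_i = y_{i+1}, \quad i = 1, 2, \ldots, l$$

$$g_j(x_j) \le 0, \quad j = 1, 2, \ldots, l$$

$$h_k(y_k) \le 0, \quad k = 1, 2, \ldots, l$$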

where $x_i$ is the $i$th control variable, $y_i$ the $i$th state variable, and $f_i$ the contribution of the $i$th stage to the total objective function; $g_j$, $h_k$, and $q_i$ are functions of $x_j$, $y_k$, and $x_i$ and $y_i$, respectively, and $l$ is the total number of stages. The control and state variables $x_i$ and $y_i$ can be vectors in some cases. The following example serves to illustrate the nature of an optimal control problem.

Example 1.2 A rocket is designed to travel a distance of $12s$ in a vertically upward direction [1.32]. The thrust of the rocket can be changed only at the discrete points located at distances of $0, s, 2s, 3s, \ldots, 11s$. If the maximum thrust that can be developed at point $i$ either in the positive or negative direction is restricted to a value of $F_i$, formulate the problem of minimizing the total time of travel under the following assumptions:

1. The rocket travels against the gravitational force.

2. The mass of the rocket reduces in proportion to the distance traveled.

3. The air resistance is proportional to the velocity of the rocket.

Figure 1.8 Control points in the path of the rocket. [Plot labels: control points; distance from starting point.]

SOLUTION Let points (or control points) on the path at which the thrusts of the rocket are changed be numbered as $1, 2, 3, \ldots, 13$ (Fig. 1.8). Denoting $x_i$ as the thrust, $v_i$ the velocity, $a_i$ the acceleration, and $m_i$ the mass of the rocket at point $i$, Newton's second law of motion can be applied as

net force on the rocket = mass × acceleration

This can be written as

thrust - gravitational force - air resistance = mass × acceleration

or

$$x_i - m_i g - k_1 v_i = m_i a_i \tag{E1}$$

where the mass $m_i$ can be expressed as

$$m_i = m_{i-1} - k_2 s \tag{E2}$$

and $k_1$ and $k_2$ are constants. Equation (E1) can be used to express the acceleration, $a_i$, as

$$a_i = \frac{x_i}{m_i} - g - \frac{k_1 v_i}{m_i} \tag{E3}$$

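If $t_i$ denotes the time taken by the rocket to travel from point $i$ to point $i + 1$, the distance traveled between the points $i$ and $i + 1$ can be expressed as

$$s = v_i t_i + \tfrac{1}{2} a_i t_i^2$$

or

$$s = v_i t_i + \frac{1}{2}\left(\frac{x_i}{m_i} - g - \frac{k_1 v_i}{m_i}\right) t_i^2 \tag{E4}$$

from which $t_i$ can be determined as

$$t_i = \frac{-v_i \pm \sqrt{v_i^2 + 2s\left(\dfrac{x_i}{m_i} - g - \dfrac{k_1 v_i}{m_i}\right)}}{\dfrac{x_i}{m_i} - g - \dfrac{k_1 v_i}{m_i}} \tag{E5}$$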

Of the two values given by Eq. (E5), the positive value has to be chosen for $t_i$. The velocity of the rocket at point $i + 1$, $v_{i+1}$, can be expressed in terms of $v_i$ as (by assuming the acceleration between points $i$ and $i + 1$ to be constant for simplicity)

$$v_{i+1} = v_i + a_i t_i \tag{E6}$$

The substitution of Eqs. (E3) and (E5) into Eq. (E6) leads to

$$v_{i+1} = \sqrt{v_i^2 + 2s\left(\frac{x_i}{m_i} - g - \frac{k_1 v_i}{m_i}\right)} \tag{E7}$$

From an analysis of the problem, the control variables can be identified as the thrusts $x_i$ and the state variables as the velocities $v_i$. Since the rocket starts at point 1 and stops at point 13,

$$v_1 = v_{13} = 0 \tag{E8}$$
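The stage-to-stage evolution described by Eqs. (E2), (E3), (E5), and (E7) can be sketched in code. The following is a minimal illustration, not a solution of the optimization problem: the function name `simulate_rocket` and all numerical values in the usage example are hypothetical, since the text gives no data. The function starts from rest at point 1, returns the total travel time and the final velocity for a given thrust sequence, and leaves it to the caller to check the terminal condition of Eq. (E8).

```python
import math


def simulate_rocket(thrusts, s, m1, g, k1, k2):
    """Propagate velocity and mass through the control points.

    thrusts : thrust x_i applied from point i to point i + 1
    s       : spacing between consecutive control points
    m1      : mass at point 1
    g       : gravitational acceleration
    k1, k2  : air-resistance and mass-loss constants of (E1) and (E2)
    Returns (total_time, final_velocity).
    """
    v, m = 0.0, m1                      # the rocket starts from rest
    total_time = 0.0
    for x in thrusts:
        if m <= 0.0:
            raise ValueError("mass must stay positive")
        a = x / m - g - k1 * v / m      # acceleration, Eq. (E3)
        disc = v * v + 2.0 * s * a      # term under the root in (E5), (E7)
        if disc < 0.0:
            raise ValueError("rocket does not reach the next control point")
        if abs(a) < 1e-12:              # limiting case of (E5) as a -> 0
            if v <= 0.0:
                raise ValueError("rocket does not move")
            t = s / v
        else:
            t = (-v + math.sqrt(disc)) / a   # positive root of Eq. (E5)
        total_time += t
        v = math.sqrt(disc)             # velocity at next point, Eq. (E7)
        m = m - k2 * s                  # mass at next point, Eq. (E2)
    return total_time, v


if __name__ == "__main__":
    # Hypothetical data: constant thrust, no drag, no mass loss.
    time, v_end = simulate_rocket([12.0] * 12, s=1.0, m1=1.0, g=10.0,
                                  k1=0.0, k2=0.0)
    print(time, v_end)
```

With constant acceleration the result can be checked against elementary kinematics, which makes this special case a convenient sanity test before varying the thrusts.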

Thus the problem can be stated as an OC problem as

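Find $\mathbf{X} = \begin{Bmatrix} x_1 \\ x_2 \\ \vdots \\ x_{12} \end{Bmatrix}$ which minimizes

$$f(\mathbf{X}) = \sum_{i=1}^{12} t_i = \sum_{i=1}^{12} \left\{ \frac{-v_i + \sqrt{v_i^2 + 2s\left(\dfrac{x_i}{m_i} - g - \dfrac{k_1 v_i}{m_i}\right)}}{\dfrac{x_i}{m_i} - g - \dfrac{k_1 v_i}{m_i}} \right\}$$

subject to

$$m_{i+1} = m_i - k_2 s, \quad i = 1, 2, \ldots, 12$$

$$v_{i+1} = \sqrt{v_i^2 + 2s\left(\frac{x_i}{m_i} - g - \frac{k_1 v_i}{m_i}\right)}, \quad i = 1, 2, \ldots, 12$$

$$|x_i| \le F_i, \quad i = 1, 2, \ldots, 12$$

$$v_1 = v_{13} = 0$$

1.5.4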

Classification Based on the Nature of the Equations Involved