Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

Bingo Bonus points

1) I should receive at least 10 points for trying PROC Genmod and giving some insight

about the difference of output.

2) I have used Weka Machine learning tool for variable selection, and I should get 10 points

for that.

3) I should get full 20 points for recreating the program in R.

4) I should get 5 points for using Amelia for multiple imitations in R.

5) I am expecting at least 5 points for using SAS macro in my scoring program.

1. INTRODUCTION

This data set contains approximately 8000 records. Each record in the dataset represents a

customer at an auto insurance company. Each record has two target variables

TARGET_FLAG and TARGET_AMT. TARGET_FLAG indicates if the person was in a car crash.

TARGET_AMT, will be zero if the person did not crash their car and greater than zero if the

car is involved in the crash. First we will build the Logistic Regression model to estimate the

probability that a person will crash their car and then a Linear regression model to estimate

the loss in the event of a crash.

2. DATA PREPARATION

In the dataset, there are 23 dependent variables and 2 response variables. The TARGET_FLAG

and TARGET_AMT are response variables. The below table shows the variables data types.

Table 1: Data Dictionary

VARIABLE NAME

TYPE

TARGET_FLAG

Response variable

TARGET_AMT

Response variable

AGE

BLUEBOOK

CAR_AGE

CAR_TYPE

CAR_USE

CLM_FREQ

EDUCATION

HOMEKIDS

HOME_VAL

INCOME

JOB

continuous

continuous

continuous

categorical

categorical

continuous

categorical

continuous

continuous

continuous

categorical

DEFINITION

Was Car in a crash?

1=YES 0=NO

If car was in a crash, what

was the cost

Age of Driver

Value of Vehicle

Vehicle Age

Type of Car

Vehicle Use

#Claims(Past 5 Years)

Max Education Level

#Children @Home

Home Value

Income

Job Category

1

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

KIDSDRIV

MSTATUS

categorical

categorical

MVR_PTS

continuous

OLDCLAIM

PARENT1

RED_CAR

continuous

categorical

categorical

REVOKED

categorical

SEX

TIF

TRAVTIME

URBANICITY

YOJ

categorical

continuous

continuous

categorical

continuous

#Driving Children

Marital Status

Motor Vehicle Record

Points

Total Claims(Past 5 Years)

Single Parent

A Red Car

License Revoked (Past 7

Years)

Gender

Time in Force

Distance to Work

Home/Work Area

Years on Job

After looking at the above table, among all predictors, we have notice that AGE, BLUEBOOK,

CAR_AGE , CLM_FREQ, HOMEKIDS, HOME_VAL, INCOME, MVR_PTS, OLDCLAIM, TIF and

TRAVTIME variable are continuous variables. Rest are categorical variables.

We will examine each variable by creating a histogram as well as tests for normality. I have put

some of the histograms of the variables frequency distribution. I did not put all of histograms

for brevity.

Among the predictor variables, the AGE variable is kind of bell shaped and looks normally

distributed.

2

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

3

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

Some variables are kind of continuous but appear to be like categorical variables, such as

CLM_FREQ, HOMEKIDS and MVR_PTS. When looking at these it seems reasonable to pull them

to the categorical side of the analysis by putting into multiple levels, but in my opinion it will be

better, if we continue to use them as continuous variables.

CAR_AGE, HOME_VAL, and OLDCLAIM have very strong presence in the left side of the

histogram.

For example, in case HOME_VAL, the 30% of customer dies not have home and they are renting

the house or they are homeless. It could be due to the way survey might be done. The

skewness could have been result of that.

Also we could have change the HOME_VAL as categorical variable i.e. as OWN_HOME and value

as yes or no. But seeing the continuity at the right side of the histogram of the variable, it will

be good idea to keep it as continuous variable.

Correlation with response variable

Table 2: Correlation

Variable

AGE

Correlation

-0.10322

CAR_AGE

-0.10065

BLUEBOOK

CLM_FREQ

HOMEKIDS

HOME_VAL

INCOME

MVR_PTS

OLDCLAIM

TIF

TRAVTIME

-0.10338

0.2162

0.11562

-0.18374

-0.14201

0.2192

0.13808

0.08237

0.04815

The above table shows the variables correlation with TARGET_FLAG.

From this we see that there are some variables that we would automatically take forward into

model construction, notably anything that exceeds the 0.10 threshold. We are concerned to see

that travel time has such a very low correlation. We'd expect that the concept of exposure to

operation of their vehicle would significantly increase the likelihood of a crash. We will drop

these two variables from the EDA and model building due to weak correlation. We choose to

abandon the use of TIF and YOJ.

4

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

Categorical Variables

We have many categorical variables in the Insurance dataset. Some of them has just two

categories such Yes/No or M/F and some of them have more than two categories. For example,

CAR_TYPE and JOB has many levels and SEX and RED_CAR has just two.

I have kept all the categorical variables in the model. We will now code each categorical

variable as a family of dummy variables. I have given below one of the categorical variable as

dummy variable coding.

By default, the dummy variables for JOB are 0. There are some missing values for JOB and those

will have assigned as 0 by default.

JOB_C = 0;

JOB_HM = 0;

JOB_L = 0;

JOB_M = 0;

JOB_P = 0;

JOB_S = 0;

JOB_BC = 0;

if JOB in ('Doctor' 'Clerical' 'Home Maker' 'Lawyer' 'Manager' 'Professional' 'Student' 'z_Blue

Collar') then do;

JOB_D = (JOB eq 'Doctor');

JOB_C = (JOB eq 'Clerical');

JOB_HM = (JOB eq 'Home Maker');

JOB_L = (JOB eq 'Lawyer');

JOB_M = (JOB eq 'Manager');

JOB_P = (JOB eq 'Professional');

JOB_S = (JOB eq 'Student');

JOB_BC = (JOB eq 'z_Blue Collar');

end;

The rest of the categorical variables are coded like above.

3. DATA PREPARATION

There are some missing values which have been imputed. At the same time, we need to create

flags to as indicator variable as we have imputed the data. The below tables shows the missing

values of some of the variables.

Missing values and Imputation

Table 3: Missing values and mean (All data)

5

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

Variable

KIDSDRIV

AGE

HOMEKIDS

YOJ

INCOME

HOME_VAL

TRAVTIME

BLUEBOOK

TIF

OLDCLAIM

CLM_FREQ

MVR_PTS

CAR_AGE

Count

8161

8155

8161

7707

7716

7697

8161

8161

8161

8161

8161

8161

7651

Missing

0

6

0

454

445

464

0

0

0

0

0

0

510

Mean

0.1710575

44.7903127

0.7212351

10.4992864

61898.1

154867.29

33.4887972

15709.9

5.351305

4037.08

0.7985541

1.695503

8.3283231

Table 4: Missing values and mean (Categorized on TRAGET_FLAG)

TARGET_FLAG

Variable

N

N Miss

Mean

1

1

1

1

1

1

1

1

1

1

1

1

1

0

0

0

0

0

0

0

0

0

0

0

0

0

KIDSDRIV

6008

0

0.1393142

AGE

6007

1

45.3227901

HOMEKIDS

6008

0

0.6439747

YOJ

5677

331

10.6718337

INCOME

5673

335

65951.97

HOME_VAL

5665

343

169075.41

TRAVTIME

6008

0

33.0303446

BLUEBOOK

6008

0

16230.95

TIF

6008

0

5.555759

OLDCLAIM

6008

0

3311.59

CLM_FREQ

6008

0

0.6486352

MVR_PTS

6008

0

1.4137816

CAR_AGE

5640

368

8.670922

KIDSDRIV

2153

0

0.2596377

AGE

2148

5

43.3012104

HOMEKIDS

2153

0

0.9368323

YOJ

2030

123

10.0167488

INCOME

2043

110

50641.3

HOME_VAL

2032

121

115256.55

TRAVTIME

2153

0

34.7681203

BLUEBOOK

2153

0

14255.9

TIF

2153

0

4.780771

OLDCLAIM

2153

0

6061.55

CLM_FREQ

2153

0

1.2169066

MVR_PTS

2153

0

2.4816535

CAR_AGE

2011

142

7.3674789

6

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

If we look at the above table, we notice there are several variables, which have missing values.

We notice that of the missing records that none are exceedingly large proportions of the

observed data. There are at the most 5% missing values for some of the variables. We will use

mean to impute the missing value. We are aware that simply imputing with mean can bias the

data but since the number of missing value are low, mean imputation will be fine. We feel that

it is still better than removal of the observations from the data set.

These are the list of Imputed variables and corresponding flag variables listed below.

IMP_AGE and IND_IMP_AGE ;

IMP_CAR_AGE and IND_IMP_CAR_AGE;

IMP_HOME_VAL and IND_IMP_HOME_VAL

IMP_INCOME and IND_IMP_INCOME

The flag variable prefixed with IND_ above should be included as an input variable into the

predictive model because the fact that a variable is missing is often times predictive.

4. BUILD MODELS

I have created the three models to compare and get the best model.

Model 1

I have created this model by selecting variables using Weka Explorer tool. I have used the

CfsSubsetEval Evaluator and GreedyStepwise search algorithm for variable selection.

The tool has selected INCOME, HOME_VAL, JOB and CLM_FREQ as the predictor variables.

I have used only these variables in my logistic model. I made sure that the JOB variables has

dummy variables created and used in the model. Please look at the below

Screenshot which depicts the variable selection from Weka tool.

7

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

Based on the above variable selection, I have build the model and the coefficients I got is

depicted below.

The model with the above coefficients and intercept is constructed below.

8

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

Log(p/1-p) = -0.8346 -3.02E-06 *IMP_INCOME -2.83E-06 IMP_HOME_VAL +CLM_FREQ 0.3795

-0.2185*JOB_L -0.6173 *JOB_M +JOB_BC *0.4622

The interpretation of the above model, all variables being held, is that: For a one unit increase

in CLM_FREQ there will be a 73% increase in the likelihood of a crash. For a one unit decrease in

the amount of IMP_INCOME there is a 50% decrease in the likelihood of a crash.

The above % value is found using p = exp(x)/(1+exp(x)).

The coefficient relation seems intuitive and reasonable. But the ROC value is bit lower which is

0.6967. At this moment we should look at the other model for better Roc value.

9

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

If we look at the KS output below, The D statistic is the maximum difference between the

cumulative distributions between events (Y=1) and non-events (Y=0). In this model,

D=0.293500.

Higher the value of D, the better the model distinguishes between events and non-events.

10

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

Model 2

I have created this model by selecting variables which has higher correlations. Please look at

the Table 2. I have included all the categorical variables.

The tool has selected BLUEBOOK ,CLM_FREQ ,HOMEKIDS ,IMP_INCOME ,MVR_PTS ,OLDCLAIM

,IMP_HOME_VAL and some categorical variables as the predictor variables. These continuous

variables from table 2 which has higher correlations are used here.

Based on the above variable selection, I have build the model and the coefficients I got is

depicted below.

11

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

The model with the above coefficients and intercept is constructed below.

Log(p/1-p) = 0.0362 -0.00002 *BLUEBOOK +0.2059 *CLM_FREQ +0.1271 *HOMEKIDS -3.94E06 *IMP_INCOME +0.1205 *MVR_PTS -0.00001 *OLDCLAIM -1.28E-06 *IMP_HOME_VAL 0.6266 *TYPE_MINI +0.3081 *TYPE_SPOR -0.7158 *USE_P +0.4465 *EDU_HS +0.449

*EDU_ZHS -0.7357 *JOB_M -0.4652 *MARRIED_Y +0.3749 *PARTENT_S +0.9158 *REV_L 2.1897 *R_URBANICITY

The interpretation of the above model, all variables being held, is that: For a one unit increase

in PARTENT_S there will be a 59% increase in the likelihood of a crash. That means being single

parent is risky and more likely to crash. For a one unit increase in the amount of HOME_KIDS

there is a 53% increase in the likelihood of a crash. These sounds intuitive and reasonable.

The above % value is found using p = exp(x)/(1+exp(x)).

12

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

The ROC value is way higher than the Model 1 which is 0.8011.

If we look at the KS output below ,In this model, D=0.45688 which higher than the model 1.

Higher the value of D, the better the model distinguishes between events and non-events.

13

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

Model 3

I have created this final model by including all the variables in the data and I wanted the Logistic

Regression Forward selection method choose the variables. Surprisingly the variable TRAVTIME

makes into the selected variable list. If you look at tat the Table 2, the TRAVTIME has very low

correlation but the forward selection method has included in the prediction. I have included all

the categorical variables.

The tool has selected BLUEBOOK ,CLM_FREQ ,HOMEKIDS ,IMP_INCOME ,MVR_PTS ,OLDCLAIM

,IMP_HOME_VAL, TRAVTIME and some categorical variables as the predictor variables.

Based on the above variable selection, I have build the model and the coefficients I got is

depicted below.

14

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

The model with the above coefficients and intercept is constructed below.

Log(p/1-p) = -0.4361 -0.00002 *BLUEBOOK +0.2005 *CLM_FREQ +0.1323 *HOMEKIDS -4.02E06 *IMP_INCOME +0.1191 *MVR_PTS -0.00001 *OLDCLAIM +0.0146 *TRAVTIME -1.26E-06

15

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

*IMP_HOME_VAL -0.6355 *TYPE_MINI +0.3092 *TYPE_SPOR -0.7159 *USE_P +0.4526

*EDU_HS +0.4597 *EDU_ZHS -0.7076 *JOB_M -0.4778 *MARRIED_Y +0.3944 *PARTENT_S

+0.9195 *REV_L -2.3095 *R_URBANICITY

The interpretation of the above model, all variables being held, is that: For a one unit increase

in TRAVTIME there will be a 50% increase in the likelihood of a crash. That means being single

parent is risky and more likely to crash. For a one unit decrease in the amount of BLUEBOOK

value, there is a 50% decrease in the likelihood of a crash. These sounds intuitive and

reasonable.

The above % value is found using p = exp(x)/(1+exp(x)).

The ROC value is the highest in this Model which is 0.8052.

16

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

If we look at the KS output below , in this model, D=0.459944 which is the highest among all

three models.

17

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

5. SELECT MODELS

Model 1

AIC

8677.301

ROC

0.6967

KS (Decile value)

0.293500

Model 2

Model 3

7512.858

7455.178

0.8011

0.8052

0.45688

0.459944

The above table shows the AIC, ROC and KS measures of the all the three models. If we

compare AIC and ROC measure, the Model 3 seems the best model. AIC is the lowest and Roc is

the highest.

KS also captures the discriminatory power of the model in separating “Good” from “Bad”. It is

the highest separation between the Cumulative Good Rate and Cumulative Bad Rate. Higher

the KS, better is the model (higher separation between good and bad). KS values can range

between 0 -100%, KS values greater than 20% are considered acceptable for a model.

18

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

The Model 2 and Model 3 both has KS (D value) value more than 45% and Model 3 KS value is

the highest so we will go for Model 3.

6. Conclusion

We have built the three Logistic Regression Model to best predict who will crash the car based

on the predictor variables. We started with first Model with the help of external tool called

Weka. The two other models were built using seeing correlation and also using SAS forward

selection method. We found that Model 3 is the best model. We have used AIC, ROC and KS

statistic to find the best model. Although the Model 3 has one extra variable (TRAVTIME) than

Model 2. But seeing the result if AIC, ROC and KS, we will choose Model 3.

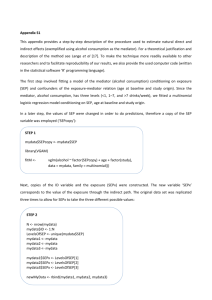

BINGO BONUS:

PROC GENMOD

proc genmod data=imp_temp descending ;

model TARGET_FLAG = IMP_AGE BLUEBOOK IMP_CAR_AGE CLM_FREQ HOMEKIDS

IMP_INCOME MVR_PTS OLDCLAIM TRAVTIME

IMP_HOME_VAL TYPE_MINI TYPE_PICK TYPE_SPOR TYPE_VAN TYPE_SUV USE_P

EDU_HS EDU_BA EDU_MA EDU_ZHS

JOB_C JOB_HM JOB_L JOB_M JOB_P JOB_S JOB_BC MARRIED_Y PARTENT_S

RED_C REV_L SEX_F R_URBANICITY /dist=binomial link=logit ;

run;

I have used the Proc Genmod and when I compared with Proc Logistic, I found that the result

AIC value is poorer than the Model 3 above. The AIC value for Genmod is 7471.2101.

Also the output of Proc Genmod is not as intuitive than Proc Logistics. By default , the ROC

value is not calculated. The ROC value helps compare the model. It also included long list of

variables.

19

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

Weka

I have downloaded and used Weka Machine Learning tool to select the variables for Model 1.

The screenshot has been attached in the Model 1 section.

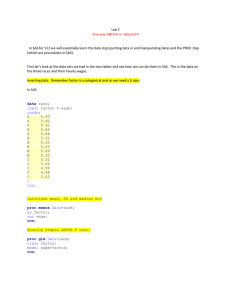

R

I have written the R program to create Logistic Regression for TARGET_FLAG and Linear

Regression for TARGET_AMT.

I have used the Amelia package to do the imputation. Amelia uses the Multiple Imputation

technique call EM. Surprisingly, the ROC from R using Amelia is 0.8148. AIC value from R code

using Amelia is 7354 which is the lowest if we compare with Model 1, 2 and 3.

I have used the output of the R and submitted on the Kaggle and got best score.

20

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

21

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

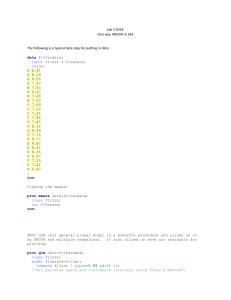

R Code

require(moments)

require(ggplot2)

require(gridExtra)

require(Amelia)

###############################

mydata <read.csv(file.path("/Users/ajha/Documents/411","logit_insurance.csv"),header=T,na.strings=c("") )

mydata$BLUEBOOK <- gsub(",","",mydata$BLUEBOOK)

mydata$BLUEBOOK <- gsub("\\$","",mydata$BLUEBOOK)

mydata$BLUEBOOK <- as.double(mydata$BLUEBOOK)

mydata$INCOME <- gsub(",","",mydata$INCOME)

mydata$INCOME <- gsub("\\$","",mydata$INCOME)

mydata$INCOME <- as.double(mydata$INCOME)

mydata$HOME_VAL <- gsub(",","",mydata$HOME_VAL)

mydata$HOME_VAL <- gsub("\\$","",mydata$HOME_VAL)

mydata$HOME_VAL <- as.double(mydata$HOME_VAL)

mydata$OLDCLAIM <- gsub(",","",mydata$OLDCLAIM)

mydata$OLDCLAIM <- gsub("\\$","",mydata$OLDCLAIM)

mydata$OLDCLAIM <- as.double(mydata$OLDCLAIM)

str(mydata)

mydata$PARENT1 <- as.factor(as.integer(mydata$PARENT1))

mydata$MSTATUS <- as.factor(as.integer(mydata$MSTATUS))

mydata$SEX <- as.factor(as.integer(mydata$SEX))

mydata$EDUCATION <- as.factor(as.integer(mydata$EDUCATION))

mydata$JOB <- as.factor(as.integer(mydata$JOB))

mydata$CAR_USE <- as.factor(as.integer(mydata$CAR_USE))

mydata$CAR_TYPE <- as.factor(as.integer(mydata$CAR_TYPE))

mydata$RED_CAR <- as.factor(as.integer(mydata$RED_CAR))

mydata$REVOKED <- as.factor(as.integer(mydata$REVOKED))

mydata$URBANICITY <- as.factor(as.integer(mydata$URBANICITY))

str(mydata)

22

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

###############################

#Using Amelia to impute missng values using multiple imputations

amelia_fit <- amelia(mydata, m=2, parallel = "multicore", noms = c(

'PARENT1','MSTATUS','SEX','EDUCATION','JOB','CAR_USE','CAR_TYPE','RED_CAR','REVOKED','URBANICIT

Y'))

mydata <- amelia_fit$imputations[[1]]

mydata[1,]

#Create model on trainig set TARGET_FLAG

mod_v1<-glm(TARGET_FLAG~KIDSDRIV + AGE + HOMEKIDS + YOJ + INCOME + HOME_VAL +PARENT1 +

MSTATUS + SEX + EDUCATION+ JOB

+TRAVTIME+ CAR_USE

+BLUEBOOK

+TIF

+CAR_TYPE+ RED_CAR+

OLDCLAIM

+CLM_FREQ+ REVOKED

+MVR_PTS+

CAR_AGE

+URBANICITY, data=mydata,family=binomial(),na.action=na.omit)

mod_v1

predpr <- predict(mod_v1,type=c("response"))

library(pROC)

roccurve <- roc(mydata$TARGET_FLAG ~ predpr)

plot(roccurve)

#Create model on trainig set TARGET_AMT

mydata <- mydata[mydata$TARGET_AMT > 0,]

mod_vlr<-lm(TARGET_AMT~KIDSDRIV + AGE + HOMEKIDS + YOJ + INCOME + HOME_VAL +PARENT1 +

MSTATUS + SEX + EDUCATION+ JOB

+TRAVTIME+ CAR_USE

+BLUEBOOK

+TIF

+CAR_TYPE+ RED_CAR+

OLDCLAIM

+CLM_FREQ+ REVOKED

+MVR_PTS+

CAR_AGE

+URBANICITY, data=mydata)

step(mod_vlr, direction="forward")

############################################

# Load test set data

mydata_test <read.csv(file.path("/Users/ajha/Documents/411","logit_insurance_test.csv"),header=T,na.strings=c("") )

mydata_test$BLUEBOOK <- gsub(",","",mydata_test$BLUEBOOK)

mydata_test$BLUEBOOK <- gsub("\\$","",mydata_test$BLUEBOOK)

mydata_test$BLUEBOOK <- as.double(mydata_test$BLUEBOOK)

mydata_test$INCOME <- gsub(",","",mydata_test$INCOME)

mydata_test$INCOME <- gsub("\\$","",mydata_test$INCOME)

mydata_test$INCOME <- as.double(mydata_test$INCOME)

mydata_test$HOME_VAL <- gsub(",","",mydata_test$HOME_VAL)

mydata_test$HOME_VAL <- gsub("\\$","",mydata_test$HOME_VAL)

23

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

mydata_test$HOME_VAL <- as.double(mydata_test$HOME_VAL)

mydata_test$OLDCLAIM <- gsub(",","",mydata_test$OLDCLAIM)

mydata_test$OLDCLAIM <- gsub("\\$","",mydata_test$OLDCLAIM)

mydata_test$OLDCLAIM <- as.double(mydata_test$OLDCLAIM)

str(mydata_test)

mydata_test$PARENT1 <- as.factor(as.integer(mydata_test$PARENT1))

mydata_test$MSTATUS <- as.factor(as.integer(mydata_test$MSTATUS))

mydata_test$SEX <- as.factor(as.integer(mydata_test$SEX))

mydata_test$EDUCATION <- as.factor(as.integer(mydata_test$EDUCATION))

mydata_test$JOB <- as.factor(as.integer(mydata_test$JOB))

mydata_test$CAR_USE <- as.factor(as.integer(mydata_test$CAR_USE))

mydata_test$CAR_TYPE <- as.factor(as.integer(mydata_test$CAR_TYPE))

mydata_test$RED_CAR <- as.factor(as.integer(mydata_test$RED_CAR))

mydata_test$REVOKED <- as.factor(as.integer(mydata_test$REVOKED))

mydata_test$URBANICITY <- as.factor(as.integer(mydata_test$URBANICITY))

str(mydata_test)

#Using Amelia to impute missng values using multiple imputations

mydata_test$TARGET_FLAG <- mydata_test$TARGET_AMT <- NULL

amelia_fit <- amelia(mydata_test, m=2, parallel = "multicore", noms = c(

'PARENT1','MSTATUS','SEX','EDUCATION','JOB','CAR_USE','CAR_TYPE','RED_CAR','REVOKED','URBANICIT

Y'))

mydata_test <- amelia_fit$imputations[[1]]

mydata_test[1,]

################################################

#Predict for the Test set

mydata_test$TARGET_FLAG <- predict.glm(mod_v1, newdata=mydata_test, type="response")

sub <- subset(mydata_test, select=c("INDEX","TARGET_FLAG"))

names(sub) <- c('INDEX','P_TARGET_FLAG')

write.table(sub,"/Users/ajha/Documents/411/out_glm.csv",sep=',',col.names=T,row.names=F)

mydata_test$TARGET_AMT <- predict.lm(mod_vlr, newdata=mydata_test, type="response")

sub <- subset(mydata_test, select=c("INDEX","TARGET_FLAG","TARGET_AMT"))

names(sub) <- c('INDEX','P_TARGET_FLAG','P_TARGET_AMT')

sub$AMT <- sub$P_TARGET_FLAG*sub$P_TARGET_AMT;

write.table(sub,"/Users/ajha/Documents/411/out_glm_amt.csv",sep=',',col.names=T,row.names=F)

24

Ajay Nath Jha

PREDICT_411-DL_SEC60

Kaggle user: ajayjha2017

SAS Macro

I have used SAS macro in the score part of the code. Please have a look at the score code. The snippet

of the SAS macro is below:

%macro SCORE_FLAG( INFILE, OUTFILE );

data &OUTFILE.;

set &INFILE.;

ODDS_Y = -0.4361 -0.00002*BLUEBOOK + 0.2005*CLM_FREQ + 0.1323*HOMEKIDS 0.00000402*IMP_INCOME +

0.1191*MVR_PTS -0.00001*OLDCLAIM + 0.0146*TRAVTIME 0.00000126*IMP_HOME_VAL 0.6355*TYPE_MINI + 0.3092*TYPE_SPOR -0.7159*USE_P +

0.4526*EDU_HS + 0.4597*EDU_ZHS 0.7076*JOB_M - 0.4778*MARRIED_Y + 0.3944*PARTENT_S +

0.9195*REV_L -2.3095*R_URBANICITY;

P_TARGET_FLAG = exp(ODDS_Y)/(1+exp(ODDS_Y));

P_TARGET_AMT1 = 4131.65436 +

0.11017*BLUEBOOK;

keep index P_TARGET_FLAG P_TARGET_AMT1;

run;

%mend;

%score_flag( temp , temp_out );

proc print data=temp_out;

var INDEX P_TARGET_FLAG;

run;

25