Fundamentals of Electrical Engineering I

© Don Johnson

This work is licensed under a Creative Commons-ShareAlike 4.0 International License

Original source: The Orange Grove

http://florida.theorangegrove.org/og/items/c7d013ec-2b4d-6971-65f9-b1a6af2d9092/

Contents

Chapter 1 Introduction ..................................................................................................1

1.1 Themes ................................................................................................................................1

1.2 Signals Represent Information ........................................................................................2

1.2.1 Analog Signals..........................................................................................................3

1.2.2 Digital Signals...........................................................................................................4

1.3 Structure of Communication Systems .............................................................................5

1.4 The Fundamental Signal ...................................................................................................7

1.4.1 The Sinusoid ............................................................................................................7

Exercise 1.4.1 ............................................................................................................8

Exercise 1.4.2 ............................................................................................................8

1.4.2 Communicating Information with Signals ............................................................8

1.5 Introduction Problems.......................................................................................................9

1.6 Solutions to Exercises in Chapter 1 ................................................................................10

Chapter 2 Signals and Systems ...................................................................................11

2.1 Complex Numbers ...........................................................................................................11

2.1.1 Definitions ..............................................................................................................11

Exercise 2.1.1 ..........................................................................................................13

Exercise 2.1.2 ..........................................................................................................13

2.1.2 Euler's Formula ......................................................................................................13

2.1.3 Calculating with Complex Numbers....................................................................14

Exercise 2.1.3 ..........................................................................................................15

Example 2.1 ............................................................................................................16

2.2 Elemental Signals..............................................................................................................16

2.2.1 Sinusoids ................................................................................................................16

2.2.2 Complex Exponentials ..........................................................................................17

2.2.3 Real Exponentials ..................................................................................................18

2.2.4 Unit Step .................................................................................................................18

2.2.5 Pulse........................................................................................................................20

2.2.6 Square Wave ..........................................................................................................20

2.2.7 Signal Decomposition ...........................................................................................21

Example 2.2 ............................................................................................................21

Exercise 2.3.1 ..........................................................................................................21

2.3 Discrete-Time Signals ......................................................................................................21

2.3.1 Real- and Complex-valued Signals .......................................................................22

2.3.2 Complex Exponentials ..........................................................................................22

2.3.3 Sinusoids ................................................................................................................23

2.3.4 Unit Sample............................................................................................................23

2.3.5 Symbolic-valued Signals .......................................................................................24

2.3.6 Introduction to Systems .......................................................................................24

2.3.6.1 Cascade Interconnection...........................................................................25

2.3.7 Parallel Interconnection .......................................................................................25

2.3.8 Feedback Interconnection....................................................................................26

2.4 Simple Systems ................................................................................................................27

2.4.1 Sources ...................................................................................................................27

2.4.2 Amplifiers ...............................................................................................................27

2.4.3 Delay .......................................................................................................................28

2.4.4 Time Reversal.........................................................................................................28

Exercise 2.6.1 ..........................................................................................................28

2.4.5 Derivative Systems and Integrators ...................................................................29

2.4.6 Linear Systems.......................................................................................................29

2.4.7 Time-Invariant Systems ........................................................................................30

2.5 Signals and Systems Problems .......................................................................................32

Problem 2.1: Complex Number Arithmetic ................................................................32

Problem 2.2: Discovering Roots ...................................................................................32

Problem 2.3: Cool Exponentials ...................................................................................32

Problem 2.4: Complex-valued Signals .........................................................................33

Problem 2.5: ...................................................................................................................34

Problem 2.6: ...................................................................................................................35

Problem 2.7: Linear, Time-Invariant Systems .............................................................36

Problem 2.8: Linear Systems ........................................................................................37

Problem 2.9: Communication Channel .......................................................................37

Problem 2.10: Analog Computers ................................................................................38

2.6 Solutions to Exercises in Chapter 2 ................................................................................38

Chapter 3 Analog Signal Processing ...........................................................................40

3.1 Voltage, Current, and Generic Circuit Elements ...........................................................40

Exercise 3.1.1 ..................................................................................................................41

3.2 Ideal Circuit Elements .....................................................................................................41

3.2.1 Resistor ...................................................................................................................42

3.2.2 Capacitor ................................................................................................................42

3.2.3 Inductor ..................................................................................................................43

3.2.4 Sources ...................................................................................................................44

3.3 Ideal and Real-World Circuit Elements ..........................................................................44

3.4 Electric Circuits and Interconnection Laws....................................................................45

3.4.1 Kirchhoff's Current Law .........................................................................................46

Exercise 3.4.1 ..........................................................................................................47

3.4.2 Kirchhoff's Voltage Law (KVL) ................................................................................47

Exercise 3.4.2 ..........................................................................................................48

3.5 Power Dissipation in Resistor Circuits ...........................................................................48

Exercise 3.5.1 ..................................................................................................................50

Exercise 3.5.2 ..................................................................................................................50

3.6 Series and Parallel Circuits ..............................................................................................50

Exercise 3.6.1 ..................................................................................................................52

Exercise 3.6.2 ..................................................................................................................54

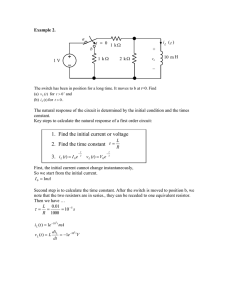

Example 3.1 ....................................................................................................................55

Exercise 3.6.3 ..................................................................................................................56

3.7 Equivalent Circuits: Resistors and Sources....................................................................57

Exercise 3.7.1 ..................................................................................................................59

Example 3.2 ....................................................................................................................59

Exercise 3.7.2 ..................................................................................................................61

3.8 Circuits with Capacitors and Inductors ..........................................................................62

3.9 The Impedance Concept..................................................................................................63

3.10 Time and Frequency Domains ......................................................................................65

Example 3.3 ....................................................................................................................67

Exercise 3.10.1 ................................................................................................................68

3.11 Power in the Frequency Domain ..................................................................................68

Exercise 3.11.1 ................................................................................................................69

Exercise 3.11.2 ................................................................................................................70

3.12 Equivalent Circuits: Impedances and Sources ............................................................70

Example 3.4 ....................................................................................................................72

3.13 Transfer Functions .........................................................................................................73

Exercise 3.13.1 ................................................................................................................76

3.14 Designing Transfer Functions .......................................................................................76

Example 3.5 ....................................................................................................................77

3.15 Formal Circuit Methods: Node Method .......................................................................79

Example 3.6: Node Method Example ..........................................................................82

Exercise 3.15.1 ................................................................................................................83

Exercise 3.15.2 ................................................................................................................84

3.16 Power Conservation in Circuits.....................................................................................84

3.17 Electronics ......................................................................................................................86

3.18 Dependent Sources........................................................................................................86

3.19 Operational Amplifiers...................................................................................................89

3.20 Inverting Amplifier..........................................................................................................90

3.21 Active Filters ....................................................................................................................91

Example 3.7 ....................................................................................................................92

Exercise 3.19.1 ................................................................................................................93

3.22 Intuitive Way of Solving Op-Amp Circuits ....................................................................93

Example 3.8 ....................................................................................................................95

3.23 The Diode ........................................................................................................................96

3.24 Analog Signal Processing Problems .............................................................................98

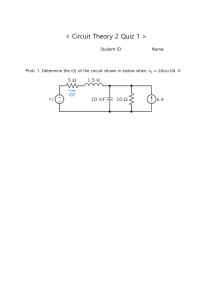

Problem 3.1: Simple Circuit Analysis ...........................................................................98

Problem 3.2: Solving Simple Circuits ...........................................................................99

Problem 3.3: Equivalent Resistance ............................................................................99

Problem 3.4: Superposition Principle ....................................................................... 100

Problem 3.5: Current and Voltage Divider ............................................................... 101

Problem 3.6: Thevenin and Mayer-Norton Equivalents ......................................... 101

Problem 3.7: Detective Work ..................................................................................... 102

Problem 3.8: Bridge Circuits ...................................................................................... 102

Problem 3.9: Cartesian to Polar Conversion ........................................................... 103

Problem 3.10: The Complex Plane ............................................................................ 103

Problem 3.11: Cool Curves ........................................................................................ 103

Problem 3.12: Trigonometric Identities and Complex Exponentials .................... 104

Problem 3.13: Transfer Functions ......................................................... 104

Problem 3.14: Using Impedances ............................................................................. 105

Problem 3.15: Measurement Chaos ......................................................................... 105

Problem 3.16: Transfer Functions ............................................................................ 106

Problem 3.17: A Simple Circuit .................................................................................. 106

Problem 3.18: Circuit Design ..................................................................................... 107

Problem 3.19: Equivalent Circuits and Power ......................................................... 107

Problem 3.20: Power Transmission .......................................................................... 108

Problem 3.21: Optimal Power Transmission ........................................................... 109

Problem 3.22: Big is Beautiful ................................................................................... 110

Problem 3.23: Sharing a Channel ............................................................................. 110

Problem 3.24: Circuit Detective Work ...................................................................... 111

Problem 3.25: Mystery Circuit ................................................................................... 111

Problem 3.26: More Circuit Detective Work ............................................................ 112

Problem 3.27: Linear, Time-Invariant Systems ....................................................... 113

Problem 3.28: Long and Sleepless Nights ............................................................... 114

Problem 3.29: A Testing Circuit ................................................................................. 114

Problem 3.30: Black-Box Circuit ................................................................................ 115

Problem 3.31: Solving a Mystery Circuit .................................................................. 115

Problem 3.32: Find the Load Impedance ................................................................. 116

Problem 3.33: Analog "Hum" Rejection ................................................................... 116

Problem 3.34: An Interesting Circuit ......................................................................... 117

Problem 3.35: A Simple Circuit .................................................................................. 117

Problem 3.36: An Interesting and Useful Circuit ..................................................... 118

Problem 3.37: A Circuit Problem ............................................................................... 119

Problem 3.38: Analog Computers ............................................................................. 119

Problem 3.39: Transfer Functions and Circuits ....................................................... 120

Problem 3.40: Fun in the Lab .................................................................................... 120

Problem 3.41: Dependent Sources ........................................................................... 120

Problem 3.42: Operational Amplifiers ....................................................................... 121

Problem 3.43: Op-Amp Circuit .................................................................................. 122

Problem 3.44: Why Op-Amps are Useful ................................................................. 123

Problem 3.45: Operational Amplifiers ...................................................................... 124

Problem 3.46: Designing a Bandpass Filter ............................................................. 124

Problem 3.47: Pre-emphasis or De-emphasis? ....................................................... 125

Problem 3.48: Active Filter ......................................................................................... 126

Problem 3.49: This is a filter? ..................................................................................... 126

Problem 3.50: Optical Receivers ............................................................................... 127

Problem 3.51: Reverse Engineering .......................................................................... 128

3.25 Solutions to Exercises in Chapter 3........................................................................... 128

Chapter 4 Frequency Domain ...................................................................................132

4.1 Introduction to the Frequency Domain ..................................................................... 132

4.2 Complex Fourier Series................................................................................................. 132

Exercise 4.2.1 ............................................................................................................... 133

Example 4.1 ................................................................................................................. 134

Exercise 4.2.2 ............................................................................................................... 137

4.3 Classic Fourier Series .................................................................................................... 138

Exercise 4.3.1 ............................................................................................................... 138

Exercise 4.3.2 ............................................................................................................... 140

Exercise 4.3.3 ............................................................................................................... 140

Example 4.2 ................................................................................................................. 141

4.4 A Signal's Spectrum ....................................................................................................... 142

Exercise 4.4.1 ............................................................................................................... 143

Exercise 4.4.2 ............................................................................................................... 144

4.5 Fourier Series Approximation of Signals .................................................................... 144

Exercise 4.5.1 ............................................................................................................... 148

4.6 Encoding Information in the Frequency Domain ...................................................... 149

Exercise 4.6.1 ............................................................................................................... 150

Exercise 4.6.2 ............................................................................................................... 151

4.7 Filtering Periodic Signals............................................................................................... 152

Example 4.3 ................................................................................................................. 153

Exercise 4.7.1 ............................................................................................................... 154

4.8 Derivation of the Fourier Transform ........................................................................... 154

Example 4.4 ................................................................................................................. 155

Exercise 4.8.1 ............................................................................................................... 156

Exercise 4.8.2 ............................................................................................................... 157

Example 4.5 ................................................................................................................. 159

Exercise 4.8.3 ............................................................................................................... 161

Exercise 4.8.4 ............................................................................................................... 161

4.9 Linear Time-Invariant Systems..................................................................................... 161

Example 4.6 ................................................................................................................. 162

Exercise 4.9.1 ............................................................................................................... 163

4.9.1 Transfer Functions ............................................................................................. 164

4.9.2 Commutative Transfer Functions .................................................................... 164

4.10 Modeling the Speech Signal ....................................................................................... 165

Exercise 4.10.1 ............................................................................................................. 167

Exercise 4.10.2 ............................................................................................................. 169

Exercise 4.10.3 ............................................................................................................. 170

4.11 Frequency Domain Problems .................................................................................... 173

Problem 4.1: Simple Fourier Series .......................................................................... 173

Problem 4.2: Fourier Series ....................................................................................... 174

Problem 4.3: Phase Distortion .................................................................................. 175

Problem 4.4: Approximating Periodic Signals ......................................................... 176

Problem 4.5: Long, Hot Days ..................................................................................... 177

Problem 4.6: Fourier Transform Pairs ...................................................................... 178

Problem 4.7: Duality in Fourier Transforms ............................................................ 178

Problem 4.8: Spectra of Pulse Sequences ............................................................... 179

Problem 4.10: Lowpass Filtering a Square Wave .................................................... 180

Problem 4.11: Mathematics with Circuits ................................................................ 180

Problem 4.12: Where is that sound coming from? ................................................. 181

Problem 4.13: Arrangements of Systems ................................................................ 182

Problem 4.14: Filtering ............................................................................................... 182

Problem 4.15: Circuits Filter! ..................................................................................... 183

Problem 4.16: Reverberation .................................................................................... 183

Problem 4.17: Echoes in Telephone Systems .......................................................... 183

Problem 4.18: Effective Drug Delivery ...................................................................... 184

Problem 4.19: Catching Speeders with Radar ......................................................... 184

Problem 4.20: Demodulating an AM Signal ............................................................. 185

Problem 4.21: Unusual Amplitude Modulation ...................................................... 186

Problem 4.22: Sammy Falls Asleep... ........................................................................ 187

Problem 4.23: Jamming .............................................................................................. 188

Problem 4.24: AM Stereo ........................................................................................... 189

Problem 4.25: Novel AM Stereo Method ................................................................. 190

Problem 4.26: A Radical Radio Idea .......................................................................... 191

Problem 4.27: Secret Communication ..................................................................... 191

Problem 4.28: Signal Scrambling .............................................................................. 192

4.12 Solutions to Exercises in Chapter 4........................................................................... 192

Chapter 5 Digital Signal Processing .........................................................................196

5.1 Introduction to Digital Signal Processing.................................................................... 196

5.2 Introduction to Computer Organization .................................................................... 197

5.2.1 Computer Architecture ...................................................................................... 197

5.2.2 Representing Numbers ..................................................................................... 198

Exercise 5.2.1 ....................................................................................................... 199

Exercise 5.2.2 ....................................................................................................... 200

5.2.3 Computer Arithmetic and Logic ....................................................................... 200

Exercise 5.2.3 ....................................................................................................... 201

5.3 The Sampling Theorem ................................................................................................ 201

5.3.1 Analog-to-Digital Conversion ........................................................................... 201

5.4 The Sampling Theorem................................................................................................. 202

Exercise 5.3.1 ............................................................................................................... 204

Exercise 5.3.2 ............................................................................................................... 204

Exercise 5.3.3 ............................................................................................................... 205

5.5 Amplitude Quantization................................................................................................ 205

Exercise 5.4.1 ............................................................................................................... 206

Exercise 5.4.2 ............................................................................................................... 208

Exercise 5.4.3 ............................................................................................................... 208

Exercise 5.4.4 ............................................................................................................... 208

5.6 Discrete-Time Signals and Systems ............................................................................ 208

5.6.1 Real- and Complex-valued Signals .................................................................... 209

5.6.2 Complex Exponentials ....................................................................................... 209

5.6.3 Sinusoids ............................................................................................................ 209

5.6.4 Unit Sample......................................................................................................... 210

5.6.5 Unit Step ............................................................................................................. 210

5.6.6 Symbolic Signals ................................................................................................ 211

5.6.7 Discrete-Time Systems ..................................................................................... 211

5.7 Discrete-Time Fourier Transform (DTFT) .................................................................... 211

5.8 Discrete Fourier Transforms (DFT) .............................................................................. 217

5.9 DFT: Computational Complexity .................................................................................. 220

5.10 Fast Fourier Transform (FFT)...................................................................................... 221

5.11 Spectrograms............................................................................................................... 224

5.12 Discrete-Time Systems ............................................................................................... 228

5.13 Discrete-Time Systems in the Time-Domain ............................................................ 228

5.14 Discrete-Time Systems in the Frequency Domain .................................................. 233

5.14.1 Filtering in the Frequency Domain................................................................. 235

5.15 Efficiency of Frequency-Domain Filtering................................................................. 239

5.16 Discrete-Time Filtering of Analog Signals ................................................................. 242

5.17 Digital Signal Processing Problems ........................................................................... 244

5.18 Solutions to Exercises in Chapter 5........................................................................... 256

Chapter 6 Information Communication ..................................................................261

6.1 Information Communication ....................................................................................... 261

6.2 Types of Communication Channels ............................................................................ 262

6.3 Wireline Channels.......................................................................................................... 263

6.4 Wireless Channels ......................................................................................................... 269

6.5 Line-of-Sight Transmission ........................................................................................... 270

6.6 The Ionosphere and Communications ....................................................................... 272

6.7 Communication with Satellites .................................................................................... 272

6.8 Noise and Interference ................................................................................................. 273

6.9 Channel Models ............................................................................................................. 274

6.10 Baseband Communication......................................................................................... 276

6.11 Modulated Communication ....................................................................................... 277

6.12 Signal-to-Noise Ratio of an Amplitude-Modulated Signal ...................................... 278

6.13 Digital Communication ............................................................................................... 281

6.14 Binary Phase Shift Keying ........................................................................................... 282

6.15 Frequency Shift Keying ............................................................................................... 285

6.16 Digital Communication Receivers.............................................................................. 287

6.17 Digital Communication in the Presence of Noise.................................................... 289

6.18 Digital Communication System Properties .............................................................. 291

6.19 Digital Channels........................................................................................................... 292

6.20 Entropy ......................................................................................................................... 293

6.21 Source Coding Theorem ............................................................................................. 295

6.22 Compression and the Huffman Code ....................................................................... 297

6.23 Subtleties of Coding...................................................................................................... 299

6.24 Channel Coding ........................................................................................................... 302

6.25 Repetition Codes ......................................................................................................... 302

6.26 Block Channel Coding ................................................................................................. 304

6.27 Error-Correcting Codes: Hamming Distance............................................................ 305

6.28 Error-Correcting Codes: Channel Decoding ............................................................. 308

6.29 Error-Correcting Codes: Hamming Codes ................................................................ 310

6.30 Noisy Channel Coding Theorem ............................................................................... 313

6.30.1 Noisy Channel Coding Theorem .................................................................... 313

6.30.2 Converse to the Noisy Channel Coding Theorem ........................................ 314

6.31 Capacity of a Channel ................................................................................................. 315

6.32 Comparison of Analog and Digital Communication................................................ 316

6.33 Communication Networks ......................................................................................... 317

6.34 Message Routing ......................................................................................................... 319

6.35 Network architectures and interconnection ............................................................ 320

6.36 Ethernet ........................................................................................................................ 321

6.37 Communication Protocols.......................................................................................... 324

6.38 Information Communication Problems.................................................................... 325

6.39 Solutions to Exercises in Chapter 6........................................................................... 342

Chapter 7 Appendix ...................................................................................................351

7.1 Decibels .......................................................................................................................... 351

7.2 Permutations and Combinations ............................................................................... 352

7.2.1 Permutations and Combinations ..................................................................... 352

7.3 Frequency Allocations .................................................................................................. 354

7.4 Solutions to Exercises in Chapter 7 ............................................................................ 354

Chapter 1 Introduction

1.1 Themes

Available under Creative Commons-ShareAlike 4.0 International License (http://creativecommons.org/licenses/by-sa/4.0/).

From its beginnings in the late nineteenth century, electrical engineering has

blossomed from focusing on electrical circuits for power, telegraphy and telephony to

focusing on a much broader range of disciplines. However, the underlying themes

remain relevant today: power creation and transmission, and information, have been the

underlying themes of electrical engineering for a century and a half. This course

concentrates on the latter theme: the representation, manipulation, transmission,

and reception of information by electrical means. This course describes what

information is, how engineers quantify information, and how electrical signals

represent information.

Information can take a variety of forms. When you speak to a friend, your thoughts

are translated by your brain into motor commands that cause various vocal tract

components (the jaw, the tongue, the lips) to move in a coordinated fashion.

Information arises in your thoughts and is represented by speech, which must have a

well defined, broadly known structure so that someone else can understand what you

say. Utterances convey information in sound pressure waves, which propagate to your

friend's ear. There, sound energy is converted back to neural activity, and, if what you

say makes sense, she understands what you say. Your words could have been

recorded on a compact disc (CD), mailed to your friend and listened to by her on her

stereo. Information can take the form of a text file you type into your word processor.

You might send the file via e-mail to a friend, who reads it and understands it. From an

information-theoretic viewpoint, all of these scenarios are equivalent, although the

forms of the information representation (sound waves, plastic, and computer files) are

very different.

Engineers, who don't care about information content, categorize information into two

different forms: analog and digital. Analog information is continuous valued;

examples are audio and video. Digital information is discrete valued; examples are

text (like what you are reading now) and DNA sequences.

The conversion of information-bearing signals from one energy form into another is

known as energy conversion or transduction. All conversion systems are inefficient

since some input energy is lost as heat, but this loss does not necessarily mean that

the conveyed information is lost. Conceptually we could use any form of energy to

represent information, but electric signals are uniquely well-suited for information

representation, transmission (signals can be broadcast from antennas or sent through

wires), and manipulation (circuits can be built to reduce noise and computers can be

used to modify information). Thus, we will be concerned with how to

• represent all forms of information with electrical signals,

• encode information as voltages, currents, and electromagnetic waves,

• manipulate information-bearing electric signals with circuits and computers, and

• receive electric signals and convert the information expressed by electric signals

back into a useful form.

Telegraphy represents the earliest electrical information system, and it dates from

1837. At that time, electrical science was largely empirical, and only those with

experience and intuition could develop telegraph systems. Electrical science came of

age when James Clerk Maxwell proclaimed in 1864 a set of equations that he claimed

governed all electrical phenomena. These equations predicted that light was an

electromagnetic wave, and that energy could propagate. Because of the complexity of

Maxwell's presentation, the development of the telephone in 1876 was due largely to

empirical work. Once Heinrich Hertz confirmed Maxwell's prediction of what we now

call radio waves in about 1882, Maxwell's equations were simplified by Oliver

Heaviside and others, and were widely read. This understanding of fundamentals led

to a quick succession of inventions, including the wireless telegraph (1899), the vacuum tube

(1905), and radio broadcasting, that marked the true emergence of the

communications age.

During the first part of the twentieth century, circuit theory and electromagnetic

theory were all an electrical engineer needed to know to be qualified and produce

first-rate designs. Consequently, circuit theory served as the foundation and the

framework of all of electrical engineering education. At mid-century, three

"inventions" changed the ground rules. These were the first public demonstration of

the first electronic computer (1946), the invention of the transistor (1947), and the

publication of A Mathematical Theory of Communication by Claude Shannon (1948).

Although conceived separately, these creations gave birth to the information age, in

which digital and analog communication systems interact and compete for design

preferences. About twenty years later, the laser was invented, which opened even

more design possibilities. Thus, the primary focus shifted from how to build

communication systems (the circuit theory era) to what communications systems

were intended to accomplish. Only once the intended system is specified can an

implementation be selected. Today's electrical engineer must be mindful of the

system's ultimate goal, and understand the tradeoffs between digital and analog

alternatives, and between hardware and software configurations in designing

information systems.

Note: Thanks to the translation efforts of Rice University's Disability Support

Services, this collection is now available in a Braille-printable version as a

downloadable .zip file containing all the necessary .dxb and image files.

1.2 Signals Represent Information

Whether analog or digital, information is represented by the fundamental quantity in

electrical engineering: the signal. Stated in mathematical terms, a signal is merely a

function. Analog signals are continuous-valued; digital signals are discrete-valued.

The independent variable of the signal could be time (speech, for example), space

(images), or the integers (denoting the sequencing of letters and numbers in the

football score).

1.2.1 Analog Signals

Analog signals are usually signals defined over continuous independent

variable(s). Speech (see Modeling the Speech Signal, Page 165) is produced by your vocal cords

exciting acoustic resonances in your vocal tract. The result is pressure waves

propagating in the air, and the speech signal thus corresponds to a function having

independent variables of space and time and a value corresponding to air pressure:

s(x, t). (Here we use vector notation x to denote spatial coordinates.) When you record

someone talking, you are evaluating the speech signal at a particular spatial location,

x0 say. An example of the resulting waveform s(x0, t) is shown in Figure 1.1.

Figure 1.1 Speech Example A speech signal's amplitude relates to tiny air pressure variations. Shown is a

recording of the vowel "e" (as in "speech").

Photographs are static, and are continuous-valued signals defined over space. Black-and-white images have only one value at each point in space, which amounts to its

optical reflection properties. In Figure 1.2, an image is shown, demonstrating that it

(like all other images) is a function of two independent spatial variables.

Figure 1.2 Lena On the left is the classic Lena image, which is used ubiquitously as a test image. It contains

straight and curved lines, complicated texture, and a face. On the right is a perspective display of the Lena

image as a signal: a function of two spatial variables. The colors merely help show what signal values are

about the same size. In this image, signal values range between 0 and 255; why is that?

Color images have values that express how reflectivity depends on the optical

spectrum. Painters long ago found that mixing together combinations of the so-called

primary colors red, yellow and blue can produce very realistic color images. Thus,

images today are usually thought of as having three values at every point in space, but

a different set of colors is used: How much of red, green and blue is present.

Mathematically, color pictures are multivalued (vector-valued) signals:

$s(x) = (r(x), g(x), b(x))^T$.

Interesting cases abound where the analog signal depends not on a continuous

variable, such as time, but on a discrete variable. For example, temperature readings

taken every hour have continuous analog values, but the signal's independent variable

is (essentially) the integers.

1.2.2 Digital Signals

The word "digital" means discrete-valued and implies the signal has an integer-valued

independent variable. Digital information includes numbers and symbols (characters

typed on the keyboard, for example). Computers rely on the digital representation of

information to manipulate and transform information. Symbols do not have a

numeric value, and each is represented by a unique number. The ASCII character code

has the upper- and lowercase characters, the numbers, punctuation marks, and

various other symbols represented by a seven-bit integer. For example, the ASCII code

represents the letter a as the number 97 and the letter A as 65. Figure 1.3 shows the

international convention on associating characters with integers.
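The character-to-integer mapping described above can be checked directly. The following is a minimal sketch using Python's built-in `ord` and `chr`; the variable names are illustrative and not part of the text.

```python
# ASCII associates each character with an integer; Python's built-in
# ord() and chr() expose that mapping in both directions.
codes = {ch: ord(ch) for ch in "aA"}
print(codes)                      # {'a': 97, 'A': 65}

# The same code written in hexadecimal (base 16), the notation used in the table.
hex_a = hex(ord("a"))
print(hex_a)                      # 0x61

# The seven-bit pattern that represents the letter a.
bits_a = format(ord("a"), "07b")
print(bits_a)                     # 1100001

# Going the other way: integer back to character.
print(chr(65))                    # A
```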

Figure 1.3 ASCII Table The ASCII translation table shows how standard keyboard characters are

represented by integers. In pairs of columns, this table displays first the so-called 7-bit code (how many

characters in a seven-bit code?), then the character the number represents. The numeric codes are

represented in hexadecimal (base-16) notation. Mnemonic characters correspond to control characters,

some of which may be familiar (like cr for carriage return) and some not (bel means a "bell").

1.3 Structure of Communication Systems

Figure 1.4 The Fundamental Model of Communication.

Figure 1.5 Definition of a system A system operates on its input signal x (t) to produce an output y (t).

The fundamental model of communications is portrayed in Figure 1.4. In this

fundamental model, each message-bearing signal, exemplified by s (t), is analog and is

a function of time. A system operates on zero, one, or several signals to produce

more signals or to simply absorb them (Figure 1.5). In electrical engineering, we

represent a system as a box, receiving input signals (usually coming from the left) and

producing from them new output signals. This graphical representation is known as a

block diagram. We denote input signals by lines having arrows pointing into the box,

output signals by arrows pointing away. As typified by the communications model,

how information flows, how it is corrupted and manipulated, and how it is ultimately

received is summarized by interconnecting block diagrams: The outputs of one or

more systems serve as the inputs to others.

In the communications model, the source produces a signal that will be absorbed by

the sink. Examples of time-domain signals produced by a source are music, speech,

and characters typed on a keyboard. Signals can also be functions of two variables (an

image is a signal that depends on two spatial variables) or more (television pictures,

or video signals, are functions of two spatial variables and time). Thus, information

sources produce signals. In physical systems, each signal corresponds to an

electrical voltage or current. To be able to design systems, we must understand

electrical science and technology. However, we first need to understand the big

picture to appreciate the context in which the electrical engineer works.

In communication systems, messages (signals produced by sources) must be recast for

transmission. The block diagram has the message s (t) passing through a block

labeled transmitter that produces the signal x (t). In the case of a radio transmitter, it

accepts an input audio signal and produces a signal that physically is an

electromagnetic wave radiated by an antenna and propagating as Maxwell's equations

predict. In the case of a computer network, typed characters are encapsulated in

packets, attached with a destination address, and launched into the Internet. From the

communication systems "big picture" perspective, the same block diagram applies

although the systems can be very different. In any case, the transmitter should not

operate in such a way that the message s (t) cannot be recovered from x (t). In the

mathematical sense, the inverse system must exist, else the communication system

cannot be considered reliable. (It is ridiculous to transmit a signal in such a way that

no one can recover the original. However, clever systems exist that transmit signals so

that only the "in crowd" can recover them. Such cryptographic systems underlie secret

communications.)

Transmitted signals then pass through the next stage, the evil channel. Nothing good

happens to a signal in a channel: It can become corrupted by noise, distorted, and

attenuated among many possibilities. The channel cannot be escaped (the real world

is cruel), and transmitter design and receiver design focus on how best to jointly fend

off the channel's effects on signals. The channel is another system in our block

diagram, and produces r (t), the signal received by the receiver. If the channel were

benign (good luck finding such a channel in the real world), the receiver would serve

as the inverse system to the transmitter, and yield the message with no distortion.

However, because of the channel, the receiver must do its best to produce a received

message ŝ(t) that resembles s (t) as much as possible. Shannon showed in his 1948

paper that reliable (for the moment, take this word to mean error-free) digital

communication was possible over arbitrarily noisy channels. It is this result that

modern communications systems exploit, and why many communications systems

are going "digital." The module on Information Communication (Section 6.1) details

Shannon's theory of information, and there we learn of Shannon's result and how to

use it.

Finally, the received message is passed to the information sink that somehow makes

use of the message. In the communications model, the source is a system having no

input but producing an output; a sink has an input and no output.

Understanding signal generation and how systems work amounts to understanding

signals, the nature of the information they represent, how information is transformed

between analog and digital forms, and how information can be processed by systems

operating on information-bearing signals. This understanding demands two different

fields of knowledge. One is electrical science: How are signals represented and

manipulated electrically? The second is signal science: What is the structure of signals,

no matter what their source, what is their information content, and what capabilities

does this structure force upon communication systems?

1.4 The Fundamental Signal

1.4.1 The Sinusoid

The most ubiquitous and important signal in electrical engineering is the sinusoid.

$$s(t) = A\cos(2\pi f t + \phi) \quad\text{or}\quad A\cos(\omega t + \phi)$$

A is known as the sinusoid's amplitude, and determines the sinusoid's size. The

amplitude conveys the sinusoid's physical units (volts, lumens, etc.). The frequency f

has units of Hz (Hertz) or $s^{-1}$, and determines how rapidly the sinusoid oscillates per

unit time. The temporal variable t always has units of seconds, and thus the frequency

determines how many oscillations/second the sinusoid has. AM radio stations have

carrier frequencies of about 1 MHz (one megahertz or $10^6$ Hz), while FM stations have

carrier frequencies of about 100 MHz. Frequency can also be expressed by the symbol

ω, which has units of radians/second. Clearly, ω = 2πf.

In communications, we most often express frequency in Hertz. Finally, φ is the

phase, and determines the sine wave's behavior at the origin (t =0). It has units of

radians, but we can express it in degrees, realizing that in computations we must

convert from degrees to radians. Note that if φ = −π/2,

the sinusoid corresponds to a sine function, having a zero value at the origin,

because A cos (2πft − π/2) = A sin (2πft).

Thus, the only difference between a sine and cosine signal is the phase; we term

either a sinusoid.
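The roles of amplitude, frequency, and phase can be checked numerically. The sketch below assumes the cosine form A cos(2πft + φ) for the sinusoid; the function name `sinusoid` and the particular values of A and f are illustrative choices, not taken from the text. It confirms that a phase of −π/2 turns the cosine into a sine that is zero at the origin.

```python
import math

def sinusoid(t, A=1.0, f=1.0, phi=0.0):
    """Evaluate A*cos(2*pi*f*t + phi) at time t (in seconds)."""
    return A * math.cos(2 * math.pi * f * t + phi)

A, f = 2.0, 5.0               # illustrative amplitude and frequency (Hz)
omega = 2 * math.pi * f       # the same frequency in radians/second

# Compare the phase-shifted cosine with a plain sine on a grid of times.
times = [k / 100 for k in range(50)]
max_diff = max(
    abs(sinusoid(t, A, f, -math.pi / 2) - A * math.sin(omega * t))
    for t in times
)
print(max_diff)                           # differs only by rounding error
print(sinusoid(0.0, A, f, -math.pi / 2))  # essentially zero at the origin
```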

We can also define a discrete-time variant of the sinusoid: $A\cos(2\pi f n + \phi)$.

Here, the independent variable is n and represents the integers. Frequency now has

no dimensions, and takes on values between 0 and 1.

Exercise 1.4.1

Show that cos (2πfn) = cos (2π (f + 1) n), which means that a sinusoid

having a frequency larger than one corresponds to a sinusoid having

a frequency less than one.

Note: Notice that we shall call either sinusoid an analog signal. Only when the

discrete-time signal takes on a finite set of values can it be considered a digital

signal.

Exercise 1.4.2

Can you think of a simple signal that has a finite number of values

but is defined in continuous time? Such a signal is also an analog

signal.

1.4.2 Communicating Information with Signals

The basic idea of communication engineering is to use a signal's parameters to

represent either real numbers or other signals. The technical term is to modulate the

carrier signal's parameters to transmit information from one place to another. To

explore the notion of modulation, we can send a real number (today's temperature,

for example) by changing a sinusoid's amplitude accordingly. If we wanted to send the

daily temperature, we would keep the frequency constant (so the receiver would know

what to expect) and change the amplitude at midnight. We could relate temperature

to amplitude by the formula A = A0(1 + kT), where A0 and k are constants that the

transmitter and receiver must both know.
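As a rough illustration of this amplitude rule, the sketch below maps a temperature to an amplitude with A = A0(1 + kT) and inverts the formula at the receiver. The values chosen for A0 and k, and the function names, are assumptions made only for this example.

```python
# Constants that transmitter and receiver must agree on (hypothetical values).
A0 = 1.0     # reference amplitude
k = 0.01     # amplitude change per degree

def temperature_to_amplitude(T, A0=A0, k=k):
    """Transmitter side: A = A0 * (1 + k * T)."""
    return A0 * (1 + k * T)

def amplitude_to_temperature(A, A0=A0, k=k):
    """Receiver side: solve A = A0 * (1 + k * T) for T."""
    return (A / A0 - 1) / k

T_sent = 23.5                                  # today's temperature (illustrative)
A = temperature_to_amplitude(T_sent)           # amplitude placed on the carrier
T_received = amplitude_to_temperature(A)       # value recovered at the receiver
print(A, T_received)
```

The receiver can only undo the mapping because it knows A0 and k, which is why both ends must share them.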

If we had two numbers we wanted to send at the same time, we could modulate the

sinusoid's frequency as well as its amplitude. This modulation scheme assumes we

can estimate the sinusoid's amplitude and frequency; we shall learn that this is indeed

possible.

Now suppose we have a sequence of parameters to send. We have exploited all of the

sinusoid's two parameters. What we can do is modulate them for a limited time (say T

seconds), and send two parameters every T. This simple notion corresponds to how a

modem works. Here, typed characters are encoded into eight bits, and the individual

bits are encoded into a sinusoid's amplitude and frequency. We'll learn how this is

done in subsequent modules, and more importantly, we'll learn what the limits are on

such digital communication schemes.

1.5 Introduction Problems

Problem 1.1: RMS Values

The rms (root-mean-square) value of a periodic signal is defined to be

$$\mathrm{rms}[s] = \sqrt{\frac{1}{T}\int_0^T s^2(t)\,dt}$$

where T is defined to be the signal's period: the smallest positive number such that

s(t) = s(t + T).

1. What is the period of s (t)= Asin (2πf0t + φ)?

2. What is the rms value of this signal? How is it related to the peak value?

3. What is the period and rms value of the depicted (Figure 1.6) square wave,

generically denoted by sq (t)?

4. By inspecting any device you plug into a wall socket, you'll see that it is labeled

"110 volts AC". What is the expression for the voltage provided by a wall socket?

What is its rms value?

Figure 1.6
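An rms value can also be estimated numerically, which is a convenient way to check answers obtained by hand. The sketch below assumes the usual definition (the square root of the average of s²(t) over one period) and approximates the integral with a midpoint sum. The sawtooth test signal is a hypothetical example chosen here, not one of the signals in the problem.

```python
import math

def rms(signal, period, n=100_000):
    """Approximate sqrt((1/T) * integral over one period of s(t)^2)
    using a midpoint Riemann sum with n sub-intervals."""
    dt = period / n
    total = sum(signal((i + 0.5) * dt) ** 2 for i in range(n))
    return math.sqrt(total * dt / period)

def sawtooth(t, period=2.0):
    """Hypothetical test signal: ramps linearly from 0 to 1 over each period."""
    return (t % period) / period

value = rms(lambda t: sawtooth(t, 2.0), 2.0)
print(value)                 # close to 1/sqrt(3)
print(1 / math.sqrt(3))      # exact rms of the unit ramp, for comparison
```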

Problem 1.2: Modems

The word "modem" is short for "modulator-demodulator." Modems are used not only

for connecting computers to telephone lines, but also for connecting digital (discrete-valued) sources to generic channels. In this problem, we explore a simple kind of

modem, in which binary information is represented by the presence or absence of a

sinusoid (presence representing a "1" and absence a "0"). Consequently, the modem's

transmitted signal that represents a single bit has the form

$$x(t) = A\sin(2\pi f_0 t), \quad 0 \le t \le T$$

Within each bit interval T, the amplitude is either A or zero.

1. What is the smallest transmission interval that makes sense with the frequency f0?

2. Assuming that ten cycles of the sinusoid comprise a single bit's transmission

interval, what is the datarate of this transmission scheme?

3. Now suppose instead of using "on-off" signaling, we allow one of several different

values for the amplitude during any transmission interval. If N amplitude values

are used, what is the resulting datarate?

4. The classic communications block diagram applies to the modem. Discuss how

the transmitter must interface with the message source since the source is

producing letters of the alphabet, not bits.

Problem 1.3: Advanced Modems

To transmit symbols, such as letters of the alphabet, RU computer modems use two

frequencies (1600 and 1800 Hz) and several amplitude levels. A transmission is sent

for a period of time T (known as the transmission or baud interval) and equals the

sum of two amplitude-weighted carriers.

We send successive symbols by choosing an appropriate frequency and amplitude

combination, and sending them one after another.

1. What is the smallest transmission interval that makes sense to use with the

frequencies given above? In other words, what should T be so that an integer

number of cycles of the carrier occurs?

2. Sketch (using Matlab) the signal that the modem produces over several transmission

intervals. Make sure your axes are labeled.

3. Using your signal transmission interval, how many amplitude levels are needed to

transmit ASCII characters at a datarate of 3,200 bits/s? Assume use of the

extended (8-bit) ASCII code.

Note: We use a discrete set of values for A1 and A2. If we have N1 values for

amplitude A1, and N2 values for A2, we have N1N2 possible symbols that can be

sent during each T second interval. To convert this number into bits (the

fundamental unit of information engineers use to quantify things), compute

log2(N1N2).
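The conversion in the note can be written as a one-line calculation. In the sketch below, the function name `bits_per_symbol` and the choice of four levels for each amplitude are illustrative assumptions, not values from the problem.

```python
import math

def bits_per_symbol(n1, n2):
    """Bits conveyed by one symbol when A1 takes n1 values and A2 takes n2 values."""
    return math.log2(n1 * n2)

# Illustrative choice: 4 levels for each amplitude gives 16 possible symbols.
bits = bits_per_symbol(4, 4)
print(bits)   # 4.0
```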

1.6 Solutions to Exercises in Chapter 1

Solution to Exercise 1.4.1
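One way to verify the identity is sketched below. It relies on the angle-addition formula for the cosine and on n being an integer, so that cos(2πn) = 1 and sin(2πn) = 0.

```latex
\begin{align*}
\cos\bigl(2\pi (f+1) n\bigr)
  &= \cos(2\pi f n + 2\pi n) \\
  &= \cos(2\pi f n)\cos(2\pi n) - \sin(2\pi f n)\sin(2\pi n) \\
  &= \cos(2\pi f n)
\end{align*}
```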

Solution to Exercise 1.4.2

A square wave takes on the values 1 and −1 alternately. See the plot in the module

Square Wave (Page 20).

Chapter 2 Signals and Systems

2.1 Complex Numbers

While the fundamental signal used in electrical engineering is the sinusoid, it can be

expressed mathematically in terms of an even more fundamental signal: the complex

exponential. Representing sinusoids in terms of complex exponentials is not a

mathematical oddity. Fluency with complex numbers and rational functions of

complex variables is a critical skill all engineers master. Understanding information

and power system designs and developing new systems all hinge on using complex

numbers. In short, they are critical to modern electrical engineering, a realization

made over a century ago.

2.1.1 Definitions

The notion of the square root of −1 originated with the quadratic formula: the solution

of certain quadratic equations mathematically exists only if the so-called imaginary

quantity $\sqrt{-1}$ could be defined. Euler first used i for the imaginary unit, but that notation did not take

hold until roughly Ampère's time. Ampère used the symbol i to denote current

(intensité de courant). It wasn't until the twentieth century that the importance of

complex numbers to circuit theory became evident. By then, using i for current was

entrenched and electrical engineers chose j for writing complex numbers.

An imaginary number has the form $jb = \sqrt{-b^2}$. A complex number, z, consists of the ordered pair (a,b), where a is the real component and

b is the imaginary component (the j is suppressed because the imaginary component

of the pair is always in the second position). The imaginary number jb equals (0,b).

Note that a and b are real-valued numbers.

Figure 2.1 shows that we can locate a complex number in what we call the complex

plane. Here, a, the real part, is the x-coordinate and b, the imaginary part, is the y-coordinate.

From analytic geometry, we know that locations in the plane can be expressed as the

sum of vectors, with the vectors corresponding to the x and y directions.

Consequently, a complex number z can be expressed as the (vector) sum z = a + jb

where j indicates the y-coordinate. This representation is known as the Cartesian

form of z. An imaginary number can't be numerically added to a real number; rather,

this notation for a complex number represents vector addition, but it provides a

convenient notation when we perform arithmetic manipulations.

Some obvious terminology. The real part of the complex number z = a + jb, written as

Re (z), equals a. We consider the real part as a function that works by selecting that

component of a complex number not multiplied by j. The imaginary part of z, Im (z),

equals b: that part of a complex number that is multiplied by j. Again, both the real

and imaginary parts of a complex number are real-valued.

Figure 2.1 The Complex Plane A complex number is an ordered pair (a,b) that can be regarded as

coordinates in the plane. Complex numbers can also be expressed in polar coordinates as r∠θ.

The complex conjugate of z, written as z*, has the same real part as z but an

imaginary part of the opposite sign.

$$z = \mathrm{Re}(z) + j\,\mathrm{Im}(z), \qquad z^* = \mathrm{Re}(z) - j\,\mathrm{Im}(z)$$

Using Cartesian notation, the following properties easily follow.

• If we add two complex numbers, the real part of the result equals the sum of the

real parts and the imaginary part equals the sum of the imaginary parts. This

property follows from the laws of vector addition.

$$a_1 + jb_1 + a_2 + jb_2 = a_1 + a_2 + j(b_1 + b_2)$$

In this way, the real and imaginary parts remain separate.

• The product of j and a real number is an imaginary number: ja. The product of j

and an imaginary number is a real number: j(jb) = −b because $j^2 = -1$.

Consequently, multiplying a complex number by j rotates the number's position

by 90 degrees.
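
These two properties can be checked numerically. The following is a minimal sketch using Python's built-in complex type, which writes the imaginary unit as `1j`; the example values are arbitrary choices for illustration.

```python
import cmath
import math

z1 = complex(3, 4)   # 3 + j4
z2 = complex(1, -2)  # 1 - j2

# Addition: real parts and imaginary parts are summed separately.
total = z1 + z2
print(total)  # (4+2j)

# Multiplying by j rotates the point by 90 degrees counterclockwise.
rotated = 1j * z1
print(rotated)  # (-4+3j)

# The magnitude is unchanged and the angle grows by pi/2.
angle_change = cmath.phase(rotated) - cmath.phase(z1)
print(math.isclose(angle_change, math.pi / 2))  # True
```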

Exercise 2.1.1

Use the definition of addition to show that the real and imaginary

parts can be expressed as a sum/difference of a complex number and

its conjugate: $\mathrm{Re}(z) = \frac{z + z^*}{2}$ and $\mathrm{Im}(z) = \frac{z - z^*}{2j}$.

Complex numbers can also be expressed in an alternate form, polar form, which we

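will find quite useful. Polar form arises from the geometric interpretation of

complex numbers. The Cartesian form of a complex number can be re-written as

$$a + jb = \sqrt{a^2 + b^2}\left(\frac{a}{\sqrt{a^2 + b^2}} + j\frac{b}{\sqrt{a^2 + b^2}}\right)$$

By forming a right triangle having sides a and b, we see that the real and imaginary

parts correspond to the cosine and sine of the triangle's base angle. We thus obtain

the polar form for complex numbers.

$$z = a + jb = r\angle\theta, \qquad r = |z| = \sqrt{a^2 + b^2}, \qquad a = r\cos\theta, \quad b = r\sin\theta, \qquad \theta = \arctan\left(\frac{b}{a}\right)$$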

The quantity r is known as the magnitude of the complex number z, and is frequently

written as |z|. The quantity θ is the complex number's angle. In using the arc-tangent

formula to find the angle, we must take into account the quadrant in which the

complex number lies.
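
The quadrant issue can be illustrated with a short Python sketch. The helper name `to_polar` and the test value −1 + j are assumptions chosen for illustration; `math.atan2` takes the signs of both parts into account, whereas the plain arc-tangent of b/a does not.

```python
import cmath
import math

def to_polar(z: complex) -> tuple[float, float]:
    """Return (r, theta) with theta placed in the correct quadrant."""
    r = math.sqrt(z.real ** 2 + z.imag ** 2)
    theta = math.atan2(z.imag, z.real)  # quadrant-aware arc-tangent
    return r, theta

z = complex(-1, 1)  # lies in the second quadrant

# The plain arc-tangent of b/a lands in the fourth quadrant (about -0.785 rad).
naive = math.atan(z.imag / z.real)

# The quadrant-aware result is about 2.356 rad, i.e. 3*pi/4.
r, theta = to_polar(z)
print(naive, r, theta)

# The standard library's cmath.polar gives the same magnitude and angle.
print(cmath.polar(z))
```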

Exercise 2.1.2

Convert 3 − 2j to polar form.

2.1.2 Euler's Formula

Surprisingly, the polar form of a complex number z can be expressed mathematically

as

$$z = re^{j\theta}$$

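To show this result, we use Euler's relations that express exponentials with imaginary

arguments in terms of trigonometric functions.

$$e^{j\theta} = \cos\theta + j\sin\theta$$

$$\cos\theta = \frac{e^{j\theta} + e^{-j\theta}}{2}, \qquad \sin\theta = \frac{e^{j\theta} - e^{-j\theta}}{2j}$$

The first of these is easily derived from the Taylor's series for the exponential.

$$e^x = 1 + \frac{x}{1!} + \frac{x^2}{2!} + \frac{x^3}{3!} + \cdots$$

Substituting jθ for x, we find that

$$e^{j\theta} = 1 + j\frac{\theta}{1!} - \frac{\theta^2}{2!} - j\frac{\theta^3}{3!} + \cdots$$

because $j^2 = -1$, $j^3 = -j$, and $j^4 = 1$. Grouping separately the real-valued terms and the

imaginary-valued ones,

$$e^{j\theta} = 1 - \frac{\theta^2}{2!} + \cdots + j\left(\frac{\theta}{1!} - \frac{\theta^3}{3!} + \cdots\right)$$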

The real-valued terms correspond to the Taylor's series for cos (θ), the imaginary ones

to sin (θ), and Euler's first relation results. The remaining relations are easily derived

from the first. We see that multiplying the exponential in (2.3) by a real constant

corresponds to setting the radius of the complex number to the constant.
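
The Taylor-series argument can be checked numerically. The sketch below sums the first terms of the series with jθ substituted for x and compares the result with cos(θ) + j sin(θ); the function name `exp_series` and the choice of 20 terms are assumptions made for illustration.

```python
import math

def exp_series(theta: float, terms: int = 20) -> complex:
    """Partial sum of the Taylor series for e^x evaluated at x = j*theta."""
    total = 0j
    term = 1 + 0j  # the k = 0 term, (j*theta)^0 / 0!
    for k in range(terms):
        total += term
        term *= 1j * theta / (k + 1)  # next term: (j*theta)^(k+1) / (k+1)!
    return total

theta = math.pi / 3
approx = exp_series(theta)
exact = complex(math.cos(theta), math.sin(theta))
print(abs(approx - exact) < 1e-12)  # True
```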

2.1.3 Calculating with Complex Numbers

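Adding and subtracting complex numbers expressed in Cartesian form is quite easy:

You add (subtract) the real parts and imaginary parts separately. With $z_1 = a_1 + jb_1$ and $z_2 = a_2 + jb_2$,

$$z_1 \pm z_2 = (a_1 \pm a_2) + j(b_1 \pm b_2)$$

Multiplying two complex numbers in Cartesian form is not quite as easy, but follows

directly from the usual rules of arithmetic.

$$z_1 z_2 = (a_1 + jb_1)(a_2 + jb_2) = a_1 a_2 - b_1 b_2 + j(a_1 b_2 + a_2 b_1)$$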

Note that we are, in a sense, multiplying two vectors to obtain another vector.

Complex arithmetic provides a unique way of defining vector multiplication.

Exercise 2.1.3

What is the product of a complex number and its conjugate?

Division requires mathematical manipulation. We convert the

division problem into a multiplication problem by multiplying both

the numerator and denominator by the conjugate of the

denominator.

$$\frac{z_1}{z_2} = \frac{a_1 + jb_1}{a_2 + jb_2} = \frac{(a_1 + jb_1)(a_2 - jb_2)}{(a_2 + jb_2)(a_2 - jb_2)} = \frac{a_1 a_2 + b_1 b_2 + j(a_2 b_1 - a_1 b_2)}{a_2^2 + b_2^2}$$

Because the final result is so complicated, it's best to remember how to perform

division by multiplying numerator and denominator by the complex conjugate of the

denominator rather than trying to remember the final result.
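
The conjugate method can be sketched in Python as follows. The function name `divide_cartesian` and the example operands are assumptions chosen for illustration; the result is compared against Python's built-in complex division.

```python
def divide_cartesian(z1: complex, z2: complex) -> complex:
    """Divide z1 by z2 by multiplying numerator and denominator by conj(z2)."""
    a1, b1 = z1.real, z1.imag
    a2, b2 = z2.real, z2.imag
    denom = a2 * a2 + b2 * b2            # (a2 + j*b2)(a2 - j*b2), a real number
    real = (a1 * a2 + b1 * b2) / denom   # real part of z1 * conj(z2), scaled
    imag = (a2 * b1 - a1 * b2) / denom   # imaginary part of z1 * conj(z2), scaled
    return complex(real, imag)

z1 = complex(1, 2)
z2 = complex(3, -4)
quotient = divide_cartesian(z1, z2)
print(quotient)                          # close to -0.2 + 0.4j
print(abs(quotient - z1 / z2) < 1e-12)   # True
```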

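The properties of the exponential make calculating the product and ratio of two

complex numbers much simpler when the numbers are expressed in polar form.

$$z_1 z_2 = r_1 e^{j\theta_1} r_2 e^{j\theta_2} = r_1 r_2 e^{j(\theta_1 + \theta_2)}$$

$$\frac{z_1}{z_2} = \frac{r_1 e^{j\theta_1}}{r_2 e^{j\theta_2}} = \frac{r_1}{r_2} e^{j(\theta_1 - \theta_2)}$$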

To multiply, the radius equals the product of the radii and the angle the sum of the

angles. To divide, the radius equals the ratio of the radii and the angle the difference

of the angles. When the original complex numbers are in Cartesian form, it's usually

worth translating into polar form, then performing the multiplication or division

(especially in the case of the latter). Addition and subtraction of polar forms amounts

to converting to Cartesian form, performing the arithmetic operation, and converting

back to polar form.
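
The polar rules for multiplication and division can be sketched with the standard `cmath` module, whose `polar` and `rect` functions convert between the two forms. The helper names and example operands are assumptions chosen for illustration.

```python
import cmath

def multiply_polar(z1: complex, z2: complex) -> complex:
    """Multiply by taking the product of the radii and the sum of the angles."""
    r1, t1 = cmath.polar(z1)
    r2, t2 = cmath.polar(z2)
    return cmath.rect(r1 * r2, t1 + t2)

def divide_polar(z1: complex, z2: complex) -> complex:
    """Divide by taking the ratio of the radii and the difference of the angles."""
    r1, t1 = cmath.polar(z1)
    r2, t2 = cmath.polar(z2)
    return cmath.rect(r1 / r2, t1 - t2)

z1 = complex(1, 2)
z2 = complex(3, -4)
print(abs(multiply_polar(z1, z2) - z1 * z2) < 1e-12)  # True
print(abs(divide_polar(z1, z2) - z1 / z2) < 1e-12)    # True
```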

Example 2.1

When we solve circuit problems, the crucial quantity, known as a

transfer function, will always be expressed as the ratio of

polynomials in the variable s = j2πf. What we'll need to understand

the circuit's effect is the transfer function in polar form. For

instance, suppose the transfer function equals

Performing the required division is most easily accomplished by first

expressing the numerator and denominator each in polar form, then

calculating the ratio. Thus,

2.2 Elemental Signals

Elemental signals are the building blocks with which we build complicated

signals. By definition, elemental signals have a simple structure. Exactly what we

mean by the "structure of a signal" will unfold in this section of the course. Signals are

nothing more than functions defined with respect to some independent variable,

which we take to be time for the most part. Some very interesting signals are not functions

solely of time; one great example is an image. For it, the independent

variables are x and y (two-dimensional space). Video signals are functions of three

variables: two spatial dimensions and time. Fortunately, most of the ideas underlying

modern signal theory can be exemplified with one-dimensional signals.

2.2.1 Sinusoids

Perhaps the most common real-valued signal is the sinusoid.

$$s(t) = A\cos(2\pi f_0 t + \phi)$$

For this signal, A is its amplitude, f0 its frequency, and φ its phase.

2.2.2 Complex Exponentials

The most important signal is complex-valued, the complex exponential.

$$s(t) = Ae^{j(2\pi f_0 t + \phi)} = Ae^{j\phi}e^{j2\pi f_0 t}$$

Here, j denotes $\sqrt{-1}$. $Ae^{j\phi}$ is known as the signal's complex amplitude. Considering the complex amplitude as a

complex number in polar form, its magnitude is the amplitude A and its angle the

signal phase. The complex amplitude is also known as a phasor. The complex

exponential cannot be further decomposed into more elemental signals, and is the

most important signal in electrical engineering! Mathematical manipulations at

first appear to be more difficult because complex-valued numbers are introduced. In

fact, early in the twentieth century, mathematicians thought engineers would not be

sufficiently sophisticated to handle complex exponentials even though they greatly

simplified solving circuit problems. Steinmetz introduced complex exponentials to

electrical engineering, and demonstrated that "mere" engineers could use them to

good effect and even obtain right answers! See Complex Numbers (Page 11) for a

review of complex numbers and complex arithmetic.

The complex exponential defines the notion of frequency: it is the only signal that

contains only one frequency component. The sinusoid consists of two frequency

components: one at the frequency +f0 and the other at −f0.

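EULER RELATION: This decomposition of the sinusoid can be traced to Euler's relation.

$$\cos(2\pi f_0 t) = \frac{e^{j2\pi f_0 t} + e^{-j2\pi f_0 t}}{2}, \qquad \sin(2\pi f_0 t) = \frac{e^{j2\pi f_0 t} - e^{-j2\pi f_0 t}}{2j}$$

$$e^{j2\pi f_0 t} = \cos(2\pi f_0 t) + j\sin(2\pi f_0 t)$$

DECOMPOSITION: The complex exponential signal can thus be written in terms of its

real and imaginary parts using Euler's relation. Thus, sinusoidal signals can be

expressed as either the real or the imaginary part of a complex exponential signal, the

choice depending on whether cosine or sine phase is needed, or as the sum of two

complex exponentials. These two decompositions are mathematically equivalent to

each other.

$$A\cos(2\pi f_0 t + \phi) = \mathrm{Re}\left(Ae^{j\phi}e^{j2\pi f_0 t}\right), \qquad A\sin(2\pi f_0 t + \phi) = \mathrm{Im}\left(Ae^{j\phi}e^{j2\pi f_0 t}\right)$$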
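
Both decompositions can be verified numerically. The sketch below evaluates a sinusoid directly, as the real part of one complex exponential, and as half the sum of two complex exponentials at +f0 and −f0; the function names and parameter values are assumptions chosen for illustration.

```python
import cmath
import math

def sinusoid(A: float, f0: float, phi: float, t: float) -> float:
    """A*cos(2*pi*f0*t + phi), evaluated directly."""
    return A * math.cos(2 * math.pi * f0 * t + phi)

def complex_exponential(A: float, f0: float, phi: float, t: float) -> complex:
    """A*exp(j*(2*pi*f0*t + phi))."""
    return A * cmath.exp(1j * (2 * math.pi * f0 * t + phi))

def two_exponential_sum(A: float, f0: float, phi: float, t: float) -> complex:
    """Half the sum of the components at +f0 (phase phi) and -f0 (phase -phi)."""
    positive = complex_exponential(A, f0, phi, t)
    negative = complex_exponential(A, -f0, -phi, t)
    return 0.5 * (positive + negative)

A, f0, phi, t = 2.0, 5.0, 0.3, 0.0123  # arbitrary illustrative values
direct = sinusoid(A, f0, phi, t)
real_part = complex_exponential(A, f0, phi, t).real
summed = two_exponential_sum(A, f0, phi, t)
print(math.isclose(direct, real_part))  # True
print(abs(summed - direct) < 1e-12)     # True; the imaginary parts cancel
```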

Using the complex plane, we can envision the complex exponential's temporal

variations as seen in the above Figure 2.2. The magnitude of the complex exponential

is A, and the initial value of the complex exponential at t =0 has an angle of φ. As time

increases, the locus of points traced by the complex exponential is a circle (it has

constant magnitude of A). The number of times per second we go around the circle

equals the frequency f. The time taken for the complex exponential to go around the

circle once is known as its period T, and equals $1/f$. The projections onto the real and imaginary axes of the rotating vector representing

the complex exponential signal are the cosine and sine signal of Euler's relation

(2.16).

2.2.3 Real Exponentials

As opposed to complex exponentials which oscillate, real exponentials (Figure 2.3)

decay.

$$s(t) = e^{-\frac{t}{\tau}}$$

The quantity τ is known as the exponential's time constant, and corresponds to the

time required for the exponential to decrease by a factor of $\frac{1}{e}$, which approximately equals 0.368. A decaying complex exponential is the product