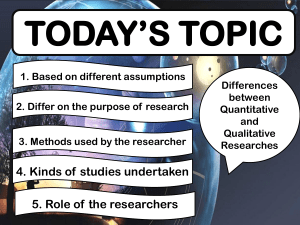

Research Design: Qualitative, Quantitative, Mixed Methods
