Analog Computing Review: Technical Report

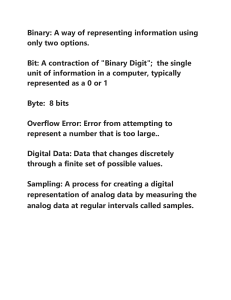
