Control Systems Safety Evaluation and Reliability

Third Edition

Copyright International Society of Automation

Provided by IHS under license with ISA

No reproduction or networking permitted without license from IHS

Licensee=FMC Technologies /5914950002, User=klayjamraeng, jutapol

Not for Resale, 06/01/2017 00:00:50 MDT

William M. Goble

Notice

The information presented in this publication is for the general education of the reader. Because

neither the author nor the publisher has any control over the use of the information by the reader,

both the author and the publisher disclaim any and all liability of any kind arising out of such use.

The reader is expected to exercise sound professional judgment in using any of the information presented in a particular application.

Additionally, neither the author nor the publisher has investigated or considered the effect of

any patents on the ability of the reader to use any of the information in a particular application. The

reader is responsible for reviewing any possible patents that may affect any particular use of the

information presented.

Any references to commercial products in the work are cited as examples only. Neither the

author nor the publisher endorses any referenced commercial product. Any trademarks or tradenames referenced belong to the respective owner of the mark or name. Neither the author nor the

publisher makes any representation regarding the availability of any referenced commercial product at any time. The manufacturer’s instructions on use of any commercial product must be followed at all times, even if in conflict with the information in this publication.

Copyright © 2010

International Society of Automation

67 Alexander Drive

P.O. Box 12277

Research Triangle Park, NC 27709

All rights reserved.

Printed in the United States of America.

10 9 8 7 6 5 4 3 2

ISBN 978-1-934394-80-9

No part of this work may be reproduced, stored in a retrieval system, or transmitted

in any form or by any means, electronic, mechanical, photocopying, recording or

otherwise, without the prior written permission of the publisher.

Library of Congress Cataloging-in-Publication Data

Goble, William M.

Control systems safety evaluation and reliability / William M. Goble.

-- 3rd ed.

p. cm. -- (ISA resources for measurement and control series)

Includes bibliographical references and index.

ISBN 978-1-934394-80-9 (pbk.)

1. Automatic control--Reliability. I. Title.

TJ213.95.G62 2010

629.8--dc22

2010015760

ISA Resources for Measurement

and Control Series (RMC)

• Control System Documentation: Applying Symbols and Identification, 2nd Edition

• Control System Safety Evaluation and Reliability, 3rd Edition

• Industrial Data Communications, 4th Edition

• Industrial Flow Measurement, 3rd Edition

• Industrial Level, Pressure, and Density Measurement, 2nd Edition

• Measurement and Control Basics, 4th Edition

• Programmable Controllers, 4th Edition

Acknowledgments

This book has been made possible only with the help of many other persons.

Early in the process, J. V. Bukowski of Villanova taught a graduate course in

reliability engineering where I was introduced to the science. This course and

several subsequent tutorial sessions over the years provided the help necessary to

get started.

Many others have helped develop the issues important to control system safety and reliability. I want to thank my co-workers John Grebe, John Cusimano, Ted Bell, Ted Tucker, Griff Francis, Dave Johnson, Glenn Bilane, Jim Kinney, and Steve Duff. They have asked penetrating questions, argued key points, made suggestions, and provided solutions to complicated problems. A former boss, Bob Adams, deserves a special thank you for asking tough questions and demanding that reliability be made a prime consideration in the design of new products.

Fellow members of the ISA84 standards committee have also helped develop the

issues. I wish to thank Vic Maggioli, Dimitrios Karydos, Tony Frederickson, Paris

Stavrianidis, Paul Gruhn, Aarnout Brombacher, Ad Hamer, Rolf Spiker, Dan

Sniezek and Steve Smith. I have learned from our debates.

Several persons made significant improvements to the document as part of the review process. I wish to thank Tom Fisher, John Grebe, Griff Francis, Paul Gruhn, Dan Sniezek, Rainer Faller and Rachel Amkreutz. The comments and questions from these reviewers improved the book considerably. Julia Bukowski from Villanova University and Jan Rouvroye of Eindhoven University deserve a special thank you for their comprehensive and detailed review. Iwan van Beurden of Eindhoven University also deserves a special thank you for a detailed review and check of the examples and exercise answers. I also wish to thank Rick Allen, a good friend, who reviewed the draft and tried to teach me the rules of grammar and punctuation.

Finally, I wish to thank my wife, Sandy, and my daughters, Tyree and Emily, for their patience and help. Everyone helped proofread, type, and check math. While the specific help was greatly appreciated, it is the encouragement and support for which I am truly thankful.

Contents

PREFACE xv

ABOUT THE AUTHOR xvii

Chapter 1 INTRODUCTION 1

Control System Safety and Reliability, 1

Standards, 4

Exercises, 6

Answers to Exercises, 7

References, 7

Chapter 2 UNDERSTANDING RANDOM EVENTS 9

Random Variables, 9

Mean, 18

Variance, 21

Common Distributions, 23

Exercises, 27

Answers to Exercises, 29

References, 31

Chapter 3 FAILURES: STRESS VERSUS STRENGTH 33

Failures, 33

Failure Categorization, 33

Categorization of Failure Stress Sources, 39

Stress and Strength, 46

Electrical Surge and Fast Transients, 55

Exercises, 56

Answers to Exercises, 56

References, 57

Chapter 4 RELIABILITY AND SAFETY 59

Reliability Definitions, 59

Time to Failure, 59

The Constant Failure Rate, 72

Steady-State Availability – Constant Failure Rate Components, 76

Safety Terminology, 78

Exercises, 85

Answers to Exercises, 86

References, 86

Chapter 5 FMEA / FMEDA 87

Failure Modes and Effects Analysis, 87

FMEA Procedure, 87

FMEA Limitations, 88

FMEA Format, 88

Failure Modes, Effects and Diagnostic Analysis (FMEDA), 94

Conventional PLC Input Circuit, 95

Critical Input (High Diagnostic) PLC Input Circuit, 97

FMEDA Limitations, 99

Exercises, 99

Answers to Exercises, 100

References, 100

Chapter 6 FAULT TREE ANALYSIS 103

Fault Tree Analysis, 103

Fault Tree Process, 104

Fault Tree Symbols, 105

Qualitative Fault Tree Analysis, 106

Quantitative Fault Tree Analysis, 108

Use of Fault Tree Analysis for PFDavg Calculations, 114

Using a Fault Tree for Documentation, 116

Exercises, 118

Answers to Exercises, 119

References, 119

Chapter 7 RELIABILITY BLOCK DIAGRAMS 121

Reliability Block Diagrams, 121

Series Systems, 123

Quantitative Block Diagram Evaluation, 137

Exercises, 146

Answers to Exercises, 147

References and Bibliography, 148

Chapter 8 MARKOV MODELING 149

Repairable Systems, 149

Markov Models, 149

Solving Markov Models, 151

Discrete Time Markov Modeling, 154

Exercises, 176

Answers to Exercises, 177

References, 177

Chapter 9 DIAGNOSTICS 179

Improving Safety and MTTF, 179

Measuring Diagnostic Coverage, 186

Diagnostic Techniques, 190

Fault Injection Testing, 197

Exercises, 197

Answers to Exercises, 198

References, 199

Chapter 10 COMMON CAUSE 201

Common-Cause Failures, 201

Common-Cause Modeling, 205

Common-Cause Avoidance, 211

Estimating the Beta Factor, 213

Estimating Multiple Parameter Common-Cause Models, 215

Including Common Cause in Unit or System Models, 216

Exercises, 220

Answers to Exercises, 220

References, 221

Chapter 11 SOFTWARE RELIABILITY 223

Software Failures, 223

Stress-Strength View of Software Failures, 226

Software Complexity, 229

Software Reliability Modeling, 238

Software Reliability Model Assumptions, 248

Exercises, 251

Answers to Exercises, 252

References, 253

Chapter 12 MODELING DETAIL 255

Key Issues, 255

Probability Approximations, 256

Diagnostics and Common Cause, 268

Probability of Initial Failure, 278

Comparing the Techniques, 280

In Closing, 281

Exercises, 281

Answers to Exercises, 281

References, 282

Chapter 13 RELIABILITY AND SAFETY MODEL CONSTRUCTION 283

System Model Development, 283

Exercises, 302

Answers to Exercises, 302

References, 303

Chapter 14 SYSTEM ARCHITECTURES 305

Introduction, 305

Single Board PEC, 306

System Configurations, 310

Comparing Architectures, 353

Exercises, 355

Answers to Exercises, 356

References, 357

Chapter 15 SAFETY INSTRUMENTED SYSTEMS 359

Risk Cost, 359

Risk Reduction, 360

How Much RRF is Needed?, 361

SIS Architectures, 366

Exercises, 375

Answers to Exercises, 376

References, 376

Chapter 16 LIFECYCLE COSTING 379

The Language of Money, 379

Procurement Costs, 381

Cost of System Failure, 384

Lifecycle Cost Analysis, 386

Time Value of Money, 389

Safety Instrumented System Lifecycle Cost, 395

Exercises, 397

Answers to Exercises, 398

References, 399

APPENDIX A STANDARD NORMAL DISTRIBUTION TABLE 401

APPENDIX B MATRIX MATH 405

The Matrix, 405

Matrix Addition, 406

Matrix Subtraction, 406

Matrix Multiplication, 406

Matrix Inversion, 407

APPENDIX C PROBABILITY THEORY 413

Introduction, 413

Venn Diagrams, 414

Combining Probabilities, 417

Permutations and Combinations, 426

Exercises, 430

Answers to Exercises, 432

Bibliography, 433

APPENDIX D TEST DATA 435

Censored and Uncensored Data, 439

APPENDIX E CONTINUOUS TIME MARKOV MODELING 441

Single Nonrepairable Component, 441

Single Repairable Component, 444

Limiting State Probabilities, 448

Multiple Failure Modes, 450

INDEX 455

Preface

The ability to numerically evaluate control system design parameters, like safety and reliability, has always been important in order to balance the tradeoffs between cost, performance and maintenance in control system design. However, there is more involved than just economics. Proper protection of personnel and the environment has become the issue. Increasingly, quantitative analysis of safety and reliability is becoming essential as international regulations require justified and measured safety protection performance.

The ISA-84.01 standard defines quantitative performance levels for safety instrumented systems (SIS). New IEC safety standards and the industry-specific companion standards do the same. In general, these standards are not prescriptive; they do not say exactly how to design the system. Instead, they state the quantitative safety targets that must be met, and the designer considers various design alternatives to see which design meets the targets.

This general approach is consistent with the practice of those who work to economically optimize their designs. Design constraints must be balanced in order to provide the optimal design. The ultimate economic success of the process is affected by all of the design constraints. True design optimization requires that alternative designs be evaluated in the context of the constraints. Numeric targets and methods to quantitatively evaluate safety and reliability are the tools needed to include this dimension in the optimization process.

As with many areas of engineering, it must be realized that system safety and reliability cannot be quantified with total certainty at the present time. Different assumptions are made in order to simplify the problem. Failure rate data, the primary input required for most methods, is not precisely specified or readily available. Precise failure rate data requires an extensive life test where operational conditions match expected usage.

Several factors prevent this testing. First, current control system components from quality vendors have achieved a general level of reliability that allows them to operate for many, many years. Precise life testing requires that units be operated until failure. The time required for this testing is far beyond the usefulness of the data (components are obsolete before the test is complete). Second, operational conditions vary significantly between control system installations. One site may have failure rates that are much higher than another site. Last, variations in usage will affect the reliability of a component. This is especially true when design faults exist in a product. Design faults are probable in the complex components used in today’s systems. Design faults, or “bugs,” are almost expected in complicated software.

In spite of the limitations of variability, imprecision, simplified assumptions, and different methods, rapid progress is being made in the area of safety and reliability evaluation. ISA standards committees are working in different areas of this field. ISA84 has a committee working on methods of calculating system reliability. Several methods that utilize the tools covered in this book are proposed.

Software reliability has been the subject of intense research for over a

decade. These efforts are beginning to show some results. This is important to the subject of control systems because of the explosive growth of

software within these systems. Although software engineering techniques

have provided better design fault avoidance methods, the growth has outstripped the improvements. Software reliability may well be the control

system reliability crisis of the future.

Safety and reliability are important design constraints for control systems. When those involved in the system design share a common vocabulary, understand evaluation methods, include all site variables, and understand how to evaluate reliable software, then safety and reliability can become true design parameters. This is the goal.

William M. Goble

Ottsville, PA

April 2010

About the Author

Dr. William M. Goble has more than 30 years of experience in analog and

digital electronic circuit design, software development, engineering

management and marketing. He is currently a founding member and

Principal Partner with exida, a knowledge company focused on

automation safety and reliability.

He holds a B.S. in electrical engineering from Penn State and an M.S. in

electrical engineering from Villanova. He has a Ph.D. from the

Department of Mechanical Reliability at Eindhoven University of

Technology in Eindhoven, Netherlands, and has done research in

methods of modeling the safety and reliability of automation systems. He

is a Professional Engineer in the state of Pennsylvania and holds a

Certified Functional Safety Expert certificate.

He is a well-known speaker and consultant and also develops and teaches

courses on various reliability and safety engineering topics. He has

written several books and has authored or co-authored many technical

papers and magazine articles, primarily on software and hardware safety

and reliability, and on quality improvement and quantitative modeling.

He is a Fellow Member of the International Society of Automation (ISA)

and is a member of IEEE, AIChE, and several international standards

committees.

Chapter 1 Introduction

Control System Safety and Reliability

Safety and reliability have been essential parameters of automatic control

systems design for decades. It is clearly recognized that a safe and reliable

system provides many benefits. Economic benefits include less lost production, higher quality product, reduced maintenance costs, and lower

risk costs. Other benefits include regulatory compliance, the ability to

schedule maintenance, and many others—including peace of mind and

the satisfaction of a job well done.

Given the importance of safety and reliability, how are they achieved?

How are they measured? The science of Reliability Engineering has

advanced quite a bit in recent decades. That science offers a number of

fundamental concepts used to achieve high reliability and high safety.

These concepts include high-strength design, fault-tolerant design, on-line

failure diagnostics, and high-common-cause strength. All of these important concepts will be developed in later chapters of this book. When these

concepts are actually understood and used, great benefits can result.

Reliability and safety are measured using a number of well-defined

parameters including Reliability, Availability, MTTF (Mean Time To Failure), RRF (Risk Reduction Factor), PFD (Probability of Failure on

Demand), PFDavg (Average Probability of Failure on Demand), PFS

(Probability of Safe Failure), and other special metrics. These terms have

been developed over the last 60 years or so by the reliability and safety

engineering community.

Reliability Engineering

The science of reliability engineering has developed a number of qualitative and semi-quantitative techniques that allow an engineer to understand system operation in the presence of a component failure. These techniques include failure modes and effects analysis (FMEA), qualitative fault tree analysis (FTA), and hazard and operability studies (HAZOP).

Other techniques based on probability theory and statistics allow the control engineer to quantitatively evaluate the reliability and safety of control system designs. Reliability block diagrams and fault trees use combinational probability to evaluate the system-level probability of success, probability of safe failure, or probability of dangerous failure. Another popular technique, Markov modeling, shows system success and failure via circles called states. These techniques will be covered in this book.
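The combinational probability behind a reliability block diagram can be sketched in a few lines of Python. This is a minimal illustration that assumes the blocks fail independently; the function names, block names, and success probabilities are hypothetical values chosen for the example, not data from this book.

```python
from math import prod


def series(probabilities):
    """Probability of success when every block in the chain must succeed."""
    return prod(probabilities)


def parallel(probabilities):
    """Probability of success when at least one redundant block must succeed."""
    return 1.0 - prod(1.0 - p for p in probabilities)


# Hypothetical success probabilities for one mission time (assumed values).
sensor, logic, valve = 0.95, 0.99, 0.90

# Sensor -> logic solver -> single valve, all in series.
single_chain = series([sensor, logic, valve])

# Same chain, but with two redundant valves in parallel.
redundant_valves = series([sensor, logic, parallel([valve, valve])])

print(f"single valve:     {single_chain:.4f}")
print(f"redundant valves: {redundant_valves:.4f}")
```

Under the independence assumption, adding the second valve raises the system-level probability of success; common-cause failures, covered later in the book, would reduce that benefit.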

Reliability engineering is built upon a foundation of probability and statistics. But a successful control system reliability evaluation depends just as much on control and safety systems knowledge. This knowledge includes an understanding of the components used in these systems, the component failure modes and their effect on the system, and the system failure modes and failure stress sources present in the system environment. Thus logic, systems engineering, and some mathematics are combined to complete the tool-set needed for reliability and safety evaluation. Real-world factors, including on-line diagnostic capability, repair times, software failures, human failures, common-cause failures, failure modes, and time-dependent failure rates, must be addressed in a complete analysis.

Life-cycle cost modeling may be the most useful technique of all to answer questions of optimal cost and justification. Using this analysis tool, the output of a reliability analysis in the language of statistics is converted to the clearly understood language of money. It is frequently quite surprising how much money can be saved using reliable and safe equipment. This is especially true when the cost of failure is high.

Perspective

The field of reliability engineering is relatively new compared to other engineering disciplines, with significant research having been driven by military needs in the mid-1940s. Introductory work in hardware reliability was done in conjunction with the German V2 rocket program, where innovations such as the 2oo3 (two out of three) voting scheme were invented [Ref. 1, 2]. Human reliability research began with American studies done on radar operators and gunners during World War II. Military systems were among the first to reach complexity levels at which reliability engineering became important. Methods were needed to answer important questions, such as: “Which configuration is more reliable on an airplane, four small engines or two large engines?”
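Both the 2oo3 voting scheme and the engine question can be explored with a k-out-of-n calculation. The Python sketch below is only an illustration under loud assumptions: units fail independently, every unit has the same probability of success, and the airplane is assumed to need at least half of its engines. The numeric values are hypothetical, and real engines of different sizes would not share one reliability figure.

```python
from math import comb


def k_out_of_n(k, n, r):
    """Probability that at least k of n identical, independent units succeed."""
    return sum(comb(n, i) * r**i * (1.0 - r) ** (n - i) for i in range(k, n + 1))


# 2oo3 voting: the system works if at least two of three channels work.
channel = 0.9  # assumed channel success probability
voted = k_out_of_n(2, 3, channel)  # equals 3r^2 - 2r^3

# Engine question, assuming the airplane needs at least half of its engines
# and every engine has the same (hypothetical) per-flight success probability.
engine = 0.99
two_engines = k_out_of_n(1, 2, engine)
four_engines = k_out_of_n(2, 4, engine)

print(f"2oo3 voted system: {voted:.6f}")
print(f"two engines:       {two_engines:.8f}")
print(f"four engines:      {four_engines:.8f}")
```

The answer depends entirely on the assumptions: changing how many engines are required, or giving the small and large engines different reliabilities, can reverse the ranking.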

Control systems and safety protection systems have also followed an evolutionary path toward greater complexity. Early control systems were simple. Push buttons and solenoid valves, sight gauges, thermometers, and dipsticks were typical control tools. Later, single-loop pneumatic controllers dominated. Most of these machines were not only inherently reliable; many also failed in predictable ways. With a pneumatic system, when the air tubes leaked, the output went down. When an air filter clogged, the output went to zero. When the hissing noise changed, a good technician could “run diagnostics” just by listening to determine where the problem was. Safety protection systems were built from relays and sensing switches. With the addition of safety springs and special contacts, these devices would virtually always fail with the contacts open. Again, they were simple devices that were inherently reliable with predictable, (mostly) fail-safe failure modes.

The inevitable need for better processes eventually pushed control systems to a level of complexity at which sophisticated electronics became the

optimal solution for control and safety protection. Distributed microcomputer-based controllers introduced in the mid-1970s offered economic

benefits, improved reliability, and flexibility.

The level of complexity in our control systems has continued to increase,

and programmable electronic systems have become the standard. Systems

today utilize a hierarchical collection of computers of all sizes, from microcomputer-based sensors to world-wide computer communication networks. Industrial control and safety protection systems are now among

the most complex systems anywhere. These complex systems are the type

that can benefit most from reliability engineering. Control systems designers need answers to their questions: “Which control architecture gives the

best reliability for the application?” “What combination of systems will

give me the lowest cost of ownership for the next five years?” “Should I

use a personal computer to control our reactor?” “What architecture is

needed to meet SIL3 safety requirements?”

These questions are best answered using quantitative reliability and safety analysis. Markov analysis has been developed into one of the best techniques for answering these questions, especially when time-dependent variables such as imperfect proof testing are important. Failure Modes, Effects and Diagnostic Analysis (FMEDA) has been developed and refined as a new tool for quantitative measurement of diagnostic capability. These new tools and refined methods have made it easier to optimize designs using reliability engineering.
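As a rough illustration of the Markov approach, the following Python sketch steps a two-state discrete-time model (state 0 = working, state 1 = failed) forward in time. The one-hour time step and the per-step failure and repair probabilities are assumed values for illustration only, and the model is far simpler than those developed later in the book.

```python
def step(state, transition):
    """Advance the state probability vector by one time step."""
    n = len(state)
    return [sum(state[i] * transition[i][j] for i in range(n)) for j in range(n)]


# Hypothetical per-hour transition probabilities (assumptions, not book data).
failure_prob = 0.001  # working -> failed
repair_prob = 0.1     # failed -> working

# Row i holds the probabilities of moving from state i to each state j.
P = [
    [1.0 - failure_prob, failure_prob],
    [repair_prob, 1.0 - repair_prob],
]

state = [1.0, 0.0]  # start in the working state
for _ in range(10_000):
    state = step(state, P)

# For this two-state model the limiting availability has a closed form.
steady_state = repair_prob / (failure_prob + repair_prob)

print(f"availability after 10,000 steps: {state[0]:.6f}")
print(f"closed-form steady state:        {steady_state:.6f}")
```

Because the state vector is recomputed at every step, time-dependent effects such as periodic proof tests could be modeled by changing the transition matrix at chosen steps.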

Standards

Many new international standards have been created in the world of

reliability engineering. Standards now provide detailed methods of

determining component failure rates [Ref. 3]. Standards provide checklists

of issues that should be addressed in qualitative evaluation. Standards

define performance measures against which quantitative reliability and

safety calculations can be compared. Standards also provide explanations

and examples of how systems can be designed to maximize safety and

reliability.

Several of these international standards play an important role in the

safety and reliability evaluation of control systems. The ISA-84.01 standard [Ref. 4], Applications of Safety Instrumented Systems for the Process

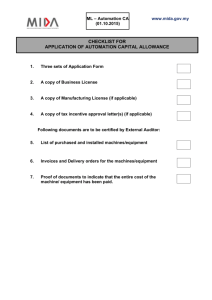

Industries, was a pioneering effort and first described quantitative means

to show safety integrity (Figure 1-1). It also described the boundaries of

the Safety Instrumented System (SIS) and the Basic Process Control System (BPCS). When used with ANSI/ISA-91.01 [Ref. 5], which provides

definitions to identify components of a safety critical system, various plant

equipment can be classified into the proper group.

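| Safety Integrity Level | Probability of Failure on Demand (PFDavg) | Risk Reduction Factor (RRF) |
| --- | --- | --- |
| 4 | $10^{-4} > \mathrm{PFDavg} \ge 10^{-5}$ | $10000 < \mathrm{RRF} \le 100000$ |
| 3 | $10^{-3} > \mathrm{PFDavg} \ge 10^{-4}$ | $1000 < \mathrm{RRF} \le 10000$ |
| 2 | $10^{-2} > \mathrm{PFDavg} \ge 10^{-3}$ | $100 < \mathrm{RRF} \le 1000$ |
| 1 | $10^{-1} > \mathrm{PFDavg} \ge 10^{-2}$ | $10 < \mathrm{RRF} \le 100$ |

Figure 1-1. Safety Integrity Levels (SIL)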
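The bands in Figure 1-1 can be expressed as a small lookup. The Python sketch below assumes that the risk reduction factor is the reciprocal of PFDavg and that the lower PFDavg bound of each band is inclusive; the function names are hypothetical, and the applicable standard should be consulted for the authoritative band limits.

```python
def risk_reduction_factor(pfd_avg):
    """Risk reduction factor, taken here as the reciprocal of PFDavg."""
    return 1.0 / pfd_avg


def sil_from_pfd_avg(pfd_avg):
    """Return the SIL band (1 to 4) containing pfd_avg, or None if outside all bands."""
    # (SIL, inclusive lower bound, exclusive upper bound) -- an assumed reading of Figure 1-1.
    bands = [
        (4, 1e-5, 1e-4),
        (3, 1e-4, 1e-3),
        (2, 1e-3, 1e-2),
        (1, 1e-2, 1e-1),
    ]
    for sil, low, high in bands:
        if low <= pfd_avg < high:
            return sil
    return None


pfd = 5e-3  # hypothetical calculated PFDavg for a safety function
print(f"PFDavg = {pfd:g}, RRF = {risk_reduction_factor(pfd):.0f}, SIL = {sil_from_pfd_avg(pfd)}")
```

A design whose calculated PFDavg falls outside every band returns `None`, which signals that it does not fit any of the four levels shown in the figure.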

ISA-84.01 also pioneered the concept of a “safety life-cycle,” a systematic

design process that begins with conceptual process design and ends with

SIS decommissioning. A simplified version of the safety life-cycle chart is

shown in Figure 1-2.

[Flowchart boxes: Conceptual Process Design; Hazard and Risk Analysis; Develop Safety Requirements, Determine SIL; Perform Conceptual SIS Design; Perform Detail SIS Design; Verify Safety Requirements Have Been Met (*); Create Maintenance-Operations Procedures; Maintenance-Operations: Perform Periodic Testing; SIS Modification or De-commission. (*) verification of requirements/SIL]

Figure 1-2. Simplified Safety Life-cycle (SLC)

The original ISA-84.01-1996 standard has been replaced by the updated

ANSI/ISA-84.00.01-2004 (IEC 61511 Mod) [Ref. 6]. This standard is almost

word-for-word identical with the IEC 61511 [Ref. 7] standard used worldwide, except for a clause added to cover existing installations. This standard is part of a family of international functional safety standards that

cover various industries. The entire family of standards is based on the

IEC 61508 [Ref. 8] standard, which is non-industry-specific and is used as

a reference or “umbrella” standard for the entire family. Many believe this

family of standards will have more influence on the field of reliability

engineering than any other standard written.

Qualitative versus Quantitative

There is healthy skepticism from some experienced control system engineers regarding quantitative safety and reliability engineering. This might

be a new interpretation of the old quotation, “There are lies, damned lies,

and statistics.” Quantitative evaluation does utilize some statistical methods. Consequently, there will be uncertainty in the results. There will be

real variations between predicted results and actual results. There will

even be significant variations in actual results from system site to system

site. This doesn’t mean that the methods are not valid. It does mean that

the methods are statistical and generalize many sets of data into one.


The controversy may also come from the experiences that gave rise to

another famous quotation, “Garbage in, garbage out.” Poor failure rate

estimates and poor simplification assumptions can ruin the results of any

reliability and safety evaluation. Good qualitative reliability engineering

should be used to prevent “garbage” from going into the evaluation.

Qualitative engineering provides the foundation for all quantitative work.

Quantitative safety and reliability evaluation can add great depth and

insight into the design of a system and design alternatives. Sometimes

intuition can be deceiving. After all, it was once intuitively obvious that

the world was flat. Many aspects of probability and reliability can appear

counter-intuitive. The quantitative reliability evaluation either verifies the

qualitative evaluation or adds substantially to it. Therein lies its value.

Exercises

1.1 Are methods used to determine safety integrity levels of an industrial process presented in ANSI/ISA-84.00.01-2004 (IEC 61511 Mod)?

1.2 Are safety integrity levels defined by order of magnitude quantitative numbers?

1.3 Can quantitative evaluation techniques be used to verify safety integrity requirements?

1.4 Should quantitative techniques be used exclusively to verify safety integrity?


Quantitative safety and reliability evaluation is a growing science. Knowledge and techniques grow and evolve each year. In spite of variation and

uncertainty, quantitative techniques can be valuable. As Lord Kelvin

stated, “… but when you cannot express it with numbers, your knowledge

is of a meagre and unsatisfactory kind; it may be the beginning of knowledge, but you have scarcely, in your thoughts, advanced to the stage of

science, whatever the matter may be.” The statement applies to control

systems safety and reliability.


Answers to Exercises

1.1 Yes, ANSI/ISA-84.00.01-2004 (IEC 61511 Mod) describes the concept of safety integrity levels and presents example methods on how to determine the safety integrity level of a process.

1.2 Yes, in the ISA-84.01-1996, IEC 61508 and ANSI/ISA-84.00.01-2004 (IEC 61511 Mod) standards.

1.3 Yes, if quantitative targets (typically an SIL level and required reliability) are defined as part of the safety requirements.

1.4 Not in the opinion of the author. Qualitative techniques are required as well in order to properly understand how the system works under failure conditions. Qualitative guidelines should be used in addition to quantitative analysis.

References

1. Coppola, A. “Reliability Engineering of Electronic Equipment: A Historical Perspective.” IEEE Transactions on Reliability. IEEE, April 1984.

2. Barlow, R. E. “Mathematical Theory of Reliability: A Historical Perspective.” IEEE Transactions on Reliability. IEEE, April 1984.

3. IEC 62380 Electronic Components Failure Rates. Geneva: International Electrotechnical Commission, 2005.

4. ANSI/ISA-84.01-1996 (approved February 15, 1996) - Applications of Safety Instrumented Systems for the Process Industries. Research Triangle Park: ISA, 1996.

5. ANSI/ISA-91.00.01-2001 - Identification of Emergency Shutdown Systems and Controls That Are Critical to Maintaining Safety in Process Industries. Research Triangle Park: ISA, 2001.

6. ANSI/ISA-84.00.01-2004, Parts 1-3 (IEC 61511-1-3 Mod) - Functional Safety: Safety Instrumented Systems for the Process Industry Sector. Research Triangle Park: ISA, 2004.

   Part 1: Framework, Definitions, System, Hardware and Software Requirements.

   Part 2: Guidelines for the Application of ANSI/ISA-84.00.01-2004 Part 1 (IEC 61511-1 Mod) – Informative.

   Part 3: Guidance for the Determination of the Required Safety Integrity Levels – Informative.

7. IEC 61511-2003 - Functional Safety – Safety Instrumented Systems for the Process Industry Sector. Geneva: International Electrotechnical Commission, 2003.

8. IEC 61508-2000 - Functional Safety of Electrical/Electronic/Programmable Electronic Safety-related Systems. Geneva: International Electrotechnical Commission, 2000.


2

Understanding

Random Events

Random Variables

The concept of a random variable seems easy to understand, and yet many questions and statements indicate misunderstanding. For example, the random variable in Reliability Engineering is “time to failure.” A manager reads that on average an industrial boiler explodes every fifteen years (the average time to failure is fifteen years) and knows that the unit in the plant has been running fourteen years. The manager calls a safety engineer to determine how to avoid the explosion next year. This is clearly a misunderstanding: an average time to failure does not say when any particular unit will fail.

We classify boiler explosions and many other types of failure events as

random because with limited statistical operating time data we often can

only predict chances and averages, not specific events at specific times.

Predictions are based on statistical data gathered from a large number of

sources. Statistical techniques are used because they offer the best information obtainable, but the timing of a failure event often cannot be precisely predicted.

The process of failure is like many other processes that have variations in

outcome that cannot be predicted by substituting variables into a formula.

Perhaps the exact formula is not understood. Or perhaps the variables

involved are not completely understood. These processes are called

random (stochastic) processes, primarily because they are not well

characterized.

Some random variables can have only certain values. These random variables are called “discrete” random variables. Other variables can have a

numerical value anywhere within a range. These are called “continuous”


random variables. Statistics are used to gain some knowledge about these

random variables and the processes that produce them.

Statistics

Statistics are usually based on data samples. Consider the case of a

researcher who wants to understand how a computer program is being

used. The researcher calls six computer program users at each of twenty

different locations and asks what specific program function is being used

at that moment. The program functions are categorized as follows:

Category 1 - Editing Functions, such as Cut, Copy, and Paste

Category 2 - Input Functions, such as Data Entry

Category 3 - Output Functions, such as Printing and Formatting

Category 4 - Disk Functions

Category 5 - Check Functions, such as Spelling and Grammar

The results of the survey (sample data) are presented in Table 2-1. This is a

list of data values.

Table 2-1. Computer Program Function Usage

           User 1   User 2   User 3   User 4   User 5   User 6
Site 1       1        2        3        2        4        1
Site 2       3        3        3        2        2        1
Site 3       2        2        1        3        3        2
Site 4       1        3        2        2        2        2
Site 5       2        1        4        3        3        2
Site 6       2        2        3        2        3        2
Site 7       1        2        2        3        2        1
Site 8       2        2        2        2        3        2
Site 9       3        3        2        2        2        4
Site 10      2        2        2        2        2        2
Site 11      2        5        2        2        3        2
Site 12      3        2        2        4        2        2
Site 13      5        2        1        2        2        3
Site 14      2        2        2        3        2        2
Site 15      3        2        2        4        2        2
Site 16      2        2        2        3        2        5
Site 17      2        3        1        2        2        3
Site 18      1        2        2        2        2        2
Site 19      2        2        3        2        3        1
Site 20      2        2        2        2        1        2


Histogram

One of the more common ways to organize data is the histogram (see

Table 2-1). A histogram is a graph with data values on the horizontal axis

and the quantity of samples with each value on the vertical axis. A histogram of data for Table 2-1 is shown in Figure 2-1.

Figure 2-1. Histogram of Computer Usage

EXAMPLE 2-1

Problem: Assume that the computer usage survey results of Figure

2-1 are representative for all users. If another call is made to a user,

what is the probability that the user will be using a function in

category five?

Solution: The histogram shows that three answers from the total of

one hundred and twenty were within category five. Therefore, the

chances of getting an answer in category five are 3/120, which is

2.5%.
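The counting behind the histogram and Example 2-1 can be sketched in a few lines. The survey values below are transcribed from Table 2-1; the variable names are illustrative only.

```python
from collections import Counter

# Survey answers from Table 2-1: one row per site, one entry per user.
SURVEY = [
    [1, 2, 3, 2, 4, 1], [3, 3, 3, 2, 2, 1], [2, 2, 1, 3, 3, 2], [1, 3, 2, 2, 2, 2],
    [2, 1, 4, 3, 3, 2], [2, 2, 3, 2, 3, 2], [1, 2, 2, 3, 2, 1], [2, 2, 2, 2, 3, 2],
    [3, 3, 2, 2, 2, 4], [2, 2, 2, 2, 2, 2], [2, 5, 2, 2, 3, 2], [3, 2, 2, 4, 2, 2],
    [5, 2, 1, 2, 2, 3], [2, 2, 2, 3, 2, 2], [3, 2, 2, 4, 2, 2], [2, 2, 2, 3, 2, 5],
    [2, 3, 1, 2, 2, 3], [1, 2, 2, 2, 2, 2], [2, 2, 3, 2, 3, 1], [2, 2, 2, 2, 1, 2],
]

# Flatten the table and count how many answers fall in each category.
answers = [category for site in SURVEY for category in site]
counts = Counter(answers)
total = len(answers)

# Text histogram: one '#' per answer in the category.
for category in sorted(counts):
    print(f"Category {category}: {counts[category]:3d} {'#' * counts[category]}")

# Relative frequency used as the probability estimate in Example 2-1.
p_category_5 = counts[5] / total
print(f"P(category 5) = {counts[5]}/{total} = {p_category_5:.3f}")
```

The estimate is only as good as the assumption stated in the example, namely that the survey is representative of all users.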

Probability Density Function

A probability density function (PDF) relates the value of a random variable with the probability of getting that value (or value range). For discrete

random variables, a PDF provides the probability of getting each result.

For continuous random variables, a PDF provides the probability of getting a random variable value within a range. The random variable values typically form the horizontal axis, and probability numbers (a range of 0 to 1) form the vertical axis.

A probability density function has the following properties:

$$f(x) \ge 0 \text{ for all } x \tag{2-1}$$

and

$$\sum_{i=1}^{n} P(x_i) = 1 \tag{2-2}$$

for discrete random variables or

$$\int_{-\infty}^{+\infty} f(x)\,dx = 1 \tag{2-3}$$

for continuous random variables.

Figure 2-2 shows a discrete PDF for the toss of a pair of fair dice. There are 36 possible combinations of the two dice, which produce 11 possible outcomes (the sums 2 through 12). The probability of getting a result of seven is 6/36 because there are six combinations that result in a seven. The probability of getting a result of two is 1/36 because there is only one combination of the dice that will give that result. Again, the probabilities total to one.

Figure 2-2. Dice Toss Probability Density Function
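The dice toss PDF can be rebuilt by enumerating every combination of two fair dice. The following is a small sketch with illustrative variable names; exact fractions are used so that the total can be checked without rounding.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

# All 36 equally likely combinations of two six-sided dice.
combos = list(product(range(1, 7), repeat=2))

# Count how many combinations produce each sum, then convert to probabilities.
counts = Counter(a + b for a, b in combos)
dice_pdf = {total: Fraction(n, len(combos)) for total, n in sorted(counts.items())}

for total, p in dice_pdf.items():
    print(f"P({total:2d}) = {p.numerator}/{p.denominator}")

# Property 2-2: the discrete probabilities sum to one.
print("Sum of probabilities:", sum(dice_pdf.values()))
```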


For a continuous random variable, the PDF again shows probability as a

function of random variables. But with a continuous random variable,

interest is in the probability of getting a process outcome within a range,

not a particular value. Figure 2-3 shows a continuous PDF for a process

that can have an outcome between 100 and 110. All outcomes are equally

likely.

Figure 2-3. Uniform Distribution Probability Density Function

Distributions in which all outcomes are equally likely are called “uniform”

distributions. The probability of getting an outcome within an interval is

proportional to the length of the interval. For example, the probability of getting a result between 101.0 and 101.2 is 0.02 (0.2 interval length × 0.1 probability density). As the length of the interval is reduced, the probability of getting a result within the interval drops. The probability of getting an exact particular value is zero.

For a continuous PDF, the probability of getting an outcome in the range

from a to b equals

$$P(a \le X \le b) = \int_{a}^{b} f(x)\,dx \tag{2-4}$$

EXAMPLE 2-2

Problem: A uniform PDF over the interval 0 to 1000 for a random

variable X is given by f(x) = 0.001 in the range between 0 and 1000,

and 0 otherwise. What is the probability of an outcome in the range of

110 to 120?

Solution: Using Equation 2-4,

$$P(110 \le X \le 120) = \int_{110}^{120} 0.001\,dx = 0.001x\,\Big|_{110}^{120} = 0.001(120 - 110) = 0.01$$

EXAMPLE 2-3

Problem: The PDF for the random variable t is given by

$$f(t) = kt, \text{ if } 0 \le t \le 4; \quad 0 \text{ otherwise.}$$

What is the value of k?

Solution: Using Equation 2-3 and substituting for f(t):

$$\int_{0}^{4} kt\,dt = 1$$

which equals

$$\frac{kt^2}{2}\,\Big|_{0}^{4} = 1$$

Evaluating,

$$\frac{16k}{2} = 1, \quad \therefore k = 0.125$$


EXAMPLE 2-4

Problem: A random variable T has a PDF of $f(t) = 0.01e^{-0.01t}$ if t ≥ 0, and 0 if t < 0. What is the probability of getting a result when T is between 0 and 50?

Solution: Using Equation 2-4,

$$P(0 \le t \le 50) = \int_{t=0}^{t=50} 0.01e^{-0.01t}\,dt$$

Integrating by substitution (u = –0.01t), we obtain

$$P(0 \le t \le 50) = \int_{t=0}^{t=50} 0.01e^{-0.01t}\,dt = \int_{u=0}^{u=-0.5} 0.01e^{u}\,\frac{du}{-0.01}$$

Simplifying and converting back to t,

$$-\int_{u=0}^{u=-0.5} e^{u}\,du = -e^{u}\,\Big|_{0}^{-0.5} = -e^{-0.01t}\,\Big|_{0}^{50}$$

Evaluating,

$$= \left[-e^{-0.01(50)}\right] - \left[-e^{-0.01(0)}\right]$$

= – 0.60653 + 1 = 0.39347
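The results of Examples 2-2 through 2-4 can be cross-checked numerically. The sketch below uses a simple midpoint rule; the helper name `integrate` and the step count are arbitrary choices made for illustration, not a method taken from the text.

```python
import math


def integrate(f, a, b, n=100_000):
    """Midpoint-rule approximation of the integral of f from a to b."""
    h = (b - a) / n
    return h * sum(f(a + (i + 0.5) * h) for i in range(n))


# Example 2-2: uniform PDF f(x) = 0.001 on 0..1000, probability of 110..120.
p_uniform = integrate(lambda x: 0.001, 110, 120)

# Example 2-3: f(t) = k*t on 0..4 must integrate to one, so k = 1 / integral of t.
k = 1.0 / integrate(lambda t: t, 0, 4)

# Example 2-4: exponential PDF 0.01*exp(-0.01*t), probability of 0..50.
p_exponential = integrate(lambda t: 0.01 * math.exp(-0.01 * t), 0, 50)

print(f"Example 2-2: {p_uniform:.5f}")
print(f"Example 2-3: k = {k:.5f}")
print(f"Example 2-4: {p_exponential:.5f}")
```

The numerical values should agree with the analytical answers (0.01, 0.125 and 0.39347) to within the accuracy of the integration.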

Cumulative Distribution Function

A cumulative distribution function (CDF) is defined by the equation

$$F(x_j) = \sum_{i=1}^{j} P(x_i) \tag{2-5}$$

for discrete random variables and

$$F(x) = \int_{-\infty}^{x} f(x)\,dx \tag{2-6}$$

for continuous random variables. A CDF is often represented by the capital letter F. The cumulative distribution function represents the cumulative probability of getting a result in the interval from minus infinity to the

present value of x. It equals the area under the PDF curve accumulated up

to the present value.

A cumulative distribution function has the following properties:

$$F(\infty) = 1, \quad F(-\infty) = 0, \quad \text{and} \quad \frac{dF}{dx} \ge 0$$

The probability of a random process result within an interval can be

obtained using a CDF.

It can be shown that

$$P(a \le X \le b) = \int_{a}^{b} f(x)\,dx = F(b) - F(a) \tag{2-7}$$

This means that the area under the PDF curve between a and b is simply the CDF value at b (the PDF area to the left of b) minus the CDF value at a (the PDF area to the left of a).

EXAMPLE 2-5

Problem: Calculate and graph the discrete CDF for a dice toss

process where the PDF is as shown in Figure 2-2.

Solution: A cumulative distribution function is the sum of previous

probabilities at each discrete variable. Table 2-2 shows the sum of the

probabilities for each result.

Table 2-2. Sum of Probabilities (Example 2-5)

Result    Probability    Cumulative Probability
2         0.0278         0.0278
3         0.0556         0.0834
4         0.0834         0.1668
5         0.1111         0.2779
6         0.1388         0.4167
7         0.1666         0.5833
8         0.1388         0.7221
9         0.1111         0.8332
10        0.0834         0.9166
11        0.0556         0.9722
12        0.0278         1.000


The CDF is plotted in Figure 2-4.

Figure 2-4. Dice Toss Cumulative Distribution Function
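The running sum used to build the discrete CDF in Example 2-5 can be sketched as follows. Exact fractions are used here, so the decimals may differ slightly in the last digit from the rounded values in Table 2-2. The expression `6 - abs(7 - s)` is just a compact way to write the number of combinations for each sum s.

```python
from fractions import Fraction
from itertools import accumulate

# Dice toss PDF: the sum s (2..12) can be produced by 6 - |7 - s| combinations out of 36.
outcomes = list(range(2, 13))
pdf = [Fraction(6 - abs(7 - s), 36) for s in outcomes]

# Equation 2-5: the CDF at each outcome is the running sum of the PDF.
cdf = dict(zip(outcomes, accumulate(pdf)))

for s in outcomes:
    print(f"{s:2d}  {float(pdf[s - 2]):.4f}  {float(cdf[s]):.4f}")
```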

EXAMPLE 2-6

Problem: Calculate and plot the cumulative distribution function for

the PDF shown in Figure 2-3.

Solution: During the interval from 100 to 110, the PDF equals a constant, 0.1. The PDF equals zero for other values of x. Therefore, using Equation 2-6 and noting that the PDF contributes nothing below 100,

$$F(x) = \int_{-\infty}^{x} f(x)\,dx = \int_{100}^{x} 0.1\,dx = 0.1(x - 100) \quad \text{for } 100 \le x \le 110$$

The CDF is zero for values of x less than 100. The CDF is one for values of x greater than 110. This CDF is plotted in Figure 2-5.


Figure 2-5. Uniform Distribution: Cumulative Distribution Function of Figure 2-3
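A short sketch of this uniform CDF, together with the use of Equation 2-7 to obtain an interval probability, is given below. The function names are illustrative; the limits 100 and 110 are those of Figure 2-3.

```python
def uniform_cdf(x, low=100.0, high=110.0):
    """CDF of a uniform distribution on [low, high]."""
    if x < low:
        return 0.0
    if x > high:
        return 1.0
    return (x - low) / (high - low)


def interval_probability(a, b):
    """Equation 2-7: P(a <= X <= b) = F(b) - F(a)."""
    return uniform_cdf(b) - uniform_cdf(a)


if __name__ == "__main__":
    print(uniform_cdf(100.0), uniform_cdf(105.0), uniform_cdf(110.0))
    # Interval used earlier in the text: 101.0 to 101.2.
    print(round(interval_probability(101.0, 101.2), 6))
```

The interval from 101.0 to 101.2 gives a probability of about 0.02, matching the value quoted for the uniform PDF.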

Mean

The average value of a random variable is called the “mean” or “expected

value” of a distribution. For discrete random variables, the equation for

mean, E, is

$$E(x_i) = \bar{x} = \sum_{i=1}^{n} x_i P(x_i) \tag{2-8}$$

The xi in this equation refers to each discrete variable outcome. The n

refers to the number of different possible outcomes. P(xi) represents the

probability of each discrete variable outcome.

EXAMPLE 2-7

Problem: Calculate the mean value of a dice toss process.

Solution: Using Equation 2-8,

$$\begin{aligned}
E(x) &= 2\left(\tfrac{1}{36}\right) + 3\left(\tfrac{2}{36}\right) + 4\left(\tfrac{3}{36}\right) + 5\left(\tfrac{4}{36}\right) \\
&\quad + 6\left(\tfrac{5}{36}\right) + 7\left(\tfrac{6}{36}\right) + 8\left(\tfrac{5}{36}\right) + 9\left(\tfrac{4}{36}\right) \\
&\quad + 10\left(\tfrac{3}{36}\right) + 11\left(\tfrac{2}{36}\right) + 12\left(\tfrac{1}{36}\right) \\
&= \frac{252}{36} = 7
\end{aligned}$$

Another, more common form of mean is given by Equation 2-9:

$$\bar{x} = \frac{\sum_{i=1}^{n} x_i}{n} \tag{2-9}$$

where xi refers to each data sample in a collection of n samples. This is

called the “sample mean.” As the number of samples grows larger, the

results obtained from Equation 2-9 will get closer to the results obtained

from Equation 2-8. When a large number of samples are used, the probabilities are inherently included in the calculation.

EXAMPLE 2-8

Problem: A pair of fair dice is tossed ten times. The results are 7, 2,

4, 6, 10, 12, 3, 5, 4, and 2. What is the sample mean?

Solution: Using Equation 2-9, the samples are added and divided by

the number of samples. The answer is 5.5. Compare this to the

answer of seven obtained in Example 2-7. The difference of 1.5 is

large but not unreasonable since our sample size of ten is small.

EXAMPLE 2-9

Problem: The dice toss experiment is continued. The next 90

samples are 6, 8, 5, 7, 9, 4, 7, 11, 3, 6, 10, 7, 4, 6, 4, 8, 8, 5, 9, 3, 2,

11, 9, 8, 2, 9, 5, 11, 12, 8, 9, 7, 10, 6, 6, 8, 10, 7, 8, 11, 6, 7, 9, 8, 7, 8,

7, 10, 7, 6, 8, 7, 9, 11, 7, 7, 12, 7, 7, 9, 5, 6, 3, 7, 6, 10, 8, 7, 8, 6, 10,

7, 11, 6, 9, 11, 5, 8, 7, 4, 6, 8, 9, 7, 10, 9, 11, 7, 4, and 7. What is the

sample mean?

Solution: Using Equation 2-9, the samples are added and divided by

the number of samples. The total of all one hundred samples

(including those from Example 2-8) is 725. Dividing this by the

number of samples yields a sample mean result of 7.25. This result is

closer to the distribution mean obtained in Example 2-7.
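The sample means of Examples 2-8 and 2-9 can be reproduced directly from the listed data. The sketch below uses the standard library `statistics.mean`; the variable names are illustrative.

```python
from statistics import mean

# Ten tosses from Example 2-8.
first_10 = [7, 2, 4, 6, 10, 12, 3, 5, 4, 2]

# Ninety further tosses from Example 2-9.
next_90 = [
    6, 8, 5, 7, 9, 4, 7, 11, 3, 6, 10, 7, 4, 6, 4, 8, 8, 5, 9, 3, 2,
    11, 9, 8, 2, 9, 5, 11, 12, 8, 9, 7, 10, 6, 6, 8, 10, 7, 8, 11, 6, 7, 9, 8, 7, 8,
    7, 10, 7, 6, 8, 7, 9, 11, 7, 7, 12, 7, 7, 9, 5, 6, 3, 7, 6, 10, 8, 7, 8, 6, 10,
    7, 11, 6, 9, 11, 5, 8, 7, 4, 6, 8, 9, 7, 10, 9, 11, 7, 4, 7,
]

all_100 = first_10 + next_90

# Equation 2-9 applied to the small and the large sample.
print("n = 10 :", mean(first_10))
print("n = 100:", mean(all_100))
print("distribution mean (Example 2-7): 7")
```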

For continuous random variables, the equation for mean value is

$$E(x) = \int_{-\infty}^{\infty} x f(x)\,dx \tag{2-10}$$

EXAMPLE 2-10

Problem: The PDF for a random variable is given by f(t) = 1/8 t, if

0 ≤ t ≤ 4; 0 otherwise. What is the mean?

Solution: Using Equation 2-10,

$$E(t) = \int_{0}^{4} t\,\frac{1}{8}\,t\,dt$$

Integrating,

$$E(t) = \frac{1}{8}\,\frac{t^3}{3}\,\Big|_{0}^{4} = \frac{64}{24}$$

The mean represents the “center of mass” of a distribution. Example 2-10 shows this attribute. The distribution spans values from zero to four. The mean is 64/24 (2.667). This value is greater than the midpoint of the range, two, because the PDF increases with t and places more probability on the larger values.

Median

The median value (or simply, “median”) of a sample data set is a measure

of center. The median value is defined as the data sample where there are

an equal number of samples of greater value and lesser value. It is the

“middle” value in an ordered set. If there are two middle values, it is the

value halfway between those two values.

Knowing both the mean and the median allows one to evaluate symmetry.

In a perfectly symmetric PDF, such as a normal distribution, the mean and

the median are the same. In an asymmetric PDF, such as the exponential,

the mean and the median are different.

EXAMPLE 2-11

Problem: Find the median value of a dice toss process.

Solution: The set of possible values is 2, 3, 4, 5, 6, 7, 8, 9, 10, 11,

and 12. Since there are eleven values, the middle value would be the

sixth. Counting shows the median value to be seven.


EXAMPLE 2-12

Problem: Find the median value of the sample data presented in

Example 2-8.

Solution: Sorting the 10 values provides the list: 2, 2, 3, 4, 4, 5, 6, 7,

10, and 12. Two middle values are present, a four and a five.

Therefore, the median is halfway between these two at 4.5.
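The median calculations in Examples 2-11 and 2-12 can be checked with the standard library. `statistics.median` sorts the data and, when two middle values are present, returns the value halfway between them, which matches the definition given above.

```python
from statistics import median

# Example 2-11: the eleven possible results of a dice toss.
possible_results = list(range(2, 13))
dice_median = median(possible_results)

# Example 2-12: the ten samples from Example 2-8.
samples = [7, 2, 4, 6, 10, 12, 3, 5, 4, 2]
sample_median = median(samples)

print("Example 2-11 median:", dice_median)
print("Example 2-12 median:", sample_median)
```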

Variance

As noted, the mean is a measure of the “center of mass” of a distribution.

Knowing the center is valuable, but more must be known. Another important characteristic of a distribution is spread—the amount of variation. A

process under control will be consistent and will have little variation. A

measure of variation is important for control purposes [Ref. 1, 2].

One way to measure variation is to subtract the mean from each value. When this is done, the question, “How far from the center is the data?” can be answered mathematically. For each discrete data point, calculate

$$(x_i - \bar{x}) \tag{2-11}$$

In order to calculate variance, these differences are squared to obtain all positive numbers and then averaged. When there is a given discrete probability distribution, the formula for variance is

$$\sigma^2(x_i) = \sum_{i=1}^{n} (x_i - \bar{x})^2 P(x_i) \tag{2-12}$$

where xi refers to each discrete outcome and P(xi) refers to the probability of realizing each discrete outcome. If a set of sample data is given, the formula for sample variance is

$$s^2(x_i) = \frac{\sum_{i=1}^{n} (x_i - \bar{x})^2}{n} \tag{2-13}$$

where xi refers to each piece of data in the set. The size of the data set is

given by n. As in the case of the sample mean, the sample variance will

provide a result similar to the actual variance as the sample size grows

larger. But, there is no guarantee that these numbers will be equal. For this

reason, the sample variance is sometimes called an “estimator” variance.


For continuous random variables, the variance is given by

$$\sigma^2(x) = \int_{-\infty}^{\infty} (x - \bar{x})^2 f(x)\,dx \tag{2-14}$$

Standard Deviation

A standard deviation is the square root of variance. The formula for standard deviation is

$$\sigma(x) = \sqrt{\sigma^2(x)} \tag{2-15}$$

Standard deviation is often assigned the lowercase Greek letter sigma (σ).

It is a measure of “spread” just like variance and is most commonly associated with the normal distribution.

EXAMPLE 2-13

Problem: What is the variance of a dice toss process?

Solution: In Example 2-7, the mean of a dice toss process was calculated as seven. Using Equation 2-12,

$$\begin{aligned}
\sigma^2(x_i) &= (2-7)^2\,\tfrac{1}{36} + (3-7)^2\,\tfrac{2}{36} + (4-7)^2\,\tfrac{3}{36} + (5-7)^2\,\tfrac{4}{36} \\
&\quad + (6-7)^2\,\tfrac{5}{36} + (7-7)^2\,\tfrac{6}{36} + (8-7)^2\,\tfrac{5}{36} + (9-7)^2\,\tfrac{4}{36} \\
&\quad + (10-7)^2\,\tfrac{3}{36} + (11-7)^2\,\tfrac{2}{36} + (12-7)^2\,\tfrac{1}{36} \\
&= \frac{210}{36} \approx 5.833
\end{aligned}$$

EXAMPLE 2-14

Problem: What is the sample variance of the dice toss data presented in Example 2-9?

Solution: Using Equation 2-13, the sample mean of 7.25 is subtracted from each data sample. This difference is squared. The squared differences are summed and divided by 100. The answer is 5.9075. This result is comparable to the value obtained in Example 2-13.
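The variance of the dice toss distribution (Equation 2-12), its standard deviation (Equation 2-15) and the sample variance of the one hundred recorded tosses (Equation 2-13) can be sketched as follows. `statistics.pvariance` is used because it divides by n, as Equation 2-13 does; the variable names are illustrative.

```python
import math
from fractions import Fraction
from statistics import pvariance

# Dice toss PDF: the sum s can be produced by 6 - |7 - s| combinations out of 36.
pdf = {s: Fraction(6 - abs(7 - s), 36) for s in range(2, 13)}

# Equations 2-8, 2-12 and 2-15 for the distribution.
dist_mean = sum(x * p for x, p in pdf.items())
dist_var = sum((x - dist_mean) ** 2 * p for x, p in pdf.items())
dist_std = math.sqrt(dist_var)

# The one hundred samples from Examples 2-8 and 2-9.
samples = [
    7, 2, 4, 6, 10, 12, 3, 5, 4, 2,
    6, 8, 5, 7, 9, 4, 7, 11, 3, 6, 10, 7, 4, 6, 4, 8, 8, 5, 9, 3, 2,
    11, 9, 8, 2, 9, 5, 11, 12, 8, 9, 7, 10, 6, 6, 8, 10, 7, 8, 11, 6, 7, 9, 8, 7, 8,
    7, 10, 7, 6, 8, 7, 9, 11, 7, 7, 12, 7, 7, 9, 5, 6, 3, 7, 6, 10, 8, 7, 8, 6, 10,
    7, 11, 6, 9, 11, 5, 8, 7, 4, 6, 8, 9, 7, 10, 9, 11, 7, 4, 7,
]

# Equation 2-13: squared deviations from the sample mean, divided by n.
sample_var = pvariance(samples)

print(f"distribution variance = {float(dist_var):.4f}")
print(f"distribution std dev  = {dist_std:.4f}")
print(f"sample variance       = {sample_var:.4f}")
```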


Common Distributions

Several well known distributions play an important role in reliability engineering. The exponential distribution is widely used to represent the probability of component failure over a time interval. This is a direct result of a

constant failure rate assumption. The normal distribution is used in many

areas of science. In reliability engineering it is used to represent strength

distributions. It is also used to represent stress. The lognormal distribution

is used to model repair probabilities.

Exponential Distribution

The exponential distribution is commonly used in the field of reliability. In

its general form it is written

$$f(x) = ke^{-kx}, \text{ for } x \ge 0; \quad = 0, \text{ for } x < 0 \tag{2-16}$$

The cumulative distribution function is

$$F(x) = 0, \text{ for } x < 0; \quad = 1 - e^{-kx}, \text{ for } x \ge 0 \tag{2-17}$$

The equation is valid for values of k greater than zero. The CDF approaches the value of one as x approaches ∞ (which is expected). Figure 2-6 shows a plot of an exponential distribution PDF and CDF where k = 0.6. Note: PDF(x) = d[CDF(x)]/dx.

Figure 2-6. Exponential Distribution
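Equations 2-16 and 2-17 can be sketched in code, along with a numerical check that the PDF is the derivative of the CDF. The value k = 0.6 is the one used in Figure 2-6; the evaluation point x = 2 and the step size h are arbitrary choices for illustration.

```python
import math


def exp_pdf(x, k):
    """Equation 2-16: exponential PDF."""
    return k * math.exp(-k * x) if x >= 0 else 0.0


def exp_cdf(x, k):
    """Equation 2-17: exponential CDF."""
    return 1.0 - math.exp(-k * x) if x >= 0 else 0.0


k = 0.6
x = 2.0
h = 1e-6

# Central-difference estimate of d[CDF(x)]/dx, to compare with PDF(x).
slope = (exp_cdf(x + h, k) - exp_cdf(x - h, k)) / (2 * h)

print(f"PDF({x}) = {exp_pdf(x, k):.6f}")
print(f"dCDF/dx  = {slope:.6f}")
print(f"CDF(50)  = {exp_cdf(50.0, k):.6f}")
```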


Normal Distribution

The normal distribution is the most well known and widely used probability distribution in general science fields. It is so well known because it applies (or seems to apply) to so many processes. In reliability engineering, as mentioned, it primarily applies to measurements of product strength and external stress.

The PDF is perfectly symmetrical about the mean. The “spread” is measured by variance. The larger the value of variance, the flatter the distribution. Figure 2-7 shows a normal distribution PDF and CDF where the mean equals four and the standard deviation equals one. Because the PDF is perfectly symmetric, the CDF always equals one half at the mean value of the PDF. The PDF is given by

$$f(x) = \frac{1}{\sigma\sqrt{2\pi}}\, e^{-\frac{(x - \mu)^2}{2\sigma^2}} \tag{2-18}$$

where μ is the mean value and σ is the standard deviation. The CDF is given by

$$F(x) = \frac{1}{\sigma\sqrt{2\pi}} \int_{-\infty}^{x} e^{-\frac{(x - \mu)^2}{2\sigma^2}}\,dx \tag{2-19}$$

Figure 2-7. Normal Distribution

There is no closed-form solution for Equation 2-19, i.e., a formula cannot

be written in the variable x which will give us the value for F(x). Fortunately, simple numerical techniques are available and standard tables

exist to provide needed answers.

Standard Normal Distribution

If

$$z = \frac{x - \mu}{\sigma} \tag{2-20}$$

is substituted into the normal PDF function,

$$f(z) = \frac{1}{\sqrt{2\pi}}\, e^{-\frac{z^2}{2}} \tag{2-21}$$

This is a normal distribution that has a mean value of zero and a standard

deviation of one. Tables can be generated (Appendix A and Ref. 2, Table

1.1.12) showing the numerical values of the cumulative distribution function. These are done for different values of z. Any normal distribution

with any particular σ and μ can be translated into a standard normal distribution by scaling its variable x into z using Equation 2-20. Through the

use of these techniques, numerical probabilities can be obtained for any

range of values for any normal distribution.

EXAMPLE 2-15

Problem: A control system will fail if the ambient temperature

exceeds 70°C. The ambient temperature follows a normal distribution

with a mean of 40°C and a standard deviation of 10°C. What is the

probability of failure?

Solution: The probability of getting a temperature above 70°C must

be found. Using Equation 2-20,

( 70 – 40 )

z = ------------------------ = 3

10

Checking the chart in Appendix A for z = 3.00 (the row for 3.0, under the left column with the 0.00 adder), a value of 0.99865 is found. This means that 99.865% of the area under the PDF lies at or below a temperature of 70°C:


P ( T ≤ 70 ) = 0.99865


To obtain a probability for temperatures greater than 70°C, use the

rule of complementary events (see Appendix C). Since the system

will fail as soon as the temperature goes above 70°C, this is the

probability of failure.

P ( T > 70 ) = 1 – 0.99865 = 0.00135

This is shown in Figure 2-8.
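The arithmetic of Example 2-15 can be checked with a few lines of Python. This sketch uses `statistics.NormalDist` from the standard library (Python 3.8 or later) in place of the Appendix A table; the variable names are illustrative.

```python
from statistics import NormalDist

# Ambient temperature model from Example 2-15 (degrees C).
ambient = NormalDist(mu=40.0, sigma=10.0)
limit = 70.0

z = (limit - ambient.mean) / ambient.stdev  # Equation 2-20
p_at_or_below = ambient.cdf(limit)          # P(T <= 70)
p_failure = 1.0 - p_at_or_below             # complementary event, P(T > 70)

print(z)                                             # 3.0
print(round(p_at_or_below, 5), round(p_failure, 5))  # 0.99865 0.00135
```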

Lognormal Distribution

The random variable X has a lognormal PDF if ln X has a normal distribution. Thus, the lognormal distribution is related to the normal distribution and also has two parameters.

The lognormal distribution has been used to model probability of completion for many human activities, including “repair time.” It has also been used in reliability engineering to model uncertainty in failure rate information. Figure 2-9 shows the PDF and CDF of a lognormal distribution with a μ of 0.1 and a σ of 1. Note that these are the mean and standard deviation of ln X, not of the lognormal distribution itself.

The PDF is given by

$$f(t) = \frac{1}{t \sigma \sqrt{2\pi}} \, e^{-\frac{(\ln t - \mu)^{2}}{2\sigma^{2}}}, \text{ for } t \ge 0; \quad f(t) = 0, \text{ for } t < 0 \tag{2-22}$$

Figure 2-8. Example 2-15 Distribution


Figure 2-9. Lognormal Distribution
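The lognormal PDF and CDF for the parameters of Figure 2-9 can be evaluated with the sketch below. The PDF follows Equation 2-22; the CDF and the closed-form mean and standard deviation are standard lognormal results that are not derived in the text, and the function names are illustrative. The value at t = 0 is taken as zero, which is an assumption made here because ln 0 is undefined.

```python
import math

def lognormal_pdf(t, mu, sigma):
    """Lognormal PDF per Equation 2-22 (taken as 0 for t <= 0)."""
    if t <= 0.0:
        return 0.0
    z = (math.log(t) - mu) / sigma
    return math.exp(-0.5 * z * z) / (t * sigma * math.sqrt(2.0 * math.pi))

def lognormal_cdf(t, mu, sigma):
    """Lognormal CDF: the standard normal CDF evaluated at (ln t - mu)/sigma."""
    if t <= 0.0:
        return 0.0
    z = (math.log(t) - mu) / sigma
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def lognormal_mean_std(mu, sigma):
    """Mean and standard deviation of the lognormal variable itself."""
    mean = math.exp(mu + 0.5 * sigma ** 2)
    variance = (math.exp(sigma ** 2) - 1.0) * math.exp(2.0 * mu + sigma ** 2)
    return mean, math.sqrt(variance)

mu, sigma = 0.1, 1.0  # parameters quoted for Figure 2-9
mean, std = lognormal_mean_std(mu, sigma)
print(round(mean, 3))                               # 1.822
print(lognormal_cdf(math.exp(mu), mu, sigma))       # 0.5: the median is exp(mu)
```

With μ = 0.1 and σ = 1 the mean of the distribution is about 1.82, not 0.1, which is why the two parameters should not be read as the mean and standard deviation of the lognormal variable.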

Exercises

2.1

A failure log is provided. Create a histogram of failure categories:

software failure, hardware failure, operations failure and maintenance failure.

FAILURE LOG - 10 sites

No.  Symptom                             Failure Category

1    PC crashed                          Software
2    Fuse Blown                          Hardware
3    Wire shorted during repair          Maintenance
4    Program stopped during use          Software
5    Unplugged wrong module              Maintenance
6    Invalid display                     Software
7    Scan time overrun                   Software
8    Water in cabinet                    Maintenance
9    Wrong command                       Operations
10   General protection fault            Software
11   Cursor disappeared                  Software
12   Coffee spilled                      Hardware
13   Dust on thermocouple terminal       Hardware
14   Memory full error                   Software
15   Mouse nest between circuits         Hardware
16   PLC crashed after download          Software
17   Output switch shorted               Hardware
18   LAN addresses duplicated            Maintenance
19   Computer memory chip failed         Hardware
20   Power supply failed                 Hardware
21   Sensor switch shorted               Hardware
22   Valve stuck open                    Hardware
23   Bypass wire left in place           Maintenance
24   PC virus causes crash               Software
25   Hard disk failure                   Hardware


Draw a Venn diagram (see Appendix C for a Venn diagram explanation) of the failure types.

2.2

Based on the histogram of exercise 2.1, what is the chance of the

next failure being a software failure?

2.3

A discrete random variable has the possible outcomes and probabilities listed below.

Outcome        1     2     3     4     5

Probability    0.1   0.2   0.5   0.1   0.1

Plot the PDF and the CDF.

2.4

What is the mean of exercise 2.3?

2.5

What is the variance of exercise 2.3?

2.6

The following set of numbers is provided from an accelerated life

test in which power supplies were run until failure (days until failure). What is the mean of this data?

75, 95, 110, 112, 121, 125, 140, 174, 183, 228, 250, 342, 554, 671, 823, 1065, 1289, 1346

What is the average failure time in days? What is the median failure time in days?

2.7

A controller will fail when the temperature gets above 80°C. The

ambient temperature follows a normal distribution with a mean of

40°C and a standard deviation of 10°C. What is the probability of

failure?


Answers to Exercises

2.1

Software failures - 9, Hardware failures - 10, Maintenance failures - 5, Operations failures - 1. The histogram is shown in Figure 2-10.

The Venn diagram is shown in Figure 2-11.

Figure 2-10. Histogram of Failure Types

2.2

The chances that the next failure will be a software failure are 9/25.
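The tally behind answers 2.1 and 2.2 can be reproduced with a short sketch. The category list is transcribed from the failure log of exercise 2.1 in entry order; the variable names are illustrative, and treating the observed fraction as the chance of the next failure follows the reasoning of answer 2.2.

```python
from collections import Counter
from fractions import Fraction

SW, HW, MT, OP = "Software", "Hardware", "Maintenance", "Operations"

# Failure categories for log entries 1 through 25, in order.
categories = [
    SW, HW, MT, SW, MT,
    SW, SW, MT, OP, SW,
    SW, HW, HW, SW, HW,
    SW, HW, MT, HW, HW,
    HW, HW, MT, SW, HW,
]

counts = Counter(categories)
total = sum(counts.values())

# Text histogram: one '#' per recorded failure.
for name, count in counts.most_common():
    print(f"{name:<12} {'#' * count} ({count})")

# Relative frequency of software failures.
p_software = Fraction(counts[SW], total)
print(p_software)  # 9/25
```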

2.3

Figure 2-12 shows the PDF. Figure 2-13 shows the CDF.

2.4

The mean is calculated by multiplying the values by the probabilities per Equation 2-8. Mean = 1 × 0.1 + 2 × 0.2 + 3 × 0.5 + 4 × 0.1 + 5 × 0.1 = 2.9.

2.5

The variance is calculated using Equation 2-12. The answer is 1.09.
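Answers 2.4 and 2.5 can be verified numerically. The sketch below computes the mean as the probability-weighted sum of the outcomes and the variance as the probability-weighted squared deviation from the mean; it is assumed, not confirmed here, that this is the form given in Equation 2-12.

```python
outcomes = [1, 2, 3, 4, 5]
probabilities = [0.1, 0.2, 0.5, 0.1, 0.1]

# The probabilities of a discrete random variable must sum to one.
assert abs(sum(probabilities) - 1.0) < 1e-12

# Mean: sum of x * P(x).
mean = sum(x * p for x, p in zip(outcomes, probabilities))

# Variance: sum of (x - mean)^2 * P(x).
variance = sum((x - mean) ** 2 * p for x, p in zip(outcomes, probabilities))

print(round(mean, 2), round(variance, 2))  # 2.9 1.09
```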


Figure 2-11. Venn Diagram of Failure Types


Figure 2-12. PDF of Problem 2-3


Figure 2-13. CDF for Problem 2-3

2.6

The mean of the data is 427.94 days. The average failure time in hours is 427.94 × 24 = 10271 hours. The median failure time is (183 + 228)/2 = 205.5 days, or 4932 hours. Note that this assumes that each failure occurred precisely at the end of a 24-hour day. This is not accurate, but it is a common assumption.
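The figures in answer 2.6 can be checked with Python's standard `statistics` module. The data are the 18 failure times from exercise 2.6; the variable names are illustrative.

```python
from statistics import mean, median

# Days until failure from the accelerated life test of exercise 2.6.
days_to_failure = [
    75, 95, 110, 112, 121, 125, 140, 174, 183,
    228, 250, 342, 554, 671, 823, 1065, 1289, 1346,
]

mean_days = mean(days_to_failure)
median_days = median(days_to_failure)  # average of the 9th and 10th values

print(round(mean_days, 2), round(mean_days * 24))  # 427.94 10271
print(median_days, median_days * 24)               # 205.5 4932.0
```

The mean is more than twice the median because a few long-lived units pull the average upward, which is typical of skewed failure-time data.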

2.7