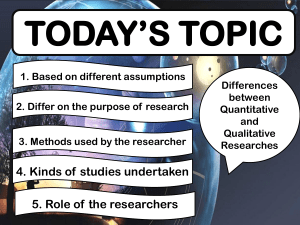

Levels of Evidence Pyramid: Level I = most scientific, Level VII = least scientific
Level I: Systematic review/meta-analysis of RCTs
o Systematic review: summative assessment
o Meta-analysis: STATISTICAL analysis of reported data
Level II: Randomized Controlled Trials (RCTs)
o Experimental/gold standard of research
Level III: Quasi-Experimental Studies
o No randomization occurs
Level IV: Nonexperimental Studies
o Observational studies; no control, randomization, or variable manipulation
Level V: Meta-synthesis
o Like a textbook; summary of research/qualitative studies
Level VI: Qualitative Studies
o One study
Level VII: Expert Opinions/Expert Committee or Organization Reports, Integrative Reviews
o Not based in research, no statistical analyses

The Seven Steps of Evidence-Based Practice (Melnyk)
Step 0: Cultivate a spirit of inquiry
Step 1: Ask clinical questions in PICOT format
o P: population of interest
o I: intervention
o C: comparison (optional in some cases)
o O: outcomes
o T: time
o Provides a framework for searching databases
Step 2: Search for the best evidence
o PICOT helps to identify key words/phrases and narrow down article results
Step 3: Critically appraise the evidence
o Rapidly appraise the study to see if it’s relevant/applicable to your clinical question
Step 4: Integrate the evidence with clinical expertise and patient preferences/values
o You need more than just research evidence; you need real-world data also
Step 5: Evaluate the outcomes of the practice decisions or changes based on evidence
o After implementing EBP, you must monitor/evaluate outcomes for positive and negative effects
Step 6: Disseminate EBP results
o Must share the outcomes with colleagues/other HC organizations through presentations, conferences, journals, etc.
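Steps 1 and 2 above say that a PICOT question provides the key words for a database search. The short Python sketch below illustrates that idea only. The class name `Picot`, its method, the example question, and the choice to leave the time frame out of the search string are all assumptions made for illustration; real databases each have their own search syntax.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Picot:
    """Hypothetical container for the five PICOT elements."""
    population: str
    intervention: str
    outcome: str
    time: str
    comparison: Optional[str] = None  # C is optional in some cases

    def search_string(self) -> str:
        # Assumption: P, I, C, and O supply the key words; the time
        # frame is kept for the question itself but not searched on.
        parts = [self.population, self.intervention,
                 self.comparison, self.outcome]
        # Quote each phrase and join with AND, skipping a missing C.
        return " AND ".join(f'"{p}"' for p in parts if p)


# Made-up example question, not taken from the notes.
question = Picot(
    population="hospitalized older adults",
    intervention="hourly rounding",
    comparison="usual care",
    outcome="falls",
    time="6 months",
)
print(question.search_string())
```

Dropping the comparison simply shortens the string, which mirrors the note that C is optional in some cases.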
How to Read a Research Article (Hudson-Barr)
Start by identifying the conclusions of the study: begin at the end
Read three sections first: Title, Abstract, Discussion
Then go back to the beginning and read the Introduction
o Provides the rationale for the study, usually with a discrepancy between what is current practice and what would be ideal practice, and concludes with gaps in the literature
o Identify what question the researcher is asking
Next move on to Methods
o Procedures used during the study: Subjects, Data collection
o Ask yourself: did the researchers choose the “right” subjects and variables, do the procedures make sense, was the data collection logical or was there potential for error?
Findings/Results and Data Analysis
o Specifics about the collected data, usually descriptive data, relationships between variables
o You will have a general understanding of statistically significant results from this section
Finally, use your clinical judgement to evaluate the article
o What was the study doing?
o Do you accept the conclusions?
o Is it useful for practice?

Chapter 1
Nursing research: scientific process that validates and refines existing knowledge and generates NEW KNOWLEDGE that directly/indirectly influences nursing practice
Evidence-Based Practice (EBP): a problem-solving approach to clinical practice which incorporates the best evidence from studies, patient values/preferences, and clinician’s expertise in making decisions for patient care
Nursing Research
o Systematic, rigorous investigation aimed at answering questions about nursing phenomena
o Can be quantitative or qualitative
o Begins with research questions, tested with a design
EBP
o Collection, interpretation, and integration of valid RESEARCH EVIDENCE
o Combined with clinical expertise
o And patient values/preferences
o Informs clinical decision making
o Begins with a compelling clinical question (PICOT), used to search literature for already completed studies
Clinical Guidelines: systematically developed statements to assist practitioner/patient health care decisions
o Developed at national level by government agencies, HC organizations, expert panels
Triad of EBP
o 1. Best research evidence (clinical research, guidelines, expert opinions)
o 2. One’s own clinical expertise
o 3. Patient preferences and values

Chapter 2
Clinical Questions: first step in development of EBP project
o Arise from clinical situations for which there are no ready answers
o This is where the critical appraisal of research studies begins: critiquing the research question and hypotheses
Research Question: idea to be examined in the research study
o AKA “problem statement”: concise, interrogative statement written in the present tense
o Must do three things:
  o Clearly identify variables under consideration
  o Specify the population being studied
  o Imply the possibility of empirical testing/decide on a research approach/design
Variables: characteristic, event, or response that represents the elements of the research question in a detectable/measurable way
Independent Variable: the thing being changed, i.e.
the intervention, condition or characteristic that will cause an outcome. Presumed to have an effect on the dependent variable (Cause)
Dependent Variable: outcome of interest, the thing being affected by the independent variable (Effect)
Research questions originate from clinical practice experiences, critical appraisal of scientific lit, gaps in lit, interest in untested theory
Purpose: encompasses the aims/objectives of the research, NOT the question to be answered
Hypothesis: statement about a relationship between two or more variables that suggests an answer to the research question
o Only used when testing a causal or associative relationship between variables
o Not always explicitly stated; often embedded in data analysis/results/discussion section
o Must be a declarative statement about a relationship between an independent and a dependent variable
o Must be testable
o Identifies “direction of interest”
Null (Statistical) Hypothesis: states there is no relationship b/t the independent and dependent variables
Alternative Hypothesis
o Non-directional: two-sided hypothesis; change in any direction (positive or negative). States that a relationship exists but does not anticipate the direction
o Directional: one-sided hypothesis; interested in only one direction of change. Specifies the expected direction of the relationship b/t IV and DV

Chapter 3
Literature Review: a systematic/critical appraisal that provides the foundation of a research study
o Essential for EBP
o Identifies “areas for future research”
  o Gaps in the literature
  o Need for replication of study
  o Need for extension of knowledge base
We need to know “does the issue really matter?”: has the researcher examined the question’s potential significance to guide/extend the scientific body of nursing knowledge?
Feasibility: what’s necessary to do the study, and do the benefits outweigh the costs? Time, subject/facility/equipment availability, money, researcher experience, ethics, etc.
Population: a well-defined set with certain characteristics
o Target Population: the entire set of cases that the researcher would like to generalize about
o Accessible Population: a population that meets the target population criteria and that is available for the research study
Testability: is the question able to be tested?
o Must be measurable by quantitative or qualitative measures
o Must propose a relationship between an independent and a dependent variable
Conducting EBP
o Synthesize the strengths/weaknesses of the evidence available
o Draw conclusions about the quality/consistency/strengths of the evidence
o Decide if it’s applicable
o First focus on the specifics of your PICOT, then explore/widen your search
o Careful search of published and unpublished literature
o Unless it’s a seminal study, articles should be from within the past 5 years
When appraising evidence, look for:
o Quality: aggregate of quality ratings for individual studies, predicated on the extent to which bias was minimized
o Quantity: magnitude of effect, number of studies, sample size, power
o Consistency: extent to which similar findings are reported using similar/different study designs
What was the aim of the study?
o To describe a population: descriptive
o To quantify the relationship between factors: analytic
If analytic, was the intervention randomly allocated?
o Yes: RCT
o No: observational study
If observational, when were the outcomes determined?
o Sometime after the exposure/intervention: cohort study
o At the same time as the exposure/intervention: cross-sectional study/survey

Chapter 4
Theory: provides a foundation/blueprint and structure; a set of interrelated concepts that provides a systematic view of a phenomenon
o Gives researchers a logical way of collecting data to describe, explain, and predict nursing practice
Concept: image/symbolic representation of an abstract idea, which can be concrete or abstract
Construct: complex concept, usually comprising more than one concept built together to fit a purpose
Ways theories are used in the research process
o Theory is generated as the outcome of research (qualitative)
o Theory is used as a research framework (qualitative or quantitative)
o Research is undertaken to test theory (quantitative)

Chapter 8: Quantitative Research
Types
o Experimental: research design that includes randomization, control, and manipulation
o Quasi-experimental: research design where random assignment is not used, but the IV is still manipulated with some level of control used
o Non-experimental: research design where the researcher observes a phenomenon w/o manipulating the IV
Considerations
Objectivity: based on observable phenomena and presented factually w/o bias.
Accuracy: achieved when all aspects of a study systematically flow from the research question or hypothesis
o Pilot study: a research question that has not been studied before, usually recommended as a sort of preliminary study before conducting a ‘big’ study
Feasibility: pragmatic considerations such as time, money, subject availability, facility, equipment, researcher experience, and ethics
Control: measures used to hold the conditions of the study uniform and avoid the possible impingement of bias on the dependent variable or outcome variable
o Intervention fidelity (faithfulness): selecting a design to maximize control or uniformity.
Achieved when the researcher actively standardizes the intervention and plans to deliver it in the same manner and under the same conditions to all subjects.
o Researchers may use instruments, standardized data collection, trained assistants, and clear inclusion/exclusion criteria
o Extraneous variables: variables that influence an outcome but are not part of the experiment. Will often interfere and confuse interpretations. Control is established by ruling out these variables
4 Means of Control
1. Homogeneous sampling
a. May limit generalizability (application of findings to other populations)
2. Constancy in data collection (intervention fidelity)
a. Constancy (intervention fidelity): notion that data-collection procedures are approached so that researchers control the conditions of the study
b. Collecting data in the same manner, under the same conditions, and by trained data collectors
3. Manipulation of the IV
a. The administration of a program, tx, or intervention to only 1 group in the study
b. 1st group = experimental/intervention group
c. 2nd group = control group (variables held at a constant or comparison level; normal, status quo level)
d. Only experimental and quasi-experimental studies
e. Manipulation increases the power to draw conclusions
4. Randomization
a. The selection procedure in which each member of the population has an equal chance of being assigned to either the experimental or control group
b. Helps eliminate bias
c.
Helps in achieving a representative sample
Validity
Internal validity: asks if the IV really made a difference in the DV or not
o Threats:
  o History: an event outside the experimental setting that may affect the DV
  o Selection bias: issues with representative samples (i.e. voluntary subjects)
  o Maturation: the developmental, biological, and psychological processes of the subjects that may affect the DV
  o Testing: repeated testing may yield a sensitized participant
  o Mortality
  o Instrumentation: data collector training, calibration
External validity: ability to generalize the findings from a research study to other populations, places, and situations. Describes under what conditions and with what types of subjects you can expect to get the same results
o Threats:
  o Selection effect: the way subjects are recruited may affect generalization
  o Reactive effects: the subjects’ responses to being studied (i.e. Hawthorne effect)
  o Measurement effects: factors affecting measurement (i.e. pre/post test)

Chapter 9
Experimental/Quasi-experimental Designs
o Researcher actively intervenes to bring about desired effect
o Test cause-and-effect relationships
o Level II and III evidence
Experimental Designs Require:
o Randomization: each subject has an equal chance of being assigned to a group
  o Assumes that any important intervening variable will be equally distributed b/t groups, decreasing bias
o Control: conditions held constant to limit bias/influence on the dependent variable
  o Control group receives the usual treatment/placebo; no intervention made with this group
o Manipulation: doing something to at least some of the subjects; the independent variable is manipulated with the experimental group and not with the control group
o Help to eliminate alternative explanations for the findings
Experimental Designs
Randomized Controlled Trial (RCT): gold standard (level II evidence)
o Threats: maturation, history, selection bias, instrumentation
o Measurement of the variables of interest (dependent variables) done BEFORE the intervention
o Uses
experimental and control groups
o Every subject receiving the intervention receives an identical intervention
o Sample size is important!
  o Too large = wastes time/resources/money
  o Too small = inaccurate results
o Power Analysis: determining the right sample size
  o Large enough to determine statistical significance
o Effect Size: an estimate of how large a difference there is b/t the intervention and control group
o Strengths: most powerful for testing cause-and-effect relationships b/c of use of control, manipulation, and randomization
o Weakness: complicated/expensive/impractical for certain settings
Quasi-Experimental: no randomization or no control group (level III evidence)
o Lack of control makes evidence less convincing
o Types
  o Nonequivalent Control Group: experimental and control group but no randomization
  o After-Only Nonequivalent: assumption that experimental and control groups are equivalent before introduction of the independent variable; no baseline data collected
  o Time Series: trends over time; data collected multiple times with one group before and after intervention
  o One Group (Pre-Test/Post-Test): used when only one group is available; data collected before and after experimental treatment; no control/no randomization
o Strengths: practical, less costly, adaptable to real-world problems
o Weakness: cannot demonstrate cause-and-effect relationships

Chapter 10: Non-experimental Design
Overview
o IV is not manipulated
o Subjects not randomized
o No control group
o A clear research question or hypothesis based on a theoretical framework should exist
Implementation
o Constructing a picture of a phenomenon
o Exploring events, people, or situations occurring in nature
o Testing relationships and differences among variables
Types
Survey studies
o Types: Descriptive, Exploratory, Comparative (used to determine difference between variables.
Collected via questionnaires or interviews)
o Use of the data to justify and assess current conditions and practice
o Strengths: seeks to relate variables to each other; flexible
o Weaknesses:
  o Info collected may be broad and superficial
  o Requires expertise in several research areas and statistical analysis
  o Large-scale surveys can be time-consuming and costly
Relationship/Difference studies
Correlational: type of study that examines the association between 2+ variables. Concerned with quantifying the strength and direction (+/-) of the relationship
o Strengths = flexibility in complex relationships, efficient in large data collection, can be clinically practical, potential for future recommendation, framework for exploring relationship of variables that cannot be manipulated, and potential for evidence-based application.
o Weaknesses = variables are not manipulated, randomization is not used because groups are preexisting (generalizability is decreased), strength and quality of evidence is limited by relationship between variables, causal relationship cannot be determined
Developmental: type of study concerned with the relationships and differences among phenomena at 1 point in time and with changes that result over time.
Cross-sectional: only looks at 1 point in time, less time-consuming, prone to confounding variables, large amounts of data can be compressed to one point in time. Explores relationships, correlations, differences, and comparisons
o Strengths = less time-consuming, less costly, large amount of data can be collected at 1 point (more readily available results), and maturation threat is not present
o Weaknesses = hard to establish in-depth developmental assessment of interrelationships and phenomena
Longitudinal/Prospective, Cohort (repeated measures studies): collection of data from the same sample at different points in time. Explores relationships, correlations, differences, and comparisons.
o Strengths = each subject is followed up separately and serves as their own control, increased depth of responses, analysis of early trends, and analysis of changes over time between variables
o Weaknesses = can be costly, often slow
Retrospective/Ex post facto/Case control (causal-comparative or comparative studies): studies that look back in time and tend to examine the exposure to the IV. The DV has already been affected by the IV. These studies attempt to link present to past events.
o Strengths = higher level of control compared to correlational studies; more confident findings, cheaper, faster
o Weaknesses = causality cannot be inferred, only association. Less control of confounding variables
Both longitudinal and retrospective findings suggest the association or relationship of variables.

Chapter 11
Systematic Review: summation and assessment of research studies using systematic and explicit methods (level I)
Meta-analysis: review of studies using STATISTICAL methods (level I)
o Largest repository = Cochrane Collaboration/Review
o Phases
  o I: data extracted
  o II: decision made as to whether or not it is appropriate to calculate what is known as the pooled average result (effect) of the studies reviewed
o Effect Size: calculated using the difference in the averages of scores b/t the intervention and control groups from each study
  o Estimate of how large a difference there is b/t intervention and control groups
Integrative Review: critically appraises the literature but without statistical analysis
o Broadest category of review

Chapter 12: Sampling
Concepts
Population: a well-defined set that has certain specified properties
o Target population: the entire set of cases about which a researcher would like to make generalizations
o Accessible population: group or individuals that meet the target population criteria and are available for study participation
Criteria
o Inclusion (eligibility) criteria: population descriptors that are the basis for inclusion
o Exclusion (delimiting) criteria: characteristics that restrict the
population to a homogeneous group of subjects.
Sampling: process of selecting a portion/subset of the designated population
Sample: a set of elements that make up the population
Representative sampling: the most important criterion; the sample’s key characteristics closely reflect those of the target population
o No guarantee of complete representation
o Sampling is geared towards obtaining a representative sample in quantitative studies and to obtaining data representative of the target of interest.
Strategies
o Non-probability (quantitative, qualitative) = non-random selection
o Probability (quantitative) = random selection
Sampling Types
Non-probability sampling
o Inclusion in a group is not random; less generalizable and representative
o 4 Types:
  o Convenience: use of the most readily accessible persons/objects for the study. Easy to obtain but high bias risk due to voluntary participation
  o Quota: identification of specific properties used to establish a sample and determine sizes for groups to increase level of representation. Requires use of strict inclusion/exclusion criteria and power analysis to determine sample size
  o Purposive (Judgment sampling): participants chosen based on personal decision of who would be the most representative; subjects handpicked by the researchers. Often used in qualitative studies to recruit homogeneous samples. Risk for over/under-representation of the population
  o Snowball (Network sampling): researchers ask participants for prospective participants
Probability sampling
o Uses randomization to assign subjects; each element of the population has an equal and independent chance of being included in the sample (random sampling)
o More generalizable and representative
o 3 Types:
  o Simple random sampling: sample drawn by a procedure that gives every member of the population an equal chance of being selected. Maximizes representation and randomization. Often time-consuming and inefficient.
  o Stratified random sampling: random sampling of strata or subgroups. Good for enhanced representation, comparing subsets, and equitable representation for small subgroups. Disadvantages include being time-consuming, difficulty enrolling in strata, and difficulty in obtaining a population list with complete critical variable info.
  o Cluster (multi-stage) sampling: random sampling of units (clusters) progressing from large to small. More economical. Disadvantages include sampling errors, which tend to occur more often than with stratified and simple random sampling, and complicated handling of statistical data from cluster samples.

Chapter 14
Before data collection the researcher needs to consider validity and reliability of the tools:
o Validity: addresses the concern “are we measuring what we think we are measuring?”
o Reliability: the extent to which the instrument yields the same results on repeated measures
Choosing a data collection method is one of the most lengthy steps.
Nurses are always collecting data in patient care situations; however, it is different from data collected during research as it may be biased, based on personal beliefs and values, and may not be consistent.
Collection methods:
1. Existing data
a. Considered secondary data analysis and can be collected through hospital records or national databases
b. Advantages include no intrusion in participants’ lives, reevaluation of data allows for different findings, cost and time saving
c. Limitations include restrictiveness of data sources and inability to add questions
2. New data can be collected through
a. Observation: method of collecting data on how people behave under certain conditions
b. Structured observation: the format is set in advance, only required events and behaviors are observed
c. Unstructured: still structured but more descriptive; includes field notes (a short summary of observation) and anecdotes (focus on behaviors of interest and illustrate a specific point)
d.
Advantages include the understanding of the setting and the ability to observe individuals with low verbal skills; disadvantages include potential biases, being time-consuming, possible ethical concerns, and the chance that individuals modify their usual behavior
3. Self-reports require subjects to respond directly about their experiences, behaviors, feelings, etc. Most useful for collecting data on variables that cannot be directly observed or measured
a. Interviews: participants respond to a set of open-ended and closed-ended questions; used in both quantitative and qualitative research, usually when the task needs to be clarified for a respondent or when personal information needs to be collected
b. Questionnaires are used for the survey process when there is a specific set of questions. Used to collect info on knowledge, attitudes, beliefs
i. Traditional: include open-ended and closed-ended questions
ii. Scales: response that provides a graded numerical option. The Likert scale is most used: lists of statements on which responders indicate if they “strongly agree”, “disagree”, etc.
iii. Internet surveys: able to assess a large population
c. Limitations of self-report methods include social desirability (no way to know if the responses are truthful; often responders try to please the researcher and pick the most socially desirable response) and respondent burden (long and complex questions may lead to incomplete or incorrect responses)
4. Physiological measurement: typically requires the use of equipment, suitable for nursing research. Measurements can be physical (weight), microbiological (cultures), chemical (blood glucose), or anatomic (x-rays)
a. Advantages include precise, sensitive, objective data, and unlikeliness to hinder results.
b. Disadvantages include the requirement of equipment, the requirement of knowledge of how to use the equipment, and data being affected by the environment
i.
drinking something hot before measuring temperature may increase the body temperature; white coat syndrome
ii. important to consider whether the researcher controlled the environmental variables
5. In the event the researcher cannot locate an instrument or scale with acceptable reliability and validity to measure variables of interest, a new instrument is constructed. It is, however, complex and time-consuming
a. EBP focuses on existing data, and its quality highly depends on consistency and accuracy of data, as well as commitment and ability to find the right data.
Measurement errors: difference between what really exists and what is measured
o Random error: scores vary in a random way (standard procedures are not used for collecting data consistently among subjects)
o Systematic error: scores are incorrect but in the same direction (scale was not zeroed before weighing all the participants)
Fidelity refers to data being collected in the same manner with the same method.

Chapter 15
Two types of measurement errors (refer to Chapter 14)
Factors contributing to measurement errors
o Situational (time of day, physical factors)
o Transitory personal factors (pain, fatigue, mood)
o Response bias (willingness to respond in a certain way)
o Instrument issues (clarity and understanding, type and length of questions)
o Variation in how instrument is administered (group vs individual)
Reliability: the ability of an instrument to measure the attributes of a concept or construct consistently.
Focuses on three attributes:
o Stability: same results with repeated testing (test-retest: same instructions are given to subjects under similar conditions 2 or more times; parallel or alternate form: only if there are 2 compatible versions of the same instrument)
o Homogeneity (internal consistency): all items in the test measure the same construct; items within the scale correlate with or are complementary to each other
o Equivalence: whether it produces the same result when equivalent instruments are used (refers to the consistency of observers using the same instrument; an instrument indicates equivalence when 2 or more observers have a high percentage of agreement on an observed behavior)
Reliability coefficient: the degree of consistency of two results measured at two independent times (closer to 1 is more reliable; 0.7 is considered acceptable for a research instrument, 0.9 is considered acceptable for a clinical instrument)
Validity: the extent to which an instrument measures the attributes accurately (does it measure what it says it will measure). Three major kinds:
Content validity: represents the universe of content, or the domain of a given variable. The universe of content provides the basis for developing the items that will adequately represent the content. The concern is whether the instrument and the items it contains are representative of the content domain that the researcher intends to measure.
Criterion-related validity: indicates to what degree the subject’s performance on the measurement tool and the subject’s actual behavior are related.
o Concurrent: the extent to which the results of a particular test or measurement correspond to those of a previously established measurement for the same construct
o Predictive: degree of correlation between a measure and a future measure
Construct validity: extent to which a test measures a theoretical construct, attribute, or trait.
It attempts to validate the theory underlying the measurement by testing hypothesized relationships
o Hypothesis testing approach: develops a hypothesis about the behavior of people with varying scores on the tool and collects data to test the hypothesis.
o Convergent and divergent approaches
o Contrasted-groups approach
o Factor analytical approach

Chapter 16
2 main types of statistics
o Descriptive: summarizing the data, describing the sample; mean, median, and mode
o Inferential: you are going to infer something about the population; combines logic and math to test the hypothesis, answer research questions, draw conclusions
Levels of measurement (e.g. 1 = male, 2 = female)
o Determines the type of statistic to use
o The higher the level, the greater the flexibility in choosing
4 levels
o Nominal: lowest level of measurement; mutually exclusive categories, e.g. labeling genders as numbers, or exclusive values like yes/no, true/false, single/married/divorced. The numbers assigned to each category are only labels and do not characterize the category. Can be proportionally compared. Data from this level takes the form of frequencies or percentages
o Ordinal: second to lowest; shows rankings, e.g. ranking people on their wellness score. The intervals are not necessarily equal and there is no absolute zero. Numbers reflect relative amounts. Data from this level takes the form of frequencies or percentages
o Interval: shows ranking of variables on a scale of equal intervals between numbers; there is still no absolute zero! Can be summed, averaged, subtracted. Data from this level is reported as a measure of central tendency (mean, median, mode)
o Ratio: highest; shows rankings of variables on a scale with an absolute zero (BP, weight, annual income).
Data from this level is reported as a measure of central tendency (mean, median, mode)
Descriptive statistics
o Normal distribution is described as a bell-shaped or symmetric distribution; mean, median, and mode are equal
o Standard deviation measures how far each number in a set of data is from the mean. Most frequently used measure of variability
o For normally distributed data, the standard deviation has a very straightforward interpretation. If the data do not follow a normal distribution, parametric statistics (mean, median, mode) cannot be used and nonparametric statistics (ranking the data) have to be used:
  o 68% of the values will fall within one SD of the mean
  o 95% of the values will fall within two SD of the mean
  o 99.7% of the values will fall within three SD of the mean
o Confidence interval: the range of values that we can be reasonably certain contains the true value (95% confidence)
Inferential statistics
o Statistical inference is the process by which we acquire information about populations from samples
o Scale used must be interval or ratio
o Sample should be a probability sample
o The null hypothesis is the one that is being tested (states that there is no difference between the groups), and we either accept it or reject it while accepting the alternative hypothesis
o Statistical probability is based on the concept of sampling error (Type I error: rejecting a true null hypothesis, more serious; Type II error: accepting a false null hypothesis, sample size is too small)
o Level of significance: the probability of making a Type I error; 0.05 is the minimum level in nursing studies (we will most likely be correct, 95% chance).
Nothing greater than 0.05 will be accepted; 0.05 is a p value (95%: p value is 0.05, 99%: p value is 0.01)
o Power analysis: addresses the risk of a Type II error; in nursing research we aim for no more than a 20% chance of Type II error, or 80% statistical power
o Calculating sample size (if we know the three, we can determine the fourth)
  o Alpha (significance or p value)
  o Beta (Type II error rate; statistical power = 1 - beta)
Tests of significance
o Parametric: more powerful, used with interval or ratio variables, normal distribution where mean = mode = median (t test)
o Nonparametric (chi-square, Mann-Whitney U)

Chapter 17
The findings are the results, conclusions, interpretations, and recommendations for future research and nursing practice, which are addressed by separating the presentation into two major areas: results (focuses on results or statistical findings of the study) and discussion (focuses on the remaining topics).
Results
o This is the section where quantitative data generated by the descriptive and inferential tests are presented; also called analysis
o Should interpret each research question or hypothesis tested, and the test that was used to analyze should be stated (if not stated, obtained values should be stated)
o Should clearly provide an understanding of whether the data is significant
o Should state if the data support the hypothesis
o Results should be stated objectively (the facts, without phrases like “as we expected”, “surprisingly”, etc.)
o Tables are a good way of presenting data; however, they should not repeat the text, should have precise headings, and should supplement and economize the text
Discussion
o Study limitations (threats to internal or external validity, intervention fidelity, etc.)
o Study recommendations
o Discussion of findings
  o Enables you to judge the data
  o Needs to discuss both supported and non-supported data
o Important to differentiate between statistical and clinical significance (statistical significance is just a number, e.g. as a result they lost 1 pound; clinical significance is when it is actually meaningful: 1 pound
weight loss might not be clinically significant if we expected a greater weight loss).
o Generalizability: the findings are generalized beyond the population of the study. This should not be done, and we need to beware of studies that do it.
Chapter 18
Critical appraisal is an evaluation of the strengths and quality of a study. It is not criticism; it is being objective.
Styles of journals
o Articles about the conduct, methods, or results of research
o Critical, educational, and research articles
General layout of a research study, as determined by editorial guidelines:
o Abstract
o Introduction
o Background (literature review)
o Methods
o Results
o Discussion
o Conclusion
Chapter 5, 6, 7
Qualitative research is based on the assumptions that truth is dynamic and that there are multiple realities (example: the experience of having a baby is different for every mother). Thus, qualitative researchers believe that reality is socially constructed and context dependent. Qualitative methods offer an avenue for understanding and exploring human experience that is not possible through quantitative research.
Qualitative research
o Includes the insider point of view
o Embraces different perspectives
o Useful to start to answer questions about which little is known
o Useful to answer “why” questions that result from research
o Often follows from the outcome of quantitative research, trying to gain a deeper understanding of an issue or human experience
Methods of qualitative research
1. The phenomenological method (philosophy)
o Process of learning and constructing the meaning of human experience through intensive dialogue with persons who are living the experience
o Research question: “what is the human experience of…?” (example: the lived experience of multiple sclerosis)
2. Grounded theory (sociology)
o Tries to understand the influence of social processes on the experience
o Looks for a root cause
o Data collection continues until saturation occurs (no new knowledge can be acquired)
o Research question: “how does this social group interact to…”
3.
Ethnographic method (anthropology)
o Understanding the experience based on culture
o Aims to address the question of how cultural norms, values, and knowledge influence one’s health experience
o Data collection is done through interviews and field observation (example: ethnography of a nursing home)
4. Case study
o Based on interviews, trying to understand the uniqueness of the situation
o Research question: “what are the details and complexities of the story of…?”
5. Discourse analysis (linguistics)
o The goal is to understand how people use language to create and enact identities and activities
o The same word may have a totally different meaning in different communities
o The root is to look at the language being used
6. Historical research
o Systematic approach for understanding the past through collection, organization, and critical appraisal of facts
Just as in quantitative research, ethical concerns arise, and studies require approval by an IRB.
Metasynthesis (Level V): a type of qualitative research that synthesizes qualitative studies on a particular question.
Mixed methods: use of a combination of qualitative and quantitative methods (helps reduce bias and provides a deeper and wider understanding of a problem; however, it is more costly and requires more resources).
Trustworthiness in qualitative research is described as credibility, transferability, dependability, and confirmability.
Key differences (qualitative vs. quantitative)
o Point of view: emic (qualitative) vs. etic (quantitative)
o Perspectives: explores different perspectives (qualitative) vs. seeks to minimize differences (quantitative)
o Process of inquiry: inductive (qualitative) vs. deductive (quantitative)
o Sample size: small (qualitative) vs. large (quantitative)
Critiquing qualitative research
o What is the phenomenon? Is it clearly stated?
o What is the purpose?
o What was the method?
o What type of sampling was used?
o Are the steps followed?
o Credibility: do the participants recognize the experience as their own?
o Auditability: can the reader follow the researcher’s reasoning?
o Fittingness: are the findings applicable to other, similar situations?