In Praise of Programming Language Pragmatics, Third Edition

The ubiquity of computers in everyday life in the 21st century justifies the centrality of programming languages to computer science education. Programming languages is the area that connects the theoretical foundations of computer science, the source of problem-solving algorithms, to modern computer architectures on which the corresponding programs produce solutions. Given the speed with which computing technology advances in this post-Internet era, a computing textbook must present a structure for organizing information about a subject, not just the facts of the subject itself. In this book, Michael Scott broadly and comprehensively presents the key concepts of programming languages and their implementation, in a manner appropriate for computer science majors.

— From the Foreword by Barbara Ryder, Virginia Tech

Programming Language Pragmatics is an outstanding introduction to language design and implementation. It illustrates not only the theoretical underpinnings of the languages that we use, but also the ways in which they have been guided by the development of computer architecture, and the ways in which they continue to evolve to meet the challenge of exploiting multicore hardware.

— Tim Harris, Microsoft Research

Michael Scott has provided us with a book that is faithful to its title: Programming Language Pragmatics. In addition to coverage of traditional language topics, this text delves into the sometimes obscure, but always necessary, details of fielding programming artifacts. This new edition is current in its coverage of modern language fundamentals, and now includes new and updated material on modern run-time environments, including virtual machines. This book is an excellent introduction for anyone wishing to develop languages for real-world applications.

— Perry Alexander, Kansas University

Michael Scott has improved this new edition of Programming Language Pragmatics in big and small ways. Changes include the addition of even more insightful examples, the conversion of Pascal and MIPS examples to C and Intel x86, as well as a completely new chapter on run-time systems. The additional chapter provides a deeper appreciation of the design and implementation issues of modern languages.

— Eileen Head, Binghamton University

This new edition brings the gold standard of this dynamic field up to date while maintaining an excellent balance of the three critical qualities needed in a textbook: breadth, depth, and clarity.

— Christopher Vickery, Queens College of CUNY

Programming Language Pragmatics provides a comprehensive treatment of programming language theory and implementation. Michael Scott explains the concepts well and illustrates the practical implications with hundreds of examples from the most popular and influential programming languages. With the welcome addition of a chapter on run-time systems, the third edition includes new topics such as virtual machines, just-in-time compilation and symbolic debugging.

— William Calhoun, Bloomsburg University


Programming Language Pragmatics

THIRD EDITION

About the Author

Michael L. Scott is a professor and past chair of the Department of Computer Science at the University of Rochester. He received his Ph.D. in computer sciences in 1985 from the University of Wisconsin–Madison. His research interests lie at the intersection of programming languages, operating systems, and high-level computer architecture, with an emphasis on parallel and distributed computing. He is the designer of the Lynx distributed programming language and a co-designer of the Charlotte and Psyche parallel operating systems, the Bridge parallel file system, the Cashmere and InterWeave shared memory systems, and the RSTM suite of transactional memory implementations. His MCS mutual exclusion lock, co-designed with John Mellor-Crummey, is used in a variety of commercial and academic systems. Several other algorithms, designed with Maged Michael, Bill Scherer, and Doug Lea, appear in the java.util.concurrent standard library. In 2006 he and Dr. Mellor-Crummey shared the ACM SIGACT/SIGOPS Edsger W. Dijkstra Prize in Distributed Computing.

Dr. Scott is a Fellow of the Association for Computing Machinery, a Senior Member of the Institute of Electrical and Electronics Engineers, and a member of the Union of Concerned Scientists and Computer Professionals for Social Responsibility. He has served on a wide variety of program committees and grant review panels, and has been a principal or co-investigator on grants from the NSF, ONR, DARPA, NASA, the Departments of Energy and Defense, the Ford Foundation, Digital Equipment Corporation (now HP), Sun Microsystems, IBM, Intel, and Microsoft. The author of more than 100 refereed publications, he served as General Chair of the 2003 ACM Symposium on Operating Systems Principles and as Program Chair of the 2007 ACM SIGPLAN Workshop on Transactional Computing and the 2008 ACM SIGPLAN Symposium on Principles and Practice of Parallel Programming. In 2001 he received the University of Rochester’s Robert and Pamela Goergen Award for Distinguished Achievement and Artistry in Undergraduate Teaching.

Programming Language Pragmatics

THIRD EDITION

Michael L. Scott

Department of Computer Science

University of Rochester

AMSTERDAM • BOSTON • HEIDELBERG • LONDON

NEW YORK • OXFORD • PARIS • SAN DIEGO

SAN FRANCISCO • SINGAPORE • SYDNEY • TOKYO

Morgan Kaufmann Publishers is an imprint of Elsevier


30 Corporate Drive, Suite 400

Burlington, MA 01803

This book is printed on acid-free paper.


© 2009 by Elsevier Inc. All rights reserved.

Designations used by companies to distinguish their products are often claimed as trademarks or registered trademarks. In all instances in which Morgan Kaufmann Publishers is aware of a claim, the product names appear in initial capital or all capital letters. Readers, however, should contact the appropriate companies for more complete information regarding trademarks and registration.

No part of this publication may be reproduced, stored in a retrieval system, or transmitted in any form or by any means, electronic, mechanical, photocopying, scanning, or otherwise, without prior written permission of the publisher.

Permissions may be sought directly from Elsevier’s Science & Technology Rights Department in Oxford, UK: phone: (+44) 1865 843830, fax: (+44) 1865 853333, e-mail: permissions@elsevier.com. You may also complete your request on-line via the Elsevier homepage (http://elsevier.com), by selecting “Support & Contact” then “Copyright and Permission” and then “Obtaining Permissions.”

Library of Congress Cataloging-in-Publication Data

Application submitted.

ISBN 13: 978-0-12-374514-9

Cover image: Beaver Lake, near Lowville, NY, in the foothills of the Adirondacks. Copyright © 2008, Michael L. Scott.

For information on all Morgan Kaufmann publications, visit our website at www.books.elsevier.com

Printed in the United States

Transferred to Digital Printing in 2011

To my parents,

Dorothy D. Scott and Peter Lee Scott,

who modeled for their children

the deepest commitment

to humanistic values.


Contents

Foreword
Preface

I FOUNDATIONS

1 Introduction
1.1 The Art of Language Design
1.2 The Programming Language Spectrum
1.3 Why Study Programming Languages?
1.4 Compilation and Interpretation
1.5 Programming Environments
1.6 An Overview of Compilation
1.6.1 Lexical and Syntax Analysis
1.6.2 Semantic Analysis and Intermediate Code Generation
1.6.3 Target Code Generation
1.6.4 Code Improvement
1.7 Summary and Concluding Remarks
1.8 Exercises
1.9 Explorations
1.10 Bibliographic Notes

2 Programming Language Syntax
2.1 Specifying Syntax: Regular Expressions and Context-Free Grammars
2.1.1 Tokens and Regular Expressions
2.1.2 Context-Free Grammars
2.1.3 Derivations and Parse Trees
2.2 Scanning
2.2.1 Generating a Finite Automaton
2.2.2 Scanner Code
2.2.3 Table-Driven Scanning
2.2.4 Lexical Errors
2.2.5 Pragmas
2.3 Parsing
2.3.1 Recursive Descent
2.3.2 Table-Driven Top-Down Parsing
2.3.3 Bottom-Up Parsing
2.3.4 Syntax Errors
2.4 Theoretical Foundations
2.4.1 Finite Automata
2.4.2 Push-Down Automata
2.4.3 Grammar and Language Classes
2.5 Summary and Concluding Remarks
2.6 Exercises
2.7 Explorations
2.8 Bibliographic Notes

3 Names, Scopes, and Bindings
3.1 The Notion of Binding Time
3.2 Object Lifetime and Storage Management
3.2.1 Static Allocation
3.2.2 Stack-Based Allocation
3.2.3 Heap-Based Allocation
3.2.4 Garbage Collection
3.3 Scope Rules
3.3.1 Static Scoping
3.3.2 Nested Subroutines
3.3.3 Declaration Order
3.3.4 Modules
3.3.5 Module Types and Classes
3.3.6 Dynamic Scoping
3.4 Implementing Scope
3.4.1 Symbol Tables
3.4.2 Association Lists and Central Reference Tables
3.5 The Meaning of Names within a Scope
3.5.1 Aliases
3.5.2 Overloading
3.5.3 Polymorphism and Related Concepts
3.6 The Binding of Referencing Environments
3.6.1 Subroutine Closures
3.6.2 First-Class Values and Unlimited Extent
3.6.3 Object Closures
3.7 Macro Expansion
3.8 Separate Compilation
3.8.1 Separate Compilation in C
3.8.2 Packages and Automatic Header Inference
3.8.3 Module Hierarchies
3.9 Summary and Concluding Remarks
3.10 Exercises
3.11 Explorations
3.12 Bibliographic Notes

4 Semantic Analysis
4.1 The Role of the Semantic Analyzer
4.2 Attribute Grammars
4.3 Evaluating Attributes
4.4 Action Routines
4.5 Space Management for Attributes
4.5.1 Bottom-Up Evaluation
4.5.2 Top-Down Evaluation
4.6 Decorating a Syntax Tree
4.7 Summary and Concluding Remarks
4.8 Exercises
4.9 Explorations
4.10 Bibliographic Notes

5 Target Machine Architecture
5.1 The Memory Hierarchy
5.2 Data Representation
5.2.1 Integer Arithmetic
5.2.2 Floating-Point Arithmetic
5.3 Instruction Set Architecture
5.3.1 Addressing Modes
5.3.2 Conditions and Branches
5.4 Architecture and Implementation
5.4.1 Microprogramming
5.4.2 Microprocessors
5.4.3 RISC
5.4.4 Multithreading and Multicore
5.4.5 Two Example Architectures: The x86 and MIPS
5.5 Compiling for Modern Processors
5.5.1 Keeping the Pipeline Full
5.5.2 Register Allocation
5.6 Summary and Concluding Remarks
5.7 Exercises
5.8 Explorations
5.9 Bibliographic Notes

II CORE ISSUES IN LANGUAGE DESIGN

6 Control Flow
6.1 Expression Evaluation
6.1.1 Precedence and Associativity
6.1.2 Assignments
6.1.3 Initialization
6.1.4 Ordering within Expressions
6.1.5 Short-Circuit Evaluation
6.2 Structured and Unstructured Flow
6.2.1 Structured Alternatives to goto
6.2.2 Continuations
6.3 Sequencing
6.4 Selection
6.4.1 Short-Circuited Conditions
6.4.2 Case / Switch Statements
6.5 Iteration
6.5.1 Enumeration-Controlled Loops
6.5.2 Combination Loops
6.5.3 Iterators
6.5.4 Generators in Icon
6.5.5 Logically Controlled Loops
6.6 Recursion
6.6.1 Iteration and Recursion
6.6.2 Applicative- and Normal-Order Evaluation
6.7 Nondeterminacy
6.8 Summary and Concluding Remarks
6.9 Exercises
6.10 Explorations
6.11 Bibliographic Notes

7 Data Types
7.1 Type Systems
7.1.1 Type Checking
7.1.2 Polymorphism
7.1.3 The Meaning of “Type”
7.1.4 Classification of Types
7.1.5 Orthogonality
7.2 Type Checking
7.2.1 Type Equivalence
7.2.2 Type Compatibility
7.2.3 Type Inference
7.2.4 The ML Type System
7.3 Records (Structures) and Variants (Unions)
7.3.1 Syntax and Operations
7.3.2 Memory Layout and Its Impact
7.3.3 With Statements
7.3.4 Variant Records (Unions)
7.4 Arrays
7.4.1 Syntax and Operations
7.4.2 Dimensions, Bounds, and Allocation
7.4.3 Memory Layout
7.5 Strings
7.6 Sets
7.7 Pointers and Recursive Types
7.7.1 Syntax and Operations
7.7.2 Dangling References
7.7.3 Garbage Collection
7.8 Lists
7.9 Files and Input/Output
7.9.1 Interactive I/O
7.9.2 File-Based I/O
7.9.3 Text I/O
7.10 Equality Testing and Assignment
7.11 Summary and Concluding Remarks
7.12 Exercises
7.13 Explorations
7.14 Bibliographic Notes

8 Subroutines and Control Abstraction
8.1 Review of Stack Layout
8.2 Calling Sequences
8.2.1 Displays
8.2.2 Case Studies: C on the MIPS; Pascal on the x86
8.2.3 Register Windows
8.2.4 In-Line Expansion
8.3 Parameter Passing
8.3.1 Parameter Modes
8.3.2 Call-by-Name
8.3.3 Special-Purpose Parameters
8.3.4 Function Returns
8.4 Generic Subroutines and Modules
8.4.1 Implementation Options
8.4.2 Generic Parameter Constraints
8.4.3 Implicit Instantiation
8.4.4 Generics in C++, Java, and C#
8.5 Exception Handling
8.5.1 Defining Exceptions
8.5.2 Exception Propagation
8.5.3 Implementation of Exceptions
8.6 Coroutines
8.6.1 Stack Allocation
8.6.2 Transfer
8.6.3 Implementation of Iterators
8.6.4 Discrete Event Simulation
8.7 Events
8.7.1 Sequential Handlers
8.7.2 Thread-Based Handlers
8.8 Summary and Concluding Remarks
8.9 Exercises
8.10 Explorations
8.11 Bibliographic Notes

9 Data Abstraction and Object Orientation
9.1 Object-Oriented Programming
9.2 Encapsulation and Inheritance
9.2.1 Modules
9.2.2 Classes
9.2.3 Nesting (Inner Classes)
9.2.4 Type Extensions
9.2.5 Extending without Inheritance
9.3 Initialization and Finalization
9.3.1 Choosing a Constructor
9.3.2 References and Values
9.3.3 Execution Order
9.3.4 Garbage Collection
9.4 Dynamic Method Binding
9.4.1 Virtual and Nonvirtual Methods
9.4.2 Abstract Classes
9.4.3 Member Lookup
9.4.4 Polymorphism
9.4.5 Object Closures
9.5 Multiple Inheritance
9.5.1 Semantic Ambiguities
9.5.2 Replicated Inheritance
9.5.3 Shared Inheritance
9.5.4 Mix-In Inheritance
9.6 Object-Oriented Programming Revisited
9.6.1 The Object Model of Smalltalk
9.7 Summary and Concluding Remarks
9.8 Exercises
9.9 Explorations
9.10 Bibliographic Notes

III ALTERNATIVE PROGRAMMING MODELS

10 Functional Languages
10.1 Historical Origins
10.2 Functional Programming Concepts
10.3 A Review/Overview of Scheme
10.3.1 Bindings
10.3.2 Lists and Numbers
10.3.3 Equality Testing and Searching
10.3.4 Control Flow and Assignment
10.3.5 Programs as Lists
10.3.6 Extended Example: DFA Simulation
10.4 Evaluation Order Revisited
10.4.1 Strictness and Lazy Evaluation
10.4.2 I/O: Streams and Monads
10.5 Higher-Order Functions
10.6 Theoretical Foundations
10.6.1 Lambda Calculus
10.6.2 Control Flow
10.6.3 Structures
10.7 Functional Programming in Perspective
10.8 Summary and Concluding Remarks
10.9 Exercises
10.10 Explorations
10.11 Bibliographic Notes

11 Logic Languages
11.1 Logic Programming Concepts
11.2 Prolog
11.2.1 Resolution and Unification
11.2.2 Lists
11.2.3 Arithmetic
11.2.4 Search/Execution Order
11.2.5 Extended Example: Tic-Tac-Toe
11.2.6 Imperative Control Flow
11.2.7 Database Manipulation
11.3 Theoretical Foundations
11.3.1 Clausal Form
11.3.2 Limitations
11.3.3 Skolemization
11.4 Logic Programming in Perspective
11.4.1 Parts of Logic Not Covered
11.4.2 Execution Order
11.4.3 Negation and the “Closed World” Assumption
11.5 Summary and Concluding Remarks
11.6 Exercises
11.7 Explorations
11.8 Bibliographic Notes

12 Concurrency
12.1 Background and Motivation
12.1.1 The Case for Multithreaded Programs
12.1.2 Multiprocessor Architecture
12.2 Concurrent Programming Fundamentals
12.2.1 Communication and Synchronization
12.2.2 Languages and Libraries
12.2.3 Thread Creation Syntax
12.2.4 Implementation of Threads
12.3 Implementing Synchronization
12.3.1 Busy-Wait Synchronization
12.3.2 Nonblocking Algorithms
12.3.3 Memory Consistency Models
12.3.4 Scheduler Implementation
12.3.5 Semaphores
12.4 Language-Level Mechanisms
12.4.1 Monitors
12.4.2 Conditional Critical Regions
12.4.3 Synchronization in Java
12.4.4 Transactional Memory
12.4.5 Implicit Synchronization
12.5 Message Passing
12.5.1 Naming Communication Partners
12.5.2 Sending
12.5.3 Receiving
12.5.4 Remote Procedure Call
12.6 Summary and Concluding Remarks
12.7 Exercises
12.8 Explorations
12.9 Bibliographic Notes

13 Scripting Languages
13.1 What Is a Scripting Language?
13.1.1 Common Characteristics
13.2 Problem Domains
13.2.1 Shell (Command) Languages
13.2.2 Text Processing and Report Generation
13.2.3 Mathematics and Statistics
13.2.4 “Glue” Languages and General-Purpose Scripting
13.2.5 Extension Languages
13.3 Scripting the World Wide Web
13.3.1 CGI Scripts
13.3.2 Embedded Server-Side Scripts
13.3.3 Client-Side Scripts
13.3.4 Java Applets
13.3.5 XSLT
13.4 Innovative Features
13.4.1 Names and Scopes
13.4.2 String and Pattern Manipulation
13.4.3 Data Types
13.4.4 Object Orientation
13.5 Summary and Concluding Remarks
13.6 Exercises
13.7 Explorations
13.8 Bibliographic Notes

IV A CLOSER LOOK AT IMPLEMENTATION

14 Building a Runnable Program
14.1 Back-End Compiler Structure
14.1.1 A Plausible Set of Phases
14.1.2 Phases and Passes
14.2 Intermediate Forms
14.2.1 Diana
14.2.2 The gcc IFs
14.2.3 Stack-Based Intermediate Forms
14.3 Code Generation
14.3.1 An Attribute Grammar Example
14.3.2 Register Allocation
14.4 Address Space Organization
14.5 Assembly
14.5.1 Emitting Instructions
14.5.2 Assigning Addresses to Names
14.6 Linking
14.6.1 Relocation and Name Resolution
14.6.2 Type Checking
14.7 Dynamic Linking
14.7.1 Position-Independent Code
14.7.2 Fully Dynamic (Lazy) Linking
14.8 Summary and Concluding Remarks
14.9 Exercises
14.10 Explorations
14.11 Bibliographic Notes

15 Run-time Program Management
15.1 Virtual Machines
15.1.1 The Java Virtual Machine
15.1.2 The Common Language Infrastructure
15.2 Late Binding of Machine Code
15.2.1 Just-in-Time and Dynamic Compilation
15.2.2 Binary Translation
15.2.3 Binary Rewriting
15.2.4 Mobile Code and Sandboxing
15.3 Inspection/Introspection
15.3.1 Reflection
15.3.2 Symbolic Debugging
15.3.3 Performance Analysis
15.4 Summary and Concluding Remarks
15.5 Exercises
15.6 Explorations
15.7 Bibliographic Notes

16 Code Improvement
16.1 Phases of Code Improvement
16.2 Peephole Optimization
16.3 Redundancy Elimination in Basic Blocks
16.3.1 A Running Example
16.3.2 Value Numbering
16.4 Global Redundancy and Data Flow Analysis
16.4.1 SSA Form and Global Value Numbering
16.4.2 Global Common Subexpression Elimination
16.5 Loop Improvement I
16.5.1 Loop Invariants
16.5.2 Induction Variables
16.6 Instruction Scheduling
16.7 Loop Improvement II
16.7.1 Loop Unrolling and Software Pipelining
16.7.2 Loop Reordering
16.8 Register Allocation
16.9 Summary and Concluding Remarks
16.10 Bibliographic Notes

A Programming Languages Mentioned
B Language Design and Language Implementation
C Numbered Examples
Bibliography
Index

Foreword

The ubiquity of computers in everyday life in the 21st century justifies the centrality of programming languages to computer science education. Programming languages is the area that connects the theoretical foundations of computer science, the source of problem-solving algorithms, to modern computer architectures on which the corresponding programs produce solutions. Given the speed with which computing technology advances in this post-Internet era, a computing textbook must present a structure for organizing information about a subject, not just the facts of the subject itself. In this book, Michael Scott broadly and comprehensively presents the key concepts of programming languages and their implementation, in a manner appropriate for computer science majors.

The key strength of Scott’s book is that he holistically combines descriptions of language concepts with concrete explanations of how to realize them. These discussions, updated in this third edition to reflect current research and practice, have enough depth to provide basic information as well as supplemental material for the reader interested in a specific topic. By selectively eliding some topics, an instructor can still create a coherent exploration of a subset of the subject matter. Moreover, Scott uses numerous examples from real languages to illustrate key points. For interested or motivated readers, additional in-depth and advanced discussions and exercises are available on the book’s companion CD, enabling students with a range of interests and abilities to explore the fundamentals of programming languages and compilation further on their own.

I have taught a semester-long comparative programming languages course using Scott’s book for the last several years. I emphasize to students that my goal is for them to learn how to learn a programming language, rather than to retain detailed specifics of any one programming language. The purpose of the course is to teach students an organizational framework for learning the new languages they are certain to encounter throughout their careers in computer science. To this end, I particularly like Scott’s chapters on programming language paradigms (i.e., functional, logic, object-oriented, scripting), and my course material is organized in this manner. However, I have also included foundational topics such as memory organization, names and locations, scoping, types, and garbage collection, all of which benefit from being presented in a manner that links the language concept to its implementation details. Scott’s explanations are to the point and intuitive, with clear illustrations and good examples. Often, discussions are independent of previously presented material, making it easier to pick and choose topics for the syllabus. In addition, many supplemental teaching materials are provided on the Web.

Of key interest to me in this new edition are the new Chapter 15 on run-time environments and virtual machines (VMs) and the major update of Chapter 12 on concurrency. Given the current emphasis on virtualization, including a chapter on VMs, such as Java’s JVM and the .NET CLI, facilitates student understanding of this important topic and explains how modern languages achieve portability across many platforms. The discussion of dynamic compilation and binary translation provides a contrast to the more traditional model of compilation presented earlier in the book. It is important that Scott includes this newer compilation technology so that a student can better understand what is needed to support the newer dynamic language features described. Further, the discussions of symbolic debugging and performance analysis demonstrate that programming language and compiler technology pervade the software development cycle.

Similarly, Chapter 12 has been augmented with discussions of newer topics that have been the focus of recent research (e.g., memory consistency models, software transactional memory). A discussion of concurrency as a programming paradigm belongs in a programming languages course, not just in an operating systems course. In this context, language design choices can easily be compared and contrasted, and their required implementations considered. This blurring of the boundaries between language design, compilation, operating systems, and architecture characterizes current software development in practice. This reality is mirrored in this third edition of Scott’s book.

Besides these major changes, this edition features updated examples (e.g., in x86 rather than MIPS assembly, and in C rather than Pascal) and enhanced discussions in the context of modern languages such as C#, Java 5, Python, and Eiffel. Presenting examples in several programming languages helps students understand that it is the underlying common concepts that are important, not their syntactic differences.

In summary, Michael Scott’s book is an excellent treatment of programming languages and their implementation. This new third edition provides a good reference for students that supplements the material presented in lectures. Several coherent tracks through the textbook allow construction of several “flavors” of courses that cover much, but not all, of the material. The presentation is clear and comprehensive, with language design and implementation discussed together and supporting one another.

Congratulations to Michael on a fine third edition of this wonderful book!

Barbara G. Ryder

J. Byron Maupin Professor of Engineering

Head, Department of Computer Science

Virginia Tech

Preface

A course in computer programming provides the typical student’s first exposure to the field of computer science. Most students in such a course will have used computers all their lives, for email, games, web browsing, word processing, social networking, and a host of other tasks, but it is not until they write their first programs that they begin to appreciate how applications work. After gaining a certain level of facility as programmers (presumably with the help of a good course in data structures and algorithms), the natural next step is to wonder how programming languages work. This book provides an explanation. It aims, quite simply, to be the most comprehensive and accurate languages text available, in a style that is engaging and accessible to the typical undergraduate. This aim reflects my conviction that students will understand more, and enjoy the material more, if we explain what is really going on.

In the conventional “systems” curriculum, the material beyond data structures (and possibly computer organization) tends to be compartmentalized into a host of separate subjects, including programming languages, compiler construction, computer architecture, operating systems, networks, parallel and distributed computing, database management systems, and possibly software engineering, object-oriented design, graphics, or user interface systems. One problem with this compartmentalization is that the list of subjects keeps growing, but the number of semesters in a Bachelor’s program does not. More important, perhaps, many of the most interesting discoveries in computer science occur at the boundaries between subjects. The RISC revolution, for example, forged an alliance between computer architecture and compiler construction that has endured for 25 years. More recently, renewed interest in virtual machines has blurred the boundaries between the operating system kernel, the compiler, and the language run-time system. Programs are now routinely embedded in web pages, spreadsheets, and user interfaces. And with the rise of multicore processors, concurrency issues that were once the concern only of systems programmers have begun to affect everyday computing.

Increasingly, both educators and practitioners are recognizing the need to emphasize these sorts of interactions. Within higher education in particular there is a growing trend toward integration in the core curriculum. Rather than give the typical student an in-depth look at two or three narrow subjects, leaving holes in all the others, many schools have revised the programming languages and computer organization courses to cover a wider range of topics, with follow-on electives in various specializations. This trend is very much in keeping with the findings of the ACM/IEEE-CS Computing Curricula 2001 task force, which emphasize the growth of the field, the increasing need for breadth, the importance of flexibility in curricular design, and the overriding goal of graduating students who “have a system-level perspective, appreciate the interplay between theory and practice, are familiar with common themes, and can adapt over time as the field evolves” [CR01, Sec. 11.1, adapted].

The first two editions of Programming Language Pragmatics (PLP-1e and -2e) had the good fortune of riding this curricular trend. This third edition continues and strengthens the emphasis on integrated learning while retaining a central focus on programming language design.

At its core, PLP is a book about how programming languages work. Rather than enumerate the details of many different languages, it focuses on concepts that underlie all the languages the student is likely to encounter, illustrating those concepts with a variety of concrete examples, and exploring the tradeoffs that explain why different languages were designed in different ways. Similarly, rather than explain how to build a compiler or interpreter (a task few programmers will undertake in its entirety), PLP focuses on what a compiler does to an input program, and why. Language design and implementation are thus explored together, with an emphasis on the ways in which they interact.

Changes in the Third Edition

In comparison to the second edition, PLP-3e provides:

1. A new chapter on virtual machines and run-time program management
2. A major revision of the chapter on concurrency
3. Numerous other reflections of recent changes in the field
4. Improvements inspired by instructor feedback or a fresh consideration of familiar topics

Item 1 in this list is perhaps the most visible change. It reflects the increasingly ubiquitous use of both managed code and scripting languages. Chapter 15 begins with a general overview of virtual machines and then takes a detailed look at the two most widely used examples: the JVM and the CLI. The chapter also covers dynamic compilation, binary translation, reflection, debuggers, profilers, and other aspects of the increasingly sophisticated run-time machinery found in modern language systems.

Item 2 also reflects the evolving nature of the field. With the proliferation of multicore processors, concurrent languages have become increasingly important to mainstream programmers, and the field is very much in flux. Changes to Chapter 12 (Concurrency) include new sections on nonblocking synchronization, memory consistency models, and software transactional memory, as well as increased coverage of OpenMP, Erlang, Java 5, and Parallel FX for .NET.

Other new material (Item 3) appears throughout the text. Section 5.4.4 covers

the multicore revolution from an architectural perspective. Section 8.7 covers


event handling, in both sequential and concurrent languages. In Section 14.2,

coverage of gcc internals includes not only RTL, but also the newer GENERIC

and Gimple intermediate forms. References have been updated throughout to

accommodate such recent developments as Java 6, C++ ’0X, C# 3.0, F#, Fortran

2003, Perl 6, and Scheme R6RS.

Finally, Item 4 encompasses improvements to almost every section of the

text. Topics receiving particularly heavy updates include the running example

of Chapter 1 (moved from Pascal/MIPS to C/x86); bootstrapping (Section 1.4);

scanning (Section 2.2); table-driven parsing (Sections 2.3.2 and 2.3.3); closures

(Sections 3.6.2, 3.6.3, 8.3.1, 8.4.4, 8.7.2, and 9.2.3); macros (Section 3.7); evaluation order and strictness (Sections 6.6.2 and 10.4); decimal types (Section 7.1.4);

array shape and allocation (Section 7.4.2); parameter passing (Section 8.3); inner

(nested) classes (Section 9.2.3); monads (Section 10.4.2); and the Prolog examples

of Chapter 11 (now ISO conformant).

To accommodate new material, coverage of some topics has been condensed. Examples include modules (Chapters 3 and 9), loop control (Chapter 6),

packed types (Chapter 7), the Smalltalk class hierarchy (Chapter 9), metacircular interpretation (Chapter 10), interconnection networks (Chapter 12), and

thread creation syntax (also Chapter 12). Additional material has moved to the

companion CD. This includes all of Chapter 5 (Target Machine Architecture),

unions (Section 7.3.4), dangling references (Section 7.7.2), message passing

(Section 12.5), and XSLT (Section 13.3.5). Throughout the text, examples

drawn from languages no longer in widespread use have been replaced with more

recent equivalents wherever appropriate.

Overall, the printed text has grown by only some 30 pages, but there are nearly

100 new pages on the CD. There are also 14 more “Design & Implementation”

sidebars, more than 70 new numbered examples, a comparable number of new

“Check Your Understanding” questions, and more than 60 new end-of-chapter

exercises and explorations. Considerable effort has been invested in creating a

consistent and comprehensive index. As in earlier editions, Morgan Kaufmann

has maintained its commitment to providing definitive texts at reasonable

cost: PLP-3e is less expensive than competing alternatives, but larger and more

comprehensive.

The PLP CD - See Note on page xxx

To minimize the physical size of the text, make way for new material, and allow

students to focus on the fundamentals when browsing, approximately 350 pages

of more advanced or peripheral material appears on the PLP CD. Each CD section

is represented in the main text by a brief introduction to the subject and an “In

More Depth” paragraph that summarizes the elided material.

Note that placement of material on the CD does not constitute a judgment

about its technical importance. It simply reflects the fact that there is more material

worth covering than will fit in a single volume or a single semester course. Since

preferences and syllabi vary, most instructors will probably want to assign reading


from the CD, and most will refrain from assigning certain sections of the printed

text. My intent has been to retain in print the material that is likely to be covered

in the largest number of courses.

Also contained on the CD are compilable copies of all significant code fragments

found in the text (in more than two dozen languages) and pointers to on-line

resources.

Design & Implementation Sidebars

Like its predecessors, PLP-3e places heavy emphasis on the ways in which language

design constrains implementation options, and the ways in which anticipated

implementations have influenced language design. Many of these connections and

interactions are highlighted in some 135 “Design & Implementation” sidebars.

A more detailed introduction to these sidebars appears on page 9 (Chapter 1).

A numbered list appears in Appendix B.

Numbered and Titled Examples

Examples in PLP-3e are intimately woven into the flow of the presentation. To

make it easier to find specific examples, to remember their content, and to refer

to them in other contexts, a number and a title for each is displayed in a marginal

note. There are nearly 1000 such examples across the main text and the CD. A

detailed list appears in Appendix C.

Exercise Plan

Review questions appear throughout the text at roughly 10-page intervals, at the

ends of major sections. These are based directly on the preceding material, and

have short, straightforward answers.

More detailed questions appear at the end of each chapter. These are

divided into Exercises and Explorations. The former are generally more challenging than the per-section review questions, and should be suitable for homework or brief projects. The latter are more open-ended, requiring web or

library research, substantial time commitment, or the development of subjective opinion. Solutions to many of the exercises (but not the explorations)

are available to registered instructors from a password-protected web site: visit

textbooks.elsevier.com/web/9780123745149.

How to Use the Book

Programming Language Pragmatics covers almost all of the material in the PL

“knowledge units” of the Computing Curricula 2001 report [CR01]. The book is

an ideal fit for the CS 341 model course (Programming Language Design), and

can also be used for CS 340 (Compiler Construction) or CS 343 (Programming

Preface

Fu

n

cti

Lo onal

12 gic

Co

nc

ur

ren

cy

13

Sc

rip

tin

g

14

Co

15 deG

Ru en

nt

im

16

e

Im

pr

ov

em

en

t

Part IV

11

cts

10

bje

9O

ro

ut

ine

s

Part III

8S

ub

7T

yp

es

Part II

3N

am

es

4S

em

a

5 A ntic

rch s

6 C itect

ur

on

tro e

l

1I

nt

ro

2S

yn

tax

Part I

xxvii

F

R

2.3.3

P

C

Q

14.5 15.2

2.2

2.3.2

8.3

F: The full-year/self-study plan

R: The one-semester Rochester plan

P: The traditional Programming Languages plan;

would also de-emphasize implementation material

throughout the chapters shown

C: The compiler plan; would also de-emphasize design material

throughout the chapters shown

Q: The 1+2 quarter plan: an overview quarter and two independent, optional

follow-on quarters, one language-oriented, the other compiler-oriented

Supplemental (CD) section

To be skimmed by students

in need of review

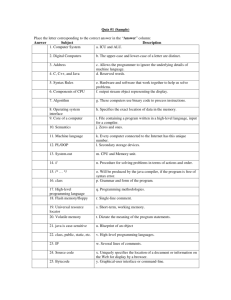

Figure 0.1

Paths through the text. Darker shaded regions indicate supplemental “In More Depth” sections on the PLP CD.

Section numbers are shown for breaks that do not correspond to supplemental material.

Paradigms). It contains a significant fraction of the content of CS 344 (Functional

Programming) and CS 346 (Scripting Languages). Figure 0.1 illustrates several

possible paths through the text.

For self-study, or for a full-year course (track F in Figure 0.1), I recommend

working through the book from start to finish, turning to the PLP CD as each “In

More Depth” section is encountered. The one-semester course at the University of

Rochester (track R), for which the text was originally developed, also covers most

of the book, but leaves out most of the CD sections, as well as bottom-up parsing

(2.3.3) and the second halves of Chapters 14 (Building a Runnable Program)

and 15 (Run-time Program Management).

Some chapters (2, 4, 5, 14, 15, 16) have a heavier emphasis than others on

implementation issues. These can be reordered to a certain extent with respect

to the more design-oriented chapters. Many students will already be familiar

with much of the material in Chapter 5, most likely from a course on computer

organization; hence the placement of the chapter on the PLP CD. Some students

may also be familiar with some of the material in Chapter 2, perhaps from a course

on automata theory. Much of this chapter can then be read quickly as well, pausing


perhaps to dwell on such practical issues as recovery from syntax errors, or the

ways in which a scanner differs from a classical finite automaton.

A traditional programming languages course (track P in Figure 0.1) might leave

out all of scanning and parsing, plus all of Chapter 4. It would also de-emphasize

the more implementation-oriented material throughout. In place of these it could

add such design-oriented CD sections as the ML type system (7.2.4), multiple

inheritance (9.5), Smalltalk (9.6.1), lambda calculus (10.6), and predicate calculus

(11.3).

PLP has also been used at some schools for an introductory compiler course

(track C in Figure 0.1). The typical syllabus leaves out most of Part III (Chapters 10

through 13), and de-emphasizes the more design-oriented material throughout.

In place of these it includes all of scanning and parsing, Chapters 14 through 16,

and a slightly different mix of other CD sections.

For a school on the quarter system, an appealing option is to offer an introductory one-quarter course and two optional follow-on courses (track Q in Figure 0.1). The introductory quarter might cover the main (non-CD) sections of

Chapters 1, 3, 6, and 7, plus the first halves of Chapters 2 and 8. A language-oriented follow-on quarter might cover the rest of Chapter 8, all of Part III, CD

sections from Chapters 6 through 8, and possibly supplemental material on formal

semantics, type systems, or other related topics. A compiler-oriented follow-on

quarter might cover the rest of Chapter 2, Chapters 4–5 and 14–16, CD sections

from Chapters 3 and 8–9, and possibly supplemental material on automatic code

generation, aggressive code improvement, programming tools, and so on.

Whatever the path through the text, I assume that the typical reader has already

acquired significant experience with at least one imperative language. Exactly

which language it is shouldn’t matter. Examples are drawn from a wide variety of

languages, but always with enough comments and other discussion that readers

without prior experience should be able to understand easily. Single-paragraph

introductions to more than 50 different languages appear in Appendix A. Algorithms, when needed, are presented in an informal pseudocode that should be

self-explanatory. Real programming language code is set in “typewriter” font.

Pseudocode is set in a sans-serif font.

Supplemental Materials

In addition to supplemental sections, the PLP CD contains a variety of other

resources, including

Links to language reference manuals and tutorials on the Web

Links to open-source compilers and interpreters

Complete source code for all nontrivial examples in the book

A search engine for both the main text and the CD-only content


Additional resources are available on-line at textbooks.elsevier.com/web/9780123745149 (you may wish to check back from time to time). For instructors who have adopted the text, a password-protected page provides access to

Editable PDF source for all the figures in the book

Editable PowerPoint slides

Solutions to most of the exercises

Suggestions for larger projects

Acknowledgments for the Third Edition

In preparing the third edition I have been blessed with the generous assistance

of a very large number of people. Many provided errata or other feedback on

the second edition, among them Gerald Baumgartner, Manuel E. Bermudez,

William Calhoun, Betty Cheng, Yi Dai, Eileen Head, Nathan Hoot, Peter Ketcham,

Antonio Leitao, Jingke Li, Annie Liu, Dan Mullowney, Arthur Nunes-Harwitt,

Zongyan Qiu, Beverly Sanders, David Sattari, Parag Tamhankar, Ray Toal, Robert

van Engelen, Garrett Wollman, and Jingguo Yao. In several cases, good advice from

the 2004 class test went unheeded in the second edition due to lack of time; I am

glad to finally have the chance to incorporate it here. I also remain indebted to

the many individuals acknowledged in the first and second editions, and to the

reviewers, adopters, and readers who made those editions a success.

External reviewers for the third edition provided a wealth of useful suggestions; my thanks to Perry Alexander (University of Kansas), Hans Boehm (HP

Labs), Stephen Edwards (Columbia University), Tim Harris (Microsoft Research),

Eileen Head (Binghamton University), Doug Lea (SUNY Oswego), Jan-Willem

Maessen (Sun Microsystems Laboratories), Maged Michael (IBM Research),

Beverly Sanders (University of Florida), Christopher Vickery (Queens College,

City University of New York), and Garrett Wollman (MIT). Hans, Doug, and

Maged proofread parts of Chapter 12 on very short notice; Tim and Jan were

equally helpful with parts of Chapter 10. Mike Spear helped vet the transactional memory implementation of Figure 12.18. Xiao Zhang provided pointers for Section 15.3.3. Problems that remain in all these sections are entirely

my own.

In preparing the third edition, I have drawn on 20 years of experience teaching

this material to upper-level undergraduates at the University of Rochester. I am

grateful to all my students for their enthusiasm and feedback. My thanks as well

to my colleagues and graduate students, and to the department’s administrative,

secretarial, and technical staff for providing such a supportive and productive work

environment. Finally, my thanks to Barbara Ryder, whose forthright comments

on the first edition helped set me on the path to the second; I am honored to have

her as the author of the Foreword.


As they were on previous editions, the staff at Morgan Kaufmann have been a

genuine pleasure to work with, on both a professional and a personal level. My

thanks in particular to Nate McFadden, Senior Development Editor, who shepherded both this and the previous edition with unfailing patience, good humor,

and a fine eye for detail; to Marilyn Rash, who managed the book’s production;

and to Denise Penrose, whose gracious stewardship, first as Editor and then as

Publisher, has had a lasting impact.

Most important, I am indebted to my wife, Kelly, and our daughters, Erin and

Shannon, for their patience and support through endless months of writing and

revising. Computing is a fine profession, but family is what really matters.

Michael L. Scott

Rochester, NY

December 2008

PLP CD Content on a Companion Web Site

All content originally included on a CD is now available at this book’s companion

web site. Please visit the URL: http://www.elsevierdirect.com/9780123745149 and

click on “Companion Site.”


Part I: Foundations

A central premise of Programming Language Pragmatics is that language design and implementation are intimately connected; it’s hard to study one without the other.

The bulk of the text—Parts II and III—is organized around topics in language design, but

with detailed coverage throughout of the many ways in which design decisions have been shaped

by implementation concerns.

The first five chapters—Part I—set the stage by covering foundational material in both

design and implementation. Chapter 1 motivates the study of programming languages, introduces the major language families, and provides an overview of the compilation process. Chapter 3 covers the high-level structure of programs, with an emphasis on names, the binding of

names to objects, and the scope rules that govern which bindings are active at any given time.

In the process it touches on storage management; subroutines, modules, and classes; polymorphism; and separate compilation.

Chapters 2, 4, and 5 are more implementation oriented. They provide the background

needed to understand the implementation issues mentioned in Parts II and III. Chapter 2

discusses the syntax, or textual structure, of programs. It introduces regular expressions and

context-free grammars, which designers use to describe program syntax, together with the scanning and parsing algorithms that a compiler or interpreter uses to recognize that syntax. Given

an understanding of syntax, Chapter 4 explains how a compiler (or interpreter) determines

the semantics, or meaning, of a program. The discussion is organized around the notion of

attribute grammars, which serve to map a program onto something else that has meaning,

such as mathematics or some other existing language. Finally, Chapter 5 provides an overview

of assembly-level computer architecture, focusing on the features of modern microprocessors

most relevant to compilers. Programmers who understand these features have a better chance

not only of understanding why the languages they use were designed the way they were, but

also of using those languages as fully and effectively as possible.


1 Introduction

EXAMPLE 1.1 GCD program in x86 machine language

The first electronic computers were monstrous contraptions, filling

several rooms, consuming as much electricity as a good-size factory, and costing millions of 1940s dollars (but with the computing power of a modern

hand-held calculator). The programmers who used these machines believed that

the computer’s time was more valuable than theirs. They programmed in machine

language. Machine language is the sequence of bits that directly controls a processor, causing it to add, compare, move data from one place to another, and

so forth at appropriate times. Specifying programs at this level of detail is an

enormously tedious task. The following program calculates the greatest common

divisor (GCD) of two integers, using Euclid’s algorithm. It is written in machine

language, expressed here as hexadecimal (base 16) numbers, for the x86 (Pentium)

instruction set.

```
55 89 e5 53 83 ec 04 83 e4 f0 e8 31 00 00 00 89 c3 e8 2a 00
00 00 39 c3 74 10 8d b6 00 00 00 00 39 c3 7e 13 29 c3 39 c3
75 f6 89 1c 24 e8 6e 00 00 00 8b 5d fc c9 c3 29 d8 eb eb 90
```

EXAMPLE 1.2 GCD program in x86 assembler

As people began to write larger programs, it quickly became apparent that a less

error-prone notation was required. Assembly languages were invented to allow

operations to be expressed with mnemonic abbreviations. Our GCD program

looks like this in x86 assembly language:

```
    pushl   %ebp
    movl    %esp, %ebp
    pushl   %ebx
    subl    $4, %esp
    andl    $-16, %esp
    call    getint
    movl    %eax, %ebx
    call    getint
    cmpl    %eax, %ebx
    je      C
A:  cmpl    %eax, %ebx
    jle     D
    subl    %eax, %ebx
B:  cmpl    %eax, %ebx
    jne     A
C:  movl    %ebx, (%esp)
    call    putint
    movl    -4(%ebp), %ebx
    leave
    ret
D:  subl    %ebx, %eax
    jmp     B
```

Programming Language Pragmatics. DOI: 10.1016/B978-0-12-374514-9.00010-0

Copyright © 2009 by Elsevier Inc. All rights reserved.

Assembly languages were originally designed with a one-to-one correspondence between mnemonics and machine language instructions, as shown in this

example.¹ Translating from mnemonics to machine language became the job of a

systems program known as an assembler. Assemblers were eventually augmented

with elaborate “macro expansion” facilities to permit programmers to define

parameterized abbreviations for common sequences of instructions. The correspondence between assembly language and machine language remained obvious

and explicit, however. Programming continued to be a machine-centered enterprise: each different kind of computer had to be programmed in its own assembly

language, and programmers thought in terms of the instructions that the machine

would actually execute.
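The one-to-one correspondence can be made concrete with a small experiment. The following is a minimal C sketch of a table-driven "assembler" for the label-free instructions of the GCD program above. Its byte values are exactly those in the machine-language listing. The names `struct encoding`, `table`, and `assemble_line` are hypothetical. A real assembler would parse operands rather than match whole lines. It would also compute the address-dependent encodings of `je`, `jle`, `jne`, `jmp`, and `call`, which this sketch deliberately omits.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* One row of a hypothetical opcode table: an exact assembly line
   (gcc/AT&T syntax) and the machine-language bytes it encodes to. */
struct encoding {
    const char *line;
    unsigned char bytes[3];
    size_t length;
};

/* Label-free instructions from the GCD example. Jumps and calls are
   omitted because their encodings depend on target addresses. */
static const struct encoding table[] = {
    { "pushl %ebp",          { 0x55 },             1 },
    { "movl %esp, %ebp",     { 0x89, 0xe5 },       2 },
    { "pushl %ebx",          { 0x53 },             1 },
    { "subl $4, %esp",       { 0x83, 0xec, 0x04 }, 3 },
    { "andl $-16, %esp",     { 0x83, 0xe4, 0xf0 }, 3 },
    { "movl %eax, %ebx",     { 0x89, 0xc3 },       2 },
    { "cmpl %eax, %ebx",     { 0x39, 0xc3 },       2 },
    { "subl %eax, %ebx",     { 0x29, 0xc3 },       2 },
    { "movl %ebx, (%esp)",   { 0x89, 0x1c, 0x24 }, 3 },
    { "movl -4(%ebp), %ebx", { 0x8b, 0x5d, 0xfc }, 3 },
    { "leave",               { 0xc9 },             1 },
    { "ret",                 { 0xc3 },             1 },
    { "subl %ebx, %eax",     { 0x29, 0xd8 },       2 },
};

/* Writes the encoding of one assembly line into out (room for at least
   3 bytes) and returns the number of bytes written, or 0 if the line
   is not in the table. */
size_t assemble_line(const char *line, unsigned char *out) {
    assert(line != NULL && out != NULL);
    for (size_t i = 0; i < sizeof table / sizeof table[0]; i++) {
        if (strcmp(table[i].line, line) == 0) {
            memcpy(out, table[i].bytes, table[i].length);
            return table[i].length;
        }
    }
    return 0;
}
```

For example, `assemble_line("cmpl %eax, %ebx", buf)` stores the two bytes `39 c3` in `buf` and returns 2. By contrast, `"jmp B"` returns 0 because its encoding depends on where label `B` ends up.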

As computers evolved, and as competing designs developed, it became increasingly frustrating to have to rewrite programs for every new machine. It also became

increasingly difficult for human beings to keep track of the wealth of detail in large

assembly language programs. People began to wish for a machine-independent

language, particularly one in which numerical computations (the most common

type of program in those days) could be expressed in something more closely

resembling mathematical formulae. These wishes led in the mid-1950s to the

development of the original dialect of Fortran, the first arguably high-level programming language. Other high-level languages soon followed, notably Lisp and

Algol.

Translating from a high-level language to assembly or machine language is the

job of a systems program known as a compiler.² Compilers are substantially more

complicated than assemblers because the one-to-one correspondence between

source and target operations no longer exists when the source is a high-level

language. Fortran was slow to catch on at first, because human programmers,

with some effort, could almost always write assembly language programs that

would run faster than what a compiler could produce. Over time, however, the

performance gap has narrowed, and eventually reversed. Increases in hardware

complexity (due to pipelining, multiple functional units, etc.) and continuing

improvements in compiler technology have led to a situation in which a state-of-the-art compiler will usually generate better code than a human being will.

Even in cases in which human beings can do better, increases in computer speed

and program size have made it increasingly important to economize on programmer effort, not only in the original construction of programs, but in subsequent

program maintenance—enhancement and correction. Labor costs now heavily

outweigh the cost of computing hardware.
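For comparison, here is a minimal sketch of the same subtraction-based form of Euclid's algorithm written in C. This is the kind of high-level source that a compiler might translate into code like the x86 program above. The function name `gcd` is chosen for illustration. The assembly version is a whole program that reads its two inputs with `getint` and prints the result with `putint`, neither of which is a standard C library function, so the sketch takes its inputs as parameters instead.

```c
#include <assert.h>

/* Euclid's algorithm by repeated subtraction, following the same loop as
   the assembly: while i and j differ, subtract the smaller value from the
   larger. Both arguments must be positive; otherwise the loop may never
   terminate. */
int gcd(int i, int j) {
    assert(i > 0 && j > 0);
    while (i != j) {
        if (i > j)
            i = i - j;
        else
            j = j - i;
    }
    return i;
}
```

For example, `gcd(36, 24)` returns 12. A dozen lines of C stand in for the 22 assembly instructions. The compiler, not the programmer, decides which registers hold `i` and `j` and how the loop's comparisons and jumps are laid out.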

1 The 22 lines of assembly code in the example are encoded in varying numbers of bytes in machine

language. The three cmp (compare) instructions, for example, all happen to have the same register

operands, and are encoded in the two-byte sequence (39 c3). The four mov (move) instructions

have different operands and lengths, and begin with 89 or 8b. The chosen syntax is that of the

GNU gcc compiler suite, in which results overwrite the last operand, not the first.

2 High-level languages may also be interpreted directly, without the translation step. We will return

to this option in Section 1.4. It is the principal way in which scripting languages like Python and

JavaScript are implemented.

1.1 The Art of Language Design

Today there are thousands of high-level programming languages, and new ones

continue to emerge. Human beings use assembly language only for special-purpose applications. In a typical undergraduate class, it is not uncommon to

find users of scores of different languages. Why are there so many? There are

several possible answers:

Evolution. Computer science is a young discipline; we’re constantly finding better

ways to do things. The late 1960s and early 1970s saw a revolution in “structured programming,” in which the goto-based control flow of languages like

Fortran, Cobol, and Basic³ gave way to while loops, case (switch) statements,

and similar higher-level constructs. In the late 1980s the nested block structure

of languages like Algol, Pascal, and Ada began to give way to the object-oriented

structure of Smalltalk, C++, Eiffel, and the like.

Special Purposes. Many languages were designed for a specific problem domain.

The various Lisp dialects are good for manipulating symbolic data and complex

data structures. Icon and Awk are good for manipulating character strings. C is

good for low-level systems programming. Prolog is good for reasoning about

logical relationships among data. Each of these languages can be used successfully for a wider range of tasks, but the emphasis is clearly on the specialty.

Personal Preference. Different people like different things. Much of the parochialism of programming is simply a matter of taste. Some people love the terseness

of C; some hate it. Some people find it natural to think recursively; others prefer iteration. Some people like to work with pointers; others prefer the implicit

dereferencing of Lisp, Clu, Java, and ML. The strength and variety of personal

preference make it unlikely that anyone will ever develop a universally acceptable programming language.

Of course, some languages are more successful than others. Of the many that

have been designed, only a few dozen are widely used. What makes a language

successful? Again there are several answers:

Expressive Power. One commonly hears arguments that one language is more

“powerful” than another, though in a formal mathematical sense they are all

Turing complete—each can be used, if awkwardly, to implement arbitrary algorithms. Still, language features clearly have a huge impact on the programmer’s

ability to write clear, concise, and maintainable code, especially for very large

systems. There is no comparison, for example, between early versions of Basic

on the one hand, and Common Lisp or Ada on the other. The factors that

contribute to expressive power—abstraction facilities in particular—are a

major focus of this book.

3 The names of these languages are sometimes written entirely in uppercase letters and sometimes

in mixed case. For consistency’s sake, I adopt the convention in this book of using mixed case for

languages whose names are pronounced as words (e.g., Fortran, Cobol, Basic), and uppercase for

those pronounced as a series of letters (e.g., APL, PL/I, ML).


Ease of Use for the Novice. While it is easy to pick on Basic, one cannot deny its

success. Part of that success is due to its very low “learning curve.” Logo is

popular among elementary-level educators for a similar reason: even a 5-year-old can learn it. Pascal was taught for many years in introductory programming

language courses because, at least in comparison to other “serious” languages,

it is compact and easy to learn. In recent years Java has come to play a similar

role. Though substantially more complex than Pascal, it is much simpler than,

say, C++.

Ease of Implementation. In addition to its low learning curve, Basic is successful because it could be implemented easily on tiny machines, with limited

resources. Forth has a small but dedicated following for similar reasons.

Arguably the single most important factor in the success of Pascal was that

its designer, Niklaus Wirth, developed a simple, portable implementation of

the language, and shipped it free to universities all over the world (see Example 1.15).⁴ The Java designers took similar steps to make their language available

for free to almost anyone who wants it.

Standardization. Almost every widely used language has an official international

standard or (in the case of several scripting languages) a single canonical

implementation; and in the latter case the canonical implementation is almost

invariably written in a language that has a standard. Standardization—of both

the language and a broad set of libraries—is the only truly effective way

to ensure the portability of code across platforms. The relatively impoverished standard for Pascal, which is missing several features considered essential by many programmers (separate compilation, strings, static initialization,

random-access I/O), is at least partially responsible for the language’s drop

from favor in the 1980s. Many of these features were implemented in different

ways by different vendors.

Open Source. Most programming languages today have at least one open-source

compiler or interpreter, but some languages—C in particular—are much

more closely associated than others with freely distributed, peer-reviewed,

community-supported computing. C was originally developed in the early

1970s by Dennis Ritchie and Ken Thompson at Bell Labs,⁵ in conjunction

with the design of the original Unix operating system. Over the years Unix

evolved into the world’s most portable operating system—the OS of choice

for academic computer science—and C was closely associated with it. With

the standardization of C, the language has become available on an enormous

4 Niklaus Wirth (1934–), Professor Emeritus of Informatics at ETH in Zürich, Switzerland, is

responsible for a long line of influential languages, including Euler, Algol W, Pascal, Modula,

Modula-2, and Oberon. Among other things, his languages introduced the notions of enumeration, subrange, and set types, and unified the concepts of records (structs) and variants (unions).

He received the annual ACM Turing Award, computing’s highest honor, in 1984.

5 Ken Thompson (1943–) led the team that developed Unix. He also designed the B programming

language, a child of BCPL and the parent of C. Dennis Ritchie (1941–) was the principal force

behind the development of C itself. Thompson and Ritchie together formed the core of an

incredibly productive and influential group. They shared the ACM Turing Award in 1983.


variety of additional platforms. Linux, the leading open-source operating system, is written in C. As of October 2008, C and its descendants account for 66%

of the projects hosted at the sourceforge.net repository.

Excellent Compilers. Fortran owes much of its success to extremely good compilers. In part this is a matter of historical accident. Fortran has been around

longer than anything else, and companies have invested huge amounts of time

and money in making compilers that generate very fast code. It is also a matter

of language design, however: Fortran dialects prior to Fortran 90 lack recursion and pointers, features that greatly complicate the task of generating fast

code (at least for programs that can be written in a reasonable fashion without

them!). In a similar vein, some languages (e.g., Common Lisp) are successful

in part because they have compilers and supporting tools that do an unusually

good job of helping the programmer manage very large projects.
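One way to see why pointers can complicate the generation of fast code is aliasing. When two pointers may refer to the same variable, a compiler cannot safely replace one sequence of updates with a cheaper equivalent. The minimal C sketch below assumes that aliasing is the kind of complication meant here; the text does not spell it out. The function names are hypothetical. A compiler would like to turn `add_twice` into something like `add_twice_folded`, but the two functions disagree when `a` and `b` point to the same `int`.

```c
#include <assert.h>
#include <stddef.h>

/* Adds *b to *a twice. If a and b point to the same int, the second
   addition sees the value written by the first. */
void add_twice(int *a, const int *b) {
    assert(a != NULL && b != NULL);
    *a += *b;
    *a += *b;
}

/* The "optimized" form a compiler might want to generate. It matches
   add_twice only when a and b do not refer to the same int. */
void add_twice_folded(int *a, const int *b) {
    assert(a != NULL && b != NULL);
    *a += 2 * *b;
}
```

With `x = 1` and `y = 5`, both functions leave `x` equal to 11 after a call with `(&x, &y)`. Starting again from `x = 1`, the call `add_twice(&x, &x)` yields 4, but `add_twice_folded(&x, &x)` yields 3. Unless the compiler can prove that no such aliasing occurs, it must keep the slower form.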

Economics, Patronage, and Inertia. Finally, there are factors other than technical

merit that greatly influence success. The backing of a powerful sponsor is one.

PL/I, at least to first approximation, owes its life to IBM. Cobol and, more

recently, Ada owe their life to the U.S. Department of Defense: Ada contains a

wealth of excellent features and ideas, but the sheer complexity of implementation would likely have killed it if not for the DoD backing. Similarly, C#, despite

its technical merits, would probably not have received the attention it has without the backing of Microsoft. At the other end of the life cycle, some languages

remain widely used long after “better” alternatives are available because of a

huge base of installed software and programmer expertise, which would cost

too much to replace.

DESIGN & IMPLEMENTATION

Introduction

Throughout the book, sidebars like this one will highlight the interplay of

language design and language implementation. Among other things, we will

consider the following.

Cases (such as those mentioned in this section) in which ease or difficulty

of implementation significantly affected the success of a language

Language features that many designers now believe were mistakes, at least

in part because of implementation difficulties

Potentially useful features omitted from some languages because of concern

that they might be too difficult or slow to implement

Language features introduced at least in part to facilitate efficient or elegant

implementations

Cases in which a machine architecture makes reasonable features unreasonably expensive

Various other tradeoffs in which implementation plays a significant role

A complete list of sidebars appears in Appendix B.


Clearly no single factor determines whether a language is “good.” As we study programming languages, we shall need to consider issues from several points of view. In particular, we shall need to consider the viewpoints of both the programmer and the language implementor. Sometimes these points of view will be in harmony, as in the desire for execution speed. Often, however, there will be conflicts and tradeoffs, as the conceptual appeal of a feature is balanced against the cost of its implementation. The tradeoff becomes particularly thorny when the implementation imposes costs not only on programs that use the feature, but also on programs that do not.

In the early days of computing the implementor’s viewpoint was predominant. Programming languages evolved as a means of telling a computer what to do. For programmers, however, a language is more aptly defined as a means of expressing algorithms. Just as natural languages constrain exposition and discourse, so programming languages constrain what can and cannot easily be expressed, and have both profound and subtle influence over what the programmer can think.

Donald Knuth has suggested that programming be regarded as the art of telling another human being what one wants the computer to do [Knu84].⁶ This definition perhaps strikes the best sort of compromise. It acknowledges that both conceptual clarity and implementation efficiency are fundamental concerns. This book attempts to capture this spirit of compromise, by simultaneously considering the conceptual and implementation aspects of each of the topics it covers.

1.2 The Programming Language Spectrum

EXAMPLE 1.3 Classification of programming languages

The many existing languages can be classified into families based on their model of computation. Figure 1.1 shows a common set of families. The top-level division distinguishes between the declarative languages, in which the focus is on what the computer is to do, and the imperative languages, in which the focus is on how the computer should do it.

Declarative languages are in some sense “higher level”; they are more in tune with the programmer’s point of view, and less with the implementor’s point of view. Imperative languages predominate, however, mainly for performance reasons. There is a tension in the design of declarative languages between the desire to get away from “irrelevant” implementation details, and the need to remain close enough to the details to at least control the outline of an algorithm. The design of efficient algorithms, after all, is what much of computer science is about. It is not yet clear to what extent, and in what problem domains, we can expect compilers to discover good algorithms for problems stated at a very high level of abstraction. In any domain in which the compiler cannot find a good algorithm, the programmer needs to be able to specify one explicitly.

⁶ Donald E. Knuth (1938–), Professor Emeritus at Stanford University and one of the foremost figures in the design and analysis of algorithms, is also widely known as the inventor of the TeX typesetting system (with which this book was produced) and of the literate programming methodology with which TeX was constructed. His multivolume The Art of Computer Programming has an honored place on the shelf of most professional computer scientists. He received the ACM Turing Award in 1974.

    declarative
        functional                  Lisp/Scheme, ML, Haskell
        dataflow                    Id, Val
        logic, constraint-based     Prolog, spreadsheets
        template-based              XSLT
    imperative
        von Neumann                 C, Ada, Fortran, . . .
        scripting                   Perl, Python, PHP, . . .
        object-oriented             Smalltalk, Eiffel, Java, . . .

Figure 1.1 Classification of programming languages. Note that the categories are fuzzy, and open to debate. In particular, it is possible for a functional language to be object-oriented, and many authors do not consider functional programming to be declarative.

Within the declarative and imperative families, there are several important subclasses.

Functional languages employ a computational model based on the recursive definition of functions. They take their inspiration from the lambda calculus, a formal computational model developed by Alonzo Church in the 1930s. In essence, a program is considered a function from inputs to outputs, defined in terms of simpler functions through a process of refinement. Languages in this category include Lisp, ML, and Haskell.
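To make the functional model concrete, here is a small sketch of our own, written in Python only for readability (it is not Lisp, ML, or Haskell code). It defines the greatest common divisor by Euclid's algorithm as a recursive function: the value of a call is given by an expression involving a call on simpler arguments, and no variable is ever reassigned. The name `gcd` is ours and is not taken from any library.

```python
def gcd(a: int, b: int) -> int:
    """Greatest common divisor of two non-negative integers (not both zero).

    Defined recursively, in functional style: the value of gcd(a, b) is the
    value of a simpler gcd expression, and no variable is ever reassigned.
    """
    if b == 0:
        return a
    return gcd(b, a % b)


print(gcd(36, 24))  # prints 12
```

The recursion terminates because the second argument strictly decreases toward zero; Euclid's algorithm needs only logarithmically many steps, so the depth of recursion stays small.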

Dataflow languages model computation as the flow of information (tokens) among primitive functional nodes. They provide an inherently parallel model: nodes are triggered by the arrival of input tokens, and can operate concurrently. Id and Val are examples of dataflow languages. Sisal, a descendant of Val, is more often described as a functional language.
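The firing rule can be illustrated with a toy simulation. The sketch below is a hypothetical Python model, not Id or Val: the `Node` class, its `connect` method, and the `run` driver are names introduced here for illustration. Each node holds one token slot per input port and fires as soon as every slot is full, consuming its input tokens and sending its result as a new token to its downstream ports. A real dataflow machine could fire all ready nodes in parallel; for simplicity this sketch processes token arrivals one at a time from a queue.

```python
from __future__ import annotations

from collections import deque
from typing import Callable, Optional


class Node:
    """A primitive node that fires once every input port holds a token."""

    def __init__(self, name: str, op: Callable[..., int], n_inputs: int) -> None:
        self.name = name
        self.op = op
        self.slots: list[Optional[int]] = [None] * n_inputs  # one slot per input port
        self.targets: list[tuple[Node, int]] = []            # downstream (node, port) pairs

    def connect(self, target: Node, port: int) -> None:
        """Route this node's output tokens to input `port` of `target`."""
        self.targets.append((target, port))

    def ready(self) -> bool:
        return all(token is not None for token in self.slots)


def run(initial: list[tuple[Node, int, int]]) -> dict[str, int]:
    """Deliver the initial (node, port, value) tokens and fire nodes as inputs arrive.

    Returns the output of every node that has no downstream targets.
    """
    pending = deque(initial)                  # tokens in flight
    results: dict[str, int] = {}
    while pending:
        node, port, value = pending.popleft()
        node.slots[port] = value              # token arrives at its port
        if node.ready():
            args = node.slots
            node.slots = [None] * len(args)   # firing consumes the input tokens
            out = node.op(*args)
            if not node.targets:
                results[node.name] = out      # a sink: record the final value
            for target, target_port in node.targets:
                pending.append((target, target_port, out))
    return results


# Compute (7 + 3) * (7 - 3) as a three-node dataflow graph.
add = Node("add", lambda x, y: x + y, 2)
sub = Node("sub", lambda x, y: x - y, 2)
mul = Node("mul", lambda x, y: x * y, 2)
add.connect(mul, 0)
sub.connect(mul, 1)
print(run([(add, 0, 7), (add, 1, 3), (sub, 0, 7), (sub, 1, 3)]))  # prints {'mul': 40}
```

Nothing in the graph orders `add` before `sub`: the two nodes depend on no common result, so either could fire first, while `mul` is triggered only when both of its operand tokens have arrived.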

Logic- or constraint-based languages take their inspiration from predicate logic. They model computation as an attempt to find values that satisfy certain specified relationships, using goal-directed search through a list of logical rules. Prolog is the best-known logic language. The term is also sometimes applied to the SQL database language, the XSLT scripting language, and programmable aspects of spreadsheets such as Excel and its predecessors.
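As a rough illustration of goal-directed search, the following Python sketch (not Prolog) encodes a hypothetical family database and the rule `grandparent(X, Z) :- parent(X, Y), parent(Y, Z)`. The names `PARENT`, `parent`, and `grandparent` are ours, and `None` stands in for an unbound logic variable. Each predicate is a generator that yields every solution consistent with its arguments, so nested loops over generators give depth-first search with backtracking. A real Prolog system does this in general, by unification over arbitrary rules; here the single rule is hard-coded.

```python
from typing import Iterator, Optional, Tuple

# Hypothetical facts: ("ann", "bob") means parent(ann, bob).
PARENT = [("ann", "bob"), ("bob", "cal"), ("bob", "dee"), ("cal", "eve")]


def parent(p: Optional[str], c: Optional[str]) -> Iterator[Tuple[str, str]]:
    """Yield every parent(P, C) fact that matches the bound (non-None) arguments."""
    for fact_p, fact_c in PARENT:
        if (p is None or p == fact_p) and (c is None or c == fact_c):
            yield fact_p, fact_c


def grandparent(x: Optional[str], z: Optional[str]) -> Iterator[Tuple[str, str]]:
    """grandparent(X, Z) :- parent(X, Y), parent(Y, Z).

    Solve the subgoals left to right: for each way of satisfying the first,
    search for ways to satisfy the second. When the inner generator is
    exhausted, control backtracks to the next solution of the outer one.
    """
    for gx, y in parent(x, None):       # Y starts out unbound
        for _, gz in parent(y, z):      # Y is now bound by the first subgoal
            yield gx, gz


print(list(grandparent("ann", None)))  # prints [('ann', 'cal'), ('ann', 'dee')]
print(list(grandparent(None, "eve")))  # prints [('bob', 'eve')]
```

The same rule answers both questions ("whose grandparent is ann?" and "who is a grandparent of eve?"), which reflects the logic-language view of a program as a set of relationships rather than a fixed sequence of steps.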

The von Neumann languages are the most familiar and successful. They include Fortran, Ada 83, C, and all of the others in which the basic means of computation is the modification of variables.⁷ Whereas functional languages are based on expressions that have values, von Neumann languages are based on statements (assignments in particular) that influence subsequent computation via the side effect of changing the value of memory.

⁷ John von Neumann (1903–1957) was a mathematician and computer pioneer who helped to develop the concept of stored program computing, which underlies most computer hardware. In a stored program computer, both programs and data are represented as bits in memory, which the processor repeatedly fetches, interprets, and updates.
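For contrast with a recursive, expression-based definition, here is Euclid's greatest-common-divisor algorithm written in von Neumann style. It is an illustrative sketch of our own in Python, which Figure 1.1 places among the scripting (and hence von Neumann) languages; the name `gcd` is ours. Rather than defining the answer as an expression, the loop repeatedly overwrites the variables `a` and `b`, and the result is whatever `a` holds when the loop ends.

```python
def gcd(a: int, b: int) -> int:
    """Greatest common divisor of two non-negative integers (not both zero).

    Von Neumann style: the loop repeatedly assigns new values to a and b,
    and the answer is whatever a holds when the loop ends.
    """
    while b != 0:
        a, b = b, a % b  # side effect: overwrite both variables in memory
    return a


print(gcd(36, 24))  # prints 12
```

Each iteration's effect is visible only through the changed values of `a` and `b`, which is exactly the "side effect of changing the value of memory" that drives computation in this family.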

Scripting languages are a subset of the von Neumann languages. They are distinguished by their emphasis on “gluing together” components that were originally developed as independent programs. Several scripting languages were originally developed for specific purposes: csh and bash, for example, are the input languages of job control (shell) programs; Awk was intended