Signals and Communication Technology

Aparna Vyas

Soohwan Yu

Joonki Paik

Multiscale Transforms with Application to Image Processing


More information about this series at http://www.springer.com/series/4748


Aparna Vyas

Image Processing and Intelligent Systems

Laboratory, Graduate School of Advanced

Imaging Science, Multimedia and Film

Chung-Ang University

Seoul

South Korea

Joonki Paik

Image Processing and Intelligent Systems

Laboratory, Graduate School of Advanced

Imaging Science, Multimedia and Film

Chung-Ang University

Seoul

South Korea

Soohwan Yu

Image Processing and Intelligent Systems

Laboratory, Graduate School of Advanced

Imaging Science, Multimedia and Film

Chung-Ang University

Seoul

South Korea

ISSN 1860-4862

ISSN 1860-4870 (electronic)

Signals and Communication Technology

ISBN 978-981-10-7271-0

ISBN 978-981-10-7272-7 (eBook)

https://doi.org/10.1007/978-981-10-7272-7

Library of Congress Control Number: 2017959155

© Springer Nature Singapore Pte Ltd. 2018

This work is subject to copyright. All rights are reserved by the Publisher, whether the whole or part

of the material is concerned, specifically the rights of translation, reprinting, reuse of illustrations,

recitation, broadcasting, reproduction on microfilms or in any other physical way, and transmission

or information storage and retrieval, electronic adaptation, computer software, or by similar or dissimilar

methodology now known or hereafter developed.

The use of general descriptive names, registered names, trademarks, service marks, etc. in this

publication does not imply, even in the absence of a specific statement, that such names are exempt from

the relevant protective laws and regulations and therefore free for general use.

The publisher, the authors and the editors are safe to assume that the advice and information in this

book are believed to be true and accurate at the date of publication. Neither the publisher nor the

authors or the editors give a warranty, express or implied, with respect to the material contained herein or

for any errors or omissions that may have been made. The publisher remains neutral with regard to

jurisdictional claims in published maps and institutional affiliations.

Printed on acid-free paper

This Springer imprint is published by Springer Nature

The registered company is Springer Nature Singapore Pte Ltd.

The registered company address is: 152 Beach Road, #21-01/04 Gateway East, Singapore 189721, Singapore

Preface

Digital image processing is a popular, rapidly growing area of electrical and

computer engineering. Digital image processing has enabled various intelligent

applications such as face recognition, signature recognition, iris recognition,

forensics, automobile detection, and military vision applications. Its growth is

leveraged by technological innovations in the fields of computer processing, digital

imaging, and mass storage devices. Traditional analog imaging applications are

now switching to digital systems to utilize their usability and affordability.

Important examples include photography, medicine, video production, remote

sensing, and security monitoring. These sources produce a huge volume of digital

image data every day. Theoretically, image processing can be considered as the

processing of a two-dimensional image using a digital computer. The outcome of

image processing could be an image, a set of features, or characteristics related to

the image. Most image processing methods treat an image as a two-dimensional

signal and implement standard signal processing techniques.

Many image processing techniques were once of only academic interest because
of their computational complexity. However, recent advances in processing and
memory technology have made image processing a vital and cost-effective technology in
a host of applications. Multi-scale image transformations, such as the Fourier transform,
wavelet transform, complex wavelet transform, quaternion wavelet transform,
ridgelet transform, contourlet transform, curvelet transform, and shearlet transform,
play a crucial role in image compression, image denoising, image
restoration, image enhancement, and super-resolution. The Fourier transform is a
powerful tool that has been available for signal and image analysis for many years.
However, Fourier analysis cannot offer high frequency resolution and high time
resolution at the same time. To overcome this problem, the windowed Fourier
transform, or short-time Fourier transform, was introduced. Although the short-time
Fourier transform provides time information, it does not allow a complete
multiresolution analysis. Wavelets are a solution to the multiresolution problem.
A wavelet has the important property of not having a fixed-width sampling window.
The wavelet transform can be classified into (i) the continuous wavelet transform
and (ii) the discrete wavelet transform. The


discrete wavelet transform (DWT) algorithms have a firm position in processing of

images in many areas of research and industry.

The main focus of classical wavelets includes compression and efficient representation. Important features which play a role in analysis of functions in two

variables are dilation, translation, spatial and frequency localization, and singularity

orientation. Singularities of functions in more than one variable vary in dimensionality. Important singularities in one dimension are simply points. In two

dimensions, zero- and one-dimensional singularities are important. A smooth singularity in two dimensions may be a one-dimensional smooth manifold. Smooth

singularities in two-dimensional images often occur as boundaries of physical

objects. Efficient representation in two dimensions is a hard problem. To overcome

this problem, new multi-scale transformations such as ridgelet transform, contourlet

transform, curvelet transform, and shearlet transform were introduced. Recently,

these multi-scale transforms have become increasingly important in image

processing.

In this book, we provide a complete introduction to multi-scale image
transformations, followed by their applications to various image processing
algorithms including image denoising, image restoration, image enhancement, and
super-resolution. The book is divided into three parts. The first part, in
Chaps. 1 and 2, gives readers a basic introduction to image processing.
The second part starts with the Fourier transform, followed by the wavelet transform and
new multi-scale constructions. The third part deals with applications of the
multi-scale transforms in image processing.

The chapters of the present book consist of both tutorial and advanced theory.

Therefore, the book is intended to be a reference for graduate students and

researchers to obtain state-of-the-art knowledge on multi-scale image processing

applications. The technique of solving problems in the transform domain is common in applied mathematics as used in research and industry, but we do not devote

as much time to it as we should in the undergraduate curriculum. Also, the book is

intended to be used as a reference manual for scientists who are engaged in image

processing research, developers of image processing hardware and software systems, and practicing engineers and scientists who use image processing as a tool in

their applications.

The appendices summarize the mathematical background most frequently used in the book.

Seoul, South Korea

Aparna Vyas

Soohwan Yu

Joonki Paik

Contents

Part I

Introduction to Image Processing

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

3

3

3

4

10

2 Fourier Analysis and Fourier Transform . . . . . . . . . . . . . .

2.1 Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

2.2 Fourier Series . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

2.2.1 Periodic Functions . . . . . . . . . . . . . . . . . . . . .

2.2.2 Frequency and Amplitude . . . . . . . . . . . . . . . .

2.2.3 Phase . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

2.2.4 Fourier Series of Periodic Functions . . . . . . . .

2.2.5 Complex Form of Fourier Series . . . . . . . . . . .

2.3 Fourier Transform . . . . . . . . . . . . . . . . . . . . . . . . . . . .

2.3.1 2D-Fourier Transform . . . . . . . . . . . . . . . . . . .

2.3.2 Properties of Fourier Transform . . . . . . . . . . .

2.4 Discrete Fourier Transform . . . . . . . . . . . . . . . . . . . . .

2.4.1 1D-Discrete Fourier Transform . . . . . . . . . . . .

2.4.2 Inverse 1D-Discrete Fourier Transform . . . . . .

2.4.3 2D-Discrete Fourier Transform and 2D-Inverse

Discrete Fourier Transform . . . . . . . . . . . . . . .

2.4.4 Properties of 2D-Discrete Fourier Transform . .

2.5 Fast Fourier Transform . . . . . . . . . . . . . . . . . . . . . . . .

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

15

15

16

16

16

18

19

20

21

24

24

26

27

30

.......

.......

.......

31

32

34

1 Fundamentals of Digital Image Processing

1.1 Image Acquisition of Digital Camera .

1.1.1 Introduction . . . . . . . . . . . . .

1.2 Sampling . . . . . . . . . . . . . . . . . . . . .

References . . . . . . . . . . . . . . . . . . . . . . . . .

Part II

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

Multiscale Transform

vii

viii

Contents

2.6

The Discrete Cosine Transform . . . . . . . . . . . . . . . .

2.6.1 1D-Discrete Cosine Transform . . . . . . . . . .

2.6.2 2D-Discrete Cosine Transform . . . . . . . . . .

2.7 Heisenberg Uncertainty Principle . . . . . . . . . . . . . . .

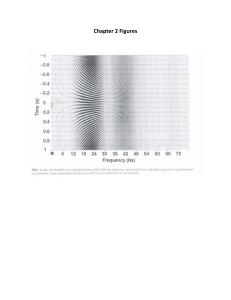

2.8 Windowed Fourier Transform or Short-Time Fourier

Transform . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

2.8.1 1D and 2D Short-Time Fourier Transform . .

2.8.2 Drawback of Short-Time Fourier Transform

2.9 Other Spectral Transforms . . . . . . . . . . . . . . . . . . . .

References . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

39

39

40

41

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

41

41

42

42

43

3 Wavelets and Wavelet Transform . . . . . . . . . . . . . . . . . . . . .

3.1 Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

3.2 Wavelets . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

3.3 Multiresolution Analysis . . . . . . . . . . . . . . . . . . . . . . . .

3.4 Wavelet Transform . . . . . . . . . . . . . . . . . . . . . . . . . . . .

3.4.1 The Wavelet Series Expansions . . . . . . . . . . . . .

3.4.2 Discrete Wavelet Transform . . . . . . . . . . . . . . .

3.4.3 Motivation: From MRA to Discrete Wavelet

Transform . . . . . . . . . . . . . . . . . . . . . . . . . . . .

3.4.4 The Quadrature Mirror Filter Conditions . . . . . .

3.5 The Fast Wavelet Transform . . . . . . . . . . . . . . . . . . . . .

3.6 Why Use Wavelet Transforms . . . . . . . . . . . . . . . . . . . .

3.7 Two-Dimensional Wavelets . . . . . . . . . . . . . . . . . . . . . .

3.8 2D-discrete Wavelet Transform . . . . . . . . . . . . . . . . . . .

3.9 Continuous Wavelet Transform . . . . . . . . . . . . . . . . . . .

3.9.1 1D Continuous Wavelet Transform . . . . . . . . . .

3.9.2 2D Continuous Wavelet Transform . . . . . . . . . .

3.10 Undecimated Wavelet Transform or Stationary Wavelet

Transform . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

3.11 Biorthogonal Wavelet Transform . . . . . . . . . . . . . . . . . .

3.11.1 Linear Independence and Biorthogonality . . . . .

3.11.2 Dual MRA . . . . . . . . . . . . . . . . . . . . . . . . . . .

3.11.3 Discrete Transform for Biorthogonal Wavelets . .

3.12 Scarcity of Wavelet Transform . . . . . . . . . . . . . . . . . . .

3.13 Complex Wavelet Transform . . . . . . . . . . . . . . . . . . . . .

3.14 Dual-Tree Complex Wavelet Transform . . . . . . . . . . . . .

3.15 Quaternion Wavelet and Quaternion Wavelet Transform .

3.15.1 2D Hilbert Transform . . . . . . . . . . . . . . . . . . . .

3.15.2 Quaternion Algebra . . . . . . . . . . . . . . . . . . . . .

3.15.3 Quaternion Multiresolution Analysis . . . . . . . . .

References . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

45

45

46

48

53

53

54

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

55

57

62

65

66

67

69

69

69

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

70

70

70

72

73

76

78

79

83

84

85

89

90

Contents

ix

4 New Multiscale Constructions . . . . . . . . . . . . . . . . . . . . . . . . . . .

4.1 Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

4.2 Ridgelet Transform . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

4.2.1 The Continuous Ridgelet Transform . . . . . . . . . . . .

4.2.2 Discrete Ridgelet Transform . . . . . . . . . . . . . . . . . .

4.2.3 The Orthonormal Finite Ridgelet Transform . . . . . . .

4.2.4 The Fast Slant Stack Ridgelet Transform . . . . . . . . .

4.2.5 Local Ridgelet Transform . . . . . . . . . . . . . . . . . . . .

4.2.6 Sparse Representation by Ridgelets . . . . . . . . . . . . .

4.3 Curvelets . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

4.3.1 The First Generation Curvelet Transform . . . . . . . . .

4.3.2 Sparse Representation by First Generation Curvelets

4.3.3 The Second-Generation Curvelet Transform . . . . . . .

4.3.4 Sparse Representation by Second Generation

Curvelets . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

4.4 Contourlet . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

4.5 Contourlet Transform . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

4.5.1 Multiscale Decomposition . . . . . . . . . . . . . . . . . . . .

4.5.2 Directional Decomposition . . . . . . . . . . . . . . . . . . .

4.5.3 The Discrete Contourlet Transform . . . . . . . . . . . . .

4.6 Shearlet . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

4.7 Shearlet Transform . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

4.7.1 Continuous Shearlet Transform . . . . . . . . . . . . . . . .

4.7.2 Discrete Shearlet Transform . . . . . . . . . . . . . . . . . .

4.7.3 Cone-Adapted Continuous Shearlet Transform . . . . .

4.7.4 Cone-Adapted Discrete Shearlet Transform . . . . . . .

4.7.5 Compactly Supported Shearlets . . . . . . . . . . . . . . . .

4.7.6 Sparse Representation by Shearlets . . . . . . . . . . . . .

References . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

Part III

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

93

93

94

94

98

100

100

101

101

102

102

103

104

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

105

106

107

108

109

110

112

115

115

116

118

121

123

125

126

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

133

133

134

136

136

137

137

137

137

138

138

Application of Multiscale Transforms to Image Processing

5 Image Restoration . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

5.1 Model of Image Degradation and Restoration Process .

5.2 Image Quality Assessments Metrics . . . . . . . . . . . . . .

5.3 Image Denoising . . . . . . . . . . . . . . . . . . . . . . . . . . . .

5.4 Noise Models . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

5.4.1 Additive Noise Model . . . . . . . . . . . . . . . . .

5.4.2 Multiplicative Noise Model . . . . . . . . . . . . . .

5.5 Types of Noise . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

5.5.1 Amplifier (Gaussian) Noise . . . . . . . . . . . . . .

5.5.2 Rayleigh Noise . . . . . . . . . . . . . . . . . . . . . .

5.5.3 Uniform Noise . . . . . . . . . . . . . . . . . . . . . . .

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

x

Contents

5.5.4 Impulsive (Salt and Pepper) Noise . . . . . . . . . . .

5.5.5 Exponential Noise . . . . . . . . . . . . . . . . . . . . . . .

5.5.6 Speckle Noise . . . . . . . . . . . . . . . . . . . . . . . . . .

5.6 Image Deblurring . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

5.6.1 Gaussian Blur . . . . . . . . . . . . . . . . . . . . . . . . . .

5.6.2 Motion Blur . . . . . . . . . . . . . . . . . . . . . . . . . . . .

5.6.3 Rectangular Blur . . . . . . . . . . . . . . . . . . . . . . . .

5.6.4 Defocus Blur . . . . . . . . . . . . . . . . . . . . . . . . . . .

5.7 Superresolution . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

5.8 Classification of Image Restoration Algorithms . . . . . . . .

5.8.1 Spatial Filtering . . . . . . . . . . . . . . . . . . . . . . . . .

5.8.2 Frequency Domain Filtering . . . . . . . . . . . . . . . .

5.8.3 Direct Inverse Filtering . . . . . . . . . . . . . . . . . . . .

5.8.4 Constraint Least-Square Filter . . . . . . . . . . . . . . .

5.8.5 IBD (Iterative Blind Deconvolution) . . . . . . . . . .

5.8.6 NAS-RIF (Nonnegative and Support Constraints

Recursive Inverse Filtering) . . . . . . . . . . . . . . . .

5.8.7 Superresolution Restoration Algorithm Based on

Gradient Adaptive Interpolation . . . . . . . . . . . . .

5.8.8 Deconvolution Using a Sparse Prior . . . . . . . . . .

5.8.9 Block-Matching . . . . . . . . . . . . . . . . . . . . . . . . .

5.8.10 LPA-ICI Algorithm . . . . . . . . . . . . . . . . . . . . . .

5.8.11 Deconvolution Using Regularized Filter (DRF) . .

5.8.12 Lucy-Richardson Algorithm . . . . . . . . . . . . . . . .

5.8.13 Neural Network Approach . . . . . . . . . . . . . . . . .

5.9 Application of Multiscale Transform in Image Restoration

5.9.1 Image Restoration Using Wavelet Transform . . . .

5.9.2 Image Restoration Using Complex Wavelet

Transform . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

5.9.3 Image Restoration Using Quaternion Wavelet

Transform . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

5.9.4 Image Restoration Using Ridgelet Transform . . . .

5.9.5 Image Restoration Using Curvelet Transform . . . .

5.9.6 Image Restoration Using Contourlet Transform . .

5.9.7 Image Restoration Using Shearlet Transform . . . .

References . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

6 Image Enhancement . . . . . . . . . . . . . . . . . . . . . . . . . . .

6.1 Overview . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

6.2 Spatial Domain Image Enhancement Techniques . .

6.2.1 Gray Level Transformation . . . . . . . . . . . .

6.2.2 Piecewise-Linear Transformation Functions

6.2.3 Histogram Processing . . . . . . . . . . . . . . . .

6.2.4 Spatial Filtering . . . . . . . . . . . . . . . . . . . .

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

139

139

139

140

141

141

141

142

142

142

143

146

151

151

152

. . . . . 152

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

152

153

153

153

153

154

154

154

155

. . . . . 169

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

172

174

177

181

186

189

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

199

199

200

201

202

203

204

Contents

6.3

Frequency Domain Image Enhancement Techniques . . . . .

6.3.1 Smoothing Filters . . . . . . . . . . . . . . . . . . . . . . . .

6.3.2 Sharpening Filters . . . . . . . . . . . . . . . . . . . . . . .

6.3.3 Homomorphic Filtering . . . . . . . . . . . . . . . . . . .

6.4 Colour Image Enhancement . . . . . . . . . . . . . . . . . . . . . . .

6.5 Application of Multiscale Transforms in Image

Enhancement . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

6.5.1 Image Enhancement Using Fourier Transform . . .

6.5.2 Image Enhancement Using Wavelet Transform . .

6.5.3 Image Enhancement Using Complex Wavelet

Transform . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

6.5.4 Image Enhancement Using Curvelet Transform . .

6.5.5 Image Enhancement Using Contourlet Transform .

6.5.6 Image Enhancement Using Shearlet Transform . .

References . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . .

xi

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

205

205

207

208

209

. . . . . 210

. . . . . 212

. . . . . 214

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

.

219

222

223

225

228

Appendix A: Real and Complex Number System . . . . . . . . . . . . . . . . . . . 233

Appendix B: Vector Space . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 237

Appendix C: Linear Transformation, Matrices . . . . . . . . . . . . . . . . . . . . 239

Appendix D: Inner Product Space and Orthonormal Basis . . . . . . . . . . . 241

Appendix E: Functions and Convergence . . . . . . . . . . . . . . . . . . . . . . . . . 245

Index . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . 251

About the Authors

Aparna Vyas was born in Allahabad, India, in 1983. She received her B.Sc. in

Science and M.Sc. in Mathematics from the University of Allahabad, Allahabad,

India, in 2004 and 2006, respectively. She received her Ph.D. degree in

Mathematics from the University of Allahabad, Allahabad, India, in 2010. She was

Assistant Professor, Department of Mathematics, School of Basic Sciences,

SHIATS, Allahabad, India, from 2006 to 2013. In 2014, she joined Manav Rachna

University, Faridabad, India, as Assistant Professor. She was a postdoctoral fellow

at Soongsil University from August 2016 to September 2016. Currently, she is a

postdoctoral fellow in Chung-Ang University under the BK21 Plus Project. She has

more than 10 years of teaching and research experience. She is also a life member

of the Indian Mathematical Society and Ramanujan Mathematical Society. Her

research interests include wavelet analysis and image processing.

Soohwan Yu was born in Incheon, Korea, in 1988. He received his B.S. in

Information and Communication Engineering from Suwon University, Korea, in

2013. He received his M.S. in Image Engineering from Chung-Ang University,

Korea, in 2016, where he is currently pursuing his Ph.D. in Image Engineering. His

research interests include image enhancement, super-resolution, and image

restoration.

Joonki Paik was born in Seoul, Korea, in 1960. He received his B.S. in Control

and Instrumentation Engineering from Seoul National University in 1984. He

received his M.S. and Ph.D. degrees in Electrical Engineering and in Computer

Science from Northwestern University in 1987 and in 1990, respectively. From

1990 to 1993, he worked at Samsung Electronics, where he designed image stabilization chipsets for consumer camcorders. Since 1993, he has been a faculty

member at Chung-Ang University, Seoul, South Korea. Currently, he is Professor

in the Graduate School of Advanced Imaging Science, Multimedia and Film. From

1999 to 2002, he was Visiting Professor in the Department of Electrical and

Computer Engineering at the University of Tennessee, Knoxville. Dr. Paik was the

recipient of the Chester-Sall Award from the IEEE Consumer Electronics Society,

the Academic Award from the Institute of Electronic Engineers of Korea, and the


Best Research Professor Award from Chung-Ang University. He served the

Consumer Electronics Society of IEEE as a member of the editorial board. Since

2005, he has been the head of the National Research Laboratory in the field of

image processing and intelligent systems. In 2008, he worked as a full-time technical consultant for the System LSI division at Samsung Electronics, where he

developed various computational photographic techniques including an extended

depth-of-field (EDoF) system. From 2005 to 2007, he served as Dean of the

Graduate School of Advanced Imaging Science, Multimedia and Film. From 2005

to 2007, he was Director of the Seoul Future Contents Convergence (SFCC) Cluster

established by the Seoul Research and Business Development (R&BD) Program.

Dr. Paik is currently serving as a member of the Presidential Advisory Board for

Scientific/Technical Policy for the Korean government and as a technical consultant

for the Korean Supreme Prosecutors Office for computational forensics.

Part I

Introduction to Image Processing

Chapter 1

Fundamentals of Digital Image Processing

1.1 Image Acquisition of Digital Camera

1.1.1 Introduction

The digital image was first used in the early twentieth century, when pictures
were transmitted over a submarine cable system [3]. Later, advances in
computational hardware and processing units led to the development of modern
digital image processing techniques. Specifically, digital image processing
started in the application field of remote sensing. In 1964, the Jet Propulsion
Laboratory applied digital image processing techniques to improve the visual
quality of the digital images transmitted by Ranger 7 [1, 3]. In medical
imaging, image processing techniques were applied in the early 1970s to develop
computerized tomography, which generates a two-dimensional image and a
three-dimensional volume of the inside of an object by passing X-rays through
it [3]. In addition to remote sensing and medical imaging, digital image
processing techniques have been widely used in various application fields such
as consumer electronics, defense, robot vision, surveillance systems, and
artificial intelligence systems.

In a modern image acquisition system, the image signal processing (ISP) chain
shown in Fig. 1.1 plays an important role in obtaining a high-quality digital
image. Light passes through the lens and the color filter array (CFA). An
imaging sensor without a CFA absorbs light in all spectral bands, so it cannot
capture color information. To generate a color image, a digital camera uses a
common CFA called the Bayer pattern, which consists of two green (G), one red
(R), and one blue (B) filters, because the human visual system is more
sensitive to light in the green wavelengths [2]. Advanced CFAs replace one of
the green filters with a white filter to increase the amount of light captured.
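The way a Bayer CFA reduces a color scene to one sample per pixel can be sketched in a few lines of Python. This is only an illustrative sketch: the RGGB ordering of the 2 × 2 cell and the function name `bayer_mosaic` are assumptions made here, since the text specifies only the filter counts (two G, one R, one B).

```python
import numpy as np

def bayer_mosaic(rgb):
    """Simulate a Bayer CFA (assumed RGGB layout): keep one color sample per pixel."""
    h, w, _ = rgb.shape
    raw = np.zeros((h, w), dtype=rgb.dtype)
    raw[0::2, 0::2] = rgb[0::2, 0::2, 0]  # R at even rows, even columns
    raw[0::2, 1::2] = rgb[0::2, 1::2, 1]  # G at even rows, odd columns
    raw[1::2, 0::2] = rgb[1::2, 0::2, 1]  # G at odd rows, even columns
    raw[1::2, 1::2] = rgb[1::2, 1::2, 2]  # B at odd rows, odd columns
    return raw

# Constant test image: R = 10, G = 20, B = 30 everywhere.
rgb = np.zeros((4, 4, 3), dtype=np.uint8)
rgb[..., 0], rgb[..., 1], rgb[..., 2] = 10, 20, 30
raw = bayer_mosaic(rgb)
print(raw)
```

Half of the entries of `raw` come from the green channel, which mirrors the two-green design of the pattern. Recovering a full color image from `raw` is the demosaicing step performed later in the chain.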

© Springer Nature Singapore Pte Ltd. 2018
A. Vyas et al., Multiscale Transforms with Application to Image Processing, Signals and Communication Technology, https://doi.org/10.1007/978-981-10-7272-7_1

Fig. 1.1 The block diagram of the image signal processing chain

In an imaging sensor such as a charge-coupled device (CCD) or a complementary
metal-oxide-semiconductor (CMOS) sensor, photons react with each semiconductor
element, and the resulting electrical charges are converted to an analog
electrical signal. The analog front end (AFE) module of the ISP chain performs
sampling and quantization to convert the analog signal to a digital signal.
The AFE module also controls the gain of the acquired signal to increase the
signal-to-noise ratio (SNR). Under low illumination, fewer photons reach the
imaging sensor, so the acquired digital image has low contrast and low SNR
[4, 5, 7, 8]. In addition, recent imaging devices increase the spatial
resolution of a digital image by drastically reducing the physical size of
each pixel. However, the reduced pixel size results in chrominance noise,
called cross-talk, caused by the interference of photons among neighboring
pixels, and it also reduces the SNR in each color channel.
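The gain control and quantization performed by the AFE can be illustrated with a minimal Python sketch. The assumptions here are ours, not the book's: the gain is applied before quantization, the quantizer is uniform over a normalized range [0, full_scale], and the names `quantize`, `bits`, and `full_scale` are hypothetical.

```python
import numpy as np

def quantize(signal, bits=8, full_scale=1.0):
    """Uniformly quantize values in [0, full_scale] to integer codes."""
    levels = 2 ** bits - 1
    clipped = np.clip(signal, 0.0, full_scale)  # values beyond full scale saturate
    return np.round(clipped / full_scale * levels).astype(np.int64)

analog = np.array([0.0, 0.1, 0.2, 0.5])  # illustrative sensor outputs (normalized)
gain = 2.0                               # assumed gain applied before quantization
codes = quantize(gain * analog, bits=8)
print(codes)
```

A larger gain uses more of the available code range for dark scenes, but it amplifies the noise together with the signal, which is why noise reduction appears later in the chain.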

The digital back end (DBE) module applies digital image processing techniques
to improve the quality of the input image. First, the DBE module performs
demosaicing, which recovers the color information from the raw image data by
using interpolation algorithms [6]. Next, image enhancement techniques are
applied to improve the dynamic range of the image. The auto white balance (AWB)
provides color constancy by correcting the chromaticity so that the image
appears as if it had been acquired under neutral lighting. Finally, noise
reduction should be performed to remove the noise amplified by the image
enhancement process. Additionally, since demosaicing and noise reduction may
degrade the image through blurring and jagging artifacts, image restoration
techniques known as super-resolution can be applied to obtain a high-resolution
image.
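As a rough illustration of chromaticity correction, the following Python sketch applies the gray-world rule, a simple classical white-balance heuristic. The text does not say which AWB method the ISP chain uses, so gray-world is a stand-in chosen here for brevity, and the function name `gray_world` is hypothetical.

```python
import numpy as np

def gray_world(rgb):
    """Scale each channel so that all channel means equal the global mean."""
    img = rgb.astype(np.float64)
    means = img.reshape(-1, 3).mean(axis=0)  # per-channel mean (must be nonzero)
    gains = means.mean() / means             # gain that neutralizes the color cast
    return img * gains

# Two-pixel image with a bluish cast: channel means are 0.3, 0.6, 0.9.
rgb = np.array([[[0.2, 0.4, 0.6], [0.4, 0.8, 1.2]]])
balanced = gray_world(rgb)
print(balanced.reshape(-1, 3).mean(axis=0))
```

After balancing, the three channel means coincide, which is the sense in which the scene is treated as if lit by neutral light. Practical AWB algorithms are considerably more elaborate.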

1.2 Sampling

Sampling and quantization are the major processes performed in the AFE module of
the ISP chain to convert a continuous image signal to a series of discrete signals. In this
section, we briefly describe the mathematical background of the sampling theorem.


Fig. 1.2 The sampling operation of a continuous function using an impulse train: a 1D continuous
function, b impulse train with period T, and c the sampled function obtained by multiplying a and b

Let x(t) be a one-dimensional (1D) continuous function. The sampling operation
can be regarded as the multiplication of x(t) and an impulse train p(t) with period T,
for k = ..., −2, −1, 0, 1, 2, ..., as

\[
x_s(t) = x(t)\,p(t) = x(t) \sum_{k=-\infty}^{\infty} \delta(t - kT).
\tag{1.2.1}
\]

Figure 1.2 shows the sampling operation of a 1D continuous function using an
impulse train. As shown in Fig. 1.2, the continuous function is sampled with interval
T, and the amplitude of each impulse follows that of the continuous function
x(t). The black dots represent the sampled values of x(t) at the locations kT.
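In discrete terms, sampling with an impulse train amounts to reading off the values x(kT). A minimal Python sketch follows. The 1 Hz cosine, the interval T = 0.25, and the helper name `sample` are illustrative choices, not taken from the text.

```python
import numpy as np

def sample(x, T, k_min, k_max):
    """Return the sample locations kT and the values x(kT) for k_min <= k <= k_max."""
    k = np.arange(k_min, k_max + 1)
    t = k * T
    return t, x(t)

def x(t):
    """Illustrative continuous function: a 1 Hz cosine."""
    return np.cos(2 * np.pi * 1.0 * t)

t, xs = sample(x, T=0.25, k_min=-2, k_max=2)
print(t)
print(xs)
```

The array `xs` plays the role of the black dots in Fig. 1.2: one value of x(t) for each impulse location kT.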

Since the impulse train is a periodic function with period T, it can be expressed
as the Fourier series expansion

\[
p(t) = \sum_{k=-\infty}^{\infty} a_k\, e^{j\frac{2\pi k}{T}t},
\tag{1.2.2}
\]

where

\[
a_k = \frac{1}{T}\int_{-T/2}^{T/2} p(t)\, e^{-j\frac{2\pi k}{T}t}\, dt
    = \frac{1}{T}\int_{-T/2}^{T/2} \delta(t)\, e^{-j\frac{2\pi k}{T}t}\, dt
    = \frac{1}{T}.
\tag{1.2.3}
\]

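The Fourier transform of the impulse train is defined by using (1.2.2) as

\[
\begin{aligned}
P(u) &= \int_{-\infty}^{\infty} p(t)\, e^{-j2\pi ut}\, dt \\
     &= \int_{-\infty}^{\infty} \frac{1}{T} \sum_{k=-\infty}^{\infty} e^{j\frac{2\pi k}{T}t}\, e^{-j2\pi ut}\, dt \\
     &= \frac{1}{T} \sum_{k=-\infty}^{\infty} \int_{-\infty}^{\infty} e^{-j2\pi\left(u-\frac{k}{T}\right)t}\, dt \\
     &= \frac{1}{T} \sum_{k=-\infty}^{\infty} \delta\!\left(u-\frac{k}{T}\right),
\end{aligned}
\tag{1.2.4}
\]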

where P(u) is the Fourier transform of p(t). The Fourier transform of an impulse train is again an impulse train, with period 1/T. In addition, since multiplication of two functions in the spatial domain corresponds to convolution of their Fourier transforms in the frequency domain, the Fourier transform of the sampled function in (1.2.1) can be expressed as

$$\begin{aligned}
X_s(u) &= X(u) * P(u) \\
&= \int_{-\infty}^{\infty} X(\tau)\,P(u-\tau)\,d\tau \\
&= \frac{1}{T}\sum_{k=-\infty}^{\infty} X\!\left(u-\frac{k}{T}\right),
\end{aligned} \tag{1.2.5}$$

where X_s(u) represents the Fourier transform of the sampled function x_s(t), and ∗ denotes the convolution operator. The Fourier transform of the sampled function x_s(t) is therefore a sequence of copies of the Fourier transform of x(t), located at k/T and repeating with period 1/T.

Figure 1.3 shows how a continuous function sampled at different sampling rates 1/T appears in the frequency domain. Figure 1.3a is the Fourier transform of a continuous function x(t) that is band-limited to −u_max ≤ u ≤ u_max. Figure 1.3b shows the Fourier transform of the function sampled at the higher sampling rate. Since the copies of the spectrum are completely separated, X(u) can be reconstructed from X_s(u) using a band-limiting filter with the passband of Fig. 1.3a.

Fig. 1.3 Comparative results using different sampling rates: a the Fourier transform of a band-limited continuous function, b the Fourier transform of the sampled function using 1/T > 2u_max, and c the Fourier transform of the sampled function using 1/T < 2u_max

On the other hand, if the band-limited signal is sampled at a lower sampling rate, the copies of the spectrum overlap, as shown in Fig. 1.3c. This implies that the sampling must be performed at a rate higher than twice the maximum frequency u_max in order to completely reconstruct X(u). This is called the Nyquist-Shannon sampling theorem:

$$\frac{1}{T} > 2u_{\max}. \tag{1.2.6}$$
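The effect of violating (1.2.6) can be sketched numerically. The following is a minimal illustration, assuming a single cosine of frequency 30 Hz as the band-limited signal; the function name `dominant_frequency` and the chosen rates are hypothetical and only serve the example. When the sampling rate is below twice the signal frequency, the strongest spectral peak appears at an aliased frequency.

```python
import numpy as np

def dominant_frequency(f0, fs, duration=1.0):
    """Sample cos(2*pi*f0*t) at rate fs and return the frequency (in Hz)
    of the largest peak in the magnitude spectrum of the samples."""
    n = int(round(fs * duration))          # number of samples
    t = np.arange(n) / fs                  # sampling instants kT with T = 1/fs
    x = np.cos(2 * np.pi * f0 * t)         # sampled signal
    spectrum = np.abs(np.fft.rfft(x))      # one-sided magnitude spectrum
    freqs = np.fft.rfftfreq(n, d=1.0 / fs) # frequency axis in Hz
    return freqs[np.argmax(spectrum)]

f0 = 30.0
good = dominant_frequency(f0, fs=100.0)  # 1/T = 100 > 2 * 30: peak stays at 30 Hz
bad = dominant_frequency(f0, fs=40.0)    # 1/T = 40 < 2 * 30: peak aliases to |30 - 40| = 10 Hz
print(good, bad)
```

With the higher rate the peak is found at the true frequency, whereas with the lower rate the overlapping spectral copies move it to 10 Hz, which is the overlap depicted in Fig. 1.3c.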

In the two-dimensional (2D) case, the sampling can be performed using a 2D impulse train. Given the 2D continuous function x(t, q) and the impulse train p(t, q), the 2D sampled function x_s(t, q) can be written as

$$x_s(t,q) = x(t,q)\,p(t,q) = x(t,q) \sum_{l=-\infty}^{\infty}\sum_{k=-\infty}^{\infty} \delta(t-kT)\,\delta(q-lQ). \tag{1.2.7}$$

Since the 2D impulse train is a periodic function, it can be expressed as a Fourier series expansion in the same way as the 1D impulse train in (1.2.2). The Fourier series expansion of the 2D impulse train is defined as

$$p(t,q) = \sum_{l=-\infty}^{\infty}\sum_{k=-\infty}^{\infty} b_{k,l}\, e^{j\frac{2\pi k}{T}t}\, e^{j\frac{2\pi l}{Q}q}, \tag{1.2.8}$$

where

$$\begin{aligned}
b_{k,l} &= \frac{1}{TQ}\int_{-Q/2}^{Q/2}\int_{-T/2}^{T/2} p(t,q)\, e^{-j\frac{2\pi k}{T}t}\, e^{-j\frac{2\pi l}{Q}q}\,dt\,dq \\
&= \frac{1}{TQ}\int_{-Q/2}^{Q/2}\int_{-T/2}^{T/2} \delta(t,q)\, e^{-j\frac{2\pi k}{T}t}\, e^{-j\frac{2\pi l}{Q}q}\,dt\,dq \\
&= \frac{1}{TQ}.
\end{aligned} \tag{1.2.9}$$

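The Fourier transform of p(t, q) is obtained as

$$\begin{aligned}
P(u,v) &= \int_{-\infty}^{\infty}\int_{-\infty}^{\infty} p(t,q)\, e^{-j2\pi ut}\, e^{-j2\pi vq}\,dt\,dq \\
&= \int_{-\infty}^{\infty}\int_{-\infty}^{\infty} \frac{1}{TQ}\sum_{l=-\infty}^{\infty}\sum_{k=-\infty}^{\infty} e^{j\frac{2\pi k}{T}t}\, e^{j\frac{2\pi l}{Q}q}\, e^{-j2\pi ut}\, e^{-j2\pi vq}\,dt\,dq \\
&= \frac{1}{TQ}\sum_{l=-\infty}^{\infty}\sum_{k=-\infty}^{\infty} \int_{-\infty}^{\infty}\int_{-\infty}^{\infty} e^{-j2\pi\left(u-\frac{k}{T}\right)t}\, e^{-j2\pi\left(v-\frac{l}{Q}\right)q}\,dt\,dq \\
&= \frac{1}{TQ}\sum_{l=-\infty}^{\infty}\sum_{k=-\infty}^{\infty} \delta\!\left(u-\frac{k}{T}\right)\delta\!\left(v-\frac{l}{Q}\right).
\end{aligned} \tag{1.2.10}$$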

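The Fourier transform of the 2D impulse train is a periodic impulse train with periods 1/T and 1/Q in the u and v directions, respectively. Let X_s(u, v) be the Fourier transform of the 2D sampled function x_s(t, q). Then X_s(u, v) can be obtained using the convolution theorem in the frequency domain as

$$\begin{aligned}
X_s(u,v) &= X(u,v) * P(u,v) \\
&= \int_{-\infty}^{\infty}\int_{-\infty}^{\infty} X(\tau_u,\tau_v)\,P(u-\tau_u, v-\tau_v)\,d\tau_u\,d\tau_v \\
&= \frac{1}{TQ}\int_{-\infty}^{\infty}\int_{-\infty}^{\infty} X(\tau_u,\tau_v) \sum_{l=-\infty}^{\infty}\sum_{k=-\infty}^{\infty} \delta\!\left(u-\tau_u-\frac{k}{T}\right)\delta\!\left(v-\tau_v-\frac{l}{Q}\right)d\tau_u\,d\tau_v \\
&= \frac{1}{TQ}\sum_{l=-\infty}^{\infty}\sum_{k=-\infty}^{\infty} \int_{-\infty}^{\infty}\int_{-\infty}^{\infty} X(\tau_u,\tau_v)\,\delta\!\left(u-\tau_u-\frac{k}{T}\right)\delta\!\left(v-\tau_v-\frac{l}{Q}\right)d\tau_u\,d\tau_v \\
&= \frac{1}{TQ}\sum_{l=-\infty}^{\infty}\sum_{k=-\infty}^{\infty} X\!\left(u-\frac{k}{T},\, v-\frac{l}{Q}\right).
\end{aligned} \tag{1.2.11}$$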


Fig. 1.4 The spectrum of the sampled function with periods 1/T and 1/Q in the frequency domain

In the same manner as in the 1D case, sampling in the spatial domain produces multiple copies of the frequency spectrum X(u, v), located at k/T and l/Q. Figure 1.4 shows the periodic frequency spectrum of the sampled function with periods 1/T and 1/Q in the frequency domain.

In order to reconstruct the original 2D continuous signal, the Nyquist-Shannon sampling rate should be satisfied in both directions, as

$$\frac{1}{T} > 2u_{\max}, \tag{1.2.12}$$

and

$$\frac{1}{Q} > 2v_{\max}, \tag{1.2.13}$$

where u_max and v_max respectively represent the maximum frequencies of the spectrum X(u, v) in the u and v directions. Figure 1.5 shows the spectrum of the sampled function when the sampling rate is lower than twice the maximum frequency along the u and v directions, respectively.

Fig. 1.5 The Fourier transform of the sampled function with a sampling rate lower than the Nyquist-Shannon sampling rate: a the spectrum of the sampled function using 1/T < 2u_max, and b the spectrum of the sampled function using 1/Q < 2v_max

References

1. Andrews, H.C., Tescher, A.G., Kruger, R.P.: Image processing by digital computer. IEEE Spectr.

9(7), 20–32 (1972)

2. Bayer, B.E.: Color imaging array. U.S. Patent No. 3,971,065 (1976)

3. Gonzalez, R.C., Woods, R.E.: Digital Image Processing, 3rd edn. Prentice Hall, New Jersey

(2006)

4. Ko, S., Yu, S., Kang, W., Park, C., Lee, S., Paik, J.: Artifact-free low-light video enhancement

using temporal similarity and guide map. IEEE Trans. Ind. Electron. 64(8), 6392–6401 (2017)


5. Ko, S, Yu, S., Kang, W., Park, S., Moon, B., Paik, J.: Variational framework for low-light image

enhancement using optimal transmission map and combined l1 and l2-minimization. Signal

Process.: Image Commun. 58, 99–110 (2017)

6. Malvar, H.S., He, L., Cutler, R.: High quality linear interpolation for demosaicing of Bayer-patterned color images. Proc IEEE Int. Conf. Acoust. Speech Signal Process. 34(11), 2274–2282

(2004)

7. Park, S., Yu, S., Moon, B., Ko, S., Paik, J.: Low-light image enhancement using variational

optimization-based retinex model. IEEE Trans. Consum. Electron. 63(2), 178–184 (2017)

8. Park, S., Moon, B., Ko, S., Yu, S., Paik, J.: Low-light image restoration using bright channel

prior-based variational retinex model. EURASIP J. Image Video Process. 2017(1), 1–11 (2017)

Part II

Multiscale Transform

Chapter 2

Fourier Analysis and Fourier Transform

2.1 Overview

The origins of Fourier analysis in science can be found in Ptolemy’s decomposing

celestial orbits into cycles and epicycles and Pythagoras’ decomposing music into

consonances. Its modern history began with the eighteenth century work of Bernoulli,

Euler, and Gauss on what later came to be known as Fourier series. J. Fourier in

1822 [Theorie analytique de la Chaleur] was the first to claim that arbitrary periodic

functions could be expanded in a trigonometric (later called a Fourier) series, a

claim that was eventually shown to be incorrect, although not too far from the truth.

It is an amusing historical sidelight that this work won a prize from the French

Academy, in spite of serious concerns expressed by the judges (Laplace, Lagrange,

and Legendre) regarding Fourier’s lack of rigor. Fourier was apparently a better

engineer than mathematician. Dirichlet later made rigorous the basic results for

Fourier series and gave precise conditions under which they applied. The rigorous

theoretical development of general Fourier transforms did not follow until about one

hundred years later with the development of the Lebesgue integral.

Fourier analysis is a prototype of beautiful mathematics with many-faceted applications not only in mathematics, but also in science and engineering. Since the work on heat flow of Jean Baptiste Joseph Fourier (March 21, 1768 to May 16, 1830) in the treatise entitled Theorie Analytique de la Chaleur, Fourier series and Fourier transforms have gone from triumph to triumph, permeating areas of mathematics such as partial differential equations, harmonic analysis, representation theory, number theory and geometry. Their societal impact can best be seen in spectroscopy, where atoms, molecules and hence matter can be identified by means of the frequency spectrum of the light that they emit. Equipped with the fast Fourier transform in computations and fueled by recent technological innovations in digital signals and images, Fourier analysis has stood out as one of the very top achievements of mankind, comparable with the calculus of Sir Isaac Newton.

© Springer Nature Singapore Pte Ltd. 2018

A. Vyas et al., Multiscale Transforms with Application to Image

Processing, Signals and Communication Technology,

https://doi.org/10.1007/978-981-10-7272-7_2


The Fourier transform is of fundamental importance to image processing. It allows

us to perform tasks which would be impossible to perform any other way; its efficiency allows us to perform other tasks more quickly. The Fourier Transform provides, among other things, a powerful alternative to linear spatial filtering; it is more

efficient to use the Fourier transform than a spatial filter for a large filter. The Fourier

Transform also allows us to isolate and process particular image frequencies, and so

perform low-pass and high-pass filtering with a great degree of precision.

2.2 Fourier Series

The concept of frequency and the decomposition of waveforms into elementary

“harmonic” functions first arose in the context of music and sound.

2.2.1 Periodic Functions

A periodic function is a function that repeats its values at regular intervals or periods. A function f is said to be periodic with period T (T ≠ 0) if f(x + T) = f(x) for all values of x in the domain. The most important examples are the trigonometric functions (i.e. sine and cosine), which repeat their values over intervals of length 2π.

The sine function f (x) = sin(x) has the value 0 at the origin and performs exactly

one full cycle between the origin and the point x = 2π. Hence f (x) = sin(x) is a

periodic function with period 2π, i.e.

sin(x) = sin(x + 2π) = sin(x + 4π) = · · · = sin(x + 2nπ),

(2.2.1)

for all n ∈ Z. The same is true for cosine function except its value is 1 at the origin

i.e. cos(0) = 1, see Fig. 2.1.

2.2.2 Frequency and Amplitude

The number of oscillations of sin(x) over the distance T = 2π is one, and thus the value of the angular frequency is ω = 2π/T = 1. If f(x) = sin(3x), we obtain a compressed sine wave that oscillates three times faster than the original function sin(x). The function sin(3x) performs three full cycles over a distance of 2π and thus has the angular frequency ω = 3 and the period T = 2π/3, see Fig. 2.2.

Fig. 2.1 Sine and Cosine Graph

Fig. 2.2 sin(x) and sin(3x) Graph

In general, the period T is related to the angular frequency ω by

$$T = \frac{2\pi}{\omega} \tag{2.2.2}$$

for ω > 0. The relationship between the angular frequency ω and the common frequency f is given by

$$f = \frac{1}{T} = \frac{\omega}{2\pi} \quad\text{or}\quad \omega = 2\pi f, \tag{2.2.3}$$

where f is measured in cycles per unit of length or time. A sine or cosine function oscillates between the peak values +1 and −1, and its amplitude is 1. Multiplying by a constant a ∈ R changes the peak values of the function to +a and −a and its amplitude to |a|. In general, the expressions

$$a \cdot \sin(\omega x) \quad\text{and}\quad a \cdot \cos(\omega x) \tag{2.2.4}$$

denote a sine and a cosine function with amplitude a and angular frequency ω, evaluated at position (or point in time) x.

2.2.3 Phase

Phase is the position of a point in time (an instant) on a waveform cycle. A complete

cycle is defined as the interval required for the waveform to return to its arbitrary

initial value. In sinusoidal functions or in waves “phase” has two different, but closely

related, meanings. One is the initial angle of a sinusoidal function at its origin and is

sometimes called phase offset or phase difference. Another usage is the fraction of

the wave cycle that has elapsed relative to the origin.

Shifting a sine function along the x-axis by distance ϕ,

sin(x) → sin(x − ϕ),

(2.2.5)

changes the phase of the sine wave, and ϕ denotes the phase angle of the resulting function, see Fig. 2.3. Since a cosine shifted by a quarter period is a sine, we have

$$\sin(\omega x) = \cos(\omega x - \pi/2), \tag{2.2.6}$$

i.e. cosine and sine functions are orthogonal in a sense, and we can use this fact to create new sinusoidal functions with arbitrary frequency, phase and amplitude. In particular, adding a cosine and a sine function with the identical frequency ω and arbitrary amplitudes A and B, respectively, creates another sinusoid,

$$A \cdot \cos(\omega x) + B \cdot \sin(\omega x) = C \cdot \cos(\omega x - \varphi), \tag{2.2.7}$$

where $C = \sqrt{A^2 + B^2}$ and $\varphi = \tan^{-1}(B/A)$.
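Identity (2.2.7) is easy to check numerically. The sketch below assumes illustrative values A = 3, B = 4 and ω = 2 (none of which come from the text) and uses `np.arctan2(B, A)` for the phase, which agrees with tan⁻¹(B/A) when A > 0 and also selects the correct quadrant when A ≤ 0.

```python
import numpy as np

A, B, omega = 3.0, 4.0, 2.0   # illustrative amplitudes and angular frequency

C = np.hypot(A, B)            # C = sqrt(A^2 + B^2)
phi = np.arctan2(B, A)        # phase angle; equals atan(B/A) for A > 0

x = np.linspace(0.0, 2.0 * np.pi, 1000)
lhs = A * np.cos(omega * x) + B * np.sin(omega * x)   # left side of (2.2.7)
rhs = C * np.cos(omega * x - phi)                     # right side of (2.2.7)

max_error = np.max(np.abs(lhs - rhs))
print(C, max_error)
```

The two curves agree to floating-point precision, which follows from expanding cos(ωx − ϕ) = cos(ωx)cos(ϕ) + sin(ωx)sin(ϕ) with C cos(ϕ) = A and C sin(ϕ) = B.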


Fig. 2.3 sin(x), sin(x − π/4) and sin(x − π) Graph

2.2.4 Fourier Series of Periodic Functions

As we have seen earlier, a sinusoidal function of arbitrary frequency, amplitude and phase can be described as the sum of suitably weighted cosine and sine functions. Is it possible to write non-sinusoidal functions as a sum of cosine and sine functions? It was Fourier [Jean Baptiste Joseph de Fourier (1768–1830)] who first extended this idea to arbitrary functions and showed that (almost) any periodic function f(x) with a fundamental frequency ω0 can be described as an infinite sum of harmonic sinusoids, i.e.

$$f(x) = \frac{A_0}{2} + \sum_{n=1}^{\infty} \left[A_n \cos(\omega_0 n x) + B_n \sin(\omega_0 n x)\right]. \tag{2.2.8}$$

This is called the Fourier series, and A_0, A_n and B_n are called the Fourier coefficients of the function f(x), where, for a function normalized to period 2π (so that ω0 = 1),

$$A_0 = \frac{1}{\pi}\int_{-\pi}^{\pi} f(x)\,dx, \tag{2.2.9}$$

$$A_n = \frac{1}{\pi}\int_{-\pi}^{\pi} f(x)\cos(\omega_0 n x)\,dx, \tag{2.2.10}$$

$$B_n = \frac{1}{\pi}\int_{-\pi}^{\pi} f(x)\sin(\omega_0 n x)\,dx. \tag{2.2.11}$$

A Fourier series is an expression of a periodic function f(x) in terms of an infinite sum of sines and cosines. Fourier series make use of the orthogonality relationships of the sine and cosine functions, since these functions form a complete orthogonal system over [−π, π] or any interval of length 2π. The computation and study of Fourier series is known as harmonic analysis and is extremely useful as a way to break up an arbitrary periodic function into a set of simple terms that can be plugged in, solved individually and then recombined to obtain the solution of the original problem, or an approximation to it, to whatever accuracy is desired or practical.

For a function with period 2π, the Fourier series is commonly written as

$$f(x) = \frac{A_0}{2} + \sum_{n=1}^{\infty} \left[A_n \cos(nx) + B_n \sin(nx)\right], \tag{2.2.12}$$

where

$$A_0 = \frac{1}{\pi}\int_{-\pi}^{\pi} f(x)\,dx, \tag{2.2.13}$$

$$A_n = \frac{1}{\pi}\int_{-\pi}^{\pi} f(x)\cos(nx)\,dx, \tag{2.2.14}$$

$$B_n = \frac{1}{\pi}\int_{-\pi}^{\pi} f(x)\sin(nx)\,dx. \tag{2.2.15}$$

The miracle of Fourier series is that as long as f(x) is continuous (or even piecewise-continuous, with some caveats discussed in the Stewart text), such a decomposition is always possible.
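As a concrete illustration of (2.2.12)–(2.2.15), the coefficients can be approximated by numerical integration. The sketch below is only one possible approach: it assumes a midpoint rule on [−π, π] and uses the square wave f(x) = sign(x) as a test function, for which the known coefficients are A_n = 0 and B_n = 4/(nπ) for odd n (0 for even n). The helper names `fourier_coefficients` and `partial_sum` are hypothetical.

```python
import numpy as np

def fourier_coefficients(f, n_max, samples=20000):
    """Approximate A_0, A_n, B_n of (2.2.13)-(2.2.15) with a midpoint rule."""
    dx = 2.0 * np.pi / samples
    x = -np.pi + (np.arange(samples) + 0.5) * dx   # midpoints of the cells
    fx = f(x)
    a0 = np.sum(fx) * dx / np.pi
    a = np.array([np.sum(fx * np.cos(n * x)) * dx / np.pi
                  for n in range(1, n_max + 1)])
    b = np.array([np.sum(fx * np.sin(n * x)) * dx / np.pi
                  for n in range(1, n_max + 1)])
    return a0, a, b

def partial_sum(x, a0, a, b):
    """Evaluate the truncated series (2.2.12) at a single point x."""
    n = np.arange(1, len(a) + 1)
    return a0 / 2.0 + np.sum(a * np.cos(n * x) + b * np.sin(n * x))

# Square wave on [-pi, pi]: piecewise continuous with a jump at x = 0.
a0, a, b = fourier_coefficients(np.sign, n_max=50)
value_at_quarter = partial_sum(np.pi / 2.0, a0, a, b)
print(b[0], 4.0 / np.pi, value_at_quarter)
```

The first sine coefficient is close to 4/π, and the 50-term partial sum evaluated at x = π/2 is close to the true value f(π/2) = 1; convergence is slower near the jump at x = 0.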

2.2.5 Complex Form of Fourier Series

The Fourier series representation for a periodic function f can be expressed more simply using complex exponentials. Moreover, because of the unique properties of the exponential function, Fourier series are often easier to manipulate in complex form. The transition from the real form to the complex form of a Fourier series is made using the following identities, called Euler identities,

$$e^{i\theta} = \cos(\theta) + i\sin(\theta) \quad\text{and}\quad e^{-i\theta} = \cos(\theta) - i\sin(\theta). \tag{2.2.16}$$

By adding these identities and then dividing by 2, or subtracting them and then dividing by 2i, we have

$$\cos(\theta) = \frac{e^{i\theta} + e^{-i\theta}}{2} \quad\text{and}\quad \sin(\theta) = \frac{e^{i\theta} - e^{-i\theta}}{2i}. \tag{2.2.17}$$

The complex Fourier series is obtained from (2.2.12) by writing cos(nx) and sin(nx) in their complex exponential form and rearranging as follows:

$$\begin{aligned}
f(x) &= \frac{A_0}{2} + \sum_{n=1}^{\infty}\left[A_n\,\frac{e^{inx} + e^{-inx}}{2} + B_n\,\frac{e^{inx} - e^{-inx}}{2i}\right] \\
&= \frac{A_0}{2} + \sum_{n=1}^{\infty}\frac{A_n - iB_n}{2}\,e^{inx} + \sum_{m=-\infty}^{-1}\frac{A_{-m} + iB_{-m}}{2}\,e^{imx},
\end{aligned}$$

where we substituted m = −n in the last term on the last line. This equation clearly suggests the much simpler complex form of the Fourier series

$$f(x) = \sum_{n=-\infty}^{\infty} C_n\, e^{inx}, \tag{2.2.18}$$

with the coefficients given by

$$C_n = \frac{1}{2\pi}\int_{-\pi}^{\pi} f(x)\, e^{-inx}\,dx. \tag{2.2.19}$$

Note that the Fourier coefficients C_n are complex valued. For a real-valued function f(x), the following holds for the complex coefficients C_n:

$$C_{-n} = \overline{C_n}, \tag{2.2.20}$$

where $\overline{C_n}$ denotes the complex conjugate of C_n.

2.3 Fourier Transform

In the previous section we have seen how to expand a periodic function as a trigonometric series. This can be thought of as a decomposition of a periodic function in

terms of elementary modes, each of which has a definite frequency allowed by the

periodicity. This concept can be generalized to functions periodic on any interval.

If the function has period L, then the frequencies must be integer multiples of

the fundamental frequency k = 2π/L. The Fourier series of functions of arbitrary

periodicity is

$$f(x) = \frac{A_0}{2} + \sum_{n=1}^{\infty}\left[A_n \cos(2\pi nx/L) + B_n \sin(2\pi nx/L)\right], \tag{2.3.1}$$

where

$$A_0 = \frac{2}{L}\int_{-L/2}^{L/2} f(x)\,dx, \tag{2.3.2}$$

$$A_n = \frac{2}{L}\int_{-L/2}^{L/2} f(x)\cos(2\pi nx/L)\,dx, \tag{2.3.3}$$

$$B_n = \frac{2}{L}\int_{-L/2}^{L/2} f(x)\sin(2\pi nx/L)\,dx, \tag{2.3.4}$$

so that the case L = 2π reduces to (2.2.13)–(2.2.15), or in the exponential notation,

$$f(x) = \sum_{n=-\infty}^{\infty} C_n\, e^{i2\pi nx/L}, \tag{2.3.5}$$

where

$$C_n = \frac{1}{L}\int_{-L/2}^{L/2} f(x)\, e^{-i2\pi nx/L}\,dx. \tag{2.3.6}$$

The Fourier series is a powerful tool and forms the backbone of the Fourier transform. The Fourier transform can be viewed as an extension of the above Fourier series to non-periodic functions. A non-periodic function can be thought of as a periodic function in the limit L → ∞. Clearly, the larger L is, the less frequently the function repeats, until in the limit L → ∞ the function does not repeat at all. In the limit L → ∞ the allowed frequencies become a continuum and the Fourier sum goes over to a Fourier integral.

Consider a function f(x) defined on the real line. If f(x) were periodic with period L, then f(x) could be expanded by Eq. (2.3.5) as a Fourier series converging to it almost everywhere within each period [−L/2, L/2]. Even if f(x) is not periodic, we can still define a function

$$f_L(x) = \sum_{n=-\infty}^{\infty} C_n\, e^{i2\pi nx/L}, \tag{2.3.7}$$

with the same C_n as above. Consider the limit in which L becomes very large. Define

$$k_n = \frac{2n\pi}{L},$$

then

$$f_L(x) = \sum_{n=-\infty}^{\infty} C_n\, e^{ik_n x}. \tag{2.3.8}$$

It is clear that for very large L the sum contains a very large number of waves with wave-vector k_n and that each successive wave differs from the last by a tiny change in wave-vector (or wavelength),

$$\Delta k = k_{n+1} - k_n = \frac{2\pi}{L}.$$

In the limit L → ∞ the allowed k becomes a continuous variable, the discrete coefficients C_n become a continuous function of k, denoted by C(k), and the summation can be replaced by an integral, so that we have

$$f(x) = \frac{1}{2\pi}\int_{-\infty}^{\infty} C(k)\, e^{ikx}\,dk, \tag{2.3.9}$$

$$C(k) = \int_{-\infty}^{\infty} f(x)\, e^{-ikx}\,dx. \tag{2.3.10}$$

The functions f and C are called a Fourier transform pair: C is called the Fourier transform of f, and f is called the inverse Fourier transform of C.

This prompts us to define the 1D-Fourier transform of the function f(x) as

$$\hat{f}(k) = \int_{-\infty}^{\infty} f(x)\, e^{-ikx}\,dx, \tag{2.3.11}$$

provided that the integral exists. Not every function f(x) has a Fourier transform. A sufficient condition is that it be square-integrable, that is, that the following integral converges:

$$\|f\|^2 = \int_{-\infty}^{\infty} |f(x)|^2\,dx. \tag{2.3.12}$$

If, in addition to being square-integrable, the function is continuous, then one also has the inversion formula

$$f(x) = (\hat{f})^{\vee}(x) = \frac{1}{2\pi}\int_{-\infty}^{\infty} \hat{f}(k)\, e^{ikx}\,dk. \tag{2.3.13}$$


2.3.1 2D-Fourier Transform

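The two-dimensional (2D) Fourier transform of the function f(x, y) is defined as

$$\hat{f}(k,l) = \int_{-\infty}^{\infty}\int_{-\infty}^{\infty} f(x,y)\, e^{-i(kx+ly)}\,dx\,dy, \tag{2.3.14}$$

provided that the integral exists, and the two-dimensional (2D) inverse Fourier transform is defined by

$$f(x,y) = (\hat{f})^{\vee}(x,y) = \frac{1}{(2\pi)^2}\int_{-\infty}^{\infty}\int_{-\infty}^{\infty} \hat{f}(k,l)\, e^{i(kx+ly)}\,dk\,dl, \tag{2.3.15}$$

where the factor 1/(2π)² arises from applying the 1D normalization 1/(2π) of (2.3.13) once in each variable.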

2.3.2 Properties of Fourier Transform

Let $\hat{f}(k)$ and $\hat{g}(k)$ be the Fourier transforms of the functions f(t) and g(t), respectively. Then we have the following:

1. Linearity

$$\widehat{(af + bg)}(k) = a\hat{f}(k) + b\hat{g}(k),$$

where a and b are constants, i.e. if we add two functions then the Fourier transform of the resulting function is simply the sum of the individual Fourier transforms, and if we multiply a function by any constant then we must multiply the Fourier transform by the same constant.

2. Shifting There are two basic shift properties of the Fourier transform:

i. Time Shifting

$$\widehat{f(t \pm t_0)}(k) = \hat{f}(k)\, e^{\pm ikt_0}.$$

ii. Frequency Shifting

$$\widehat{f(t)\,e^{\pm ik_0 t}}(k) = \hat{f}(k \mp k_0).$$

Here t_0 and k_0 are constants, i.e. translating a function in one domain corresponds to a multiplication by a complex exponential function in the other domain.

3. Scaling

$$\widehat{f(at)}(k) = \frac{1}{|a|}\,\hat{f}\!\left(\frac{k}{a}\right),$$

where a ≠ 0 is a constant. When a signal is expanded in time, it is compressed in frequency, and vice versa, i.e. a signal cannot be simultaneously short in time and short in frequency.

4. Differentiation

i. Time differentiation property

$$\widehat{f'(t)}(k) = ik\,\hat{f}(k).$$

Differentiating a function is said to amplify the higher frequency components because of the additional multiplying factor k.

ii. Frequency differentiation property

$$\widehat{t f(t)}(k) = i\,\frac{d\hat{f}(k)}{dk}.$$

5. Conjugate Symmetry The Fourier transform is conjugate symmetric for time functions that are real-valued,

$$\hat{f}(-k) = \overline{\hat{f}(k)}.$$

From this it follows that the real part and the magnitude of the Fourier transform of real-valued time functions are even functions of frequency and that the imaginary part and phase are odd functions of frequency. By the property of conjugate symmetry, in displaying or specifying the Fourier transform of a real-valued time function it is necessary to display the transform only for positive values of k.

6. Reversal

$$\widehat{f(-x)}(k) = \hat{f}(-k), \quad\text{for } x, k \in \mathbb{R}.$$

7. Duality This property relates to the fact that the analysis equation and the synthesis equation look almost identical except for a factor of 1/(2π) and the difference of a minus sign in the exponential in the integral:

$$f(t) = \frac{1}{2\pi}\int_{-\infty}^{\infty} \hat{f}(k)\, e^{ikt}\,dk \iff \hat{f}(k) = \int_{-\infty}^{\infty} f(t)\, e^{-ikt}\,dt.$$

8. Convolution The convolution theorem states that convolution in the time domain corresponds to multiplication in the frequency domain and vice versa:

$$\widehat{(f * g)}(k) = \hat{f}(k)\,\hat{g}(k).$$

9. Parseval's Relation

$$\int_{-\infty}^{\infty} |f(t)|^2\,dt = \frac{1}{2\pi}\int_{-\infty}^{\infty} |\hat{f}(k)|^2\,dk.$$


2.4 Discrete Fourier Transform

We assume that vectors in $\mathbb{C}^N$, i.e., sequences of N complex numbers, are indexed from 0 to N − 1 instead of {1, 2, 3, ..., N}. We regard x as a function defined on the finite set

$$\mathbb{Z}_N = \{0, 1, 2, \ldots, N-1\}, \tag{2.4.1}$$

and we identify x with the column vector

$$x = \begin{bmatrix} x_0 \\ x_1 \\ \vdots \\ x_{N-1} \end{bmatrix}.$$

This allows us to write the product of an N × N matrix A by x as Ax. To save space, we usually write such an x horizontally instead of vertically, $x = (x_0, x_1, \ldots, x_{N-1})$. In order to be consistent with the notation for functions used later in the infinite dimensional context, we write $l^2(\mathbb{Z}_N)$ in place of $\mathbb{C}^N$. So, formally,

$$l^2(\mathbb{Z}_N) = \{x = (x_0, x_1, \ldots, x_{N-1}) : x_j \in \mathbb{C},\ 0 \le j \le N-1\}. \tag{2.4.2}$$

With the usual component-wise addition and scalar multiplication, $l^2(\mathbb{Z}_N)$ is an N-dimensional vector space over $\mathbb{C}$. One basis for $l^2(\mathbb{Z}_N)$ is the standard or Euclidean basis $E = \{e_0, e_1, \ldots, e_{N-1}\}$, where

$$e_j(n) = \begin{cases} 1, & \text{if } n = j \\ 0, & \text{otherwise.} \end{cases} \tag{2.4.3}$$

In this notation, the complex inner product on $l^2(\mathbb{Z}_N)$ is

$$\langle x, y \rangle = \sum_{k=0}^{N-1} x_k\,\overline{y_k}, \tag{2.4.4}$$

with the associated norm

$$\|x\| = \left(\sum_{k=0}^{N-1} |x_k|^2\right)^{1/2}, \tag{2.4.5}$$

called the $l^2$-norm.


2.4.1 1D-Discrete Fourier Transform

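Definition 2.1 Define $E_0, E_1, \ldots, E_{N-1} \in l^2(\mathbb{Z}_N)$ by

$$E_m(n) = \frac{1}{\sqrt{N}}\, e^{2\pi imn/N}, \quad\text{for } 0 \le m, n \le N-1. \tag{2.4.6}$$

Clearly, the set $\{E_0, E_1, \ldots, E_{N-1}\}$ is an orthonormal basis for $l^2(\mathbb{Z}_N)$. We have

$$x = \sum_{m=0}^{N-1} \langle x, E_m \rangle E_m, \tag{2.4.7}$$

$$\langle x, y \rangle = \sum_{m=0}^{N-1} \langle x, E_m \rangle\,\overline{\langle y, E_m \rangle}, \tag{2.4.8}$$

$$\|x\|^2 = \sum_{m=0}^{N-1} |\langle x, E_m \rangle|^2. \tag{2.4.9}$$

By the definition of the inner product,

$$\langle x, E_m \rangle = \sum_{n=0}^{N-1} x_n\,\overline{\frac{1}{\sqrt{N}}\, e^{2\pi imn/N}} = \frac{1}{\sqrt{N}}\sum_{n=0}^{N-1} x_n\, e^{-2\pi imn/N}. \tag{2.4.10}$$

Definition 2.2 Suppose $x = (x_0, x_1, \ldots, x_{N-1}) \in l^2(\mathbb{Z}_N)$. For m = 0, 1, 2, ..., N − 1, define

$$\hat{x}_m = \sum_{n=0}^{N-1} x_n\, e^{-2\pi imn/N}. \tag{2.4.11}$$

Then $\hat{x} = (\hat{x}_0, \hat{x}_1, \ldots, \hat{x}_{N-1}) \in l^2(\mathbb{Z}_N)$. The map $\hat{\ } : l^2(\mathbb{Z}_N) \to l^2(\mathbb{Z}_N)$, which takes x to $\hat{x}$, is called the 1D-discrete Fourier transform (DFT).

It can easily be seen that $\hat{x}_m$, m ∈ Z, is periodic with period N:

$$\hat{x}_{m+N} = \sum_{n=0}^{N-1} x_n\, e^{-2\pi i(m+N)n/N} = \sum_{n=0}^{N-1} x_n\, e^{-2\pi imn/N}\, e^{-2\pi iNn/N} = \sum_{n=0}^{N-1} x_n\, e^{-2\pi imn/N} = \hat{x}_m,$$

since $e^{-2\pi iNn/N} = e^{-2\pi in} = 1$ for every n ∈ Z. Comparing Eqs. (2.4.10) and (2.4.11), we have

$$\hat{x}_m = \sqrt{N}\,\langle x, E_m \rangle. \tag{2.4.12}$$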


Remark 2.1 Equation (2.4.12) actually defines the DFT coefficients $\hat{x}_k$ for any index k, and the resulting $\hat{x}_k$ are periodic with period N in the index. We will thus sometimes refer to the $\hat{x}_k$ on other ranges of the index, for example −N/2 < k ≤ N/2 when N is even. Actually, even if N is odd, the range −N/2 < k ≤ N/2 works because k is required to be an integer.

Equation (2.4.12) leads to the following reformulation of formulae (2.4.7), (2.4.8) and (2.4.9). Let $x = (x_0, x_1, \ldots, x_{N-1})$ and $y = (y_0, y_1, \ldots, y_{N-1}) \in l^2(\mathbb{Z}_N)$. Then

(i) Fourier Inversion Formula:

$$x_n = \frac{1}{N}\sum_{m=0}^{N-1} \hat{x}_m\, e^{2\pi imn/N}, \quad\text{for } n = 0, 1, 2, \ldots, N-1. \tag{2.4.13}$$

(ii) Parseval's Relation:

$$\langle x, y \rangle = \frac{1}{N}\sum_{m=0}^{N-1} \hat{x}_m\,\overline{\hat{y}_m} = \frac{1}{N}\langle \hat{x}, \hat{y} \rangle. \tag{2.4.14}$$

(iii) Plancherel Theorem:

$$\|x\|^2 = \frac{1}{N}\sum_{m=0}^{N-1} |\hat{x}_m|^2 = \frac{1}{N}\|\hat{x}\|^2. \tag{2.4.15}$$
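The three statements above can be checked numerically. The sketch below assumes NumPy's `np.fft.fft`, whose forward transform uses the same unnormalized convention as (2.4.11), and random test vectors chosen only for illustration. Note that `np.vdot(y, x)` conjugates its first argument, so it computes the inner product ⟨x, y⟩ of (2.4.4).

```python
import numpy as np

rng = np.random.default_rng(0)
N = 8
x = rng.standard_normal(N) + 1j * rng.standard_normal(N)
y = rng.standard_normal(N) + 1j * rng.standard_normal(N)

x_hat = np.fft.fft(x)   # DFT as in (2.4.11)
y_hat = np.fft.fft(y)

# (i) Fourier inversion formula (2.4.13), written out as an explicit sum over m.
m = np.arange(N)
x_rec = np.array([np.sum(x_hat * np.exp(2j * np.pi * m * n / N)) / N
                  for n in range(N)])

# (ii) Parseval's relation (2.4.14): <x, y> = (1/N) <x_hat, y_hat>.
inner_time = np.vdot(y, x)
inner_freq = np.vdot(y_hat, x_hat) / N

# (iii) Plancherel theorem (2.4.15): ||x||^2 = (1/N) ||x_hat||^2.
energy_time = np.sum(np.abs(x) ** 2)
energy_freq = np.sum(np.abs(x_hat) ** 2) / N

print(np.allclose(x_rec, x), np.isclose(inner_time, inner_freq),
      np.isclose(energy_time, energy_freq))
```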

The DFT can be represented by a matrix, since Eq. (2.4.11) shows that the map taking x to $\hat{x}$ is a linear transformation. Define

$$w_N = e^{-2\pi i/N}.$$

Then we have

$$e^{-2\pi imn/N} = w_N^{mn} \quad\text{and}\quad e^{2\pi imn/N} = w_N^{-mn},$$

and

$$\hat{x}_m = \sum_{n=0}^{N-1} x_n\, w_N^{mn}. \tag{2.4.16}$$

Definition 2.3 Let W_N be the matrix $[w_{mn}]_{0 \le m,n \le N-1}$ such that $w_{mn} = w_N^{mn}$. Hence

$$W_N = \begin{bmatrix}
1 & 1 & 1 & \cdots & 1 \\
1 & w_N & w_N^2 & \cdots & w_N^{N-1} \\
1 & w_N^2 & w_N^4 & \cdots & w_N^{2(N-1)} \\
\vdots & \vdots & \vdots & \ddots & \vdots \\
1 & w_N^{(N-1)} & w_N^{2(N-1)} & \cdots & w_N^{(N-1)(N-1)}
\end{bmatrix}.$$

Regarding x, $\hat{x} \in l^2(\mathbb{Z}_N)$ as column vectors (as in Eq. 2.4.11), the m-th component of W_N x is

$$\sum_{n=0}^{N-1} w_{mn}\, x_n = \sum_{n=0}^{N-1} x_n\, w_N^{mn} = \hat{x}_m, \quad 0 \le m \le N-1.$$

Hence

$$\hat{x} = W_N\, x. \tag{2.4.17}$$

We now compute a simple example in order to demonstrate the definitions. We could use Eq. (2.4.12) for this, but it is easier to use Eq. (2.4.17). The matrices W_2 and W_4 are as follows:

$$W_2 = \begin{bmatrix} 1 & 1 \\ 1 & -1 \end{bmatrix} \quad\text{and}\quad W_4 = \begin{bmatrix} 1 & 1 & 1 & 1 \\ 1 & -i & -1 & i \\ 1 & -1 & 1 & -1 \\ 1 & i & -1 & -i \end{bmatrix}.$$

Example 2.1 Let $x = (1, 0, -3, 4) \in l^2(\mathbb{Z}_4)$. Find $\hat{x}$.

Solution

$$\hat{x} = W_4\, x = \begin{bmatrix} 1 & 1 & 1 & 1 \\ 1 & -i & -1 & i \\ 1 & -1 & 1 & -1 \\ 1 & i & -1 & -i \end{bmatrix} \begin{bmatrix} 1 \\ 0 \\ -3 \\ 4 \end{bmatrix} = \begin{bmatrix} 2 \\ 4 + 4i \\ -6 \\ 4 - 4i \end{bmatrix}.$$
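Example 2.1 can be reproduced with a few lines of NumPy. The helper `dft_matrix` below is a hypothetical name introduced for this sketch; it builds W_N entry by entry from Definition 2.3, and the result is compared with `np.fft.fft`, which follows the same sign and normalization convention as (2.4.11).

```python
import numpy as np

def dft_matrix(N):
    """Return the N x N matrix W_N with entries w_N^(mn), w_N = exp(-2*pi*i/N)."""
    m, n = np.meshgrid(np.arange(N), np.arange(N), indexing="ij")
    return np.exp(-2j * np.pi * m * n / N)

x = np.array([1, 0, -3, 4], dtype=complex)
W4 = dft_matrix(4)
x_hat = W4 @ x                       # Eq. (2.4.17)

print(np.round(x_hat, 10))           # compare with the hand computation above
print(np.allclose(x_hat, np.fft.fft(x)))
```

Forming W_N explicitly costs on the order of N² operations per transform, so this is useful for checking small cases rather than as a replacement for a fast Fourier transform routine.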

The matrix W N has a lot of structure. This structure can even be exploited to

develop an algorithm called the fast Fourier transform that provides a very efficient

method for computing DFT’s without actually doing the full matrix multiplication.

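Definition 2.4 (Convolution) For $x, y \in l^2(\mathbb{Z}_N)$, the convolution $x * y \in l^2(\mathbb{Z}_N)$ is the vector with components

$$(x * y)(m) = \sum_{n=0}^{N-1} x(m-n)\,y(n), \tag{2.4.18}$$

for all m, where the index m − n is interpreted modulo N by the periodicity of x.

Suppose $x, y \in l^2(\mathbb{Z}_N)$. Then for each m,

$$\widehat{(x * y)}_m = \hat{x}_m\,\hat{y}_m. \tag{2.4.19}$$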
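Equation (2.4.19) can be verified directly from Definition 2.4. The sketch below assumes that the index m − n in (2.4.18) is taken modulo N (circular convolution); the function name `circular_convolution` and the test vectors are illustrative only.

```python
import numpy as np

def circular_convolution(x, y):
    """Compute (x * y)(m) = sum_n x((m - n) mod N) y(n) as in (2.4.18)."""
    N = len(x)
    return np.array([sum(x[(m - n) % N] * y[n] for n in range(N))
                     for m in range(N)])

x = np.array([1, 0, -3, 4], dtype=complex)
y = np.array([2, 1, 0, -1], dtype=complex)

conv = circular_convolution(x, y)
lhs = np.fft.fft(conv)               # DFT of the convolution
rhs = np.fft.fft(x) * np.fft.fft(y)  # component-wise product of the DFTs

print(np.allclose(lhs, rhs))
```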


2.4.2 Inverse 1D-Discrete Fourier Transform

To interpret the Fourier inversion formula (2.4.13), we make the following definition:

Definition 2.5 Let m = 0, 1, 2, ..., N − 1. Define $F_m \in l^2(\mathbb{Z}_N)$ by

$$F_m(n) = \frac{1}{N}\, e^{2\pi imn/N}, \quad\text{for } 0 \le n \le N-1. \tag{2.4.20}$$

Then $F = \{F_0, F_1, \ldots, F_{N-1}\}$ is called the Fourier basis for $l^2(\mathbb{Z}_N)$.

From Eq. (2.4.6) we have

$$F_m = \frac{1}{\sqrt{N}}\, E_m. \tag{2.4.21}$$

Since $\{E_m\}$ is an orthonormal basis for $l^2(\mathbb{Z}_N)$, F is an orthogonal basis for $l^2(\mathbb{Z}_N)$. With this notation, Eq. (2.4.13) becomes

$$x = \sum_{m=0}^{N-1} \hat{x}_m\, F_m. \tag{2.4.22}$$

The Fourier inversion formula (2.4.13) shows that the linear transformation $\hat{\ } : l^2(\mathbb{Z}_N) \to l^2(\mathbb{Z}_N)$ is a one-to-one map and is therefore invertible. Hence, Eq. (2.4.13) gives us a formula for the inverse of the discrete Fourier transform, which is denoted by $\check{\ }$.

Definition 2.6 Let $y = (y_0, y_1, \ldots, y_{N-1}) \in l^2(\mathbb{Z}_N)$. Define

$$\check{y}_n = \frac{1}{N}\sum_{m=0}^{N-1} y_m\, e^{2\pi imn/N}, \quad\text{for } n = 0, 1, 2, \ldots, N-1. \tag{2.4.23}$$

Then $\check{y} = (\check{y}_0, \check{y}_1, \ldots, \check{y}_{N-1}) \in l^2(\mathbb{Z}_N)$. The map $\check{\ } : l^2(\mathbb{Z}_N) \to l^2(\mathbb{Z}_N)$, which takes y to $\check{y}$, is called the 1D-inverse discrete Fourier transform (IDFT).

We can easily see that $\check{y}_m$, m ∈ Z, is also periodic with period N. The Fourier inversion formula states that for $x \in l^2(\mathbb{Z}_N)$,

$$(\hat{x})^{\vee}_n = x_n \quad\text{or}\quad (\check{x})^{\wedge}_n = x_n, \quad\text{for } n = 0, 1, 2, \ldots, N-1.$$

Since the DFT is an invertible linear transformation, the matrix W_N is invertible and we must have $x = W_N^{-1}\hat{x}$. Substituting $\hat{x} = y$, and equivalently $x = \check{y}$, in this equation, we have

$$\check{y} = W_N^{-1}\, y. \tag{2.4.24}$$

In the notation of formula (2.4.16), formula (2.4.23) becomes

$$\check{y}_n = \sum_{m=0}^{N-1} y_m\,\frac{1}{N}\, w_N^{-mn} = \frac{1}{N}\sum_{m=0}^{N-1} y_m\,\overline{w_N^{mn}}.$$

This shows that the (n, m) entry of $W_N^{-1}$ is $\overline{w_N^{mn}}/N$, which is 1/N times the complex conjugate of the (n, m) entry of W_N. If we denote by $\overline{W_N}$ the matrix whose entries are the complex conjugates of the entries of W_N, we have

$$W_N^{-1} = \frac{1}{N}\,\overline{W_N}.$$

We have

$$W_2^{-1} = \frac{1}{2}\begin{bmatrix} 1 & 1 \\ 1 & -1 \end{bmatrix} \quad\text{and}\quad W_4^{-1} = \frac{1}{4}\begin{bmatrix} 1 & 1 & 1 & 1 \\ 1 & i & -1 & -i \\ 1 & -1 & 1 & -1 \\ 1 & -i & -1 & i \end{bmatrix}.$$

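Example 2.2 Let $y = (2, 4 + 4i, -6, 4 - 4i) \in l^2(\mathbb{Z}_4)$. Find $\check{y}$.

Solution

$$\check{y} = W_4^{-1}\, y = \frac{1}{4}\begin{bmatrix} 1 & 1 & 1 & 1 \\ 1 & i & -1 & -i \\ 1 & -1 & 1 & -1 \\ 1 & -i & -1 & i \end{bmatrix} \begin{bmatrix} 2 \\ 4 + 4i \\ -6 \\ 4 - 4i \end{bmatrix} = \begin{bmatrix} 1 \\ 0 \\ -3 \\ 4 \end{bmatrix}.$$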
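The relation between W_N and its inverse, and the result of Example 2.2, can be confirmed with the following sketch. As before, `dft_matrix` is a hypothetical helper that builds W_N from its definition; the inverse is formed as the complex conjugate of W_N divided by N rather than by a general matrix inversion.

```python
import numpy as np

def dft_matrix(N):
    """Return the N x N matrix W_N with entries exp(-2*pi*i*m*n/N)."""
    m, n = np.meshgrid(np.arange(N), np.arange(N), indexing="ij")
    return np.exp(-2j * np.pi * m * n / N)

N = 4
W = dft_matrix(N)
W_inv = np.conj(W) / N               # inverse as conjugate of W_N scaled by 1/N

y = np.array([2, 4 + 4j, -6, 4 - 4j])
y_check = W_inv @ y                  # Eq. (2.4.24)

print(np.round(y_check, 10))
print(np.allclose(W_inv @ W, np.eye(N)))
```

The output recovers the vector x = (1, 0, −3, 4) of Example 2.1, as expected from the inversion formula.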

2.4.3 2D-Discrete Fourier Transform and 2D-Inverse

Discrete Fourier Transform

The definition of the two-dimensional (2D) discrete Fourier transform is very similar to that for one dimension. The forward and inverse transforms for an M × N matrix, where for notational convenience we assume that the m indices run from 0 to M − 1 and the n indices run from 0 to N − 1, are:

$$\hat{x}_{(r,s)} = \sum_{m=0}^{M-1}\sum_{n=0}^{N-1} x_{(m,n)}\, e^{-2\pi i\left(\frac{mr}{M} + \frac{ns}{N}\right)}, \tag{2.4.25}$$

and

$$\check{x}_{(r,s)} = \frac{1}{MN}\sum_{m=0}^{M-1}\sum_{n=0}^{N-1} x_{(m,n)}\, e^{2\pi i\left(\frac{mr}{M} + \frac{ns}{N}\right)}. \tag{2.4.26}$$
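A direct, unoptimized evaluation of (2.4.25) and (2.4.26) can be sketched as follows. The function names `dft2_direct` and `idft2_direct` and the small random test array are assumptions made for this illustration; the results are compared with NumPy's `np.fft.fft2` and `np.fft.ifft2`, which use the same conventions.

```python
import numpy as np

def dft2_direct(x):
    """Evaluate the double sum of Eq. (2.4.25) for every output index (r, s)."""
    M, N = x.shape
    m = np.arange(M)[:, None]        # column of row indices
    n = np.arange(N)[None, :]        # row of column indices
    out = np.zeros((M, N), dtype=complex)
    for r in range(M):
        for s in range(N):
            kernel = np.exp(-2j * np.pi * (m * r / M + n * s / N))
            out[r, s] = np.sum(x * kernel)
    return out

def idft2_direct(x):
    """Evaluate Eq. (2.4.26): positive exponent and a 1/(MN) scale factor."""
    M, N = x.shape
    # Conjugating input and output flips the sign of the exponent.
    return np.conj(dft2_direct(np.conj(x))) / (M * N)

rng = np.random.default_rng(1)
image = rng.standard_normal((4, 6))

forward = dft2_direct(image)
roundtrip = idft2_direct(forward)

print(np.allclose(forward, np.fft.fft2(image)), np.allclose(roundtrip, image))
```

The inverse is obtained here from the forward routine by conjugation, which reflects the close similarity between the two formulas: only the sign of the exponent and the scale factor differ.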


2.4.4 Properties of 2D-Discrete Fourier Transform

All the properties of the one-dimensional DFT transfer into two dimensions. But

there are some further properties not previously mentioned, which are of particular

use for image processing.

1. Similarity First notice that the forward and inverse transforms are very similar, with the exception of the scale factor 1/MN in the inverse transform and the negative sign in the exponent of the forward transform. This means that the same algorithm, only very slightly adjusted, can be used for both the forward and inverse transforms.

2. The DFT as a spatial filter Note that the values

$$e^{\pm 2\pi i\left(\frac{mr}{M} + \frac{ns}{N}\right)} \tag{2.4.27}$$

are independent of the values of x or $\hat{x}$. This means that they can be calculated in advance, and only then put into the formulas above. It also means that every value of $\hat{x}_{(r,s)}$ is obtained by multiplying every value of $x_{(m,n)}$ by a fixed value, and adding up all the results. But this is precisely what a linear spatial filter does: it multiplies all elements under a mask with fixed values, and adds them all up. Thus we can consider the DFT as a linear spatial filter which is as big as the image. To deal with the problem of edges, we assume that the image is tiled in all directions, so that the mask always has image values to use.

3. Separability Notice that the discrete Fourier transform filter elements can be expressed as products:

$$e^{2\pi i\left(\frac{mr}{M} + \frac{ns}{N}\right)} = e^{2\pi i\left(\frac{mr}{M}\right)}\, e^{2\pi i\left(\frac{ns}{N}\right)}. \tag{2.4.28}$$

The first product value

$$e^{2\pi i\left(\frac{mr}{M}\right)} \tag{2.4.29}$$

depends only on m and r, and is independent of n and s. Conversely, the second product value

$$e^{2\pi i\left(\frac{ns}{N}\right)} \tag{2.4.30}$$

depends only on n and s, and is independent of m and r. This means that we can break down our formulas above to simpler formulas that work on single rows or columns:

M−1