SOFE 3980U: Software Quality

Software Metrics

Instructor:

Akramul Azim, PhD

Winter 2022

Faculty of Engineering and Applied Science

University of Ontario Institute of Technology (UOIT)

(Slides from Dr. Ivan Bruha on Software Metrics)

How many Lines of Code?

https://www.youtube.com/watch?v=8Io6IRiwYio

SOFTWARE QUALITY METRICS

BASICS

How many Lines of Code?

    with TEXT_IO; use TEXT_IO;
    procedure Main is
       --This program copies characters from an input
       --file to an output file. Termination occurs
       --either when all characters are copied or
       --when a NULL character is input
       Nullchar, Eof: exception;
       Char: CHARACTER;
       Input_file, Output_file, Console: FILE_TYPE;
    begin
       loop
          Open (FILE => Input_file, MODE => IN_FILE,
                NAME => "CharsIn");
          Open (FILE => Output_file, MODE => OUT_FILE,
                NAME => "CharOut");
          Get (Input_file, Char);
          if END_OF_FILE (Input_file) then
             raise Eof;
          elsif Char = ASCII.NUL then
             raise Nullchar;
          else
             Put (Output_file, Char);
          end if;
       end loop;
    exception
       when Eof => Put (Console, "no null characters");
       when Nullchar => Put (Console, "null terminator");
    end Main;

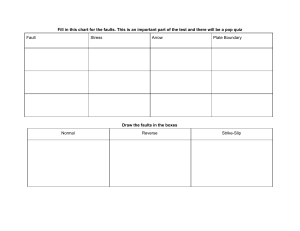

Software Quality Models

[Figure: hierarchical software quality model with three levels (Use, Factor, Criteria); the criteria are assessed by METRICS.]

• Use: product operation, product revision

• Factors: usability, reliability, efficiency, reusability, maintainability, portability, testability

• Criteria: communicativeness, accuracy, consistency, device efficiency, accessibility, completeness, structuredness, conciseness, device independence, legibility, self-descriptiveness, traceability

Definition of system reliability

The reliability of a system is the probability that

the system will execute without failure in a

given environment for a given period of time.

Implications:

• No single reliability number for a given system: it depends on how the system is used

• Use probability to express our uncertainty

• Time dependent

What is a software failure?

Alternative views:

• Formal view

– Any deviation from specified program behaviour is a failure

– Conformance with specification is all that matters

– This is the view adopted in computer science

• Engineering view

– Any deviation from required, specified or expected behaviour is a failure

– If an input is unspecified the program should produce a “sensible” output appropriate for the circumstances

– This is the view adopted in dependability assessment

Human errors, faults, and failures

human error → (can lead to) → fault → (can lead to) → failure

• Human Error: Designer’s mistake

• Fault: Encoding of an error into a software

document/product

• Failure: Deviation of the software system from specified

or expected behaviour

Processing errors

In the absence of fault tolerance:

[Diagram: Human error → Fault; Fault + Input → Processing error → Failure]

Relationship between faults and failures

[Figure: faults mapped to the failures they cause, with failures sized by MTTF. 35% of all faults lead only to very rare failures (MTTF > 5000 years).]

The relationship between faults and failures

• Most faults are benign

• For most faults: removal will not lead to greatly improved

reliability

• Large reliability improvements only come when we

eliminate the small proportion of faults which lead to the

more frequent failures

• Does not mean we should stop looking for faults, but

warns us to be careful about equating fault counts with

reliability

The ‘defect density’ measure: an important health warning

• Defects = {faults} ∪ {failures}

– but sometimes defects = {faults} or defects = {failures}

• $\text{System defect density} = \dfrac{\text{number of defects found}}{\text{system size}}$

– where size is usually measured in thousands of lines of code (KLOC)

• Defect density is used as a de-facto measure of software

quality.

• What are industry ‘norms’ and what do they mean?
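The defect density ratio above can be sketched in a few lines of Python. This is only an illustration: the function name and the figures (48 defects in a 12,000-line system) are made up for the example and do not come from any study.

```python
def defect_density(defects_found: int, size_loc: int) -> float:
    """Return defects per thousand lines of code (KLOC)."""
    if size_loc <= 0:
        raise ValueError("system size must be positive")
    return defects_found / (size_loc / 1000)


# Hypothetical figures, chosen only to illustrate the arithmetic.
density = defect_density(defects_found=48, size_loc=12_000)
print(f"{density:.1f} defects per KLOC")  # 4.0 defects per KLOC
```

Whether "defects" here means faults, failures, or both has to be stated alongside the number, which is exactly the health warning on this slide.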

Defect density Vs module size

[Figure: defect density plotted against module size in lines of code, contrasting a curve labelled ‘Theory’ with one labelled ‘Observation?’.]

A Study in Relative Efficiency of Testing Methods

| Testing type | Defects found per hour |
| --- | --- |
| Regular use | 0.21 |
| Black box | 0.282 |
| White box | 0.322 |
| Reading/Inspections | 1.057 |

Source: R. B. Grady, ‘Practical Software Metrics for Project Management and Process Improvement’, Prentice Hall, 1992

The problem with ‘problems’

• Defects

• Faults

• Failures

• Anomalies

• Bugs

• Crashes

Incident Types

• Failure (in pre or post release)

• Fault

• Change request

Generic Data

Applicable to all incident types

What: Product details

Where (Location): Where is it?

Who: Who found it?

When (Timing): When did it occur?

What happened (End Result): What was observed?

How (Trigger): How did it arise?

Why (Cause): Why did it occur?

Severity/Criticality/Urgency

Change

Example: Failure Data

What: ABC Software Version 2.3

Where: Norman’s home PC

Who: Norman

When: 13 Jan 2000 at 21:08 after 35 minutes of operational

use

End result: Program crashed with error message xyz

How: Loaded external file and clicked the command Z.

Why: <BLANK - refer to fault>

Severity: Major

Change: <BLANK>
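One way to hold the generic incident data is a small record type. The sketch below is an assumption about how such a record might look in Python; the class name `Incident`, its field names, and the `blank_fields` helper are invented for illustration, and the values are those of the failure example above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Incident:
    """Generic data applicable to all incident types (hypothetical layout)."""
    kind: str                      # "failure", "fault" or "change request"
    what: str                      # product details
    where: str                     # location
    who: str                       # who found it
    when: str                      # timing
    end_result: Optional[str] = None
    how: Optional[str] = None      # trigger
    why: Optional[str] = None      # cause
    severity: Optional[str] = None
    change: Optional[str] = None

    def blank_fields(self) -> List[str]:
        """Names of the fields that are still <BLANK>."""
        return [name for name, value in vars(self).items() if value is None]


failure = Incident(
    kind="failure",
    what="ABC Software Version 2.3",
    where="Norman's home PC",
    who="Norman",
    when="13 Jan 2000 at 21:08 after 35 minutes of operational use",
    end_result="Program crashed with error message xyz",
    how="Loaded external file and clicked the command Z",
    severity="Major",
)
print(failure.blank_fields())  # ['why', 'change']
```

The blank `why` and `change` fields mirror the slide: for a failure report, the cause and the change are filled in later, on the associated fault record.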

Example: Fault Data (1) - reactive

What: ABC Software Version 2.3

Where: Help file, section 5.7

Who: Norman

When: 15 Jan 2000, during formal inspection

End result: Likely to cause users to enter invalid passwords

How: The text wrongly says that passwords are case sensitive

Why: <BLANK>

Urgency: Minor

Change: Suggest rewording as follows ...

Example: Fault Data (2) - responsive

What: ABC Software Version 2.3

Where: Function <abcd> in Module <ts0023>

Who: Simon

When: 14 Jan 2000, after 2 hours investigation

What happened: Caused reported failure id <0096>

How: <BLANK>

Why: Missing exception code for command Z

Urgency: Major

Change: exception code for command Z added to function

<abcd> and also to function <efgh>. Closed on 15 Jan

2000.

Example: Change Request

What: ABC Software Version 2.3

Where: File save menu options

Who: Norman

When: 20 Jan 2000

End result: <BLANK>

How: <BLANK>

Why: Must be able to save files in ascii format - currently not

possible

Urgency: Major

Change: Add function to enable ascii format file saving

Tracking incidents to components

Incidents need to be traceable to identifiable components but at what level of granularity?

• Unit

• Module

• Subsystem

• System

Fault classifications used in Eurostar

control system

Cause

error in software design

error in software implementation

error in test procedure

deviation from functional specification

hardware not configured as specified

change or correction induced error

clerical error

other (specify)

Category

category not applicable

initialisation

logic/control structure

interface (external)

interface (internal)

data definition

data handling

computation

timing

other (specify)

Summary of Software Metrics Basics

• Software quality is a multi-dimensional notion

• Defect density is a common (but confusing) way of

measuring software quality

• Much data collection focuses on ‘incident’ types: failures, faults, and changes. There are ‘who, when, where, ...’ type data to collect in each case

• System components must be identified at appropriate levels

of granularity

SOFTWARE METRICS

PRACTICE

Why software measurement?

• To assess software products

• To assess software methods

• To help improve software processes

From Goals to Actions

Goals → Measures → Data → Facts/trends → Decisions → Actions

Goal Question Metric (GQM)

• There should be a clearly-defined need for every

measurement.

• Begin with the overall goals of the project or product.

• From the goals, generate questions whose answers will

tell you if the goals are met.

• From the questions, suggest measurements that can help

to answer the questions.

From Basili and Rombach’s Goal-Question-Metric paradigm, described in their 1988 IEEE Transactions on Software Engineering paper on the TAME project.

GQM Example

Goal: Identify fault-prone modules as early as possible

Questions:

• What do we mean by a ‘fault-prone’ module?

• Does ‘complexity’ impact fault-proneness?

• How much testing is done per module?

• ….

Metrics:

• ‘Defect data’ for each module: # faults found per testing phase; # failures traced to module

• ‘Effort data’ for each module: testing effort per testing phase; # faults found per testing phase

• ‘Size/complexity data’ for each module: KLOC; complexity metrics

The Metrics Plan

For each technical goal this contains information about

• WHY metrics can address the goal

• WHAT metrics will be collected, how they will be defined, and how

they will be analyzed

• WHO will do the collecting, who will do the analyzing, and who will see

the results

• HOW it will be done - what tools, techniques and practices will be used

to support metrics collection and analysis

• WHEN in the process and how often the metrics will be collected and

analyzed

• WHERE the data will be stored

The Enduring LOC Measure

• LOC: Number of Lines Of Code

• The simplest and most widely used measure of

program size. Easy to compute and automate

• Used (as a normalising measure) for

– productivity assessment (LOC/effort)

– effort/cost estimation (Effort = f(LOC))

– quality assessment/estimation (defects/LOC)

• Alternative (similar) measures

– KLOC: Thousands of Lines Of Code

– KDSI: Thousands of Delivered Source Instructions

– NCLOC: Non-Comment Lines of Code

– Number of Characters or Number of Bytes
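To make the difference between LOC and NCLOC concrete, here is a minimal counting sketch in Python. It fixes one possible definition, which is an assumption of this example and not a standard: blank lines are ignored, and a comment line is one whose first non-blank characters are `--` (Ada style). The function name `count_lines` and the sample text are hypothetical.

```python
def count_lines(source: str, comment_prefix: str = "--") -> dict:
    """Count LOC, NCLOC and characters under one possible definition.

    Assumptions: blank lines are not counted, and a comment line is one
    whose first non-blank characters are comment_prefix.
    """
    lines = [line.strip() for line in source.splitlines()]
    non_blank = [line for line in lines if line]
    non_comment = [line for line in non_blank
                   if not line.startswith(comment_prefix)]
    return {
        "LOC": len(non_blank),
        "NCLOC": len(non_comment),
        "chars": len(source),
    }


sample = """\
procedure Main is
   -- copy one character
   Char : CHARACTER;

begin
   Get (Char);
   Put (Char);
end Main;
"""
counts = count_lines(sample)
print(counts["LOC"], counts["NCLOC"])  # 7 6
```

Changing either assumption (counting blank lines, or counting statements instead of physical lines) changes the answer, which is why a LOC figure is only comparable when the counting rule is stated.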

Example: Software Productivity at Toshiba

[Figure: instructions per programmer month (y-axis 0 to 300) plotted for 1972 to 1982, with the point at which the Software Workbench System was introduced marked on the curve.]

Problems with LOC type measures

• No standard definition

• Measures length of programs rather than size

• Wrongly used as a surrogate for:

– effort

– complexity

– functionality

• Fails to take account of redundancy and reuse

• Cannot be used comparatively for different types of programming

languages

• Only available at the end of the development life-cycle

Fundamental software size attributes

• Length: the physical size of the product

• Functionality: measures the functions supplied by the product to the user

• Complexity:

– Problem complexity measures the complexity of the underlying

problem.

– Algorithmic complexity reflects the complexity/efficiency of the

algorithm implemented to solve the problem

– Structural complexity measures the structure of the software used to

implement the algorithm (includes control flow structure, hierarchical

structure and modular structure)

– Cognitive complexity measures the effort required to understand the

software.

The search for more discriminating

metrics

Measures that:

• capture cognitive complexity

• capture structural complexity

• capture functionality (or functional complexity)

• are language independent

• can be extracted at early life-cycle phases

The 1970’s: Measures of Source Code

Characterized by

• Halstead’s ‘Software Science’ metrics

• McCabe’s ‘Cyclomatic Complexity’ metric

Influenced by:

• Growing acceptance of structured programming

• Notions of cognitive complexity

Halstead’s Software Science Metrics

A program P is a collection of tokens, classified as

either operators or operands.

$n_1$ = number of unique operators

$n_2$ = number of unique operands

$N_1$ = total occurrences of operators

$N_2$ = total occurrences of operands

Length of P is $N = N_1 + N_2$

Vocabulary of P is $n = n_1 + n_2$

Theory: Estimate of N is $\hat{N} = n_1 \log_2 n_1 + n_2 \log_2 n_2$

Theory: Effort required to generate P is $E = \dfrac{n_1 N_2 N \log_2 n}{2 n_2}$ (elementary mental discriminations)

Theory: Time required to program P is $T = E/18$ seconds
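The Halstead formulas can be evaluated directly once the four token counts are known. The sketch below assumes the logarithms are base 2, as in Halstead's original formulation; the function name and the token counts are hypothetical and chosen so the arithmetic is easy to check by hand.

```python
import math


def halstead(n1: int, n2: int, N1: int, N2: int) -> dict:
    """Halstead length, vocabulary, estimated length, effort and time.

    n1, n2: unique operators / operands; N1, N2: total occurrences.
    """
    length = N1 + N2
    vocabulary = n1 + n2
    estimated_length = n1 * math.log2(n1) + n2 * math.log2(n2)
    effort = (n1 * N2 * length * math.log2(vocabulary)) / (2 * n2)
    return {
        "length": length,
        "vocabulary": vocabulary,
        "estimated_length": estimated_length,
        "effort": effort,            # elementary mental discriminations
        "time_seconds": effort / 18,
    }


# Hypothetical token counts for a very small program.
result = halstead(n1=4, n2=4, N1=10, N2=6)
print(result["length"], result["vocabulary"])     # 16 8
print(result["effort"], result["time_seconds"])   # 144.0 8.0
```

With these counts, N = 16, n = 8, and E = (4 × 6 × 16 × 3) / (2 × 4) = 144, so T = 144 / 18 = 8 seconds.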

McCabe’s Cyclomatic Complexity Metric v

If G is the control flowgraph of program P, and G has e edges (arcs) and n nodes, then

$v(P) = e - n + 2$

v(P) is the number of linearly independent paths in G.

In the example flowgraph, e = 16 and n = 13, so v(P) = 16 − 13 + 2 = 5.

More simply, if d is the number of decision nodes in G, then

$v(P) = d + 1$

McCabe proposed: v(P)<10 for each module P
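Both forms of the cyclomatic complexity formula can be checked on a small flowgraph. In this sketch the graph is a hypothetical one (an if/else inside a loop), represented as a dictionary from each node to its successors; the node names and helper functions are assumptions made for the example. The d + 1 shortcut is applied here only to binary decision nodes, that is, nodes with exactly two outgoing edges.

```python
def cyclomatic_complexity(flowgraph: dict) -> int:
    """v(P) = e - n + 2 for a flowgraph given as {node: [successors]}."""
    n = len(flowgraph)
    e = sum(len(successors) for successors in flowgraph.values())
    return e - n + 2


def decision_nodes(flowgraph: dict) -> int:
    """Number of binary decision nodes (exactly two outgoing edges)."""
    return sum(1 for successors in flowgraph.values() if len(successors) == 2)


# Hypothetical flowgraph: an if/else whose join feeds a loop test.
graph = {
    "start": ["test"],
    "test": ["then", "else"],    # decision
    "then": ["join"],
    "else": ["join"],
    "join": ["loop"],
    "loop": ["test", "exit"],    # decision (back edge to "test")
    "exit": [],
}
print(cyclomatic_complexity(graph))   # 3
print(decision_nodes(graph) + 1)      # 3
```

The graph has 8 edges and 7 nodes, so e − n + 2 = 3, which agrees with d + 1 for its two decision nodes.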

The 1980’s: Early Life-Cycle Measures

• Predictive process measures - effort and cost estimation

• Measures of designs

• Measures of specifications

Software Cost Estimation

[Cartoon]

Manager: “See that building on the screen? I want to know its weight.”

Engineer: “How can I tell by just looking at the screen? I don’t have any instruments or context.”

Manager: “I don’t care. You’ve got your eyes and a thumb and I want the answer to the nearest milligram.”

Simple COCOMO Effort Prediction

$\text{effort} = a \cdot (\text{size})^{b}$

effort = person months

size = KDSI (predicted)

a, b constants depending on type of system:

| System type | a | b |
| --- | --- | --- |
| ‘organic’ | 2.4 | 1.05 |
| ‘semi-detached’ | 3.0 | 1.12 |
| ‘embedded’ | 3.6 | 1.2 |

COCOMO Development Time Prediction

$\text{time} = a \cdot (\text{effort})^{b}$

effort = person months

time = development time (months)

a, b constants depending on type of system:

| System type | a | b |
| --- | --- | --- |
| ‘organic’ | 2.5 | 0.38 |
| ‘semi-detached’ | 2.5 | 0.35 |
| ‘embedded’ | 2.5 | 0.32 |
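The two COCOMO equations chain together: predicted size gives effort, and effort gives development time. The sketch below is a minimal illustration for a ‘semi-detached’ system, using the constants a = 3.0, b = 1.12 for effort and a = 2.5, b = 0.35 for time. The function names and the 32 KDSI size are assumptions made for the example.

```python
def cocomo_effort(size_kdsi: float, a: float, b: float) -> float:
    """Effort in person months: effort = a * size^b."""
    return a * size_kdsi ** b


def cocomo_time(effort_pm: float, a: float, b: float) -> float:
    """Development time in months: time = a * effort^b."""
    return a * effort_pm ** b


# Hypothetical 32 KDSI 'semi-detached' project.
effort = cocomo_effort(32, a=3.0, b=1.12)
time_months = cocomo_time(effort, a=2.5, b=0.35)
print(f"effort = {effort:.1f} person months")
print(f"time   = {time_months:.1f} months")
```

Because b > 1 in the effort equation, doubling the size more than doubles the predicted effort; because b < 1 in the time equation, the schedule grows much more slowly than the effort.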

Regression Based Cost Modelling

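[Figure: log-log plot of effort E (axis 10 to 10,000) against size S (axis 1K to 10000K). The data are fitted by a straight line with slope b and intercept log a.]

$E = a \cdot S^{b}$, which is equivalent to $\log E = \log a + b \log S$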
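Taking logarithms turns the power law into a straight line, so a and b can be estimated by ordinary least squares on the logged data. The following is a sketch under stated assumptions: the function name is invented, and the data points are synthetic, generated exactly from E = 3 · S^1.1 so that the fit can be checked. Real project data would scatter around the line.

```python
import math


def fit_power_law(sizes, efforts):
    """Least-squares fit of log E = log a + b * log S; returns (a, b)."""
    xs = [math.log(s) for s in sizes]
    ys = [math.log(e) for e in efforts]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    b = sxy / sxx                          # slope of the fitted line
    a = math.exp(mean_y - b * mean_x)      # intercept is log a
    return a, b


# Synthetic data generated from E = 3 * S^1.1 (not real project data).
sizes = [1, 10, 100, 1000]
efforts = [3 * s ** 1.1 for s in sizes]
a, b = fit_power_law(sizes, efforts)
print(f"a = {a:.3f}, b = {b:.3f}")  # a = 3.000, b = 1.100
```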

Albrecht’s Function Points

Count the number of:

External inputs

External outputs

External inquiries

External files

Internal files

giving each a ‘weighting factor’

The Unadjusted Function Count (UFC) is the sum of

all these weighted scores

To get the Adjusted Function Count (FP), multiply

by a Technical Complexity Factor (TCF)

FP = UFC x TCF

Function Points: Example

Spell-Checker Spec: The checker accepts as input a document file and an

optional personal dictionary file. The checker lists all words not contained

in either of these files. The user can query the number of words processed

and the number of spelling errors found at any stage during processing

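[Figure: data-flow diagram of the spelling checker. The user supplies the document file and the personal dictionary, and issues the ‘words processed’ and ‘errors found’ enquiries. The checker returns a report on misspelt words, a ‘# words processed’ message and a ‘# errors’ message. It reads words from the dictionary.]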

A = # external inputs = 2, B =# external outputs = 3, C = # inquiries = 2,

D = # external files = 2, E = # internal files = 1

Assuming average complexity in each case

UFC = 4A + 5B + 4C +10D + 7E = 58
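The weighted sum can be reproduced in a few lines. In this sketch the dictionary keys and function name are invented for the example; the weights are the ‘average complexity’ weights from the formula above, and the counts are those of the spell-checker.

```python
# Average-complexity weights, as used in the formula on this slide.
AVERAGE_WEIGHTS = {
    "external_inputs": 4,
    "external_outputs": 5,
    "inquiries": 4,
    "external_files": 10,
    "internal_files": 7,
}


def unadjusted_function_count(counts: dict, weights: dict = AVERAGE_WEIGHTS) -> int:
    """Sum of each item count multiplied by its weighting factor."""
    return sum(weights[item] * counts[item] for item in weights)


spell_checker = {
    "external_inputs": 2,    # A
    "external_outputs": 3,   # B
    "inquiries": 2,          # C
    "external_files": 2,     # D
    "internal_files": 1,     # E
}
ufc = unadjusted_function_count(spell_checker)
print(ufc)  # 58
```

Term by term this is 8 + 15 + 8 + 20 + 7 = 58. Multiplying by a Technical Complexity Factor would then give the adjusted count FP = UFC × TCF.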

Function Points: Applications

• Used extensively as a ‘size’ measure in preference to LOC

• Examples:

– Productivity = FP / person months of effort

– Quality = defects / FP

– Effort prediction: E = f(FP)

Function Points and Program Size

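| Language | Source statements per FP |
| --- | --- |
| Assembler | 320 |
| C | 150 |
| Algol | 106 |
| COBOL | 106 |
| FORTRAN | 106 |
| Pascal | 91 |
| RPG | 80 |
| PL/1 | 80 |
| MODULA-2 | 71 |
| PROLOG | 64 |
| LISP | 64 |
| BASIC | 64 |
| 4 GL Database | 40 |
| APL | 32 |
| SMALLTALK | 21 |
| Query languages | 16 |
| Spreadsheet languages | 6 |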

The 1990’s: Broader Perspective

• Reports on Company-wide measurement

programmes

• Benchmarking

• Impact of SEI’s CMM process assessment

• Use of metrics tools

• Measurement theory as a unifying framework

• Emergence of international software measurement

standards

– measuring software quality

– function point counting

– general data collection

Process improvement at Motorola

[Figure: in-process defects/MAELOC (y-axis 0 to 1000) plotted against SEI level (1 to 5).]

Flashback: The SEI Capability Maturity Model

Level 5: Optimising

• Process change management

• Technology change management

• Defect prevention

Level 4: Managed

• Software quality management

• Quantitative process management

Level 3: Defined

• Peer reviews

• Training programme

• Intergroup coordination

• Integrated S/W management

• Organization process definition/focus

Level 2: Repeatable

• S/W configuration management

• S/W QA

• S/W project planning

• S/W subcontract management

• S/W requirements management

Level 1: Initial/ad-hoc

IBM Space Shuttle Software Metrics Program (1)

[Figure: early detection rate and total inserted error rate.]

IBM Space Shuttle Software Metrics Program (2)

[Figure: predicted total error rate trend in errors per KLOC (y-axis 0 to 14) across onboard flight software releases 1, 3, 5, 7, 8A, 8C, 8F. Curves: actual, expected, 95% high, 95% low.]

IBM Space Shuttle Software Metrics Program (3)

[Figure: onboard flight software failures occurring per base system (y-axis 0 to 25) by basic operational increment 8B, 8C, 8D.]

SOFTWARE METRICS FRAMEWORK

Software Measurement Activities

[Figure: a collection of software measurement activities]

• Cost estimation

• Algorithmic complexity

• Function points

• Productivity models

• Software quality models

• Structural measures

• Complexity metrics

• Reliability models

• GQM

Are these diverse activities related?

Opposing Views on Measurement?

“When you can measure what you are speaking about, and express it in numbers, you know something about it; but when you cannot measure it, when you cannot express it in numbers, your knowledge is of a meagre kind.”

Lord Kelvin

“In truth, a good case could be made that if your knowledge is meagre and unsatisfactory, the last thing in the world you should do is make measurements. The chance is negligible that you will measure the right things accidentally.”

George Miller

Definition of Measurement

Measurement is the process of empirical

objective assignment of numbers to

entities, in order to characterise a specific

attribute.

• Entity: an object or event

• Attribute: a feature or property of an entity

• Objective: the measurement process must

be based on a well-defined rule whose results

are repeatable

Example Measures

| Entity | Attribute | Measure |
| --- | --- | --- |
| Person | Age | Years at last birthday |
| Person | Age | Months since birth |
| Source code | Length | # Lines of Code (LOC) |
| Source code | Length | # Executable statements |
| Testing process | Duration | Time in hours from start to finish |
| Tester | Efficiency | Number of faults found per KLOC |
| Testing process | Fault frequency | Number of faults found per KLOC |
| Source code | Quality | Number of faults found per KLOC |
| Operating system | Reliability | Mean time to failure; rate of occurrence of failures |

Avoiding Mistakes in Measurement

Common mistakes in software measurement can be

avoided simply by adhering to the definition of

measurement. In particular:

• You must specify both entity and attribute

• The entity must be defined precisely

• You must have a reasonable, intuitive understanding of

the attribute before you propose a measure

Be Clear of Your Attribute

It is a mistake to propose a ‘measure’ if there is no

consensus on what attribute it characterises.

o Results of an IQ test

– intelligence?

– or verbal ability?

– or problem solving skills?

o # defects found / KLOC

– quality of code?

– quality of testing?

A Cautionary Note

We must not re-define an attribute to fit in with an

existing measure.

[Cartoon]

“His IQ rating is zero - he didn’t manage a single answer.”

“Well I know he can’t write yet, but I’ve always regarded him as a rather intelligent dog.”

Types and uses of measurement

• Two distinct types of measurement:

– direct measurement

– indirect measurement

• Two distinct uses of measurement:

– for assessment

– for prediction

Measurement for prediction requires a prediction

system

Some Direct Software Measures

• Length of source code (measured by LOC)

• Duration of testing process (measured by elapsed time in

hours)

• Number of defects discovered during the testing process

(measured by counting defects)

• Effort of a programmer on a project (measured by person

months worked)

Some Indirect Software Measures

| Indirect measure | Definition |
| --- | --- |
| Programmer productivity | LOC produced / person months of effort |
| Module defect density | number of defects / module size |
| Defect detection efficiency | number of defects detected / total number of defects |
| Requirements stability | number of initial requirements / total number of requirements |
| Test effectiveness ratio | number of items covered / total number of items |
| System spoilage | effort spent fixing faults / total project effort |
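Each indirect measure is a ratio of two direct measures, so all of them can be derived from a handful of counts. The sketch below uses entirely hypothetical project figures; the helper name `ratio` and the variable names are assumptions made for the example.

```python
def ratio(numerator: float, denominator: float) -> float:
    """Divide two direct measures, refusing a zero denominator."""
    if denominator == 0:
        raise ValueError("denominator must be non-zero")
    return numerator / denominator


# Hypothetical direct measures for one project.
loc_produced = 12_000
person_months = 24
defects_detected, total_defects = 45, 60
initial_requirements, total_requirements = 80, 100
items_covered, total_items = 90, 120
effort_fixing_faults, total_effort = 30, 240   # person days

indirect = {
    "programmer productivity (LOC per person month)": ratio(loc_produced, person_months),
    "defect detection efficiency": ratio(defects_detected, total_defects),
    "requirements stability": ratio(initial_requirements, total_requirements),
    "test effectiveness ratio": ratio(items_covered, total_items),
    "system spoilage": ratio(effort_fixing_faults, total_effort),
}
for name, value in indirect.items():
    print(f"{name}: {value}")
```

With these figures the productivity is 500 LOC per person month and the system spoilage is 0.125, meaning one eighth of the project effort went into fixing faults.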

Predictive Measurement

Measurement for prediction requires a prediction system.

This consists of:

• Mathematical model

– e.g. ‘$E = aS^{b}$’ where E is effort in person months (to be predicted), S is size (LOC), and a and b are constants.

• Procedures for determining model parameters

– e.g. ‘Use regression analysis on past project data to determine a and b’.

• Procedures for interpreting the results

– e.g. ‘Use Bayesian probability to determine the likelihood that your prediction

is accurate to within 10%’

No Shortcut to Accurate Prediction

“Testing your methods on a sample of past data gets to the heart of the scientific approach to gambling. Unfortunately this implies some preliminary spadework, and most people skimp on that bit, preferring to rely on blind faith instead” [Drapkin and Forsyth 1987]

Software prediction (such as cost estimation) is no different

from gambling in this respect

Products, Processes, and Resources

Resources

Processes

Products

Process: a software related activity or event

– testing, designing, coding, etc.

Product: an object which results from a process

– test plans, specification and design documents, source and object code,

minutes of meetings, etc.

Resource: an item which is input to a process

– people, hardware, software, etc.

Internal and External Attributes

Let X be a product, process, or resource

• External attributes of X are those which can only be

measured with respect to how X relates to its

environment

– e.g. reliability or maintainability of source code (product)

• Internal attributes of X are those which can be measured

purely in terms of X itself

– e.g. length or structuredness of source code (product)

The Framework Applied

| Entities | Internal attributes | External attributes |
| --- | --- | --- |
| PRODUCTS | | |
| Specification | length, functionality | maintainability |
| Source code | modularity, structuredness, reuse | reliability |
| .... | .... | ..... |
| PROCESSES | | |
| Design | time, effort, #spec faults found | stability |
| Test | time, effort, #failures observed | cost-effectiveness |
| .... | .... | .... |
| RESOURCES | | |
| People | age, price, CMM level | productivity |
| Tools | price, size | usability, quality |
| .... | .... | .... |

CASE STUDY :

COMPANY OBJECTIVES

• Monitor and improve product reliability

– requires information about actual operational failures

• Monitor and improve product maintainability

– requires information about fault discovery and fixing

• ‘Process improvement’

– too high a level objective for metrics programme

– previous objectives partially characterise process improvement

General System Information

• 27 releases since Nov '87 implementation

• Currently 1.6 Million LOC in main system (15.2%

increase from 1991 to 1992)

[Figure: bar chart of system size in LOC (y-axis 0 to 1,600,000) for 1991 and 1992, split into COBOL and Natural.]

Main Data

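| Fault number | Week in | System area | Fault type | Week out | Hours to repair |
| --- | --- | --- | --- | --- | --- |
| ... | ... | ... | ... | ... | ... |
| F254 | 92/14 | C2 | P | 92/17 | 5.5 |
| ... | ... | ... | ... | ... | ... |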

• ‘faults’ are really failures (the lack of a distinction caused

problems)

• 481 (distinct) cleared faults during the year

• 28 system areas (functionally cohesive)

• 11 classes of faults

• Repair time: actual time to locate and fix defect

Case Study Components

• 28 ‘System areas’

– All closed faults traced to system area

• System areas made up of Natural, Batch COBOL, and CICS

COBOL programs

– Typically 80 programs in each. Typical program 1000 LOC

• No documented mapping of program to system area

• For most faults: ‘batch’ repair and reporting

– No direct, recorded link between fault and program in most cases

• No database with program size information

• No historical database to capture trends

Single Incident Close Report

Fault id: F752

Reported: 18/6/92

Definition: Logically deleted work done records appear on enquiries

Description: Causes misleading info to users. Amend ADDITIONAL WORK PERFORMED RDVIPG2A to ignore work done records with FLAG-AMEND = 1 or 2

Programs changed: RDVIPG2A, RGHXXZ3B

SPE: Joe Bloggs

Date closed: 26/6/92

Single Incident Close Report:

Improved Version

Fault id: F752

Reported: 18/6/92

Trigger: Delete work done record, then open enquiry

End result: Deleted records appear on enquiries, providing misleading info to users

Cause: Omission of appropriate flag variables for work done records

Change: Amend ADDITIONAL WORK PERFORMED in RDVIPG2A to ignore work done records with FLAG-AMEND = 1 or 2

Programs changed: RDVIPG2A, RGHXXZ3B

SPE: Joe Bloggs

Date closed: 26/6/92

Fault Classification

Non-orthogonal:

Data

Micro

JCL

Operations

Misc

Unresolved

Program

Query

Release

Specification

User

Missing Data

• Recoverable

– Size information

– Static/complexity information

– Mapping of faults to programs

– Severity categories

• Non-recoverable

– Operational usage per system area

– Success/failure of fixes

– Number of repeated failures

‘Reliability’ Trend

[Figure: faults received per week (y-axis 0 to 50 faults) plotted against week number (x-axis up to week 50).]

Identifying Fault Prone Systems?

[Figure: number of faults per system area (1992), y-axis 0 to 90 faults; the labelled system areas are C2 and J.]

Analysis of Fault Types

[Figure: pie chart of faults by fault type (total 481 faults). Segments: Program, Data, User, Query, Unresolved, Release, Misc, Others.]

Fault Types and System Areas

[Figure: most common faults over system areas (y-axis 0 to 70 faults), broken down by fault type (Program, Data, User, Release, Unresolved, Query, Miscellaneous) for areas C2, C, J, G, G2, N, T, C3, W, D, F, C1.]

Maintainability Across System Areas

[Figure: mean time to repair fault in hours (y-axis 0 to 10) by system area: D, O, S, W1, F, W, C3, P, L, G, C1, J, T, D1, G2, N, Z, C, C2, G1, U.]

Maintainability Across Fault Types

[Figure: mean time to repair fault (y-axis 0 to 9) by fault type.]

Case study results with additional

data: System Structure

Normalised Fault Rates (1)

[Figure: faults per KLOC (y-axis 0.00 to 20.00) by system area: C2, C3, P, C, L, G2, N, J, G, F, W, G1, S, D, O, W1, C4, M, D1, I, Z, B.]

Normalised Fault Rates (2)

[Figure: faults per KLOC (y-axis 0.00 to 1.20) by system area: C3, P, C, L, G2, N, J, G, F, W, G1, S, D, O, W1, C4, M, D1, I, Z, B.]

Case Study 1 Summary

• The ‘hard to collect’ data was mostly all there

– Exceptional information on post-release ‘faults’ and maintenance

effort

– It is feasible to collect this crucial data

• Some ‘easy to collect’ (but crucial) data was

omitted or not accessible

– The addition to the metrics database of some basic information

(mostly already collected elsewhere) would have enabled

proactive activity.

– Goals almost fully met with the simple additional data.

– Crucial explanatory analysis possible with simple additional data

– Goals of monitoring reliability and maintainability only partly met

with existing data

SOFTWARE METRICS:

MEASUREMENT THEORY AND

STATISTICAL ANALYSIS

Natural Evolution of Measures

As our understanding of an attribute grows, it is possible

to define more sophisticated measures; e.g.

temperature of liquids:

• 200BC - rankings, ‘‘hotter than’’

• 1600 - first thermometer preserving ‘‘hotter than’’

• 1720 - Fahrenheit scale

• 1742 - Centigrade scale

• 1854 - Absolute zero, Kelvin scale

Measurement Theory Objectives

Measurement theory is the scientific basis for all types of

measurement. It is used to determine formally:

• When we have really defined a measure

• Which statements involving measurement are

meaningful

• What the appropriate scale type is

• What types of statistical operations can be applied to

measurement data

Measurement Theory: Key Components

• Empirical relation system

– the relations which are observed on entities in the real world which characterise

our understanding of the attribute in question,

e.g. ‘Fred taller than Joe’ (for height of people)

• Representation condition

– real world entities are mapped to number (the measurement mapping) in such a

way that all empirical relations are preserved in numerical relations and no new

relations are created

e.g. M(Fred) > M(Joe) precisely when Fred is taller than Joe

• Uniqueness Theorem

– Which different mappings satisfy the representation condition,

e.g. we can measure height in inches, feet, centimetres, etc but all such

mappings are related in a special way.

Representation Condition

[Figure: the measurement mapping M takes entities in the real world (Joe, Fred) to values in the number system (63, 72). The empirical relation ‘Joe taller than Fred’ is preserved under M as the numerical relation M(Joe) > M(Fred).]

Meaningfulness in Measurement

Some statements involving measurement appear more

meaningful than others:

• Fred is twice as tall as Jane

• The temperature in Tokyo today is twice that in

London

• The difference in temperature between Tokyo and

London today is twice what it was yesterday

Formally, a statement involving measurement is meaningful if its truth value is invariant under all allowable transformations of the scale
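The invariance test can be tried out on the temperature statements above. In this sketch the temperatures are made up, and the only scale transformation checked is Celsius to Fahrenheit, which is one allowable rescaling for temperature. A statement that changes truth value under it is not meaningful; a statement that survives this one check has merely not been refuted by it.

```python
import math


def c_to_f(celsius: float) -> float:
    """Convert a Celsius reading to Fahrenheit."""
    return celsius * 9 / 5 + 32


# Hypothetical readings in degrees Celsius.
tokyo_today, london_today = 20.0, 10.0
tokyo_yesterday, london_yesterday = 15.0, 10.0

# "The temperature in Tokyo today is twice that in London"
twice_in_c = math.isclose(tokyo_today, 2 * london_today)
twice_in_f = math.isclose(c_to_f(tokyo_today), 2 * c_to_f(london_today))
print(twice_in_c, twice_in_f)  # True False

# "The difference between Tokyo and London today is twice yesterday's"
diff_in_c = math.isclose(tokyo_today - london_today,
                         2 * (tokyo_yesterday - london_yesterday))
diff_in_f = math.isclose(c_to_f(tokyo_today) - c_to_f(london_today),
                         2 * (c_to_f(tokyo_yesterday) - c_to_f(london_yesterday)))
print(diff_in_c, diff_in_f)  # True True
```

The first statement is true in Celsius (20 = 2 × 10) but false in Fahrenheit (68 ≠ 2 × 50), so it is not meaningful. The statement about differences holds in both units (10 = 2 × 5 and 18 = 2 × 9).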

Measurement Scale Types

Some measures seem to be of a different ‘type’ to

others, depending on what kind of statements are

meaningful. The 5 most important scale types of

measurement are:

• Nominal

• Ordinal

• Interval

• Ratio

• Absolute

(listed in increasing order of sophistication)

Nominal Scale Measurement

• Simplest possible measurement

• Empirical relation system consists only of different

classes; no notion of ordering.

• Any distinct numbering of the classes is an acceptable measure (could even use symbols rather than numbers), but the size of the numbers has no meaning for the measure

Ordinal Scale Measurement

• In addition to classifying, the classes are also ordered

with respect to the attribute

• Any mapping that preserves the ordering (i.e. any

monotonic function) is acceptable

• The numbers represent ranking only, so addition and

subtraction (and other arithmetic operations) have no

meaning

Interval Scale Measurement

• Powerful, but rare in practice

• Distances between entities matter, but not ratios

• Mapping must preserve order and intervals

• Examples:

– Timing of events’ occurrence, e.g. could measure these in units of years, days, hours etc, all relative to different fixed events. Thus it is meaningless to say “Project X started twice as early as project Y”, but meaningful to say “the time between project X starting and now is twice the time between project Y starting and now”

– Air temperature measured on the Fahrenheit or Centigrade scale

Ratio Scale Measurement

Common in physical sciences. Most useful scale of

measurement

• Ordering, distance between entities, ratios

• Zero element (representing total lack of the attribute)

• Numbers start at zero and increase at equal intervals

(units)

• All arithmetic can be meaningfully applied

Absolute Scale Measurement

• Absolute scale measurement is just counting

• The attribute must always be of the form of

‘number of occurrences of x in the entity’

– number of failures observed during integration testing

– number of students in this class

• Only one possible measurement mapping (the

actual count)

• All arithmetic is meaningful

Validation of Measures

• Validation of a software measure is the process of

ensuring that the measure is a proper numerical

characterisation of the claimed attribute

• Example:

– A valid measure of length of programs must not contradict any intuitive notion about program length

– If program P2 is bigger than P1 then m(P2) > m(P1)

– If m(P1) = 7 and m(P2) = 9 then if P1 and P2 are concatenated then m(P1;P2) must equal m(P1)+m(P2) = 16

• A stricter criterion is to demonstrate that the measure is itself part of a valid prediction system

Validation of Prediction Systems

• Validation of a prediction system, in a given environment,

is the process of establishing the accuracy of the

predictions made by empirical means

– i.e. by comparing predictions against known data points

• Methods

– Experimentation

– Actual use

• Tools

– Statistics

– Probability

Scale Types Summary

| Scale type | Characteristics |
| --- | --- |
| Nominal | Entities are classified. No arithmetic meaningful. |
| Ordinal | Entities are classified and ordered. Cannot use + or -. |
| Interval | Entities classified, ordered, and differences between them understood (‘units’). No zero, but can use ordinary arithmetic on intervals. |
| Ratio | Zeros, units, ratios between entities. All arithmetic. |
| Absolute | Counting; only one possible measure. All arithmetic. |

Meaningfulness and Statistics

The scale type of a measure affects what operations it is

meaningful to perform on the data

Many statistical analyses use arithmetic operators

These techniques cannot be used on certain data, particularly nominal and ordinal measures

Example: The Mean

• Suppose we have a set of values {a1,a2,...,an}

and wish to compute the ‘average’

• The mean is (a1 + a2 + ... + an) / n

• The mean is not a meaningful average for a

set of ordinal scale data

Alternative Measures of Average

Median: The midpoint of the data when it is

arranged in increasing order. It divides the data

into two equal parts

Suitable for ordinal data. Not suitable for nominal

data since it relies on order having meaning.

Mode: The commonest value

Suitable for nominal data

Summary of Meaningful Statistics

| Scale Type | Average | Spread |
|---|---|---|
| Nominal | Mode | Frequency |
| Ordinal | Median | Percentile |
| Interval | Arithmetic mean | Standard deviation |
| Ratio | Geometric mean | Coefficient of variation |
| Absolute | Any | Any |
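As a rough illustration of the summary, the sketch below computes the average that is meaningful for each scale type using Python's standard `statistics` module. All data values are hypothetical and chosen only to make the arithmetic easy to follow.

```python
import statistics

# Hypothetical samples, one per scale type (not taken from the slides).
languages = ["C", "Ada", "C", "Fortran", "C"]  # nominal: categories only
severity = [1, 2, 2, 3, 5]                     # ordinal: 1 = minor ... 5 = critical
temps_c = [18.0, 21.0, 24.0]                   # interval: degrees Centigrade
kloc = [10.0, 20.0, 40.0]                      # ratio: program size

most_common_language = statistics.mode(languages)  # mode suits nominal data
median_severity = statistics.median(severity)      # median suits ordinal data
mean_temp = statistics.mean(temps_c)               # arithmetic mean needs interval data
geo_mean_kloc = statistics.geometric_mean(kloc)    # geometric mean needs ratio data

# Coefficient of variation: spread relative to the mean (ratio scale only).
cv_kloc = statistics.stdev(kloc) / statistics.mean(kloc)

print(most_common_language, median_severity, mean_temp, geo_mean_kloc, cv_kloc)
```

Note that `statistics.mean(severity)` would run without error; the scale type, not the library, determines whether the result means anything.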

Non-Parametric Techniques

• Most software measures cannot be assumed to be

normally distributed. This restricts the kind of analytical

techniques we can apply.

• Hence we use non-parametric techniques:

– Pie charts

– Bar graphs

– Scatter plots

– Box plots

Box Plots

• Graphical representation of the spread of data.

• Consists of a box with tails drawn relative to a scale.

• Constructing the box plot:

– Arrange data in increasing order

– The box is defined by the median, upper quartile (u) and lower quartile (l) of the data. Box length b is u − l

– Upper tail is u + 1.5b, lower tail is l − 1.5b

– Mark any data items outside upper or lower tail (outliers)

– If necessary truncate tails (usually at 0) to avoid meaningless concepts like negative lines of code

[Figure: anatomy of a box plot drawn against a scale, showing the lower tail, lower quartile, median, upper quartile, upper tail, and an outlier marked x]

Box Plots: Examples

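| System | KLOC | MOD | FD |
|---|---|---|---|
| A | 10 | 36 | 15 |
| B | 23 | 22 | 43 |
| C | 26 | 15 | 61 |
| D | 31 | 33 | 10 |
| E | 31 | 15 | 43 |
| F | 40 | 13 | 57 |
| G | 47 | 22 | 58 |
| H | 52 | 16 | 65 |
| I | 54 | 15 | 50 |
| J | 67 | 18 | 60 |
| K | 70 | 10 | 50 |
| L | 75 | 34 | 96 |
| M | 83 | 16 | 51 |
| N | 83 | 18 | 61 |
| P | 100 | 12 | 32 |
| Q | 110 | 20 | 78 |
| R | 200 | 21 | 48 |

[Figure: box plots of KLOC, MOD and FD for the 17 systems]

– KLOC: lower quartile 31, median 54, upper quartile 83, upper tail 161; outlier R

– MOD: lower tail 4.5, lower quartile 15, median 18, upper quartile 22, upper tail 32.5; outliers A, D, L

– FD: lower tail 16, lower quartile 43, median 51, upper quartile 61, upper tail 88; outliers A, D, L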
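The box-plot construction steps can be sketched in a few lines, using the KLOC, MOD and FD values from the example. The function name `box_plot_stats` is illustrative, and one detail is an assumption: quartiles are taken as the medians of the lower and upper halves of the sorted data, leaving out the overall median when the number of items is odd.

```python
import statistics


def box_plot_stats(values):
    """Quartiles, tails and outliers following the slide's construction."""
    data = sorted(values)
    n = len(data)
    half = n // 2
    median = statistics.median(data)
    # Assumption: quartiles are medians of the lower and upper halves,
    # excluding the overall median when n is odd.
    lower_q = statistics.median(data[:half])
    upper_q = statistics.median(data[n - half:])
    b = upper_q - lower_q                    # box length
    lower_tail = max(lower_q - 1.5 * b, 0)   # truncate at 0 (no negative KLOC)
    upper_tail = upper_q + 1.5 * b
    outliers = [v for v in data if v < lower_tail or v > upper_tail]
    return {
        "lower_tail": lower_tail,
        "lower_quartile": lower_q,
        "median": median,
        "upper_quartile": upper_q,
        "upper_tail": upper_tail,
        "outliers": outliers,
    }


# Values for systems A..R, copied from the example table.
kloc = [10, 23, 26, 31, 31, 40, 47, 52, 54, 67, 70, 75, 83, 83, 100, 110, 200]
mod = [36, 22, 15, 33, 15, 13, 22, 16, 15, 18, 10, 34, 16, 18, 12, 20, 21]
fd = [15, 43, 61, 10, 43, 57, 58, 65, 50, 60, 50, 96, 51, 61, 32, 78, 48]

kloc_stats = box_plot_stats(kloc)  # quartiles 31, 54, 83; upper tail 161
mod_stats = box_plot_stats(mod)    # tails 4.5 and 32.5
fd_stats = box_plot_stats(fd)      # tails 16 and 88

for name, stats in (("KLOC", kloc_stats), ("MOD", mod_stats), ("FD", fd_stats)):
    print(name, stats)
```

Other quartile conventions exist and can shift the box slightly, so results from a statistics package may differ from these by a small amount.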

Scatterplots

• Scatterplots are used to represent data for which two

measures are given for each entity

• Two dimensional plot where each axis represents one

measure and each entity is plotted as a point in the 2D plane

Example Scatterplot: Length vs Effort

[Figure: scatterplot of Effort (months, 0 to 60) against Length (KLOC, 0 to 30)]

Determining Relationships

[Figure: scatterplot of Effort (months, 0 to 60) against Length (KLOC, 0 to 30) with a linear fit and a non-linear fit overlaid; some points are marked as possible outliers]

Causes of Outliers

• There may be many causes of outliers, some acceptable

and others not. Further investigation is needed to

determine the cause

• Example: A long module with few errors may be due to:

– the code being of high quality

– the module being especially simple

– reuse of code

– poor testing

Only the last requires action, although if it is the first it would be

useful to examine further explanatory factors so that the good

lessons can be learnt (was it use of a special tool or method,

was it just because of good people or management, or was it

just luck?)

Control Charts

• Help you to see when your data are within acceptable

bounds

• By watching the data trends over time, you can decide

whether to take action to prevent problems before they

occur.

• Calculate the mean and standard deviation of the data,

and then two control limits.
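A minimal sketch of the calculation follows. The slide does not say how far the limits sit from the mean, so the multiplier `k = 2` standard deviations is an assumption, as are the function name `control_limits` and the preparation-hours data.

```python
import statistics


def control_limits(values, k=2.0):
    """Return (lower limit, mean, upper limit) at k standard deviations.

    k = 2 is an assumption; some conventions use 3.
    """
    mean = statistics.mean(values)
    sd = statistics.stdev(values)  # sample standard deviation
    return mean - k * sd, mean, mean + k * sd


# Hypothetical preparation hours per hour of inspection for 7 components.
prep_hours = [1.5, 2.0, 2.5, 2.0, 1.5, 2.5, 2.0]

lower, mean, upper = control_limits(prep_hours)

# Components whose value falls outside the limits deserve investigation.
out_of_control = [
    (component, hours)
    for component, hours in enumerate(prep_hours, start=1)
    if hours < lower or hours > upper
]

print(f"LCL={lower:.2f} mean={mean:.2f} UCL={upper:.2f}", out_of_control)
```

For quantities that cannot be negative, a lower limit below zero would normally be truncated at zero, as with box-plot tails.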

Control Chart Example

[Figure: control chart of preparation hours per hour of inspection (0 to 4.0) for components 1 to 7, with horizontal lines for the upper control limit, the mean, and the lower control limit]

EMPIRICAL RESULTS

Case study: Basic data

Number of faults by phase:

| Release | Function test | System test | Site test | Operation |
|---|---|---|---|---|
| n (sample size 140 modules) | 916 | 682 | 19 | 52 |
| n+1 (sample size 246 modules) | 2292 | 1008 | 108 | 238 |

• Major switching system software

• Modules randomly selected from those that

were new or modified in each release

• Module is typically 2,000 LOC

• Only distinct faults that were fixed are counted

• Numerous metrics for each module

Hypotheses tested

• Hypotheses relating to Pareto principle of

distribution of faults and failures

• Hypotheses relating to the use of early fault data

to predict later fault and failure data

• Hypotheses about metrics for fault prediction

• Benchmarking hypotheses

Hypothesis 1a: a small number of modules contain most of

the faults discovered during testing

[Figure: cumulative % of faults (0 to 100) plotted against % of modules (30, 60, 90)]

Hypothesis 1b:

• If a small number of modules contain most of the faults

discovered during pre-release testing then this is simply

because those modules constitute most of the code size.

• For release n, the 20% of the modules which account for

60% of the faults (discussed in hypothesis 1a) actually

make up just 30% of the system size. The result for

release n+1 was almost identical.
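The two Pareto checks (1a and 1b) amount to ranking modules by fault count and comparing the share of faults with the share of code in the top group. The sketch below uses hypothetical module data, chosen so that the numbers echo the 20% / 60% / 30% pattern reported for release n; the function name `top_module_shares` is illustrative.

```python
def top_module_shares(modules, fraction):
    """Fault share and size share of the most fault-prone `fraction` of modules.

    `modules` is a list of (faults, lines_of_code) pairs.
    """
    ranked = sorted(modules, key=lambda m: m[0], reverse=True)
    top_n = max(1, round(len(ranked) * fraction))
    top = ranked[:top_n]
    total_faults = sum(faults for faults, _ in modules)
    total_loc = sum(loc for _, loc in modules)
    fault_share = sum(faults for faults, _ in top) / total_faults
    size_share = sum(loc for _, loc in top) / total_loc
    return fault_share, size_share


# Hypothetical data: (faults found in testing, lines of code) per module.
modules = [
    (40, 1500), (20, 1500), (10, 1000), (8, 1000), (6, 1000),
    (5, 1000), (4, 1000), (3, 800), (2, 700), (2, 500),
]

fault_share, size_share = top_module_shares(modules, 0.20)
print(f"top 20% of modules: {fault_share:.0%} of faults, {size_share:.0%} of code")
```

If hypothesis 1b held, the size share would be close to the fault share; a much smaller size share argues against it.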

Hypothesis 2a: a small number of modules

contain most of the operational faults?

[Figure: cumulative % of failures (0 to 100) plotted against % of modules (10 to 100)]

Hypothesis 2b

if a small number of modules contain most of the

operational faults then this is simply because those

modules constitute most of the code size.

• No: very strong evidence in favour of a converse

hypothesis:

most operational faults are caused by faults in a

small proportion of the code

• For release n, 100% of operational faults contained

in modules that make up just 12% of entire system

size. For release n+1, 80% of operational faults

contained in modules that make up 10% of the

entire system size.

Higher incidence of faults in function testing (FT) implies

higher incidence of faults in system testing (ST)?

[Figure: % of accumulated faults in ST (0% to 100%) plotted against % of modules (15% to 90%), with one curve for ST and one for FT]

Hypothesis 4: Higher incidence of faults pre-release implies

higher incidence of faults post-release?

• At the module level

• This hypothesis underlies the wide acceptance

of the fault-density measure

Pre-release vs post-release faults

Modules that are ‘fault-prone’ pre-release are NOT ‘fault-prone’ post-release. This demolishes most defect prediction models.

[Figure: scatterplot of post-release faults (0 to 35) against pre-release faults (0 to 160)]

Size metrics good predictors of fault and

failure prone modules?

• Hypothesis 5a: Smaller modules are less likely to

be failure prone than larger ones

• Hypothesis 5b: Size metrics are good predictors of

number of pre-release faults in a module

• Hypothesis 5c: Size metrics are good predictors of

number of post-release faults in a module

• Hypothesis 5d: Size metrics are good predictors of

a module’s (pre-release) fault-density

• Hypothesis 5e: Size metrics are good predictors of

a module’s (post-release) fault-density

Plotting faults against size

Correlation but poor prediction

[Figure: scatterplot of faults (0 to 160) against lines of code (0 to 10000)]

Cyclomatic complexity against pre- and post-release faults

Cyclomatic complexity is no better at prediction than KLOC (for either pre- or post-release)

[Figure: two scatterplots against cyclomatic complexity (0 to 3000): pre-release faults (0 to 160) and post-release faults (0 to 35)]

Defect density Vs size

Size is no indicator of defect density (this demolishes many software engineering assumptions)

[Figure: scatterplot of defects per KLOC (0 to 35) against module size (axis labelled KLOC, 0 to 10000)]

Benchmarking hypotheses

Do software systems produced in similar environments

have broadly similar fault densities at similar testing and

operational phases?

| Release | Pre-release fault density | Post-release fault density |
|---|---|---|
| n (sample size 140 modules) | 6.6 | 0.23 |
| n+1 (sample size 246 modules) | 5.93 | 0.63 |

Evaluating Software

Engineering Technologies

through Measurement

The Uncertainty of Reliability Achievement

methods

• Software engineering is dominated by revolutionary

methods that are supposed to solve the software crisis

• Most methods focus on fault avoidance

• Proponents of methods claim theirs is best

• Adopting a new method can require a massive overhead

with uncertain benefits

• Potential users have to rely on what the experts say

Use of Measurement in Evaluating Methods

• Measurement is the only truly convincing means of

establishing the efficacy of a method/tool/technique

• Quantitative claims must be supported by empirical

evidence

We cannot rely on anecdotal evidence.

There is simply too much at stake.

Actual Promotional Claims for Formal

Methods

• Productivity gains of 250%

• Maintenance effort reduced 80%

• Software integration time-scales cut to 1/6

What are we to make of such claims?

Formal Methods for Safety Critical Systems

• Wide consensus that formal methods must be used

• Formal methods mandatory in Def Stan 00-55

‘‘These mathematical approaches provide us with the best

available approach to the development of high-integrity

systems.’’

McDermid JA, ‘Safety critical systems: a vignette’, IEE Software Eng J, 8(1), 2-3,

1993

SMARTIE Formal Methods Study

CDIS Air

Traffic Control System

Best quantitative evidence yet to support FM

• Mixture of formally (VDM, CCS) and informally developed

modules.

• The techniques used resulted in extraordinarily high

levels of reliability (0.81 failures per KLOC).

• Little difference in total number of pre-delivery faults for

formal and informal methods (though unit testing

revealed fewer errors in modules developed using formal

techniques), but clear difference in the post-delivery

failures.

Relative sizes and changes reported for

each design type in delivered code

| Design Type | Total Lines of Delivered Code | Number of Fault Report-generated Code Changes in Delivered Code | Code Changes per KLOC | Number of Modules Having This Design Type | Total Number of Delivered Modules Changed | Percent Delivered Modules Changed |
|---|---|---|---|---|---|---|
| FSM | 19064 | 260 | 13.6 | 67 | 52 | 78% |
| VDM | 61061 | 1539 | 25.2 | 352 | 284 | 81% |
| VDM/CCS | 22201 | 202 | 9.1 | 82 | 57 | 70% |
| Formal | 102326 | 2001 | 19.6 | 501 | 393 | 78% |
| Informal | 78278 | 1644 | 21.0 | 469 | 335 | 71% |

Code changes by design type for modules

requiring many changes

| Design Type | Total Number of Modules Changed | Number of Modules with Over 5 Changes Per Module | Percent of Modules Changed | Number of Modules with Over 10 Changes Per Module | Percent of Modules Changed |
|---|---|---|---|---|---|
| FSM | 58 | 11 | 16% | 8 | 12% |
| VDM | 284 | 89 | 25% | 35 | 19% |
| VDM/CCS | 58 | 11 | 13% | 3 | 4% |
| Formal | 400 | 111 | 22% | 46 | 9% |
| Informal | 556 | 108 | 19% | 31 | 7% |

Changes Normalized by KLOC for

Delivered Code by Design Type

[Figure: changes per quarter per KLOC (0 to 10) plotted against quarter of year (0 to 8), with one line each for FSM, Informal, VDM and VDM/CCS]

Faults discovered during unit testing

| Design Type | Number of Faults Discovered | Number of Modules Having This Design Type | Number of Faults Normalized by Number of Modules |
|---|---|---|---|
| FSM | 43 | 77 | .56 |
| VDM | 184 | 352 | .52 |
| VDM/CCS | 11 | 83 | .13 |
| Formal | 238 | 512 | .46 |
| Informal | 487 | 692 | .70 |

Changes to delivered code as a result of

post-delivery problems

| Design Type | Number of Changes | Number of Lines of Code Having This Design Type | Number of Changes Normalized by KLOC | Number of Modules Having This Design Type | Number of Changes Normalized by Number of Modules |
|---|---|---|---|---|---|
| FSM | 6 | 19064 | .31 | 67 | .09 |
| VDM | 44 | 61061 | .72 | 352 | .13 |
| VDM/CCS | 9 | 22201 | .41 | 82 | .11 |
| Formal | 59 | 102326 | .58 | 501 | .12 |
| Informal | 126 | 78278 | 1.61 | 469 | .27 |
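The normalised columns for post-delivery changes can be reproduced directly from the raw counts, which is a useful sanity check when reading results like these. In the sketch below the counts are copied from the slide data; the names `post_delivery` and `normalise` are illustrative.

```python
# (number of changes, lines of code, number of modules) per design type,
# copied from the post-delivery changes data.
post_delivery = {
    "FSM": (6, 19064, 67),
    "VDM": (44, 61061, 352),
    "VDM/CCS": (9, 22201, 82),
    "Formal": (59, 102326, 501),
    "Informal": (126, 78278, 469),
}


def normalise(changes, loc, modules):
    """Return (changes per KLOC, changes per module), unrounded."""
    return changes / (loc / 1000), changes / modules


rates = {design: normalise(*row) for design, row in post_delivery.items()}

for design, (per_kloc, per_module) in rates.items():
    print(f"{design:9s} {per_kloc:5.2f} per KLOC  {per_module:5.2f} per module")

# How much higher is the informal rate than the formal one, per KLOC?
informal_vs_formal = rates["Informal"][0] / rates["Formal"][0]
print(f"informal / formal = {informal_vs_formal:.1f}")
```

Values are left unrounded inside `rates` because a figure such as 44 / 352 = 0.125 can round to either .12 or .13 depending on the rounding rule used.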

Post-delivery problems discovered in each

problem category

| Category | Number of problems |
|---|---|
| 1 | 6 |
| 2 | 6 |
| 3 | 46 |
| Specification | 1 |
| Design | 1 |
| Testing | 29 |
| Documentation | 11 |
| None assigned | 47 |

Post-delivery problem rates reported in

the literature

| Source | Language | Failures per KLOC | Formal methods used? |
|---|---|---|---|
| Siemens operating system | Assembly | 6-15 | No |
| NAG scientific libraries | Fortran | 3.00 | No |
| CDIS air traffic control support | C | .81 | Yes |
| Lloyd’s language parser | C | 1.40 | Yes |
| IBM cleanroom development | Various | 3.40 | Partly |
| IBM normal development | Various | 30.0 | No |
| Satellite planning study | Fortran | 6-16 | No |
| Unisys communications software | Ada | 2-9 | No |

SOFTWARE METRICS FOR RISK

AND UNCERTAINTY

The Classic size driven approach

• Since mid-1960’s LOC used as surrogate for different

notions of software size

• LOC used as driver in early resource prediction and

defect prediction models

• Drawbacks of LOC led to complexity metrics and function

points

• ...But approach to both defects prediction and resource

prediction remains ‘size’ driven

Predicting road fatalities

[Figure: two models for predicting road fatalities]

– Naïve model: Month → Number of fatalities

– Causal/explanatory model: nodes are Month, Weather conditions, Road conditions, Number of journeys, Average speed, Number of fatalities

Predicting software effort

[Figure: two models for predicting software effort]

– Naïve model: Size → Effort

– Causal/explanatory model: nodes are Problem Complexity, Size, Schedule, Resource quality, Effort, Product Quality

Typical software/systems assessment

problem

“Is this system sufficiently reliable to ship?”

You might have:

• Measurement data from testing

• Empirical data

• Process/resource information

• Proof of correctness

• ….

None alone is sufficient

So decisions inevitably involve expert judgement

What we really need for assessment

We need to be able to incorporate:

• uncertainty

• diverse process and product information

• empirical evidence and expert judgement

• genuine cause and effect relationships

• incomplete information

We also want visibility of all assumptions

Bayesian Belief Nets (BBNs)

• Powerful graphical framework in which to reason about

uncertainty using diverse forms of evidence

• Nodes of graph represent uncertain variables

• Arcs of graph represent causal or influential relationships

between the variables

• Associated with each node is a probability table (NPT)

[Figure: example BBN with nodes A, B, C and D, annotated with the node probability tables P(A | B, C), P(B | C), P(C) and P(D)]

Defects BBN (simplified)

[Figure: simplified defects BBN with nodes Problem Complexity, Design Effort, Defects Introduced, Testing Effort, Defects Detected, Residual Defects, Operational usage, Operational defects]

Bayes’ Theorem

A: ‘Person has cancer’, p(A) = 0.1 (prior probability)

B: ‘Person is smoker’, p(B) = 0.5

p(B|A) = 0.8 (likelihood)

What is p(A|B) (the posterior probability)?

p(A|B) = p(B|A) p(A) / p(B)

So p(A|B) = (0.8 × 0.1) / 0.5 = 0.16
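The same calculation as a small function, using the numbers from the example. The function and parameter names are illustrative.

```python
def posterior(likelihood: float, prior: float, evidence: float) -> float:
    """Bayes' theorem: p(A|B) = p(B|A) * p(A) / p(B)."""
    return likelihood * prior / evidence


# A = 'person has cancer', B = 'person is smoker'
p_cancer_given_smoker = posterior(likelihood=0.8, prior=0.1, evidence=0.5)
print(round(p_cancer_given_smoker, 2))
```

Learning that the person smokes raises the belief in A from the prior 0.1 to 0.16.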

Bayesian Propagation

• Applying Bayes theorem to update all probabilities when

new evidence is entered

• Intractable even for small BBNs

• Breakthrough in late 1980s - fast algorithm

• Tools like Hugin implement efficient propagation

• Propagation is multi-directional

• Make predictions even with missing/incomplete data
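To make multi-directional propagation concrete, here is a toy three-node net solved by brute-force enumeration of the joint distribution. This is not the fast algorithm used by tools such as Hugin; enumeration only works because the net is tiny. The structure (defects introduced and testing effort both influence defects detected) and every probability below are hypothetical.

```python
from itertools import product

# Hypothetical node probability tables.
P_I = {True: 0.3, False: 0.7}  # I: many defects introduced?
P_T = {True: 0.5, False: 0.5}  # T: high testing effort?
P_D = {                        # P(D = many detected | I, T)
    (True, True): 0.9,
    (True, False): 0.4,
    (False, True): 0.2,
    (False, False): 0.05,
}


def joint(i, t, d):
    """P(I=i, T=t, D=d), factorised along the arcs of the net."""
    p_d = P_D[(i, t)]
    return P_I[i] * P_T[t] * (p_d if d else 1 - p_d)


def query(target, evidence):
    """P(target is True | evidence), summing the joint over consistent worlds."""
    names = ("I", "T", "D")
    numerator = denominator = 0.0
    for values in product([True, False], repeat=3):
        world = dict(zip(names, values))
        if any(world[name] != value for name, value in evidence.items()):
            continue
        p = joint(world["I"], world["T"], world["D"])
        denominator += p
        if world[target]:
            numerator += p
    return numerator / denominator


# Forward (prediction): chance of detecting many defects, with no evidence.
p_many_detected = query("D", {})

# Backward (diagnosis): few defects detected, under low vs high testing effort.
p_many_given_few_low = query("I", {"D": False, "T": False})
p_many_given_few_high = query("I", {"D": False, "T": True})

print(p_many_detected, p_many_given_few_low, p_many_given_few_high)
```

With these made-up numbers, finding few defects after low testing effort leaves a noticeably higher belief that many defects were introduced than finding few defects after high testing effort, which is the kind of reasoning a size-driven model cannot express.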

Classic approach to defect modelling

[Figure: schematic of the classic defect model with nodes Complexity; Functionality; Quality of staff, tools; Resources/process quality; Solution/problem size/complexity; Number of defects]

Problems with classic defects modelling

approach

• Fails to distinguish different notions of ‘defect’

• Statistical approaches often flawed

• Size/complexity not causal factors

• Obvious causal factors not modelled

• Black box models hide crucial assumptions

• Cannot handle uncertainty

• Cannot be used for real risk assessment

Many defects pre-release, few after

Few defects pre-release,

many after

Schematic of classic resource model

[Figure: schematic of the classic resource model with nodes Complexity; Functionality; Quality of staff, tools; Solution/problem size; Required reliability; Resources quality; Solution quality; Required resources; Required duration; Required effort]

Problems with classic approach to

resource prediction

• Based on historical projects which happened to be completed (but

not necessarily successful)

• Obvious causal factors not modelled or modelled incorrectly: solution size should never be a ‘driver’

• Flawed assumption that resource levels are not already fixed in some way before estimation (i.e. cannot handle realistic constraints)

• Statistical approaches often flawed

• Black box models hide crucial assumptions

• Cannot handle uncertainty

• Cannot be used for real risk assessment

Classic approach cannot handle questions

we really want to ask

• For a problem of this size, and given these limited resources, how

likely am I to achieve a product of suitable quality?

• How much can I scale down the resources if I am prepared to put

up with a product of specified lesser quality?

• The model predicts that I need 4 people over 2 years to build a system of this size. But I only have funding for 3 people over one year. If I cannot sacrifice quality, how good do the staff have to be to build the system with the limited resources?

Schematic of ‘resources’ BBN

[Figure: schematic of the ‘resources’ BBN with nodes Complexity; Functionality; Problem size; Solution size; Quality of staff, tools; Required resources; Proportion implemented; Required duration; Required effort; Actual duration; Actual effort; Appropriateness of actual resources; Solution quality; Solution reliability]

“Appropriateness of resources” Subnet

[Figure: subnet with nodes required_effort, required_duration, number_staff, actual_effort, actual_duration, appropriate_effort, appropriate_duration, appropriate_resources]

Specific values for problem size

Now we require high accuracy

Actual resources entered

Actual resource quality entered

Software defects and resource prediction

summary

• Classical approaches:

– Mainly regression-based black-box models

– Predicted_attribute = f(size)

– Crucial assumptions often hidden

– Obvious causal factors not modelled

– Cannot handle uncertainty

– Cannot be used for real risk assessment

• BBNs provide realistic alternative approach

Conclusions: Benefits of BBNs

• Help risk assessment and decision making in a wide range of

applications

• Model cause-effect relationships, uncertainty

• Incorporate expert judgement

• Combine diverse types of information

• All assumptions visible and auditable

• Ability to forecast with missing data

• Rigorous, mathematical semantics

• Good tool support

References

• Fenton NE and Pfleeger SL, ‘Software Metrics: A Rigorous &

Practical Approach’

• Software metrics slides by Ivan Bruha